Power Your Delta Lake with Streaming Transactional Changes

Organizations are digitizing their data, and data-driven decision making is at the heart of this transformation. Cloud data lakes and data warehouses provide great flexibility to prototype and roll out applications continuously at much lower cost.

Transactional databases are optimized for processing huge volumes of transactions in real time, whereas a cloud data lake needs to be optimized for analyzing huge volumes of data quickly. The challenge is to create a streamlined data flow that captures real-time transactions into a cloud data warehouse and drives real-time insights in a scalable, cost-effective manner.

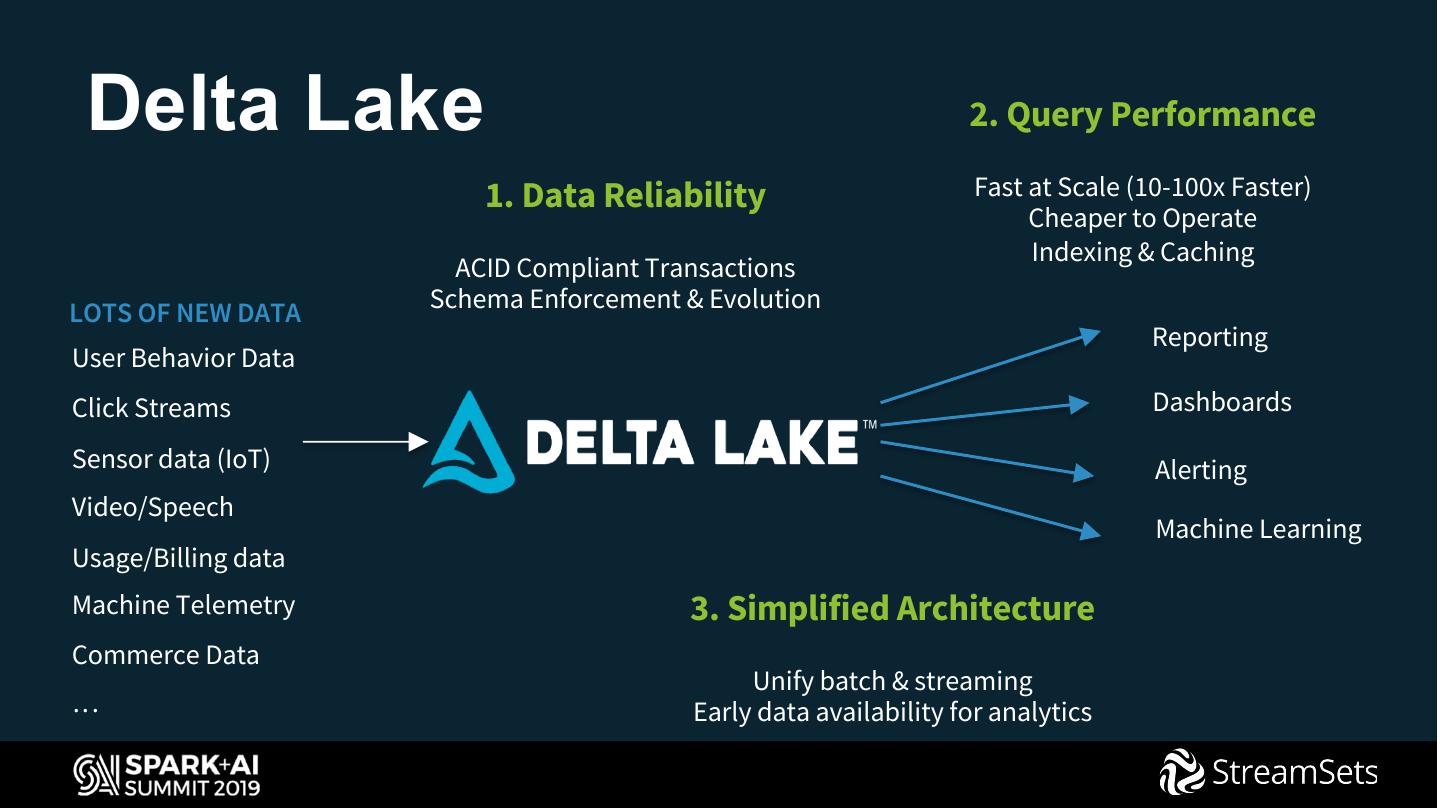

In this session, we’ll show how organizations can overcome that challenge by adopting a robust platform built on StreamSets and Delta Lake. StreamSets provides a no-code framework to automate ingestion of transactional data and data processing on Spark, while Delta Lake provides ACID transactions and scalable metadata handling, and unifies streaming and batch data processing.


1 . WiFi SSID: Spark+AISummit | Password: UnifiedDataAnalytics

2 . Power Your Delta Lake with Streaming Transactional Changes
Rupal Shah, Director, Cloud Services, StreamSets

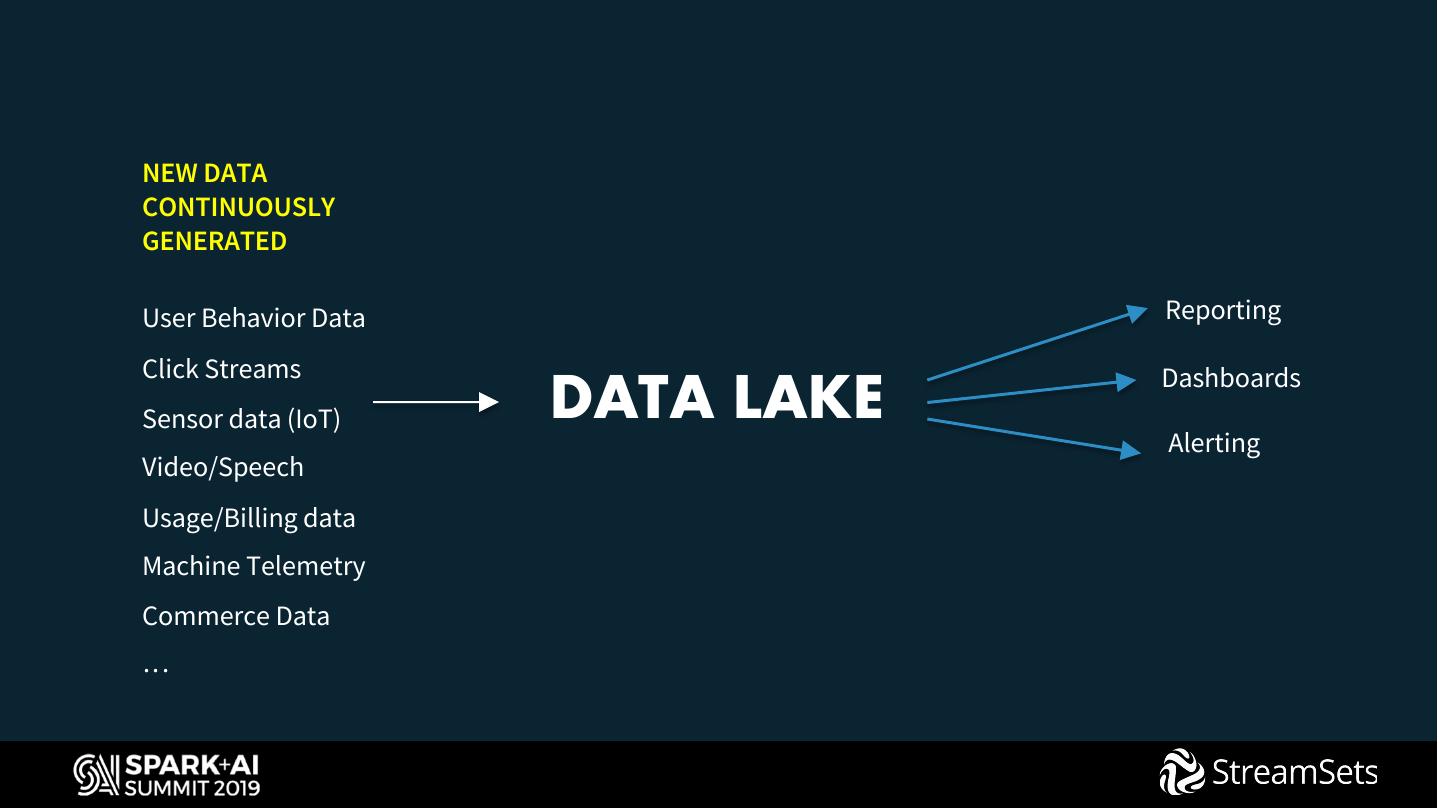

3 . NEW DATA CONTINUOUSLY GENERATED
• Sources: User Behavior Data, Click Streams, Sensor data (IoT), Video/Speech, Usage/Billing data, Machine Telemetry, Commerce Data, …
• Destination: DATA LAKE
• Consumers: Reporting, Dashboards, Alerting

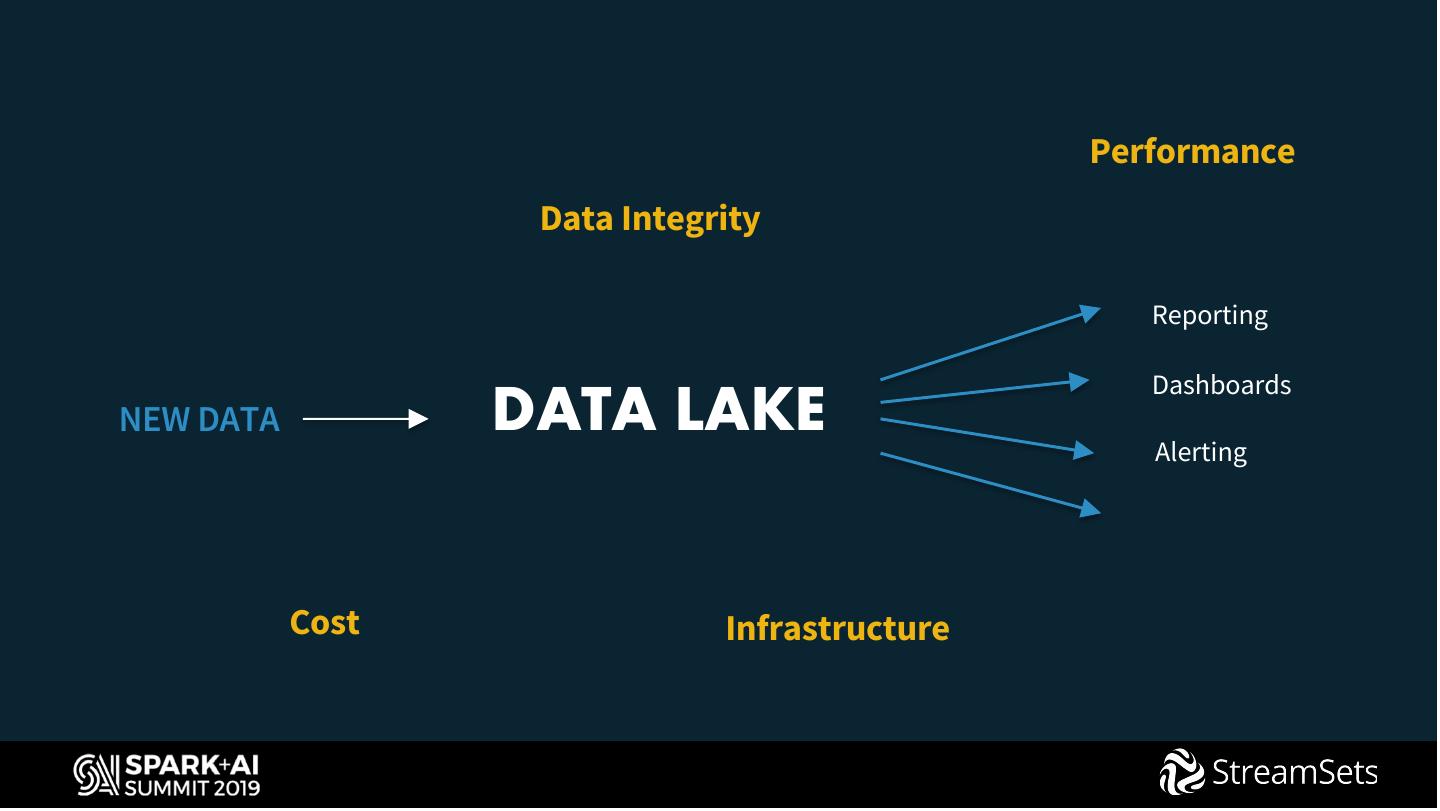

4 . NEW DATA flows into the DATA LAKE, which feeds Reporting, Dashboards, and Alerting
• Diagram labels around the data lake: Performance, Data Integrity, Cost, Infrastructure

5 . Delta Lake
1. Data Reliability
• ACID Compliant Transactions
• Schema Enforcement & Evolution
2. Query Performance
• Fast at Scale (10-100x Faster)
• Cheaper to Operate
• Indexing & Caching
3. Simplified Architecture
• Unify batch & streaming
• Early data availability for analytics
LOTS OF NEW DATA: User Behavior Data, Click Streams, Sensor data (IoT), Video/Speech, Usage/Billing data, Machine Telemetry, Commerce Data, …
Consumers: Reporting, Dashboards, Alerting, Machine Learning

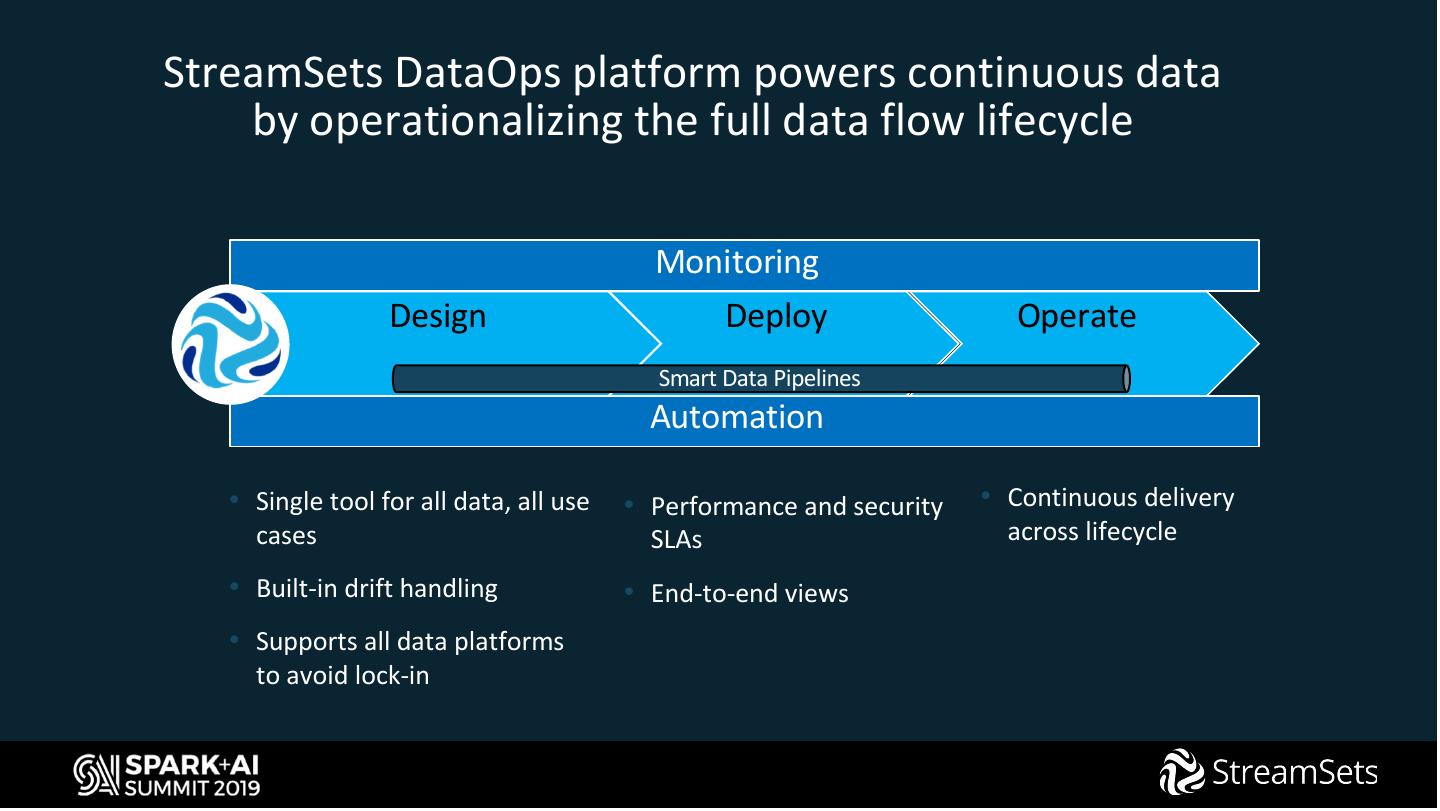

6 . StreamSets DataOps platform powers continuous data by operationalizing the full data flow lifecycle
Diagram labels: Monitoring, Design, Deploy, Operate, Smart Data Pipelines, Automation
• Single tool for all data, all use cases
• Performance and security SLAs across lifecycle
• Continuous delivery
• Built-in drift handling
• End-to-end views
• Supports all data platforms to avoid lock-in

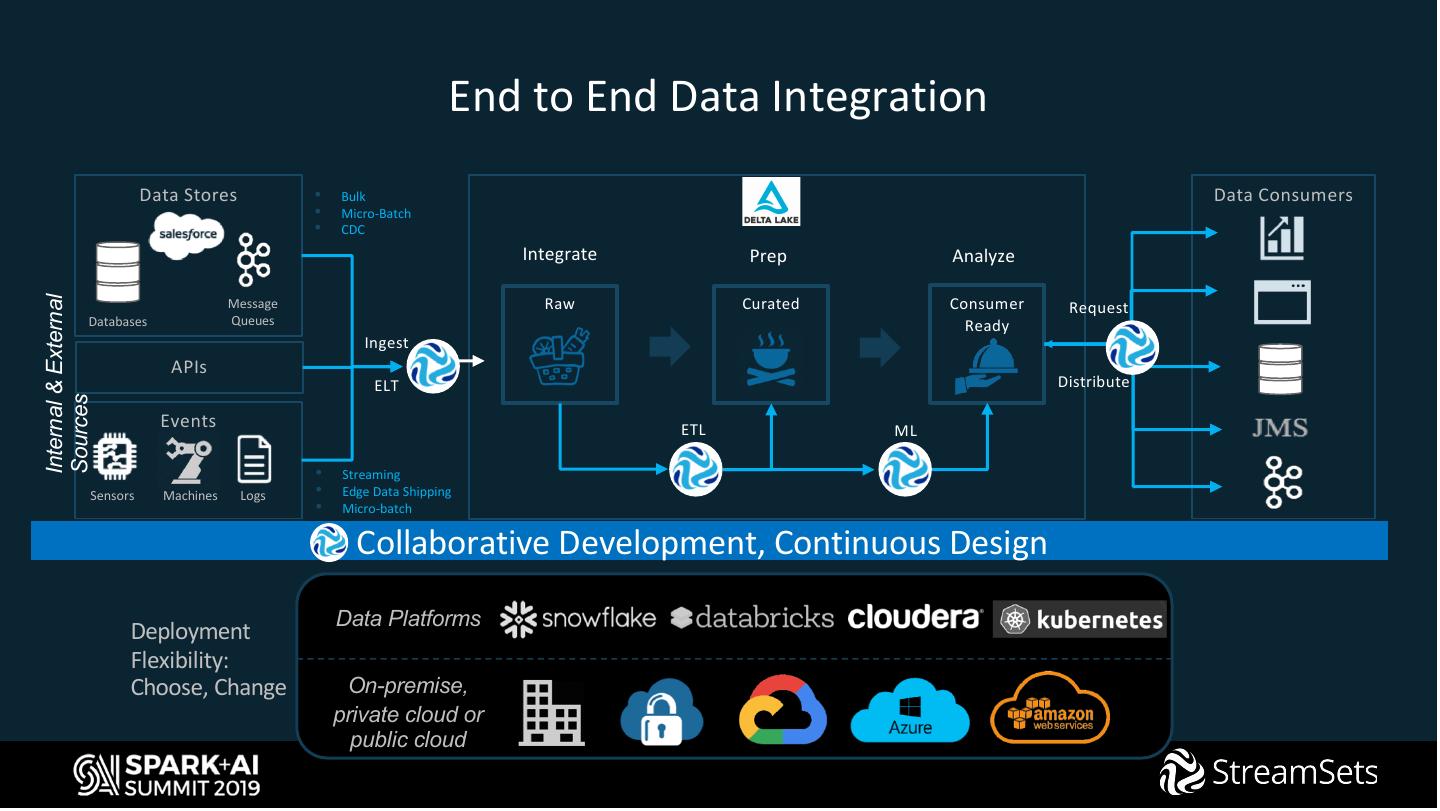

7 . End to End Data Integration
• Data Stores and Sources: Databases, Message Queues, APIs, Events, Sensors, Machines, Logs
• Ingest: Bulk, Micro-Batch, CDC, Streaming, Edge Data Shipping
• Integrate, Prep, Analyze: Raw, Curated, Consumer Ready (ELT, ETL, ML)
• Distribute: Data Consumers, Internal & External (Request, Micro-batch)
• Collaborative Development, Continuous Design
• Data Platforms Flexibility: Choose, Change
• Deployment: On-premise, private cloud or public cloud

8 .DEMO

9 .Change Data Capture
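
The demo content is not captured in the slide text. As a rough illustration of the idea behind change data capture, the sketch below applies an ordered stream of change events to a keyed target table as upserts and deletes. It is plain Python, not StreamSets or Delta Lake code, and the event shape (`op`, `key`, `row`) and the function name `apply_changes` are assumptions made for this example only.

```python
from __future__ import annotations

from typing import Any


def apply_changes(table: dict[Any, dict], events: list[dict]) -> dict[Any, dict]:
    """Apply ordered change events to a keyed table and return a new table.

    Hypothetical event shape (an assumption for illustration):
        {"op": "INSERT" | "UPDATE" | "DELETE", "key": <primary key>, "row": {...}}
    """
    result = dict(table)  # shallow copy; rows are replaced, never mutated in place
    for event in events:
        op, key = event["op"], event["key"]
        if op in ("INSERT", "UPDATE"):
            # Upsert: overlay the changed columns on the existing row, if any.
            result[key] = {**result.get(key, {}), **event["row"]}
        elif op == "DELETE":
            # Deleting a missing key is treated as a no-op.
            result.pop(key, None)
        else:
            raise ValueError(f"unknown operation: {op!r}")
    return result


target = {1: {"name": "Ann", "city": "Oslo"}}
events = [
    {"op": "UPDATE", "key": 1, "row": {"city": "Bergen"}},
    {"op": "INSERT", "key": 2, "row": {"name": "Bob", "city": "Rome"}},
    {"op": "DELETE", "key": 1},
]
merged = apply_changes(target, events)
```

Event order matters here: the update to key 1 is applied before its delete, so only key 2 remains in `merged`. A transactional table format would typically apply such a batch atomically, which this in-memory sketch does not attempt to model.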

10 . Slowly Changing Dimension
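
The slide does not say which slowly changing dimension variant was demonstrated. As a minimal sketch, assuming the history-preserving "Type 2" variant, the code below closes the current version of a row and appends a new version when a tracked attribute changes. The column names `valid_from`, `valid_to`, and `is_current` and the function name `apply_scd2` are hypothetical and chosen only for this example.

```python
from __future__ import annotations

from datetime import date

# Bookkeeping columns assumed for this sketch; not taken from the slides.
META_COLUMNS = ("valid_from", "valid_to", "is_current")


def apply_scd2(dimension: list[dict], key: str, incoming: dict, effective: date) -> list[dict]:
    """Return a new dimension table with `incoming` applied as a Type 2 change."""
    updated = []
    needs_new_version = True
    for row in dimension:
        if row[key] == incoming[key] and row["is_current"]:
            tracked = {k: v for k, v in row.items() if k not in META_COLUMNS}
            if tracked == incoming:
                # Nothing changed: keep the current version as it is.
                needs_new_version = False
                updated.append(row)
            else:
                # Close the old version instead of overwriting it.
                updated.append({**row, "valid_to": effective, "is_current": False})
        else:
            updated.append(row)
    if needs_new_version:
        updated.append(
            {**incoming, "valid_from": effective, "valid_to": None, "is_current": True}
        )
    return updated


customers = [
    {"customer_id": 1, "city": "Oslo",
     "valid_from": date(2019, 1, 1), "valid_to": None, "is_current": True},
]
history = apply_scd2(
    customers, "customer_id", {"customer_id": 1, "city": "Bergen"}, date(2019, 10, 1)
)
```

After the call, `history` holds two rows for customer 1: the closed Oslo version and the current Bergen version, so queries can reconstruct the dimension as of any date.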

11 . Takeaways
• No code
• Powers Delta Lake with Fast Data
• Handles complex data integration logic (CDC, SCD, …) with ease
• Become a DataOps champion!

12 . Next Steps…
• Visit booth #88 for further information
• Get started with StreamSets for powering your Delta Lakes: https://streamsets.com/download/
• Get slack’ing with StreamSets ninjas: https://streamsetters-slack.herokuapp.com/
