Percona XtraDB Cluster

Never used Percona XtraDB Cluster before? Join this 45-minute tutorial, in which we introduce the concepts behind a fully functional Percona XtraDB Cluster.

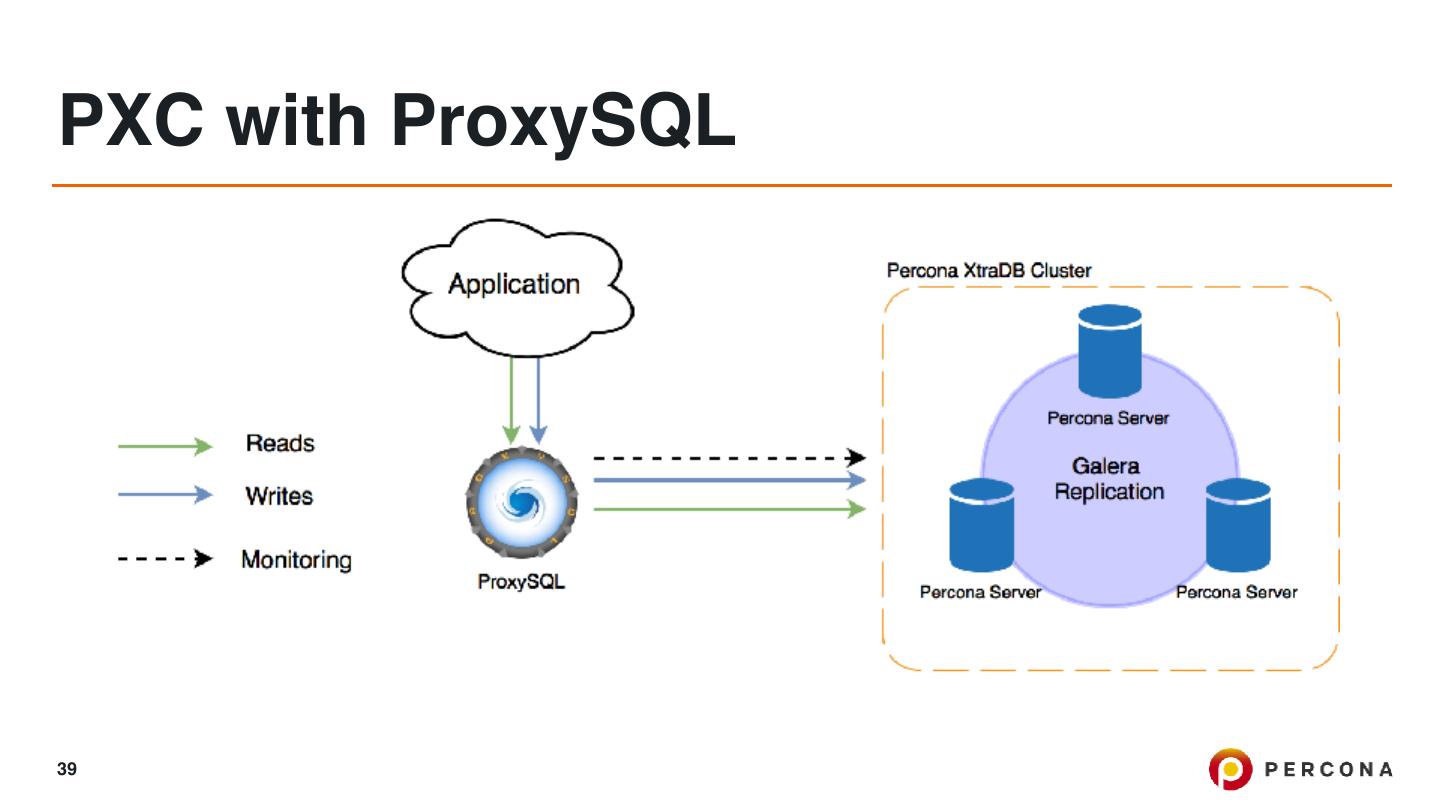

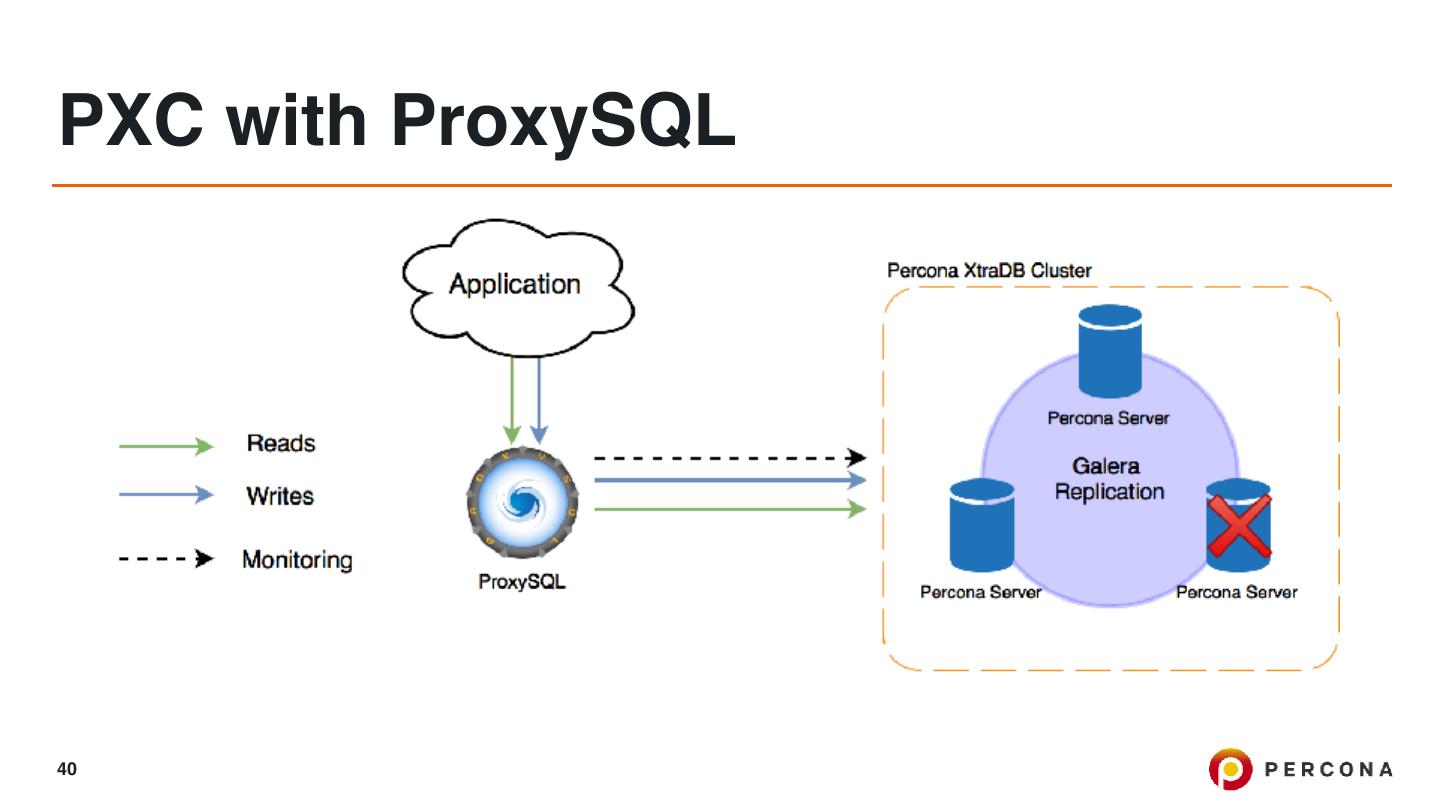

In this tutorial, we show how to install Percona XtraDB Cluster with ProxySQL and how to monitor it with Percona Monitoring and Management (PMM).

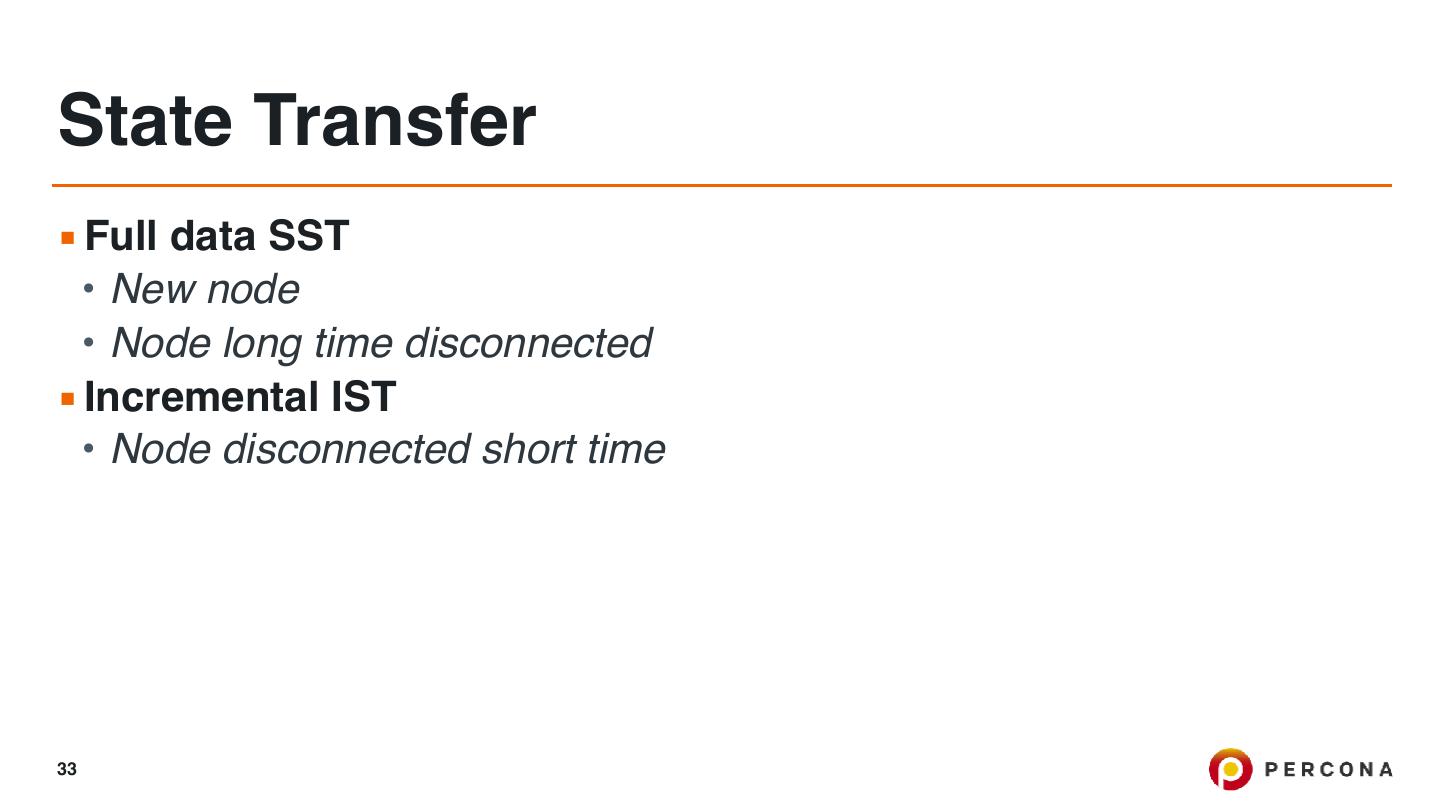

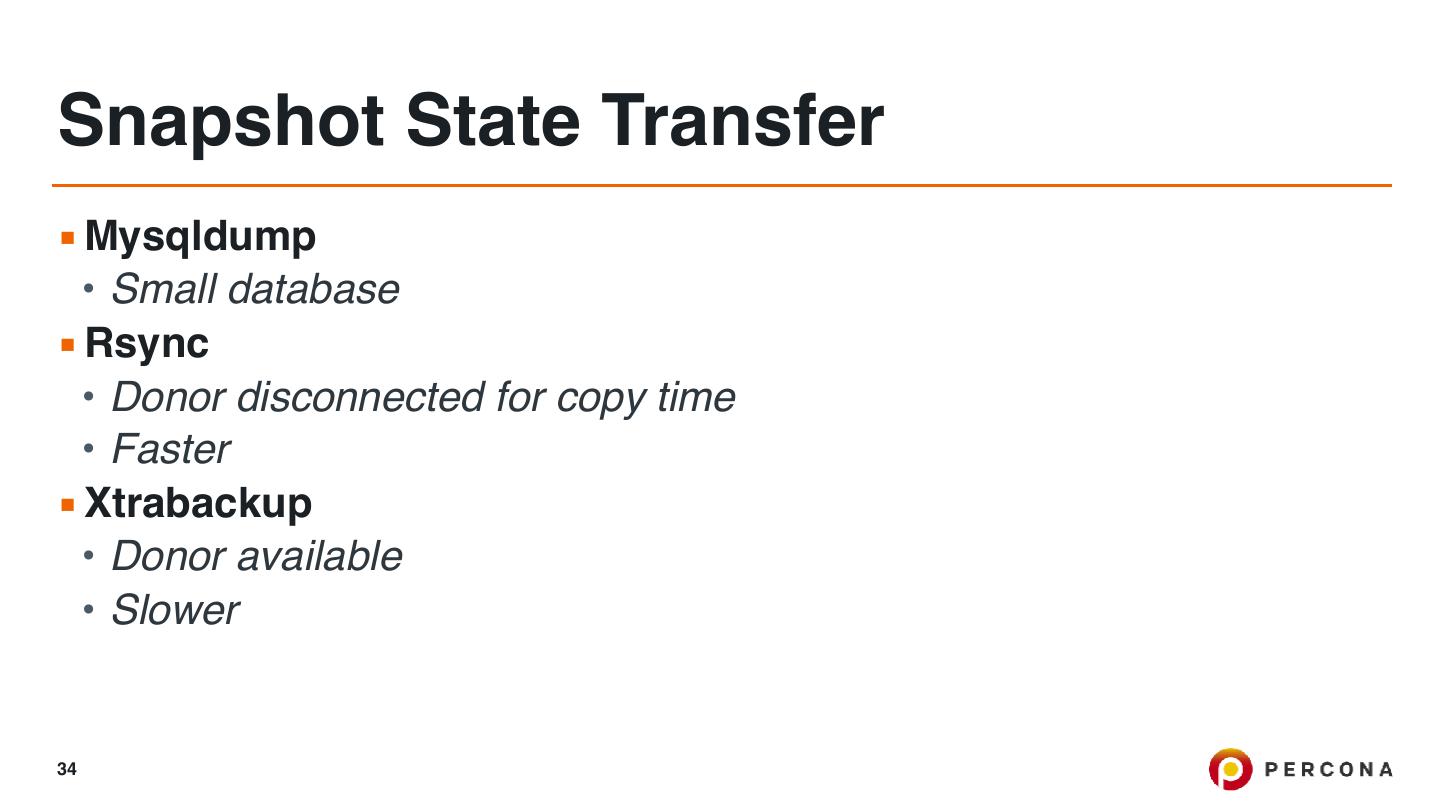

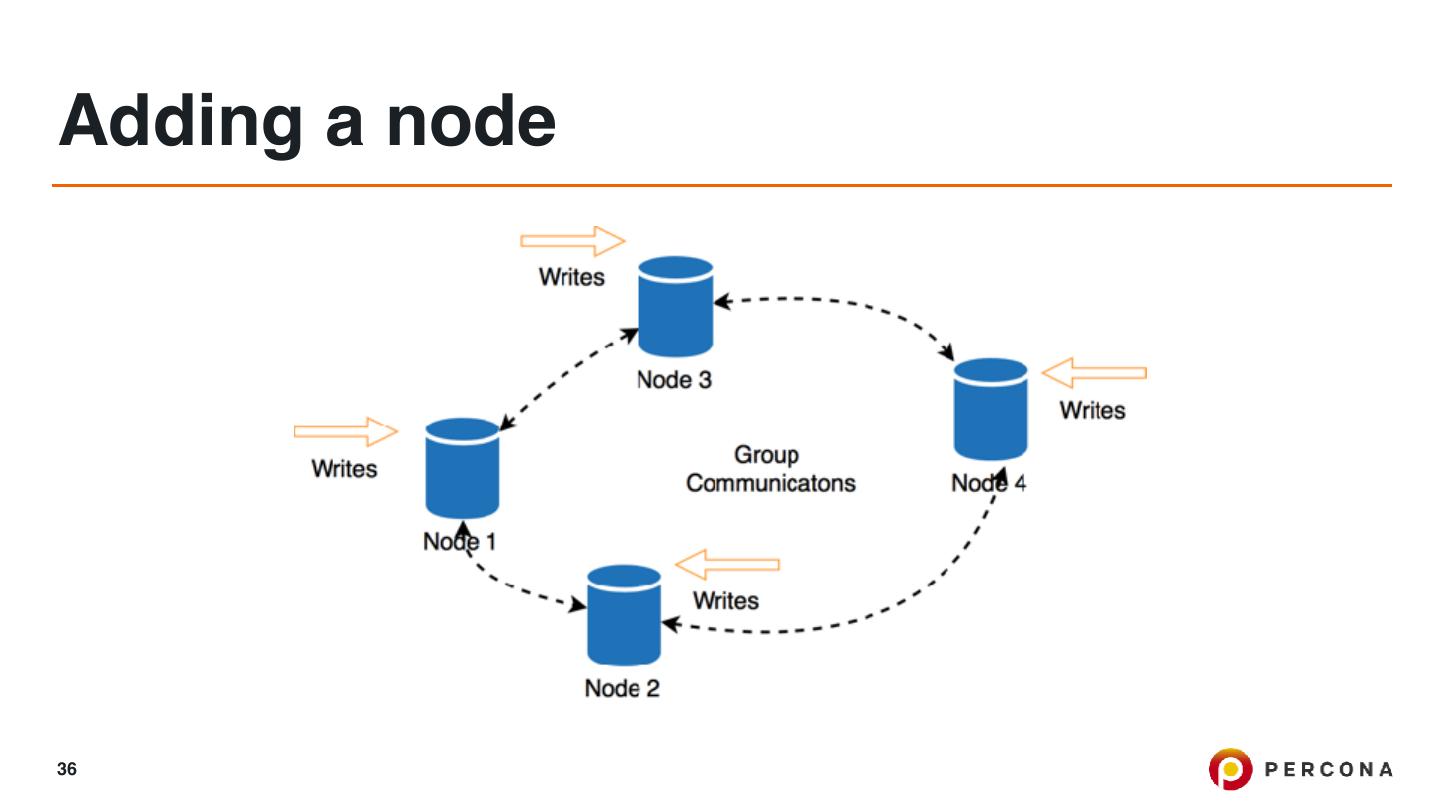

We also cover topics such as bootstrapping, IST, SST, certification, common failure scenarios, and online schema changes.


1 . Percona XtraDB Cluster: MySQL Scaling and High Availability with PXC 5.7. Tibor Korocz, Architect. 2018.04.18. © 2016 Percona

2 .Scaling and High Availability (application)

3 .Scaling and High Availability (application)

4 .Scaling and High Availability (application)

- The application does not change too often (static)
- If we need more performance, we add more resources
- Easy to scale and to achieve High Availability

But what happens with the database?

5 .Scaling and High Availability (database)

- We have to distribute all the changes to all the servers in real time
- The database has to be available to all the applications
- The application has to be able to make changes

6 .Traditional Replication: server-centric, one server streams the data to another one

7 .Topologies

8 .Topologies

9 .Async

- The master writes the events to the binary log
- The slaves request them
- The master does not know whether or when a slave has retrieved and processed them
- If the master crashes, transactions that it has committed might not have been transmitted to any slave
- Failover might cause data loss
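The data-loss risk in the Async slide can be illustrated with a toy model. This is a minimal sketch in Python, not MySQL's replication code: the `Master` and `Slave` classes, their methods, and `lost_on_failover` are hypothetical names invented for this illustration.

```python
class Master:
    """Toy master: commits locally and appends each event to a binary log."""

    def __init__(self):
        self.binlog = []

    def commit(self, event):
        # The commit returns to the client immediately; no slave is consulted.
        self.binlog.append(event)


class Slave:
    """Toy slave: pulls events from the master's binary log on request."""

    def __init__(self):
        self.applied = []

    def fetch_from(self, master):
        # Request everything after the last event this slave has applied.
        self.applied.extend(master.binlog[len(self.applied):])


def lost_on_failover(master, slave):
    """Events committed on the master that the slave never received."""
    return master.binlog[len(slave.applied):]


master, slave = Master(), Slave()
master.commit("trx-1")
master.commit("trx-2")
slave.fetch_from(master)   # slave now has trx-1 and trx-2
master.commit("trx-3")     # committed on the master, not yet fetched
# If the master crashes here and the slave is promoted, trx-3 is lost.
print(lost_on_failover(master, slave))  # ['trx-3']
```

The master never waits for the slave, so the gap between the two logs at crash time is exactly what a failover would lose.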

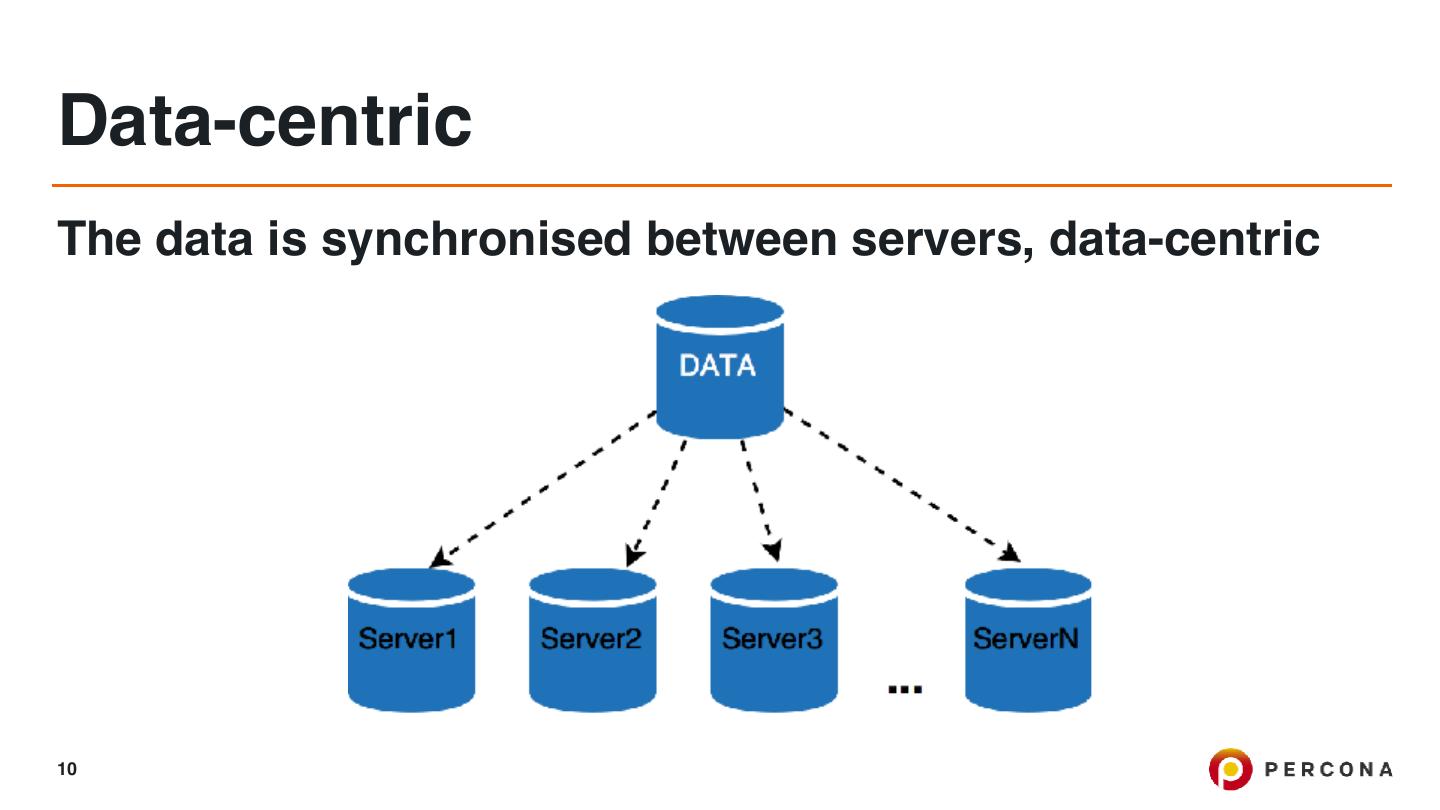

10 .Data-centric: the data is synchronised between servers

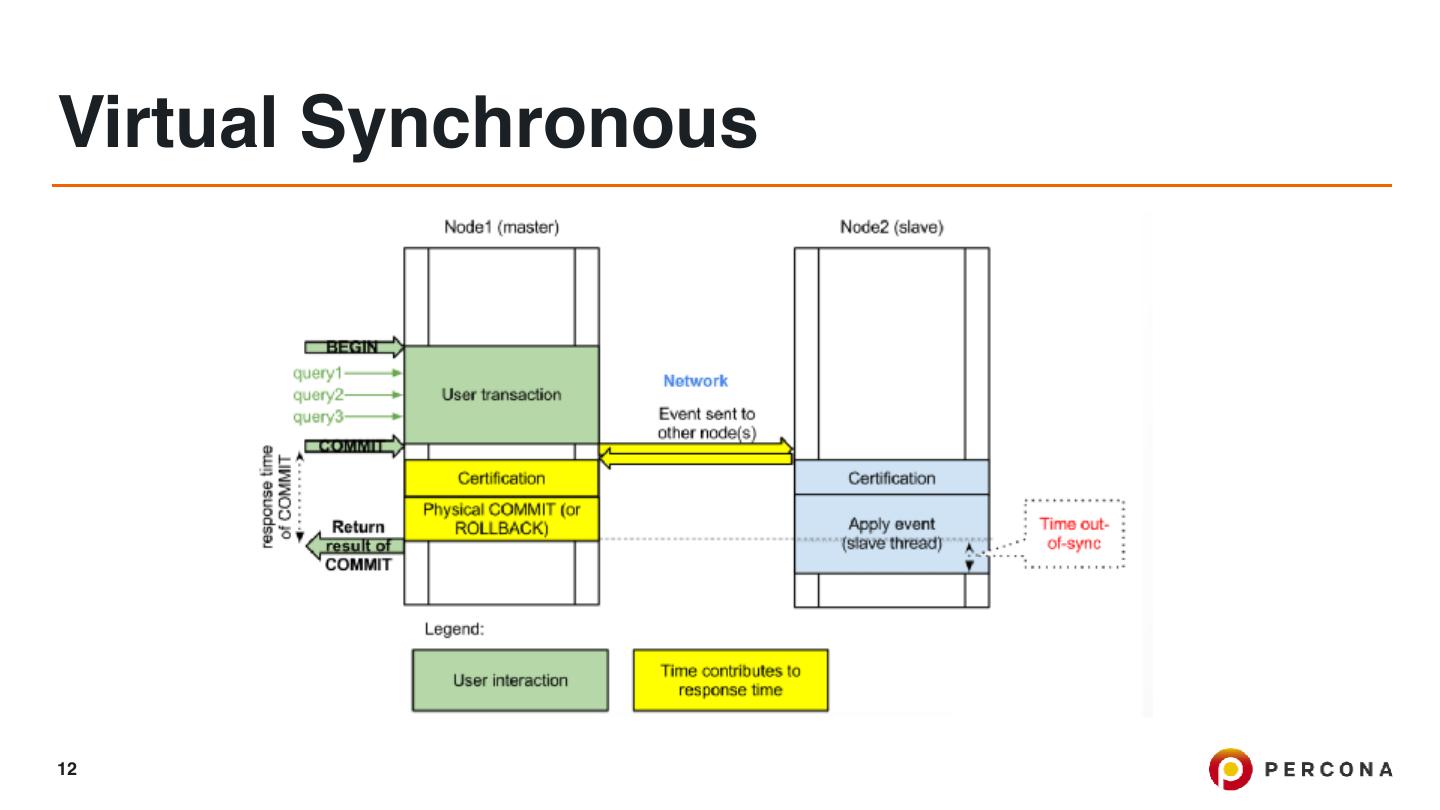

11 .Virtual Synchronous

- "Synchronous" guarantees that if changes happened on one node of the cluster, they happened on the other nodes "synchronously"
- It is always highly available (no data loss)
- Data replicas are always consistent
- Transactions can be executed on all nodes in parallel
- No complex, time-consuming failovers

12 .Virtual Synchronous

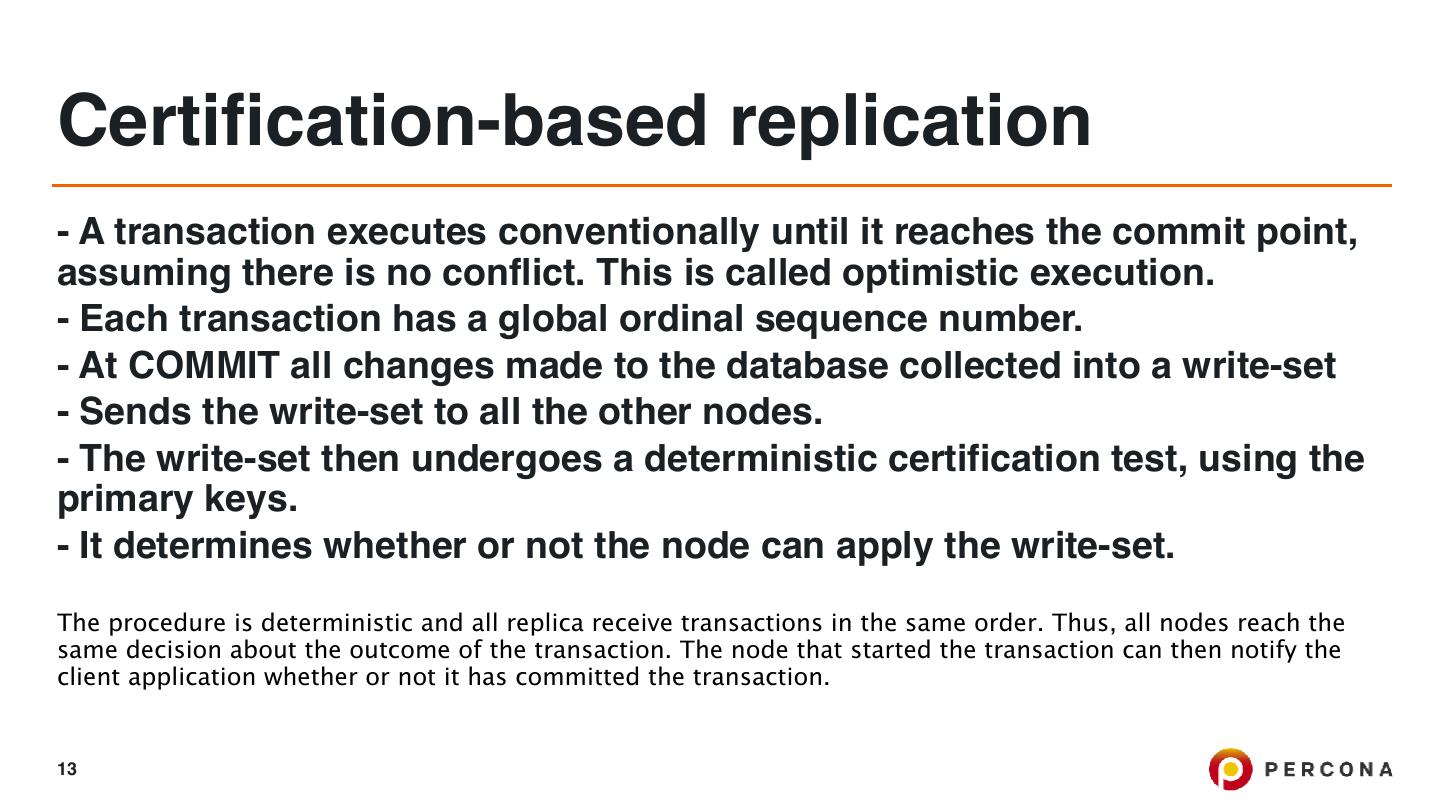

13 .Certification-based replication

- A transaction executes conventionally until it reaches the commit point, assuming there is no conflict. This is called optimistic execution.
- Each transaction has a global ordinal sequence number.
- At COMMIT, all changes made to the database are collected into a write-set.
- The node sends the write-set to all the other nodes.
- The write-set then undergoes a deterministic certification test, using the primary keys.
- The test determines whether or not the node can apply the write-set.

The procedure is deterministic and all replicas receive transactions in the same order. Thus, all nodes reach the same decision about the outcome of the transaction. The node that started the transaction can then notify the client application whether or not it has committed the transaction.
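The certification steps above can be sketched as follows. This is a deliberately simplified model in Python, not Galera's implementation: the `WriteSet` fields (in particular `last_seen`, the highest sequence number the originating node had applied when the transaction started), the `Certifier` class, and `replay` are assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class WriteSet:
    seqno: int       # global ordinal sequence number
    last_seen: int   # highest seqno the originating node had applied at start
    keys: frozenset  # primary keys touched by the transaction


class Certifier:
    """Runs the same test on every node; same input order, same verdicts."""

    def __init__(self):
        self.last_writer = {}  # primary key -> seqno of last certified write

    def certify(self, ws):
        # Conflict: a key was changed by a transaction this one never saw.
        for key in ws.keys:
            if self.last_writer.get(key, 0) > ws.last_seen:
                return False
        for key in ws.keys:
            self.last_writer[key] = ws.seqno
        return True


def replay(write_sets):
    """Certify write-sets in global order, as each node would."""
    certifier = Certifier()
    ordered = sorted(write_sets, key=lambda ws: ws.seqno)
    return [certifier.certify(ws) for ws in ordered]


stream = [
    WriteSet(seqno=1, last_seen=0, keys=frozenset({"t1:10"})),
    WriteSet(seqno=2, last_seen=0, keys=frozenset({"t1:10"})),  # did not see 1
    WriteSet(seqno=3, last_seen=2, keys=frozenset({"t1:10"})),  # saw 1
]
node_a = replay(stream)
node_b = replay(stream)
print(node_a, node_a == node_b)  # [True, False, True] True
```

Because each node processes the same write-sets in the same order with no node-local input, every node reaches the same verdict without any extra coordination round.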

14 .Expected errors

Brute force abort: occurs when another node executes a conflicting transaction and the local active transaction needs to be killed.

- wsrep_local_bf_aborts

Local certification failure: occurs when two nodes execute conflicting workloads and add them to the queue at the same time.

- wsrep_local_cert_failures
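In Galera-based clusters, both conditions typically reach the client as a deadlock error (MySQL error 1213), so applications are usually expected to retry the transaction. The following is a minimal sketch of such a retry loop in Python. `DeadlockError`, `run_with_retry`, and `flaky_transaction` are hypothetical names that stand in for a real driver's exception and a real transaction; no actual database driver API is used.

```python
ER_LOCK_DEADLOCK = 1213  # MySQL error code reported for deadlocks


class DeadlockError(Exception):
    """Hypothetical stand-in for a driver exception carrying error 1213."""
    errno = ER_LOCK_DEADLOCK


def run_with_retry(transaction, max_attempts=3):
    """Call transaction() until it succeeds or the attempts run out.

    Returns (result, attempts_used); re-raises after the last failed attempt.
    """
    for attempt in range(1, max_attempts + 1):
        try:
            return transaction(), attempt
        except DeadlockError:
            if attempt == max_attempts:
                raise


# Fake transaction that is aborted twice, then commits.
outcomes = iter([DeadlockError(), DeadlockError(), "committed"])


def flaky_transaction():
    outcome = next(outcomes)
    if isinstance(outcome, Exception):
        raise outcome
    return outcome


print(run_with_retry(flaky_transaction))  # ('committed', 3)
```

A real application would usually add a short back-off between attempts and would only retry transactions that are safe to re-execute from the start.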

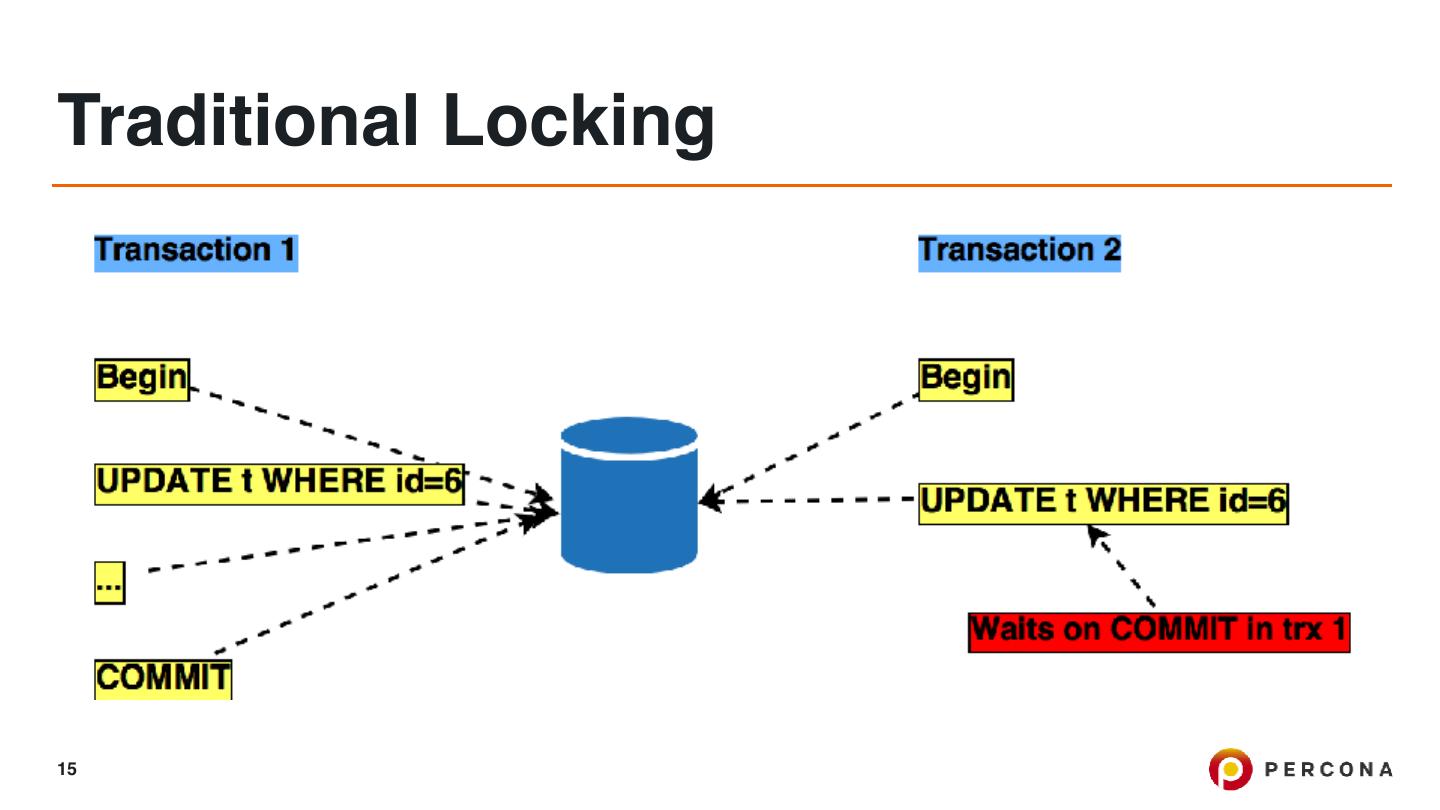

15 .Traditional Locking

16 .Optimistic Locking

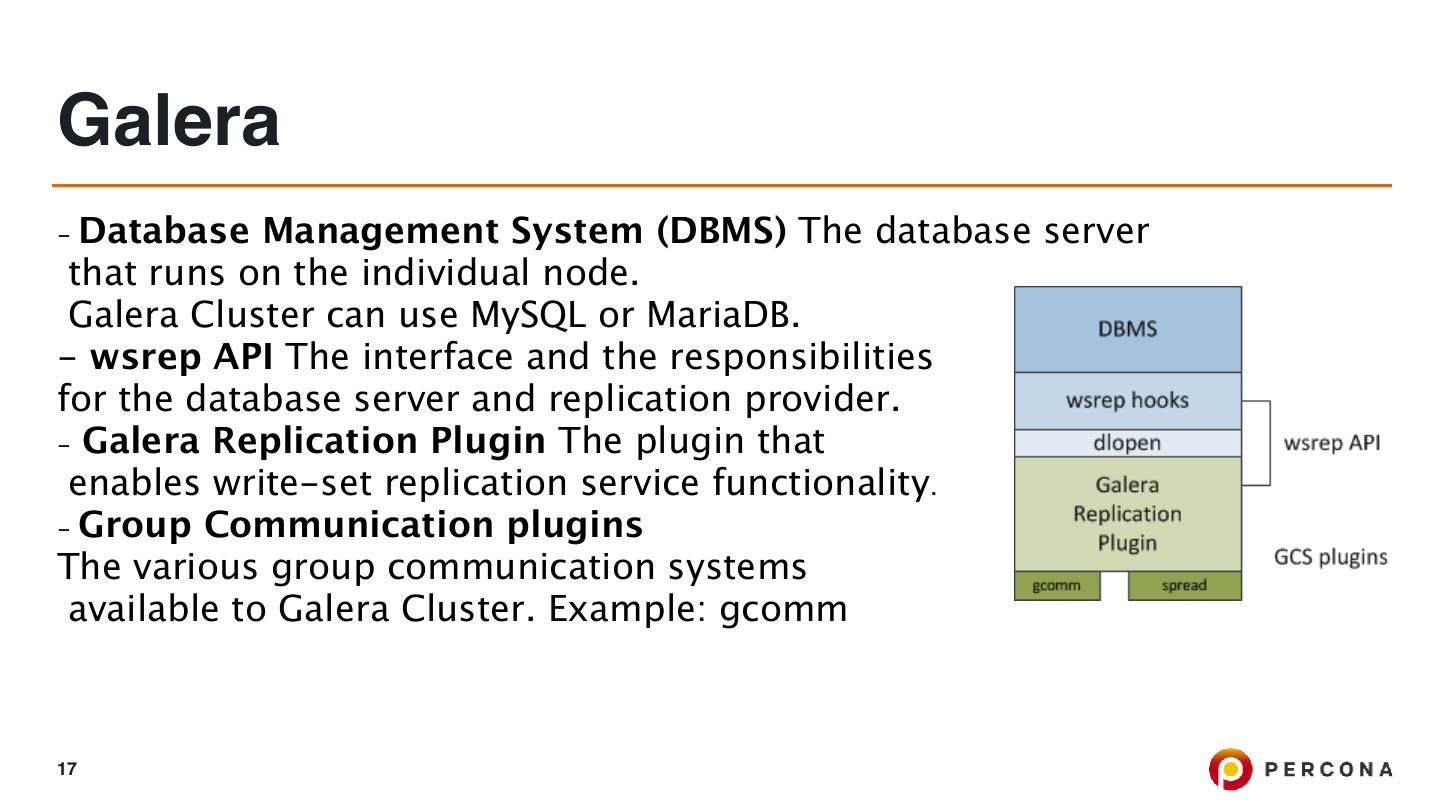

17 .Galera

- Database Management System (DBMS): the database server that runs on the individual node. Galera Cluster can use MySQL or MariaDB.
- wsrep API: the interface and the responsibilities for the database server and replication provider.
- Galera Replication Plugin: the plugin that enables write-set replication service functionality.
- Group Communication plugins: the various group communication systems available to Galera Cluster. Example: gcomm

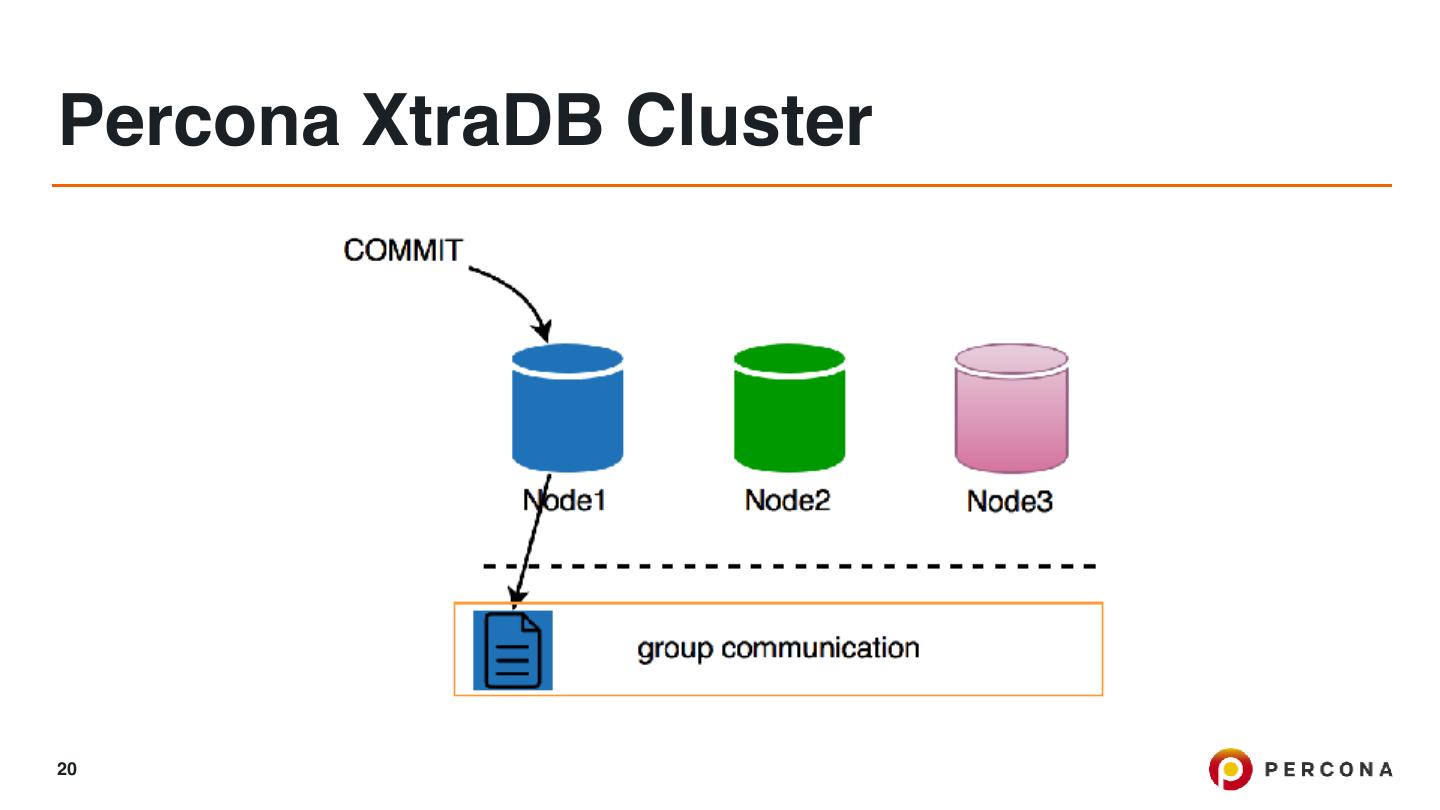

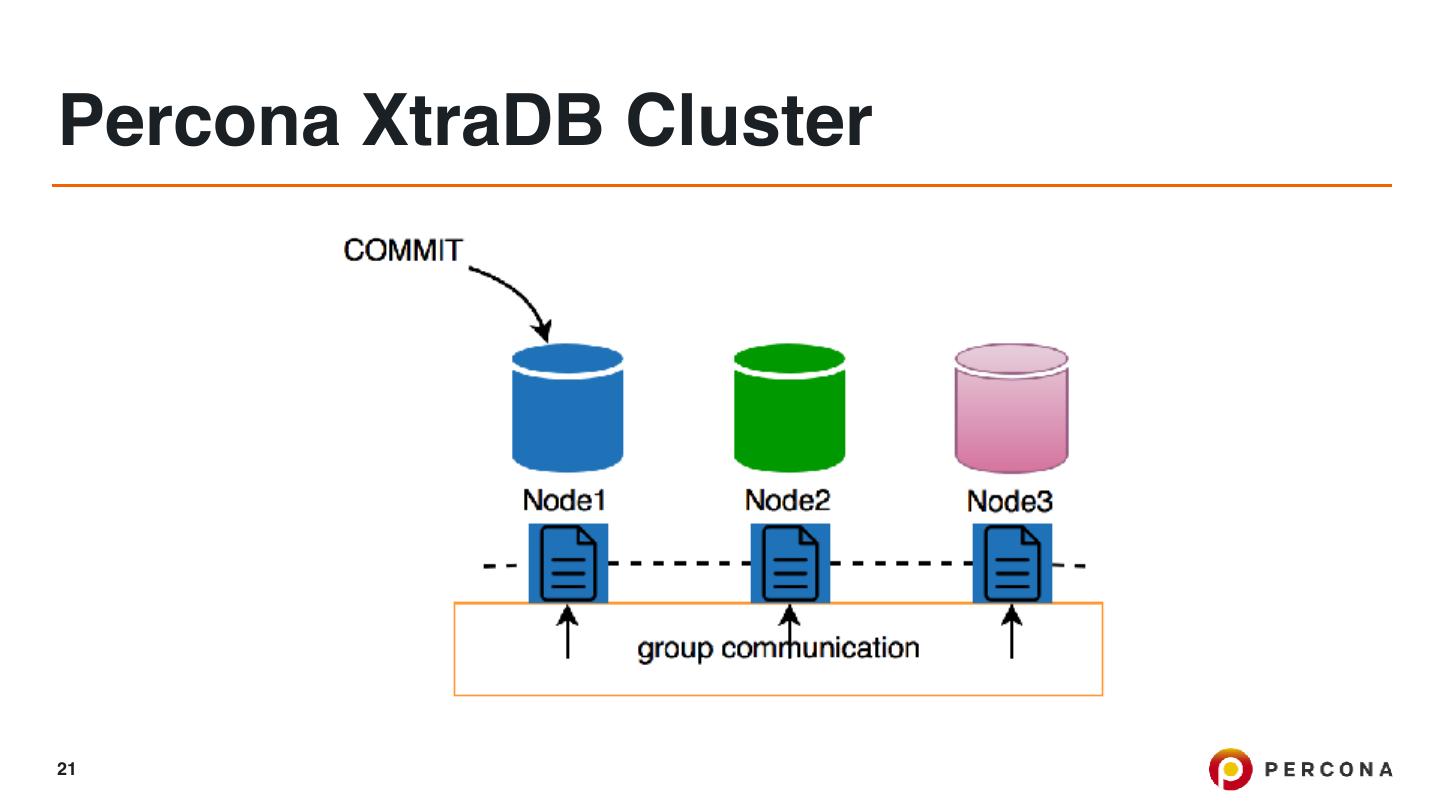

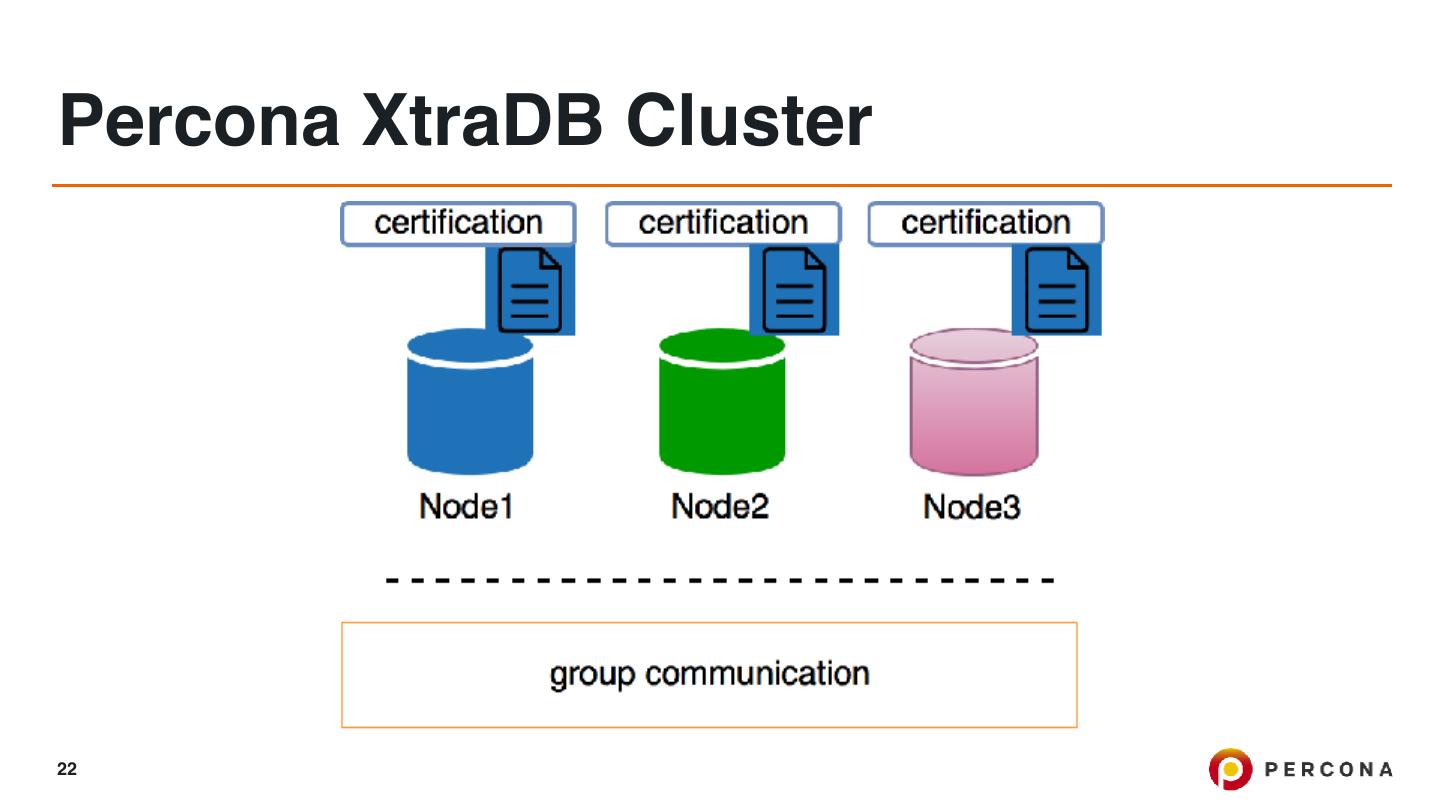

18 .Percona XtraDB Cluster

Percona XtraDB Cluster is based on Percona Server running with the XtraDB storage engine. It uses the Galera library, which is an implementation of the write set replication (wsrep) API developed by Codership Oy. The default and recommended data transfer method is via Percona XtraBackup.

19 .Percona XtraDB Cluster 19

20 .Percona XtraDB Cluster 20

21 .Percona XtraDB Cluster 21

22 .Percona XtraDB Cluster 22

23 .Percona XtraDB Cluster 23

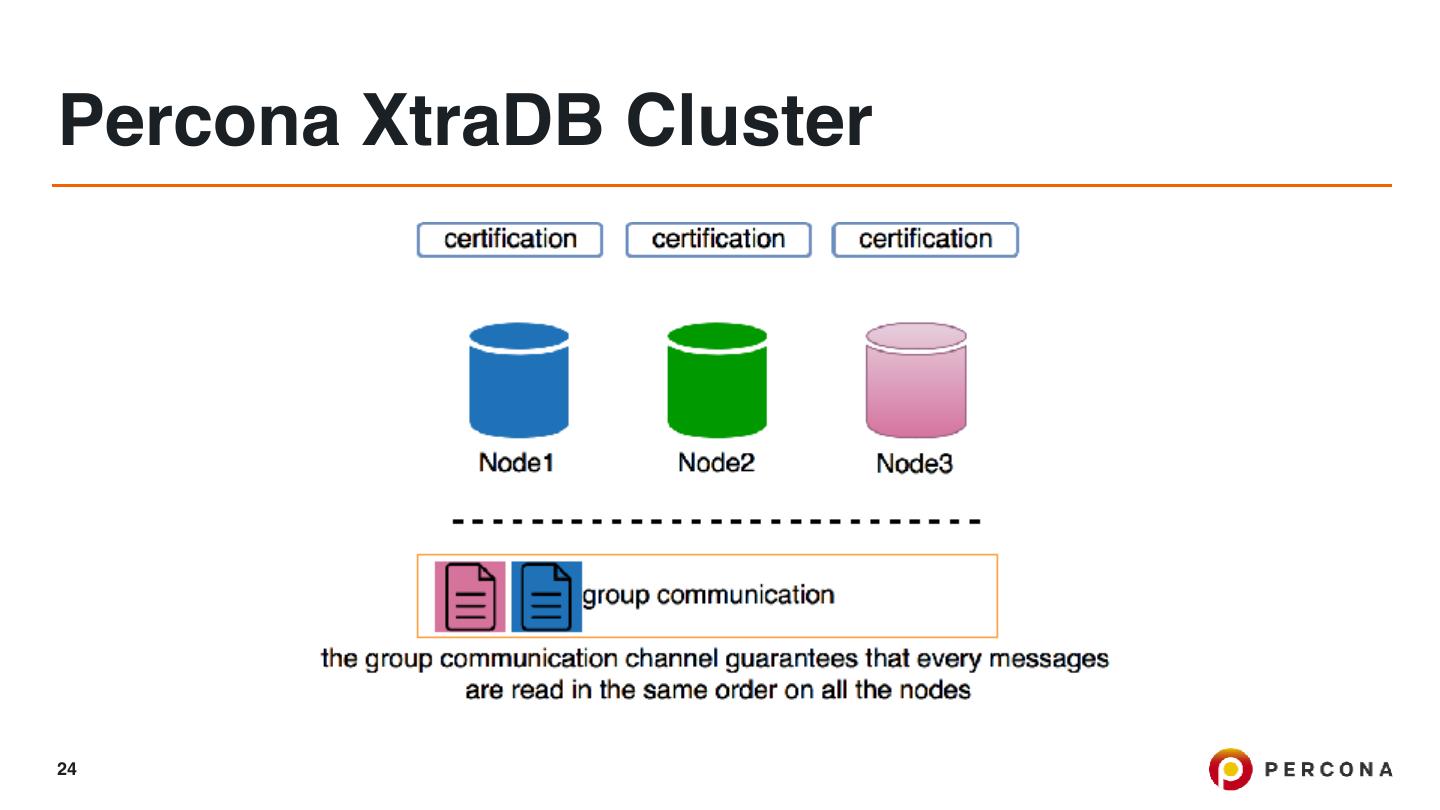

24 .Percona XtraDB Cluster 24

25 .Percona XtraDB Cluster 25

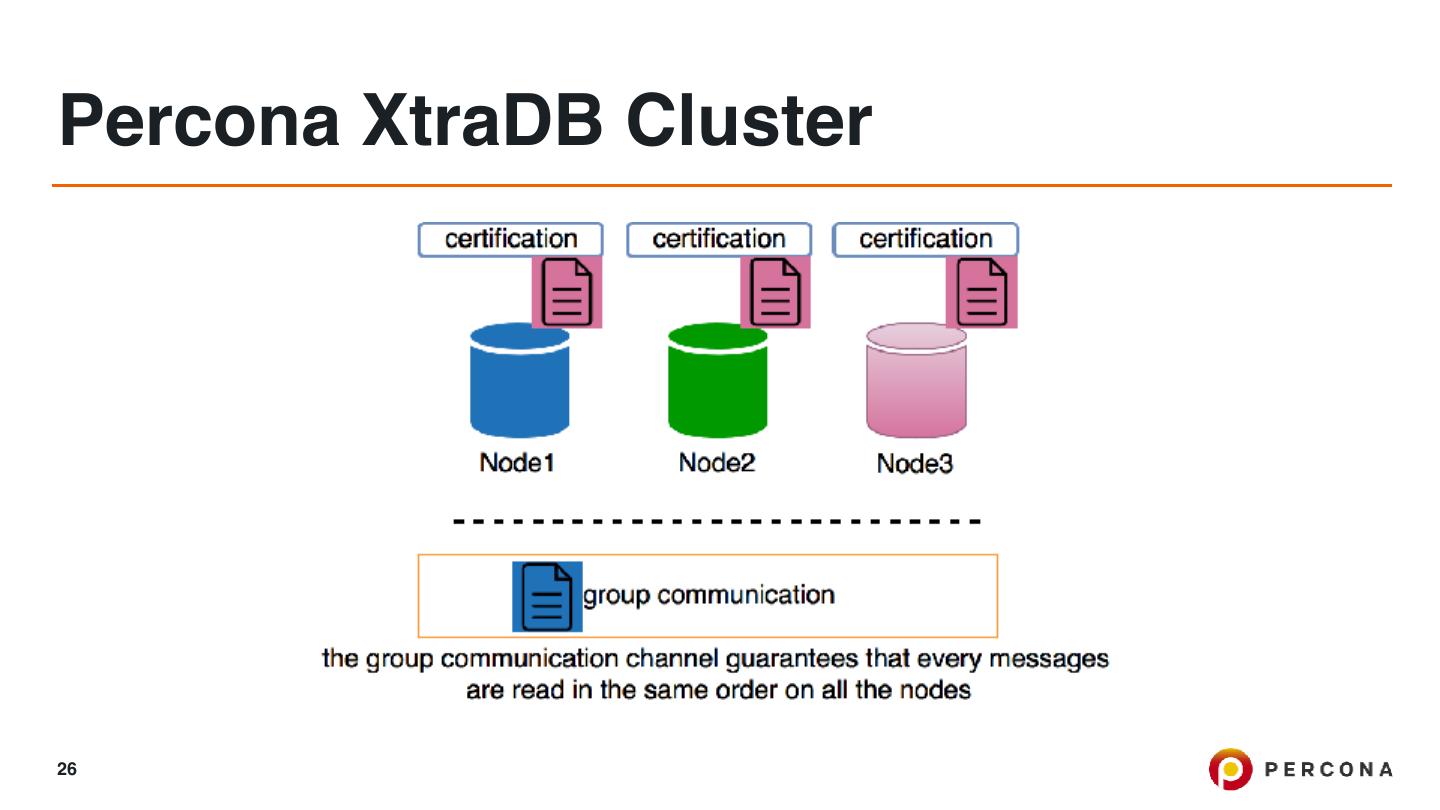

26 .Percona XtraDB Cluster 26

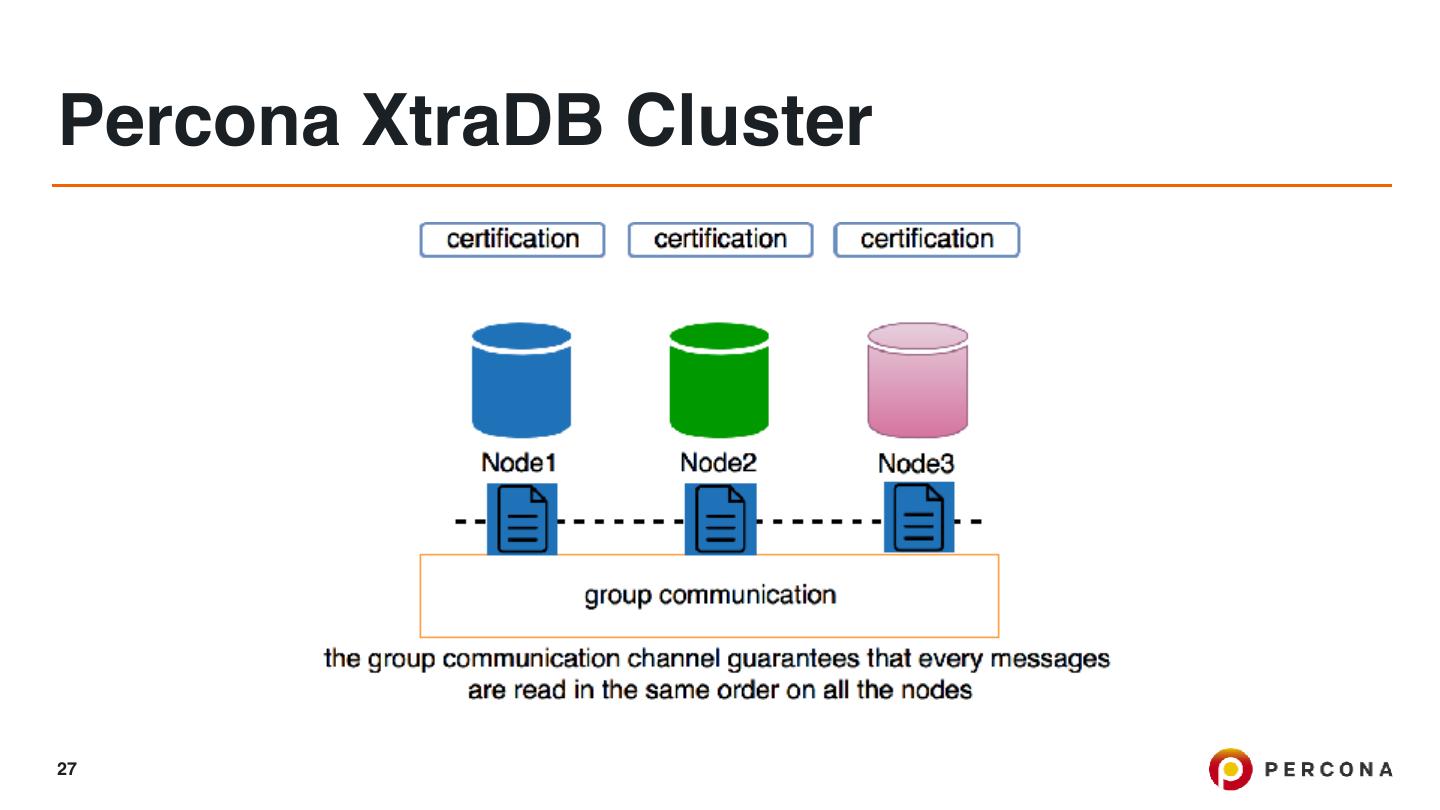

27 .Percona XtraDB Cluster 27

28 .Percona XtraDB Cluster 28

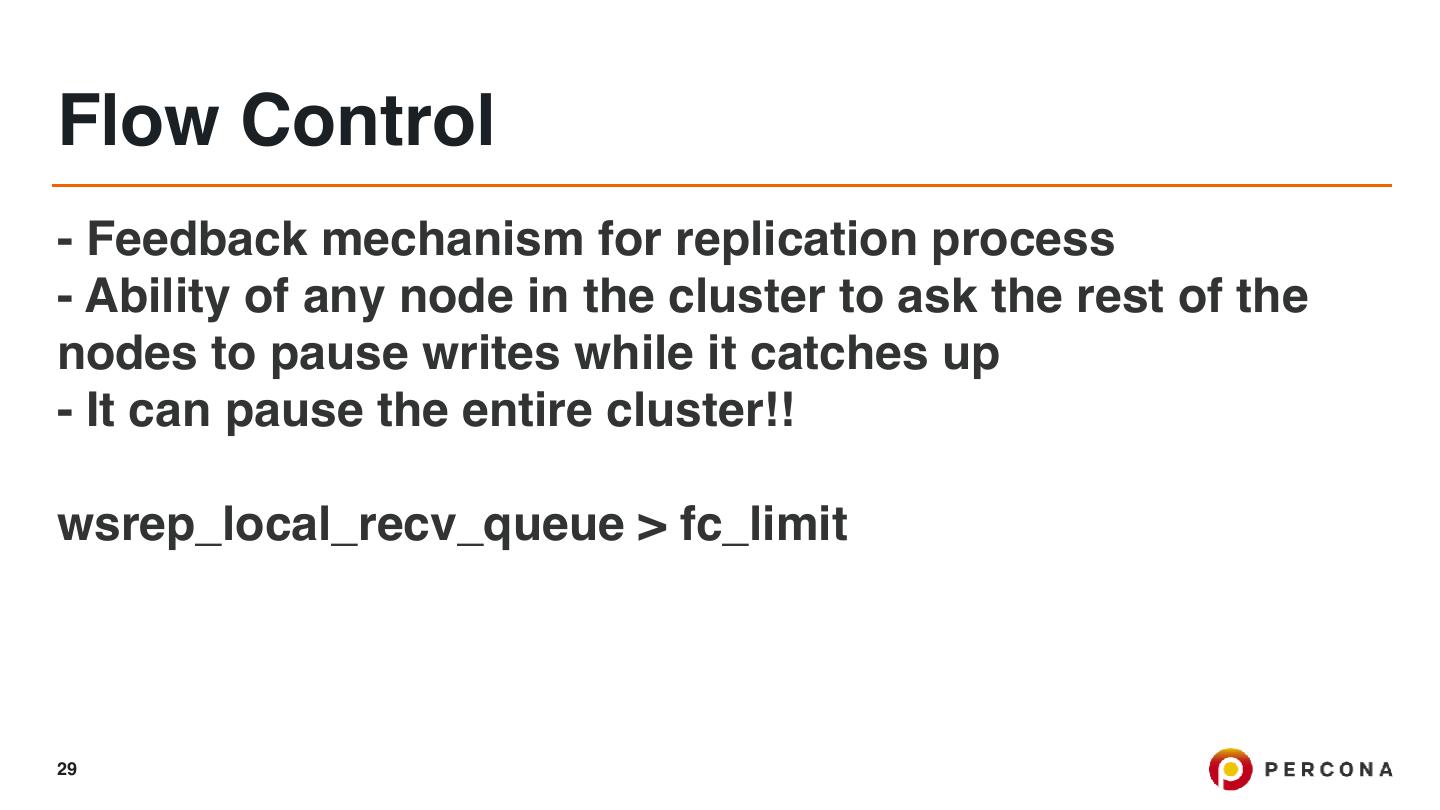

29 .Flow Control

- Feedback mechanism for the replication process
- Any node in the cluster can ask the rest of the nodes to pause writes while it catches up
- It can pause the entire cluster!

Condition: wsrep_local_recv_queue > fc_limit
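The condition on this slide can be sketched as a small simulation. This is a toy model in Python under stated assumptions, not Galera's flow-control code: `flow_control_signal` and `simulate` are hypothetical helpers, the per-tick rates are made up, and details such as a separate resume threshold are ignored.

```python
def flow_control_signal(recv_queue_len, fc_limit):
    """Return 'pause' when the node's receive queue exceeds the limit."""
    return "pause" if recv_queue_len > fc_limit else "continue"


def simulate(incoming_per_tick, applied_per_tick, fc_limit, ticks):
    """Track a slow node's receive queue and the signal it emits each tick."""
    queue, history = 0, []
    for _ in range(ticks):
        signal = flow_control_signal(queue, fc_limit)
        # While paused, the rest of the cluster stops sending new write-sets.
        arriving = 0 if signal == "pause" else incoming_per_tick
        queue = max(0, queue + arriving - applied_per_tick)
        history.append((queue, signal))
    return history


for queue, signal in simulate(incoming_per_tick=5, applied_per_tick=2,
                              fc_limit=8, ticks=6):
    print(queue, signal)
```

In this model a single node that applies write-sets more slowly than they arrive repeatedly forces the whole cluster to pause, which is why the receive queue of the slowest node is worth monitoring.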