- 快召唤伙伴们来围观吧

- 微博 QQ QQ空间 贴吧

- 文档嵌入链接

- 复制

- 微信扫一扫分享

- 已成功复制到剪贴板

Opensource Column Store Databases: MariaDB ColumnStore vs. ClickHouse

在MySQL上运行一个分析(OLAP)工作负载可能是缓慢而痛苦的。专门设计的存储格式(“列存储”)可以显著提高分析查询的性能。周围有许多OpenSource列存储数据库。在本文中,我将重点讨论其中两个支持MySQL协议的组件:Mariadb Columnstore和Clickhouse。

我将展示一些实时基准和用例,并演示Mariadb Columnstore和Clickhouse如何用于典型的OLAP查询。我还将做一个快速演示。

展开查看详情

1 .Opensource Column Store Databases: MariaDB ColumnStore vs. ClickHouse Alexander Rubin VirtualHealth

2 .About me ● Working with MySQL for 10-15 years ○ Started at MySQL AB 2006 - Sun Microsystems, Oracle (MySQL Consulting) - Percona since 2014 ○ Recently joined Virtual Health (medical records startup) 2

3 . MariaDB ColumnStore, ClickHouse and Storage Formats Caution: 1. This talk is not about specifics of implementation ○ A number of presentations about Clickhouse and MariaDB @ Percona Live 2019 2. This is all about: ○ What? -- what is the problem ○ Why? -- why queries are slow ○ How? -- how to solve 3. Examples are real-world example, medical insurance records ○ (but no actual PII data shown) 3

4 .Into: MySQL and Slow Queries Simple query - top 10 - clients who visited doctors most often (data from 2017-2019) mysql> SELECT -> client_id, -> min(date) as first_visit, -> max(date) as last_visit, -> count(distinct date) as days_visited, -> count(cv.id) as visits, -> count(distinct cv.service_location_name) as locations -> FROM client_visit cv -> GROUP BY client_id -> ORDER by visits desc -> LIMIT 10; +-----------+-------------+------------+--------------+--------+-----------+ | client_id | first_visit | last_visit | days_visited | visits | locations | +-----------+-------------+------------+--------------+--------+-----------+ | ......... | 2017-08-07 | 2019-05-24 | .. | ... | .. | 10 rows in set (46.24 sec) 4 MySQL = { }

5 .What exactly is slow? Is 47 seconds slow? … depends on expectations ● Data Science world it is blazing fast ● Realtime report/dashboard - extremely slow … Web based queries - users tends to reload page if it is slow … Leaving MySQL with tons of queries doing the same thing 5

6 .What to do? Some ideas: 1. Use index Luke! 2. Table per report 3. Pre-aggregate - table per group of reports 4. Something else 6

7 .Use index But, it is already using index: id: 1 select_type: SIMPLE table: cv partitions: NULL type: index possible_keys: FK_client_visit key: FK_client_visit key_len: 5 ref: NULL rows: 10483873 filtered: 100.00 Extra: Using temporary; Using filesort 1 row in set, 1 warning (0.00 sec) PRIMARY KEY (`id`), KEY `FK_client_visit_author_id` (`client_id`) 7

8 . Ok, better index: covered index mysql> alter table client_visit add key comb(client_id, date, service_location_name); Query OK, 0 rows affected (38.48 sec) Records: 0 Duplicates: 0 Warnings: 0 table: cv partitions: NULL type: index Still possible_keys: FK_client_id,comb key: comb slow! key_len: 776 ref: NULL rows: 10483873 filtered: 100.00 Extra: Using index; Using temporary; Using filesort 10 rows in set (12.18 sec) 8

9 .Ok, how large is the table? mysql> show table status like 'client_visit'\G *************************** 1. row *************************** Name: client_visit Engine: InnoDB Version: 10 Row_format: Dynamic 24 columns, including Rows: 10483873 `notes` text Avg_row_length: 233 `description` text Data_length: 2 452 602 880 etc... Index_length: 1 644 773 376 ~= 4G on disk, that is it! 9

10 .Ok, other options in MySQL? Create table per each report Problems 1. Too many tables 2. Hard to maintain 10

11 .Ok, other options in MySQL? Pre-aggregate in a table: ● group by client_id + avg, sum, … ● group by date + avg,sum Final report will do another aggregation if needed Problems: 1. Some aggregates can’t be re-aggregated 2. Still too many tables 3. Hard to maintain 11

12 .And it was only the beginning… now this: SELECT cv.client_id as client_id, min(date) as first_visit, max(date) as last_visit, count(distinct date) as days_visited, Highly count(distinct cv.id) as visits, normalized count(distinct cp.cpt_code) as procedures, schema count(distinct cv.service_location_name) as locations, sum(billed_amount) as total_billed, max(billed_amount) as max_price, avg(billed_amount) as avg_price FROM client_visit cv join client_procedure cp on cp.encounter_id = cv.encounter_id join client_procedure_claim cpc on cp.id = cpc.client_procedure_id join client_claim cc on cc.id = cpc.client_claim_id GROUP BY client_id ORDER BY total_billed desc LIMIT 10 12

13 . 4 table JOIN, all large tables +-----------+-------------+------------+--------------+--------+------------+-----------+--------------+-----------+-------------+ | client_id | first_visit | last_visit | days_visited | visits | procedures | locations | total_billed | max_price | avg_price | +-----------+-------------+------------+--------------+--------+------------+-----------+--------------+-----------+-------------+ | ....... | 2018-02-14 | 2019-04-22 | 64 | 64 | .. | .. | 200K | 11K | 449.34 | ... 10 rows in set (5 min 18.16 sec) 13

14 .Why MySQL is slow for such queries? 1. Row oriented - even if we retrieve 2 fields it will need to read a row 2. InnoDB organize table by pages (16k page) - will need to read more 3. MySQL will use 1 cpu core per query, not utilizing all cores 14

15 . Why MySQL is slow for such queries? Row-oriented DBMS (MySQL) Column-oriented DBMS 15 https://clickhouse.yandex/docs/en/

16 .Column Store Databases MariaDB Columnstore https://mariadb.com/kb/en/library/mariadb-columnstore/ Tips and Tricks with MariaDB ColumnStore Wednesday 5:10 PM - 5:35 PM@ Texas 16

17 .Column Store Databases Yandex Clickhouse https://clickhouse.yandex/ Low Cost Transactional and Analytics With MySQL and Clickhouse, Have Your Cake and Eat It Too! Wednesday 5:40 PM - 6:05 PM Clickhouse Features to Blow your Mind Thursday 11:55 AM - 12:45 PM 17

18 .Column-store tests Testing box 1: ● AWS ec2 instance, c5d.4xlarge ● RAM: 32.0 GiB ● vCPU: 16 ● Disk: NVMe SSD + EBS Testing box 2: ● AWS ec2 instance, c5d.18xlarge ● RAM: 144.0 GiB ● vCPU: 72 ● Disk: NVMe SSD + EBS 18

19 .Is it worth using column store: Q1 MySQL Clickhouse MariaDB Response time (sec) 46.24 0.754 11.43 Speed increase 62x 4x compared to MySQL 6248% 404% (times, %) AWS ec2 instance, c5d.4xlarge 19

20 .Is it worth using column store: Q2 MySQL Clickhouse MariaDB Response time (sec) 5 min 18.16 sec 33.83 sec 1 min 2.16 sec Speed increase 9x 5x compared to MySQL 940% 511% (times, %) AWS ec2 instance, c5d.4xlarge 20

21 . Table sizes on disk MySQL Clickhouse ColumnStore client_visit 5,876,219,904 793,976,832 3,606,462,464 client_procedure 13,841,203,200 2,253,180,928 9,562,865,664 client_procedure_claim 2,466,250,752 292,007,936 335,683,584 client_claim 11,710,496,768 2,400,182,272 6,720,749,568 Total 33,894,170,624 5,739,347,968 20,225,761,280 Smaller compared to MySQL size (x) 5.91 1.68 Compression 21

22 .Exporting from MySQL Usually 3 options 1. ETL to Star Schema 2. ETL to flat de-normalized tables 3. Copy / replicate realtime (as is) I will talk about option 3. 22

23 .Yandex Clickhouse sudo apt-get install clickhouse-client clickhouse-server 23

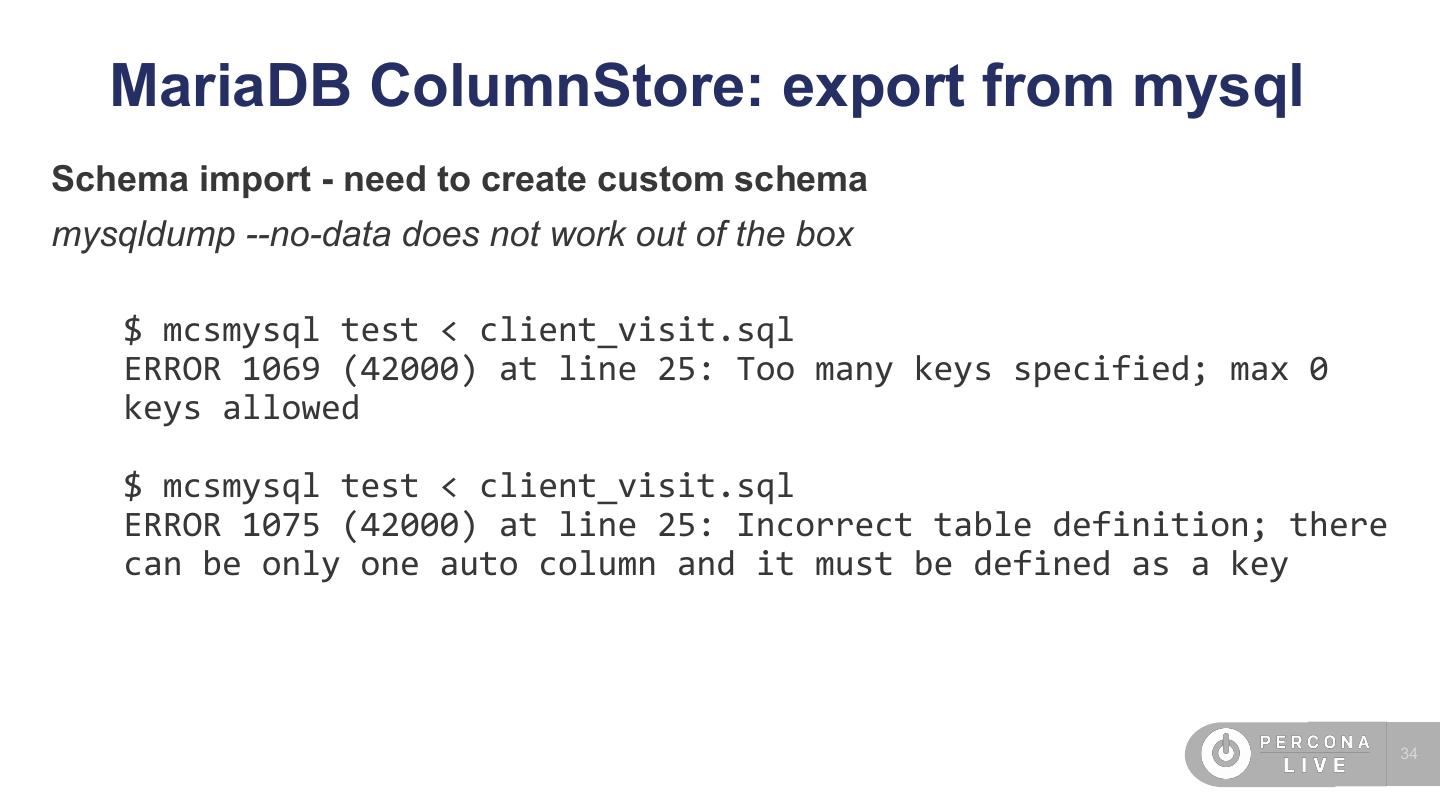

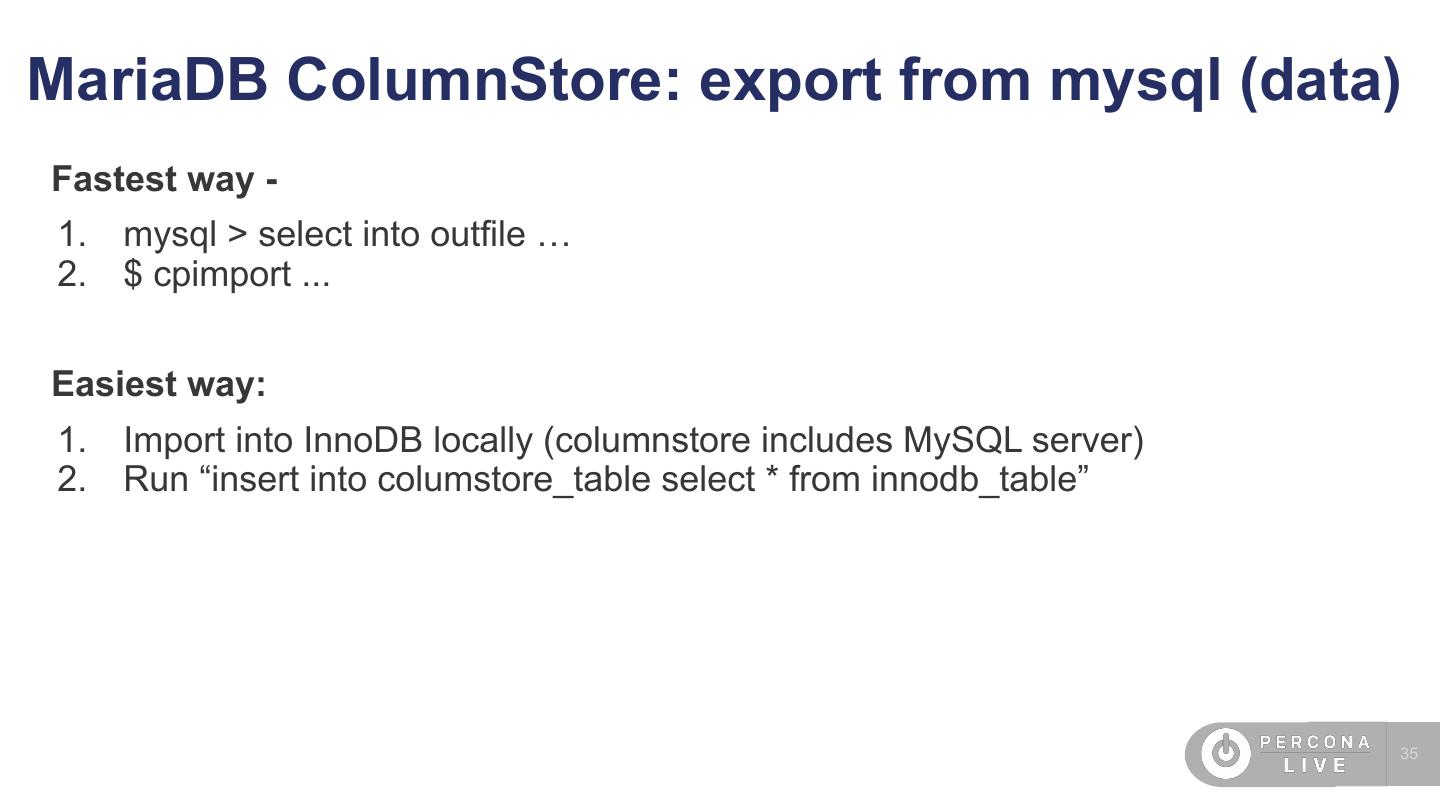

24 .Clickhouse: export from mysql (schema) https://github.com/Altinity/clickhouse-mysql-data-reader 1. Schema import $ clickhouse-mysql --create-table-sql \ --src-host=mysql-replica-host \ --src-user=export \ --src-password=xxxxxx \ --src-schemas=main \ --src-tables=client_condition,client_procedure,client_visit It will choose partition key and sort key, i.e. ENGINE = MergeTree() PARTITION BY toYYYYMM(created_date) ORDER BY client_id Beware: enum is not supported (bug) 24

25 .Clickhouse: export from MySQL (data) 1. Use clickhouse-mysql-data-reader (slower) 2. Use native Clickhouse MySQL connection: INSERT INTO client_procedure_claim SELECT * FROM mysql('host', 'db', 'client_procedure_claim', 'export', 'xxxxx') Ok. 0 rows in set. Elapsed: 17.821 sec. Processed 37.40 million rows, 299.18 MB (2.10 million rows/s., 16.79 MB/s.) 25

26 .Clickhouse: connect using MySQL client https://github.com/sysown/proxysql/wiki/ClickHouse-Support $ wget https://github.com/sysown/proxysql/releases/download/v2.0.4/p roxysql_2.0.4-ubuntu18_amd64.deb $ dpkg -i proxysql_2.0.4-clickhouse-ubuntu18_amd64.deb $ proxysql --clickhouse-server 26

27 . Clickhouse: connect using MySQL client $ mysql -h 127.0.0.1 -P 6032 ... Admin> SELECT * FROM clickhouse_users; Empty set (0.00 sec) Admin> INSERT INTO clickhouse_users VALUES ('clicku','clickp',1,100); Query OK, 1 row affected (0.00 sec) Admin> LOAD CLICKHOUSE USERS TO RUNTIME; Query OK, 0 rows affected (0.00 sec) Admin> SAVE CLICKHOUSE USERS TO DISK; Query OK, 0 rows affected (0.01 sec) https://github.com/sysown/proxysql/wiki/ClickHouse-Support 27

28 . Clickhouse: connect using MySQL client mysql -h 127.0.0.1 -P 6090 -uclicku -pclickp ... Server version: 5.5.30 (ProxySQL ClickHouse Module) mysql> select client_id, count(cv.id) as visits, count(distinct cv.service_location_name) as locations from client_visit cv group by client_id order by visits desc limit 10; +-----------+--------+-----------+ | client_id | visits | locations | +-----------+--------+-----------+ ... 10 rows in set (0.53 sec) 28

29 .ProxySQL to Clickhouse - experimental Some bugs exists: select min(date) as first_visit, max(date) as last_visit from client_visit; ERROR 2013 (HY000): Lost connection to MySQL server during query 29