- 快召唤伙伴们来围观吧

- 微博 QQ QQ空间 贴吧

- 文档嵌入链接

- 复制

- 微信扫一扫分享

- 已成功复制到剪贴板

Amazon Database Migration Service

在本文中,我们将了解Amazon迁移工具。会谈将涵盖迁移前的可能性、潜在陷阱以及对其功能的高级概述。

展开查看详情

1 .Amazon’s Database Migration Service, a magical wand for moving from closed source solutions ? Dimitri Vanoverbeke Solution Engineer @ Percona

2 . Who Am I • I am your innocent Percona Solution Engineer. • Living in Bruges, Belgium • Works together with Sales, consulting, any departement. • @dim0 on twitter (personal) www.percona.com

3 . What is the exact point? • Migrating towards a new infrastructure can be cumbersome • A “silver bullet” to migrate Oracle, Microsoft SQL, Postgres to MySQL or another solution? • Easy migrations not requiring large downtime • Easy schema migrations using the Amazon Schema migration tool. • Performs automated feasibility assessment • Supports a multitude of source database environments and a multitude of target databases. !3 www.percona.com

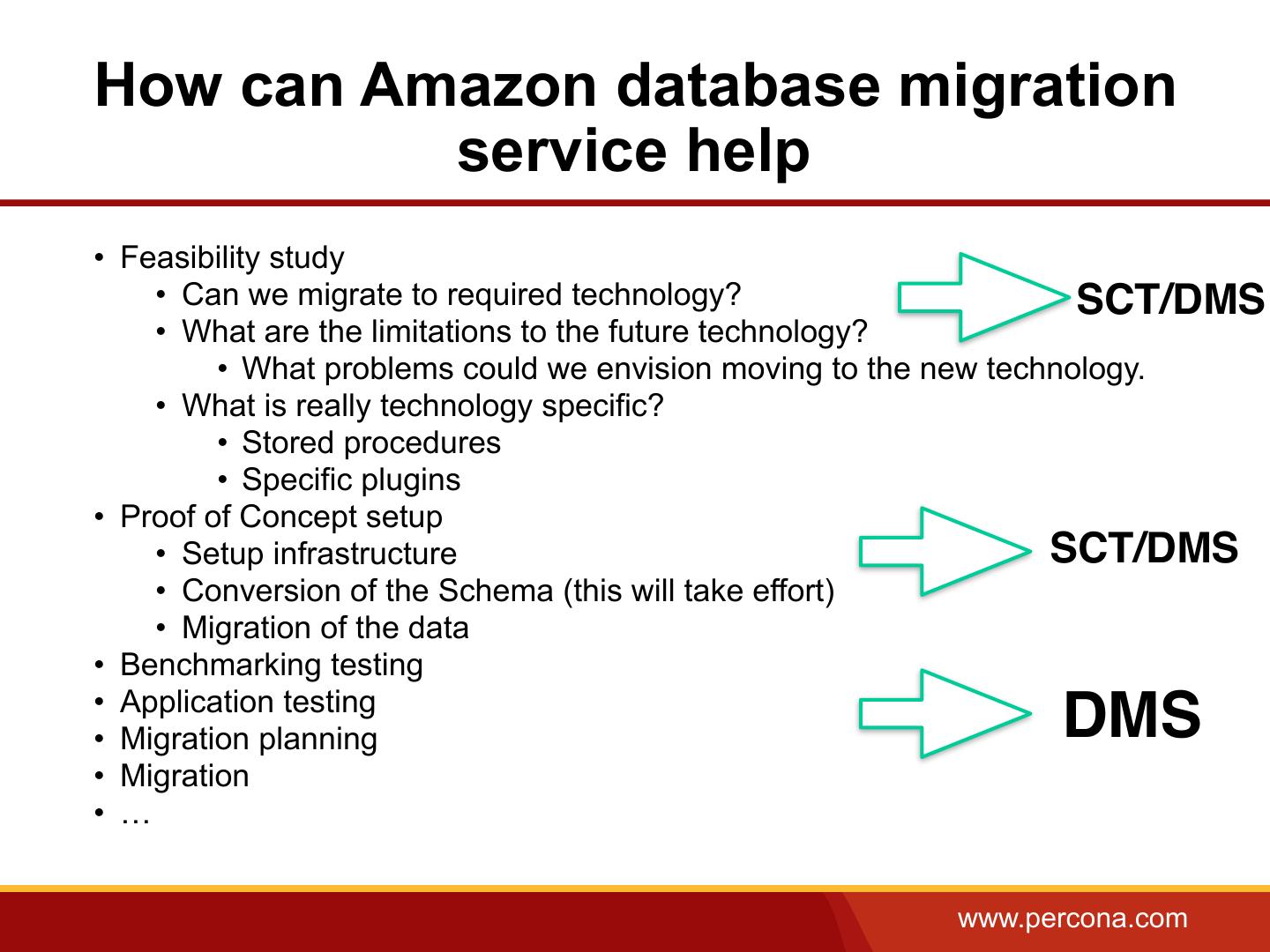

4 .What is a typical migration process • Feasibility study • Can we migrate to required technology? • What are the limitations to the future technology? • What problems could we envision moving to the new technology. • What is really technology specific? • Stored procedures • Specific plugins • Proof of Concept setup • Setup infrastructure • Schema migration • Migration of the data • Benchmarking testing • Application testing • Migration planning • Migration • … www.percona.com

5 .So what tools does Amazon provide • Schema migration tool (Amazon SCT) • Windows, Ubuntu, MacOS package • Java tool • Amazon Database migration services • AWS hosted environment providing replication to a multitude of technologies • AWS DBaaS ecospace • Amazon RDS • Amazon RDS Aurora • Amazon EC2 with your own choice of database www.percona.com

6 . Schema Migration Tool interesting features • Schema assessment report (per schema) • Verifies the schema for any problematic behavior: • Assesses the amount of manual actions for moving the schema to the new environment. • Verifies functions and checks if it can be migrated to the new environment. • Creates seperation in the following categories: • < 1hour, < 4 hours, > 4 hours • A list of manual actions (or better proposed manual actions) • Converts schema’s and provides you with the table create statements. • Can verify application code for embedded SQL statements. www.percona.com

7 .Schema conversion tool (best use) • You can essentially analyze the amount of effort for: • Functionality gaps • PL/SQL usage and modification • Column type differences • Assessment • Essentially you could compare multiple targets and choose the one with less effort. www.percona.com

8 . Schema migration Tool • Two components • Java tool (eek) • Database driver • Multiple connectors supported (needs to be Java connector) • Supports a lot of Technologies, even data warehousing solutions for migration to Redshift. • Typically used for large Databases www.percona.com

9 .Highlevel Best Practices / Limitations • JVM based • Options for Performance • Balance speed with Memory consumption [JVMUserOptions] -Xmx16128m -Xms4096m • Modify the amount of Memory • Run it on Linux (Mac OS X implementation seems broken) • Use it to convert schema’s or copy database schema’s into the new infrastructure. • You can still do it manual if it’s the same engine environment (using xtrabackup or mysqldump) www.percona.com

10 . So what is Amazon Database migration services • Replication instance • Sources • Targets • Events and notifications www.percona.com

11 . Replication instance • Replication instance that replicates the activities in the transaction log of the source database, transforms and pushes the changes to the database backend. • Multi AZ option (to make sure the replication environment is not a SPOF) www.percona.com

12 .Replication instance www.percona.com

13 . Sources • On premise sources • EC2 instances • Amazon RDS instances • Versions: • Oracle 10.2 and later • Microsoft SQL server • PostgreSQL • MongoDB 2.6 > • Amazon RDS (Aurora, MySQL, …) • MySQL 5.5 - 5.7 • MariaDB www.percona.com

14 . Targets • On premise • AWS EC2 instance • Amazon RDS instance • Versions: • Oracle > 10g • Microsoft SQL server • MySQL 5.5 - 5.7 • Postgres • Amazon RDS MySQL, Oracle, Aurora, MariaDB www.percona.com

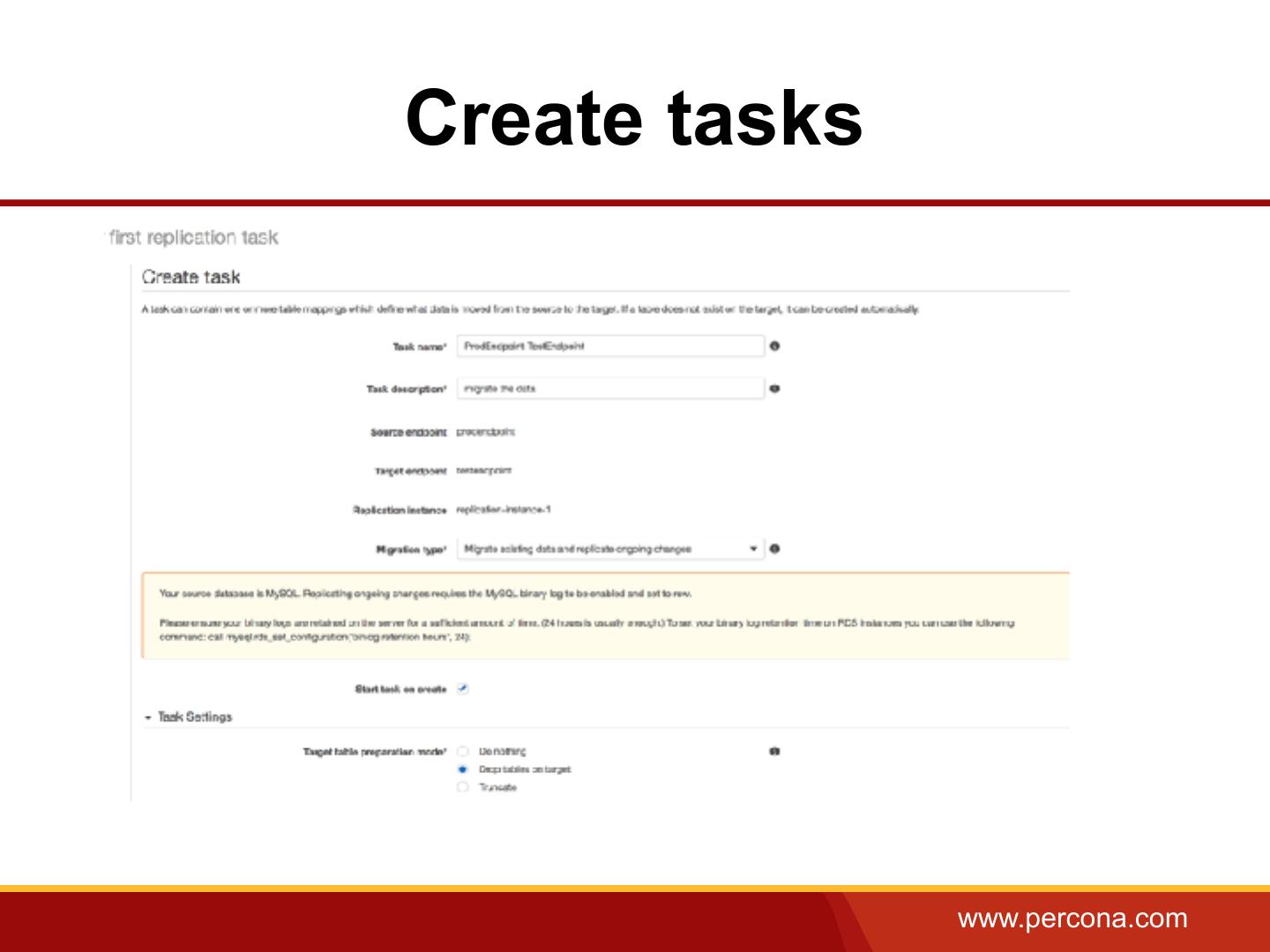

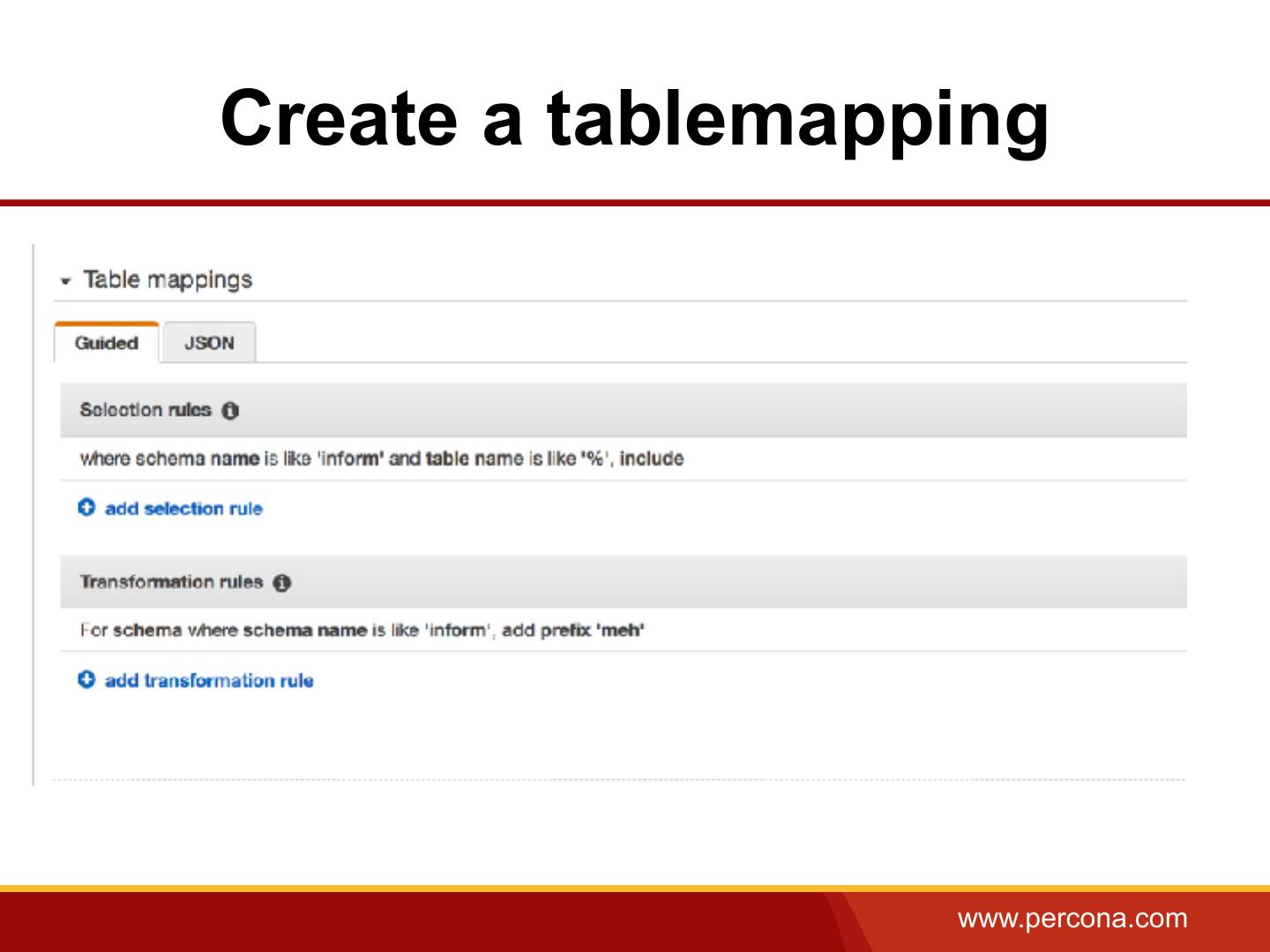

15 . Tasks • Tasks are the actual workers for the migration. • Define the schema's or tables to migrate • It’s like a trigger for the actual tasks done on the replication instance level. • Migration tasks • Full load of data to target database • Perform cached changes during load • Migrate + Migrate changes on the source data base • Only migrate changes • Table mapping tasks (Filter, transform) www.percona.com

16 . Events and notifications Based on tasks Can be determined events on the replication instance: BEGIN_SCALE_STORAGE – The storage for the replication instance is being increased. REPLICATION_INSTANCE_FAILURE – The replication instance has gone into storage failure. www.percona.com

17 . So how do I start? • Perform a schema conversion using SCT If not DMS will try to do it for you, if you use the correct parameters • Prepare your source/target database • eg. Oracle Archivelog mode, and identification key logging • Create the target database DMS user • Create a replication server. (there’s a button) • Create source and target endpoints that have connection information about your data stores. • Create one or more tasks to migrate data between the source and target data stores. www.percona.com

18 .Create a replication instance www.percona.com

19 .Create tasks www.percona.com

20 .Create a tablemapping www.percona.com

21 .Status information www.percona.com

22 .How can Amazon database migration service help • Feasibility study • Can we migrate to required technology? SCT/DMS • What are the limitations to the future technology? • What problems could we envision moving to the new technology. • What is really technology specific? • Stored procedures • Specific plugins • Proof of Concept setup • Setup infrastructure SCT/DMS • Conversion of the Schema (this will take effort) • Migration of the data • Benchmarking testing • Application testing • Migration planning DMS • Migration • … www.percona.com

23 .Best Practices for replication instance • Make sure you scale it enough, investigate how much caching is required for the data set • Parallel import (8 tables are loaded by default) • Limit or increase the threads • Having multiple tasks can put strain on the replication instance • Data migration services create full table scans on the source environment • It does migrate the schema if it can but SCT might be better to do so. (Best practice) • Limit LOB’s (as it might influence replication performance) www.percona.com

24 .Best Practices for replication instance • Make sure to migrate the Data if you did not do this prior to it. • Activate task log • Don’t do DDL’s on the source database (it will ignore most of them) www.percona.com

25 .Best Practices for replication instance • It doesn't create secondary indexes, non-primary key constraints. (remove them from the source) • Foreign key constraints need to be disabled during the migration's "full load" and "cached change apply" phases. • Avoid using Multi-AZ on the target during migration of the data. • Perform validation!!! EnableValidation = true www.percona.com

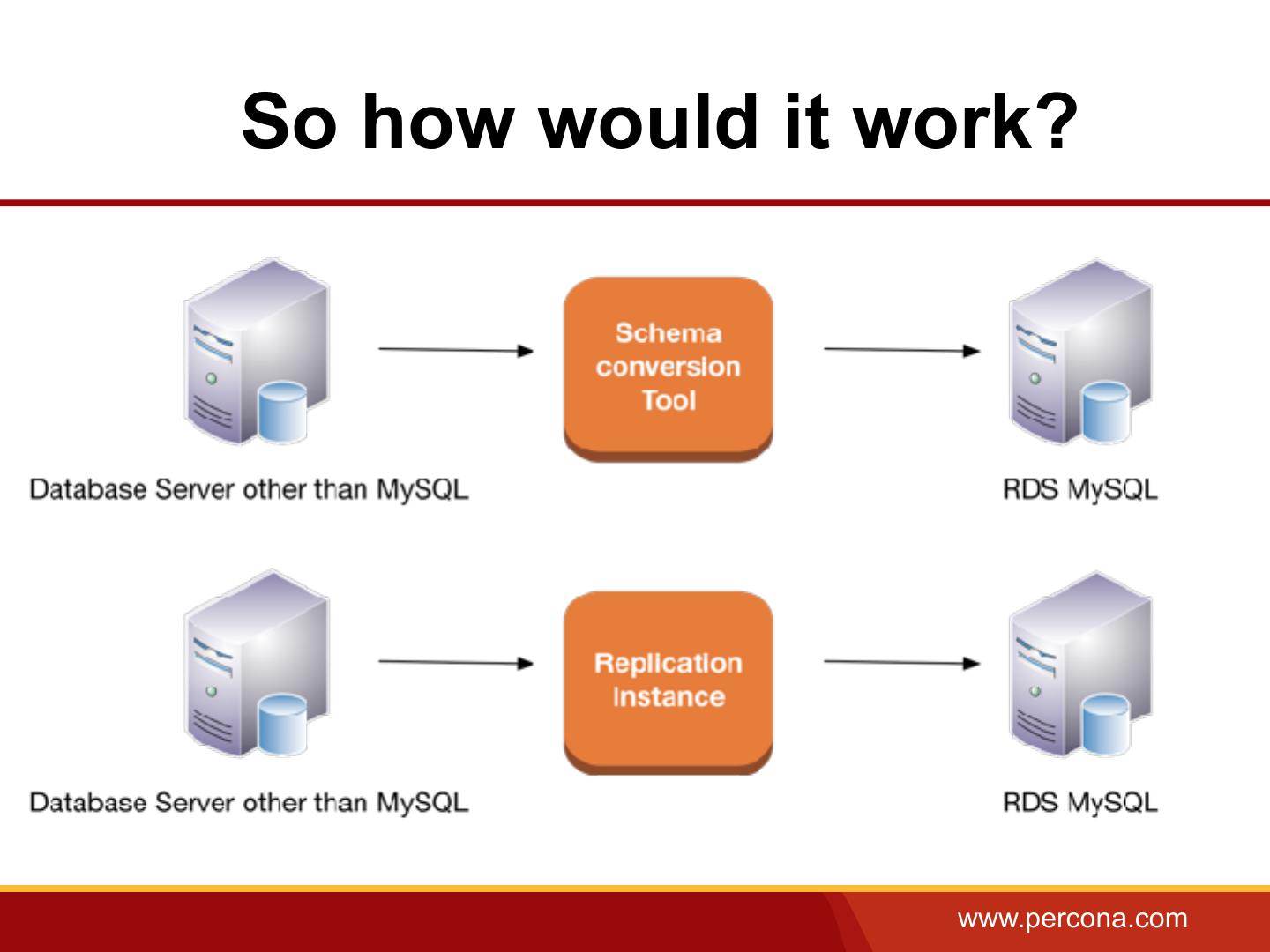

26 .So how would it work? www.percona.com

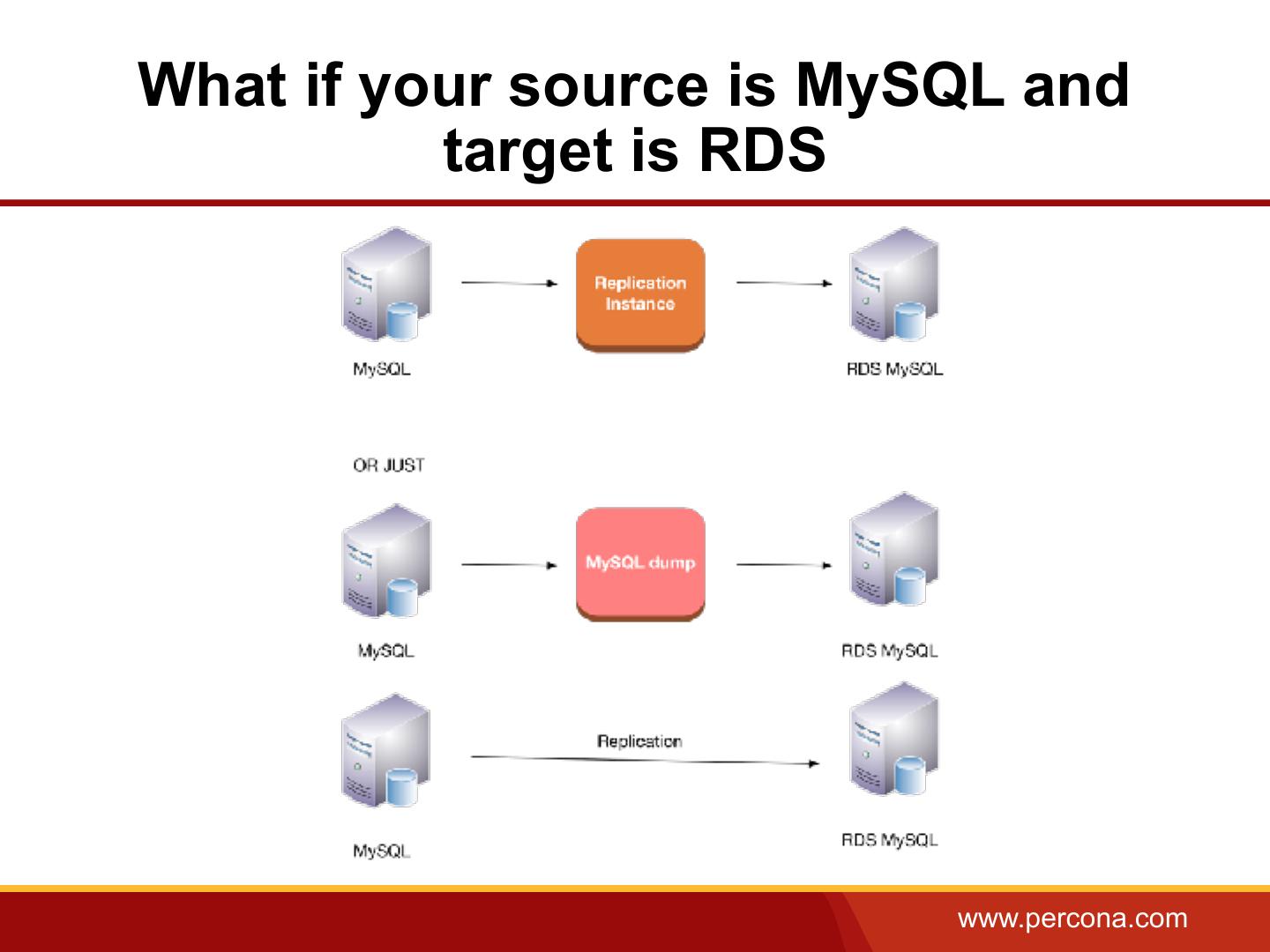

27 .What if your source is MySQL and target is RDS www.percona.com

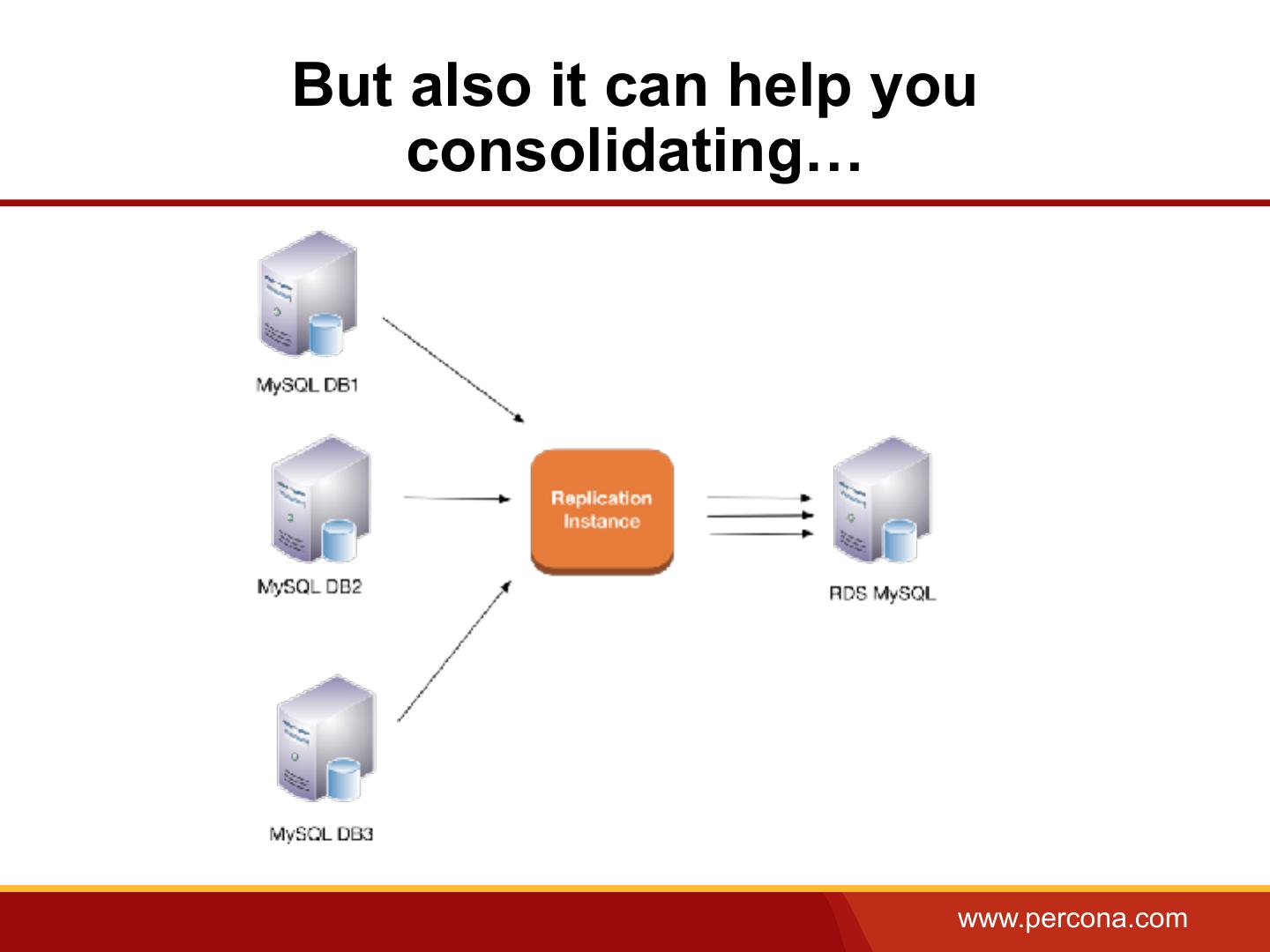

28 .But also it can help you consolidating… www.percona.com

29 . Stay aware • Make sure you are aware what you are getting into. • This tool is to facilitate migrations not make them a success • Test your migration and document your findings • Imply your development team, moving to another database engine might require training • Understand that some parts are a blackbox www.percona.com