Millions of Core Banking online payment transactions with TiDB


1. Millions of core banking online payment transactions with TiDB (Yu Jun, yujun@pingcap.com)

2. About me

- Yu Jun (余军), Senior Solution Architect, PingCAP
- CTO@BSG China / AE@Teradata / Red Hat / Hewlett-Packard
- Distributed & availability / FOSS / FSI Industry
- 20+ years as an infrastructure engineer, open source advocate
- yujun@pingcap.com

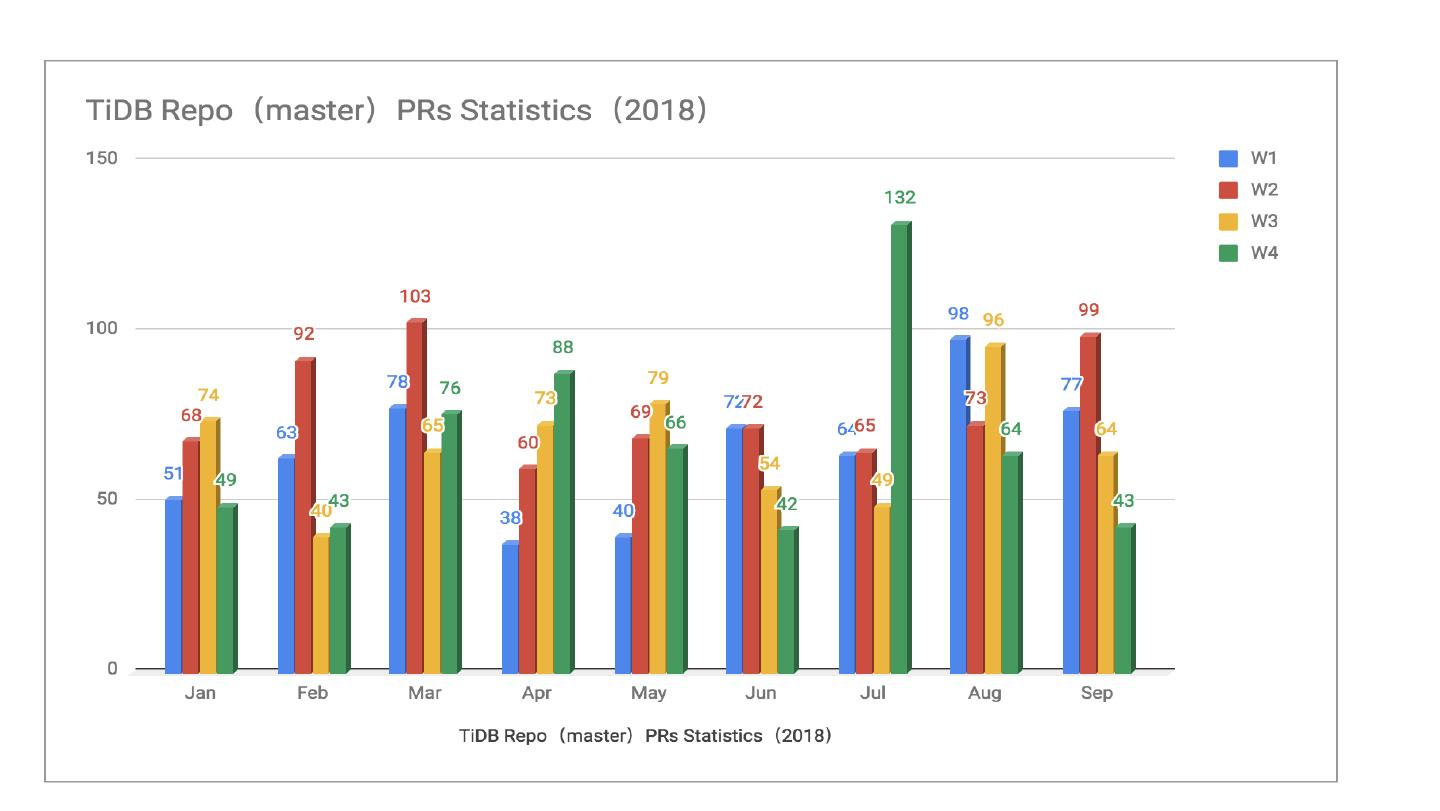

3. TiDB Stars Increasing Trend; TiDB Contributors Increasing Trend

4.

5.

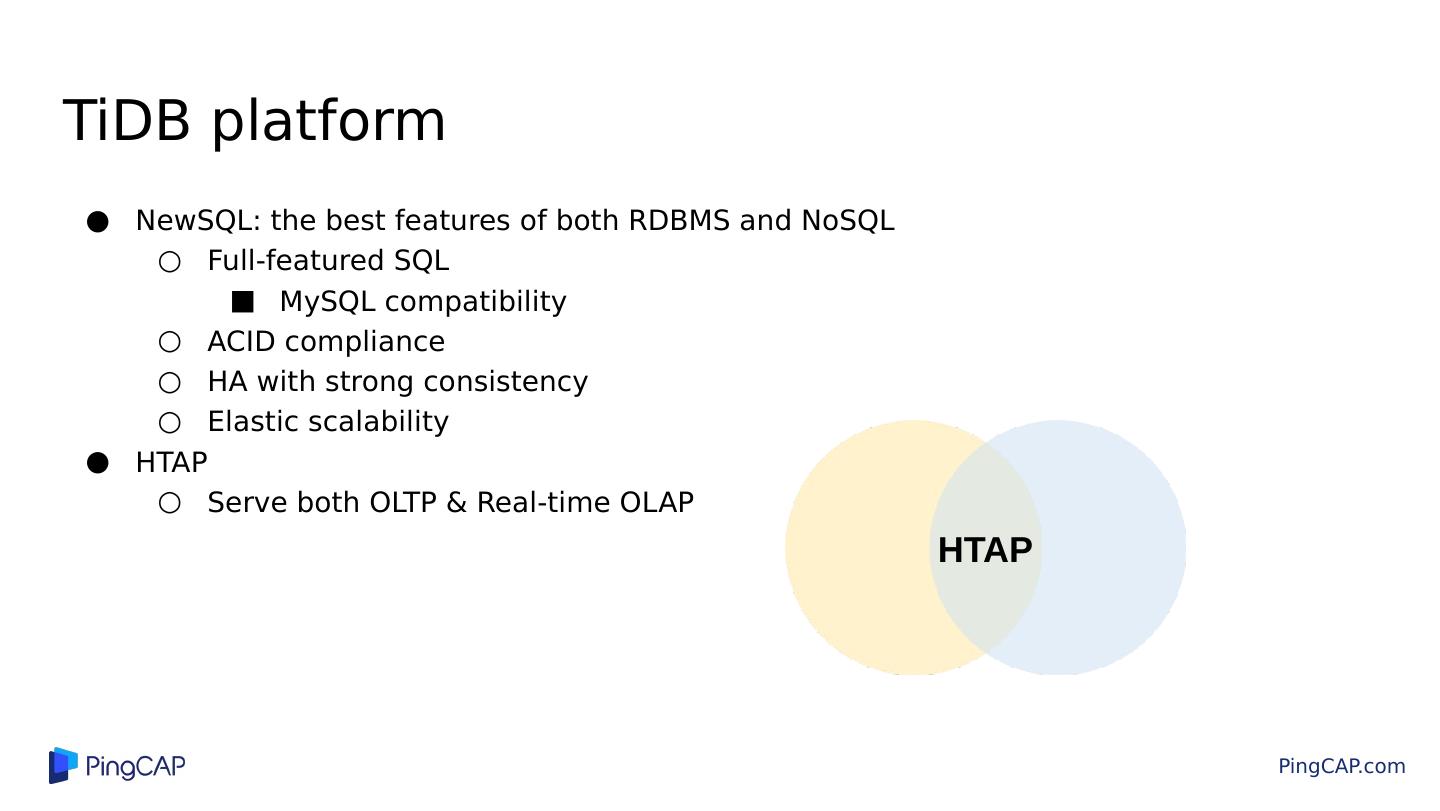

6. TiDB platform

- NewSQL: the best features of both RDBMS and NoSQL
- Full-featured SQL
- MySQL compatibility
- ACID compliance
- HA with strong consistency
- Elastic scalability
- HTAP: serves both OLTP and real-time OLAP

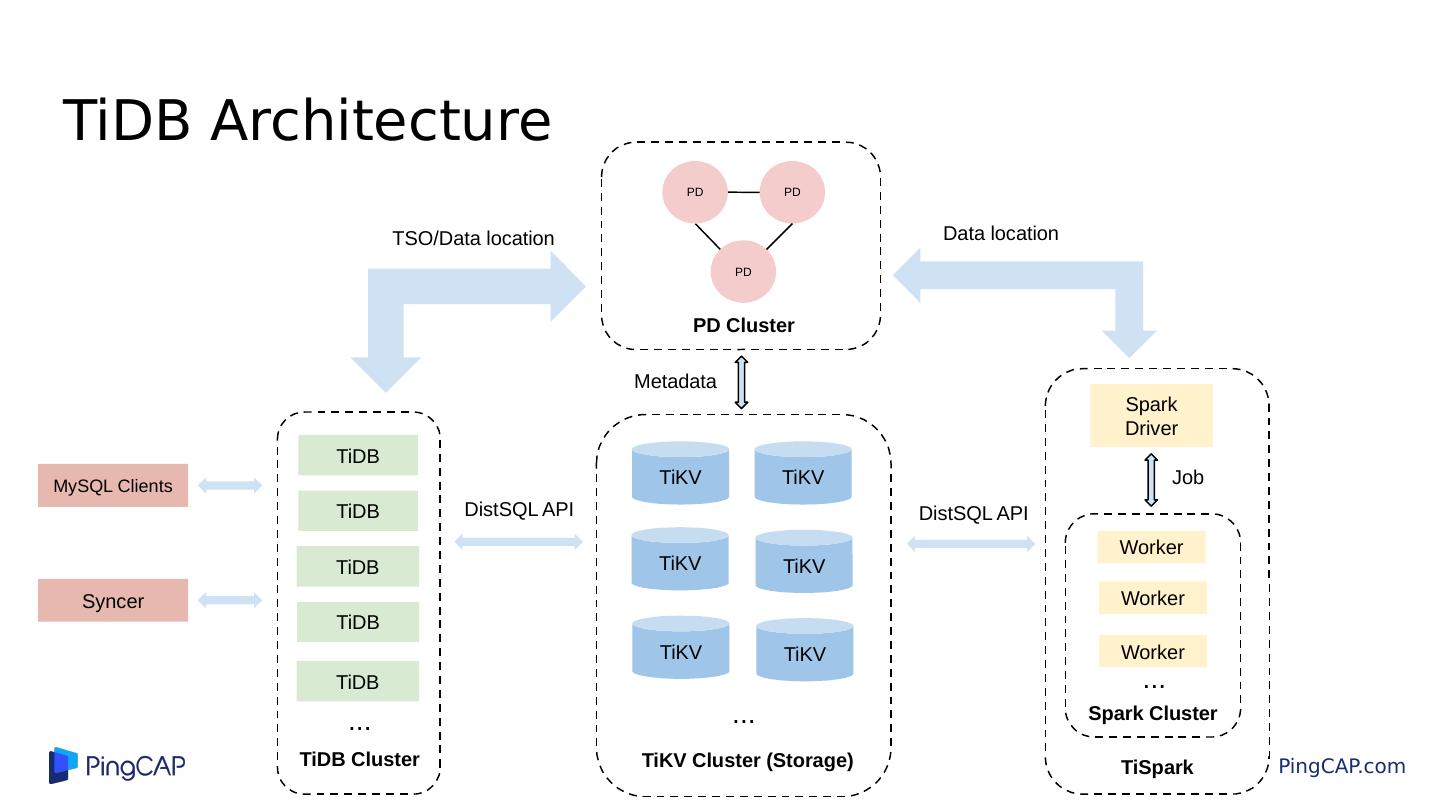

7. TiDB Architecture

[Diagram: MySQL clients and Syncer connect to the TiDB cluster. The TiDB servers and the Spark cluster (Spark driver and workers running TiSpark) read from the TiKV cluster (storage) through the DistSQL API. The PD cluster provides metadata, TSO, and data location.]

8. Components

- TiDB (tidb-server)
- TiKV (tikv-server)
- Placement Driver (PD)
- TiSpark (Analytic Engine)
- TheFlash (Column-based Analytic Engine)
- Tools (syncer / TiDB-Lightning / {tikv,pd}-ctl)
- TiDB-operator for Kubernetes

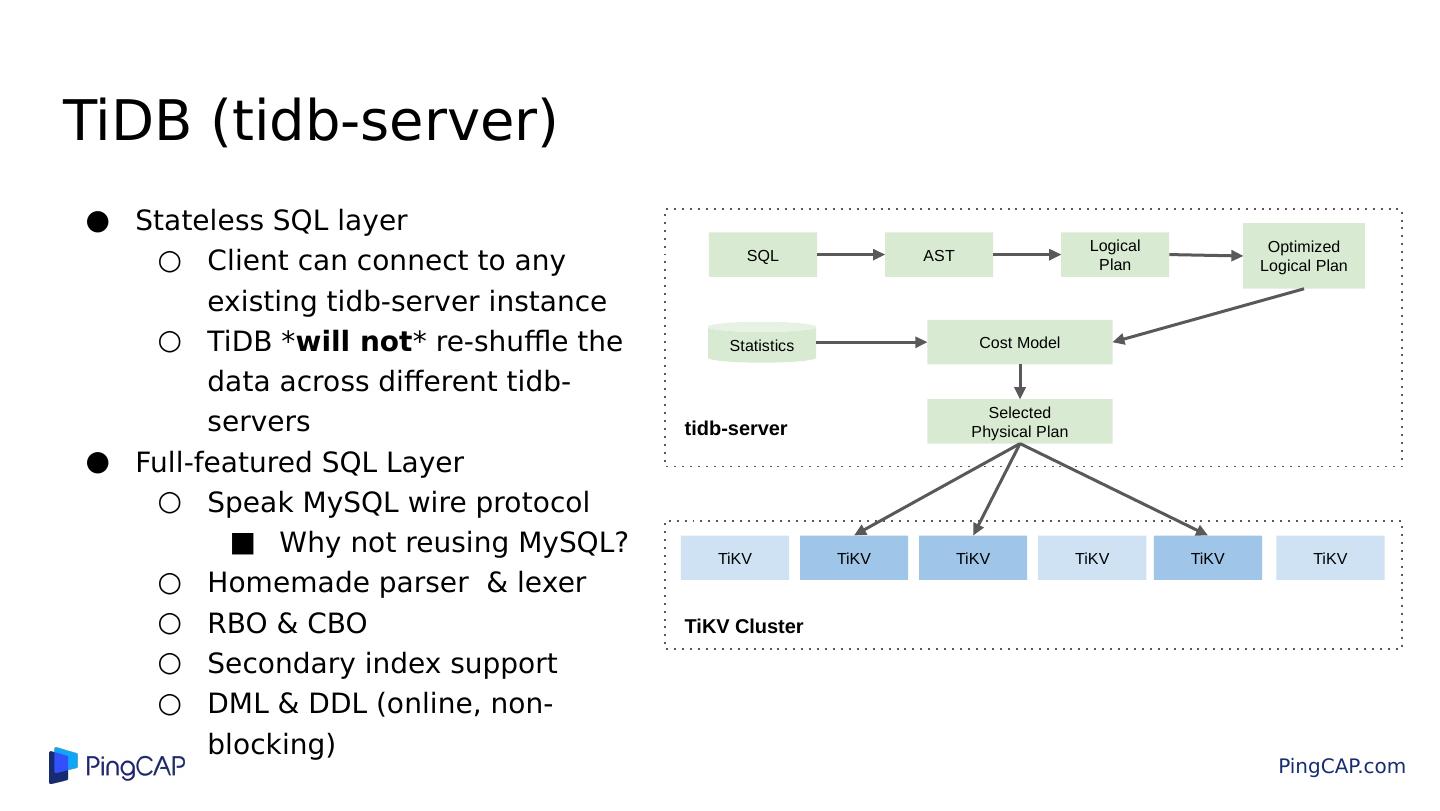

9. TiDB (tidb-server)

- Stateless SQL layer
  - Clients can connect to any existing tidb-server instance
  - TiDB *will not* re-shuffle the data across different tidb-servers
- Full-featured SQL layer
  - Speaks the MySQL wire protocol
  - Why not reuse MySQL?
  - Homemade parser & lexer
  - RBO & CBO
  - Secondary index support
  - DML & DDL (online, non-blocking)

[Diagram: inside tidb-server, SQL -> AST -> Logical Plan -> Optimized Logical Plan -> Selected Physical Plan, chosen using the cost model and statistics; the selected plan is executed against the TiKV cluster.]

10. Table mapping - From SQL to KV

What happens behind the scenes:

CREATE TABLE user ( id INT PRIMARY KEY, name TEXT, email TEXT );

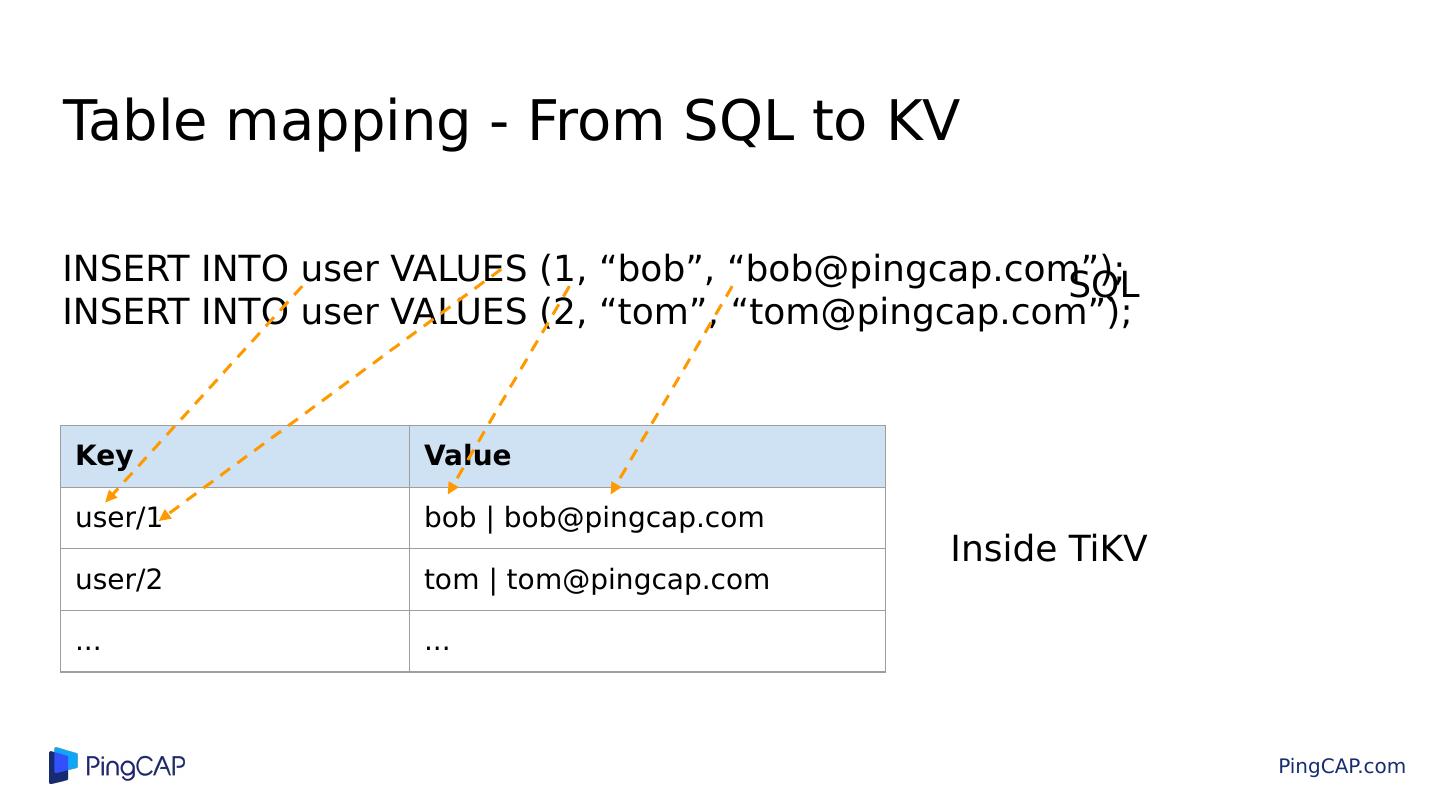

11. Table mapping - From SQL to KV

SQL:

- INSERT INTO user VALUES (1, 'bob', 'bob@pingcap.com');
- INSERT INTO user VALUES (2, 'tom', 'tom@pingcap.com');

Inside TiKV (Key => Value):

- user/1 => bob | bob@pingcap.com
- user/2 => tom | tom@pingcap.com
- ...
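The row-to-KV mapping on this slide can be sketched in a few lines of Python. This is only an illustration of the simplified `table/primary_key` layout shown above: the function name `encode_row` and the `" | "` value separator are assumptions made for the example, and TiDB's real on-disk encoding is a binary format that differs from this.

```python
def encode_row(table, primary_key, columns):
    """Build one (key, value) pair in the simplified 'table/pk' layout from the slide."""
    key = f"{table}/{primary_key}"
    value = " | ".join(columns)  # hypothetical value format: columns joined by " | "
    return key, value


rows = [(1, "bob", "bob@pingcap.com"), (2, "tom", "tom@pingcap.com")]

# Each SQL row becomes exactly one key-value pair, keyed by table name and primary key.
kv_store = dict(encode_row("user", pk, cols) for pk, *cols in rows)

for key in sorted(kv_store):
    print(key, "=>", kv_store[key])
```

Because the key starts with the table name followed by the primary key, rows of one table sit next to each other in a byte-sorted key space, which is what makes range scans by primary key cheap.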

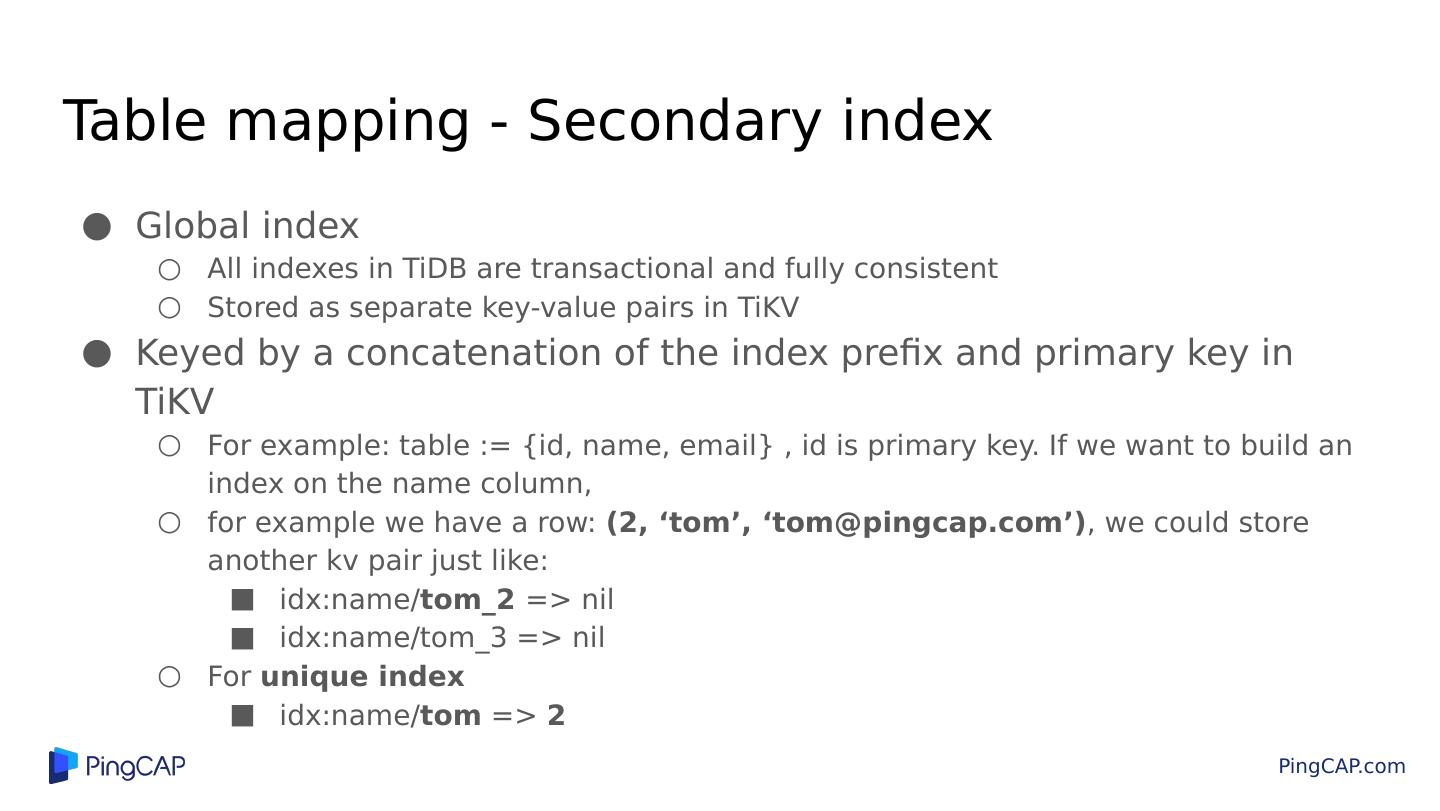

12. Table mapping - Secondary index

- Global index
- All indexes in TiDB are transactional and fully consistent
- Stored as separate key-value pairs in TiKV
- Keyed by a concatenation of the index prefix and the primary key in TiKV

For example, take table := {id, name, email}, where id is the primary key. To build an index on the name column for the row (2, 'tom', 'tom@pingcap.com'), we store another KV pair per indexed row:

- idx:name/tom_2 => nil
- idx:name/tom_3 => nil

For a unique index, the primary key moves into the value:

- idx:name/tom => 2
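A minimal Python sketch of the index layout described above, assuming the same simplified string keys as the slide. The helper names `index_entry` and `lookup_ids` are hypothetical, and the `_` separator is only safe for this toy data; the real encoding is binary and unambiguous.

```python
def index_entry(index_name, value, row_id, unique=False):
    """Return the (key, value) pair stored for one indexed row."""
    if unique:
        # Unique index: the indexed value alone is the key, the row id is the value.
        return f"idx:{index_name}/{value}", row_id
    # Non-unique index: append the row id to the key so equal values do not collide.
    return f"idx:{index_name}/{value}_{row_id}", None


def lookup_ids(index, index_name, value):
    """Find the row ids for a value in a non-unique index with a prefix scan."""
    prefix = f"idx:{index_name}/{value}_"
    return sorted(int(key[len(prefix):]) for key in index if key.startswith(prefix))


# Two rows share the name 'tom' (ids 2 and 3), as in the slide.
name_index = dict(index_entry("name", "tom", row_id) for row_id in (2, 3))
print(name_index)                             # both keys map to None (nil)
print(lookup_ids(name_index, "name", "tom"))  # row ids recovered from the keys
print(index_entry("name", "tom", 2, unique=True))
```

The row ids found this way are then used to fetch the full rows from the `user/<id>` keys.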

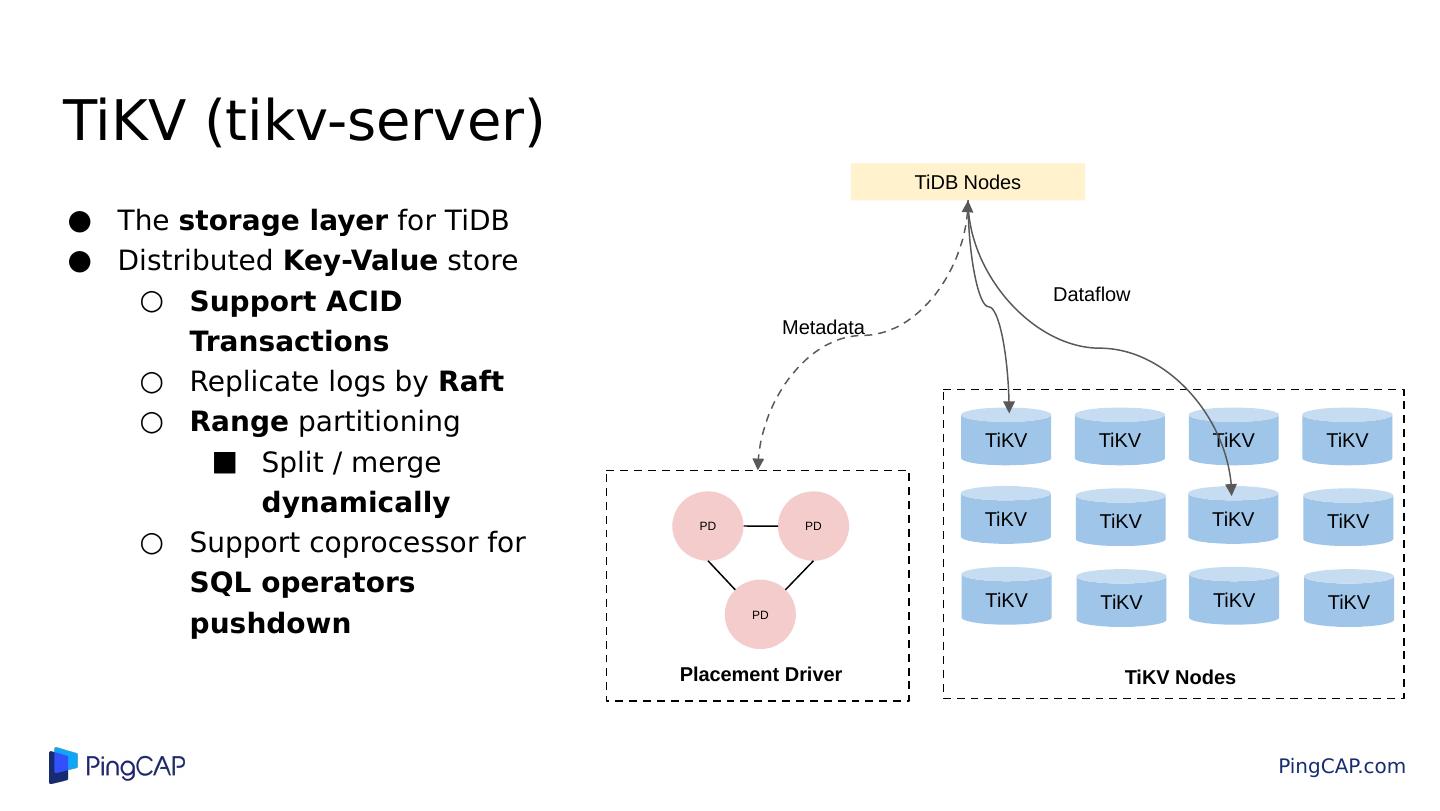

13. TiKV (tikv-server)

- The storage layer for TiDB
- Distributed key-value store
- Supports ACID transactions
- Replicates logs by Raft
- Range partitioning, with dynamic split / merge
- Supports a coprocessor for SQL operator pushdown

[Diagram: TiDB nodes, TiKV nodes, and the Placement Driver (PD) cluster, with metadata and dataflow paths between them.]

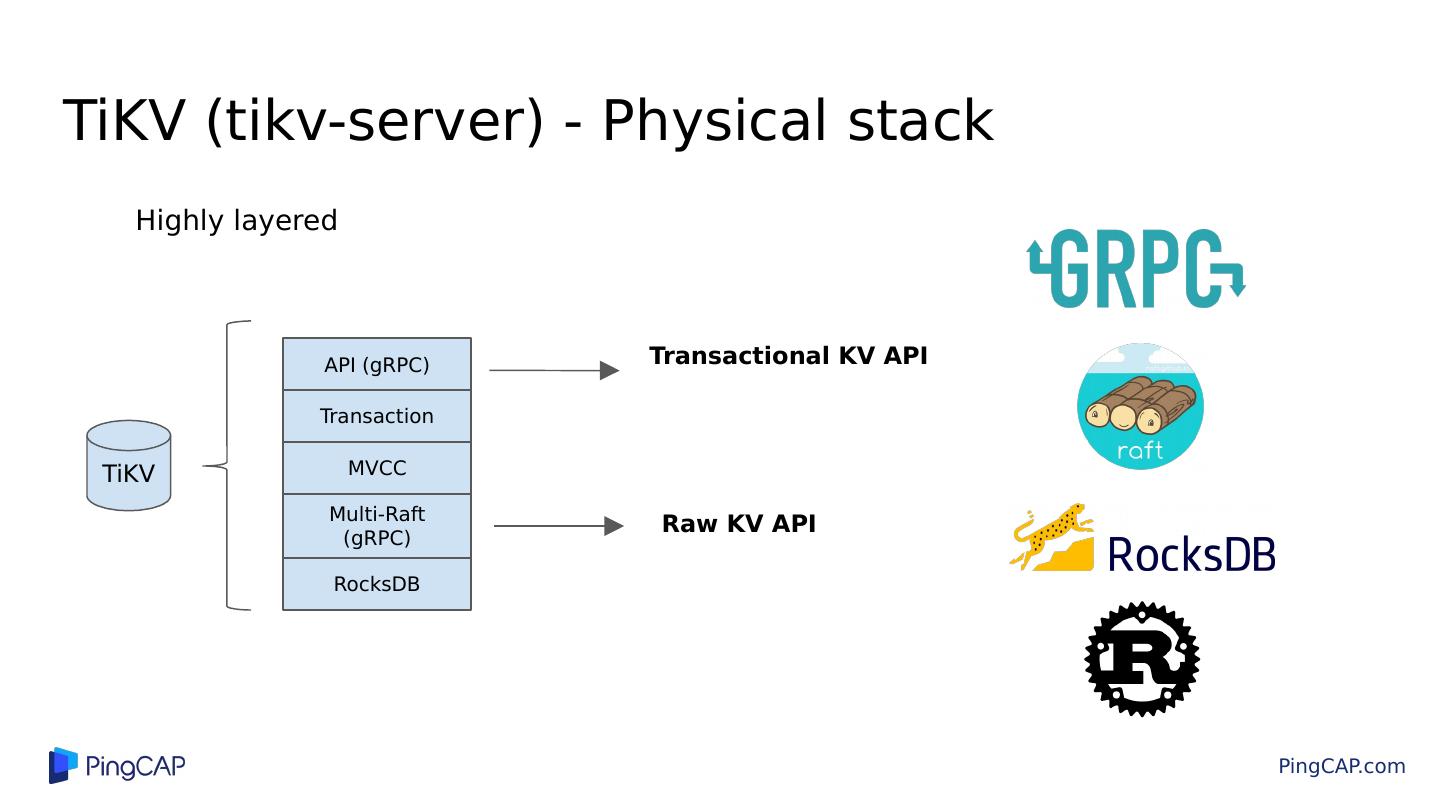

14. TiKV (tikv-server) - Physical stack

Highly layered:

- TiKV API (gRPC): Raw KV API and Transactional KV API
- Transaction
- MVCC
- Multi-Raft (gRPC)
- RocksDB

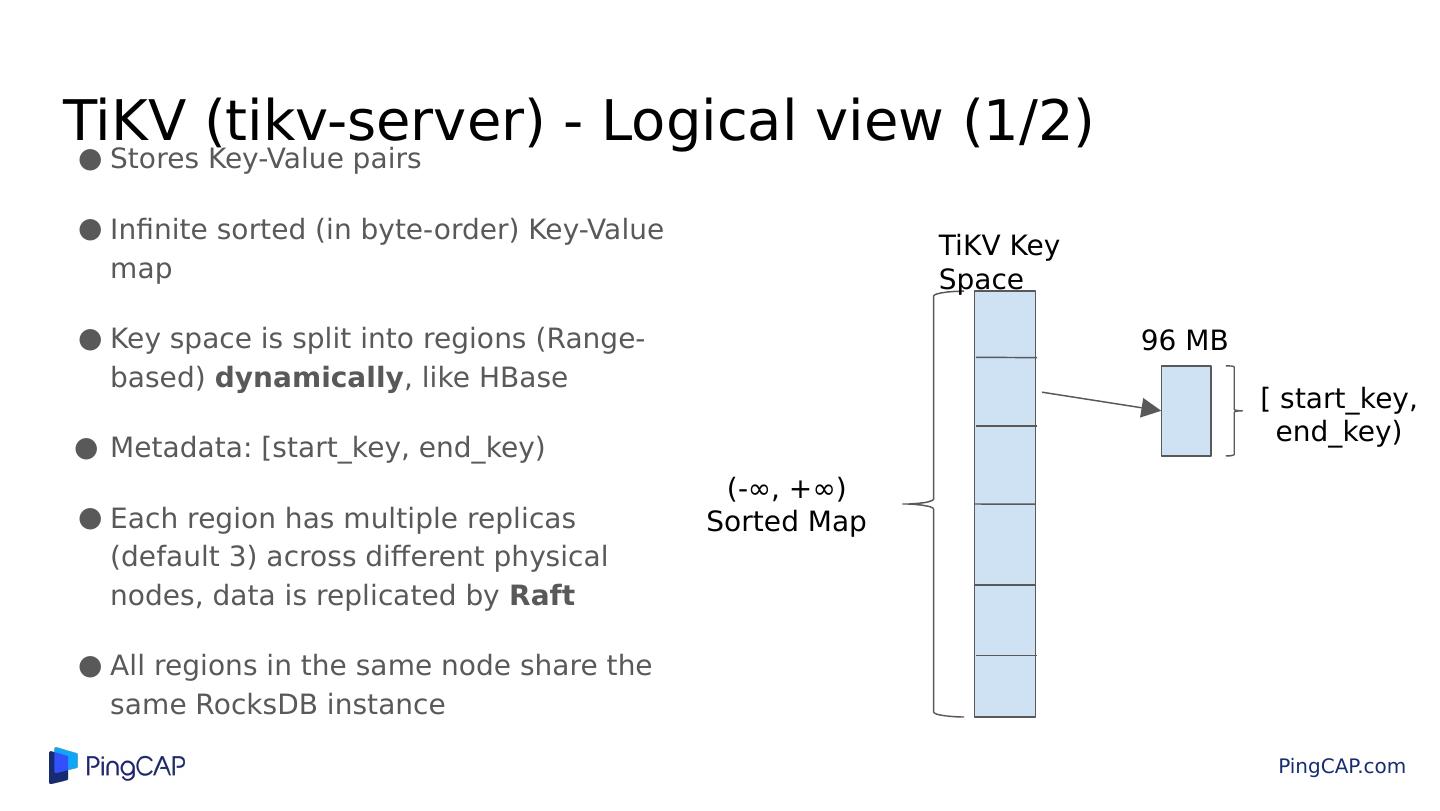

15. TiKV (tikv-server) - Logical view (1/2)

- Stores key-value pairs
- An infinite, sorted (in byte order) key-value map
- The key space is split dynamically into regions (range-based), like HBase
- Region metadata: [start_key, end_key)
- Each region has multiple replicas (default 3) across different physical nodes; data is replicated by Raft
- All regions on the same node share the same RocksDB instance

[Diagram: the TiKV key space (-∞, +∞) as a sorted map cut into regions [start_key, end_key), with a region labelled 96 MB.]
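The range-based region layout can be illustrated with a small Python sketch. It is a toy model under stated assumptions: `RegionMap` is a hypothetical class, the empty byte string stands in for -∞, `None` stands in for +∞, and splits are triggered by hand rather than by region size.

```python
import bisect


class RegionMap:
    """Toy model of a byte-ordered key space cut into [start_key, end_key) regions."""

    def __init__(self):
        # Sorted start keys; b"" plays the role of -infinity, so one region covers everything.
        self.starts = [b""]

    def split(self, key):
        """Split the region containing `key` so that a new region starts at `key`."""
        i = bisect.bisect_left(self.starts, key)
        if i < len(self.starts) and self.starts[i] == key:
            return  # a region already starts here
        self.starts.insert(i, key)

    def locate(self, key):
        """Return (start_key, end_key) of the region holding `key`; end None means +infinity."""
        i = bisect.bisect_right(self.starts, key) - 1
        end = self.starts[i + 1] if i + 1 < len(self.starts) else None
        return self.starts[i], end


regions = RegionMap()
regions.split(b"d")
regions.split(b"h")
print(regions.locate(b"a"))  # falls in the first region
print(regions.locate(b"d"))  # start keys are inclusive
print(regions.locate(b"z"))  # last region is open-ended
```

Keeping only the sorted start keys is enough to route any key with a binary search, which is why region metadata can stay small even when the data is large.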

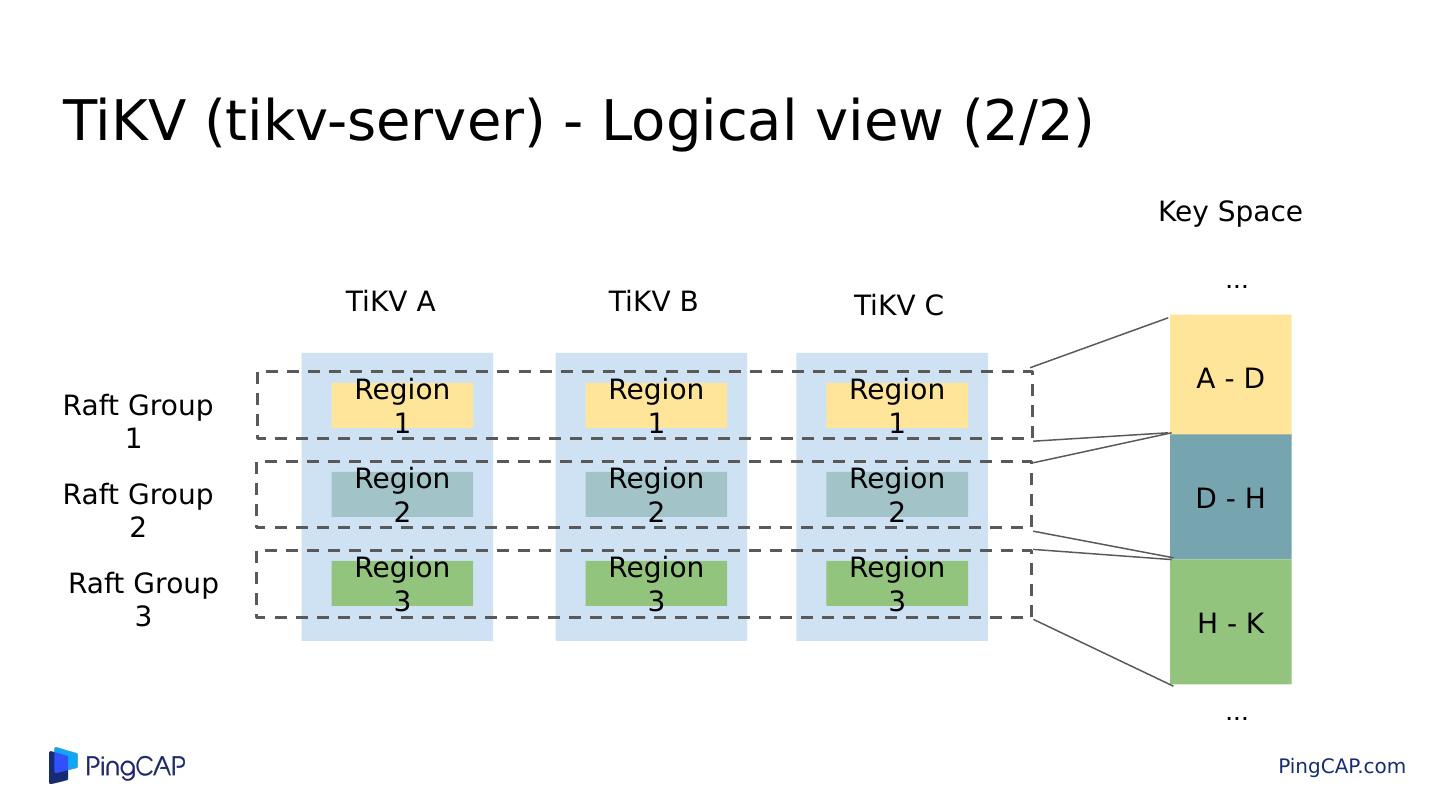

16. TiKV (tikv-server) - Logical view (2/2)

[Diagram: the key space is divided into Region 1 (A - D), Region 2 (D - H), Region 3 (H - K), and so on. TiKV A, TiKV B, and TiKV C each hold a replica of every region; the replicas of Region 1, Region 2, and Region 3 form Raft Group 1, Raft Group 2, and Raft Group 3.]

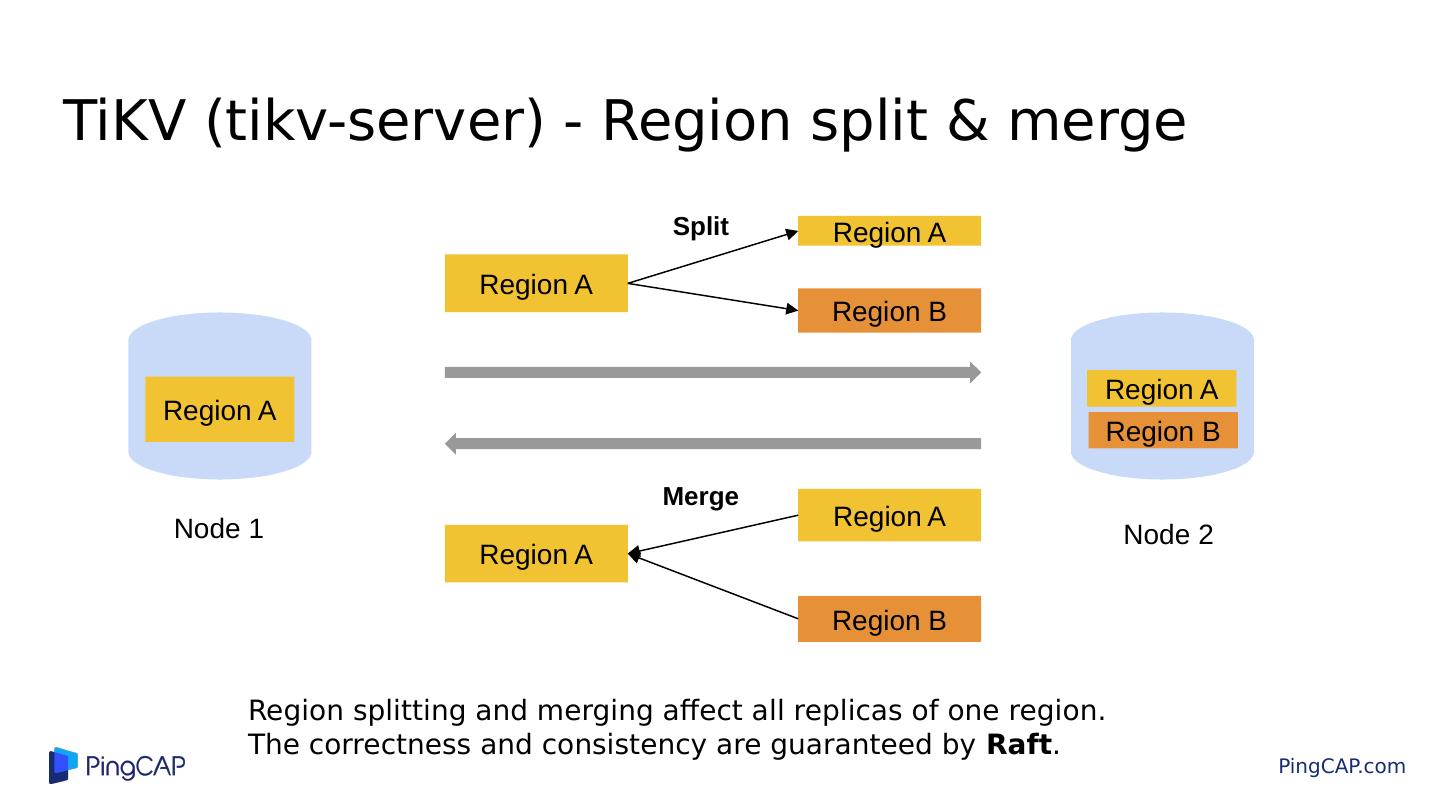

17. TiKV (tikv-server) - Region split & merge

[Diagram: on Node 1 and Node 2, Region A splits into Region A and Region B; Region A and Region B merge back into Region A.]

Region splitting and merging affect all replicas of one region. Correctness and consistency are guaranteed by Raft.

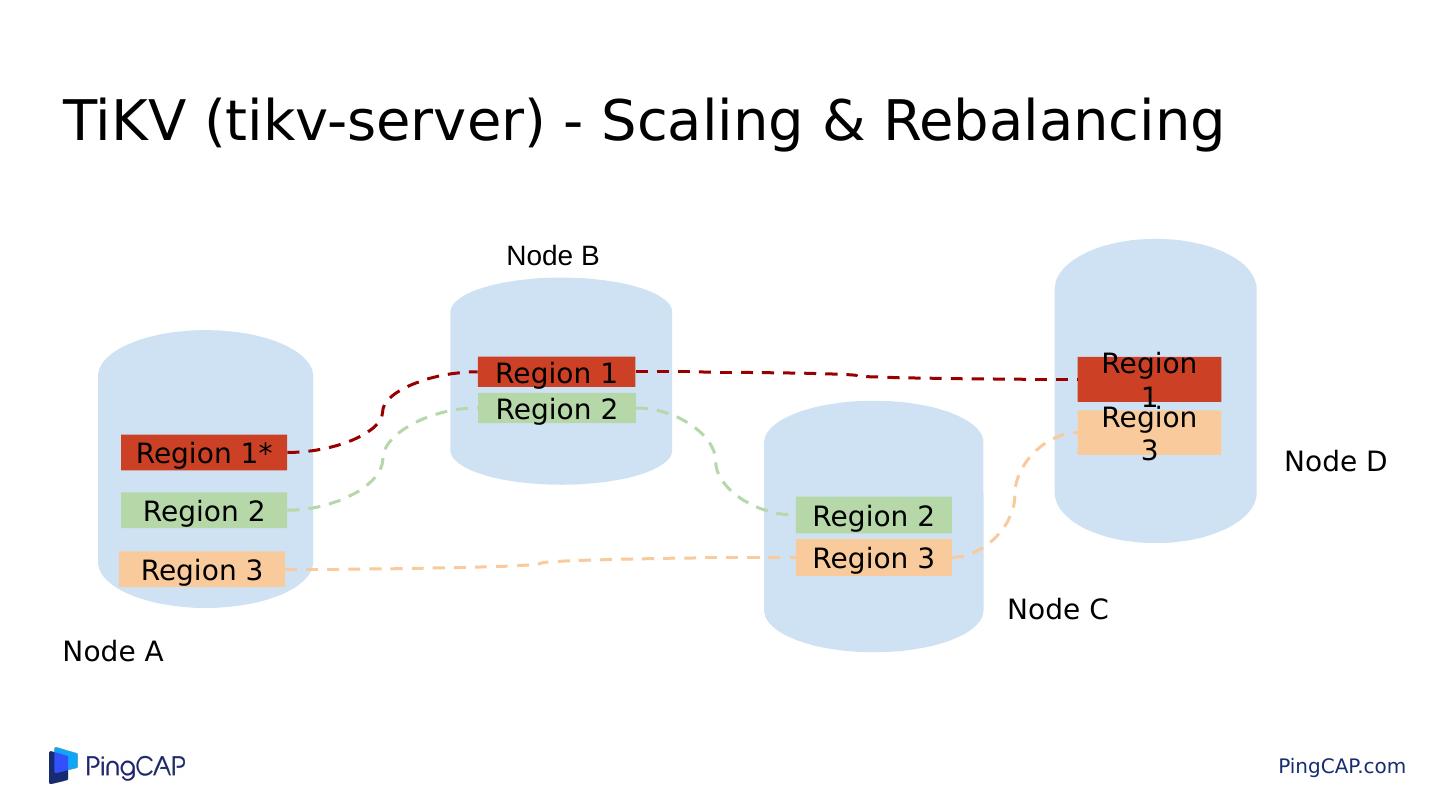

18. TiKV (tikv-server) - Scaling & Rebalancing

[Diagram: initial state. Replicas of Region 1, Region 2, and Region 3 are spread across Node A, Node B, Node C, and Node D; one replica is marked Region 1*.]

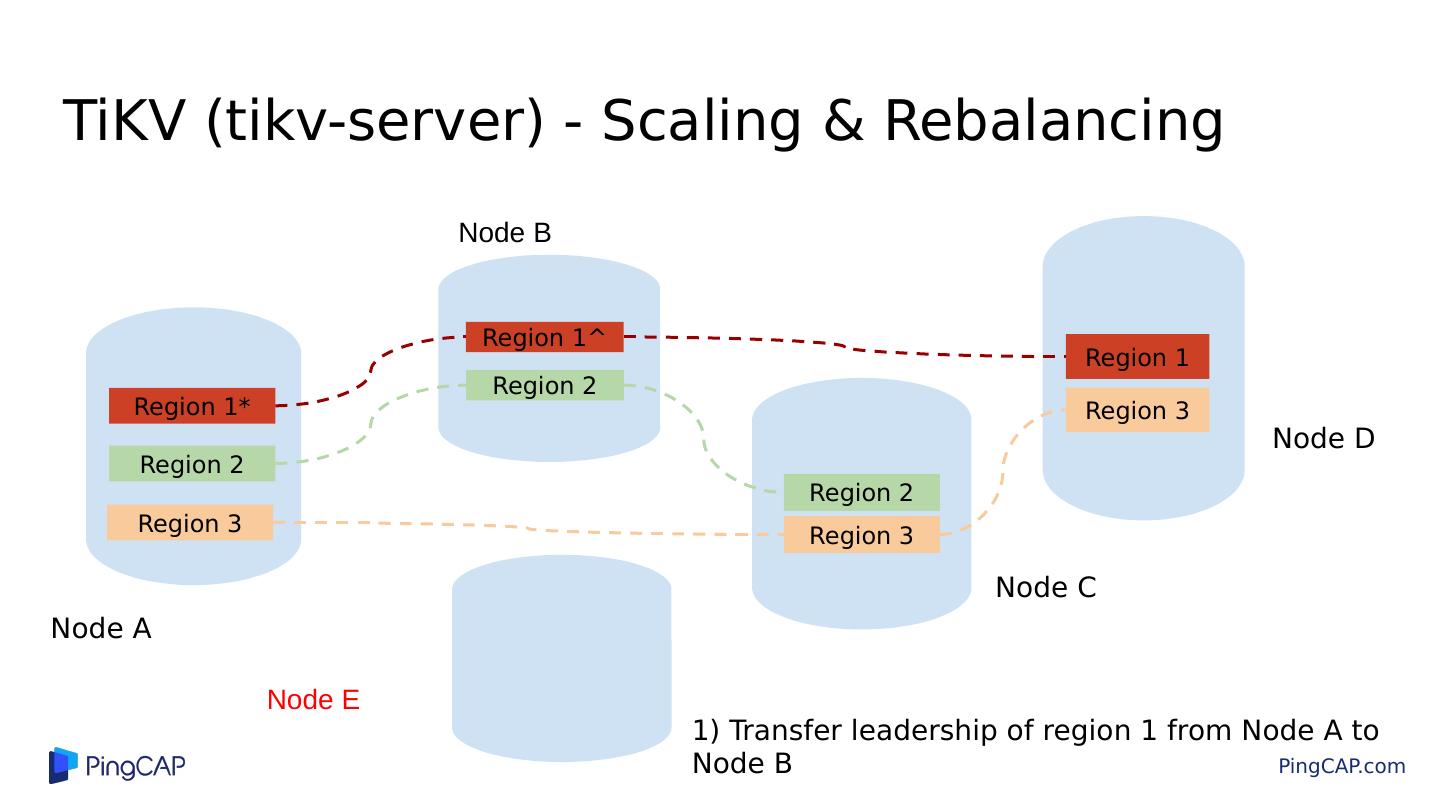

19. TiKV (tikv-server) - Scaling & Rebalancing

1) Transfer leadership of Region 1 from Node A to Node B.

[Diagram: replicas of Regions 1 to 3 on Nodes A to D, plus Node E.]

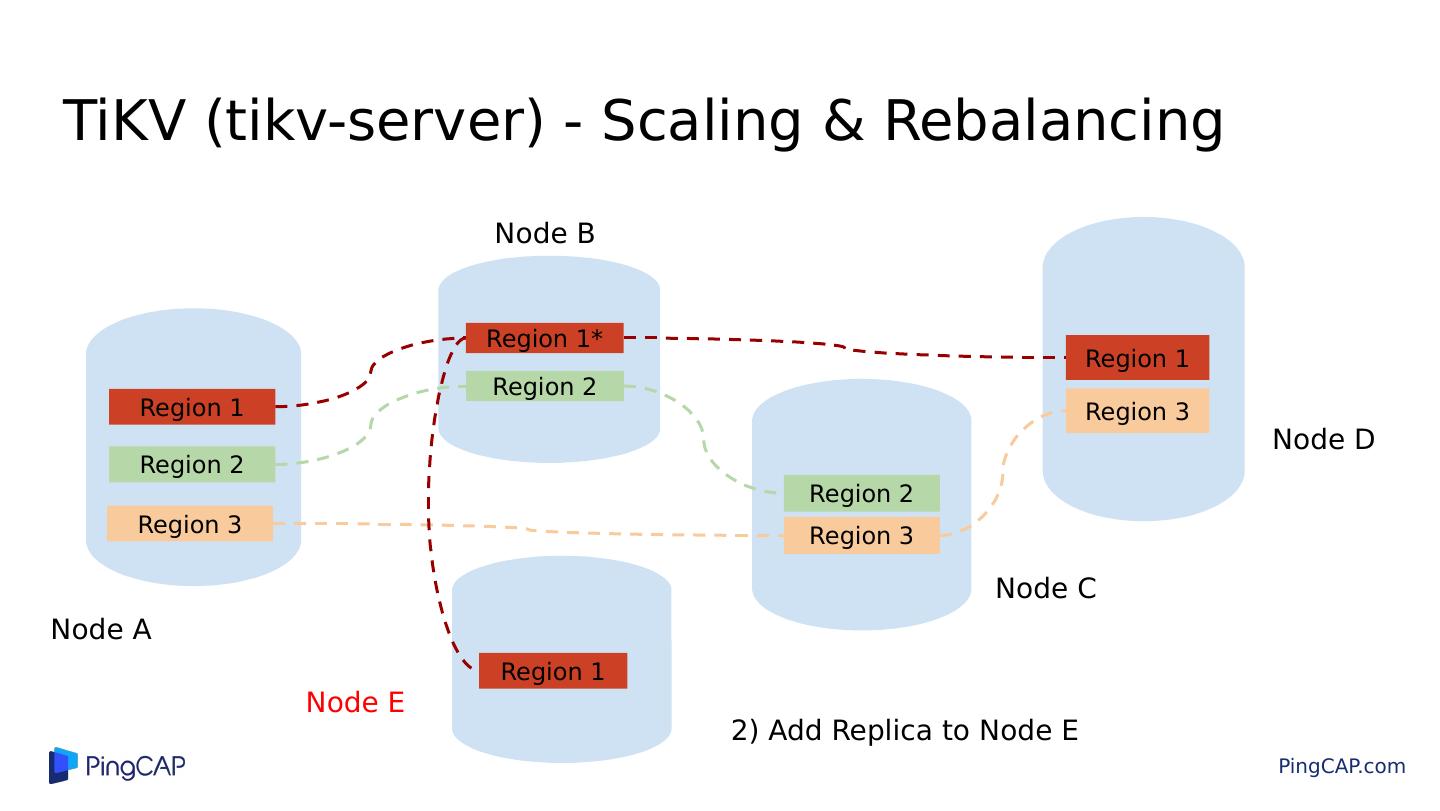

20. TiKV (tikv-server) - Scaling & Rebalancing

2) Add a replica to Node E.

[Diagram: Node E now holds a replica of Region 1.]

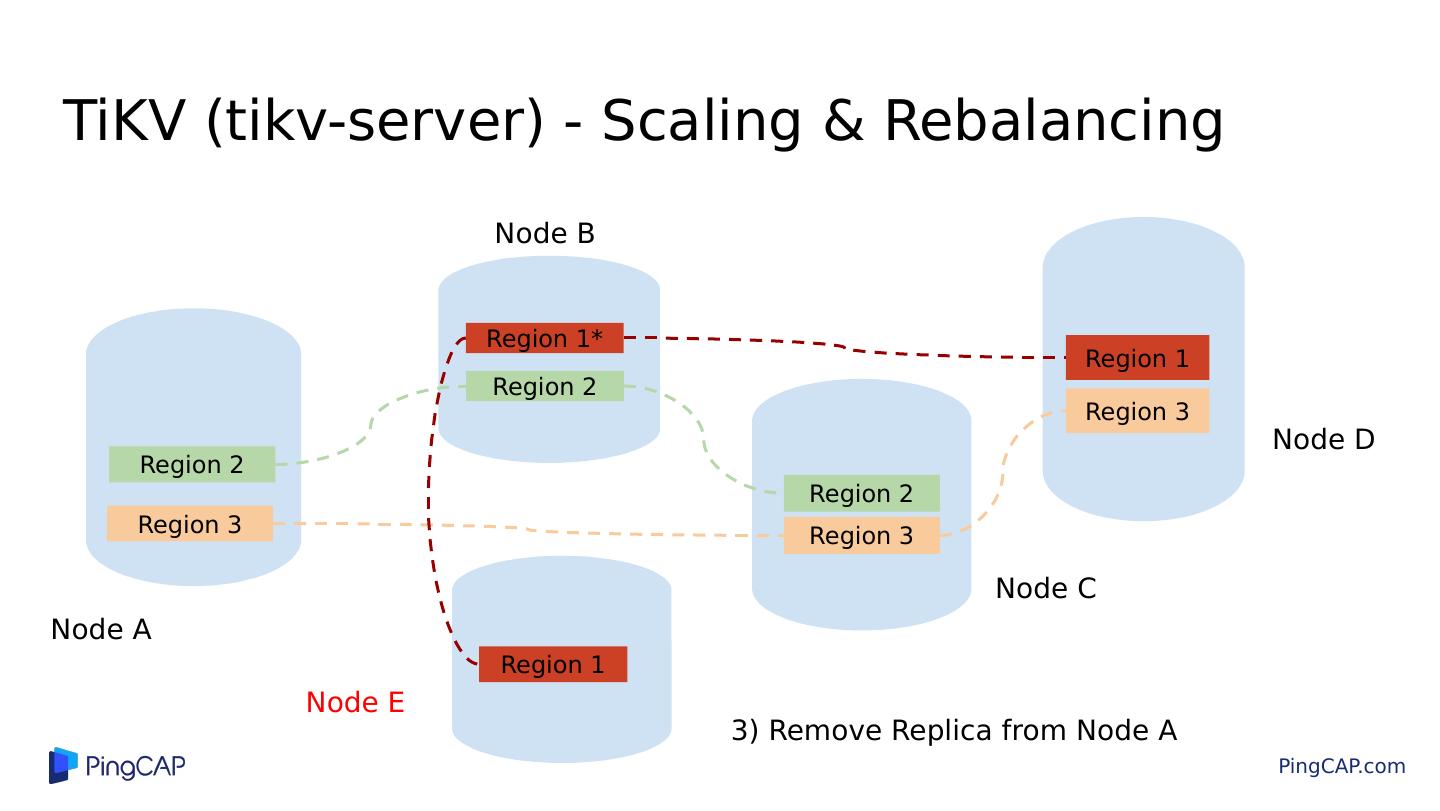

21. TiKV (tikv-server) - Scaling & Rebalancing

3) Remove the replica from Node A.

[Diagram: the Region 1 replicas now sit on the remaining nodes, including Node E.]
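The three steps from slides 19 to 21 can be sketched as one function. This is a hedged illustration only: the `region` dictionary, the function name `move_replica`, and the initial placement on Nodes A, B, and C are assumptions for the example, and in a real cluster each step is a Raft membership or leadership change, not a dictionary update.

```python
def move_replica(region, src, dst):
    """Move one replica of a region from node `src` to node `dst` in three steps."""
    # 1) Transfer leadership away from the node that is about to lose its replica.
    if region["leader"] == src:
        region["leader"] = next(n for n in sorted(region["replicas"]) if n != src)
    # 2) Add the new replica first, so the replica count never drops below the target.
    region["replicas"].add(dst)
    # 3) Only then remove the old replica.
    region["replicas"].discard(src)
    return region


# Hypothetical starting point: Region 1 lives on A, B, C with its leader on A.
region_1 = {"leader": "A", "replicas": {"A", "B", "C"}}
move_replica(region_1, src="A", dst="E")
print(region_1["leader"], sorted(region_1["replicas"]))
```

Adding before removing means the region briefly has one extra replica, which is safer than briefly having one too few.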

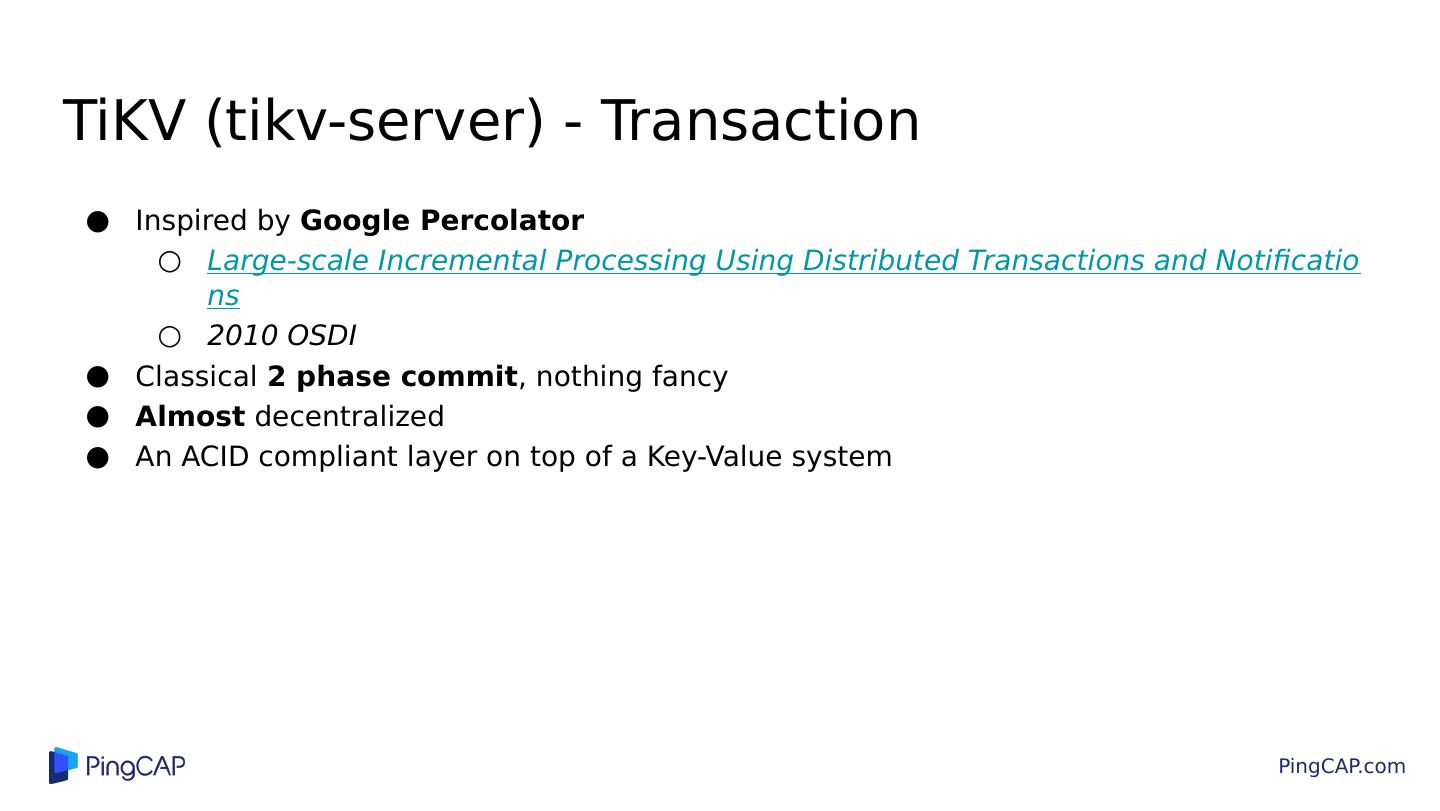

22. TiKV (tikv-server) - Transaction

- Inspired by Google Percolator: "Large-scale Incremental Processing Using Distributed Transactions and Notifications", OSDI 2010
- Classical two-phase commit, nothing fancy
- Almost decentralized
- An ACID-compliant layer on top of a key-value system

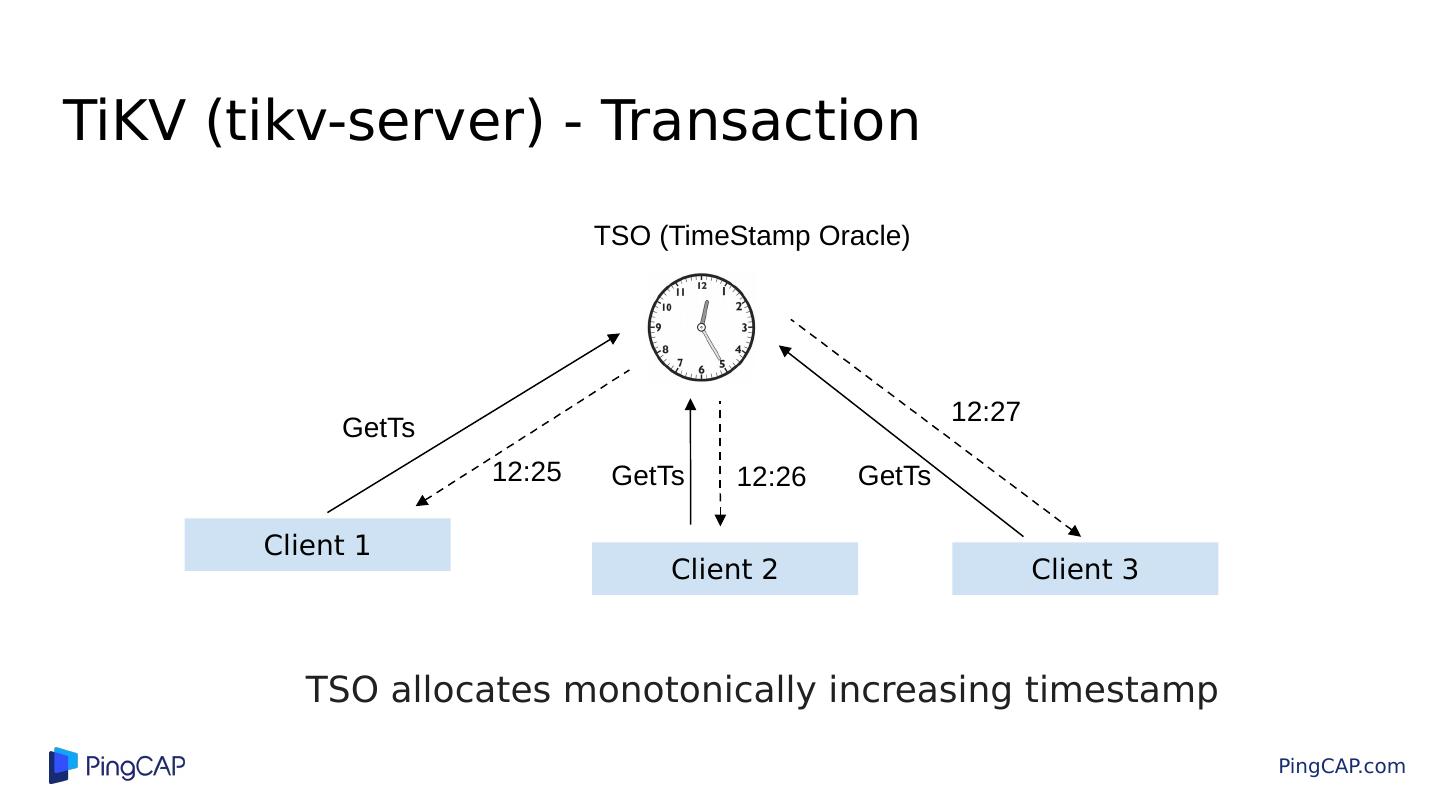

23. TiKV (tikv-server) - Transaction

TSO (TimeStamp Oracle): the TSO allocates monotonically increasing timestamps.

[Diagram: Client 1, Client 2, and Client 3 each call GetTs on the TSO and receive 12:25, 12:26, and 12:27.]
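A minimal single-process sketch of a timestamp oracle, to show what "monotonically increasing" has to guarantee. The class name `TimestampOracle` and the millisecond clock are assumptions for the example; this is not PD's actual allocator, which is replicated and hands out timestamps in a different format.

```python
import threading
import time


class TimestampOracle:
    """Hand out strictly increasing timestamps, even if the wall clock stalls or steps back."""

    def __init__(self):
        self._lock = threading.Lock()
        self._last = 0

    def get_ts(self):
        with self._lock:
            now_ms = time.time_ns() // 1_000_000
            # Never reuse or go below the previous timestamp.
            self._last = max(self._last + 1, now_ms)
            return self._last


tso = TimestampOracle()
stamps = [tso.get_ts() for _ in range(1000)]
print(stamps[0] < stamps[1] < stamps[2])
```

Every transaction that asks for a timestamp gets a value greater than all earlier ones, which gives transactions a single global order to compare against.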

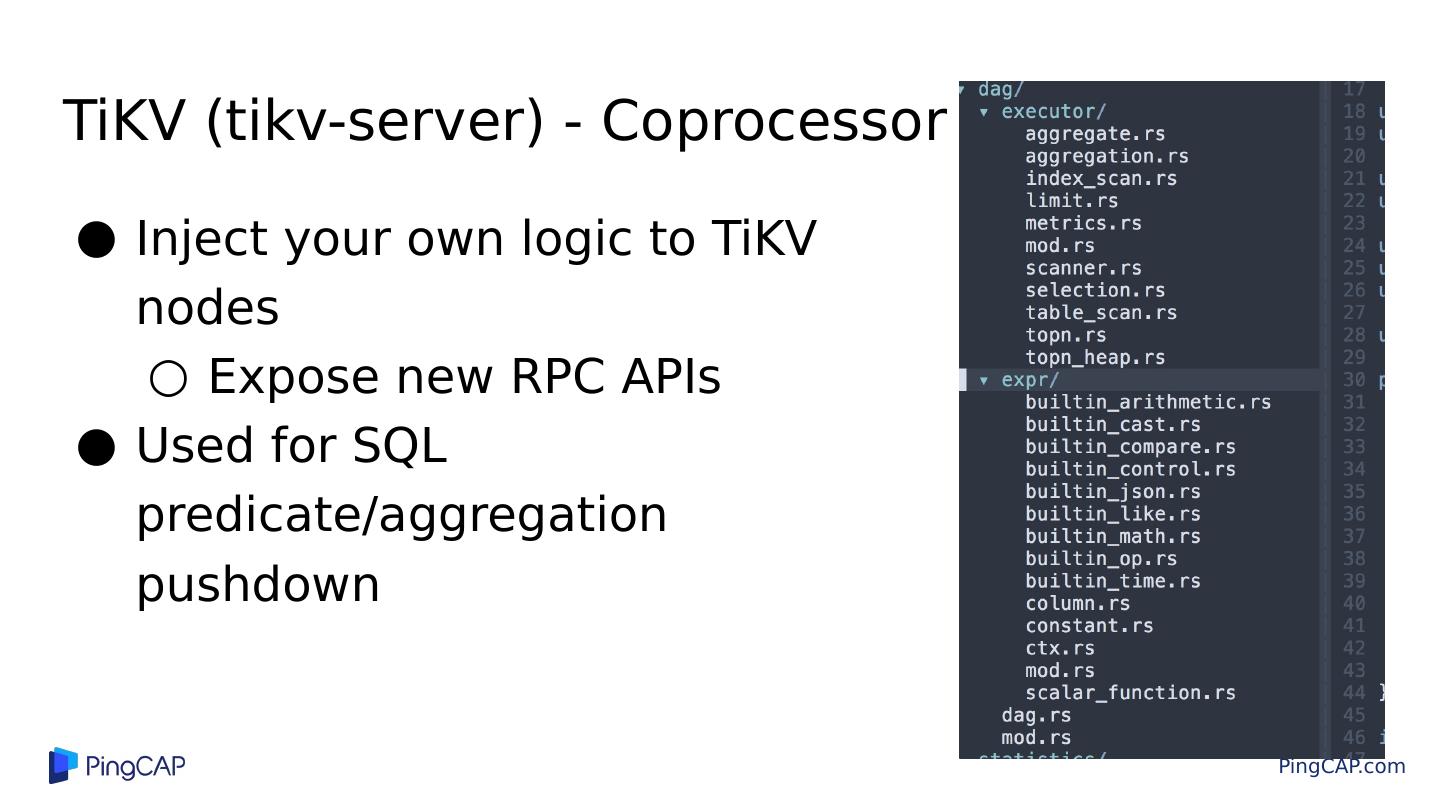

24. TiKV (tikv-server) - Coprocessor

- Inject your own logic into TiKV nodes
- Expose new RPC APIs
- Used for SQL predicate/aggregation pushdown

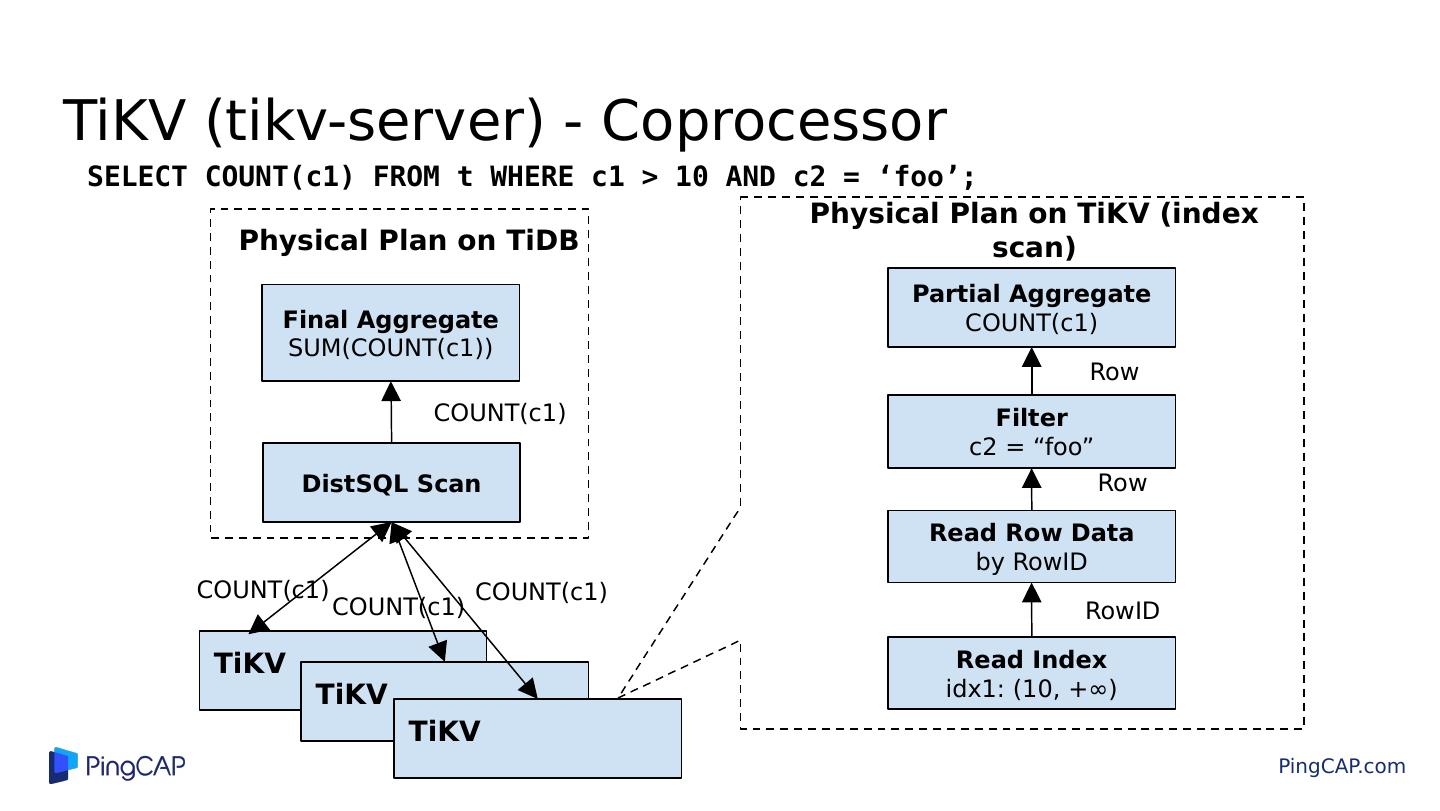

25. TiKV (tikv-server) - Coprocessor

SELECT COUNT(c1) FROM t WHERE c1 > 10 AND c2 = 'foo';

- Physical plan on TiKV (index scan): Read Index idx1: (10, +∞) -> Read Row Data by RowID -> Filter c2 = 'foo' -> Partial Aggregate COUNT(c1)
- Physical plan on TiDB: DistSQL Scan -> Final Aggregate SUM(COUNT(c1))

[Diagram: each TiKV node returns its partial COUNT(c1) to TiDB.]
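The split between partial and final aggregation can be mimicked in plain Python. This is a sketch under stated assumptions: each list in `shards` stands in for the rows held by one TiKV node, and the function names `coprocessor_count` and `final_aggregate` are hypothetical; the index scan step is replaced by a simple filter over rows.

```python
def coprocessor_count(rows):
    """Storage-node side: apply the predicates, then compute a partial COUNT(c1)."""
    return sum(
        1
        for row in rows
        if row["c1"] is not None and row["c1"] > 10 and row["c2"] == "foo"
    )


def final_aggregate(partial_counts):
    """SQL-layer side: SUM the partial counts returned by the storage nodes."""
    return sum(partial_counts)


# Hypothetical data spread over three storage nodes.
shards = [
    [{"c1": 11, "c2": "foo"}, {"c1": 5, "c2": "foo"}],
    [{"c1": 20, "c2": "bar"}, {"c1": 30, "c2": "foo"}],
    [],
]
partials = [coprocessor_count(rows) for rows in shards]
print(partials, final_aggregate(partials))
```

Only one small integer per node crosses the network instead of every matching row, which is the point of pushing the filter and the aggregate down.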

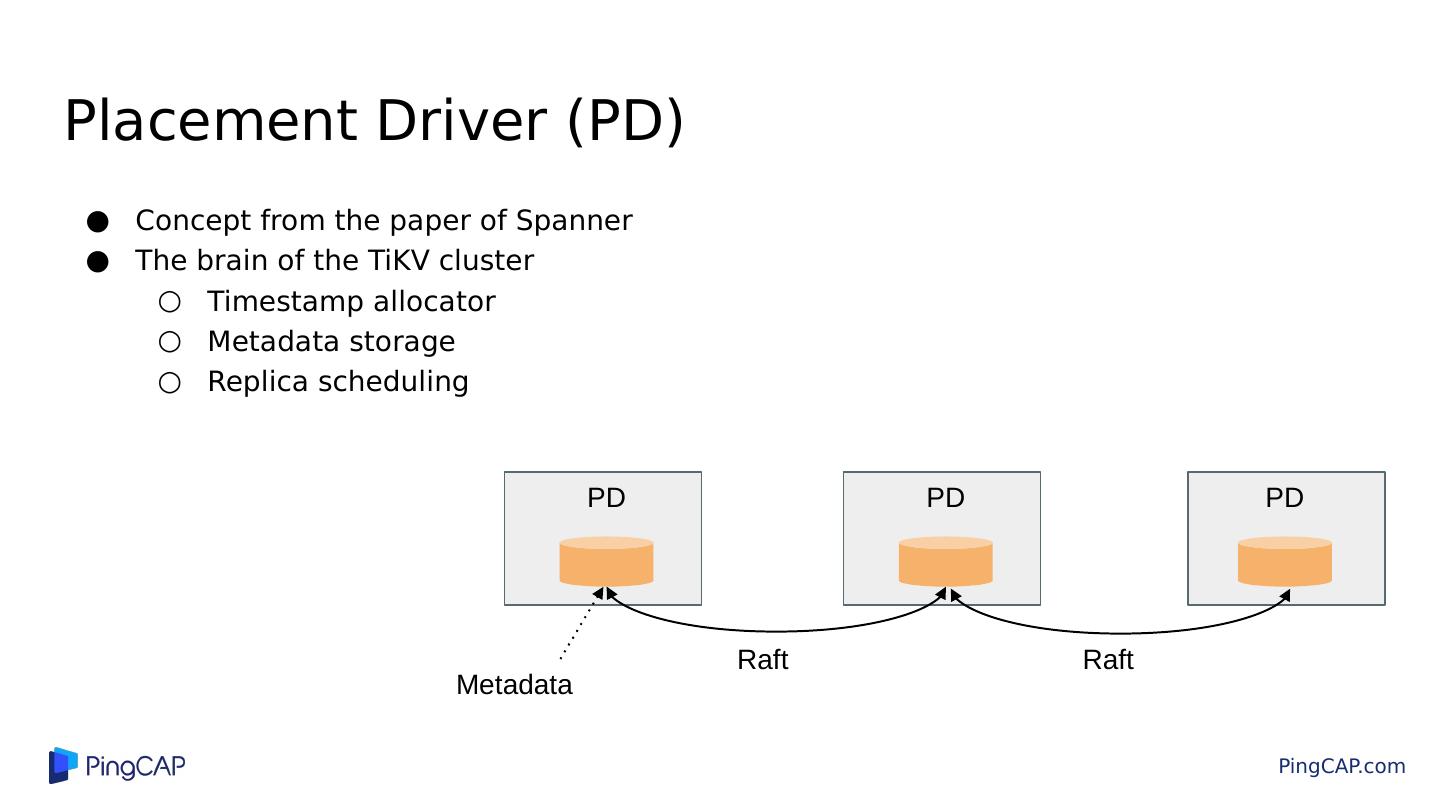

26. Placement Driver (PD)

- Concept from the Spanner paper
- The brain of the TiKV cluster
- Timestamp allocator
- Metadata storage
- Replica scheduling

[Diagram: three PD nodes replicating metadata via Raft.]

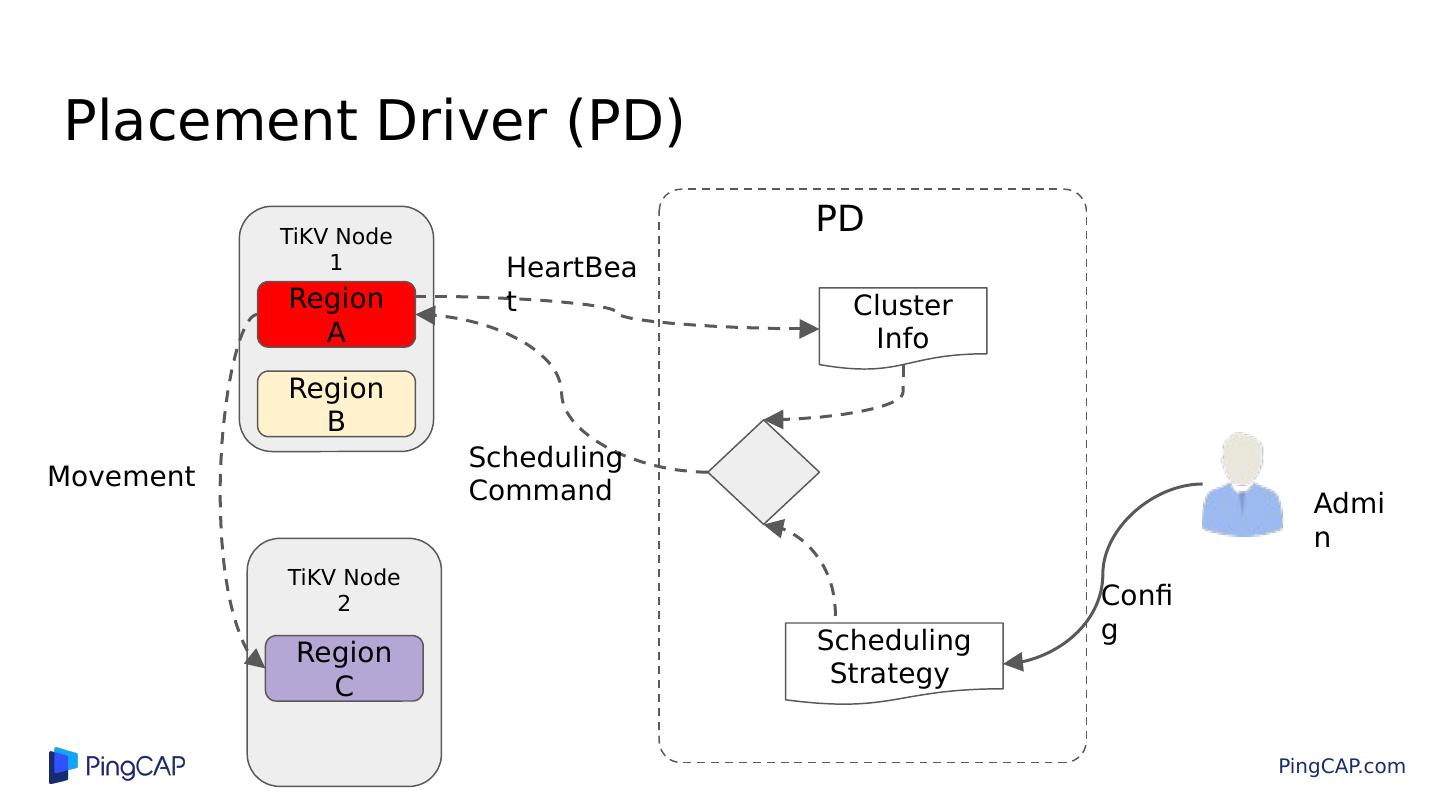

27. Placement Driver (PD)

[Diagram: TiKV Node 1 and TiKV Node 2, holding Region A, Region B, and Region C, send heartbeats to PD. PD combines cluster info, its scheduling strategy, and admin config to issue scheduling commands, which result in region movement.]

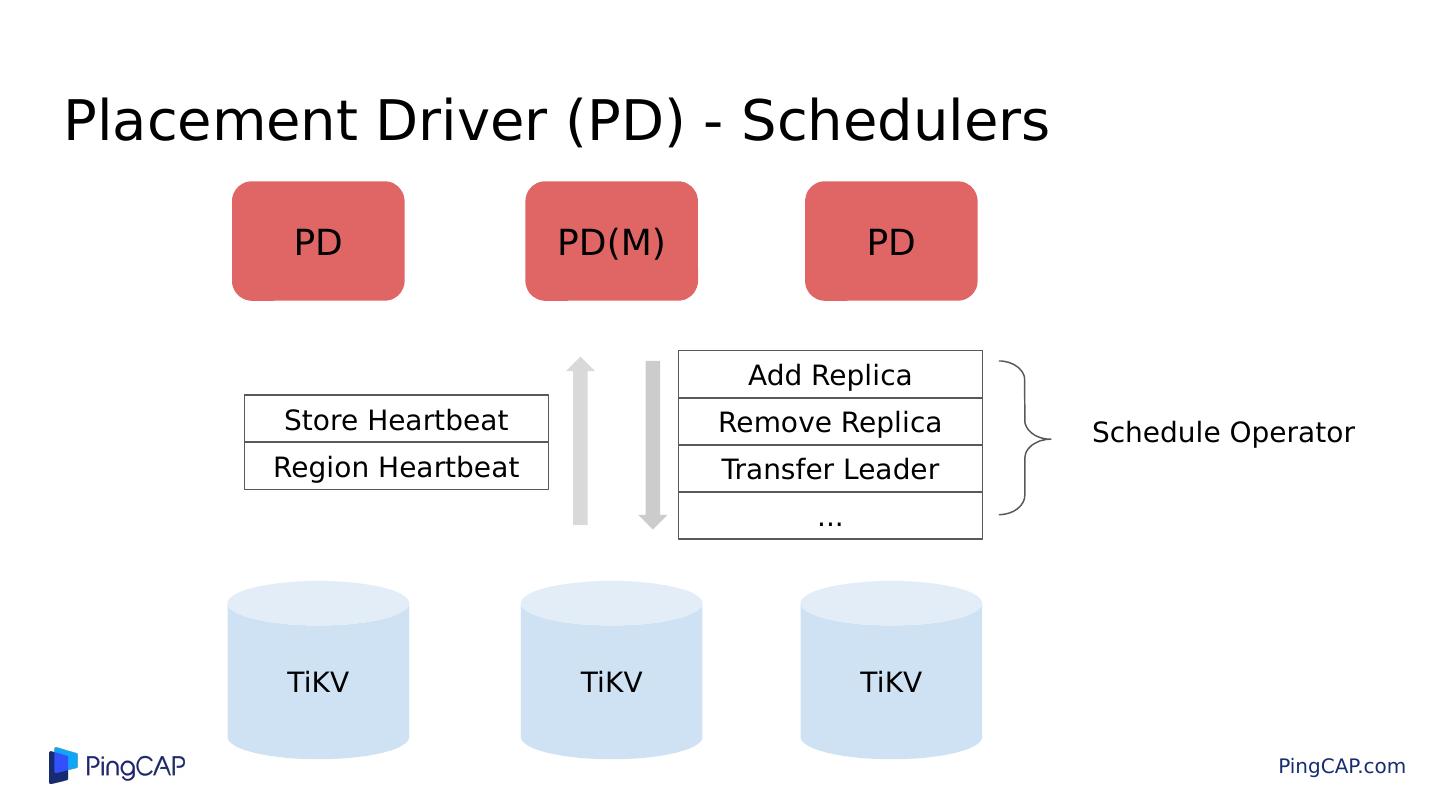

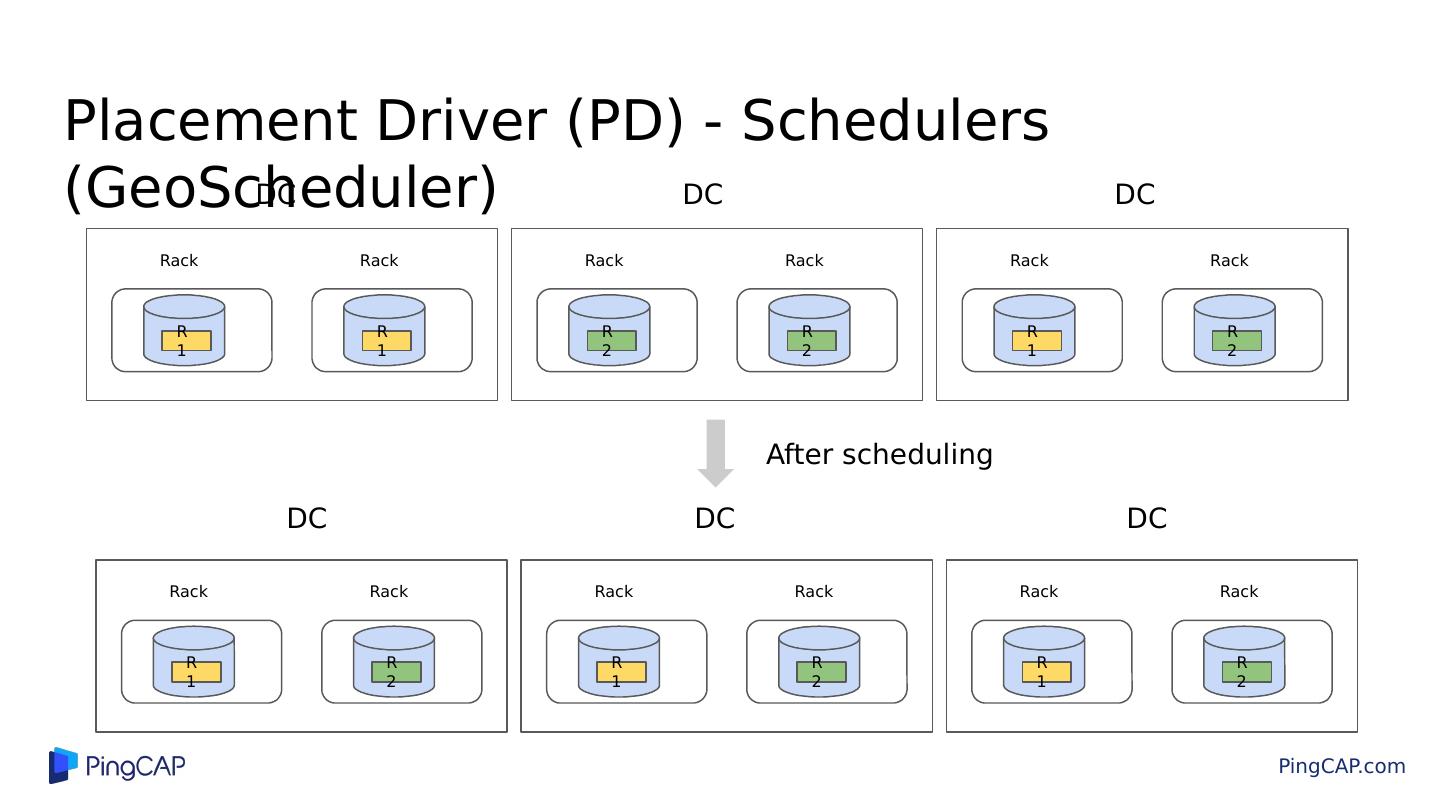

28. Placement Driver (PD) - Schedulers

[Diagram: TiKV nodes send store heartbeats and region heartbeats to PD (PD(M) among the PD nodes). PD replies with schedule operators: Add Replica, Remove Replica, Transfer Leader, ...]

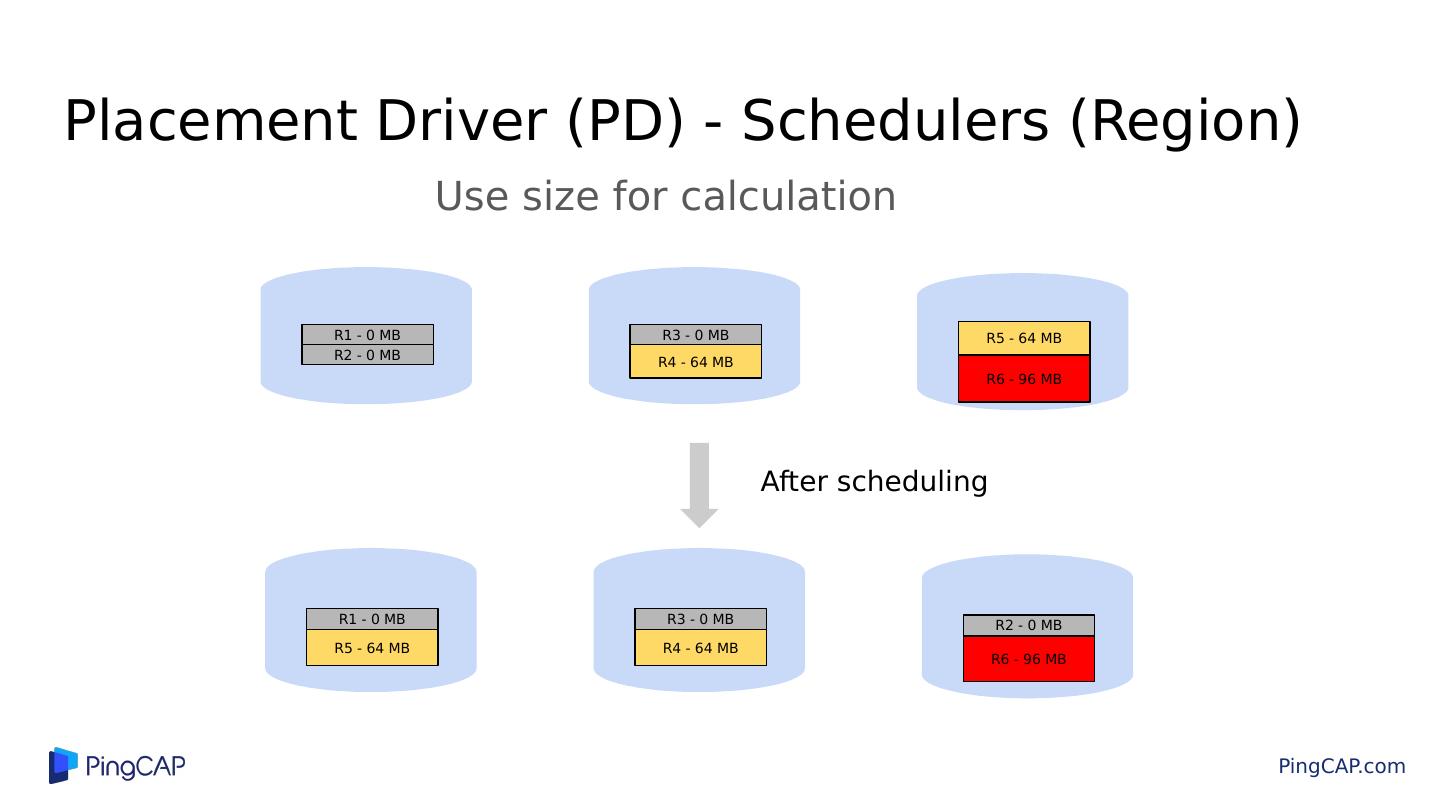

29. Placement Driver (PD) - Schedulers (Region)

Use size for calculation.

- Before scheduling: R1 - 0 MB, R2 - 0 MB, R3 - 0 MB, R4 - 64 MB, R5 - 64 MB, R6 - 96 MB
- After scheduling: R1 - 0 MB, R5 - 64 MB, R3 - 0 MB, R4 - 64 MB, R2 - 0 MB, R6 - 96 MB
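To show why size, not region count, is the useful balancing signal, here is a small greedy sketch. It is not PD's scheduler and does not reproduce the exact placement on the slide: the two-store grouping, the store names, and the function `balance_by_size` are all assumptions made for the example.

```python
def balance_by_size(stores):
    """Greedily move regions from the fullest store to the emptiest one, by size in MB."""
    moves = []
    while True:
        totals = {name: sum(regions.values()) for name, regions in stores.items()}
        src = max(totals, key=totals.get)
        dst = min(totals, key=totals.get)
        gap = totals[src] - totals[dst]
        # Moving a region of size x turns the gap into |gap - 2x|; that helps only if 0 < x < gap.
        candidates = [
            (abs(gap - 2 * size), region)
            for region, size in stores[src].items()
            if 0 < size < gap
        ]
        if not candidates:
            return moves
        _, region = min(candidates)
        stores[dst][region] = stores[src].pop(region)
        moves.append((region, src, dst))


# Hypothetical grouping: both stores hold three regions, but very different amounts of data.
stores = {
    "store1": {"R1": 0, "R2": 0, "R3": 0},
    "store2": {"R4": 64, "R5": 64, "R6": 96},
}
moves = balance_by_size(stores)
print(moves)
print({name: sum(regions.values()) for name, regions in stores.items()})
```

Counting regions would call the starting state balanced (three regions each), while the size totals show one store holding all of the data.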