
Shared Virtual Memory in KVM


1. Shared Virtual Memory in KVM

Yi Liu (yi.l.liu@intel.com), Senior Software Engineer, Intel Corporation

2. Legal Disclaimer

No license (express or implied, by estoppel or otherwise) to any intellectual property rights is granted by this document. Intel disclaims all express and implied warranties, including without limitation, the implied warranties of merchantability, fitness for a particular purpose, and non-infringement, as well as any warranty arising from course of performance, course of dealing, or usage in trade. This document contains information on products, services and/or processes in development. All information provided here is subject to change without notice. Contact your Intel representative to obtain the latest forecast, schedule, specifications and roadmaps. The products and services described may contain defects or errors known as errata which may cause deviations from published specifications. Current characterized errata are available on request. Copies of documents which have an order number and are referenced in this document may be obtained by calling 1-800-548-4725 or by visiting www.intel.com/design/literature.htm. Intel and the Intel logo are trademarks of Intel Corporation or its subsidiaries in the U.S. and/or other countries. *Other names and brands may be claimed as the property of others. © Intel Corporation.

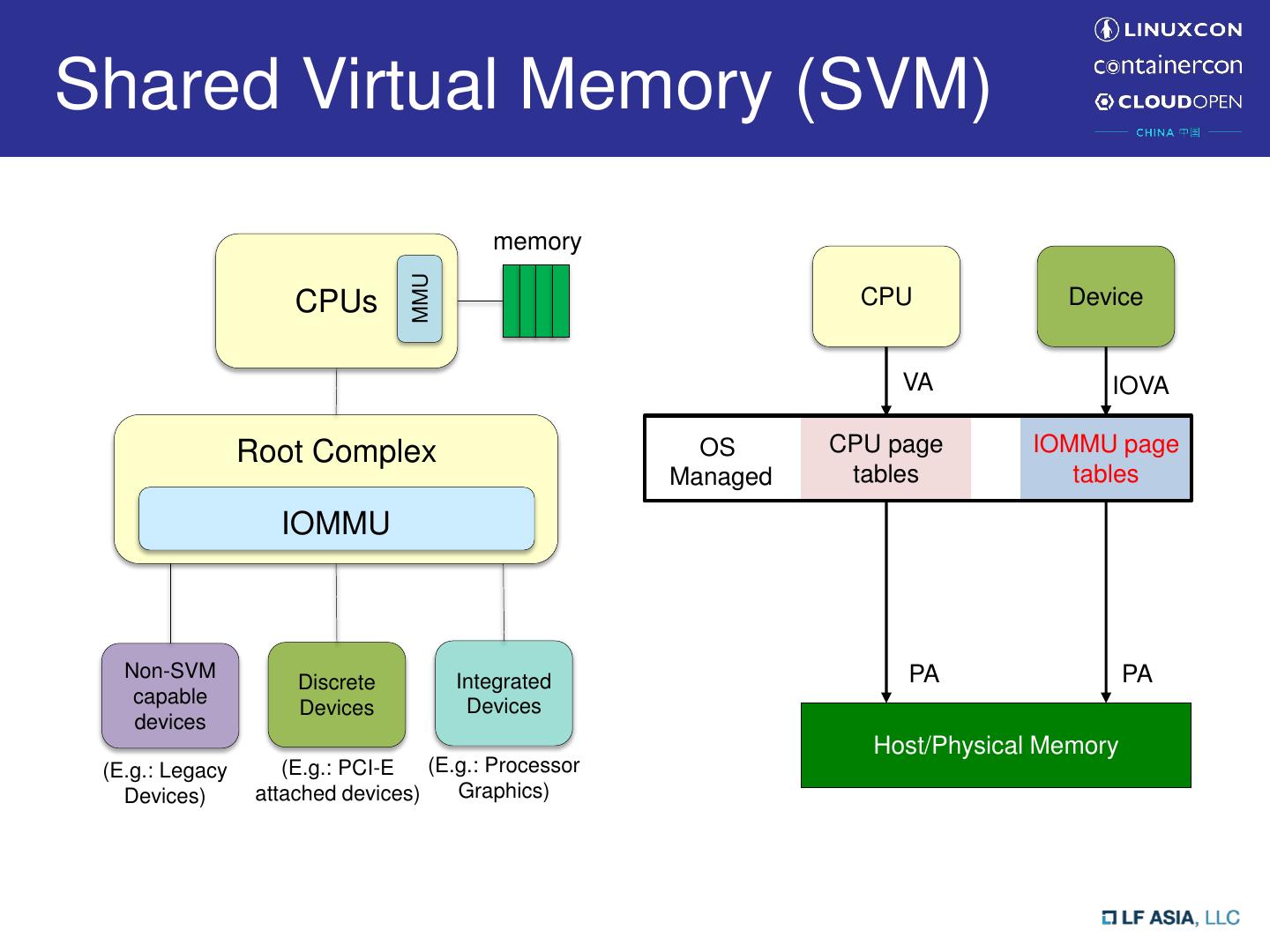

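3. Shared Virtual Memory (SVM)

[Diagram: the baseline without SVM. CPUs issue VAs, which the MMU translates to PAs through OS-managed CPU page tables. Devices behind the Root Complex issue IOVAs, which the IOMMU translates to PAs through separate, OS-managed IOMMU page tables. Device classes shown: non-SVM-capable devices (e.g. legacy devices), discrete devices (e.g. PCI-E attached devices) and integrated devices (e.g. processor graphics). All of them access host/physical memory.]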

4. Shared Virtual Memory (SVM)

[Diagram: with SVM, devices issue the same VAs as the CPU. The IOMMU walks the same OS-managed CPU page tables as the MMU, so CPU and device reach the same PAs in host/physical memory. The device classes are the same as in slide 3.]

SVM is called Shared Virtual Addressing (SVA) in the Linux community.
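To make the contrast between slides 3 and 4 concrete, here is a toy C model. It assumes single-level page tables of frame numbers and 4 KiB pages, and every name in it (`page_table`, `dma_iova`, `dma_svm`) is hypothetical.

- A non-SVM device goes through its own IOVA table.
- An SVM device tags its request with a PASID, and the IOMMU walks that process's CPU page table.

As a result, a pointer handed to an SVM device resolves exactly as it does on the CPU.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

#define PAGE_SHIFT  12                                  /* assume 4 KiB pages */
#define OFFSET_MASK ((UINT64_C(1) << PAGE_SHIFT) - 1)
#define NR_PAGES    16                                  /* toy 16-page address space */
#define INVALID     UINT64_MAX

/* Toy single-level table: index = virtual page number, value = physical frame. */
typedef struct {
    uint64_t pfn[NR_PAGES];
} page_table;

/* Translate one address through one table; INVALID if unmapped. */
static uint64_t walk(const page_table *pt, uint64_t addr)
{
    uint64_t vpn = addr >> PAGE_SHIFT;
    if (vpn >= NR_PAGES || pt->pfn[vpn] == INVALID)
        return INVALID;
    return (pt->pfn[vpn] << PAGE_SHIFT) | (addr & OFFSET_MASK);
}

/* CPU access: the MMU walks the OS-managed process page table. */
static uint64_t cpu_access(const page_table *process_pt, uint64_t va)
{
    return walk(process_pt, va);
}

/* Non-SVM DMA: the IOMMU walks a separate IOVA table. */
static uint64_t dma_iova(const page_table *iommu_pt, uint64_t iova)
{
    return walk(iommu_pt, iova);
}

/* SVM DMA: the PASID selects a process and the IOMMU walks its CPU page table. */
static uint64_t dma_svm(page_table *const pasid_table[], size_t nr_pasids,
                        uint32_t pasid, uint64_t va)
{
    assert(pasid_table != NULL);
    if (pasid >= nr_pasids || pasid_table[pasid] == NULL)
        return INVALID;
    return walk(pasid_table[pasid], va);
}
```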

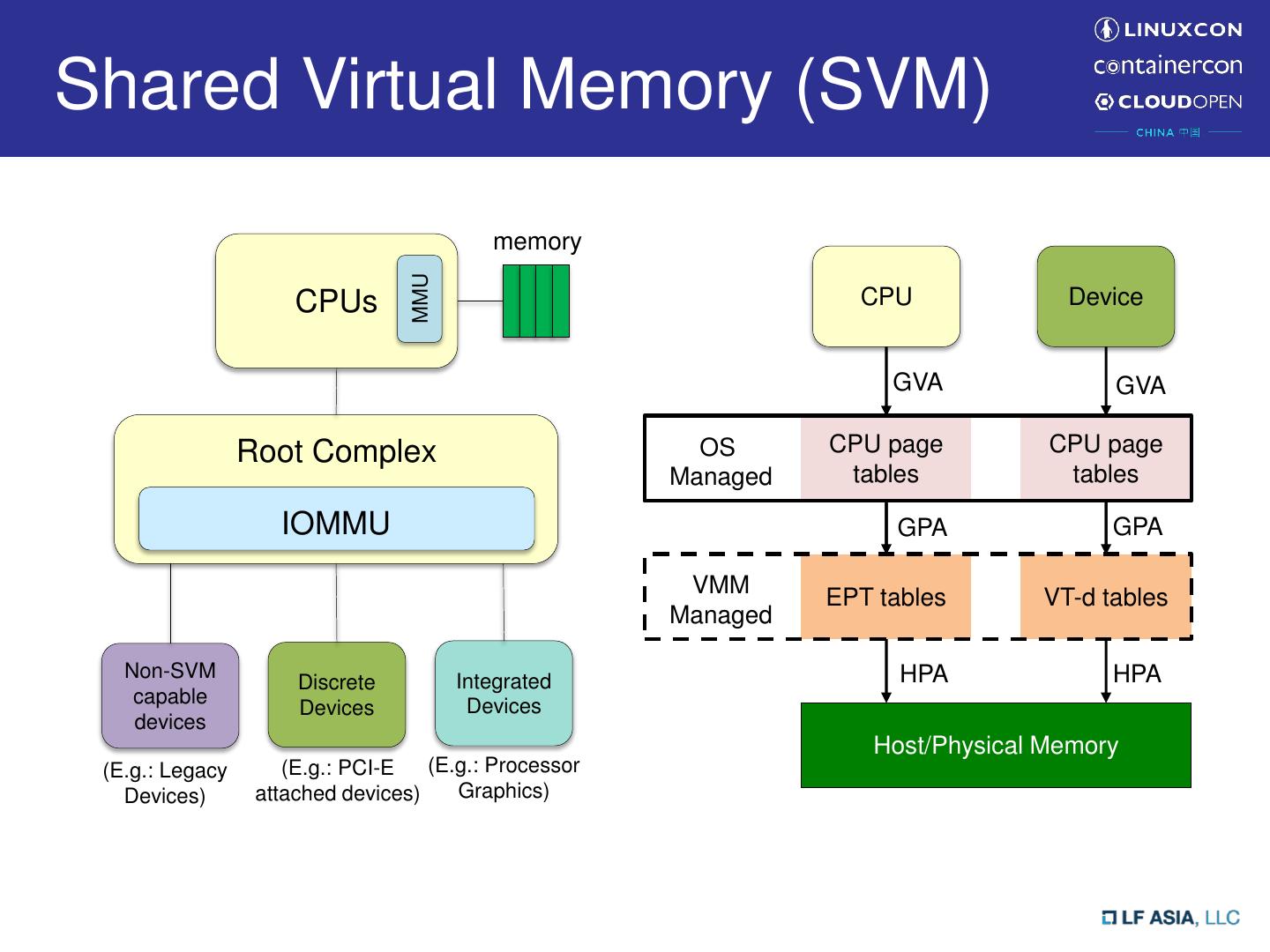

5. Shared Virtual Memory (SVM)

[Diagram: SVM inside a virtual machine. CPUs and devices both use GVAs, which the guest OS-managed CPU page tables translate to GPAs. VMM-managed tables then translate GPAs to HPAs in host/physical memory: the EPT tables on the CPU side and the VT-d tables on the IOMMU side. The device classes are the same as in slide 3.]

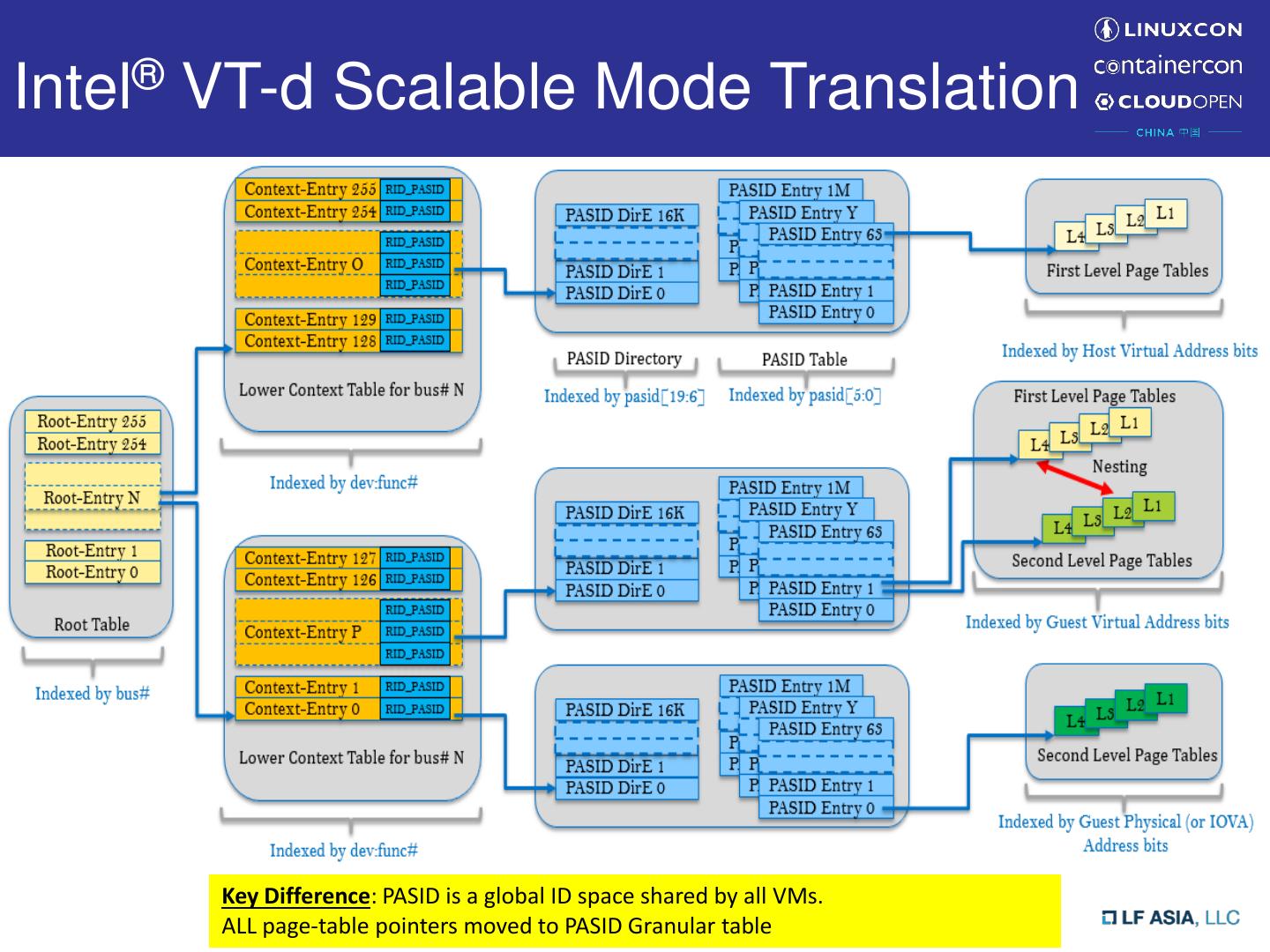

6. SVM on Intel® VT-d

• Process Address Space ID (PASID)
  – Identifies a process address space
• First-level translation
  – Used for DMA requests with PASID
  – Serves SVM transactions from endpoint devices
• Second-level translation
  – Used for DMA requests without PASID
  – Serves normal DMA transactions from endpoint devices
• Translation types
  – First-level translation
  – Second-level translation
  – Nested translation
  – Pass-through (address translation bypassed)
• Intel® VT-d 3.0 introduced Scalable Mode
  – SVM can be used together with Intel® Scalable I/O Virtualization
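The translation types above can be sketched as a C enum. The numeric values follow the PASID-granular translation type (PGTT) field of a VT-d 3.0 scalable-mode PASID table entry, as we read the specification. `pick_pgtt()` is a hypothetical policy that only restates the slide:

- PASID-tagged requests use the first level, or nested translation when a guest owns the PASID.
- All other requests use the second level.

```c
#include <assert.h>
#include <stdbool.h>

/* Translation types selectable per PASID in scalable mode. Values follow the
 * PGTT field encoding of the VT-d 3.0 spec as we read it. */
enum pgtt {
    PGTT_FIRST_LEVEL  = 1, /* first level only, e.g. SVM on the host */
    PGTT_SECOND_LEVEL = 2, /* second level only, e.g. normal DMA remapping */
    PGTT_NESTED       = 3, /* first-level output translated again by second level */
    PGTT_PASS_THROUGH = 4  /* address translation bypassed */
};

static void check_pgtt(enum pgtt t)
{
    assert(t >= PGTT_FIRST_LEVEL && t <= PGTT_PASS_THROUGH);
}

/* Does the IOMMU walk the first-level (CPU-format) tables for this type? */
static bool walks_first_level(enum pgtt t)
{
    check_pgtt(t);
    return t == PGTT_FIRST_LEVEL || t == PGTT_NESTED;
}

/* Does the IOMMU walk the second-level (VT-d format) tables for this type? */
static bool walks_second_level(enum pgtt t)
{
    check_pgtt(t);
    return t == PGTT_SECOND_LEVEL || t == PGTT_NESTED;
}

/* Hypothetical policy following the slide: PASID-tagged (SVM) requests use the
 * first level, nested when a guest owns the PASID; others use the second level. */
static enum pgtt pick_pgtt(bool has_pasid, bool owned_by_guest)
{
    if (has_pasid)
        return owned_by_guest ? PGTT_NESTED : PGTT_FIRST_LEVEL;
    return PGTT_SECOND_LEVEL;
}
```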

7. SVM on Intel® VT-d (Cont.)

• Nested translation
  – Uses both first-level and second-level translation for a single request
  – Enables SVM in a virtualized environment
    • First level: GVA -> GPA
    • Second level: GPA -> HPA
  [Diagram: GVA -> first-level page table -> GPA -> second-level page table -> HPA]
• Most vendors support nested translation for SVM usage in virtual machines
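Here is a toy model of the nested walk. It assumes single-level tables and 4 KiB pages, and all names are hypothetical. The first-level table itself lives in guest memory, so its root pointer is a GPA. That GPA must pass through the second level before the guest table can be read. The GPA that comes out of the first level is then translated once more.

```c
#include <assert.h>
#include <stdint.h>

#define PAGE_SHIFT  12
#define OFFSET_MASK ((UINT64_C(1) << PAGE_SHIFT) - 1)
#define ENTRIES     16          /* toy table: 16 entries, single level */
#define NR_FRAMES   16          /* toy host memory: 16 frames */
#define NO_MAP      UINT64_MAX

/* Host physical memory; each frame is treated as one table of frame numbers. */
static uint64_t host_mem[NR_FRAMES][ENTRIES];

/* Second level (host-owned, VMM-managed): guest frame -> host frame. */
static uint64_t second_level[ENTRIES];

/* Second-level walk: GPA -> HPA. */
static uint64_t sl_translate(uint64_t gpa)
{
    uint64_t gfn = gpa >> PAGE_SHIFT;
    if (gfn >= ENTRIES || second_level[gfn] == NO_MAP)
        return NO_MAP;
    return (second_level[gfn] << PAGE_SHIFT) | (gpa & OFFSET_MASK);
}

/* Nested walk: GVA -> GPA via the guest first level, then GPA -> HPA. */
static uint64_t nested_translate(uint64_t fl_root_gpa, uint64_t gva)
{
    /* The first-level root is a GPA: translate it before reading the table. */
    uint64_t root_hpa = sl_translate(fl_root_gpa);
    if (root_hpa == NO_MAP)
        return NO_MAP;
    uint64_t hfn = root_hpa >> PAGE_SHIFT;
    assert(hfn < NR_FRAMES);

    uint64_t vpn = gva >> PAGE_SHIFT;
    if (vpn >= ENTRIES || host_mem[hfn][vpn] == NO_MAP)
        return NO_MAP;                       /* first-level fault */
    uint64_t gpa = (host_mem[hfn][vpn] << PAGE_SHIFT) | (gva & OFFSET_MASK);
    return sl_translate(gpa);                /* NO_MAP here = second-level fault */
}
```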

8. Enable SVM in VM

• Need a virtual IOMMU (vIOMMU) with SVM capability
  – Proper emulation according to the IOMMU specification (e.g. the Intel® VT-d specification)
    • Either a fully emulated or a virtio-based IOMMU
• Notification of guest translation structure changes
  – The notification mechanism is vendor specific
  – For Intel® VT-d
    • "Caching mode": software must issue an explicit cache invalidation for any translation structure change
• Enable nested translation on the physical IOMMU for the given PASID
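Caching mode is what turns a silent memory write into a trappable event. This minimal sketch assumes a hypothetical one-field guest PASID entry, with a flat `host_bound_fl_ptr` array standing in for the host IOMMU. The guest's write to its own PASID table is invisible to the emulator. Only the invalidation that caching mode obliges the guest to issue lets the vIOMMU propagate the change to the host.

```c
#include <assert.h>
#include <stdbool.h>
#include <stdint.h>

#define NR_PASIDS 8

/* Hypothetical guest PASID entry reduced to the fields this sketch needs. */
struct guest_pasid_entry {
    bool     present;
    uint64_t fl_ptr_gpa;   /* guest CPU page table root, a GPA */
};

/* Lives in guest memory: writes here are NOT trapped by the emulator. */
static struct guest_pasid_entry guest_pasid_table[NR_PASIDS];

/* What the host IOMMU currently uses for each PASID (0 = unbound). */
static uint64_t host_bound_fl_ptr[NR_PASIDS];

/* Hypothetical vIOMMU handler for a trapped PASID-cache invalidation.
 * With caching mode, the guest must issue this after every change, so it is
 * the point where the emulator re-reads the entry and binds or unbinds. */
static void viommu_pasid_cache_inv(uint32_t pasid)
{
    assert(pasid < NR_PASIDS);
    const struct guest_pasid_entry *e = &guest_pasid_table[pasid];
    host_bound_fl_ptr[pasid] = e->present ? e->fl_ptr_gpa : 0;
}
```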

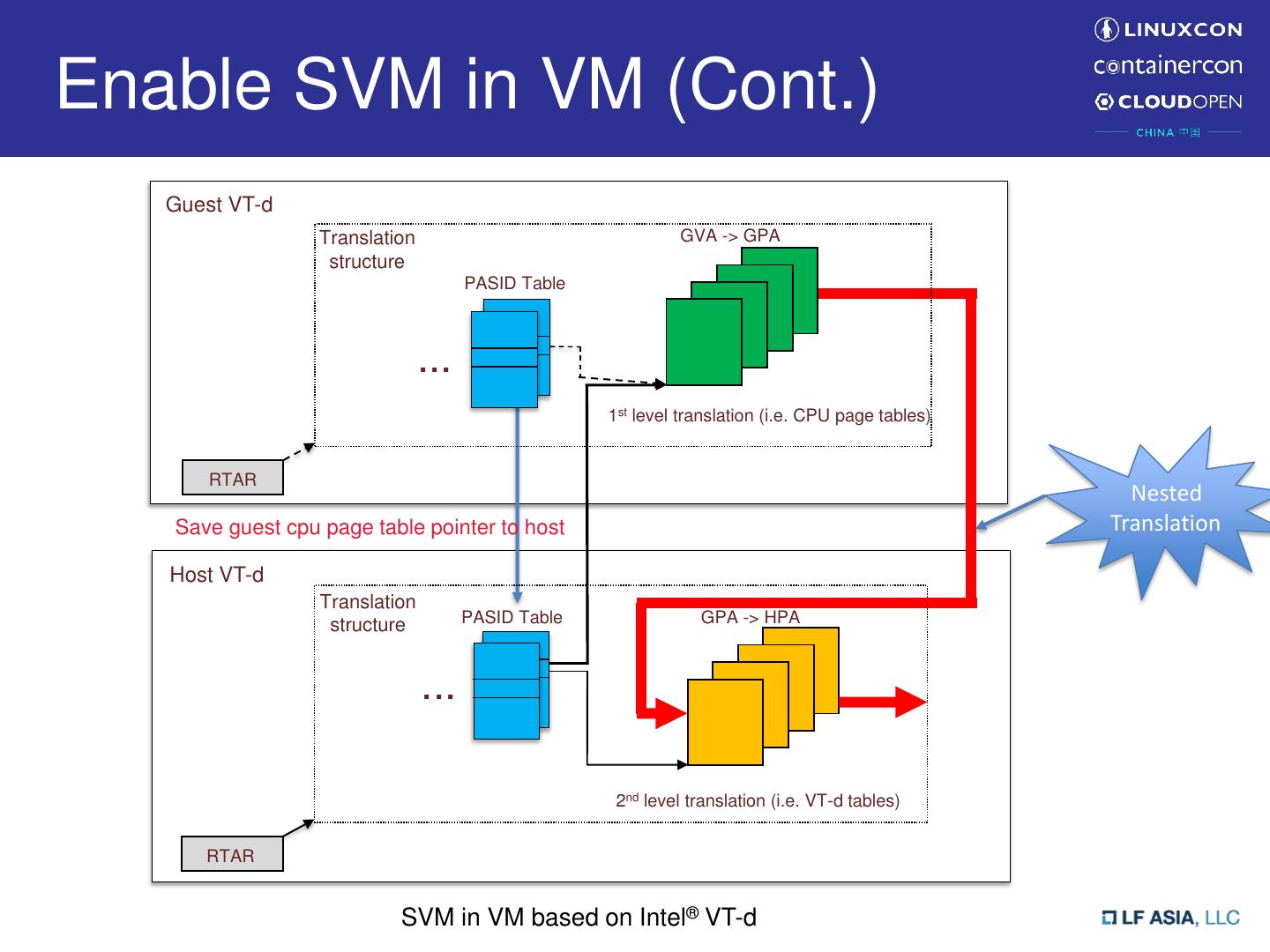

9. Enable SVM in VM (Cont.)

[Diagram: SVM in VM based on Intel® VT-d. Guest VT-d translation structure: RTAR (root table address register) -> ... -> PASID table -> 1st-level translation (i.e. CPU page tables). Host VT-d translation structure: RTAR -> ... -> PASID table -> 2nd-level translation (i.e. VT-d tables).]

10. Enable SVM in VM (Cont.)

[Diagram: as in slide 9, plus the guest CPU page table pointer is saved to the host VT-d translation structure. SVM in VM based on Intel® VT-d.]

11. Enable SVM in VM (Cont.)

[Diagram: as in slide 10, annotated: in nested translation, hardware treats the 1st-level page table pointer as a GPA. SVM in VM based on Intel® VT-d.]

12. Enable SVM in VM (Cont.)

[Diagram: as in slide 11, with the result labeled as nested translation. The guest 1st-level tables translate GVA -> GPA and the host 2nd-level tables translate GPA -> HPA. SVM in VM based on Intel® VT-d.]
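Slides 10 to 12 reduce to filling in one host PASID entry. The sketch below uses a simplified, hypothetical struct instead of the real bit layout. The constant 3 for nested translation is the PGTT encoding as we read the VT-d 3.0 spec. The key point is that the guest CPU page table pointer is stored untranslated, because in nested mode the hardware treats it as a GPA.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

#define PGTT_NESTED 3u  /* nested-translation PGTT value, as we read VT-d 3.0 */

/* Simplified, hypothetical host PASID entry; the real one packs bit fields. */
struct host_pasid_entry {
    uint64_t fl_ptr;     /* first-level root: a GPA when pgtt == PGTT_NESTED */
    uint64_t sl_ptr;     /* second-level root: HPA of the VMM's GPA->HPA tables */
    uint16_t domain_id;  /* host domain ID that tags IOTLB entries */
    unsigned pgtt;       /* PASID-granular translation type */
};

/* Hypothetical helper: save the guest CPU page table pointer to the host entry
 * and switch the entry to nested translation. */
static void bind_guest_pgtbl(struct host_pasid_entry *pe, uint64_t guest_cr3_gpa,
                             uint64_t host_sl_root_hpa, uint16_t host_did)
{
    assert(pe != NULL);
    pe->fl_ptr    = guest_cr3_gpa;    /* stored as-is: HW walks it via the second level */
    pe->sl_ptr    = host_sl_root_hpa; /* host-owned GPA->HPA mapping for this VM */
    pe->domain_id = host_did;
    pe->pgtt      = PGTT_NESTED;
}
```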

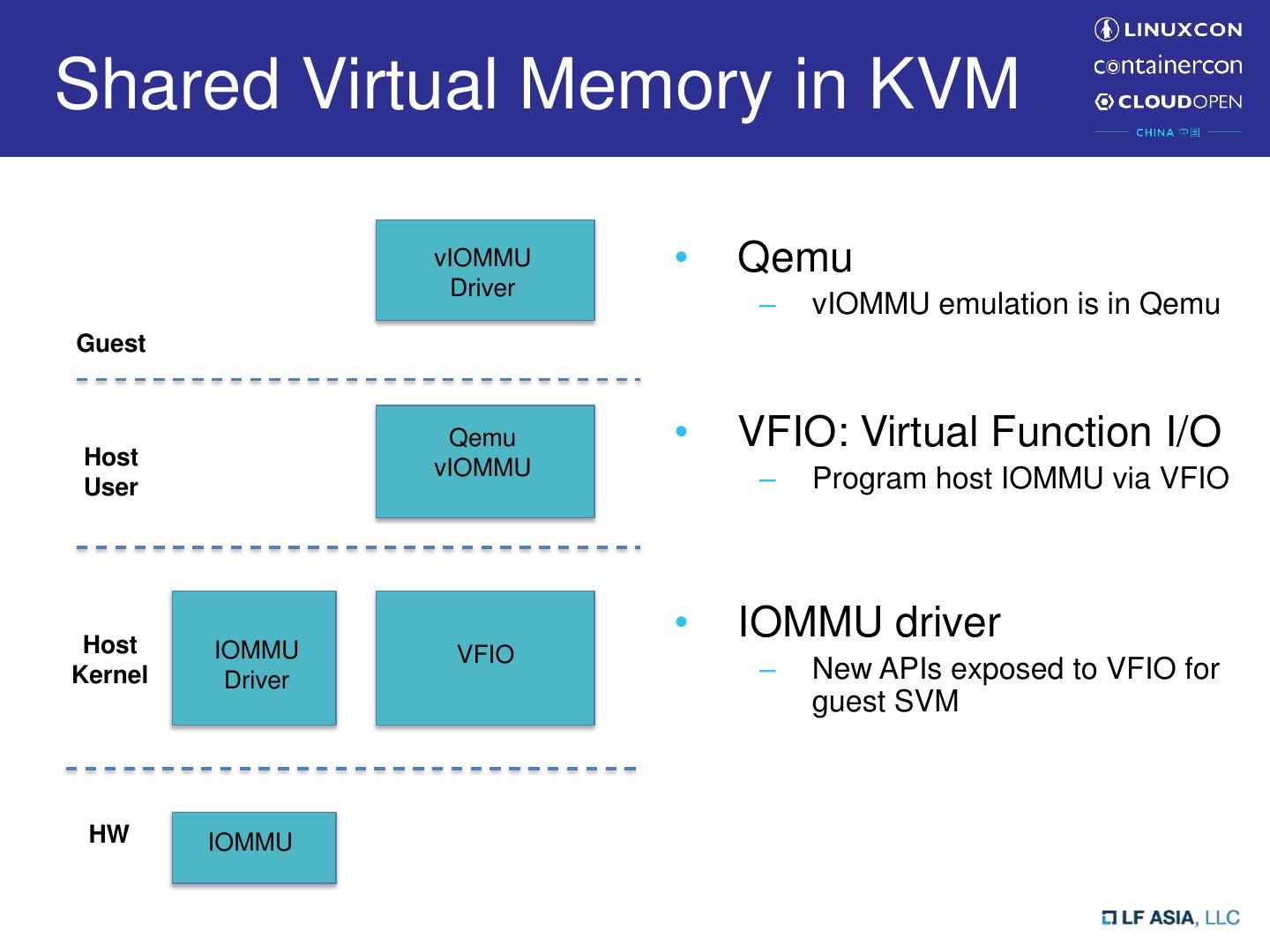

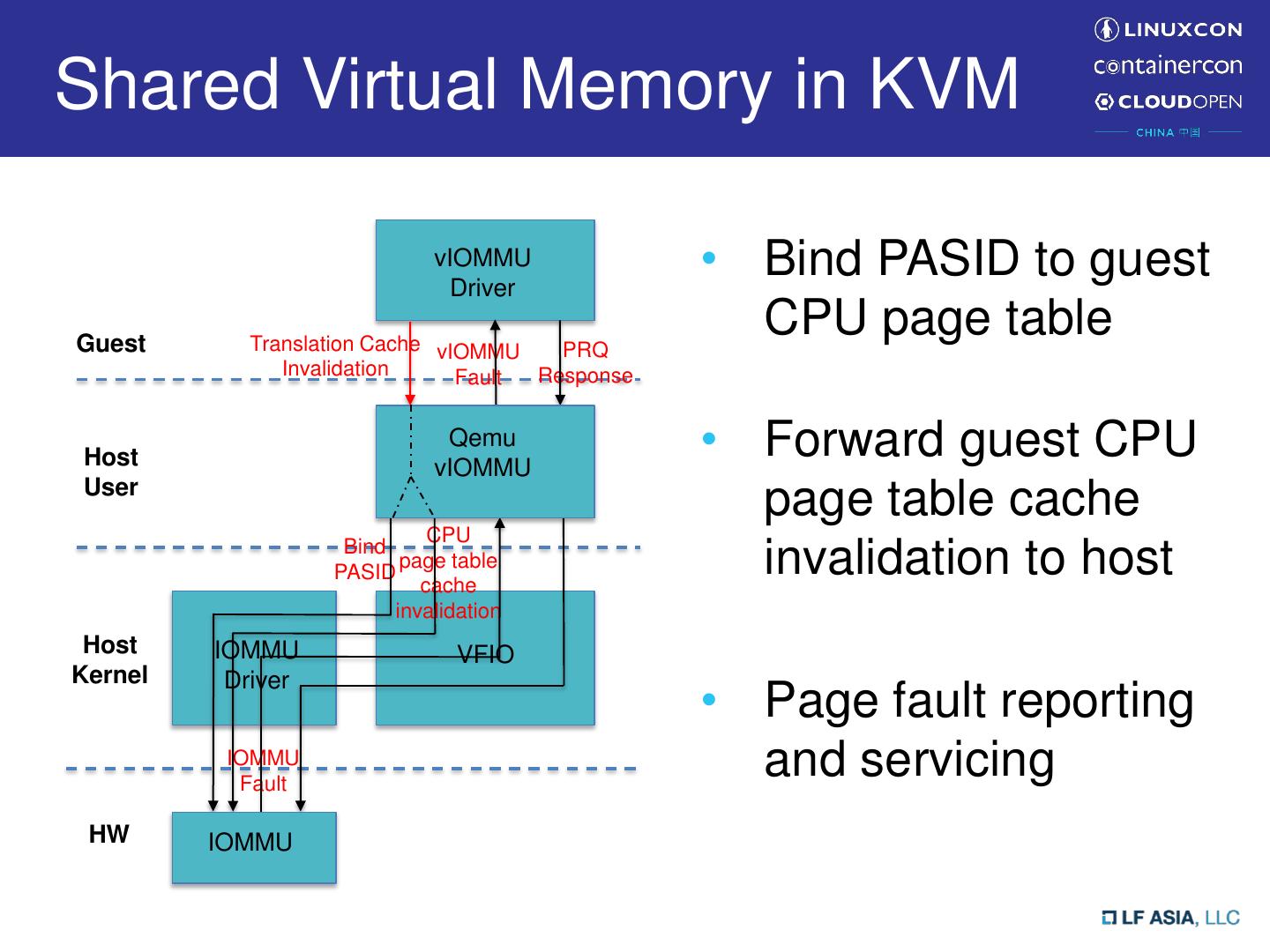

13. Shared Virtual Memory in KVM

• Qemu
  – vIOMMU emulation lives in Qemu
• VFIO: Virtual Function I/O
  – Program the host IOMMU via VFIO
• IOMMU driver
  – New APIs exposed to VFIO for guest SVM

[Diagram: the software stack. Guest: vIOMMU driver. Host user space: Qemu with vIOMMU emulation. Host kernel: VFIO and the host IOMMU driver. Hardware: HW IOMMU.]

14. Shared Virtual Memory in KVM

• Bind PASID to guest CPU page table

[Diagram: a translation cache invalidation from the guest vIOMMU driver reaches the Qemu vIOMMU in host user space. Qemu issues Bind PASID through VFIO to the host IOMMU driver, which programs the HW IOMMU.]

15. Shared Virtual Memory in KVM

• Bind PASID to guest CPU page table
• Forward guest CPU page table cache invalidation to host

[Diagram: as in slide 14, with CPU page table cache invalidations also forwarded from the Qemu vIOMMU through VFIO to the host IOMMU driver.]

16. Shared Virtual Memory in KVM

• Bind PASID to guest CPU page table
• Forward guest CPU page table cache invalidation to host
• Page fault reporting and servicing

[Diagram: as in slide 15, plus a fault path. An IOMMU fault travels from the HW IOMMU through the host IOMMU driver and VFIO to Qemu, which injects a vIOMMU fault into the guest.]

17. Shared Virtual Memory in KVM

• Bind PASID to guest CPU page table
• Forward guest CPU page table cache invalidation to host
• Page fault reporting and servicing

[Diagram: as in slide 16, plus a PRQ response path from the guest vIOMMU driver back through Qemu and VFIO to the host IOMMU driver.]

18. Shared Virtual Memory in KVM

• Bind PASID to guest CPU page table
• Forward guest CPU page table cache invalidation to host
• Page fault reporting and servicing

[Diagram: as in slide 17.]

Neutral kernel APIs serve both emulated and virtio-based vIOMMUs.

19. Bind PASID

• New VFIO IOCTL supporting multiple binding types:
  – VFIO_IOMMU_BIND_PROCESS
    • Binding to a host CPU page table
  – VFIO_IOMMU_BIND_PGTBL
    • Binding to a guest CPU page table
  – VFIO_IOMMU_BIND_PASID_TBL
    • Binding to a guest PASID table
• New IOMMU API to configure the physical IOMMU
  – Requires a compatibility check of the table format

[Diagram: the guest vIOMMU driver programs the guest PASID entry. The Qemu vIOMMU calls the new VFIO IOCTL with <file descriptor of the assigned device, bind_cfg>. VFIO calls iommu_bind_xxx(…) with <struct device *dev, bind_cfg>. The pIOMMU driver programs the PASID entry and enables nested mode.]
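Here is a sketch of how an `iommu_bind_xxx(…)`-style back end might validate and apply a `bind_cfg`. Everything in it is hypothetical: the enumerators only mirror the three VFIO binding types on the slide, and the format ID and the single-PASID context are made up for brevity. It is not the patch set's actual code. It shows the two duties listed above: the table-format compatibility check, and enabling nested mode when the table belongs to the guest.

```c
#include <assert.h>
#include <errno.h>
#include <stddef.h>
#include <stdint.h>

/* Mirrors the slide's three binding types; these enumerators are hypothetical. */
enum bind_type {
    BIND_PROCESS,    /* like VFIO_IOMMU_BIND_PROCESS: host CPU page table */
    BIND_PGTBL,      /* like VFIO_IOMMU_BIND_PGTBL: guest CPU page table */
    BIND_PASID_TBL   /* like VFIO_IOMMU_BIND_PASID_TBL: guest PASID table */
};

/* Hypothetical bind_cfg passed from Qemu through VFIO to the IOMMU driver. */
struct bind_cfg {
    enum bind_type type;
    uint32_t pasid;       /* ignored for BIND_PASID_TBL */
    uint64_t table_ptr;   /* root of the page table or PASID table */
    uint32_t format;      /* table format advertised by the vIOMMU */
};

/* Hypothetical per-device physical IOMMU state (one PASID for brevity). */
struct pasid_ctx {
    uint64_t pasid_tbl_ptr;
    uint64_t fl_ptr;
    int nested;           /* 1 when the tables belong to the guest (GPA pointers) */
};

#define HOST_TABLE_FORMAT 1u     /* hypothetical format ID the hardware accepts */
#define MAX_PASID (1u << 20)     /* PCIe PASIDs are at most 20 bits wide */

static int model_iommu_bind(const struct bind_cfg *cfg, struct pasid_ctx *ctx)
{
    assert(cfg != NULL && ctx != NULL);
    if (cfg->type != BIND_PASID_TBL && cfg->pasid >= MAX_PASID)
        return -EINVAL;
    /* Guest-provided tables must be in a format the hardware can walk. */
    if (cfg->type != BIND_PROCESS && cfg->format != HOST_TABLE_FORMAT)
        return -EOPNOTSUPP;

    switch (cfg->type) {
    case BIND_PROCESS:    /* in this model the caller supplies the host root */
        ctx->fl_ptr = cfg->table_ptr;
        ctx->nested = 0;
        return 0;
    case BIND_PGTBL:      /* guest CPU page table: enable nested mode */
        ctx->fl_ptr = cfg->table_ptr;
        ctx->nested = 1;
        return 0;
    case BIND_PASID_TBL:  /* whole guest PASID table: nested for all its PASIDs */
        ctx->pasid_tbl_ptr = cfg->table_ptr;
        ctx->nested = 1;
        return 0;
    }
    return -EINVAL;
}
```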

20. Forward Cache Invalidation to Host

• Invalidation types
  – IOMMU_INV_TYPE_TLB
    • IOTLB and paging-structure caches
• Granularity conversion
  – Supported granularities
    • Domain-selective flush
    • PASID-selective flush
    • Page-selective flush
  – Avoid unnecessary flushes
    • A guest global flush becomes either a domain- or PASID-selective flush in the host
• Use host identities
  – RID, domain ID, PASID

[Diagram: the guest vIOMMU driver issues an IOMMU TLB flush. The Qemu vIOMMU forwards it through the new VFIO IOCTL (VFIO_IOMMU_INVALIDATION). VFIO calls iommu_sva_invalidate(…), and the pIOMMU driver submits the invalidation to hardware.]
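The granularity conversion and identity replacement can be sketched as a pure function. The request layout and `host_ids` are hypothetical. The sketch also assumes guest PASIDs were allocated by the host, so they pass through unchanged. A real implementation could equally narrow a guest global flush to a PASID-selective one.

```c
#include <assert.h>
#include <stdint.h>

enum inv_gran { INV_GLOBAL, INV_DOMAIN, INV_PASID, INV_PAGE };

/* Hypothetical, vendor-neutral invalidation request. */
struct inv_req {
    enum inv_gran gran;
    uint16_t rid;      /* requester ID (bus/device/function) */
    uint16_t did;      /* domain ID */
    uint32_t pasid;
    uint64_t addr;     /* page-selective only */
    unsigned order;    /* page-selective only: 2^order pages */
};

/* Hypothetical host identities backing one assigned device of a VM. */
struct host_ids {
    uint16_t rid;
    uint16_t did;
};

/* Convert a trapped guest invalidation into one the host can submit.
 * Assumes host-allocated PASIDs, so the PASID is passed through unchanged. */
static struct inv_req convert_inv(const struct inv_req *guest, const struct host_ids *host)
{
    assert(guest->gran <= INV_PAGE);
    struct inv_req h = *guest;
    h.rid = host->rid;              /* guest RID/DID mean nothing to hardware */
    h.did = host->did;
    if (guest->gran == INV_GLOBAL)  /* scope it to this VM, not the whole IOMMU */
        h.gran = INV_DOMAIN;
    return h;
}
```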

21. Page Fault Handling

• PCI Express® Address Translation Service (ATS)
  – PRI: Page Request Interface
  – Page response (PRS)

[Diagram: on a Dev-IOTLB miss, the PCIe device (PRS/ATS capable) sends a translation request to the IOMMU, which looks up its IOTLB. On a translation fault, the device issues a PRI page request, raising a PRQ event in the IOMMU. After PRQ handling, the IOMMU returns a page response to the device, which then issues DMA as a translated request.]

22. Page Fault Handling (Cont.)

• Report PRQ to guest
  – Page Request capability in the vIOMMU
• Forward guest page response to host

[Diagram, fault path: the pIOMMU driver converts the VT-d-specific PRQ descriptor into vendor-agnostic fault info for the IOMMU fault report framework. VFIO notifies Qemu via eventfd with <IOMMU_DMAR_FAULT, PRQ info>. The Qemu vIOMMU emulates a virtual fault/PRQ request to the guest vIOMMU driver.]

[Diagram, response path: the guest issues a PRQ response. The Qemu vIOMMU calls the new VFIO IOCTL (VFIO_IOMMU_SVM_PAGE_RESP). VFIO calls iommu_sva_page_resp(…), and the pIOMMU driver submits the PRQ response to hardware.]
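Here is a sketch of the host-side bookkeeping behind this round trip. It assumes a fixed-size array in place of the pending list guarded by `@lock` in `struct iommu_fault_param`, and the record layout and function names are hypothetical. The host remembers each page request it forwards. It accepts a guest page response only if the response matches an outstanding (PASID, group index) pair, so a misbehaving guest cannot push arbitrary responses to hardware.

```c
#include <assert.h>
#include <stdbool.h>
#include <stdint.h>

#define MAX_PENDING 8

/* Hypothetical vendor-agnostic record of one outstanding page request. */
struct page_req {
    uint32_t pasid;
    uint64_t addr;
    uint16_t grpid;    /* page request group index, echoed back in the response */
    bool     in_use;
};

static struct page_req pending[MAX_PENDING];

/* Host: remember a page request before forwarding it toward the guest. */
static int report_page_req(uint32_t pasid, uint64_t addr, uint16_t grpid)
{
    assert(grpid < 512);   /* the PCIe PRG index field is 9 bits */
    for (int i = 0; i < MAX_PENDING; i++) {
        if (!pending[i].in_use) {
            pending[i] = (struct page_req){ pasid, addr, grpid, true };
            return 0;
        }
    }
    return -1;             /* no free slot: a real driver would fail the request */
}

/* Host: a guest page response arrives; only a response that matches an
 * outstanding request is passed on to hardware. */
static bool handle_page_resp(uint32_t pasid, uint16_t grpid)
{
    for (int i = 0; i < MAX_PENDING; i++) {
        if (pending[i].in_use && pending[i].pasid == pasid &&
            pending[i].grpid == grpid) {
            pending[i].in_use = false;   /* response would be submitted to HW here */
            return true;
        }
    }
    return false;
}
```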

23. IOMMU Fault Reporting Framework

• Newly defined "struct iommu_fault_param", added to "struct device"

```c
#include <linux/list.h>
#include <linux/mutex.h>
#include <linux/timer.h>

/* iommu_dev_fault_handler_t is the fault-callback typedef declared
 * alongside this struct in the IOMMU header. */

/**
 * struct iommu_fault_param - Per-device generic IOMMU runtime data
 * @handler: Callback function to handle IOMMU faults at device level
 * @faults: Holds the pending faults that need a response, e.g. page response
 * @timer: Tracks the page request pending time limit
 * @lock: Protects the pending PRQ event list
 * @data: Handler private data
 */
struct iommu_fault_param {
	iommu_dev_fault_handler_t handler;
	struct list_head faults;
	struct timer_list timer;
	struct mutex lock;
	void *data;
};
```

24. IOMMU Fault Reporting Framework

• IOMMU fault handler registration
  – iommu_register_device_fault_handler()
  – iommu_unregister_device_fault_handler()
• In-kernel device drivers and the VFIO driver each register their own fault handler
  – The VFIO fault handler should further notify Qemu or other user-space applications
• The original idea was brought up by David Woodhouse
  – For more detail, see https://lwn.net/Articles/608914/
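The registration contract can be modeled in user-space C. The `model_*` names, the simplified `struct fault` and the error codes are assumptions for illustration, not the kernel's exact behavior. The model works as follows:

- Each device holds at most one handler plus its private data.
- The IOMMU driver reports faults through whatever handler is registered.
- A VFIO-style handler relays each fault to Qemu. Here that relay is modeled as bumping a counter; the real path signals an eventfd.

```c
#include <assert.h>
#include <errno.h>
#include <stddef.h>

/* Simplified, hypothetical fault record. */
struct fault { unsigned int pasid; unsigned long addr; };

typedef int (*fault_handler_t)(const struct fault *f, void *data);

/* Stand-in for the handler/data pair in struct iommu_fault_param. */
struct dev {
    fault_handler_t handler;
    void *data;
};

static int model_register_fault_handler(struct dev *d, fault_handler_t h, void *data)
{
    assert(d != NULL && h != NULL);
    if (d->handler)
        return -EBUSY;            /* one consumer per device */
    d->handler = h;
    d->data = data;
    return 0;
}

static void model_unregister_fault_handler(struct dev *d)
{
    d->handler = NULL;
    d->data = NULL;
}

/* Called by the IOMMU driver when it sees a fault for this device. */
static int model_report_fault(struct dev *d, const struct fault *f)
{
    if (!d->handler)
        return -ENODEV;           /* assumption: nobody is listening */
    return d->handler(f, d->data);
}

/* VFIO-style consumer: "signal user space" by bumping a counter. */
static int counting_handler(const struct fault *f, void *data)
{
    (void)f;
    ++*(int *)data;
    return 0;
}
```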

25. Upstream Status (Kernel)

• IOMMU/VFIO extension for virtual SVA support (Jacob Pan/Yi Liu, Intel)
  – Earliest RFC patch for vSVA support
  – Current kernel API in v5 (https://lkml.org/lkml/2018/5/11/605)
• SVA native enabling on the ARM platform (Jean-Philippe Brucker, ARM)
• Requirements shared by the two tracks
  – Binding PASID, fault reporting

SVA stands for Shared Virtual Addressing in the Linux community.

26. Upstream Status (Qemu)

• Qemu vSVA enabling has two parts (Yi Liu, Intel)
  – vIOMMU emulation
    • Earliest RFC patch for vSVA dates back to April 2017
  – Notification framework between the vIOMMU emulator and VFIO within Qemu
    • Notifier framework in v3, with community comments addressed

27. Summary

• Shared Virtual Memory (SVM) enables efficient workload submission by letting software program CPU virtual addresses directly into the device
• The Intel® VT-d 3.0 specification extends SVM usage together with Intel® Scalable I/O Virtualization
• Holistic enhancements are introduced across multiple kernel and user-space components to enable SVM virtualization in KVM
• New kernel APIs are kept neutral to support all kinds of virtual IOMMUs (either emulated or para-virtualized)

28. Q&A
