Processes/Threads


1 .
CS194-24
Advanced Operating Systems Structures and Implementation
Lecture 5
Processes/Threads
February 5th, 2014
Prof. John Kubiatowicz
http://inst.eecs.berkeley.edu/~cs194-24

Goals for Today
• Processes
• Fork/Exec
• Multithreading/POSIX support for threads
• Interprocess Communication
Interactive is important! Ask Questions!
Note: Some slides and/or pictures in the following are adapted from slides ©2013
2/5/14 Kubiatowicz CS194-24 ©UCB Fall 2014 Lec 5.2

Recall: X86 Memory model with segmentation
(figure)

Recall: UNIX Process
• Process: Operating system abstraction to represent what is needed to run a single program
– Originally: a single, sequential stream of execution in its own address space
– Modern Process: multiple threads in same address space!
– Different from a “program”: a process is an active program and related resources
• Two parts:
– Threads of execution (or just “threads”)
» Code executed as one or more sequential streams of execution (threads)
» Each thread includes its own state of CPU registers
» Threads either multiplexed in software (OS) or hardware (simultaneous multithreading/hyperthreading)
– Protected Resources:
» Main Memory State (contents of Address Space)
» I/O state (i.e. file descriptors)
• This is a virtual machine abstraction
– Some might say that the only point of an OS is to support a clean Process abstraction

2 .
Recall: Modern “Lightweight” Process with Threads
• Thread: a sequential execution stream within process (Sometimes called a “Lightweight process”)
– Process still contains a single Address Space
– No protection between threads
• Multithreading: a single program made up of a number of different concurrent activities
– Sometimes called multitasking, as in Ada…
• Why separate the concept of a thread from that of a process?
– Discuss the “thread” part of a process (concurrency)
– Separate from the “address space” (Protection)
– Heavyweight Process = Process with one thread
• Linux confuses this model a bit:
– Processes and Threads are “the same”
– Really means: Threads are managed separately and can share a variety of resources (such as address spaces)
– Threads related to one another in fashion similar to Processes with Threads within

Single and Multithreaded Processes
(figure)
• Threads encapsulate concurrency: “Active” component
• Address spaces encapsulate protection: “Passive” part
– Keeps buggy program from trashing the system
• How do thread stacks stay separate from one another?
– They may not!

Process comprises
• What is in a process?
– an address space – usually protected and virtual – mapped into memory
– the code for the running program
– the data for the running program
– an execution stack and stack pointer (SP); also heap
– the program counter (PC)
– a set of processor registers – general purpose and status
– a set of system resources
» files, network connections, pipes, …
» privileges, (human) user association, …
» Personalities (linux)
– …
See also Silberschatz, figure 3.1

Processes – Address Space
(Figure: virtual address space from 0x00000000 to 0xFFFFFFFF. From the bottom: program code (text), where the PC points; static data; heap (dynamically allocated); and at the top the stack (dynamically allocated), where the SP points.)

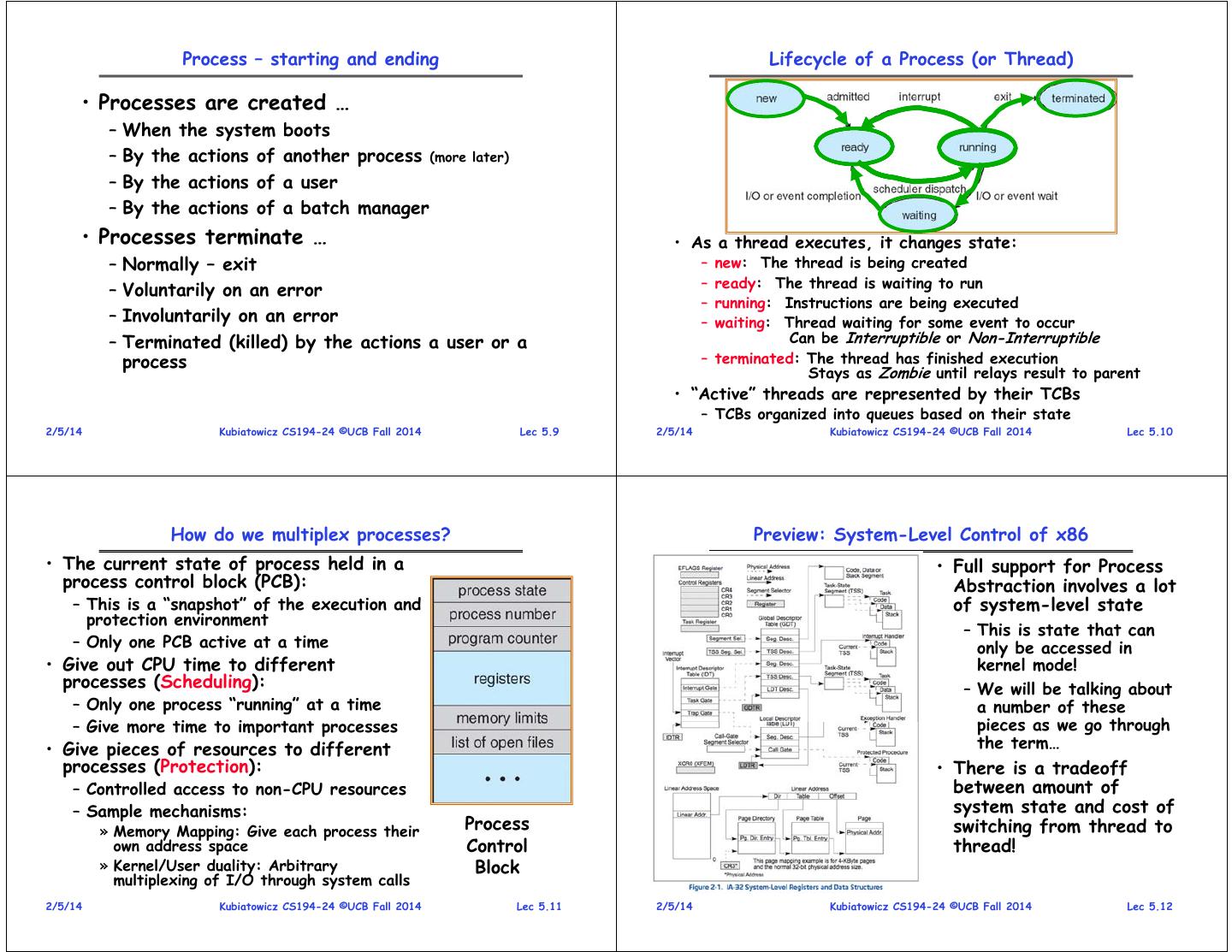

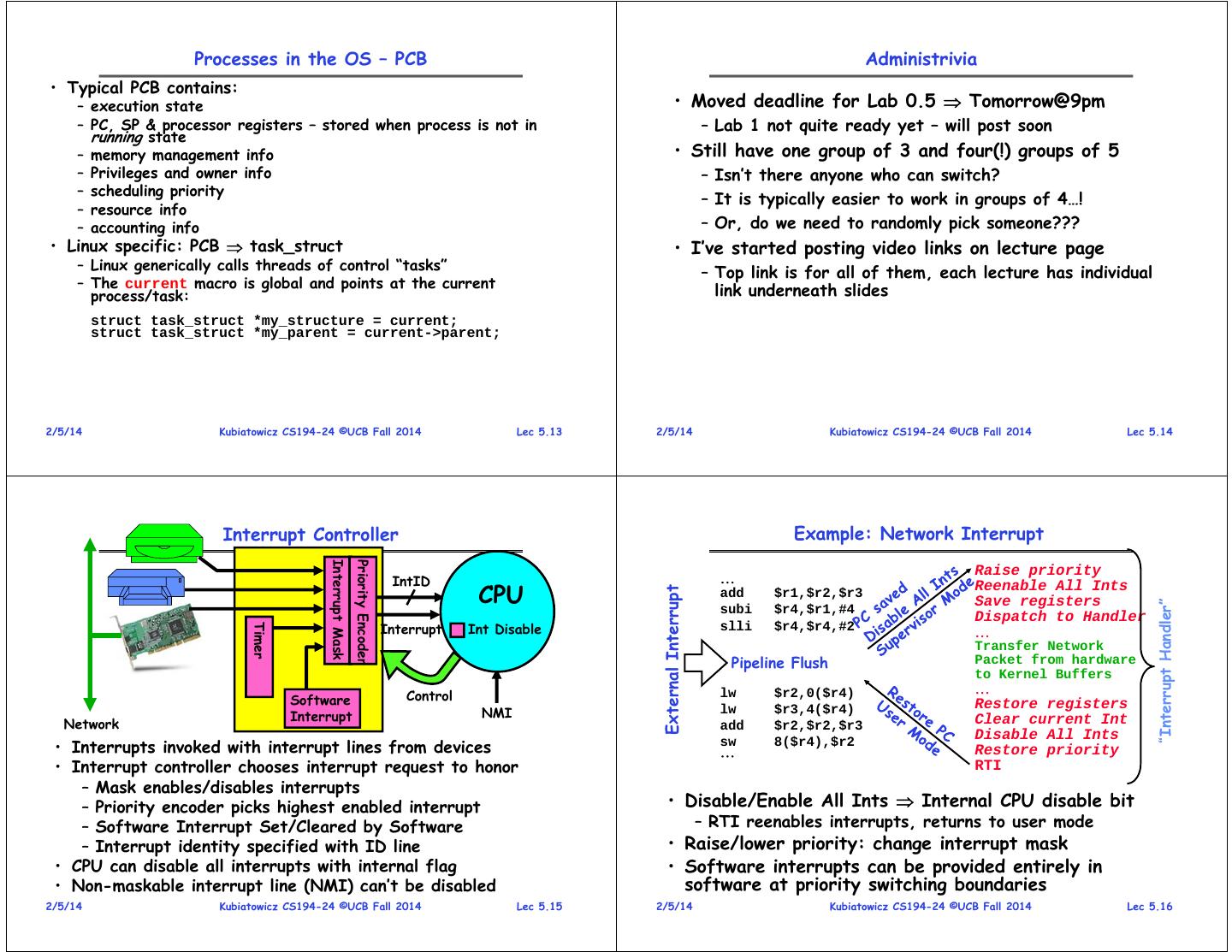

3 .
Process – starting and ending
• Processes are created …
– When the system boots
– By the actions of another process (more later)
– By the actions of a user
– By the actions of a batch manager
• Processes terminate …
– Normally – exit
– Voluntarily on an error
– Involuntarily on an error
– Terminated (killed) by the actions of a user or a process

Lifecycle of a Process (or Thread)
• As a thread executes, it changes state:
– new: The thread is being created
– ready: The thread is waiting to run
– running: Instructions are being executed
– waiting: Thread waiting for some event to occur (can be interruptible or non-interruptible)
– terminated: The thread has finished execution (stays as a zombie until it relays its result to the parent)
• “Active” threads are represented by their TCBs
– TCBs organized into queues based on their state

How do we multiplex processes?
• The current state of process held in a process control block (PCB):
– This is a “snapshot” of the execution and protection environment
– Only one PCB active at a time
• Give out CPU time to different processes (Scheduling):
– Only one process “running” at a time
– Give more time to important processes
• Give pieces of resources to different processes (Protection):
– Controlled access to non-CPU resources
– Sample mechanisms:
» Memory Mapping: Give each process their own address space
» Kernel/User duality: Arbitrary multiplexing of I/O through system calls
(Figure: Process Control Block)

Preview: System-Level Control of x86
• Full support for Process Abstraction involves a lot of system-level state
– This is state that can only be accessed in kernel mode!
– We will be talking about a number of these pieces as we go through the term…
• There is a tradeoff between amount of system state and cost of switching from thread to thread!

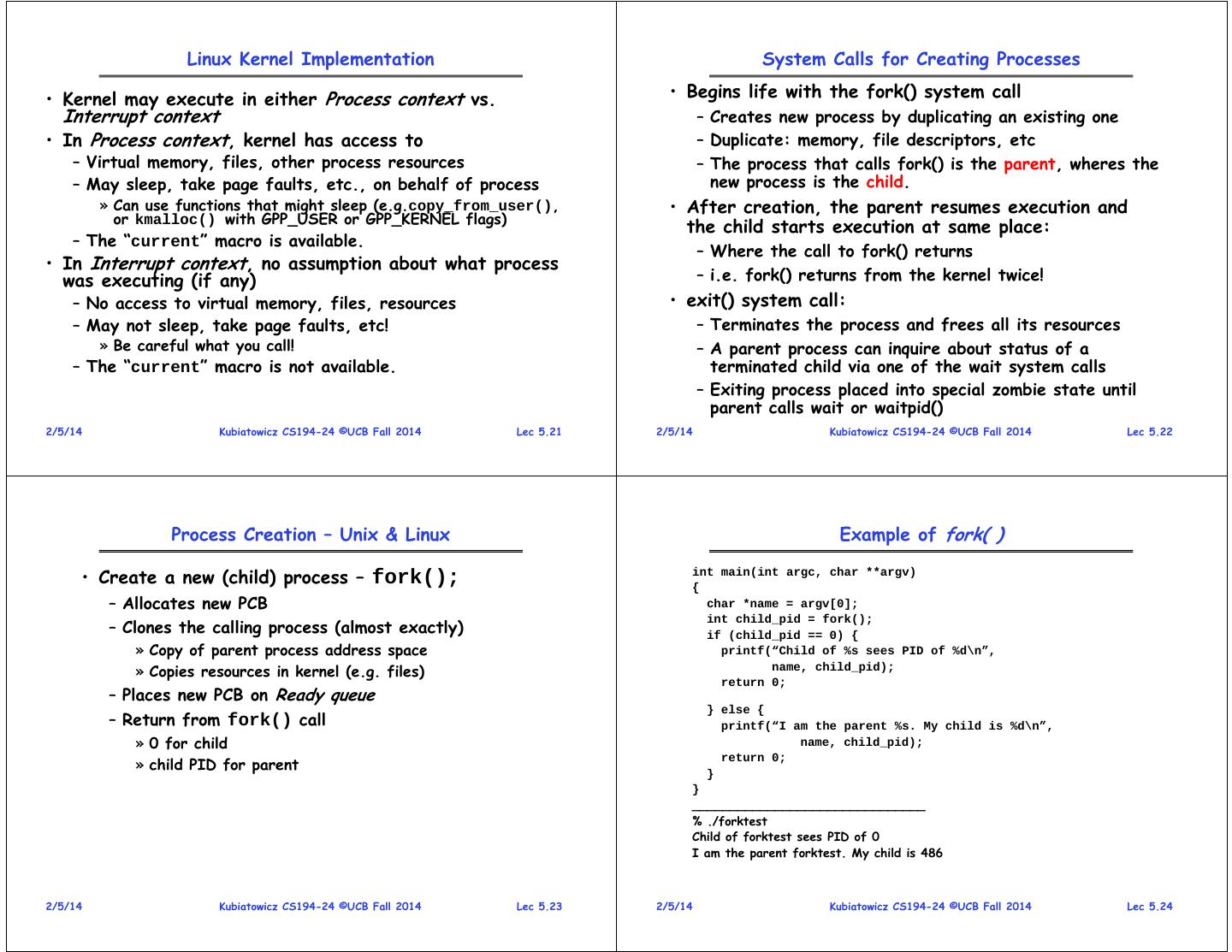

4 .
Processes in the OS – PCB
• Typical PCB contains:
– execution state
– PC, SP & processor registers – stored when process is not in running state
– memory management info
– Privileges and owner info
– scheduling priority
– resource info
– accounting info
• Linux specific: PCB = task_struct
– Linux generically calls threads of control “tasks”
– The current macro is global and points at the current process/task:

    struct task_struct *my_structure = current;
    struct task_struct *my_parent = current->parent;

Administrivia
• Moved deadline for Lab 0.5: Tomorrow@9pm
– Lab 1 not quite ready yet – will post soon
• Still have one group of 3 and four(!) groups of 5
– Isn’t there anyone who can switch?
– It is typically easier to work in groups of 4…!
– Or, do we need to randomly pick someone???
• I’ve started posting video links on lecture page
– Top link is for all of them, each lecture has individual link underneath slides

Interrupt Controller
(Figure: interrupt lines from devices such as the Timer and Network, plus a Software Interrupt, pass through the Interrupt Mask to a Priority Encoder, which signals the CPU with an Interrupt and an IntID; the CPU has an internal Int Disable flag, and the NMI line goes to the CPU directly.)
• Interrupts invoked with interrupt lines from devices
• Interrupt controller chooses interrupt request to honor
– Mask enables/disables interrupts
– Priority encoder picks highest enabled interrupt
– Software Interrupt Set/Cleared by Software
– Interrupt identity specified with ID line
• CPU can disable all interrupts with internal flag
• Non-maskable interrupt line (NMI) can’t be disabled

Example: Network Interrupt
(Figure: a user instruction stream add $r1,$r2,$r3; subi $r4,$r1,#4; slli $r4,$r4,#2; lw $r2,0($r4); lw $r3,4($r4); add $r2,$r2,$r3; sw 8($r4),$r2 is hit by an external interrupt, which flushes the pipeline and enters the “Interrupt Handler”: Raise priority; Reenable All Ints; Save registers; Dispatch to Handler (Transfer Network Packet from hardware to Kernel Buffers); Restore registers; Clear current Int; Disable All Ints; Restore priority; RTI.)
• Disable/Enable All Ints: internal CPU disable bit
– RTI reenables interrupts, returns to user mode
• Raise/lower priority: change interrupt mask
• Software interrupts can be provided entirely in software at priority switching boundaries

5 .
CPU Switch From Process to Process
(figure)
• This is also called a “context switch”
• Code executed in kernel above is overhead
– Overhead sets minimum practical switching time
– Less overhead with SMT/hyperthreading, but… contention for resources instead

Processes – State Queues
• The OS maintains a collection of process state queues
– typically one queue for each state – e.g., ready, waiting, …
– each PCB is put onto a queue according to its current state
– as a process changes state, its PCB is unlinked from one queue, and linked to another
• Process state and the queues change in response to events – interrupts, traps

Process Scheduling
(figure)
• PCBs move from queue to queue as they change state
– Decisions about which order to remove from queues are Scheduling decisions
– Many algorithms possible (few weeks from now)

Processes – Privileges
• Users are given privileges by the system administrator
• Privileges determine what rights a user has for an object.
– Unix/Linux – Read|Write|eXecute by user, group and “other” (i.e., “world”)
– WinNT – Access Control List
• Processes “inherit” privileges from user

6 .
Linux Kernel Implementation
• Kernel may execute in either Process context or Interrupt context
• In Process context, kernel has access to
– Virtual memory, files, other process resources
– May sleep, take page faults, etc., on behalf of process
» Can use functions that might sleep (e.g. copy_from_user(), or kmalloc() with GFP_USER or GFP_KERNEL flags)
– The “current” macro is available.
• In Interrupt context, no assumption about what process was executing (if any)
– No access to virtual memory, files, resources
– May not sleep, take page faults, etc!
» Be careful what you call!
– The “current” macro is not available.

System Calls for Creating Processes
• Begins life with the fork() system call
– Creates new process by duplicating an existing one
– Duplicate: memory, file descriptors, etc
– The process that calls fork() is the parent, whereas the new process is the child.
• After creation, the parent resumes execution and the child starts execution at same place:
– Where the call to fork() returns
– i.e. fork() returns from the kernel twice!
• exit() system call:
– Terminates the process and frees all its resources
– A parent process can inquire about status of a terminated child via one of the wait system calls
– Exiting process placed into special zombie state until parent calls wait() or waitpid()

Process Creation – Unix & Linux
• Create a new (child) process – fork();
– Allocates new PCB
– Clones the calling process (almost exactly)
» Copy of parent process address space
» Copies resources in kernel (e.g. files)
– Places new PCB on Ready queue
– Return from fork() call
» 0 for child
» child PID for parent

Example of fork( )

    #include <stdio.h>
    #include <sys/types.h>
    #include <sys/wait.h>
    #include <unistd.h>

    int main(int argc, char **argv) {
        char *name = argv[0];
        pid_t child_pid = fork();
        if (child_pid < 0) {            /* fork failed: no child was created */
            perror("fork");
            return 1;
        } else if (child_pid == 0) {    /* child: fork() returned 0 */
            printf("Child of %s sees PID of %d\n", name, (int)child_pid);
            return 0;
        } else {                        /* parent: fork() returned the child's PID */
            printf("I am the parent %s. My child is %d\n", name, (int)child_pid);
            waitpid(child_pid, NULL, 0);   /* reap the child so it does not stay a zombie */
            return 0;
        }
    }

    % ./forktest
    Child of ./forktest sees PID of 0
    I am the parent ./forktest. My child is 486

7 .
A (more compelling?) example of fork( ): Web Server
Serial Version:

    /* listen_for_clients() and handle_client_request() are application helpers (not shown) */
    int main() {
        int listen_fd = listen_for_clients();
        while (1) {
            int client_fd = accept(listen_fd, NULL, NULL);
            handle_client_request(client_fd);
            close(client_fd);
        }
    }

Process Per Request:

    int main() {
        int listen_fd = listen_for_clients();
        int status;
        while (1) {
            int client_fd = accept(listen_fd, NULL, NULL);
            if (fork() == 0) {
                handle_client_request(client_fd);
                close(client_fd);   // Close FD in child when done
                exit(0);
            } else {
                close(client_fd);   // Close FD in parent
                // Let exited children rest in peace!
                while (waitpid(-1, &status, WNOHANG) > 0);
            }
        }
    }

Parent-Child relationship
(Figure: Typical process tree for Solaris system)
• Every Process has a parentage
– A “parent” is a thread that creates another thread
– A child of a parent was created by that parent

Starting New Programs
• Exec System Call:
– int exec(char *prog, char **argv) (a simplified signature; the real calls are the exec family, e.g. execv() and execve())
– Check privileges and file type
– Loads program at path prog into address space
» Replacing previous contents!
» Execution starts at main()
– Initializes context – e.g. passes arguments
» *argv
– Place PCB on ready queue
– Preserves pipes, open files, privileges, etc.
• Running a new program in Unix/Linux
– fork() followed by exec()
– Creates a new process as clone of previous one
– First thing that clone does is to replace itself with new program

Fork + Exec – shell-like

    #include <stdio.h>
    #include <stdlib.h>
    #include <sys/types.h>
    #include <sys/wait.h>
    #include <unistd.h>

    extern char **environ;   /* current environment, handed to the new program */

    int main(int argc, char **argv) {
        char *argvNew[5];
        pid_t pid;
        if ((pid = fork()) < 0) {
            printf("Fork error\n");
            exit(1);
        } else if (pid == 0) {   /* child process */
            argvNew[0] = "/bin/ls";
            argvNew[1] = "-l";
            argvNew[2] = NULL;
            if (execve(argvNew[0], argvNew, environ) < 0) {
                printf("Execve error\n");
                exit(1);
            }
        } else {                 /* parent */
            waitpid(pid, NULL, 0);   /* wait for the child to finish */
        }
        return 0;
    }

8 .
How does fork() actually work in Linux?
• Semantics are that fork() duplicates a lot from the parent:
– Memory space, File Descriptors, Security Context
• How to make this cheap?
– Copy on Write!
– Instead of copying memory, copy page-tables
• Another option: vfork()
– Same effect as fork(), but page tables not copied
– Instead, child executes in parent’s address space
– Parent blocked until child calls “exec()” or exits
– Child not allowed to write to the address space
(Figure: page mappings “Immediately after fork” and “After Process-1 writes Page C”.)

Clone system call in Linux
• What about other shared state?
– Clone() system call!
– Can select exactly what state to copy, what to create
– Difference between creating process and thread in Linux is just different arguments to clone!
• Some interesting clone flags:
– CLONE_FILES: Parent and Child share open files
– CLONE_FS: Parent and child share filesystem info
– CLONE_PARENT: Child has same parent as the caller
– CLONE_THREAD: Parent and child in same thread group
– CLONE_VFORK: do a vfork()
– CLONE_VM: Parent and Child share address space
– CLONE_SIGHAND: Parent and Child share signal handlers (each keeps its own signal mask)
• So – implementations of calls:
– Fork(): clone(SIGCHLD)
– Vfork(): clone(CLONE_VFORK | CLONE_VM | SIGCHLD)
– Thread creation: clone(CLONE_VM | CLONE_FS | CLONE_FILES | CLONE_SIGHAND)

Waiting for a Process
• Multiple variations of wait function
» Including non-blocking wait functions
• Waits until child process terminates
» Acquires termination code from child
» Child process is destroyed by kernel
• Zombie: a terminated process that has not yet been waited for
» Hence, cannot go away
» See Love, Linux Kernel Development, Chapter 3

Processes – Windows
• Windows NT/XP – combines fork & exec
– CreateProcess(10 arguments)
– Not a parent child relationship
– Note – privileges required to create a new process

9 .
Windows, Unix, and Linux (traditional)
• Processes are in separate address spaces
» By default, no shared memory
• Processes are unit of scheduling
» A process is ready, waiting, or running
• Processes are unit of resource allocation
» Files, I/O, memory, privileges, …
• Processes are used for (almost) everything!

A Note on Implementation
• Many OS implementations include (parts of) kernel in every address space
– Protected
– Easy to access
– Allows kernel to see into client processes
» Transferring data
» Examining state
» …

Processes – Address Space
(Figure: virtual address space from 0x00000000 to 0xFFFFFFFF. User Space, from the bottom: code (text), where the PC points; static data; heap (dynamically allocated); stack (dynamically allocated), where the SP points. Kernel Space at the top: Kernel Code and Data.)
• 32-bit Linux & Win XP – 3G/1G user space/kernel space

Processes in the OS – Representation
• To users (and other processes) a process is identified by its Process ID (PID)
• In the OS, processes are represented by entries in a Process Table (PT)
– PID is index to (or pointer to) a PT entry
– PT entry = Process Control Block (PCB)
• PCB is a large data structure that contains or points to all info about the process
– Linux – defined in task_struct – over 70 fields
» see include/linux/sched.h
– Windows XP – defined in EPROCESS – about 60 fields

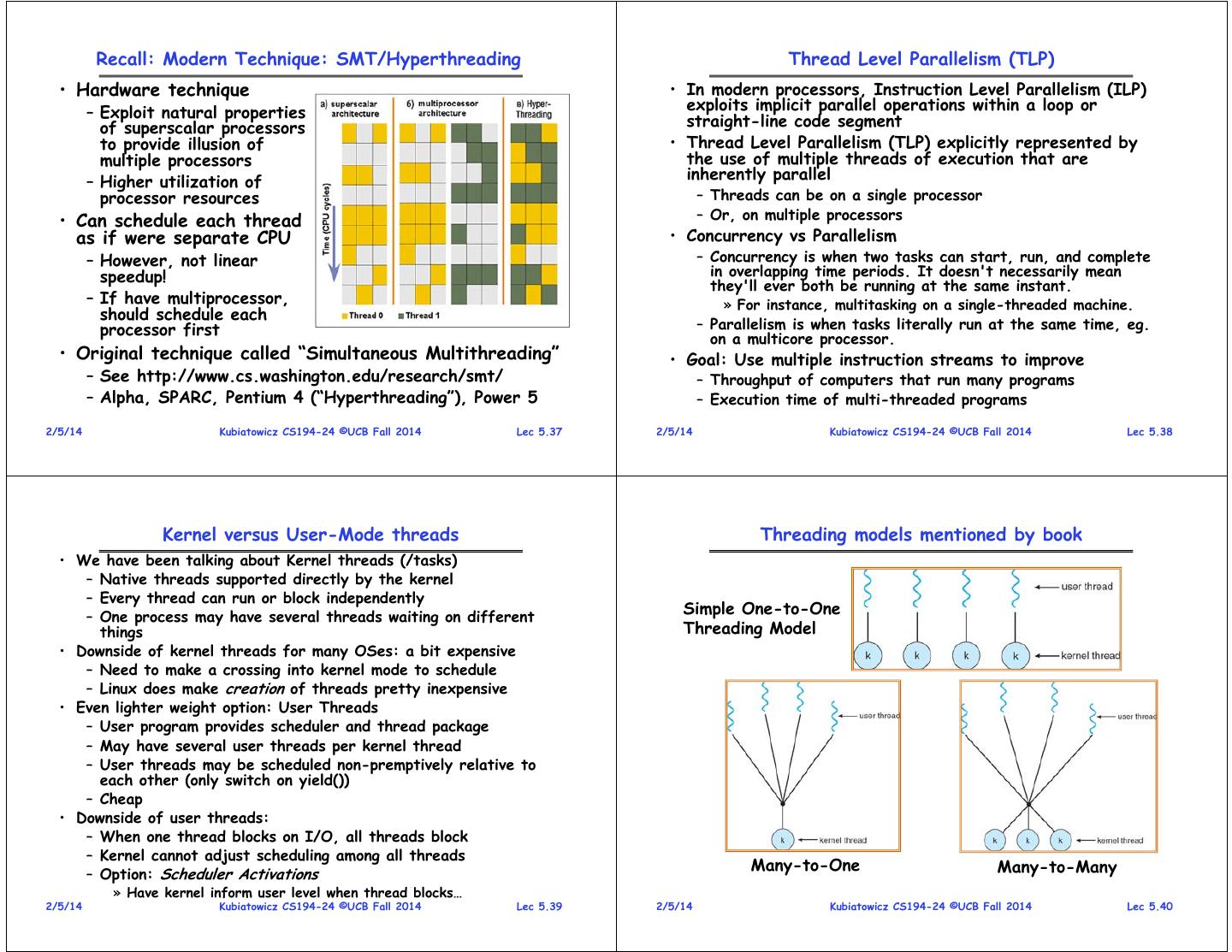

10 .
Recall: Modern Technique: SMT/Hyperthreading
• Hardware technique
– Exploit natural properties of superscalar processors to provide illusion of multiple processors
– Higher utilization of processor resources
• Can schedule each thread as if it were a separate CPU
– However, not linear speedup!
– If you have a multiprocessor, schedule each physical processor first
• Original technique called “Simultaneous Multithreading”
– See http://www.cs.washington.edu/research/smt/
– Alpha, SPARC, Pentium 4 (“Hyperthreading”), Power 5

Thread Level Parallelism (TLP)
• In modern processors, Instruction Level Parallelism (ILP) exploits implicit parallel operations within a loop or straight-line code segment
• Thread Level Parallelism (TLP) is explicitly represented by the use of multiple threads of execution that are inherently parallel
– Threads can be on a single processor
– Or, on multiple processors
• Concurrency vs Parallelism
– Concurrency is when two tasks can start, run, and complete in overlapping time periods. It doesn't necessarily mean they'll ever both be running at the same instant.
» For instance, multitasking on a single-threaded machine.
– Parallelism is when tasks literally run at the same time, e.g., on a multicore processor.
• Goal: Use multiple instruction streams to improve
– Throughput of computers that run many programs
– Execution time of multi-threaded programs

Kernel versus User-Mode threads
• We have been talking about Kernel threads (/tasks)
– Native threads supported directly by the kernel
– Every thread can run or block independently
– One process may have several threads waiting on different things
• Downside of kernel threads for many OSes: a bit expensive
– Need to make a crossing into kernel mode to schedule
– Linux does make creation of threads pretty inexpensive
• Even lighter weight option: User Threads
– User program provides scheduler and thread package
– May have several user threads per kernel thread
– User threads may be scheduled non-preemptively relative to each other (only switch on yield())
– Cheap
• Downside of user threads:
– When one thread blocks on I/O, all threads block
– Kernel cannot adjust scheduling among all threads
– Option: Scheduler Activations
» Have kernel inform user level when thread blocks…

Threading models mentioned by book
(Figures: Simple One-to-One Threading Model; Many-to-One; Many-to-Many)

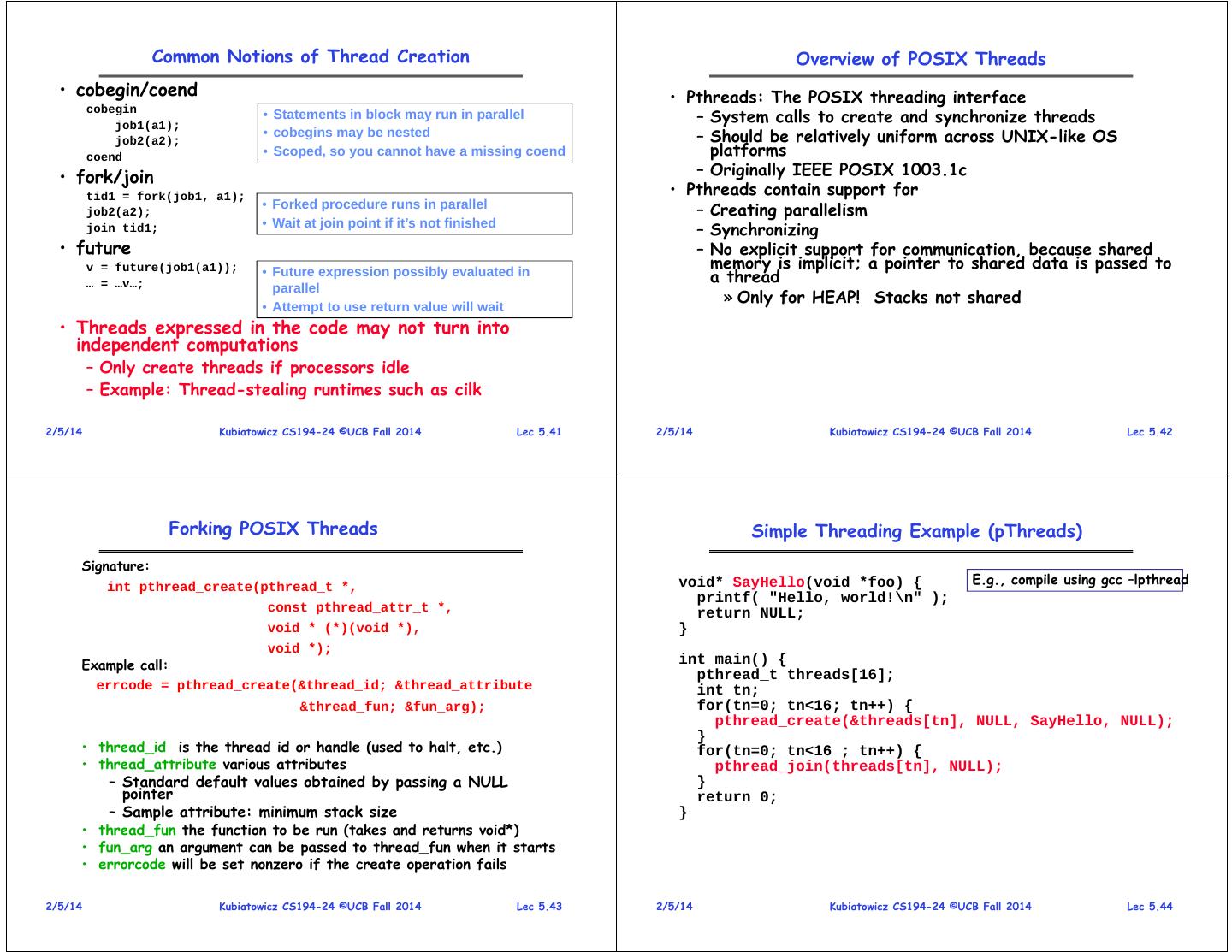

11 .
Common Notions of Thread Creation
• cobegin/coend

    cobegin
        job1(a1);
        job2(a2);
    coend

– Statements in block may run in parallel
– cobegins may be nested
– Scoped, so you cannot have a missing coend
• fork/join

    tid1 = fork(job1, a1);
    job2(a2);
    join tid1;

– Forked procedure runs in parallel
– Wait at join point if it’s not finished
• future

    v = future(job1(a1));
    … = …v…;

– Future expression possibly evaluated in parallel
– Attempt to use return value will wait
• Threads expressed in the code may not turn into independent computations
– Only create threads if processors idle
– Example: Thread-stealing runtimes such as cilk

Overview of POSIX Threads
• Pthreads: The POSIX threading interface
– System calls to create and synchronize threads
– Should be relatively uniform across UNIX-like OS platforms
– Originally IEEE POSIX 1003.1c
• Pthreads contain support for
– Creating parallelism
– Synchronizing
– No explicit support for communication, because shared memory is implicit; a pointer to shared data is passed to a thread
» Only for HEAP! Stacks not shared

Forking POSIX Threads
Signature:

    int pthread_create(pthread_t *,
                       const pthread_attr_t *,
                       void * (*)(void *),
                       void *);

Example call:

    errcode = pthread_create(&thread_id, &thread_attribute,
                             &thread_fun, &fun_arg);

• thread_id is the thread id or handle (used to halt, etc.)
• thread_attribute: various attributes
– Standard default values obtained by passing a NULL pointer
– Sample attribute: minimum stack size
• thread_fun: the function to be run (takes and returns void*)
• fun_arg: an argument passed to thread_fun when it starts
• errcode will be set to a nonzero error number if the create operation fails

Simple Threading Example (pThreads)
E.g., compile using gcc -pthread (or link with -lpthread)

    #include <pthread.h>
    #include <stdio.h>

    void *SayHello(void *foo) {
        printf("Hello, world!\n");
        return NULL;
    }

    int main() {
        pthread_t threads[16];
        int tn;
        for (tn = 0; tn < 16; tn++) {
            pthread_create(&threads[tn], NULL, SayHello, NULL);
        }
        for (tn = 0; tn < 16; tn++) {
            pthread_join(threads[tn], NULL);
        }
        return 0;
    }

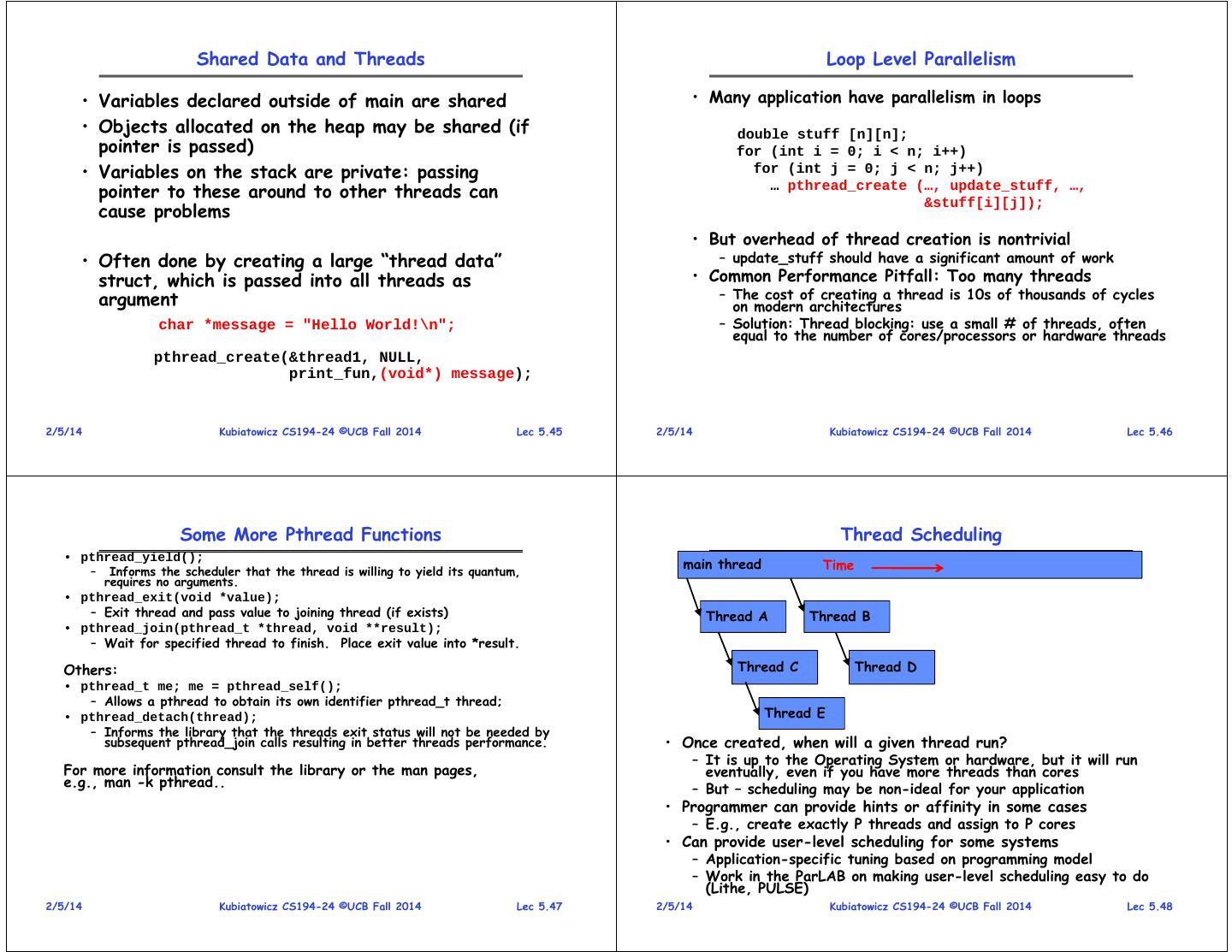

12 .
Shared Data and Threads
• Variables declared outside of main are shared
• Objects allocated on the heap may be shared (if pointer is passed)
• Variables on the stack are private: passing pointer to these around to other threads can cause problems
• Often done by creating a large “thread data” struct, which is passed into all threads as argument

    char *message = "Hello World!\n";
    pthread_create(&thread1, NULL, print_fun, (void *) message);

Loop Level Parallelism
• Many applications have parallelism in loops

    double stuff[n][n];
    for (int i = 0; i < n; i++)
        for (int j = 0; j < n; j++)
            … pthread_create(…, update_stuff, …, &stuff[i][j]);

• But overhead of thread creation is nontrivial
– update_stuff should have a significant amount of work
• Common Performance Pitfall: Too many threads
– The cost of creating a thread is 10s of thousands of cycles on modern architectures
– Solution: Thread blocking: use a small # of threads, often equal to the number of cores/processors or hardware threads

Some More Pthread Functions
• pthread_yield();
– Informs the scheduler that the thread is willing to yield its quantum, requires no arguments. (Non-standard; the POSIX equivalent is sched_yield().)
• pthread_exit(void *value);
– Exit thread and pass value to joining thread (if exists)
• pthread_join(pthread_t thread, void **result);
– Wait for specified thread to finish. Place exit value into *result.
Others:
• pthread_t me; me = pthread_self();
– Allows a pthread to obtain its own identifier
• pthread_t thread; pthread_detach(thread);
– Informs the library that the thread’s exit status will not be needed by subsequent pthread_join calls, resulting in better threads performance.
For more information consult the library or the man pages, e.g., man -k pthread.

Thread Scheduling
(Figure: the main thread and Threads A through E laid out over time.)
• Once created, when will a given thread run?
– It is up to the Operating System or hardware, but it will run eventually, even if you have more threads than cores
– But – scheduling may be non-ideal for your application
• Programmer can provide hints or affinity in some cases
– E.g., create exactly P threads and assign to P cores
• Can provide user-level scheduling for some systems
– Application-specific tuning based on programming model
– Work in the ParLAB on making user-level scheduling easy to do (Lithe, PULSE)

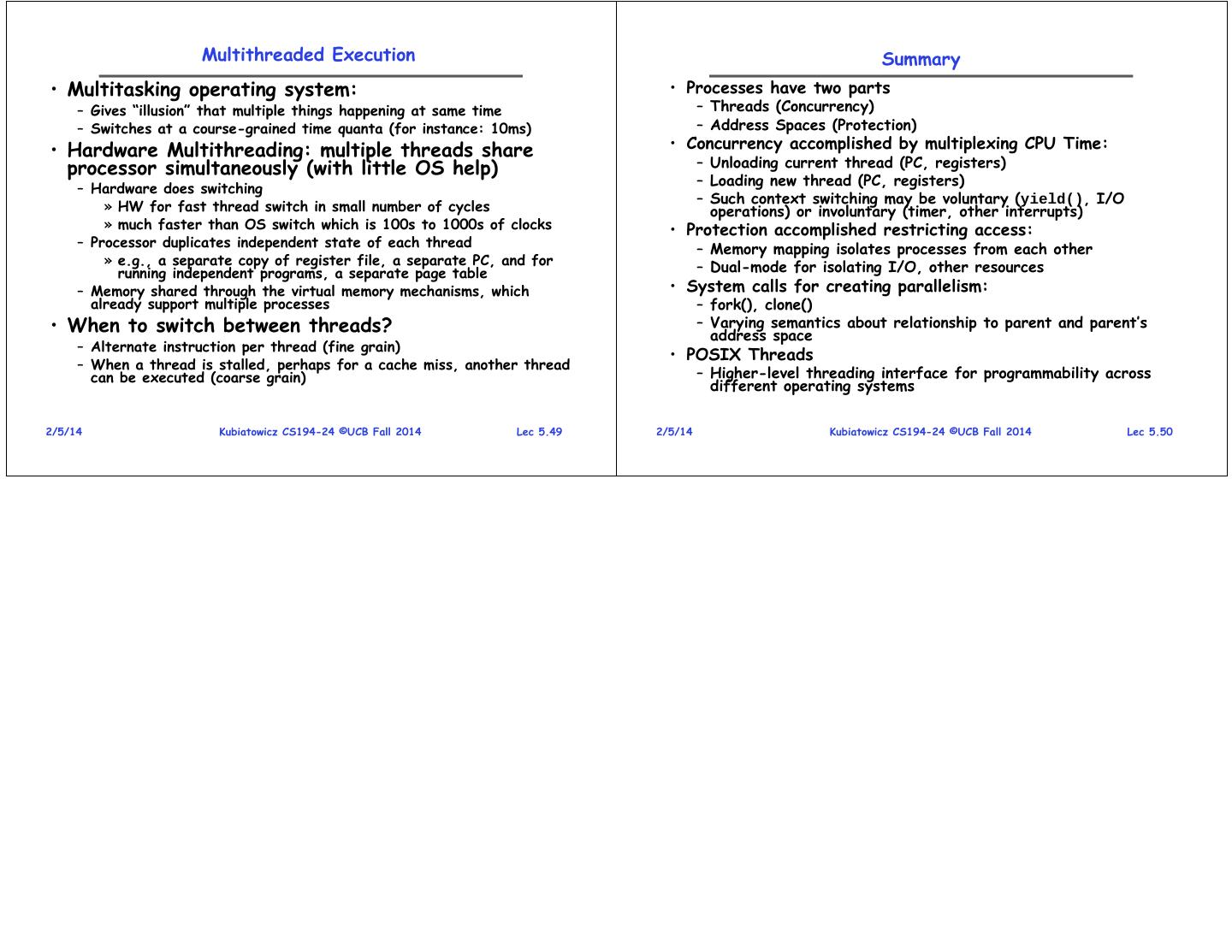

13 .
Multithreaded Execution
• Multitasking operating system:
– Gives “illusion” that multiple things happening at same time
– Switches at a coarse-grained time quantum (for instance: 10ms)
• Hardware Multithreading: multiple threads share processor simultaneously (with little OS help)
– Hardware does switching
» HW for fast thread switch in small number of cycles
» much faster than OS switch which is 100s to 1000s of clocks
– Processor duplicates independent state of each thread
» e.g., a separate copy of register file, a separate PC, and for running independent programs, a separate page table
– Memory shared through the virtual memory mechanisms, which already support multiple processes
• When to switch between threads?
– Alternate instruction per thread (fine grain)
– When a thread is stalled, perhaps for a cache miss, another thread can be executed (coarse grain)

Summary
• Processes have two parts
– Threads (Concurrency)
– Address Spaces (Protection)
• Concurrency accomplished by multiplexing CPU Time:
– Unloading current thread (PC, registers)
– Loading new thread (PC, registers)
– Such context switching may be voluntary (yield(), I/O operations) or involuntary (timer, other interrupts)
• Protection accomplished by restricting access:
– Memory mapping isolates processes from each other
– Dual-mode for isolating I/O, other resources
• System calls for creating parallelism:
– fork(), clone()
– Varying semantics about relationship to parent and parent’s address space
• POSIX Threads
– Higher-level threading interface for programmability across different operating systems