Modern Architecture


1 . CS194-24 Advanced Operating Systems Structures and Implementation

Lecture 4: OS Structure (Con’t), Modern Architecture

February 3rd, 2014

Prof. John Kubiatowicz

http://inst.eecs.berkeley.edu/~cs194-24

Goals for Today

- OS Organizations (Con’t): The Linux Kernel
- Processes, Threads, and Such

Interactive is important! Ask Questions!

Note: Some slides and/or pictures in the following are adapted from slides ©2013

2/3/14 Kubiatowicz CS194-24 ©UCB Fall 2014 Lec 4.2

Recall: OS Resources – at the center of it all!

(Figure: independent requesters reach resources through access control and multiplexing)

- What do modern OSs do?
  - Why are all of these pieces running together?
  - Is this complexity necessary?
- Control of Resources
  - Access/No Access/Partial Access
    - Check every access to see if it is allowed
  - Resource Multiplexing
    - When multiple valid requests occur at the same time, how do we multiplex access?
    - What fraction of a resource can a requester get?
  - Performance Isolation
    - Can requests from one entity prevent requests from another?
- What or Who is a requester???
  - Process? User? Public Key?
  - Think of this as a “principal”

Recall: System Calls: Details

- Challenge: Interaction Despite Isolation
  - How to isolate processes and their resources…
    - While permitting them to request help from the kernel
    - Processes interact while maintaining policies such as security, QoS, etc.
  - Letting processes interact with one another in a controlled way
    - Through messages, shared memory, etc.
- Enter the System Call interface
  - Layer between the hardware and user-space processes
  - Programming interface to the services provided by the OS
- Mostly accessed by programs via a high-level Application Program Interface (API) rather than directly
  - Get at system calls by linking with libraries in glibc
- Three most common APIs are:
  - Win32 API for Windows
  - POSIX API for POSIX-based systems (including virtually all versions of UNIX, Linux, and Mac OS X)
  - Java API for the Java virtual machine (JVM)

(Figure: a call to printf() in the program enters printf() in the C library, which issues the write() system call)

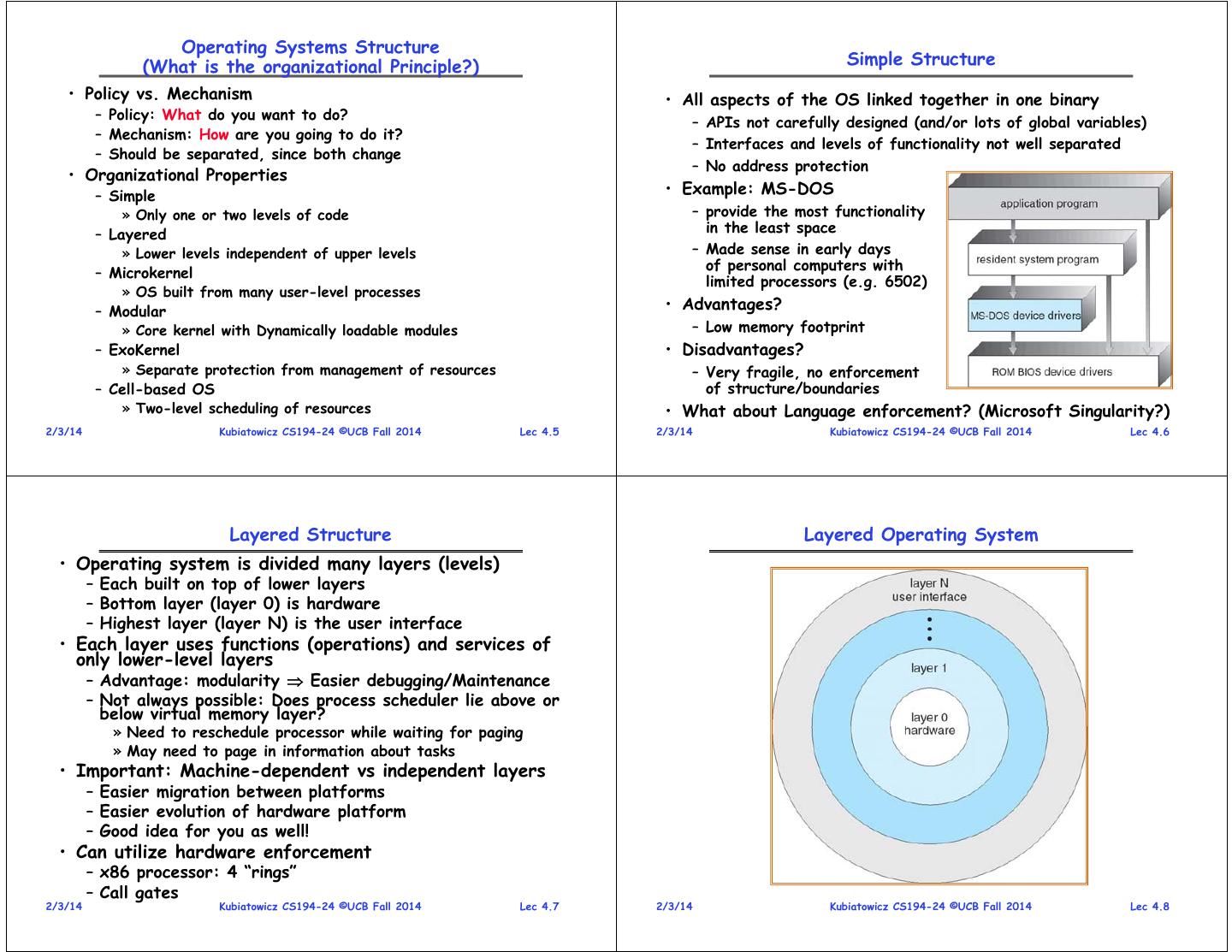

2 . Operating Systems Structure (What is the organizational Principle?)

- Policy vs. Mechanism
  - Policy: What do you want to do?
  - Mechanism: How are you going to do it?
  - Should be separated, since both change
- Organizational Properties
  - Simple
    - Only one or two levels of code
  - Layered
    - Lower levels independent of upper levels
  - Microkernel
    - OS built from many user-level processes
  - Modular
    - Core kernel with dynamically loadable modules
  - ExoKernel
    - Separate protection from management of resources
  - Cell-based OS
    - Two-level scheduling of resources

Simple Structure

- All aspects of the OS linked together in one binary
  - APIs not carefully designed (and/or lots of global variables)
  - Interfaces and levels of functionality not well separated
  - No address protection
- Example: MS-DOS
  - Written to provide the most functionality in the least space
  - Made sense in early days of personal computers with limited processors (e.g. 6502)
- Advantages?
  - Low memory footprint
- Disadvantages?
  - Very fragile, no enforcement of structure/boundaries
- What about Language enforcement? (Microsoft Singularity?)

Layered Structure

- Operating system is divided into many layers (levels)
  - Each built on top of lower layers
  - Bottom layer (layer 0) is hardware
  - Highest layer (layer N) is the user interface
- Each layer uses functions (operations) and services of only lower-level layers
  - Advantage: modularity, hence easier debugging/maintenance
  - Not always possible: Does the process scheduler lie above or below the virtual memory layer?
    - Need to reschedule the processor while waiting for paging
    - May need to page in information about tasks
- Important: Machine-dependent vs. independent layers
  - Easier migration between platforms
  - Easier evolution of hardware platform
  - Good idea for you as well!

Layered Operating System

- Can utilize hardware enforcement
  - x86 processor: 4 “rings”
  - Call gates

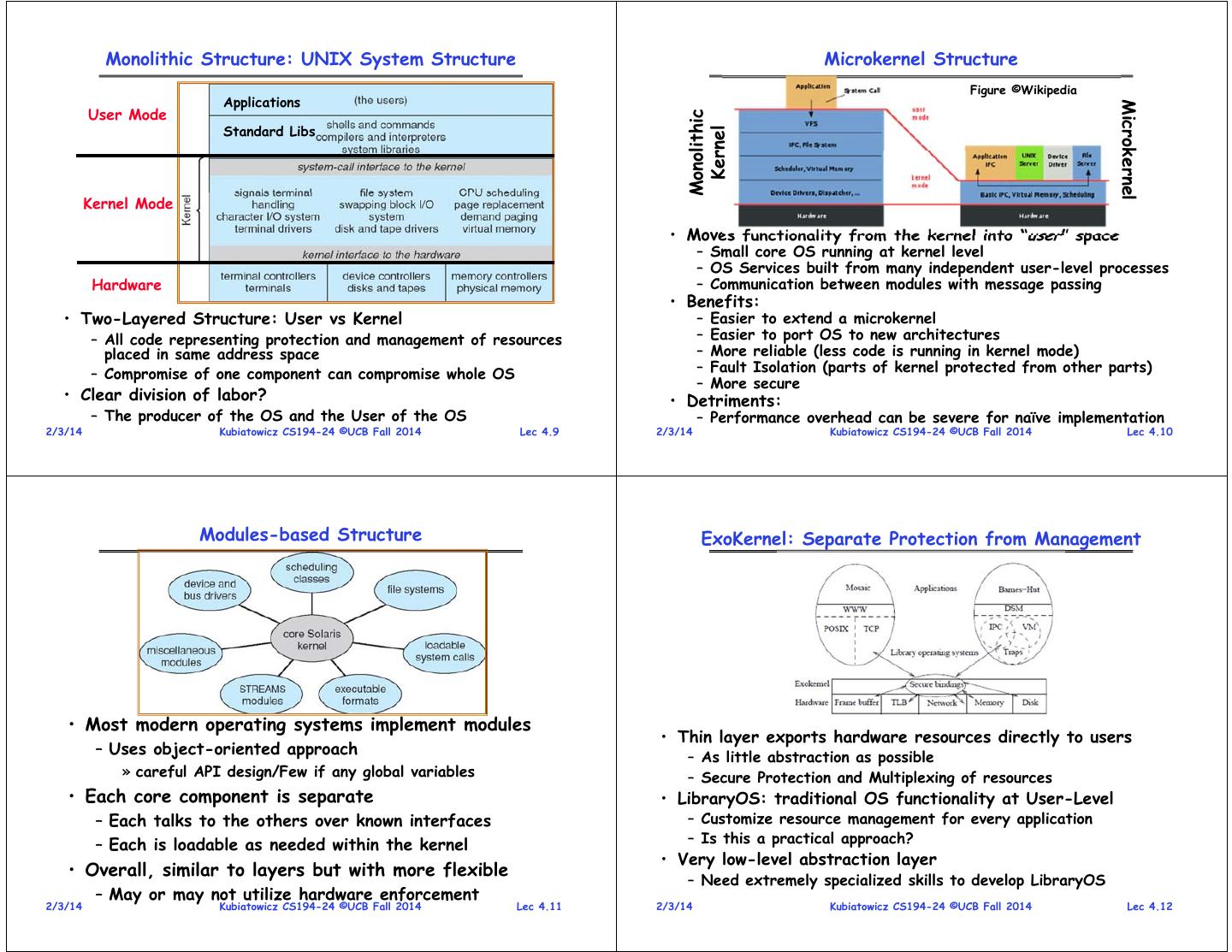

3 . Monolithic Structure: UNIX System Structure

(Figure: Applications and Standard Libs in User Mode; Kernel in Kernel Mode; Hardware below)

- Two-Layered Structure: User vs. Kernel
  - All code representing protection and management of resources placed in the same address space
  - Compromise of one component can compromise the whole OS
- Clear division of labor?
  - The producer of the OS and the User of the OS

Microkernel Structure

(Figure ©Wikipedia: Monolithic Kernel vs. Microkernel)

- Moves functionality from the kernel into “user” space
  - Small core OS running at kernel level
  - OS Services built from many independent user-level processes
  - Communication between modules with message passing
- Benefits:
  - Easier to extend a microkernel
  - Easier to port OS to new architectures
  - More reliable (less code is running in kernel mode)
  - Fault Isolation (parts of kernel protected from other parts)
  - More secure
- Detriments:
  - Performance overhead can be severe for naïve implementation

Modules-based Structure

- Most modern operating systems implement modules
  - Uses object-oriented approach
    - Careful API design/Few if any global variables
- Each core component is separate
  - Each talks to the others over known interfaces
  - Each is loadable as needed within the kernel
- Overall, similar to layers but more flexible
  - May or may not utilize hardware enforcement

ExoKernel: Separate Protection from Management

- Thin layer exports hardware resources directly to users
  - As little abstraction as possible
  - Secure Protection and Multiplexing of resources
- LibraryOS: traditional OS functionality at User-Level
  - Customize resource management for every application
  - Is this a practical approach?
- Very low-level abstraction layer
  - Need extremely specialized skills to develop LibraryOS

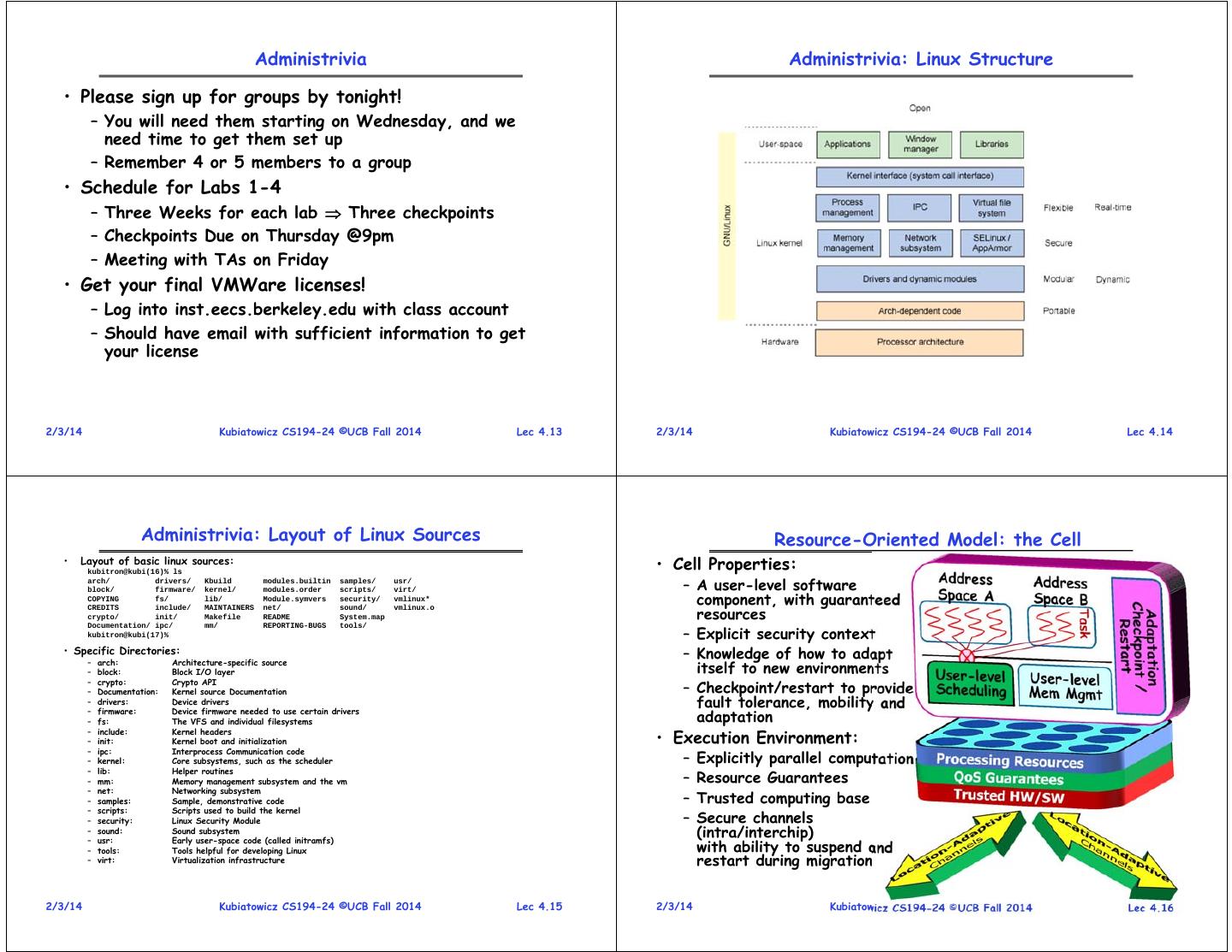

4 . Administrivia

- Please sign up for groups by tonight!
  - You will need them starting on Wednesday, and we need time to get them set up
  - Remember 4 or 5 members to a group
- Schedule for Labs 1-4
  - Three weeks for each lab, with three checkpoints
  - Checkpoints due on Thursday @9pm
  - Meeting with TAs on Friday
- Get your final VMWare licenses!
  - Log into inst.eecs.berkeley.edu with class account
  - Should have email with sufficient information to get your license

Administrivia: Linux Structure

(Figure: Linux structure)

Administrivia: Layout of Linux Sources

- Layout of basic linux sources:

```
kubitron@kubi(16)% ls
arch/ block/ drivers/ firmware/ Kbuild kernel/ modules.builtin modules.order samples/ scripts/ usr/ virt/
COPYING fs/ lib/ Module.symvers security/ vmlinux*
CREDITS include/ MAINTAINERS net/ sound/ vmlinux.o
crypto/ init/ Makefile README System.map
Documentation/ ipc/ mm/ REPORTING-BUGS tools/
kubitron@kubi(17)%
```

- Specific Directories:
  - arch: Architecture-specific source
  - block: Block I/O layer
  - crypto: Crypto API
  - Documentation: Kernel source documentation
  - drivers: Device drivers
  - firmware: Device firmware needed to use certain drivers
  - fs: The VFS and individual filesystems
  - include: Kernel headers
  - init: Kernel boot and initialization
  - ipc: Interprocess Communication code
  - kernel: Core subsystems, such as the scheduler
  - lib: Helper routines
  - mm: Memory management subsystem and the VM
  - net: Networking subsystem
  - samples: Sample, demonstrative code
  - scripts: Scripts used to build the kernel
  - security: Linux Security Module
  - sound: Sound subsystem
  - usr: Early user-space code (called initramfs)
  - tools: Tools helpful for developing Linux
  - virt: Virtualization infrastructure

Resource-Oriented Model: the Cell

- Cell Properties:
  - A user-level software component, with guaranteed resources
  - Explicit security context
  - Knowledge of how to adapt itself to new environments
  - Checkpoint/restart to provide fault tolerance, mobility and adaptation
- Execution Environment:
  - Explicitly parallel computation
  - Resource Guarantees
  - Trusted computing base
  - Secure channels (intra/interchip) with ability to suspend and restart during migration

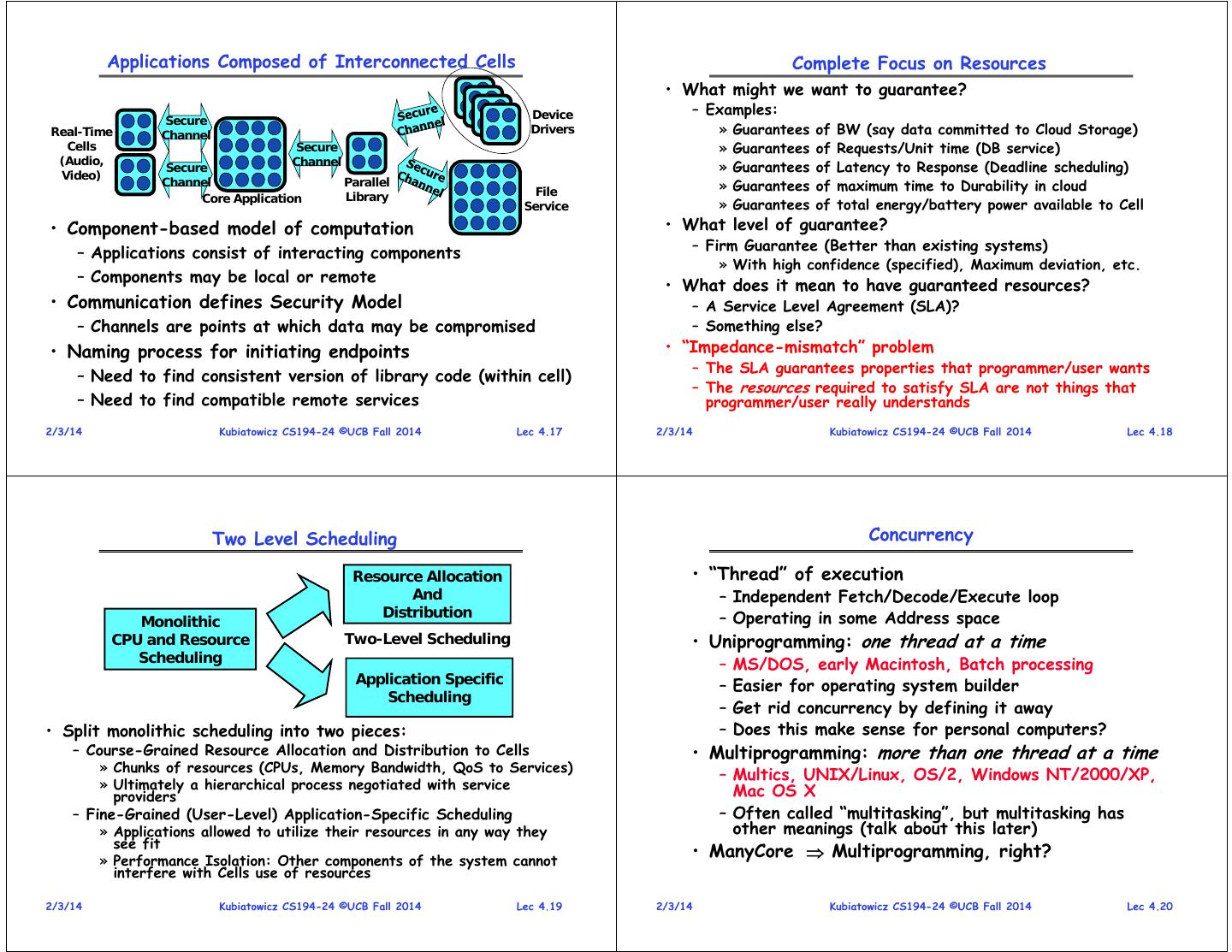

5 . Applications Composed of Interconnected Cells

(Figure: a Core Application cell connected by secure channels to Device Drivers, Real-Time Cells (Audio, Video), a Parallel Library, and a File Service)

- Component-based model of computation
  - Applications consist of interacting components
  - Components may be local or remote
- Communication defines Security Model
  - Channels are points at which data may be compromised
- Naming process for initiating endpoints
  - Need to find consistent version of library code (within cell)
  - Need to find compatible remote services

Complete Focus on Resources

- What might we want to guarantee?
  - Examples:
    - Guarantees of BW (say data committed to Cloud Storage)
    - Guarantees of Requests/Unit time (DB service)
    - Guarantees of Latency to Response (Deadline scheduling)
    - Guarantees of maximum time to Durability in cloud
    - Guarantees of total energy/battery power available to Cell
- What level of guarantee?
  - Firm Guarantee (Better than existing systems)
    - With high confidence (specified), Maximum deviation, etc.
- What does it mean to have guaranteed resources?
  - A Service Level Agreement (SLA)?
  - Something else?
- “Impedance-mismatch” problem
  - The SLA guarantees properties that the programmer/user wants
  - The resources required to satisfy the SLA are not things that the programmer/user really understands

Two Level Scheduling

(Figure: Monolithic CPU and Resource Scheduling split into Resource Allocation and Distribution plus Application-Specific Scheduling, giving Two-Level Scheduling)

- Split monolithic scheduling into two pieces:
  - Coarse-Grained Resource Allocation and Distribution to Cells
    - Chunks of resources (CPUs, Memory Bandwidth, QoS to Services)
    - Ultimately a hierarchical process negotiated with service providers
  - Fine-Grained (User-Level) Application-Specific Scheduling
    - Applications allowed to utilize their resources in any way they see fit
    - Performance Isolation: Other components of the system cannot interfere with Cells’ use of resources

Concurrency

- “Thread” of execution
  - Independent Fetch/Decode/Execute loop
  - Operating in some Address space
- Uniprogramming: one thread at a time
  - MS/DOS, early Macintosh, Batch processing
  - Easier for operating system builder
  - Get rid of concurrency by defining it away
  - Does this make sense for personal computers?
- Multiprogramming: more than one thread at a time
  - Multics, UNIX/Linux, OS/2, Windows NT/2000/XP, Mac OS X
  - Often called “multitasking”, but multitasking has other meanings (talk about this later)
- ManyCore implies Multiprogramming, right?

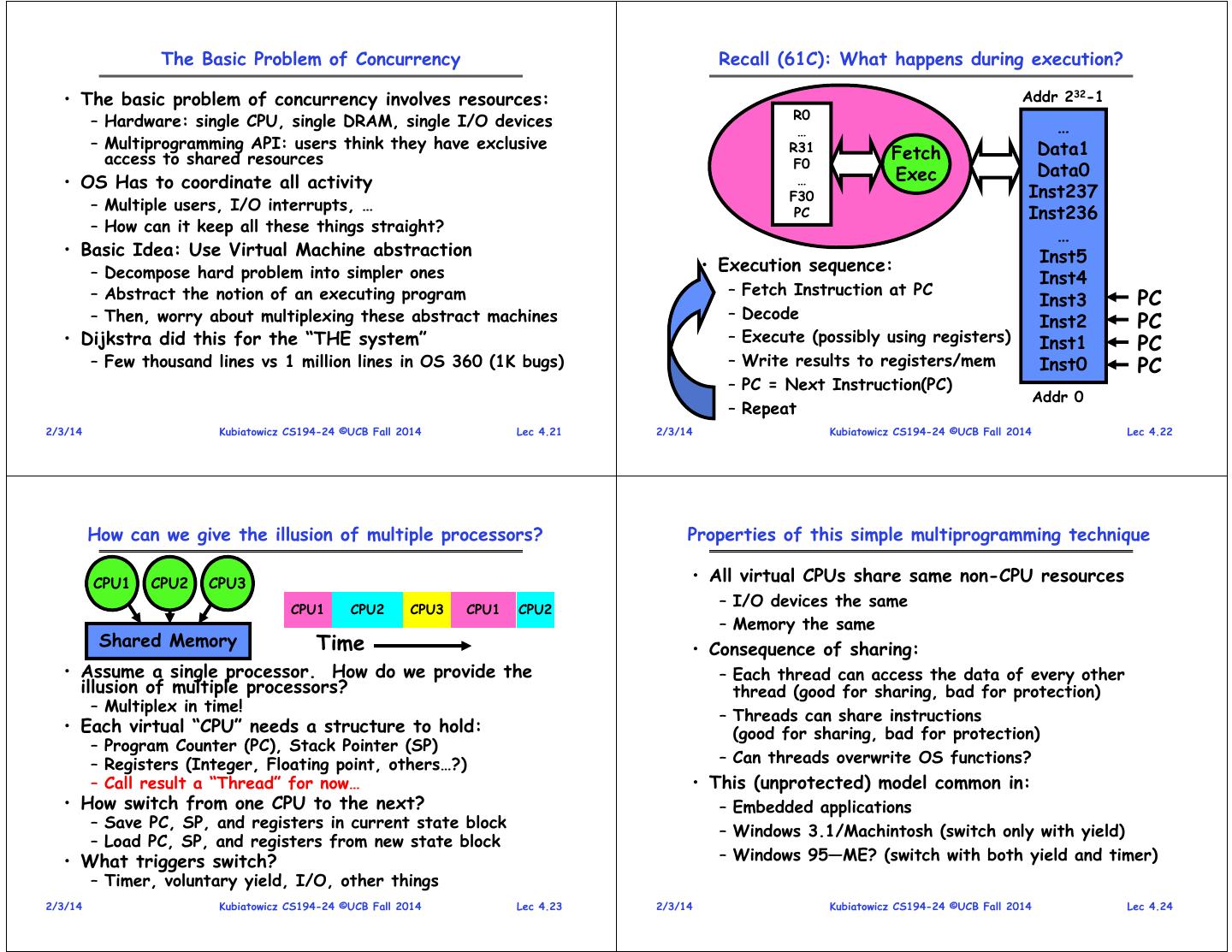

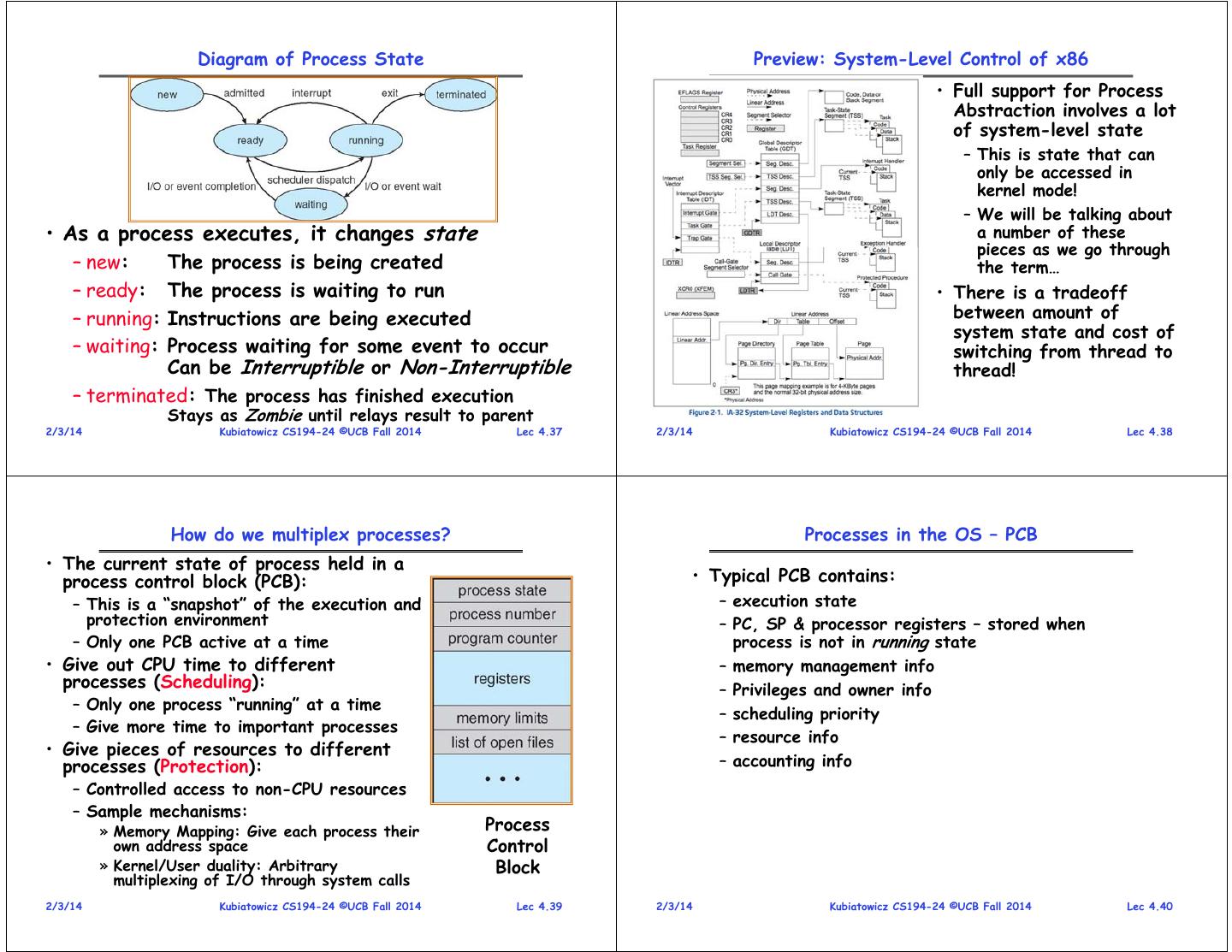

6 . The Basic Problem of Concurrency

- The basic problem of concurrency involves resources:
  - Hardware: single CPU, single DRAM, single I/O devices
  - Multiprogramming API: users think they have exclusive access to shared resources
- OS has to coordinate all activity
  - Multiple users, I/O interrupts, …
  - How can it keep all these things straight?
- Basic Idea: Use Virtual Machine abstraction
  - Decompose hard problem into simpler ones
  - Abstract the notion of an executing program
  - Then, worry about multiplexing these abstract machines
- Dijkstra did this for the “THE system”
  - Few thousand lines vs. 1 million lines in OS 360 (1K bugs)

Recall (61C): What happens during execution?

(Figure: integer registers R0…R31, floating-point registers F0…F30, and the PC; memory from Addr 0 to Addr $2^{32}-1$ holding Inst0…Inst237, Data0, and Data1; Fetch and Exec stages)

- Execution sequence:
  - Fetch Instruction at PC
  - Decode
  - Execute (possibly using registers)
  - Write results to registers/mem
  - PC = Next Instruction(PC)
  - Repeat

How can we give the illusion of multiple processors?

(Figure: virtual CPU1, CPU2, CPU3 over shared memory, multiplexed in time as CPU1, CPU2, CPU3, CPU1, CPU2, …)

- Assume a single processor. How do we provide the illusion of multiple processors?
  - Multiplex in time!
- Each virtual “CPU” needs a structure to hold:
  - Program Counter (PC), Stack Pointer (SP)
  - Registers (Integer, Floating point, others…?)
  - Call the result a “Thread” for now…
- How do we switch from one CPU to the next?
  - Save PC, SP, and registers in current state block
  - Load PC, SP, and registers from new state block
- What triggers a switch?
  - Timer, voluntary yield, I/O, other things

Properties of this simple multiprogramming technique

- All virtual CPUs share the same non-CPU resources
  - I/O devices the same
  - Memory the same
- Consequence of sharing:
  - Each thread can access the data of every other thread (good for sharing, bad for protection)
  - Threads can share instructions (good for sharing, bad for protection)
  - Can threads overwrite OS functions?
- This (unprotected) model is common in:
  - Embedded applications
  - Windows 3.1/Macintosh (switch only with yield)
  - Windows 95 to ME? (switch with both yield and timer)

7 . What needs to be saved in Modern X86?

(Figure: x86-64 register set, showing the 64-bit registers, the traditional 32-bit subset, and the EFLAGS register)

Modern Technique: SMT/Hyperthreading

- Hardware technique
  - Exploit natural properties of superscalar processors to provide illusion of multiple processors
  - Higher utilization of processor resources
- Can schedule each thread as if it were a separate CPU
  - However, not linear speedup!
  - If you have a multiprocessor, should schedule each processor first
- Original technique called “Simultaneous Multithreading”
  - See http://www.cs.washington.edu/research/smt/
  - Alpha, SPARC, Pentium 4 (“Hyperthreading”), Power 5

How to protect threads from one another?

- Need three important things:
  1. Protection of memory
     - No task has access to all memory
  2. Protection of I/O devices
     - No task has access to every device
  3. Protection of Access to Processor: Preemptive switching from task to task
     - Use of timer
     - Must not be possible to disable timer from user code

Recall from CS162: Program’s Address Space

(Figure: Program Address Space)

- Address space: the set of accessible addresses + state associated with them
  - For a 32-bit processor there are $2^{32}$ = 4 billion addresses
- What happens when you read or write to an address?
  - Perhaps Nothing
  - Perhaps acts like regular memory
  - Perhaps ignores writes
  - Perhaps causes I/O operation
    - (Memory-mapped I/O)
  - Perhaps causes exception (fault)

8 . Providing Illusion of Separate Address Space: Load new Translation Map on Switch

(Figure: Prog 1 and Prog 2 each have a virtual address space (Code, Data, Heap, Stack); Translation Map 1 and Translation Map 2 place Code 1, Data 1, Heap 1, Stack 1, Code 2, Data 2, Heap 2, Stack 2, plus OS code, OS data, and OS heap & stacks, into one physical address space)

X86 Memory model with segmentation

(Figure: x86 segmented memory model)

The Six x86 Segment Registers

- CS - Code Segment
- SS - Stack Segment
  - “Stack segments are data segments which must be read/write segments. Loading the SS register with a segment selector for a nonwritable data segment generates a general-protection exception (#GP)”
- DS - Data Segment
- ES/FS/GS - Extra (usually data) segment registers
  - FS and GS used for thread-local storage/by glibc
- The “hidden part” is like a cache so that segment descriptor info doesn’t have to be looked up each time

UNIX Process

- Process: Operating system abstraction to represent what is needed to run a single program
  - Originally: a single, sequential stream of execution in its own address space
  - Modern Process: multiple threads in same address space!
- Two parts:
  - Sequential Program Execution Streams
    - Code executed as one or more sequential streams of execution (threads)
    - Each thread includes its own state of CPU registers
    - Threads either multiplexed in software (OS) or hardware (simultaneous multithreading/hyperthreading)
  - Protected Resources:
    - Main Memory State (contents of Address Space)
    - I/O state (e.g. file descriptors)
- This is a virtual machine abstraction
  - Some might say that the only point of an OS is to support a clean Process abstraction

9 . Modern “Lightweight” Process with Threads

- Thread: a sequential execution stream within a process (sometimes called a “Lightweight process”)
  - Process still contains a single Address Space
  - No protection between threads
- Multithreading: a single program made up of a number of different concurrent activities
  - Sometimes called multitasking, as in Ada…
- Why separate the concept of a thread from that of a process?
  - Discuss the “thread” part of a process (concurrency)
  - Separate from the “address space” (Protection)
  - Heavyweight Process = Process with one thread
- Linux confuses this model a bit:
  - Processes and Threads are “the same”
  - Really means: Threads are managed separately and can share a variety of resources (such as address spaces)
  - Threads related to one another in a fashion similar to Processes with Threads within

Single and Multithreaded Processes

(Figure: single-threaded vs. multithreaded process)

- Threads encapsulate concurrency: “Active” component
- Address spaces encapsulate protection: “Passive” part
  - Keeps buggy program from trashing the system
- Why have multiple threads per address space?

Process comprises

- What is in a process?
  - an address space (usually protected and virtual) mapped into memory
  - the code for the running program
  - the data for the running program
  - an execution stack and stack pointer (SP); also heap
  - the program counter (PC)
  - a set of processor registers: general purpose and status
  - a set of system resources
    - files, network connections, pipes, …
    - privileges, (human) user association, …
    - Personalities (linux)
  - …

Process – starting and ending

- Processes are created …
  - When the system boots
  - By the actions of another process (more later)
  - By the actions of a user
  - By the actions of a batch manager
- Processes terminate …
  - Normally: exit
  - Voluntarily on an error
  - Involuntarily on an error
  - Terminated (killed) by the actions of a user or a process

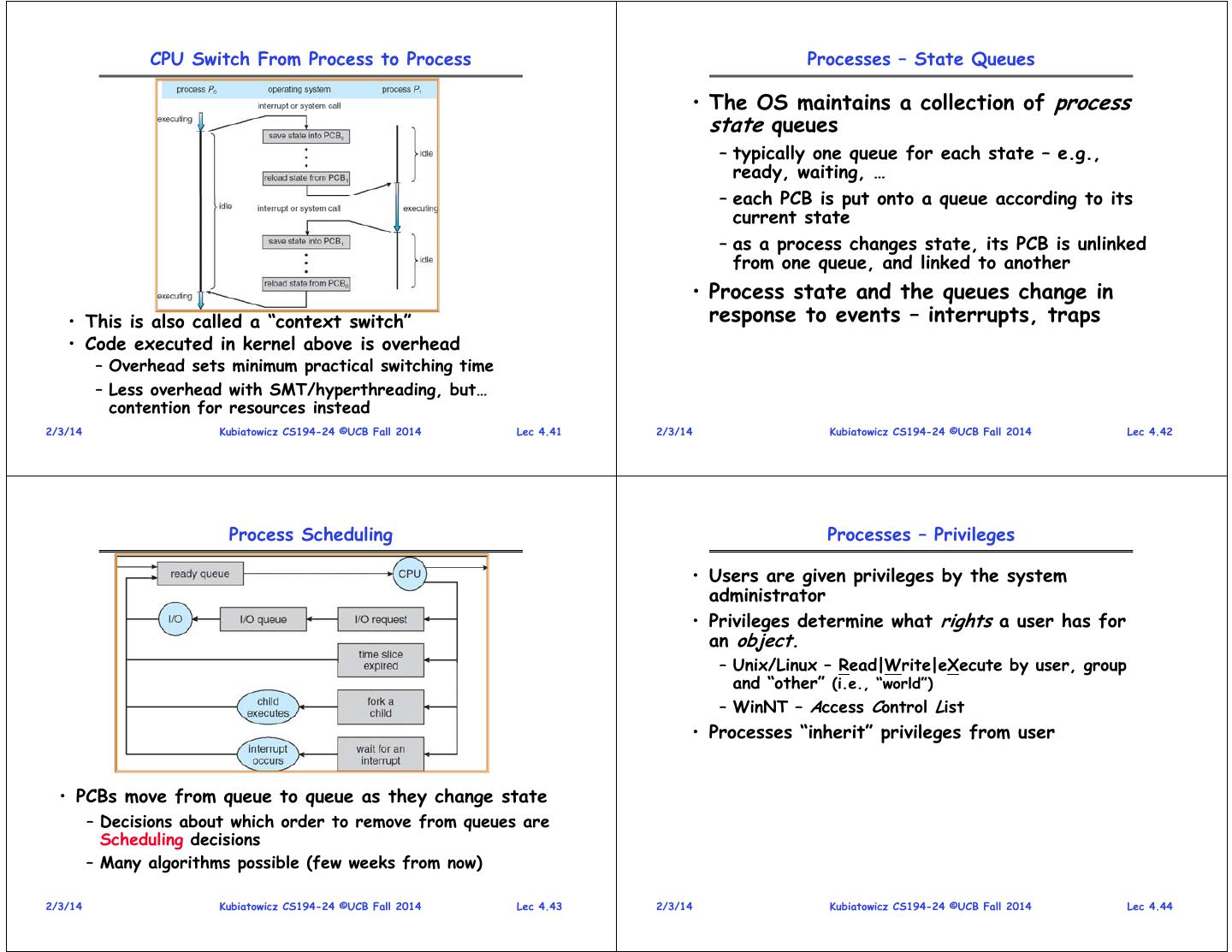

10 . Diagram of Process State

(Figure: process state diagram)

- As a process executes, it changes state
  - new: The process is being created
  - ready: The process is waiting to run
  - running: Instructions are being executed
  - waiting: Process waiting for some event to occur
    - Can be Interruptible or Non-Interruptible
  - terminated: The process has finished execution
    - Stays a zombie until its result is relayed to the parent

Preview: System-Level Control of x86

- Full support for Process Abstraction involves a lot of system-level state
  - This is state that can only be accessed in kernel mode!
  - We will be talking about a number of these pieces as we go through the term…
- There is a tradeoff between the amount of system state and the cost of switching from thread to thread!

How do we multiplex processes?

- The current state of a process is held in a process control block (PCB):
  - This is a “snapshot” of the execution and protection environment
  - Only one PCB active at a time
- Give out CPU time to different processes (Scheduling):
  - Only one process “running” at a time
  - Give more time to important processes
- Give pieces of resources to different processes (Protection):
  - Controlled access to non-CPU resources
  - Sample mechanisms:
    - Memory Mapping: Give each process its own address space
    - Kernel/User duality: Arbitrary multiplexing of I/O through system calls

Processes in the OS – PCB

(Figure: Process Control Block)

- Typical PCB contains:
  - execution state
  - PC, SP & processor registers, stored when the process is not in the running state
  - memory management info
  - Privileges and owner info
  - scheduling priority
  - resource info
  - accounting info

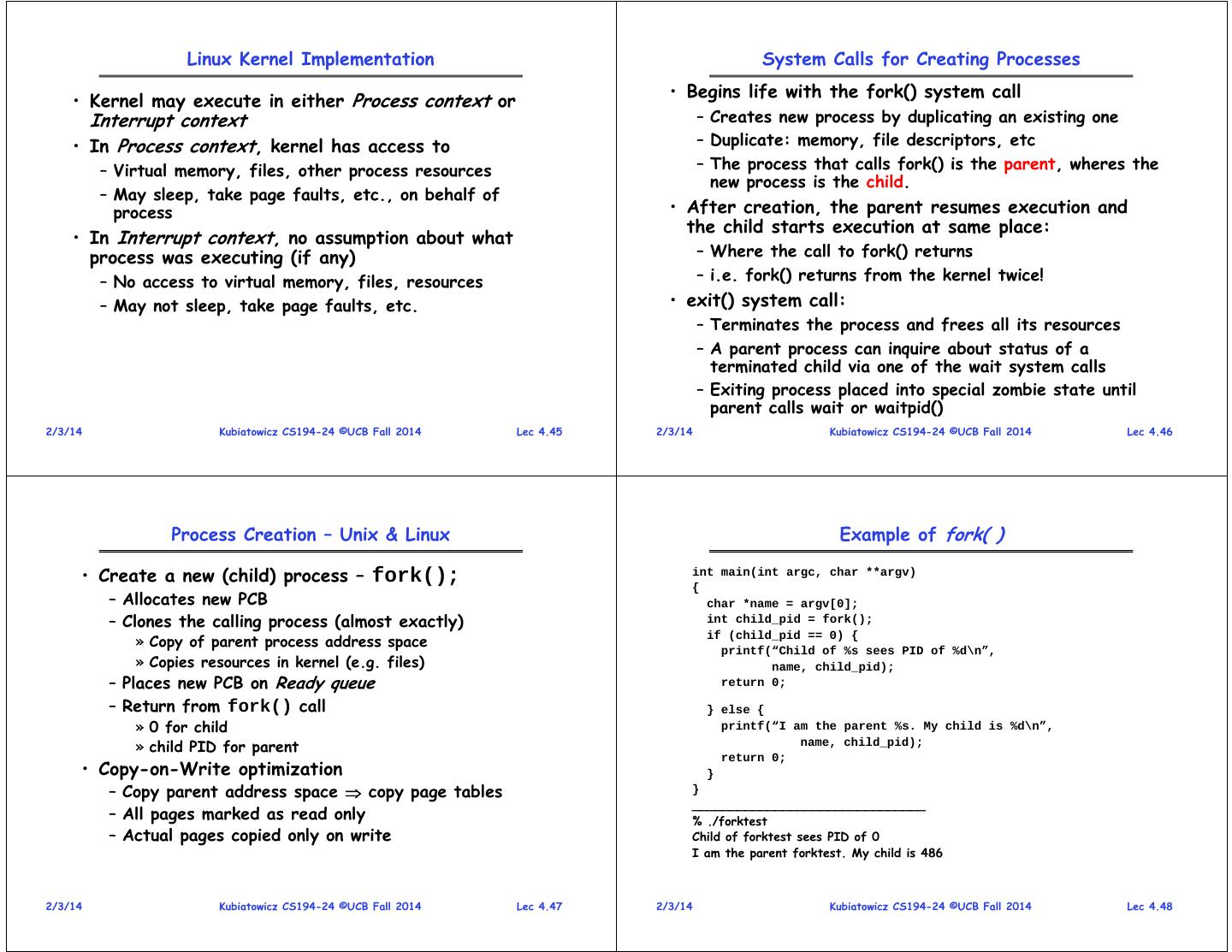

11 . CPU Switch From Process to Process

(Figure: CPU switch from process to process)

- This is also called a “context switch”
- Code executed in kernel above is overhead
  - Overhead sets minimum practical switching time
  - Less overhead with SMT/hyperthreading, but… contention for resources instead

Processes – State Queues

- The OS maintains a collection of process state queues
  - typically one queue for each state, e.g., ready, waiting, …
  - each PCB is put onto a queue according to its current state
  - as a process changes state, its PCB is unlinked from one queue and linked to another
- Process state and the queues change in response to events: interrupts, traps

Process Scheduling

(Figure: PCBs moving between ready and device queues)

- PCBs move from queue to queue as they change state
  - Decisions about the order in which to remove PCBs from queues are Scheduling decisions
  - Many algorithms possible (few weeks from now)

Processes – Privileges

- Users are given privileges by the system administrator
- Privileges determine what rights a user has for an object.
  - Unix/Linux: Read|Write|eXecute by user, group and “other” (i.e., “world”)
  - WinNT: Access Control List
- Processes “inherit” privileges from user

12 . Linux Kernel Implementation

- Kernel may execute in either Process context or Interrupt context
- In Process context, kernel has access to
  - Virtual memory, files, other process resources
  - May sleep, take page faults, etc., on behalf of process
- In Interrupt context, no assumption about what process was executing (if any)
  - No access to virtual memory, files, resources
  - May not sleep, take page faults, etc.

System Calls for Creating Processes

- A process begins life with the fork() system call
  - Creates new process by duplicating an existing one
  - Duplicate: memory, file descriptors, etc.
  - The process that calls fork() is the parent, whereas the new process is the child.
- After creation, the parent resumes execution and the child starts execution at the same place:
  - Where the call to fork() returns
  - i.e. fork() returns from the kernel twice!
- exit() system call:
  - Terminates the process and frees all its resources
  - A parent process can inquire about the status of a terminated child via one of the wait system calls
  - Exiting process placed into special zombie state until parent calls wait() or waitpid()

Process Creation – Unix & Linux

- Create a new (child) process: fork();
  - Allocates new PCB
  - Clones the calling process (almost exactly)
    - Copy of parent process address space
    - Copies resources in kernel (e.g. files)
  - Places new PCB on Ready queue
  - Return from fork() call
    - 0 for child
    - child PID for parent
- Copy-on-Write optimization
  - Copy parent address space by copying page tables
  - All pages marked as read only
  - Actual pages copied only on write

Example of fork( )

```c
#include <stdio.h>
#include <sys/types.h>
#include <sys/wait.h>
#include <unistd.h>

int main(int argc, char **argv)
{
    (void)argc;
    char *name = argv[0];
    pid_t child_pid = fork();

    if (child_pid < 0) {                 /* fork failed: no child was created */
        perror("fork");
        return 1;
    }
    if (child_pid == 0) {                /* child: fork() returned 0 */
        printf("Child of %s sees PID of %d\n", name, (int)child_pid);
        return 0;
    } else {                             /* parent: fork() returned the child's PID */
        printf("I am the parent %s. My child is %d\n", name, (int)child_pid);
        waitpid(child_pid, NULL, 0);     /* reap the child so it does not stay a zombie */
        return 0;
    }
}
```

```
% ./forktest
Child of ./forktest sees PID of 0
I am the parent ./forktest. My child is 486
```

(The order of the two output lines depends on which process the scheduler runs first.)

13 . Summary

- Many organizations for Operating Systems
  - All oriented around Resources
  - Common organizations: Monolithic, MicroKernel, ExoKernel
- Processes have two parts
  - Threads (Concurrency)
  - Address Spaces (Protection)
- Concurrency accomplished by multiplexing CPU Time:
  - Unloading current thread (PC, registers)
  - Loading new thread (PC, registers)
  - Such context switching may be voluntary (yield(), I/O operations) or involuntary (timer, other interrupts)
- Protection accomplished by restricting access:
  - Memory mapping isolates processes from each other
  - Dual-mode for isolating I/O, other resources