
Networks (Con’t) Security


CS194-24 Advanced Operating Systems Structures and Implementation
Lecture 24: Networks (Con’t), Security
April 30th, 2014
Prof. John Kubiatowicz
http://inst.eecs.berkeley.edu/~cs194-24

Goals for Today

- Security mechanisms
  - Mandatory Access Control
  - Data Centric Access Control
  - Distributed Decision Making
  - Trusted Computing Hardware

Interactive is important! Ask Questions!

Note: Some slides and/or pictures in the following are adapted from slides ©2013

4/30/14 Kubiatowicz CS194-24 ©UCB Fall 2014 Lec 24.2

Recall: Protection vs Security

- Protection: use of one or more mechanisms for controlling the access of programs, processes, or users to resources
  - Page Table Mechanism
  - File Access Mechanism
  - On-disk encryption
- Security: use of protection mechanisms to prevent misuse of resources
  - Misuse defined with respect to policy
    - E.g.: prevent exposure of certain sensitive information
    - E.g.: prevent unauthorized modification/deletion of data
  - Requires consideration of the external environment within which the system operates
    - Even the most well-constructed system cannot protect information if a user accidentally reveals a password
- Three Pieces to Security
  - Authentication: who the user actually is
  - Authorization: who is allowed to do what
  - Enforcement: make sure people do only what they are supposed to do

Recall: Authorization: Who Can Do What?

- How do we decide who is authorized to do actions in the system?
- Access Control Matrix: contains all permissions in the system
  - Resources across top
    - Files, Devices, etc…
  - Domains down the side (one row per domain)
    - A domain might be a user or a group of permissions
    - E.g., in the pictured matrix: domain D3 can read F2 and execute F3
  - In practice, table would be huge and sparse!
- Two approaches to implementation
  - Access Control Lists: store permissions with each object
    - Still might be lots of users!
    - UNIX limits each file to: r, w, x for owner, group, world
    - More recent systems allow definition of groups of users and permissions for each group
  - Capability List: each process tracks the objects it has permission to touch
    - Popular in the past, idea out of favor today
    - Consider page table: each process has a list of pages it has access to, rather than each page having a list of processes…
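Here is a minimal Python sketch of the two implementation approaches. It uses a toy matrix: only domain D3's entries (read F2, execute F3) come from the slide, and the others are made up for illustration. An ACL is one column of the sparse matrix stored with its object. A capability list is one row stored with its domain.

```python
from collections import defaultdict

# Sparse access control matrix: (domain, object) -> set of rights.
# Only D3's entries come from the slide; the others are hypothetical.
MATRIX = {
    ("D1", "F1"): {"read"},
    ("D2", "printer"): {"print"},
    ("D3", "F2"): {"read"},
    ("D3", "F3"): {"execute"},
}


def acl_view(matrix):
    """Column view: each object stores {domain: rights}, i.e. an access control list."""
    acls = defaultdict(dict)
    for (domain, obj), rights in matrix.items():
        acls[obj][domain] = set(rights)
    return dict(acls)


def capability_view(matrix):
    """Row view: each domain stores {object: rights}, i.e. a capability list."""
    caps = defaultdict(dict)
    for (domain, obj), rights in matrix.items():
        caps[domain][obj] = set(rights)
    return dict(caps)


def allowed(matrix, domain, obj, right):
    """An empty cell of the sparse matrix means 'no access'."""
    return right in matrix.get((domain, obj), set())


if __name__ == "__main__":
    print(acl_view(MATRIX)["F3"])         # {'D3': {'execute'}}
    print(capability_view(MATRIX)["D3"])  # {'F2': {'read'}, 'F3': {'execute'}}
```

Both views carry the same information. The trade-off is where the checking cost lands: ACLs make "who can touch this object?" cheap, while capability lists make "what can this process touch?" cheap.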

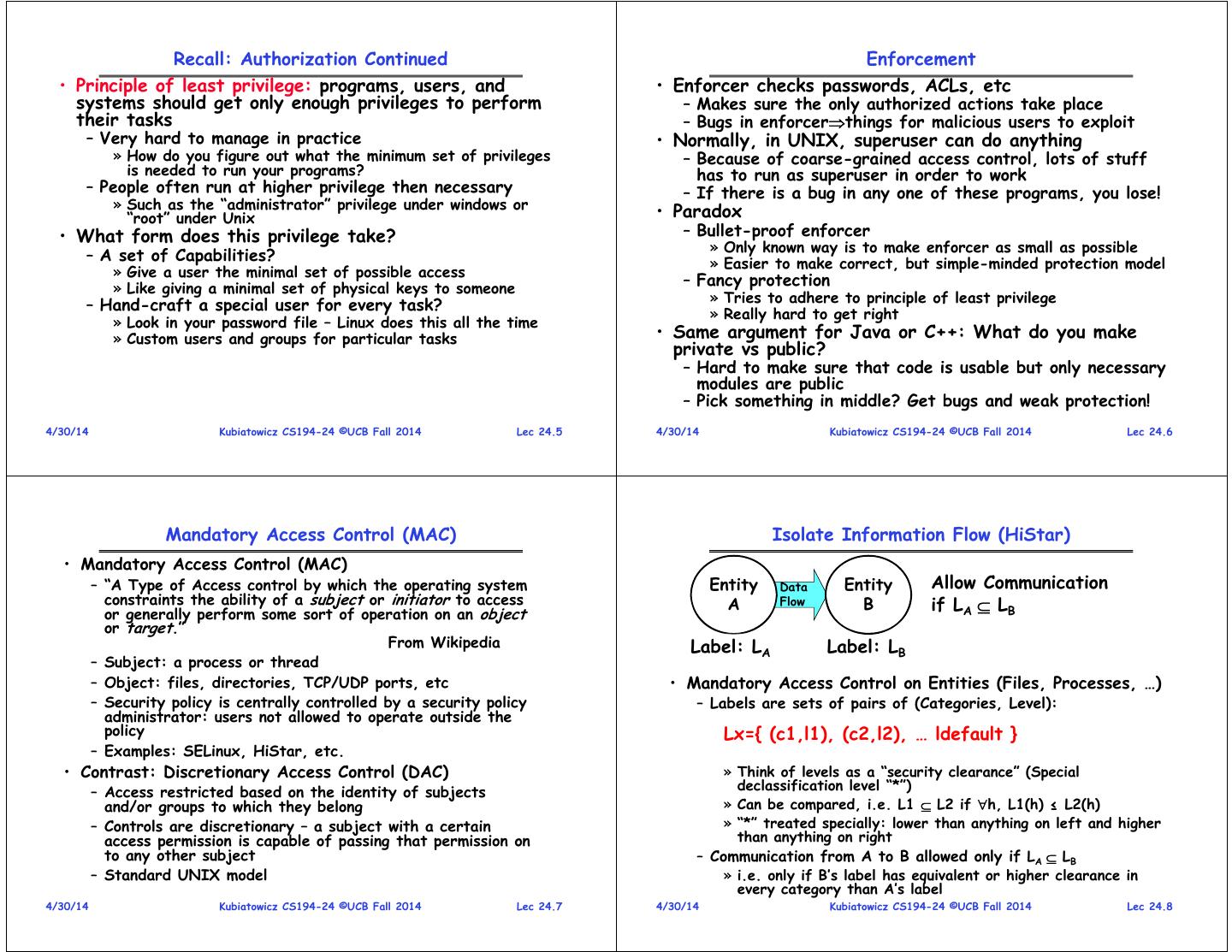

Recall: Authorization Continued

- Principle of least privilege: programs, users, and systems should get only enough privileges to perform their tasks
  - Very hard to manage in practice
    - How do you figure out the minimum set of privileges needed to run your programs?
  - People often run at higher privilege than necessary
    - Such as the "administrator" privilege under Windows or "root" under Unix
- What form does this privilege take?
  - A set of Capabilities?
    - Give a user the minimal set of possible access
    - Like giving a minimal set of physical keys to someone
  - Hand-craft a special user for every task?
    - Look in your password file: Linux does this all the time
    - Custom users and groups for particular tasks

Enforcement

- Enforcer checks passwords, ACLs, etc.
  - Makes sure only authorized actions take place
  - Bugs in enforcer → things for malicious users to exploit
- Normally, in UNIX, superuser can do anything
  - Because of coarse-grained access control, lots of stuff has to run as superuser in order to work
  - If there is a bug in any one of these programs, you lose!
- Paradox
  - Bullet-proof enforcer
    - Only known way is to make enforcer as small as possible
    - Easier to make correct, but simple-minded protection model
  - Fancy protection
    - Tries to adhere to principle of least privilege
    - Really hard to get right
- Same argument for Java or C++: What do you make private vs. public?
  - Hard to make sure that code is usable but only necessary modules are public
  - Pick something in middle? Get bugs and weak protection!
Mandatory Access Control (MAC)

- Mandatory Access Control (MAC)
  - "A type of access control by which the operating system constrains the ability of a subject or initiator to access or generally perform some sort of operation on an object or target." (From Wikipedia)
  - Subject: a process or thread
  - Object: files, directories, TCP/UDP ports, etc.
  - Security policy is centrally controlled by a security policy administrator: users are not allowed to operate outside the policy
  - Examples: SELinux, HiStar, etc.
- Contrast: Discretionary Access Control (DAC)
  - Access restricted based on the identity of subjects and/or groups to which they belong
  - Controls are discretionary: a subject with a certain access permission is capable of passing that permission on to any other subject
  - Standard UNIX model

Isolate Information Flow (HiStar)

(Figure: data flows from Entity A, with label $L_A$, to Entity B, with label $L_B$; communication is allowed if $L_A \sqsubseteq L_B$.)

- Mandatory Access Control on Entities (Files, Processes, …)
  - Labels are sets of (Category, Level) pairs: $L_x = \{(c_1, l_1), (c_2, l_2), \ldots, l_{default}\}$
    - Think of levels as a "security clearance" (special declassification level "*")
    - Labels can be compared: $L_1 \sqsubseteq L_2$ if $\forall h,\ L_1(h) \le L_2(h)$
    - "*" treated specially: lower than anything on the left of $\sqsubseteq$ and higher than anything on the right
  - Communication from A to B allowed only if $L_A \sqsubseteq L_B$
    - i.e. only if B's label has equivalent or higher clearance than A's label in every category
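Here is a simplified Python model of the label comparison. It assumes integer levels plus the special "*" level and ignores the rest of HiStar's machinery. The example labels come from the virus-scanner slide that follows. The scanner's full label {br3, v3, 1} is an assumption: the slide only says it runs with taint v3 and must be able to read Bob's files.

```python
from dataclasses import dataclass, field

STAR = "*"  # special ownership/declassification level


@dataclass
class Label:
    """HiStar-style label: explicit (category -> level) pairs plus a default level."""
    default: int
    levels: dict = field(default_factory=dict)

    def level(self, category):
        return self.levels.get(category, self.default)


def _as_left(level):
    # On the left of the comparison, "*" is lower than every level.
    return float("-inf") if level == STAR else level


def _as_right(level):
    # On the right of the comparison, "*" is higher than every level.
    return float("inf") if level == STAR else level


def can_flow(la, lb):
    """Data may flow from A to B only if LA ⊑ LB: LA(c) <= LB(c) in every category."""
    categories = set(la.levels) | set(lb.levels)
    if not all(_as_left(la.level(c)) <= _as_right(lb.level(c)) for c in categories):
        return False
    # Categories named in neither label fall back to the two default levels.
    return _as_left(la.default) <= _as_right(lb.default)


# Labels from the virus-scanner example (scanner label is assumed, see above).
bob_files = Label(1, {"br": 3, "bw": 0})
bob_shell = Label(1, {"br": STAR, "bw": STAR})
scanner = Label(1, {"br": 3, "v": 3})
tmp_dir = Label(1, {"br": 3, "v": 3})

if __name__ == "__main__":
    print(can_flow(bob_files, scanner))  # True: scanner may read Bob's files
    print(can_flow(scanner, bob_files))  # False: scanner may not write them
    print(can_flow(scanner, tmp_dir))    # True: temp dir shares the scanner's taint
    print(can_flow(scanner, bob_shell))  # False: v3 cannot flow to a v1 entity
```

The last check shows the point of the design. Even Bob's own shell cannot receive data tainted v3. Only an entity owning v (level "*") can declassify it.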

HiStar Virus Scanner Example

- Bob's files marked as {br3, bw0, 1}
- User login for Bob creates process {br*, bw*, 1}
  - Launches wrapper program, which allocates category v
- Wrapper launches scanner with taint v3
  - Temp directory marked {br3, v3, 1}
  - Scanner cannot write Bob's files, since they are less tainted in category v (level 1) than the scanner is (level 3)
  - Scanner can read from Virus DB, but cannot write to anything except through the wrapper program (which decides how to declassify information tagged with v)

SELinux: Security-Enhanced Linux

- SELinux: a Linux feature that provides the mechanisms for access control policies, including MAC
  - A set of kernel modifications and user-space tools added to various Linux distributions
  - Separates enforcement of security decisions from policy
  - Integrated into mainline Linux kernel since version 2.6
- Originally started by the Information Assurance Research Group of the NSA, working with Secure Computing Corporation
- Security labels on subjects and objects
  - Subjects such as processes have labels which are tuples such as (user:role:domain)
  - Usually many real users share the same SELinux user (e.g. "user_u", running in the "user_t" domain)
  - Objects such as files, network ports, and hardware are also labeled with tuples such as (user:role:type)
- Policy
  - A set of rules specifies which operations can be performed by an entity with a given label on an entity with a given label
  - The policy also specifies which domain transitions can occur

SELinux Domain-type Enforcement

- Each object is labeled by a type
  - Object semantics
  - Example:
    - /etc/shadow: etc_t
    - /etc/rc.d/init.d/httpd: httpd_script_exec_t
- Objects are grouped by object security classes
  - Such as files, sockets, IPC channels, capabilities
  - The security class determines what operations can be performed on the object
- Each subject (process) is associated with a domain
  - E.g., httpd_t, sshd_t, sendmail_t

(Slide adapted from CS426 Fall 2010, Lecture 28)

Example

- Execute the command `ls -Z /usr/bin/passwd`
  - This will produce the output: `-r-s--x--x root root system_u:object_r:passwd_exec_t /usr/bin/passwd`
  - Using this information, we can then create rules that permit a domain transition.
- Three rules are required to let the user transition into the passwd_t domain by running the password program:
  - `allow user_t passwd_exec_t : file {getattr execute};`
    - Lets user_t perform an execve() system call on passwd_exec_t
  - `allow passwd_t passwd_exec_t : file entrypoint;`
    - Provides entrypoint access to the passwd_t domain; entrypoint defines which executable files can "enter" a domain.
  - `allow user_t passwd_t : process transition;`
    - The original type (user_t) must have transition permission to the new type (passwd_t) for the domain transition to be allowed.
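To make the three rules concrete, here is a toy Python model. It is not the SELinux toolchain or policy language. Allow rules are stored as (source type, target type, class, permission) tuples, and anything not listed is denied. The exec-driven transition from user_t into passwd_t is permitted only when all three rules are present.

```python
# Hypothetical in-memory model of SELinux type-enforcement "allow" rules.
# Anything not listed is denied, mirroring SELinux's default-deny behavior.
POLICY = frozenset({
    ("user_t", "passwd_exec_t", "file", "getattr"),
    ("user_t", "passwd_exec_t", "file", "execute"),
    ("passwd_t", "passwd_exec_t", "file", "entrypoint"),
    ("user_t", "passwd_t", "process", "transition"),
})


def allowed(source, target, obj_class, perm, policy=POLICY):
    """Single access check: is there an allow rule for exactly this tuple?"""
    return (source, target, obj_class, perm) in policy


def can_exec_into(source, exec_type, new_domain, policy=POLICY):
    """True if `source` may exec a file of `exec_type` and end up in `new_domain`."""
    return (
        allowed(source, exec_type, "file", "execute", policy)          # rule 1
        and allowed(new_domain, exec_type, "file", "entrypoint", policy)  # rule 2
        and allowed(source, new_domain, "process", "transition", policy)  # rule 3
    )


if __name__ == "__main__":
    print(can_exec_into("user_t", "passwd_exec_t", "passwd_t"))  # True
    print(allowed("user_t", "etc_t", "file", "write"))           # False (no rule)
```

Real reference policies usually also add a `type_transition user_t passwd_exec_t : process passwd_t;` rule, so that the transition happens automatically on execve(). This sketch does not model that.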

Limitations of the Type Enforcement Model

- Type enforcement that reasons only about programs, without tracking information flow, cannot protect against certain attacks
  - Consider for example: httpd -> shell -> load kernel module
- Policies loadable with package-specific modules
  - Only with root shells derived from console or other well defined paths
  - Special language and compilation process to build "binary" policies
    - Graphical user interfaces to construct policies
- Note that SELinux results in very large policies
  - Hundreds of thousands of rules for Linux
  - Difficult to understand
  - Often people turn off SELinux in frustration
    - I'm not going to tell you how

Administrivia

- Final: Tuesday May 13th
  - 310 Soda Hall
  - 11:30-2:30
  - Bring calculator, 2 pages of hand-written notes
- Review Session
  - Sunday 5/11
  - 4-6PM, 405 Soda Hall
- Don't forget final Lecture during RRR
  - Next Monday. Send me final topics!
  - I don't really have a lot of topics yet!
  - Right now I could talk about:
    - Quantum Computing (Someone actually asked)
    - Mobile Operating Systems (iOS/Android)
    - Talk about Swarm Lab

Data Centric Access Control (DCAC?)

- Problem with many current models:
  - If you break into the OS → data is compromised
  - In reality, it is the data that matters; hardware is somewhat irrelevant (and ubiquitous)
- Data-Centric Access Control (DCAC)
  - I just made this term up, but you get the idea
  - Protect data at all costs, assume that software might be compromised
  - Requires encryption and sandboxing techniques
  - If hardware (or virtual machine) has the right cryptographic keys, then data is released
- All of the previous authorization and enforcement mechanisms reduce to key distribution and protection
  - Never let decrypted data or keys outside sandbox
  - Examples: Use of TPM, virtual machine mechanisms

Recall: Authentication in Distributed Systems

(Figure: a password, "PASS: gina", crossing the network.)

- What if identity must be established across network?
  - Need way to prevent exposure of information while still proving identity to remote system
  - Many of the original UNIX tools sent passwords over the wire "in clear text"
    - E.g.: telnet, ftp, yp (yellow pages, for distributed login)
    - Result: Snooping programs widespread
- What do we need? Cannot rely on physical security!
  - Encryption: Privacy, restrict receivers
  - Authentication: Remote Authenticity, restrict senders
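One standard way to prove identity without sending the password "in clear text" is challenge-response with a shared secret. The Python sketch below uses HMAC-SHA256 from the standard library. It is a swapped-in illustration, not something the slide prescribes. It provides authentication only: the messages themselves are not encrypted.

```python
import hashlib
import hmac
import secrets


def make_challenge() -> bytes:
    """Server side: a fresh random nonce, so an old response cannot be replayed."""
    return secrets.token_bytes(16)


def respond(secret: bytes, challenge: bytes) -> bytes:
    """Client side: prove knowledge of the secret without ever sending it."""
    return hmac.new(secret, challenge, hashlib.sha256).digest()


def verify(secret: bytes, challenge: bytes, response: bytes) -> bool:
    """Server side: recompute the expected response and compare in constant time."""
    expected = hmac.new(secret, challenge, hashlib.sha256).digest()
    return hmac.compare_digest(expected, response)


if __name__ == "__main__":
    shared = b"gina"  # the password from the slide figure; real systems use a stronger key
    nonce = make_challenge()
    reply = respond(shared, nonce)   # only the nonce and this reply cross the network
    print(verify(shared, nonce, reply))  # True
```

A snooper who records the nonce and reply learns neither the password nor a response that will work for the next (different) nonce. The server still has to store the shared secret, which is one reason the public-key approaches that follow are attractive.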

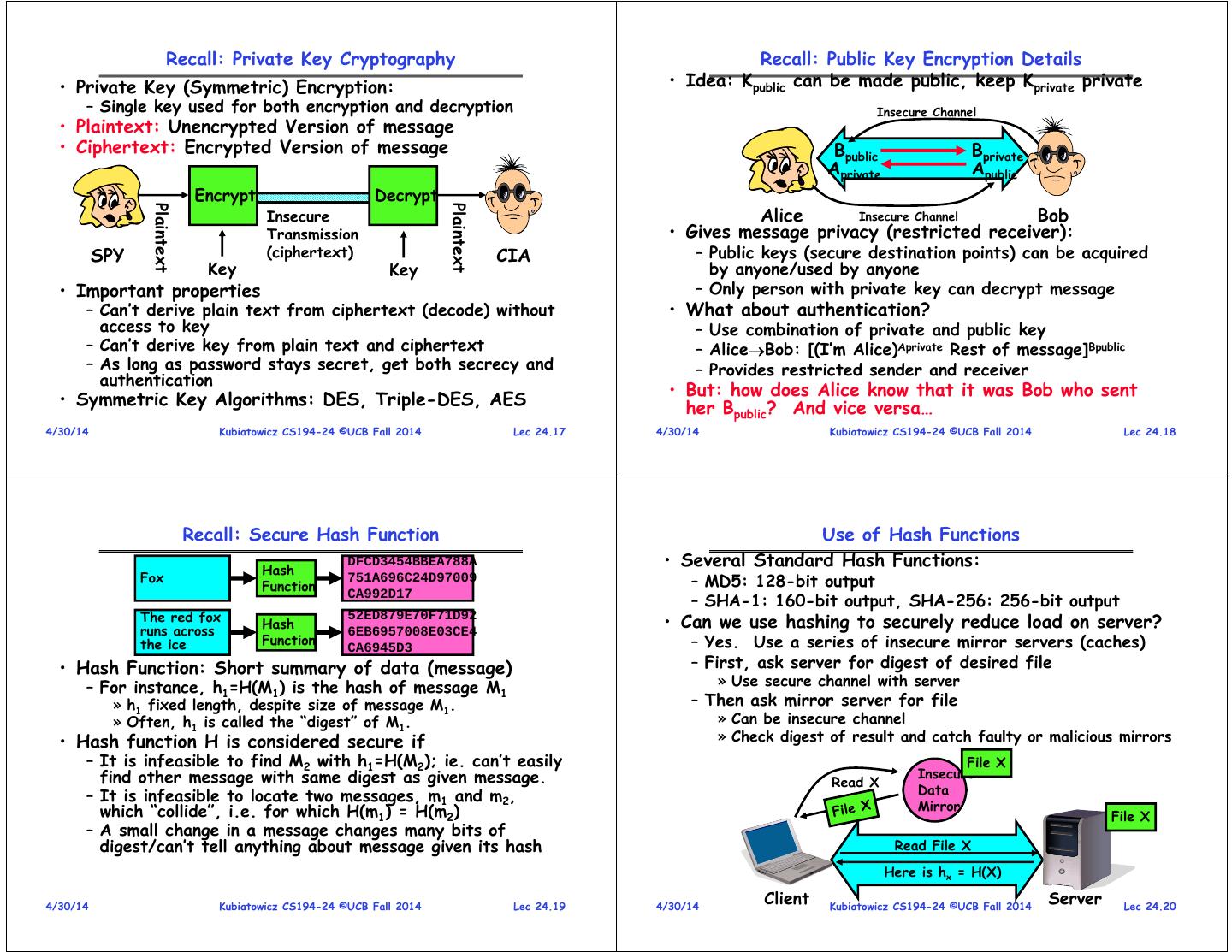

Recall: Private Key Cryptography

- Private Key (Symmetric) Encryption:
  - Single key used for both encryption and decryption
- Plaintext: Unencrypted version of message
- Ciphertext: Encrypted version of message

(Figure: plaintext is encrypted with a key and crosses an insecure transmission channel as ciphertext, visible to a spy. The CIA decrypts it with the same key.)

- Important properties
  - Can't derive plaintext from ciphertext (decode) without access to key
  - Can't derive key from plaintext and ciphertext
  - As long as the key stays secret, get both secrecy and authentication
- Symmetric Key Algorithms: DES, Triple-DES, AES

Recall: Public Key Encryption Details

- Idea: $K_{public}$ can be made public, keep $K_{private}$ private

(Figure: Alice encrypts with $B_{public}$ and Bob decrypts with $B_{private}$ across an insecure channel. Alice holds $A_{private}$ and $A_{public}$.)

- Gives message privacy (restricted receiver):
  - Public keys (secure destination points) can be acquired by anyone/used by anyone
  - Only person with private key can decrypt message
- What about authentication?
  - Use combination of private and public key
  - Alice → Bob: $[(\text{I'm Alice})_{A_{private}}\ \text{Rest of message}]_{B_{public}}$
  - Provides restricted sender and receiver
- But: how does Alice know that it was Bob who sent her $B_{public}$? And vice versa…

Recall: Secure Hash Function

(Figure: a hash function maps "Fox" to DFCD3454BBEA788A751A696C24D97009CA992D17 and "The red fox runs across the ice" to 52ED879E70F71D926EB6957008E03CE4CA6945D3.)

- Hash Function: Short summary of data (message)
  - For instance, $h_1 = H(M_1)$ is the hash of message $M_1$
    - $h_1$ is fixed length, regardless of the size of message $M_1$
    - Often, $h_1$ is called the "digest" of $M_1$
- Hash function H is considered secure if
  - It is infeasible to find $M_2$ with $h_1 = H(M_2)$; i.e., can't easily find another message with the same digest as a given message
  - It is infeasible to locate two messages, $m_1$ and $m_2$, which "collide", i.e. for which $H(m_1) = H(m_2)$
  - A small change in a message changes many bits of the digest; can't tell anything about the message given its hash

Use of Hash Functions

- Several Standard Hash Functions:
  - MD5: 128-bit output
  - SHA-1: 160-bit output, SHA-256: 256-bit output
- Can we use hashing to securely reduce load on server?
  - Yes. Use a series of insecure mirror servers (caches)
  - First, ask server for digest of desired file
    - Use secure channel with server
  - Then ask mirror server for file
    - Can be insecure channel
    - Check digest of result and catch faulty or malicious mirrors

(Figure: the client sends "Read X" to the server and receives "Here is hx = H(X)". It then sends "Read File X" to a mirror over an insecure channel and receives File X's data.)
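The mirror scheme amounts to one comparison on the client. Here is a minimal Python sketch that assumes SHA-256 as the hash. Each mirror is modelled as a hypothetical callable returning file bytes, so there is no real network code.

```python
import hashlib


def digest(data: bytes) -> str:
    """Hex SHA-256 digest h = H(data)."""
    return hashlib.sha256(data).hexdigest()


def fetch_verified(name, trusted_digest, mirrors):
    """Fetch `name` from untrusted mirrors, accepting the first copy whose digest
    matches `trusted_digest` (obtained from the real server over a secure channel)."""
    for fetch in mirrors:
        data = fetch(name)
        if digest(data) == trusted_digest:
            return data
        # Mismatch: this mirror is faulty or malicious; try the next one.
    raise ValueError(f"no mirror returned a valid copy of {name!r}")


# Hypothetical file contents and mirrors, for illustration only.
ORIGINAL = b"contents of file X"
hx = digest(ORIGINAL)  # "Here is hx = H(X)" from the server


def malicious_mirror(name):
    return b"contents of file X, plus malware"


def honest_mirror(name):
    return ORIGINAL


if __name__ == "__main__":
    data = fetch_verified("X", hx, [malicious_mirror, honest_mirror])
    print(data == ORIGINAL)  # True: the tampered copy was rejected
```

The server only ever sends a 32-byte digest, while the bulk transfer comes from mirrors that need not be trusted.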

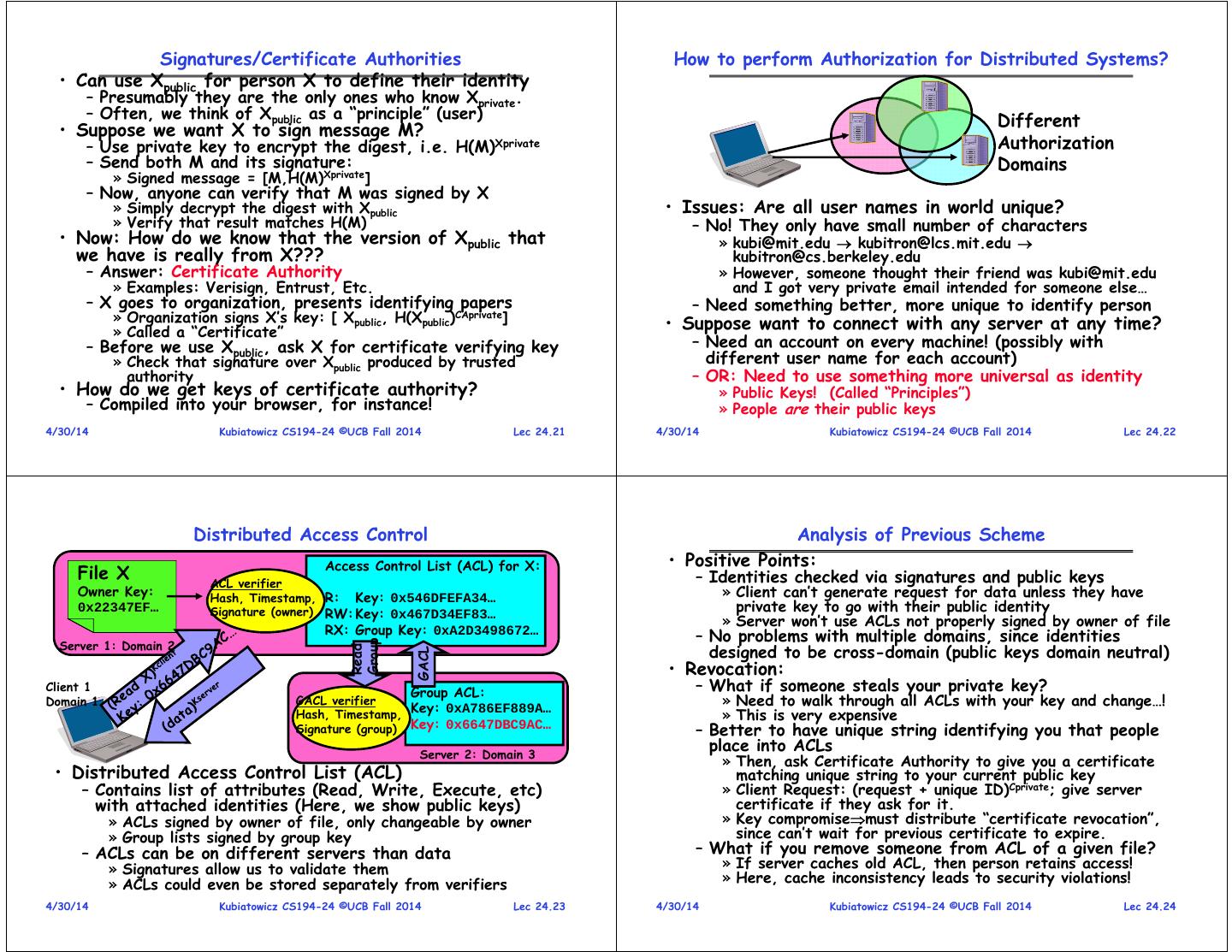

Signatures/Certificate Authorities

- Can use $X_{public}$ for person X to define their identity
  - Presumably they are the only ones who know $X_{private}$
  - Often, we think of $X_{public}$ as a "principal" (user)
- Suppose we want X to sign message M?
  - Use private key to encrypt the digest, i.e. $H(M)_{X_{private}}$
  - Send both M and its signature:
    - Signed message $= [M, H(M)_{X_{private}}]$
  - Now, anyone can verify that M was signed by X
    - Simply decrypt the digest with $X_{public}$
    - Verify that result matches $H(M)$
- Now: How do we know that the version of $X_{public}$ that we have is really from X???
  - Answer: Certificate Authority
    - Examples: Verisign, Entrust, etc.
  - X goes to organization, presents identifying papers
    - Organization signs X's key: $[X_{public}, H(X_{public})_{CA_{private}}]$
    - Called a "Certificate"
  - Before we use $X_{public}$, ask X for certificate verifying key
    - Check that signature over $X_{public}$ produced by trusted authority
- How do we get keys of certificate authority?
  - Compiled into your browser, for instance!

How to perform Authorization for Distributed Systems?

(Figure: Different Authorization Domains)

- Issues: Are all user names in world unique?
  - No! They only have small number of characters
    - E.g., kubi@mit.edu, kubitron@lcs.mit.edu, kubitron@cs.berkeley.edu
    - However, someone thought their friend was kubi@mit.edu and I got very private email intended for someone else…
  - Need something better, more unique to identify person
- Suppose want to connect with any server at any time?
  - Need an account on every machine! (possibly with different user name for each account)
  - OR: Need to use something more universal as identity
    - Public Keys! (Called "Principals")
    - People are their public keys

Distributed Access Control

(Figure: Client 1 in Domain 1 sends a Read request for File X to Server 1 in Domain 2. File X's ACL lists Owner Key: 0x22347EF…, R: Key: 0x546DFEFA34…, RW: Key: 0x467D34EF83…, RX: Group Key: 0xA2D3498672…, and is protected by an ACL verifier (Hash, Timestamp, Signature (owner)). The Group ACL on Server 2 in Domain 3 lists Key: 0xA786EF889A… and Key: 0x6647DBC9AC…, protected by a GACL verifier (Hash, Timestamp, Signature (group)).)

- Distributed Access Control List (ACL)
  - Contains list of attributes (Read, Write, Execute, etc.) with attached identities (here, we show public keys)
    - ACLs signed by owner of file, only changeable by owner
    - Group lists signed by group key
  - ACLs can be on different servers than data
    - Signatures allow us to validate them
    - ACLs could even be stored separately from verifiers

Analysis of Previous Scheme

- Positive Points:
  - Identities checked via signatures and public keys
    - Client can't generate request for data unless they have the private key to go with their public identity
    - Server won't use ACLs not properly signed by owner of file
  - No problems with multiple domains, since identities are designed to be cross-domain (public keys are domain neutral)
- Revocation:
  - What if someone steals your private key?
    - Need to walk through all ACLs with your key and change…!
    - This is very expensive
  - Better to have unique string identifying you that people place into ACLs
    - Then, ask Certificate Authority to give you a certificate matching unique string to your current public key
    - Client Request: $(\text{request} + \text{unique ID})_{C_{private}}$; give server certificate if they ask for it.
    - Key compromise → must distribute "certificate revocation", since can't wait for previous certificate to expire.
  - What if you remove someone from ACL of a given file?
    - If server caches old ACL, then person retains access!
    - Here, cache inconsistency leads to security violations!
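Here is a toy Python sketch (Python 3.8+) of the signature and certificate formats from the Signatures/Certificate Authorities slide. It uses textbook RSA built from well-known Mersenne primes so that it runs deterministically. It is deliberately insecure (public primes, no padding, digest reduced mod n). It only shows the structure of $[M, H(M)_{X_{private}}]$ and $[X_{public}, H(X_{public})_{CA_{private}}]$. Real systems use padded schemes such as RSA-PSS from a vetted library.

```python
import hashlib

E = 65537  # common public exponent


def make_keypair(p, q):
    """Textbook RSA from two known primes. TOY ONLY: no padding, public primes."""
    n = p * q
    phi = (p - 1) * (q - 1)
    d = pow(E, -1, phi)  # private exponent; requires gcd(E, phi) == 1
    return (n, E), (n, d)  # (public key, private key)


def _h(message: bytes, n: int) -> int:
    """Digest H(M), reduced mod n so it fits the toy modulus."""
    return int.from_bytes(hashlib.sha256(message).digest(), "big") % n


def sign(private_key, message: bytes) -> int:
    """'Encrypt the digest with the private key.'"""
    n, d = private_key
    return pow(_h(message, n), d, n)


def verify(public_key, message: bytes, signature: int) -> bool:
    """'Decrypt the digest with the public key' and compare with H(M)."""
    n, e = public_key
    return pow(signature, e, n) == _h(message, n)


def key_bytes(public_key) -> bytes:
    """Serialize a public key so a CA can sign it."""
    n, e = public_key
    return f"{n}:{e}".encode()


# Mersenne primes keep the example deterministic; real keys use large random primes.
X_PUB, X_PRIV = make_keypair(2**61 - 1, 2**89 - 1)
CA_PUB, CA_PRIV = make_keypair(2**107 - 1, 2**127 - 1)

msg = b"Pay Bob $10"
signed = (msg, sign(X_PRIV, msg))                                  # [M, H(M)Xprivate]
certificate = (key_bytes(X_PUB), sign(CA_PRIV, key_bytes(X_PUB)))  # [Xpublic, H(Xpublic)CAprivate]

if __name__ == "__main__":
    print(verify(CA_PUB, *certificate))  # True: CA vouches for X's key
    print(verify(X_PUB, *signed))        # True: message really came from X
```

The verifier checks the certificate with a CA key it already trusts (e.g. one compiled into the browser). Only then does it use $X_{public}$ to check X's signature.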

Analysis Continued

- Who signs the data?
  - Or: How does client know they are getting valid data?
  - Signed by server?
    - What if server compromised? Should client trust server?
  - Signed by owner of file?
    - Better, but now only owner can update file!
    - Pretty inconvenient!
  - Signed by group of servers that accepted latest update?
    - If must have signatures from all servers → safe, but one bad server can prevent update from happening
    - Instead: ask for a threshold number of signatures
    - Byzantine agreement can help here
- How do you know that data is up-to-date?
  - Valid signature only means data is a valid older version
  - Freshness attack:
    - Malicious server returns old data instead of recent data
    - Problem with both ACLs and data
    - E.g.: you just got a raise, but enemy breaks into a server and prevents payroll from seeing latest version of update
  - Hard problem
    - Needs to be fixed by invalidating old copies or having a trusted group of servers (Byzantine Agreement?)

Distributed Decision Making

- Why is distributed decision making desirable?
  - Fault Tolerance!
  - Group of machines comes to decision even if one or more fail
    - Simple failure mode called "failstop" (is this realistic?)
  - After decision made, result recorded in multiple places
- Two-Phase Commit protocol does this
  - Stable log on each machine tracks whether commit has happened
    - If a machine crashes, when it wakes up it first checks its log to recover state of world at time of crash
  - Prepare Phase:
    - The global coordinator requests that all participants promise to commit or roll back the transaction
    - Participants record promise in log, then acknowledge
    - If anyone votes to abort, coordinator writes "Abort" in its log and tells everyone to abort; each records "Abort" in log
  - Commit Phase:
    - After all participants respond that they are prepared, the coordinator writes "Commit" to its log
    - Then asks all nodes to commit; they respond with ack
    - After receiving acks, coordinator writes "Got Commit" to log
  - Log helps ensure all machines either commit or don't commit

Distributed Decision Making Discussion (Con't)

- Undesirable feature of Two-Phase Commit: Blocking
  - One machine can be stalled until another site recovers:
    - Site B writes "prepared to commit" record to its log, sends a "yes" vote to the coordinator (site A) and crashes
    - Site A crashes
    - Site B wakes up, checks its log, and realizes that it has voted "yes" on the update. It sends a message to site A asking what happened. At this point, B cannot decide to abort, because update may have committed
    - B is blocked until A comes back
  - A blocked site holds resources (locks on updated items, pages pinned in memory, etc.) until it learns fate of update
- Alternative: There are alternatives such as "Three Phase Commit" which don't have this blocking problem
- What happens if one or more of the nodes is malicious?
  - Malicious: attempting to compromise the decision making

Byzantine General's Problem

(Figure: a General and three Lieutenants exchanging conflicting "Attack!" and "Retreat!" messages; one participant is marked "Malicious!")

- Byzantine General's Problem (n players):
  - One General
  - n-1 Lieutenants
  - Some number of these (f) can be insane or malicious
- The commanding general must send an order to his n-1 lieutenants such that:
  - IC1: All loyal lieutenants obey the same order
  - IC2: If the commanding general is loyal, then all loyal lieutenants obey the order he sends
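The two-phase commit steps from the Distributed Decision Making slide can be sketched in Python as a single-process simulation. Lists stand in for the stable logs. The `will_commit` flag is a hypothetical knob for forcing an abort vote. Crashes, timeouts and recovery are not modelled, so the blocking problem discussed above does not show up here.

```python
from dataclasses import dataclass, field


@dataclass
class Participant:
    name: str
    will_commit: bool = True                 # hypothetical knob: this site's vote
    log: list = field(default_factory=list)  # stands in for the stable log

    def prepare(self) -> bool:
        """Prepare phase: record the promise (or the abort) in the log, then vote."""
        if self.will_commit:
            self.log.append("Prepared")
            return True
        self.log.append("Abort")
        return False

    def finish(self, decision: str) -> bool:
        """Record the coordinator's decision; returning True acts as the ack."""
        if self.log[-1] != decision:
            self.log.append(decision)
        return True


def two_phase_commit(coordinator_log: list, participants) -> str:
    # Prepare phase: collect every participant's vote.
    votes = [p.prepare() for p in participants]
    decision = "Commit" if all(votes) else "Abort"
    # The outcome is decided once it is written to the coordinator's log.
    coordinator_log.append(decision)
    # Commit (or abort) phase: tell everyone and collect acks.
    acks = [p.finish(decision) for p in participants]
    if decision == "Commit" and all(acks):
        coordinator_log.append("Got Commit")
    return decision


if __name__ == "__main__":
    log = []
    print(two_phase_commit(log, [Participant("A"), Participant("B")]), log)
    # Commit ['Commit', 'Got Commit']
```

The key invariant is that the coordinator's log entry, not any participant's, fixes the outcome. That is why a participant that voted "yes" must wait to hear from the coordinator before it can finish.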

Byzantine General's Problem (con't)

- Impossibility Results:
  - Cannot solve Byzantine General's Problem with n=3 because one malicious player can mess things up

(Figure: with n = 3, a loyal Lieutenant cannot distinguish two cases. In one, a loyal General orders "Attack!" while the other Lieutenant falsely relays "Retreat!". In the other, a malicious General sends "Attack!" to one Lieutenant and "Retreat!" to the other.)

  - With f faults, need n > 3f to solve problem
- Various algorithms exist to solve problem
  - Original algorithm has #messages exponential in n
  - Newer algorithms have message complexity $O(n^2)$
    - One from MIT, for instance (Castro and Liskov, 1999)
- Use of BFT (Byzantine Fault Tolerance) algorithm
  - Allow multiple machines to make a coordinated decision even if some subset of them (< n/3) are malicious

(Figure: a request enters a replicated group that produces a distributed decision.)

Trusted Computing

- Problem: Can't trust that software is correct
  - Viruses/Worms install themselves into kernel or system without users' knowledge
  - Rootkit: software tools to conceal running processes, files or system data, which helps an intruder maintain access to a system without the user's knowledge
  - How do you know that software won't leak private information or further compromise user's access?
- A solution: What if there were a secure way to validate all software running on system?
  - Idea: Compute a cryptographic hash of BIOS, Kernel, crucial programs, etc.
  - Then, if hashes don't match, know have problem
- Further extension:
  - Secure attestation: ability to prove to a remote party that local machine is running correct software
  - Reason: allow remote user to avoid interacting with compromised system
- Challenge: How to do this in an unhackable way
  - Must have hardware components somewhere

TCPA: Trusted Computing Platform Alliance

- Idea: Add a Trusted Platform Module (TPM)
- Founded in 1999: Compaq, HP, IBM, Intel, Microsoft
- Currently more than 200 members
- Changes to platform
  - Extra: Trusted Platform Module (TPM)
  - Software changes: BIOS + OS
- Main properties
  - Secure bootstrap
  - Platform attestation
  - Protected storage
- Microsoft version:
  - Palladium
  - Not quite same: More extensive hardware/software system

(Photo: ATMEL TPM Chip (Used in IBM equipment))

Trusted Platform Module

(Figure: TPM block diagram. Functional units: random number generator, SHA-1 hash, HMAC, RSA encrypt/decrypt, RSA key generation. Non-volatile memory: Endorsement Key (2048 bits), Storage Root Key (2048 bits), Owner Auth Secret (160 bits). Volatile memory: RSA Key Slot-0 … Slot-9, PCR-0 … PCR-15, key handles, auth session handles.)

- Cryptographic operations
  - Hashing: SHA-1, HMAC
  - Random number generator
  - Asymmetric key generation: RSA (512, 1024, 2048)
  - Asymmetric encryption/decryption: RSA
  - Symmetric encryption/decryption: DES, 3DES (AES)
- Tamper resistant (hash and key) storage
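The n > 3f bound from the Byzantine slides above is simple arithmetic, sketched below in Python. The 2f+1 quorum size is an assumption borrowed from PBFT-style protocols such as Castro and Liskov's. It is not stated on the slides.

```python
def max_faults(n: int) -> int:
    """Largest f with n > 3f: how many Byzantine nodes n replicas can tolerate."""
    return (n - 1) // 3


def replicas_needed(f: int) -> int:
    """Smallest n satisfying n > 3f."""
    return 3 * f + 1


def quorum_size(n: int) -> int:
    """2f + 1 matching replies (assumed PBFT-style quorum rule)."""
    return 2 * max_faults(n) + 1


if __name__ == "__main__":
    for n in (3, 4, 7, 10):
        print(n, max_faults(n), quorum_size(n))
    # 3 0 1   <- n = 3 cannot tolerate even one malicious node
    # 4 1 3
    # 7 2 5
    # 10 3 7
```

Why 2f+1: with n = 3f+1 replicas, any two quorums of 2f+1 overlap in at least 2(2f+1) - (3f+1) = f+1 replicas. At most f of those can be malicious, so any two quorums always share at least one non-malicious replica.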

TCPA: PCR Reporting Value

(Figure: the present PCR value is concatenated with the new measured value and hashed to produce the extended value.)

- Platform Configuration Registers (PCR0-PCR15)
  - Reset at boot time to well defined value
  - Only thing that software can do is give new measured value to TPM
    - TPM takes new value, concatenates with old value, then hashes result together for new PCR
- Measuring involves hashing components of software
- Integrity reporting: report the value of the PCR
  - Challenge-response protocol: the Challenger sends a nonce to the Trusted Platform Agent, and the TPM returns $\text{Sign}_{ID}(\text{nonce}, \text{PCR}, \text{log})$ together with $C_{ID}$

TCPA: Secure bootstrap

(Figure: the boot chain runs from the BIOS boot block (root of trust in integrity measurement) through the BIOS, OS loader, OS and applications, along with option ROMs, memory, network and new OS components. Each stage is measured before it runs. Values are stored in and reported by the TPM (root of trust in integrity reporting), and the measuring steps are logged.)

Implications of TPM Philosophy?

- Could have great benefits
  - Prevent use of malicious software
  - Parts of OceanStore would benefit
- What does "trusted computing" really mean?
  - You are forced to trust hardware to be correct!
  - Could also mean that user is not trusted to install their own software
- Many in the security community have talked about potential abuses
  - These are only theoretical, but very possible
  - Software fixing
    - What if companies prevent user from accessing their websites with non-Microsoft browser?
    - Possible to encrypt data and only decrypt if software still matches → could prevent display of .doc files except on Microsoft versions of software
  - Digital Rights Management (DRM):
    - Prevent playing of music/video except on accepted players
    - Selling of CDs that only play 3 times?

Summary

- Mandatory Access Control (MAC)
  - Separate access policy from use
  - Examples: HiStar, SELinux
- Distributed identity
  - Use cryptography (Public Key, Signed by PKI)
- Distributed storage example
  - Revocation: How to remove permissions from someone?
  - Integrity: How to know whether data is valid
  - Freshness: How to know whether data is recent
- Byzantine General's Problem: distributed decision making with malicious failures
  - One general, n-1 lieutenants: some number of them may be malicious (often "f" of them)
  - All non-malicious lieutenants must come to same decision
  - If general not malicious, lieutenants must follow general
  - Only solvable if $n \geq 3f+1$
- Trusted Hardware
  - A secure layer of hardware that can:
    - Generate proofs about software running on the machine
    - Allow secure access to information without revealing keys to (potentially) compromised layers of software
  - Canonical example: TPM
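Here is a Python sketch of PCR extension and the challenge-response quote from the PCR Reporting slide. It assumes TPM 1.x-style SHA-1 PCRs reset to all zeros. The TPM's signature is replaced by an HMAC under a hypothetical key. That is a simplification: a real TPM signs quotes with an asymmetric identity key, so the challenger never holds the secret. The component names are made up.

```python
import hashlib
import hmac
import secrets

PCR_RESET = bytes(20)  # at boot, a PCR is reset to a well-defined value (20 zero bytes)


def measure(component: bytes) -> bytes:
    """Measuring = hashing a software component before it runs."""
    return hashlib.sha1(component).digest()


def extend(pcr: bytes, measurement: bytes) -> bytes:
    """Extend: new PCR = SHA-1(old PCR || new measured value)."""
    return hashlib.sha1(pcr + measurement).digest()


def boot(components):
    """Measure each boot stage in order; return the final PCR and the measurement log."""
    pcr, log = PCR_RESET, []
    for component in components:
        m = measure(component)
        log.append(m)
        pcr = extend(pcr, m)
    return pcr, log


# Hypothetical stand-in for the TPM's signing key (a real TPM uses an asymmetric key).
TPM_KEY = secrets.token_bytes(32)


def quote(nonce: bytes, pcr: bytes, log) -> bytes:
    """Stand-in for Sign_ID(nonce, PCR, log): an HMAC over the three values."""
    return hmac.new(TPM_KEY, nonce + pcr + b"".join(log), hashlib.sha1).digest()


def challenger_accepts(nonce, pcr, log, signature, expected_pcr) -> bool:
    """Check the quote covers this nonce, the log replays to the PCR,
    and the PCR matches a known-good configuration."""
    if not hmac.compare_digest(signature, quote(nonce, pcr, log)):
        return False
    replayed = PCR_RESET
    for m in log:
        replayed = extend(replayed, m)
    return replayed == pcr and pcr == expected_pcr


GOOD = [b"BIOS boot block", b"BIOS", b"OS loader", b"OS kernel"]
good_pcr, _ = boot(GOOD)  # value a challenger would record for a known-good machine

if __name__ == "__main__":
    pcr, log = boot(GOOD)
    nonce = secrets.token_bytes(16)
    print(challenger_accepts(nonce, pcr, log, quote(nonce, pcr, log), good_pcr))  # True
```

Because each extend hashes in the previous value, software cannot set a PCR directly or undo a measurement. A modified kernel, or even the same components booted in a different order, yields a different final PCR. The fresh nonce stops a compromised machine from replaying an old, good quote.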