Dominant Resource Fairness Two-Level Scheduling

1 .

CS194-24 Advanced Operating Systems Structures and Implementation
Lecture 18: Dominant Resource Fairness, Two-Level Scheduling
April 7th, 2014
Prof. John Kubiatowicz
http://inst.eecs.berkeley.edu/~cs194-24
4/7/14 Kubiatowicz CS194-24 ©UCB Fall 2014 Lec 18.2

Goals for Today
• DRF
• Lithe
• Two-Level Scheduling/Tessellation
Interactive is important! Ask Questions!
Note: Some slides and/or pictures in the following are adapted from slides ©2013

Recall: CFS (Continued)
• Idea: track amount of “virtual time” received by each process when it is executing
  – Take real execution time, scale by weighting factor
    » Lower priority: real time divided by smaller weight
  – Keep virtual time advancing at same rate among processes. Thus, scaling factor adjusts amount of CPU time/process
• More details
  – Weights relative to Nice-value 0
    » Relative weights ~ $(1.25)^{-\text{nice}}$
    » vruntime = runtime/relative_weight
  – Processes with nice-value 0
    » vruntime advances at same rate as real time
  – Processes with higher nice value (lower priority)
    » vruntime advances at faster rate than real time
  – Processes with lower nice value (higher priority)
    » vruntime advances at slower rate than real time

Recall: EDF: Schedulability Test
Theorem (Utilization-based Schedulability Test): A task set $T_1, T_2, \ldots, T_n$ with $D_i = P_i$ is schedulable by the earliest deadline first (EDF) scheduling algorithm if

$\sum_{i=1}^{n} \frac{C_i}{D_i} \le 1$

Exact schedulability test (necessary + sufficient)
Proof: [Liu and Layland, 1973]
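
The two recalled formulas can be checked with a few lines of Python. This is a minimal sketch, not the Linux implementation: the function names are made up for illustration, the weight uses the slide's approximation of 1.25 to the power of minus nice, and tasks are assumed to be given as integer (C_i, D_i) pairs with D_i = P_i.

```python
from fractions import Fraction


def relative_weight(nice: int) -> float:
    """Approximate weight relative to nice 0, using the slide's 1.25 ** -nice."""
    return 1.25 ** (-nice)


def vruntime_advance(runtime: float, nice: int) -> float:
    """Virtual time charged for `runtime` units of real execution."""
    return runtime / relative_weight(nice)


def edf_schedulable(tasks) -> bool:
    """Utilization test for EDF when each deadline equals its period.

    `tasks` is an iterable of (C_i, D_i) integer pairs (hypothetical input format).
    """
    utilization = sum(Fraction(c, d) for c, d in tasks)
    return utilization <= 1
```

With these definitions a nice-0 process is charged virtual time at the real-time rate, a positive nice value is charged faster, and a task set such as (1, 4), (2, 8), (1, 2) sits exactly at utilization 1 and passes the test.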

2 .

Recall: Constant Bandwidth Server
• Intuition: give fixed share of CPU to certain jobs
  – Good for tasks with probabilistic resource requirements
• Basic approach: Slots (called “servers”) scheduled with EDF, rather than jobs
  – CBS Server defined by two parameters: Qs and Ts
  – Mechanism for tracking processor usage so that no more than Qs CPU seconds used every Ts seconds when there is demand. Otherwise get to use processor as you like
• Since using EDF, can mix hard-realtime and soft realtime

What is Fair Sharing?
• n users want to share a resource (e.g., CPU)
  – Solution: Allocate each 1/n of the shared resource
  [Figure: CPU split 33% / 33% / 33%]
• Generalized by max-min fairness
  – Handles if a user wants less than its fair share
  – E.g. user 1 wants no more than 20%
  [Figure: CPU split 20% / 40% / 40%]
• Generalized by weighted max-min fairness
  – Give weights to users according to importance
  – User 1 gets weight 1, user 2 weight 2
  [Figure: CPU split 33% / 66%]

Why is Fair Sharing Useful?
• Weighted Fair Sharing / Proportional Shares
  – User 1 gets weight 2, user 2 weight 1
  [Figure: CPU split 66% / 33%]
• Priorities
  – Give user 1 weight 1000, user 2 weight 1
• Reservations
  – Ensure user 1 gets 10% of a resource
  – Give user 1 weight 10, sum weights ≤ 100
  [Figure: CPU split 10% / 50% / 40%]
• Isolation Policy
  – Users cannot affect others beyond their fair share

Properties of Max-Min Fairness
• Share guarantee
  – Each user can get at least 1/n of the resource
  – But will get less if her demand is less
• Strategy-proof
  – Users are not better off by asking for more than they need
  – Users have no reason to lie
• Max-min fairness is the only “reasonable” mechanism with these two properties
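
The (weighted) max-min examples above can be reproduced with a progressive-filling loop. The sketch below is one common way to compute such an allocation and is not taken from the lecture; the function name `max_min_fair` and its arguments are illustrative, and demands are assumed to be stated in the same units as the capacity.

```python
def max_min_fair(capacity, demands, weights=None):
    """Weighted max-min fair split of one divisible resource.

    Users whose demand fits inside their weighted share are capped at their
    demand; what they leave over is re-split among the remaining users.
    """
    n = len(demands)
    weights = list(weights) if weights is not None else [1.0] * n
    alloc = [0.0] * n
    active = set(range(n))          # users not yet fully satisfied
    remaining = float(capacity)
    while active:
        total_w = sum(weights[i] for i in active)
        share = {i: remaining * weights[i] / total_w for i in active}
        satisfied = {i for i in active if demands[i] <= share[i]}
        if not satisfied:
            # Everyone left wants more than their share: hand out the shares.
            for i in active:
                alloc[i] = share[i]
            break
        for i in satisfied:
            alloc[i] = float(demands[i])
            remaining -= demands[i]
        active -= satisfied
    return alloc
```

For example, `max_min_fair(100, [20, 100, 100])` gives the 20 / 40 / 40 split from the slide, and passing `weights=[10, 50, 40]` with saturating demands reproduces the reservation example where user 1 is held to 10%.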

3 .

Why Care about Fairness?
• Desirable properties of max-min fairness
  – Isolation policy: A user gets her fair share irrespective of the demands of other users
  – Flexibility separates mechanism from policy: Proportional sharing, priority, reservation,...
• Many schedulers use max-min fairness
  – Datacenters: Hadoop’s fair sched, capacity, Quincy
  – OS: rr, prop sharing, lottery, linux cfs, ...
  – Networking: wfq, wf2q, sfq, drr, csfq, ...

When is Max-Min Fairness not Enough?
• Need to schedule multiple, heterogeneous resources
  – Example: Task scheduling in datacenters
    » Tasks consume more than just CPU – CPU, memory, disk, and I/O
• What are today’s datacenter task demands?

Heterogeneous Resource Demands
[Figure: per-task CPU vs. memory demand, 2000-node Hadoop Cluster at Facebook (Oct 2010). Some tasks are CPU-intensive; some tasks are memory-intensive; most tasks need ~ <2 CPU, 2 GB RAM>]

Problem
Single resource example
  – 1 resource: CPU
  – User 1 wants <1 CPU> per task
  – User 2 wants <3 CPU> per task
  [Figure: CPU split 50% / 50%]
Multi-resource example
  – 2 resources: CPUs & memory
  – User 1 wants <1 CPU, 4 GB> per task
  – User 2 wants <3 CPU, 1 GB> per task
  – What is a fair allocation?
  [Figure: CPU and mem bars with unknown split]

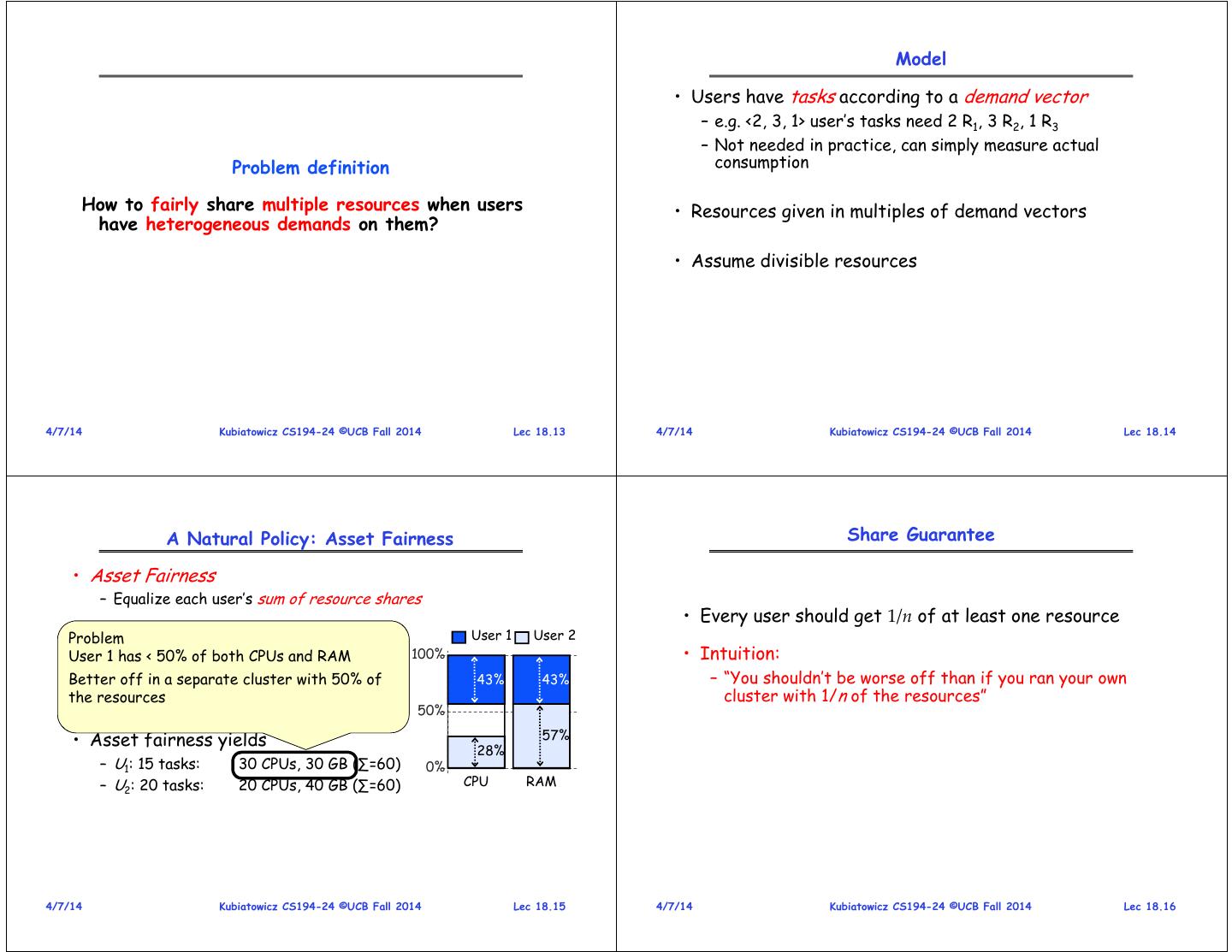

4 .

Problem definition
How to fairly share multiple resources when users have heterogeneous demands on them?

Model
• Users have tasks according to a demand vector
  – e.g. <2, 3, 1> user’s tasks need 2 R1, 3 R2, 1 R3
  – Not needed in practice, can simply measure actual consumption
• Resources given in multiples of demand vectors
• Assume divisible resources

A Natural Policy: Asset Fairness
• Asset Fairness
  – Equalize each user’s sum of resource shares
• Cluster with 70 CPUs, 70 GB RAM
  – U1 needs <2 CPU, 2 GB RAM> per task
  – U2 needs <1 CPU, 2 GB RAM> per task
• Asset fairness yields
  – U1: 15 tasks: 30 CPUs, 30 GB (∑=60)
  – U2: 20 tasks: 20 CPUs, 40 GB (∑=60)
[Figure: CPU split 43% (User 1) / 28% (User 2); RAM split 43% (User 1) / 57% (User 2)]
Problem
  – User 1 has < 50% of both CPUs and RAM
  – Better off in a separate cluster with 50% of the resources

Share Guarantee
• Every user should get 1/n of at least one resource
• Intuition:
  – “You shouldn’t be worse off than if you ran your own cluster with 1/n of the resources”
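
The asset-fairness numbers on the slide (15 and 20 tasks) can be rederived directly. The sketch below assumes divisible resources, unbounded demand, integer inputs, and that every user needs a positive amount of at least one resource; `asset_fair_tasks` is an illustrative name, not something from the lecture.

```python
from fractions import Fraction


def asset_fair_tasks(capacity, demands):
    """Task counts that equalize each user's summed resource shares.

    capacity: total amount of each resource, e.g. [70, 70]
    demands:  per-task demand vector of each user, e.g. [[2, 2], [1, 2]]
    """
    m = len(capacity)
    # Asset cost of one task: the sum of the resource shares it consumes.
    cost = [sum(Fraction(d, c) for d, c in zip(dem, capacity)) for dem in demands]
    # If every user holds asset share s, user i runs s / cost[i] tasks.
    # Resource j is then used at a rate of per_s[j] units per unit of s.
    per_s = [sum(Fraction(dem[j]) / cost[i] for i, dem in enumerate(demands))
             for j in range(m)]
    # Raise s until the first resource saturates.
    s = min(Fraction(capacity[j]) / per_s[j] for j in range(m) if per_s[j] > 0)
    return [s / c for c in cost]
```

Calling `asset_fair_tasks([70, 70], [[2, 2], [1, 2]])` returns 15 and 20 tasks, with RAM as the saturated resource, which matches the allocation shown above.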

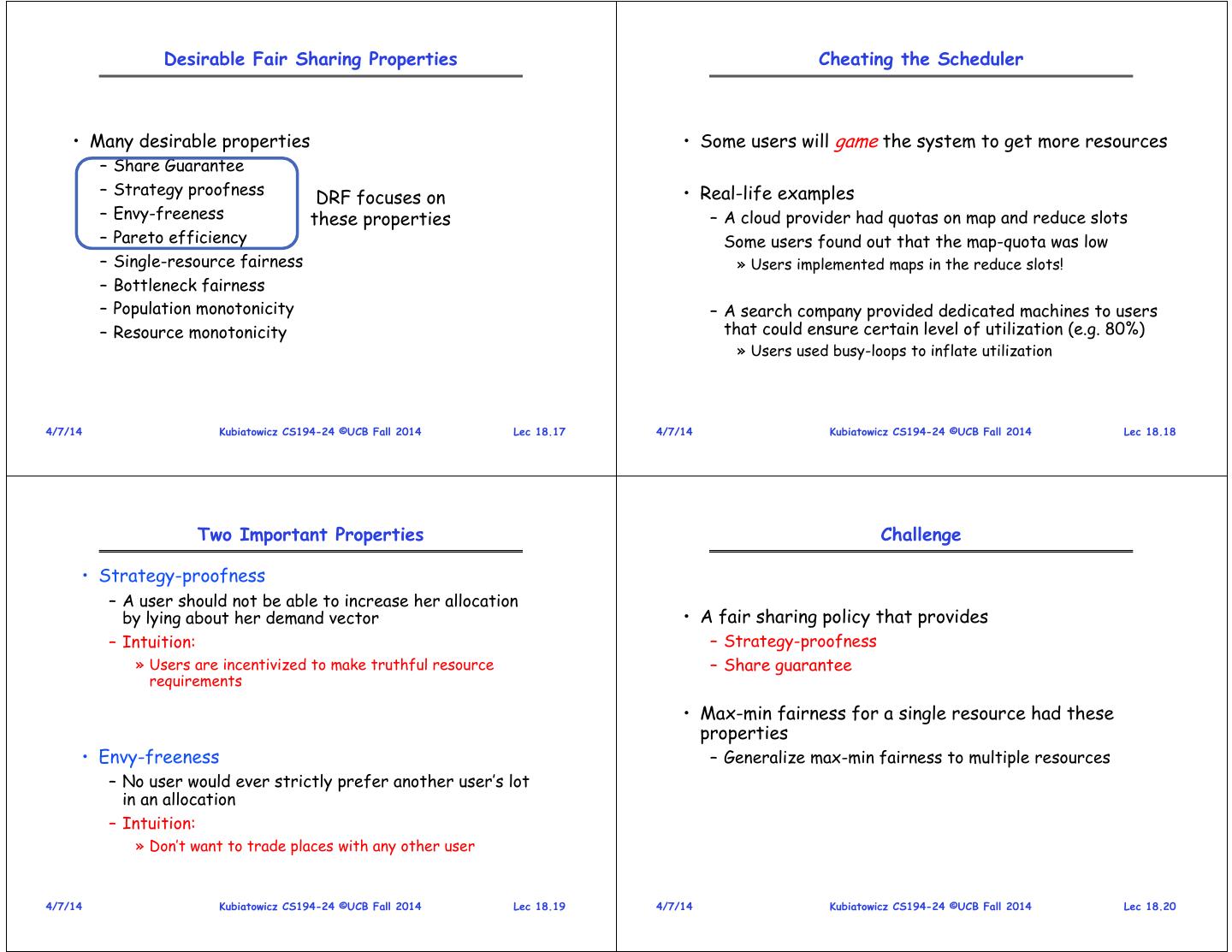

5 .

Desirable Fair Sharing Properties
• Many desirable properties
  – Share Guarantee
  – Strategy proofness
  – Envy-freeness
  – Pareto efficiency
  – Single-resource fairness
  – Bottleneck fairness
  – Population monotonicity
  – Resource monotonicity
[Callout on the slide: DRF focuses on these properties]

Cheating the Scheduler
• Some users will game the system to get more resources
• Real-life examples
  – A cloud provider had quotas on map and reduce slots. Some users found out that the map-quota was low
    » Users implemented maps in the reduce slots!
  – A search company provided dedicated machines to users that could ensure certain level of utilization (e.g. 80%)
    » Users used busy-loops to inflate utilization

Two Important Properties
• Strategy-proofness
  – A user should not be able to increase her allocation by lying about her demand vector
  – Intuition:
    » Users are incentivized to make truthful resource requirements
• Envy-freeness
  – No user would ever strictly prefer another user’s lot in an allocation
  – Intuition:
    » Don’t want to trade places with any other user

Challenge
• A fair sharing policy that provides
  – Strategy-proofness
  – Share guarantee
• Max-min fairness for a single resource had these properties
  – Generalize max-min fairness to multiple resources

6 .

Dominant Resource Fairness
• A user’s dominant resource is the resource she has the biggest share of
  – Example:
    Total resources: <10 CPU, 4 GB>
    User 1’s allocation: <2 CPU, 1 GB>
    Dominant resource is memory as 1/4 > 2/10 (1/5)
• A user’s dominant share is the fraction of the dominant resource she is allocated
  – User 1’s dominant share is 25% (1/4)

Dominant Resource Fairness (2)
• Apply max-min fairness to dominant shares
• Equalize the dominant share of the users
  – Example:
    Total resources: <9 CPU, 18 GB>
    User 1 demand: <1 CPU, 4 GB> dominant res: mem
    User 2 demand: <3 CPU, 1 GB> dominant res: CPU
[Figure: User 1 gets 3 CPUs and 12 GB; User 2 gets 6 CPUs and 2 GB; both dominant shares are 66% (CPU: 9 total, mem: 18 total)]

DRF is Fair
• DRF is strategy-proof
• DRF satisfies the share guarantee
• DRF allocations are envy-free
See DRF paper for proofs

Online DRF Scheduler
Whenever there are available resources and tasks to run:
  Schedule a task to the user with smallest dominant share
• O(log n) time per decision using binary heaps
• Need to determine demand vectors
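
The online rule above ("schedule a task to the user with smallest dominant share") maps naturally onto a binary heap. The following is a minimal sketch under stated assumptions rather than the scheduler from the DRF paper: demand vectors are known, integer, and have at least one positive entry; every user always has more tasks to run; ties are broken by user index; and a user whose next task no longer fits is simply dropped. The name `drf_allocate` is illustrative.

```python
import heapq
from fractions import Fraction


def drf_allocate(capacity, demands):
    """Greedy DRF: repeatedly give one task to the user with the smallest
    dominant share, until no remaining user's next task fits."""
    m = len(capacity)
    used = [0] * m
    tasks = [0] * len(demands)
    # Heap entries are (dominant share, user index).
    heap = [(Fraction(0), i) for i in range(len(demands))]
    heapq.heapify(heap)
    while heap:
        _, i = heapq.heappop(heap)          # O(log n) per decision
        d = demands[i]
        if any(used[j] + d[j] > capacity[j] for j in range(m)):
            continue                        # next task does not fit: drop user i
        for j in range(m):
            used[j] += d[j]
        tasks[i] += 1
        share = max(Fraction(tasks[i] * d[j], capacity[j]) for j in range(m))
        heapq.heappush(heap, (share, i))
    return tasks
```

On the slide's example, `drf_allocate([9, 18], [[1, 4], [3, 1]])` yields 3 tasks for user 1 (3 CPUs, 12 GB) and 2 tasks for user 2 (6 CPUs, 2 GB), so both users end with a dominant share of 2/3.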

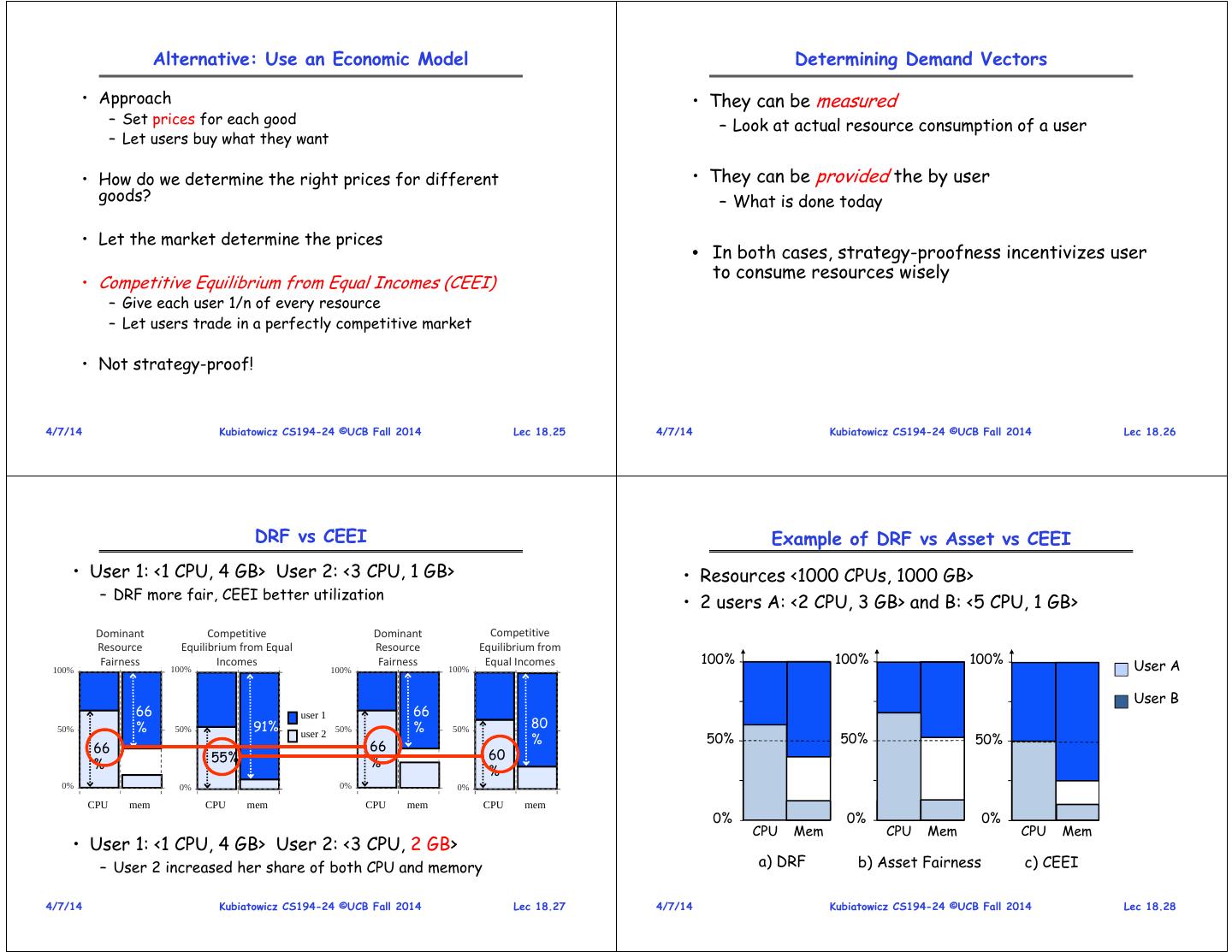

7 .

Alternative: Use an Economic Model
• Approach
  – Set prices for each good
  – Let users buy what they want
• How do we determine the right prices for different goods?
• Let the market determine the prices
• Competitive Equilibrium from Equal Incomes (CEEI)
  – Give each user 1/n of every resource
  – Let users trade in a perfectly competitive market
• Not strategy-proof!

Determining Demand Vectors
• They can be measured
  – Look at actual resource consumption of a user
• They can be provided by the user
  – What is done today
• In both cases, strategy-proofness incentivizes user to consume resources wisely

DRF vs CEEI
• User 1: <1 CPU, 4 GB> User 2: <3 CPU, 1 GB>
  – DRF more fair, CEEI better utilization
• User 1: <1 CPU, 4 GB> User 2: <3 CPU, 2 GB>
  – User 2 increased her share of both CPU and memory
[Figure: CPU and mem shares of user 1 and user 2 under Dominant Resource Fairness and under Competitive Equilibrium from Equal Incomes, for each of the two demand settings]

Example of DRF vs Asset vs CEEI
• Resources <1000 CPUs, 1000 GB>
• 2 users A: <2 CPU, 3 GB> and B: <5 CPU, 1 GB>
[Figure: CPU and Mem shares of User A and User B under a) DRF, b) Asset Fairness, c) CEEI]

8 .

Composability is Essential
[Figure: code reuse (App 1 and App 2 over BLAS/MKL): same library implementation, different apps; modularity (one App over BLAS/MKL or BLAS/Goto): same app, different library implementations]
Composability is key to building large, complex apps.

Motivational Example
Sparse QR Factorization (Tim Davis, Univ of Florida)
[Figure: Software Architecture: SPQR, Column Elimination Tree, Frontal Matrix Factorization. System Stack: TBB, MKL, OpenMP, OS, Hardware]

TBB, MKL, OpenMP
• Intel’s Threading Building Blocks (TBB)
  – Library that allows programmers to express parallelism using a higher-level, task-based, abstraction
  – Uses work-stealing internally (i.e. Cilk)
  – Open-source
• Intel’s Math Kernel Library (MKL)
  – Uses OpenMP for parallelism
• OpenMP
  – Allows programmers to express parallelism in the SPMD-style using a combination of compiler directives and a runtime library
  – Creates SPMD teams internally (i.e. UPC)
  – Open-source implementation of OpenMP from GNU (libgomp)

Suboptimal Performance
[Figure: Performance of SPQR on 16-core AMD Opteron System. Y axis: Speedup over Sequential; X axis: Matrix (deltaX, landmark, ESOC, Rucci); series: Out-of-the-Box]

9 .

Out-of-the-Box Configurations
[Figure: TBB and OpenMP running on OS virtualized kernel threads over Hardware (Core 0, Core 1, Core 2, Core 3)]

Providing Performance Isolation
Using Intel MKL with Threaded Applications
http://www.intel.com/support/performancetools/libraries/mkl/sb/CS-017177.htm

“Tuning” the Code
[Figure: Performance of SPQR on 16-core AMD Opteron System. Y axis: Speedup over Sequential; X axis: Matrix (deltaX, landmark, ESOC, Rucci); series: Out-of-the-Box, Serial MKL]

Partition Resources
[Figure: TBB and OpenMP each given their own cores; OS; Hardware (Core 0, Core 1, Core 2, Core 3)]
Tim Davis’ “tuned” SPQR by manually partitioning the resources.

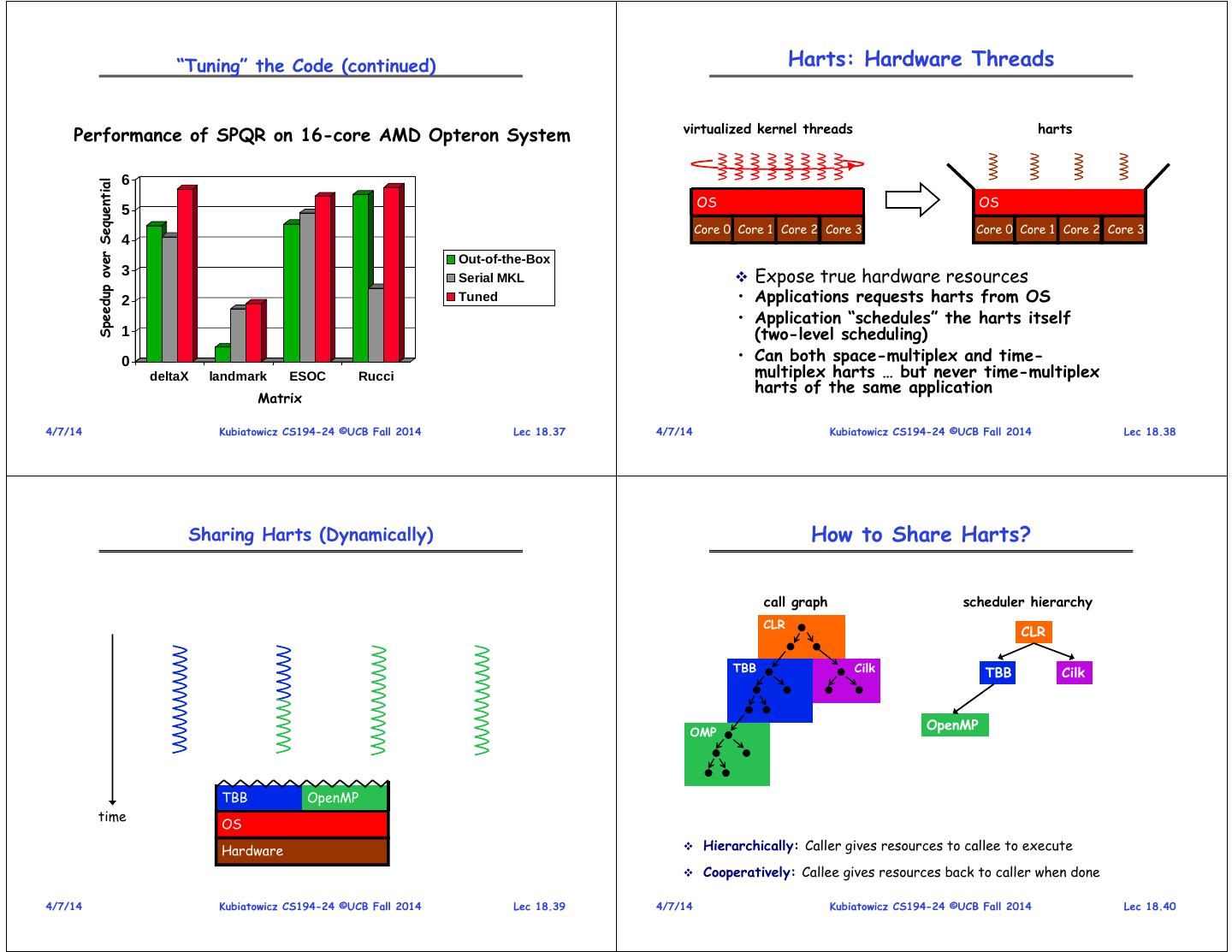

10 .

“Tuning” the Code (continued)
[Figure: Performance of SPQR on 16-core AMD Opteron System. Y axis: Speedup over Sequential; X axis: Matrix (deltaX, landmark, ESOC, Rucci); series: Out-of-the-Box, Serial MKL, Tuned]

Harts: Hardware Threads
[Figure: virtualized kernel threads vs. harts, each over OS and Core 0, Core 1, Core 2, Core 3]
Expose true hardware resources
• Applications request harts from OS
• Application “schedules” the harts itself (two-level scheduling)
• Can both space-multiplex and time-multiplex harts … but never time-multiplex harts of the same application

Sharing Harts (Dynamically)
[Figure: TBB and OpenMP sharing harts over time; OS; Hardware]

How to Share Harts?
[Figure: call graph and the matching scheduler hierarchy over CLR, TBB, Cilk, OpenMP]
Hierarchically: Caller gives resources to callee to execute
Cooperatively: Callee gives resources back to caller when done

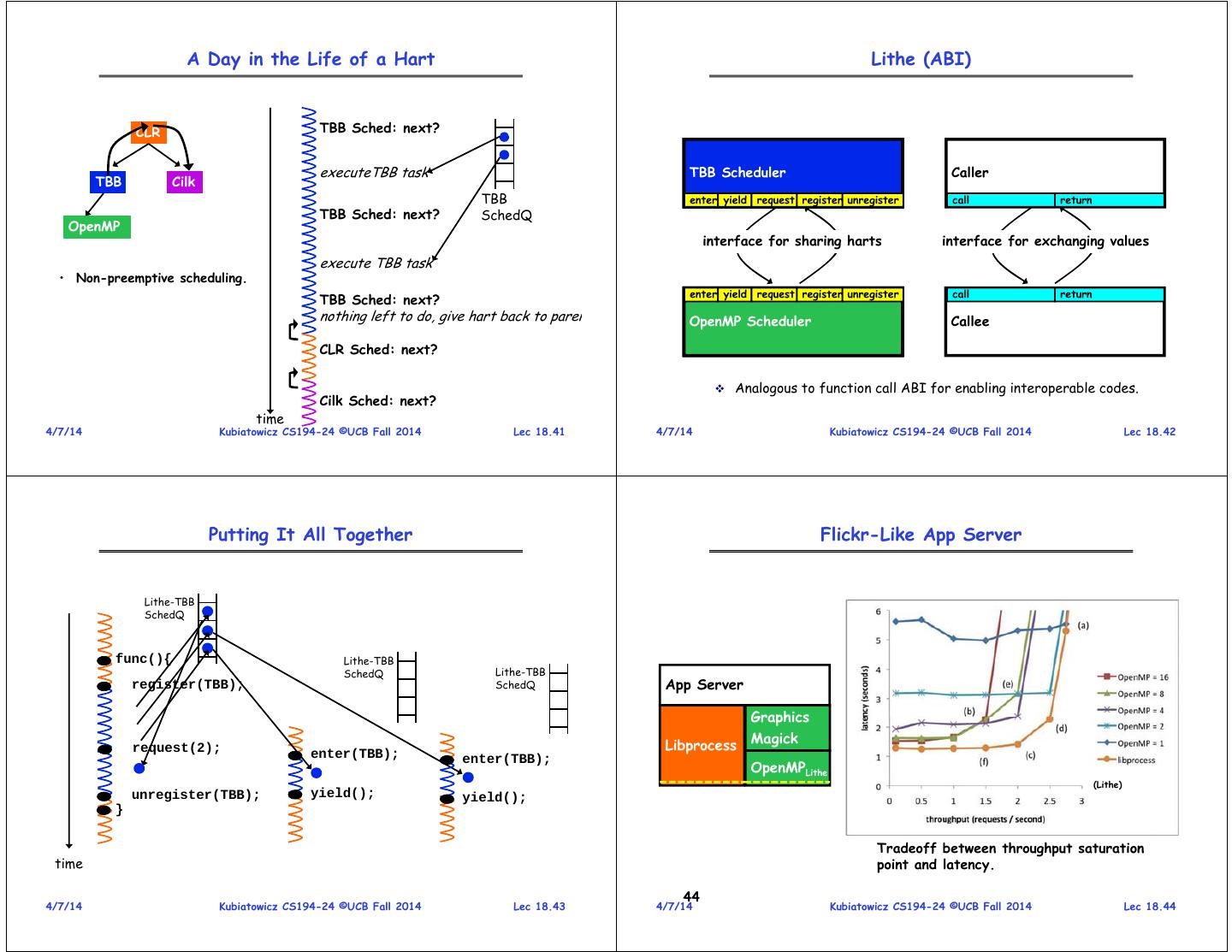

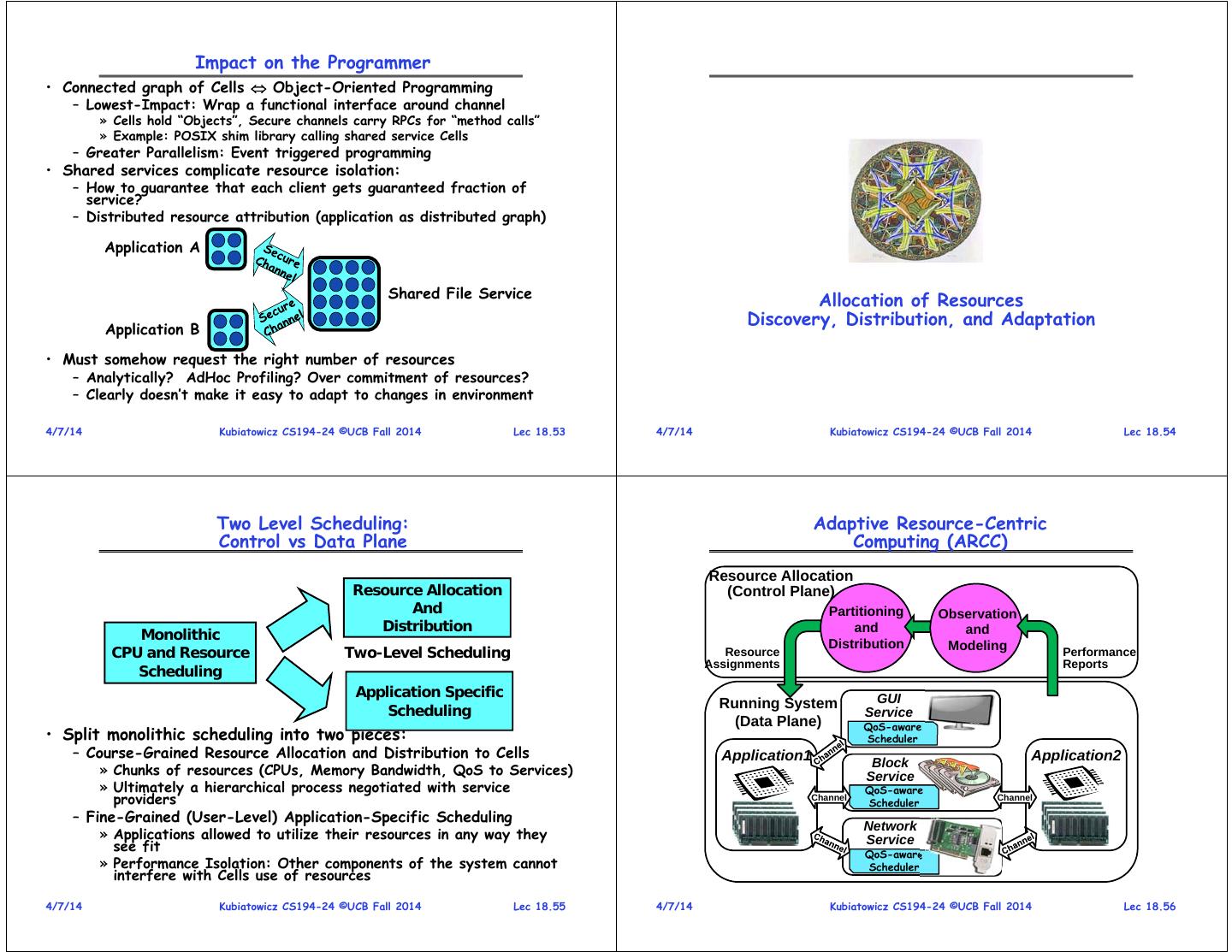

11 .

A Day in the Life of a Hart
• Non-preemptive scheduling.
[Figure: timeline of one hart moving through the CLR, TBB, and Cilk schedulers (TBB SchedQ):
  TBB Sched: next? execute TBB task
  TBB Sched: next? execute TBB task
  TBB Sched: next? nothing left to do, give hart back to parent
  CLR Sched: next?
  Cilk Sched: next?]

Lithe (ABI)
[Figure: Parent Scheduler (Caller) and Child Scheduler (Callee), e.g. Cilk, TBB, OpenMP. Interface for sharing harts: enter, yield, request, register, unregister. Interface for exchanging values: call, return]
Analogous to function call ABI for enabling interoperable codes.

Putting It All Together
[Figure: timeline of harts, each with a Lithe-TBB SchedQ. Calls shown: func(){ register(TBB); request(2); unregister(TBB); } on the calling hart, and enter(TBB); yield(); on each of the two requested harts]

Flickr-Like App Server
[Figure: App Server, Graphics Magick, Libprocess, OpenMPLithe (Lithe)]
Tradeoff between throughput saturation point and latency.

12 .

Case Study: Sparse QR Factorization

| Matrix | Sequential | Out-of-the-box | Tuned | Lithe |
|---|---|---|---|---|
| ESOC | 172.1 | 111.8 | 70.8 | 66.7 |
| Rucci | 970.5 | 576.9 | 360.0 | 354.7 |
| deltaX | 37.9 | 26.8 | 14.5 | 13.6 |
| landmark | 3.4 | 4.1 | 2.5 | 2.3 |

The Swarm of Resources
[Figure: Cloud Services, Enterprise Services, The Local Swarm]
• What system structure required to support Swarm?
  – Discover and Manage resource
  – Integrate sensors, portable devices, cloud components
  – Guarantee responsiveness, real-time behavior, throughput
  – Self-adapting to adjust for failure and performance predictability
  – Uniformly secure, durable, available data

Support for Applications
• Clearly, new Swarm applications will contain:
  – Direct interaction with Swarm and Cloud services
    » Potentially extensive use of remote services
    » Serious security/data vulnerability concerns
  – Real Time requirements
    » Sophisticated multimedia interactions
    » Control of/interaction with health-related devices
  – Responsiveness Requirements
    » Provide a good interactive experience to users
  – Explicitly parallel components
    » However, parallelism may be “hard won” (not embarrassingly parallel)
    » Must not interfere with this parallelism
• What support do we need for new Swarm applications?
  – No existing OS handles all of the above patterns well!
    » A lot of functionality, hard to experiment with, possibly fragile, …
    » Monolithic resource allocation, scheduling, memory management…
  – Need focus on resources, asynchrony, composability

13 .

Resource Allocation must be Adaptive
• Three applications:
  – 2 Real Time apps (RayTrace, Swaptions)
  – 1 High-throughput App (Fluidanimate)

Guaranteeing Resources
• Guaranteed Resources ⇒ Stable software components
  – Good overall behavior
• What might we want to guarantee?
  – Physical memory pages
  – BW (say data committed to Cloud Storage)
  – Requests/Unit time (DB service)
  – Latency to Response (Deadline scheduling)
  – Total energy/battery power available to Cell
• What does it mean to have guaranteed resources?
  – Firm Guarantee (with high confidence, maximum deviation, etc)
  – A Service Level Agreement (SLA)?
  – Something else?
• “Impedance-mismatch” problem
  – The SLA guarantees properties that programmer/user wants
  – The resources required to satisfy SLA are not things that programmer/user really understands

New Abstraction: the Cell
• Properties of a Cell
  – A user-level software component with guaranteed resources
  – Has full control over resources it owns (“Bare Metal”)
  – Contains at least one memory protection domain (possibly more)
  – Contains a set of secured channel endpoints to other Cells
  – Hardware-enforced security context to protect the privacy of information and decrypt information (a Hardware TCB)
• Each Cell schedules its resources exclusively with application-specific user-level schedulers
  – Gang-scheduled hardware thread resources (“Harts”)
  – Virtual Memory mapping and paging
  – Storage and Communication resources
    » Cache partitions, memory bandwidth, power
  – Use of Guaranteed fractions of system services
• Predictability of Behavior
  – Ability to model performance vs resources
  – Ability for user-level schedulers to better provide QoS

Applications are Interconnected Graphs of Services
[Figure: Core Application connected by Secure Channels to Device Drivers, Real-Time Cells (Audio, Video), Parallel Library, and File Service]
• Component-based model of computation
  – Applications consist of interacting components
  – Explicitly asynchronous/non-blocking
  – Components may be local or remote
• Communication defines Security Model
  – Channels are points at which data may be compromised
  – Channels define points for QoS constraints
• Naming (Brokering) process for initiating endpoints
  – Need to find compatible remote services
  – Continuous adaptation: links changing over time!

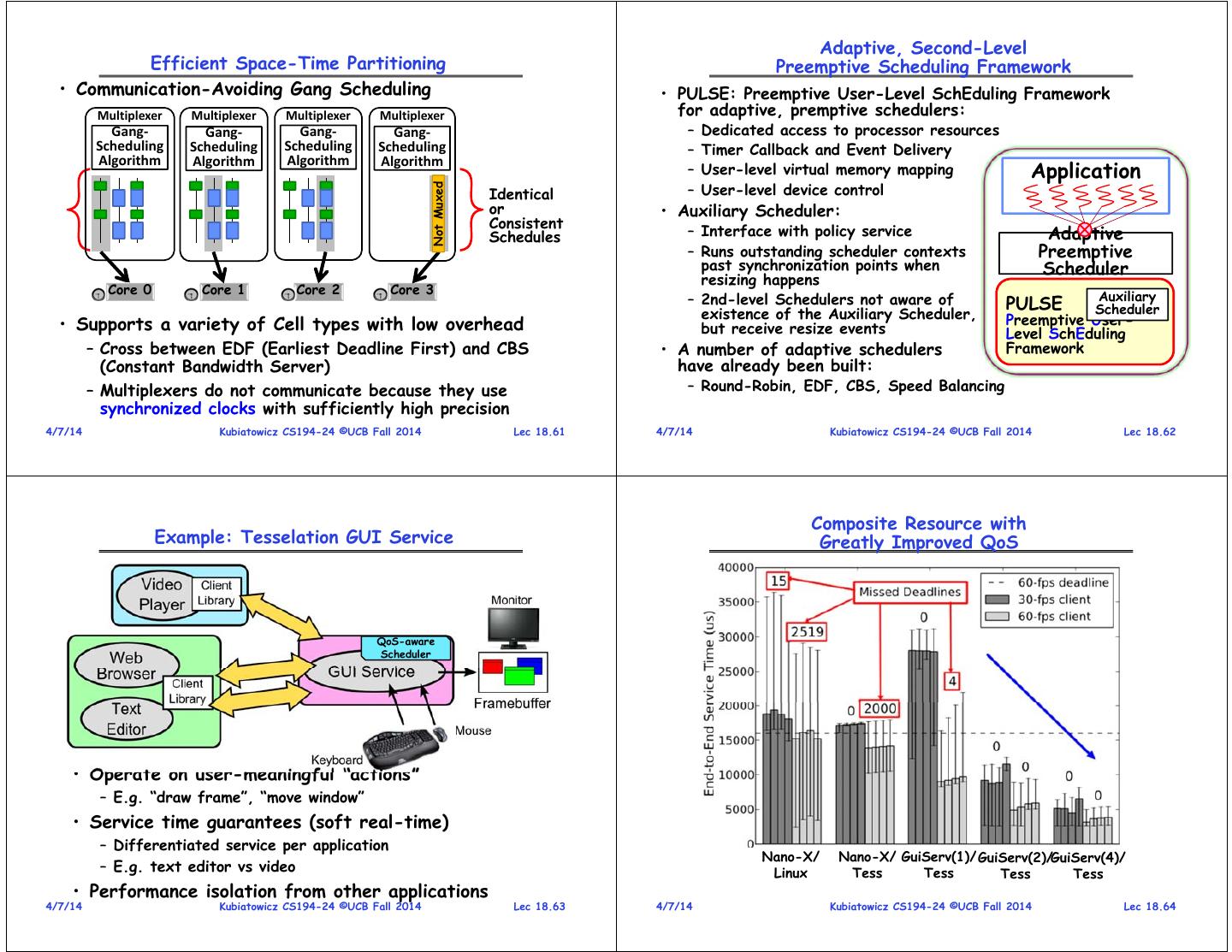

14 .

Impact on the Programmer
• Connected graph of Cells ↔ Object-Oriented Programming
  – Lowest-Impact: Wrap a functional interface around channel
    » Cells hold “Objects”, Secure channels carry RPCs for “method calls”
    » Example: POSIX shim library calling shared service Cells
  – Greater Parallelism: Event triggered programming
• Shared services complicate resource isolation:
  – How to guarantee that each client gets guaranteed fraction of service?
  – Distributed resource attribution (application as distributed graph)
  [Figure: Application A and Application B both using a Shared File Service]
• Must somehow request the right number of resources
  – Analytically? AdHoc Profiling? Over commitment of resources?
  – Clearly doesn’t make it easy to adapt to changes in environment

Allocation of Resources
Discovery, Distribution, and Adaptation

Two Level Scheduling: Control vs Data Plane
[Figure: Monolithic CPU and Resource Scheduling becomes Two-Level Scheduling: Resource Allocation And Distribution (Control Plane) plus Application Specific Scheduling (Data Plane)]
• Split monolithic scheduling into two pieces:
  – Coarse-Grained Resource Allocation and Distribution to Cells
    » Chunks of resources (CPUs, Memory Bandwidth, QoS to Services)
    » Ultimately a hierarchical process negotiated with service providers
  – Fine-Grained (User-Level) Application-Specific Scheduling
    » Applications allowed to utilize their resources in any way they see fit
    » Performance Isolation: Other components of the system cannot interfere with Cells’ use of resources

Adaptive Resource-Centric Computing (ARCC)
[Figure: Resource Allocation (Control Plane): Partitioning and Distribution, Observation and Modeling; Resource Assignments flow down and Performance Reports flow up. Running System (Data Plane): Application1, Application2, GUI Service, Block Service, and Network Service, each service with a QoS-aware Scheduler, connected by Channels]

15 .

Resource Allocation
• Goal: Meet the QoS requirements of a software component (Cell)
  – Behavior tracked through application-specific “heartbeats” and system-level monitoring
  – Dynamic exploration of performance space to find operation points
• Complications:
  – Many cells with conflicting requirements
  – Finite Resources
  – Hierarchy of resource ownership
  – Context-dependent resource availability
  – Stability, Efficiency, Rate of Convergence, …
[Figure: Execution; Modeling and State; Adaptation; Models and Evaluation; Resource Discovery, Access Control, Advertisement]

Tackling Multiple Requirements: Express as Convex Optimization Problem
[Figure: for each application i (Speech, Graph, Stencil), a Penalty Function Penalty_i over Runtime_i and a Resource-Value Function Runtime_i(r(0,i), …, r(n-1,i))]
Continuously minimize using the penalty of the system (subject to restrictions on the total amount of resources)

Brokering Service: The Hierarchy of Ownership
• Discover Resources in “Domain”
  – Devices, Services, Other Brokers
  – Resources self-describing?
• Allocate and Distribute Resources to Cells that need them
  – Solve Impedance-mismatch problem
  – Dynamically optimize execution
  – Hand out Service-Level Agreements (SLAs) to Cells
  – Deny admission to Cells when violates existing agreements
• Complete hierarchy
  – Throughout world graph of applications
[Figure: Parent Broker, Local Broker, Sibling Broker, Child Broker]

Space-Time Partitioning Cell
• Spatial Partition: Performance isolation
  – Each partition receives a vector of basic resources
    » A number HW threads
    » Chunk of physical memory
    » A portion of shared cache
    » A fraction of memory BW
    » Shared fractions of services
• Partitioning varies over time
  – Fine-grained multiplexing and guarantee of resources
    » Resources are gang-scheduled
• Controlled multiplexing, not uncontrolled virtualization
• Partitioning adapted to the system’s needs
[Figure: partitions laid out over Space and Time]

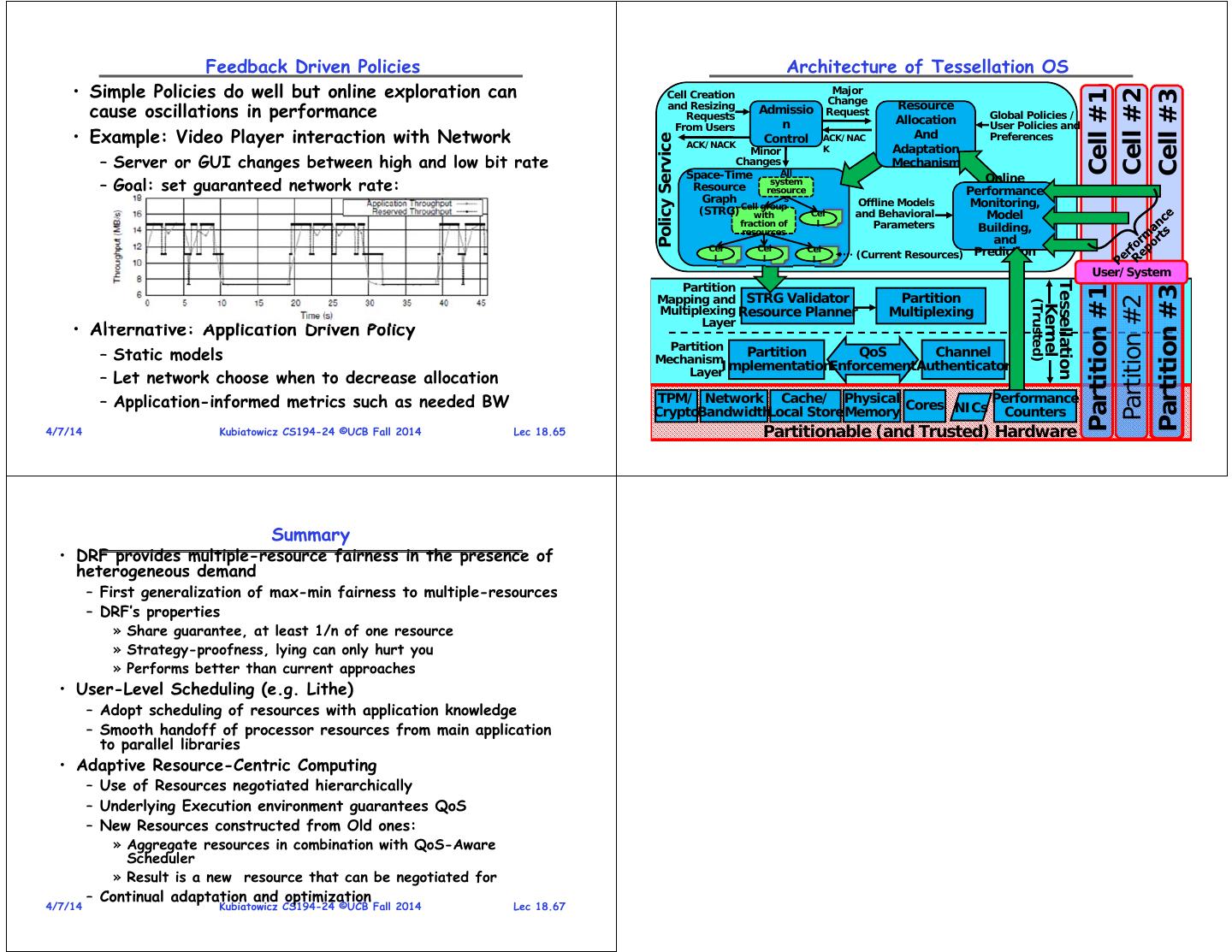

16 .

Efficient Space-Time Partitioning
• Communication-Avoiding Gang Scheduling
[Figure: Core 0, Core 1, Core 2, Core 3, each running its own Multiplexer with a Gang-Scheduling Algorithm; Identical or Consistent Schedules; some cells Not Muxed]
• Supports a variety of Cell types with low overhead
  – Cross between EDF (Earliest Deadline First) and CBS (Constant Bandwidth Server)
  – Multiplexers do not communicate because they use synchronized clocks with sufficiently high precision

Adaptive, Second-Level Preemptive Scheduling Framework
• PULSE: Preemptive User-Level SchEduling Framework for adaptive, preemptive schedulers:
  – Dedicated access to processor resources
  – Timer Callback and Event Delivery
  – User-level virtual memory mapping
  – User-level device control
• Auxiliary Scheduler:
  – Interface with policy service
  – Runs outstanding scheduler contexts past synchronization points when resizing happens
  – 2nd-level Schedulers not aware of existence of the Auxiliary Scheduler, but receive resize events
• A number of adaptive schedulers have already been built:
  – Round-Robin, EDF, CBS, Speed Balancing
[Figure: Application; Adaptive Preemptive Scheduler; Auxiliary Scheduler; PULSE (Preemptive User-Level SchEduling Framework)]

Example: Tessellation GUI Service
QoS-aware Scheduler
• Operate on user-meaningful “actions”
  – E.g. “draw frame”, “move window”
• Service time guarantees (soft real-time)
  – Differentiated service per application
  – E.g. text editor vs video
• Performance isolation from other applications

Composite Resource with Greatly Improved QoS
[Figure: comparison of Nano-X/Linux, Nano-X/Tess, GuiServ(1)/Tess, GuiServ(2)/Tess, GuiServ(4)/Tess]
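
The point that per-core multiplexers need not communicate can be illustrated with a toy model: if every core holds an identical schedule and reads the same synchronized clock, each core arrives at the same decision independently. The sketch below is purely hypothetical and is not Tessellation code; the schedule format (a repeating list of cell and slice-length pairs in integer clock ticks) and the name `active_cell` are assumptions made for illustration.

```python
def active_cell(now, schedule):
    """Return the cell that owns the cores at clock tick `now`.

    schedule: repeating list of (cell_name, slice_length_in_ticks) pairs.
    Every core evaluates this locally from the shared clock value.
    """
    period = sum(length for _, length in schedule)
    offset = now % period
    for cell, length in schedule:
        if offset < length:
            return cell
        offset -= length


# Four cores evaluate the same schedule at the same synchronized tick
# and therefore switch cells together without exchanging messages.
schedule = [("cell_A", 3), ("cell_B", 1)]
decisions = [active_cell(7, schedule) for _core in range(4)]
```

Because the function depends only on the shared clock value and the shared schedule, all four entries of `decisions` name the same cell, which is the gang-scheduling property the slide describes.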

17 .

Feedback Driven Policies
• Simple Policies do well but online exploration can cause oscillations in performance
• Example: Video Player interaction with Network
  – Server or GUI changes between high and low bit rate
  – Goal: set guaranteed network rate:
• Alternative: Application Driven Policy
  – Static models
  – Let network choose when to decrease allocation
  – Application-informed metrics such as needed BW

Architecture of Tessellation OS
[Figure: Cell Creation and Resizing Requests From Users pass through Admission Control (ACK/NACK). Major Change Requests and Minor Changes go to the Resource Allocation And Adaptation Mechanism within the Policy Service, which uses Global Policies / User Policies and Preferences, Offline Models and Behavioral Parameters, and Online Performance Monitoring, Model Building, and Prediction to produce the Space-Time Resource Graph (STRG). Tessellation Kernel (Trusted): Partition Mapping and Multiplexing Layer (STRG Validator, Resource Planner) and Partition Mechanism Layer (Partition Implementation, QoS Enforcement, Channel Authenticator). Partitionable (and Trusted) Hardware: TPM/Crypto, Network Bandwidth, Cache/Local Store, Physical Memory, Cores, NICs, Performance Counters]

Summary
• DRF provides multiple-resource fairness in the presence of heterogeneous demand
  – First generalization of max-min fairness to multiple-resources
  – DRF’s properties
    » Share guarantee, at least 1/n of one resource
    » Strategy-proofness, lying can only hurt you
    » Performs better than current approaches
• User-Level Scheduling (e.g. Lithe)
  – Adopt scheduling of resources with application knowledge
  – Smooth handoff of processor resources from main application to parallel libraries
• Adaptive Resource-Centric Computing
  – Use of Resources negotiated hierarchically
  – Underlying Execution environment guarantees QoS
  – New Resources constructed from Old ones:
    » Aggregate resources in combination with QoS-Aware Scheduler
    » Result is a new resource that can be negotiated for
  – Continual adaptation and optimization