
Rook Deep Dive


1 .Rook Deep Dive. Jared Watts (Rook Senior Maintainer; Upbound Founding Engineer). https://rook.io/ https://github.com/rook/rook

2 .Agenda ● Quick introduction to Rook (again) ● Deep dive: Ceph orchestration ● Deep dive: Storage provider integration for Minio ● Demo (if time allows) ● Questions

3 .What is Rook? ● Cloud-Native Storage Orchestrator ● Extends Kubernetes with custom types and controllers ● Automates deployment, bootstrapping, configuration, provisioning, scaling, upgrading, migration, disaster recovery, monitoring, and resource management ● Framework for many storage providers and solutions ● Open Source (Apache 2.0) ● Hosted by the Cloud Native Computing Foundation (CNCF)

4 .Storage Challenges ● Reliance on external storage ○ Requires these services to be accessible ○ Deployment burden ● Reliance on cloud provider managed services ○ Vendor lock-in ● Day 2 operations - who is managing the storage?

5 .Possible Solutions ● Deploy storage systems INTO the cluster ● Portable abstractions for all storage needs ○ Database, message queue, cache, object store, etc. ● Power of choice: cost, features, resiliency, compliance ● Automated management by smart software

6 .Custom Resource Definitions (CRDs) ● Teach Kubernetes about new first-class objects ● Custom Resource Definitions (CRDs) are arbitrary types that extend the Kubernetes API ○ Look just like any other built-in object (e.g. Pod) ○ Enable a native kubectl experience ● A means for users to describe their desired state

7 . Rook Operators ● Implements the Operator Pattern for storage solutions ● User defines desired state for the storage cluster ● The Operator runs reconciliation loops ○ Observe - Watch for changes in the desired state and in the cluster ○ Analyze - Determine differences between desired and actual state ○ Act - Apply changes to the cluster to drive it towards the desired state
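
The Observe/Analyze/Act cycle can be sketched in Go as a pure function that compares the desired state against the actual state. This is a minimal illustration, not Rook's code. The `State` type (a map from daemon name to replica count) and the action strings are hypothetical. A real operator would read both states from its CRDs and the Kubernetes API.

```go
package main

import (
	"fmt"
	"sort"
)

// State maps a daemon name (e.g. "mon-a") to its desired or observed
// replica count. Hypothetical type; Rook's real types are CRD structs.
type State map[string]int

// reconcile performs the Analyze step: it diffs desired against actual
// state and returns the actions the Act step would apply.
func reconcile(desired, actual State) []string {
	var actions []string
	for name, want := range desired {
		have, ok := actual[name]
		switch {
		case !ok:
			actions = append(actions, fmt.Sprintf("create %s (replicas=%d)", name, want))
		case have != want:
			actions = append(actions, fmt.Sprintf("scale %s %d->%d", name, have, want))
		}
	}
	for name := range actual {
		if _, ok := desired[name]; !ok {
			actions = append(actions, "delete "+name)
		}
	}
	sort.Strings(actions) // map iteration order is random; sort for stable output
	return actions
}

func main() {
	// Observe: a real operator gets these from the CRD and from watching
	// Kubernetes objects; here they are fixed for illustration.
	desired := State{"mon-a": 1, "mon-b": 1, "mon-c": 1}
	actual := State{"mon-a": 1, "mon-b": 0, "mon-d": 1}
	for _, a := range reconcile(desired, actual) {
		fmt.Println(a)
	}
}
```

Running it prints `create mon-c (replicas=1)`, `delete mon-d` and `scale mon-b 0->1`. Once the desired and actual states agree, the function returns no actions. That makes it safe to run on every watch event or on a timer, which is the property reconciliation loops depend on.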

8 .Rook Framework for Storage Solutions ● Rook is more than just a collection of Operators and CRDs ● Framework for storage providers to integrate their solutions into cloud-native environments ○ Storage resource normalization ○ Operator patterns/plumbing ○ Common policies, specs, logic ○ Testing effort ● Ceph, CockroachDB, Minio, NFS, Cassandra, Nexenta, and more...

9 .Ceph Deep Dive

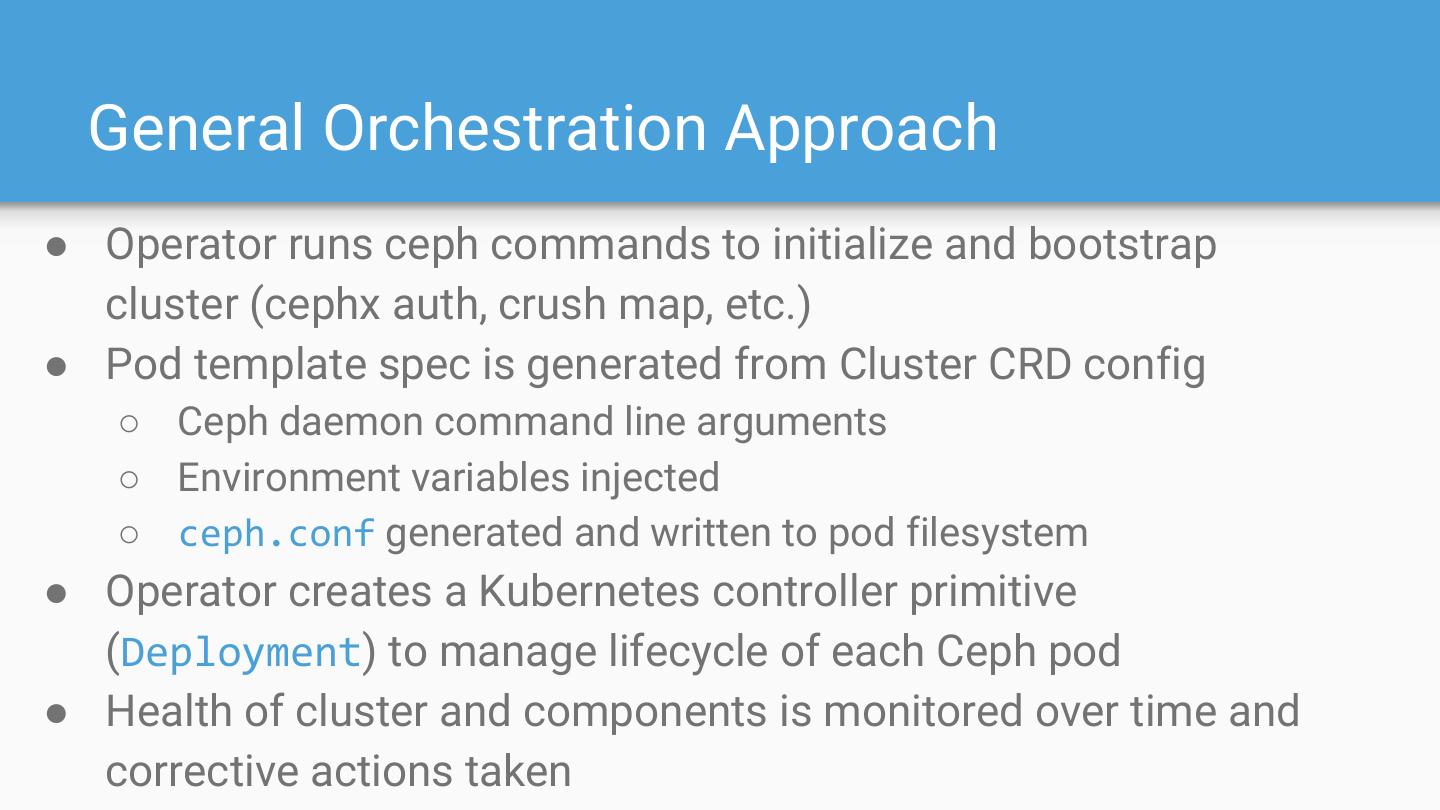

10 . General Orchestration Approach ● Operator runs ceph commands to initialize and bootstrap cluster (cephx auth, crush map, etc.) ● Pod template spec is generated from Cluster CRD config ○ Ceph daemon command line arguments ○ Environment variables injected ○ ceph.conf generated and written to pod filesystem ● Operator creates a Kubernetes controller primitive (Deployment) to manage lifecycle of each Ceph pod ● Health of cluster and components is monitored over time and corrective actions taken

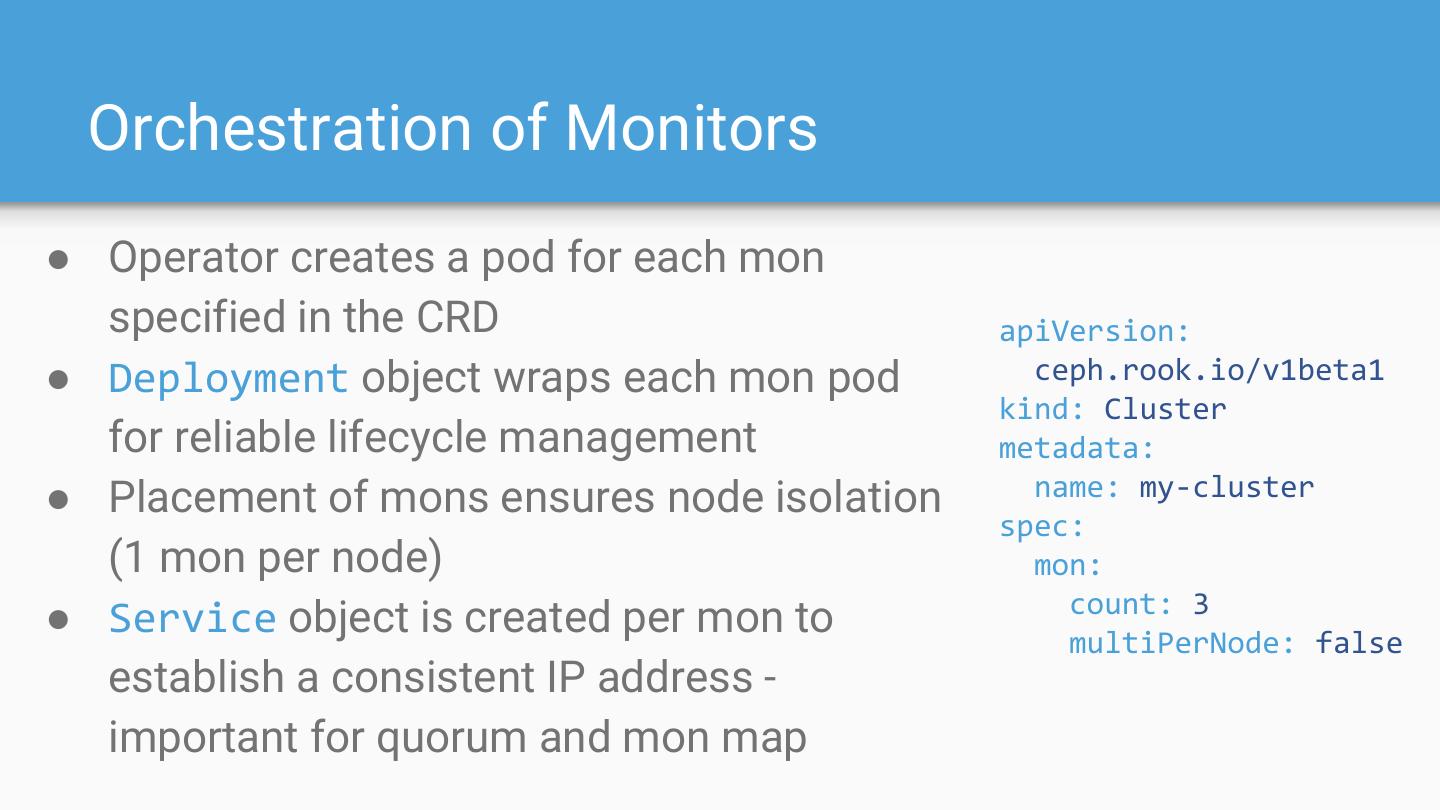

11 . Orchestration of Monitors ● Operator creates a pod for each mon specified in the CRD ● Deployment object wraps each mon pod for reliable lifecycle management ● Placement of mons ensures node isolation (1 mon per node) ● Service object is created per mon to establish a consistent IP address - important for quorum and mon map

```yaml
apiVersion: ceph.rook.io/v1beta1
kind: Cluster
metadata:
  name: my-cluster
spec:
  mon:
    count: 3
    multiPerNode: false
```
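
The node-isolation rule for mons reduces to a small placement decision, sketched here in Go. The `placeMons` function, the `mon0`, `mon1`, ... names and the round-robin strategy are assumptions for illustration. In Kubernetes, the chosen node would then be enforced on each mon Deployment, for example with a node selector or pod anti-affinity.

```go
package main

import (
	"errors"
	"fmt"
)

// placeMons assigns count mons to nodes. With multiPerNode=false every mon
// must land on a distinct node ("1 mon per node").
func placeMons(count int, nodes []string, multiPerNode bool) (map[string]string, error) {
	if len(nodes) == 0 {
		return nil, errors.New("no schedulable nodes")
	}
	if !multiPerNode && count > len(nodes) {
		return nil, fmt.Errorf("need at least %d nodes for isolated mons, have %d", count, len(nodes))
	}
	placement := make(map[string]string, count)
	for i := 0; i < count; i++ {
		// Round-robin: when count <= len(nodes) no node is reused.
		placement[fmt.Sprintf("mon%d", i)] = nodes[i%len(nodes)]
	}
	return placement, nil
}

func main() {
	p, err := placeMons(3, []string{"node1", "node2", "node3"}, false)
	fmt.Println(p, err)
	_, err = placeMons(3, []string{"node1", "node2"}, false)
	fmt.Println(err)
}
```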

12 . Monitors: Surviving Pod Restarts ● Mon persistent state must survive restarts (pod restart, node reboot, power failure, etc.) ● Mon state is stored in a HostPath mounted by the mon pod ○ user configurable via dataDirHostPath in Cluster CRD ● After a power outage, mon pods start and load state from the persisted data ○ Once mons form quorum, the cluster is healthy again ● If a mon loses its persisted data, it will heal itself after a restart

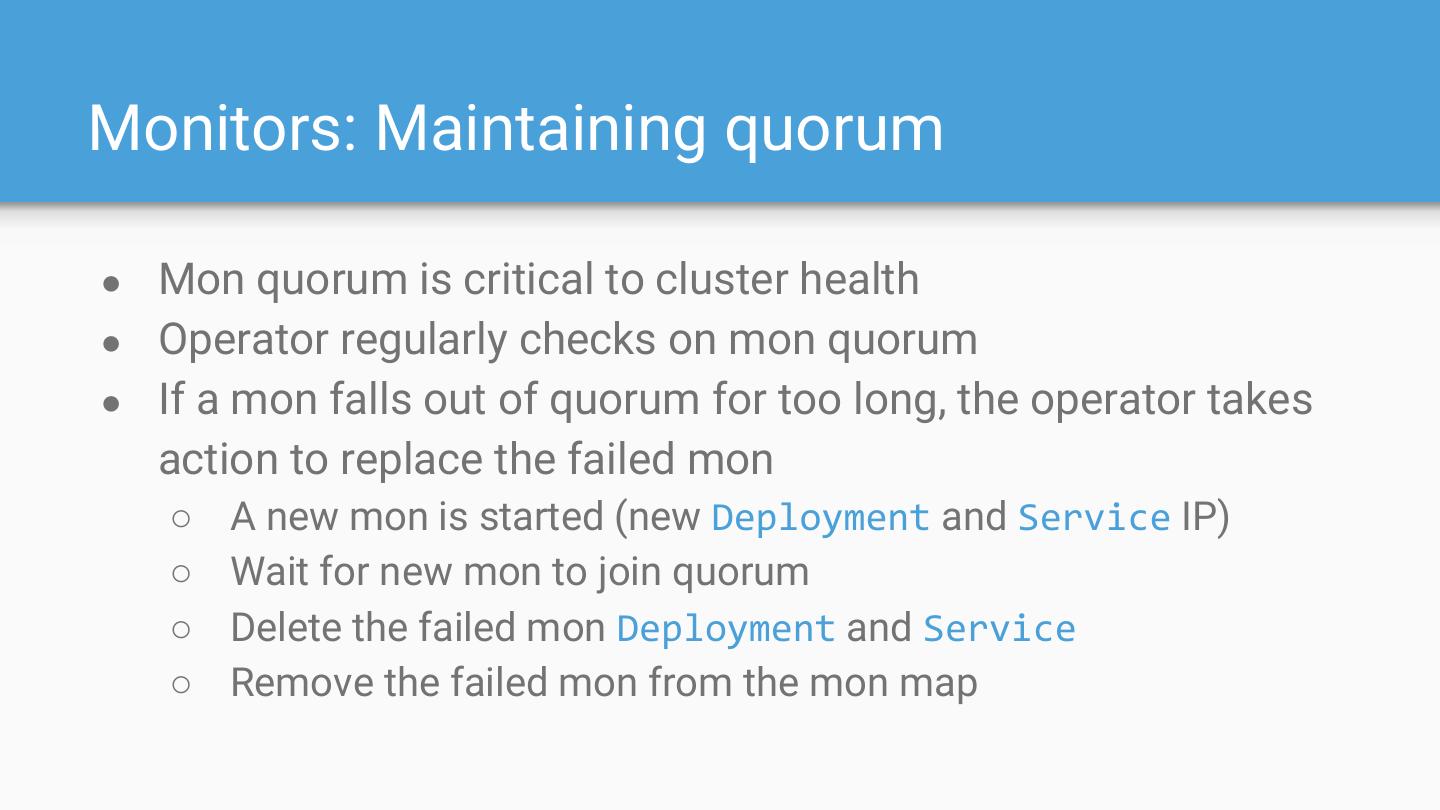

13 .Monitors: Maintaining quorum ● Mon quorum is critical to cluster health ● Operator regularly checks on mon quorum ● If a mon falls out of quorum for too long, the operator takes action to replace the failed mon ○ A new mon is started (new Deployment and Service IP) ○ Wait for new mon to join quorum ○ Delete the failed mon Deployment and Service ○ Remove the failed mon from the mon map

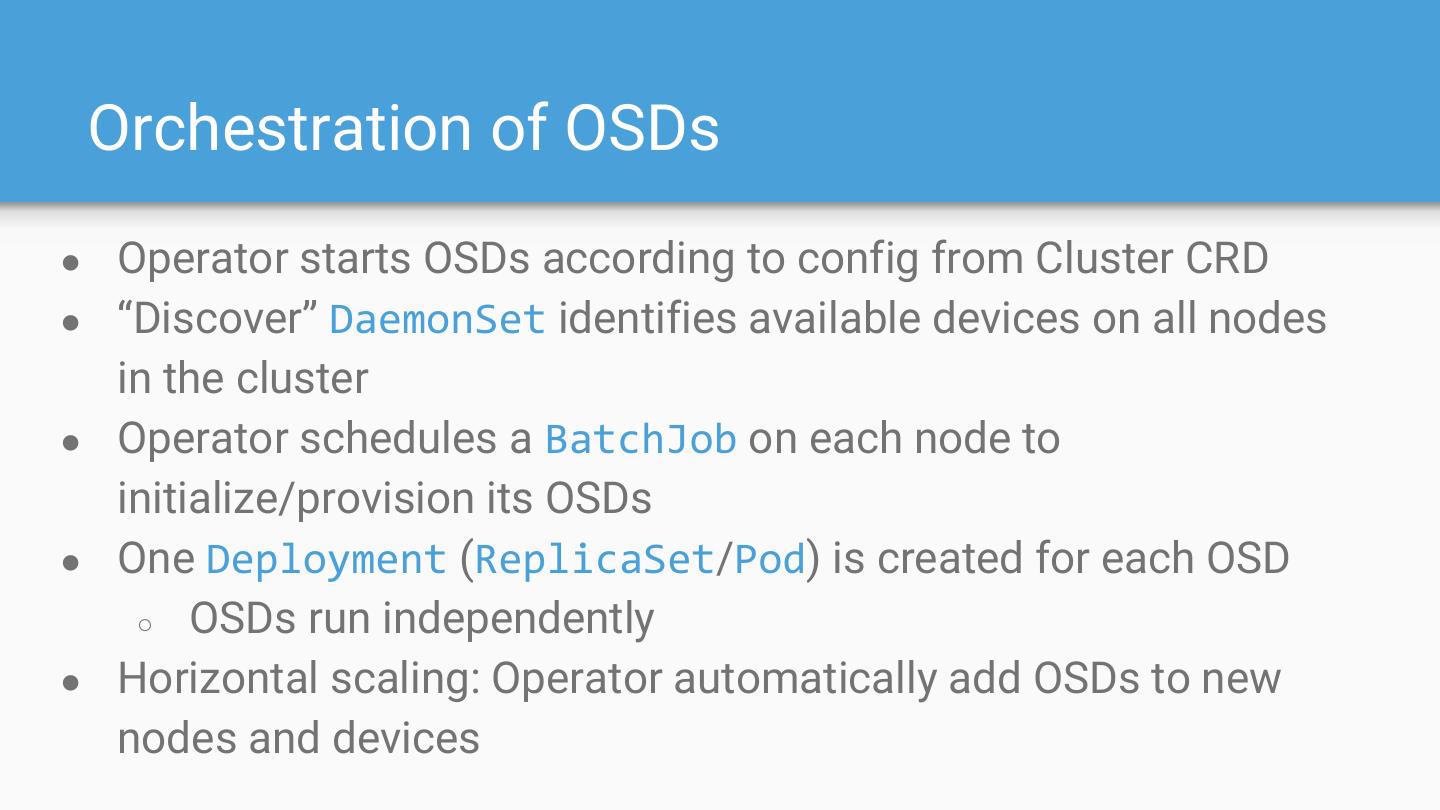

14 . Orchestration of OSDs ● Operator starts OSDs according to config from Cluster CRD ● “Discover” DaemonSet identifies available devices on all nodes in the cluster ● Operator schedules a batch Job on each node to initialize/provision its OSDs ● One Deployment (ReplicaSet/Pod) is created for each OSD ○ OSDs run independently ● Horizontal scaling: Operator automatically adds OSDs to new nodes and devices

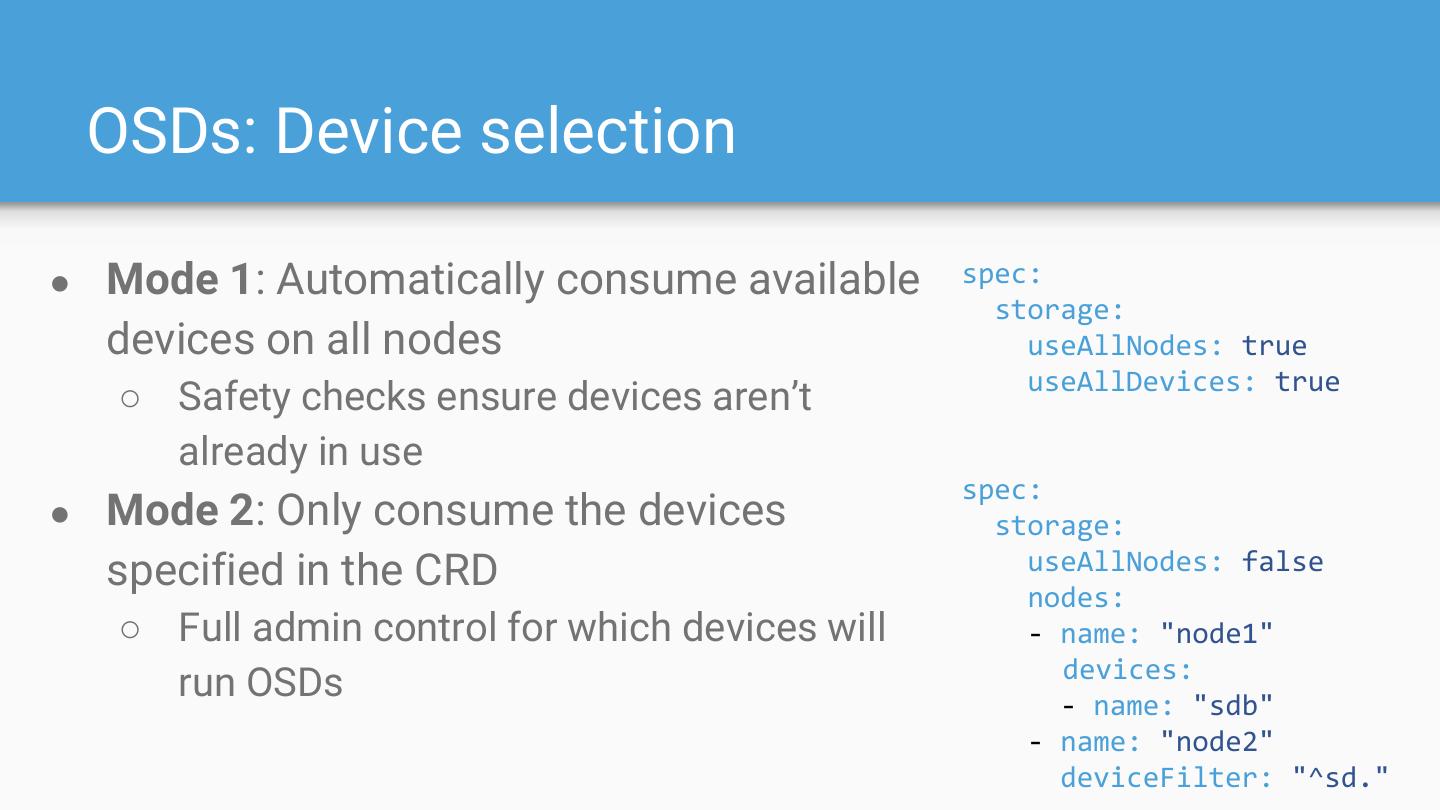
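
Horizontal scaling comes down to comparing the devices the discovery agent reports against the devices that already back OSDs. The Go sketch below computes that difference. The `Device` type and `newDevices` function are hypothetical. The sketch deliberately assigns no OSD IDs, because Ceph allocates them during provisioning.

```go
package main

import (
	"fmt"
	"sort"
)

// Device identifies a raw disk on a node, as reported by a discovery agent.
type Device struct {
	Node string
	Name string
}

// newDevices returns discovered devices that do not yet back an OSD, sorted
// by node then name. Grouped by node, these are what each node's
// provisioning Job would initialize before one OSD Deployment per device.
func newDevices(discovered map[string][]string, existing []Device) []Device {
	have := make(map[Device]bool, len(existing))
	for _, d := range existing {
		have[d] = true
	}
	var out []Device
	for node, devs := range discovered {
		for _, name := range devs {
			d := Device{Node: node, Name: name}
			if !have[d] {
				out = append(out, d)
			}
		}
	}
	sort.Slice(out, func(i, j int) bool {
		if out[i].Node != out[j].Node {
			return out[i].Node < out[j].Node
		}
		return out[i].Name < out[j].Name
	})
	return out
}

func main() {
	discovered := map[string][]string{
		"node1": {"sdb", "sdc"},
		"node2": {"sdb"}, // a newly added node
	}
	existing := []Device{{Node: "node1", Name: "sdb"}}
	fmt.Println(newDevices(discovered, existing)) // [{node1 sdc} {node2 sdb}]
}
```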

15 . OSDs: Device selection ● Mode 1: Automatically consume available devices on all nodes ○ Safety checks ensure devices aren’t already in use ● Mode 2: Only consume the devices specified in the CRD ○ Full admin control for which devices will run OSDs

```yaml
# Mode 1: consume available devices on all nodes
spec:
  storage:
    useAllNodes: true
    useAllDevices: true
```

```yaml
# Mode 2: consume only the devices specified here
spec:
  storage:
    useAllNodes: false
    nodes:
    - name: "node1"
      devices:
      - name: "sdb"
    - name: "node2"
      deviceFilter: "^sd."
```

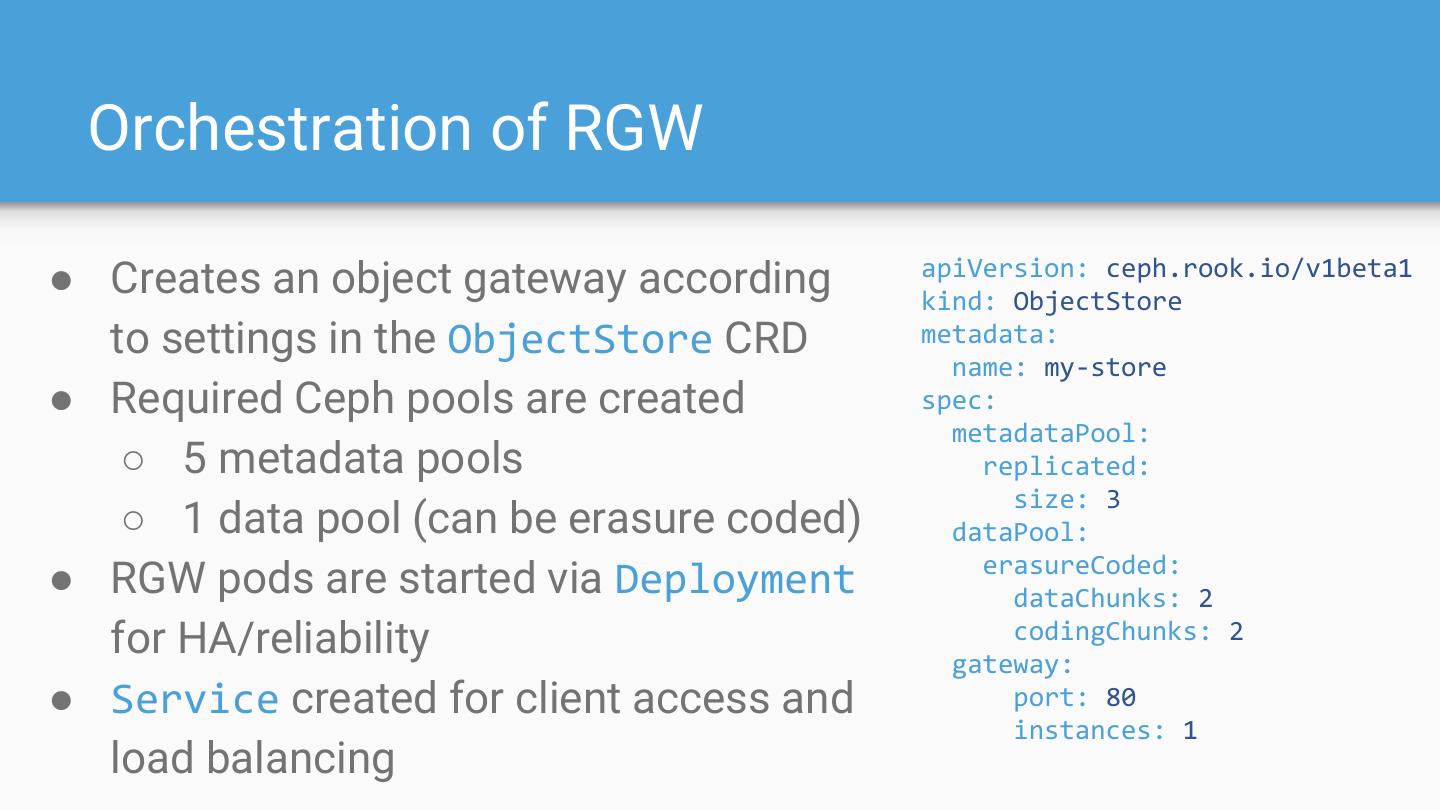
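
Both device-selection modes can be expressed as one Go function. `StorageSpec`, `NodeSpec` and `selectDevices` are hypothetical types that mirror the YAML fields on this slide. `deviceFilter` is treated as a Go regular expression, and only the two combinations shown on the slide are modeled.

```go
package main

import (
	"fmt"
	"regexp"
)

// StorageSpec mirrors the storage fields on this slide; the Go types are
// assumptions for illustration.
type StorageSpec struct {
	UseAllNodes   bool
	UseAllDevices bool
	Nodes         []NodeSpec
}

// NodeSpec lists either explicit devices or a deviceFilter regex.
type NodeSpec struct {
	Name         string
	Devices      []string
	DeviceFilter string
}

// selectDevices returns the devices on node that may host OSDs. available is
// assumed to already exclude devices in use (the safety checks).
func selectDevices(spec StorageSpec, node string, available []string) ([]string, error) {
	if spec.UseAllNodes && spec.UseAllDevices {
		return available, nil // Mode 1: every available device on every node
	}
	// Mode 2: only nodes and devices named in the spec.
	for _, n := range spec.Nodes {
		if n.Name != node {
			continue
		}
		var out []string
		if n.DeviceFilter != "" {
			re, err := regexp.Compile(n.DeviceFilter)
			if err != nil {
				return nil, fmt.Errorf("node %s: invalid deviceFilter: %w", node, err)
			}
			for _, d := range available {
				if re.MatchString(d) {
					out = append(out, d)
				}
			}
			return out, nil
		}
		for _, d := range available {
			for _, want := range n.Devices {
				if d == want {
					out = append(out, d)
				}
			}
		}
		return out, nil
	}
	return nil, nil // node not listed in Mode 2: no OSDs
}

func main() {
	spec := StorageSpec{Nodes: []NodeSpec{
		{Name: "node1", Devices: []string{"sdb"}},
		{Name: "node2", DeviceFilter: "^sd."},
	}}
	for _, node := range []string{"node1", "node2", "node3"} {
		devs, err := selectDevices(spec, node, []string{"sda", "sdb", "nvme0n1"})
		fmt.Println(node, devs, err)
	}
}
```

Take the slide's Mode 2 spec with devices `sda`, `sdb` and `nvme0n1` on every node. `node1` gets only `sdb`. `node2` gets `sda` and `sdb`, because the filter `^sd.` skips `nvme0n1`. An unlisted `node3` gets nothing.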

16 . Orchestration of RGW ● Creates an object gateway according to settings in the ObjectStore CRD ● Required Ceph pools are created ○ 5 metadata pools ○ 1 data pool (can be erasure coded) ● RGW pods are started via Deployment for HA/reliability ● Service created for client access and load balancing

```yaml
apiVersion: ceph.rook.io/v1beta1
kind: ObjectStore
metadata:
  name: my-store
spec:
  metadataPool:
    replicated:
      size: 3
  dataPool:
    erasureCoded:
      dataChunks: 2
      codingChunks: 2
  gateway:
    port: 80
    instances: 1
```

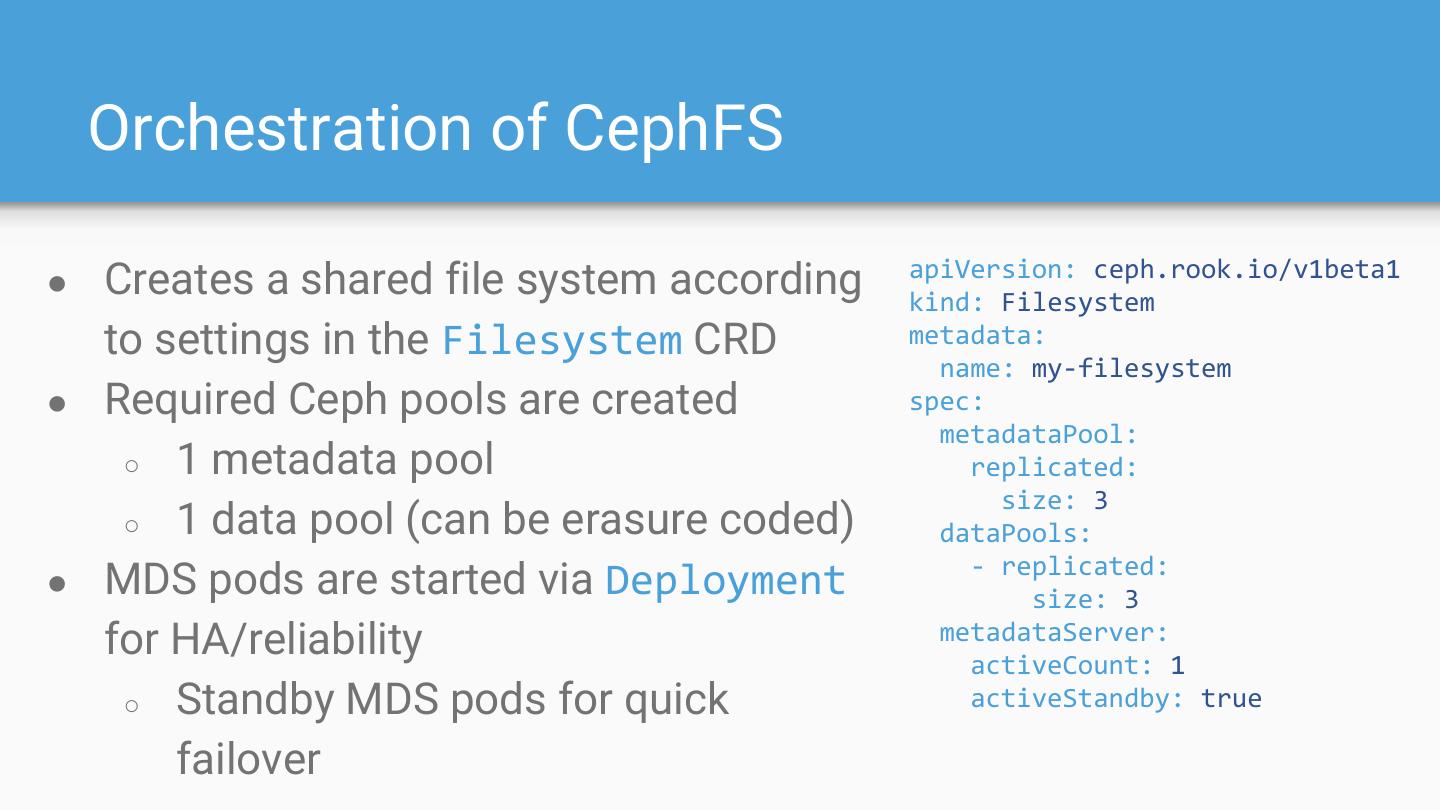
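
The pool settings on this slide imply a concrete trade-off between capacity and durability, which the Go sketch below computes. `PoolSpec` and its methods are hypothetical helpers. The arithmetic rests on two facts. Replication stores `size` full copies of each object. Erasure coding stores `dataChunks + codingChunks` chunks for every `dataChunks` chunks of data, with each chunk in a separate failure domain.

```go
package main

import "fmt"

// PoolSpec captures the two pool flavours in the ObjectStore CRD.
// Set either ReplicatedSize, or DataChunks and CodingChunks.
type PoolSpec struct {
	ReplicatedSize int
	DataChunks     int
	CodingChunks   int
}

// rawPerUsable is how many bytes of raw disk one byte of user data consumes.
func (p PoolSpec) rawPerUsable() float64 {
	if p.ReplicatedSize > 0 {
		return float64(p.ReplicatedSize)
	}
	return float64(p.DataChunks+p.CodingChunks) / float64(p.DataChunks)
}

// minFailureDomains is how many distinct failure domains (e.g. hosts) are
// needed to put every copy or chunk of an object on a separate domain.
func (p PoolSpec) minFailureDomains() int {
	if p.ReplicatedSize > 0 {
		return p.ReplicatedSize
	}
	return p.DataChunks + p.CodingChunks
}

// tolerableFailures is how many failure domains can be lost without data loss.
func (p PoolSpec) tolerableFailures() int {
	if p.ReplicatedSize > 0 {
		return p.ReplicatedSize - 1
	}
	return p.CodingChunks
}

func main() {
	meta := PoolSpec{ReplicatedSize: 3}
	data := PoolSpec{DataChunks: 2, CodingChunks: 2}
	for _, p := range []PoolSpec{meta, data} {
		fmt.Printf("%+v raw/usable=%.1f domains=%d tolerates=%d\n",
			p, p.rawPerUsable(), p.minFailureDomains(), p.tolerableFailures())
	}
}
```

In this example, the metadata pools (size 3) use 3 bytes of raw disk per byte stored, while the 2+2 data pool uses 2. Both pools survive the loss of two failure domains without losing data, though I/O may pause once fewer than the pool's `min_size` copies or chunks remain. However, the erasure-coded pool needs at least four failure domains, such as four hosts, to place its chunks.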

17 . Orchestration of CephFS ● Creates a shared file system according to settings in the Filesystem CRD ● Required Ceph pools are created ○ 1 metadata pool ○ 1 data pool (can be erasure coded) ● MDS pods are started via Deployment for HA/reliability ○ Standby MDS pods for quick failover

```yaml
apiVersion: ceph.rook.io/v1beta1
kind: Filesystem
metadata:
  name: my-filesystem
spec:
  metadataPool:
    replicated:
      size: 3
  dataPools:
    - replicated:
        size: 3
  metadataServer:
    activeCount: 1
    activeStandby: true
```
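
The MDS settings translate into a set of daemons. The Go sketch below rests on an assumption drawn from how Rook documented the Filesystem CRD at the time: the operator starts one standby MDS per active MDS, and `activeStandby: true` puts those standbys into Ceph's standby-replay (hot standby) mode. `planMDS` and the `<fs>-a`-style names are hypothetical.

```go
package main

import "fmt"

// MDSDaemon is one metadata-server pod the operator would start. Role is the
// expected role only: Ceph's monitors, not the operator, assign active ranks.
type MDSDaemon struct {
	Name string
	Role string // "active", "standby-replay" or "standby"
}

// planMDS pairs each active MDS with one standby (an assumed pairing);
// activeStandby selects hot standby-replay mode for the standbys.
// Names use single letters, so this sketch supports activeCount <= 13.
func planMDS(fsName string, activeCount int, activeStandby bool) []MDSDaemon {
	standbyRole := "standby"
	if activeStandby {
		standbyRole = "standby-replay"
	}
	var out []MDSDaemon
	for i := 0; i < activeCount; i++ {
		out = append(out,
			MDSDaemon{Name: fmt.Sprintf("%s-%c", fsName, 'a'+2*i), Role: "active"},
			MDSDaemon{Name: fmt.Sprintf("%s-%c", fsName, 'b'+2*i), Role: standbyRole},
		)
	}
	return out
}

func main() {
	for _, d := range planMDS("my-filesystem", 1, true) {
		fmt.Println(d.Name, d.Role)
	}
}
```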

18 . Rook Agent ● Dynamically attaches/mounts Ceph storage for pod consumption ● Runs as DaemonSet on all schedulable nodes in cluster ● Block: rbd map ● File: mount -t ceph ● Fencing and locking for ReadWriteOnce ● Detach and reattach if pod is scheduled onto another node ● Currently a Kubernetes FlexVolume driver; will be replaced by a CSI driver in the near future (work ongoing)
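
Fencing for ReadWriteOnce volumes means a volume may be attached to at most one node, so moving a pod requires a detach before the reattach. The in-memory `AttachTracker` below is a hypothetical Go sketch of that rule only. A real agent must record ownership durably, for example with an RBD image lock, so that the fence survives agent restarts.

```go
package main

import (
	"fmt"
	"sync"
)

// AttachTracker enforces single-node attachment for ReadWriteOnce volumes.
// In-memory illustration only; ownership is lost if the process restarts.
type AttachTracker struct {
	mu       sync.Mutex
	attached map[string]string // volume -> node holding it
}

func NewAttachTracker() *AttachTracker {
	return &AttachTracker{attached: make(map[string]string)}
}

// Attach succeeds if the volume is free or already attached to node.
func (t *AttachTracker) Attach(volume, node string) error {
	t.mu.Lock()
	defer t.mu.Unlock()
	if owner, ok := t.attached[volume]; ok && owner != node {
		return fmt.Errorf("volume %s is attached to %s", volume, owner)
	}
	t.attached[volume] = node
	return nil
}

// Detach releases the volume only if node currently holds it.
func (t *AttachTracker) Detach(volume, node string) {
	t.mu.Lock()
	defer t.mu.Unlock()
	if t.attached[volume] == node {
		delete(t.attached, volume)
	}
}

func main() {
	t := NewAttachTracker()
	fmt.Println(t.Attach("pvc-1", "node1")) // <nil>
	fmt.Println(t.Attach("pvc-1", "node2")) // volume pvc-1 is attached to node1
	t.Detach("pvc-1", "node1")              // pod rescheduled: detach first
	fmt.Println(t.Attach("pvc-1", "node2")) // <nil>
}
```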

19 . Automated Stateful Upgrades ● Partially implemented in 0.8, more support coming in 0.9 ● Operator controls and manages software upgrade flow ● Upgrade is simply applying/reconciling desired state ● Leverages built-in functionality of K8s resources like Deployments to update components in a rolling fashion ● Health checks to ensure cluster health is maintained ● Separation of Rook and Ceph versioning to isolate impact ● Special upgrade and migration steps between major versions of Ceph (Mimic -> Nautilus) will be implemented as necessary
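
The rolling, health-gated upgrade flow can be sketched as a loop that updates one daemon at a time and stops as soon as the cluster reports unhealthy. `rollingUpgrade` and its `update`/`healthy` hooks are hypothetical. In practice, `update` might change a Deployment's image, and `healthy` might poll `ceph health` until the cluster settles.

```go
package main

import (
	"errors"
	"fmt"
)

// rollingUpgrade updates daemons one at a time, checking health before the
// first update and after each one, and stops at the first unhealthy check.
// It returns the daemons that were updated.
func rollingUpgrade(daemons []string, update func(string) error, healthy func() bool) ([]string, error) {
	if !healthy() {
		return nil, errors.New("cluster unhealthy; not starting upgrade")
	}
	var done []string
	for _, d := range daemons {
		if err := update(d); err != nil {
			return done, fmt.Errorf("upgrade %s: %w", d, err)
		}
		done = append(done, d)
		if !healthy() {
			return done, errors.New("cluster unhealthy after upgrading " + d)
		}
	}
	return done, nil
}

func main() {
	daemons := []string{"mon-a", "mon-b", "mon-c", "osd-0", "osd-1"}
	done, err := rollingUpgrade(daemons,
		func(d string) error { fmt.Println("updating", d); return nil },
		func() bool { return true })
	fmt.Println(done, err)
}
```

Ordering the list is the caller's job. Ceph's upgrade guidance generally updates monitors before OSDs, which is why the example lists the mons first.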

20 .Developer Deep Dive: Storage Provider Integration Minio Operator

21 . Operator Frameworks ● Current: Register CRDs, watch events, and invoke handler functions ○ Rook operator-kit: https://github.com/rook/operator-kit ● Future: Auto-generate APIs, CRDs, controllers, reconciliation, boilerplate code, unit tests, deployment, etc. ○ Operator SDK: https://github.com/operator-framework/operator-sdk ○ Kubebuilder: https://github.com/kubernetes-sigs/kubebuilder

22 .Minio ObjectStore CRD

23 .Minio ObjectStore Custom Object

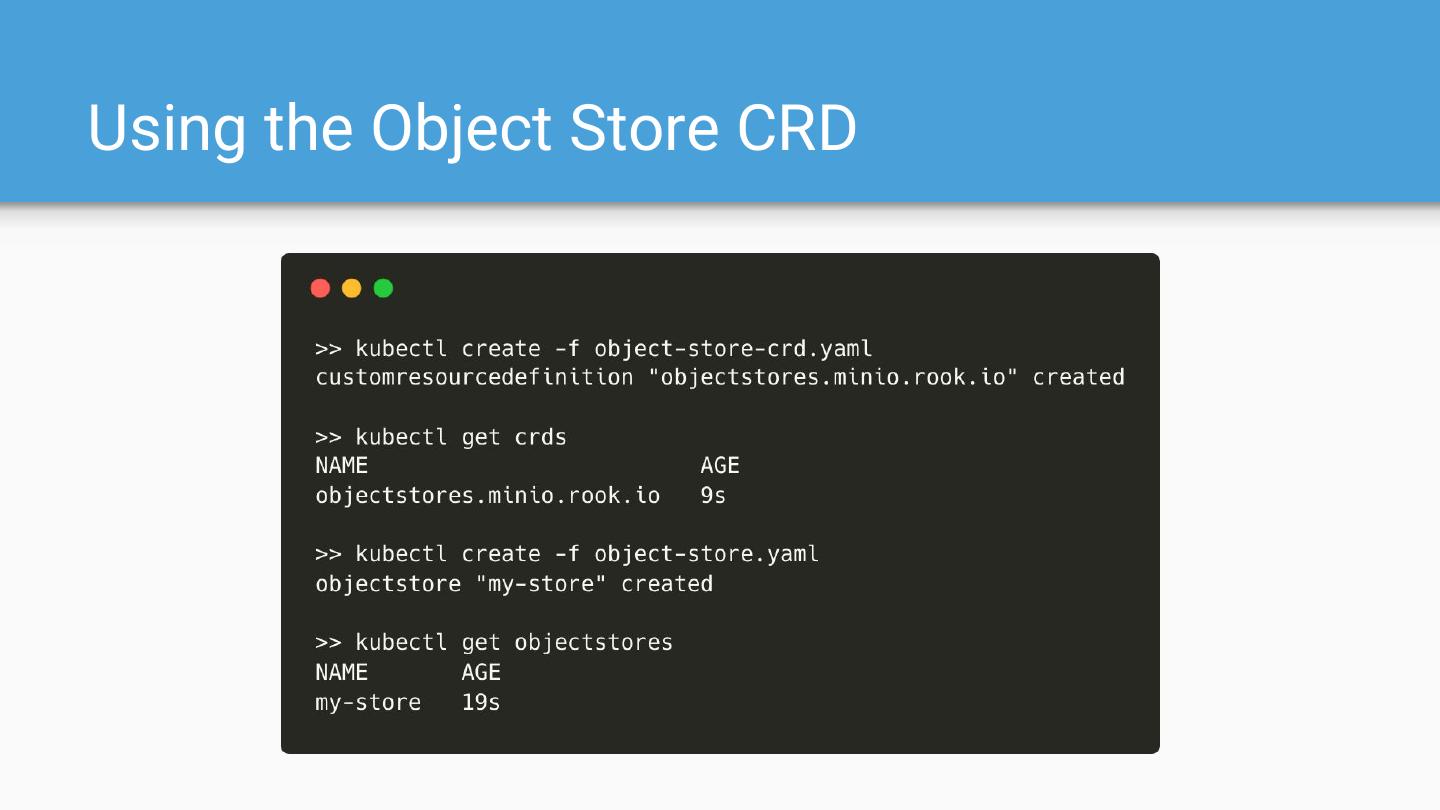

24 .Using the Object Store CRD

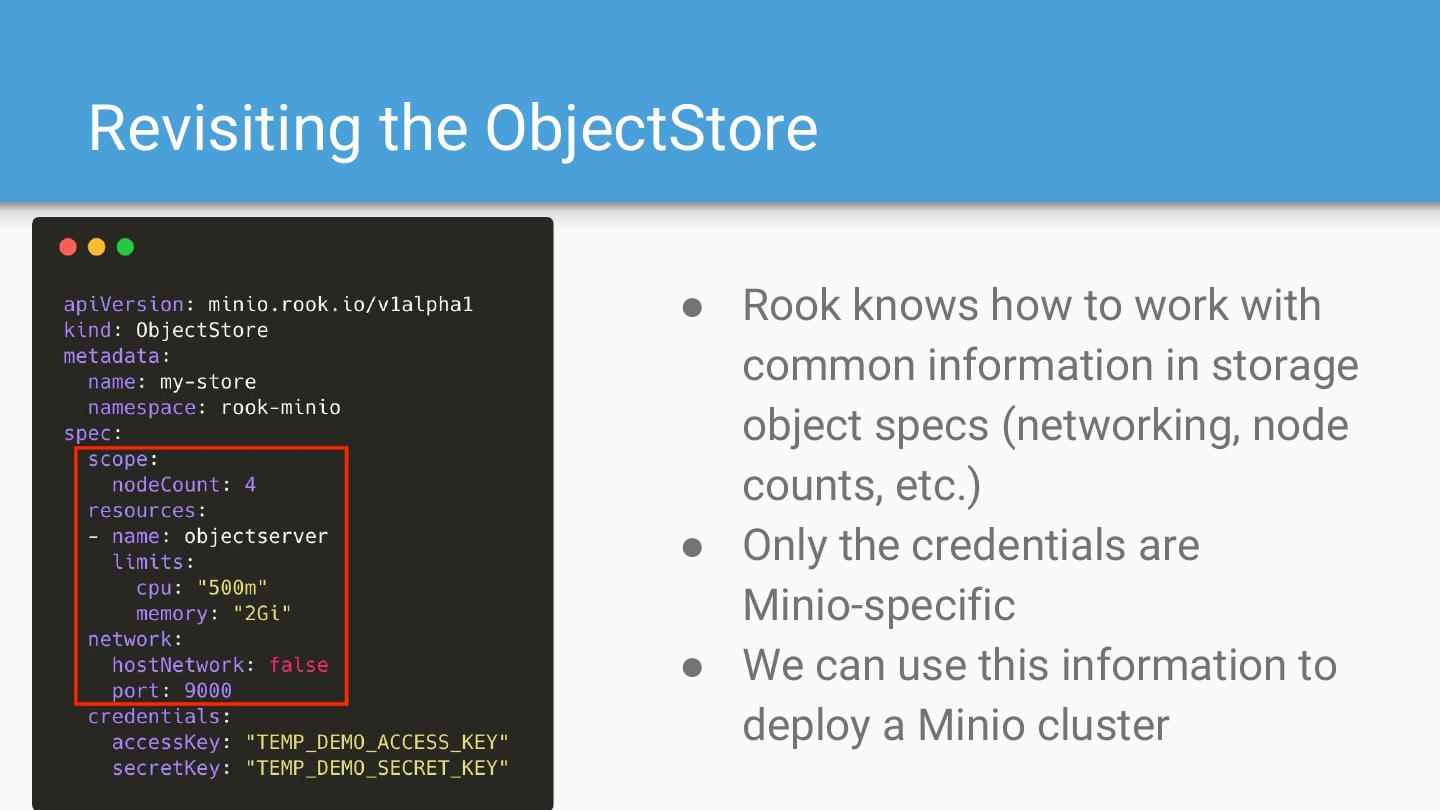

25 .Revisiting the ObjectStore ● Rook knows how to work with common information in storage object specs (networking, node counts, etc.) ● Only the credentials are Minio-specific ● We can use this information to deploy a Minio cluster

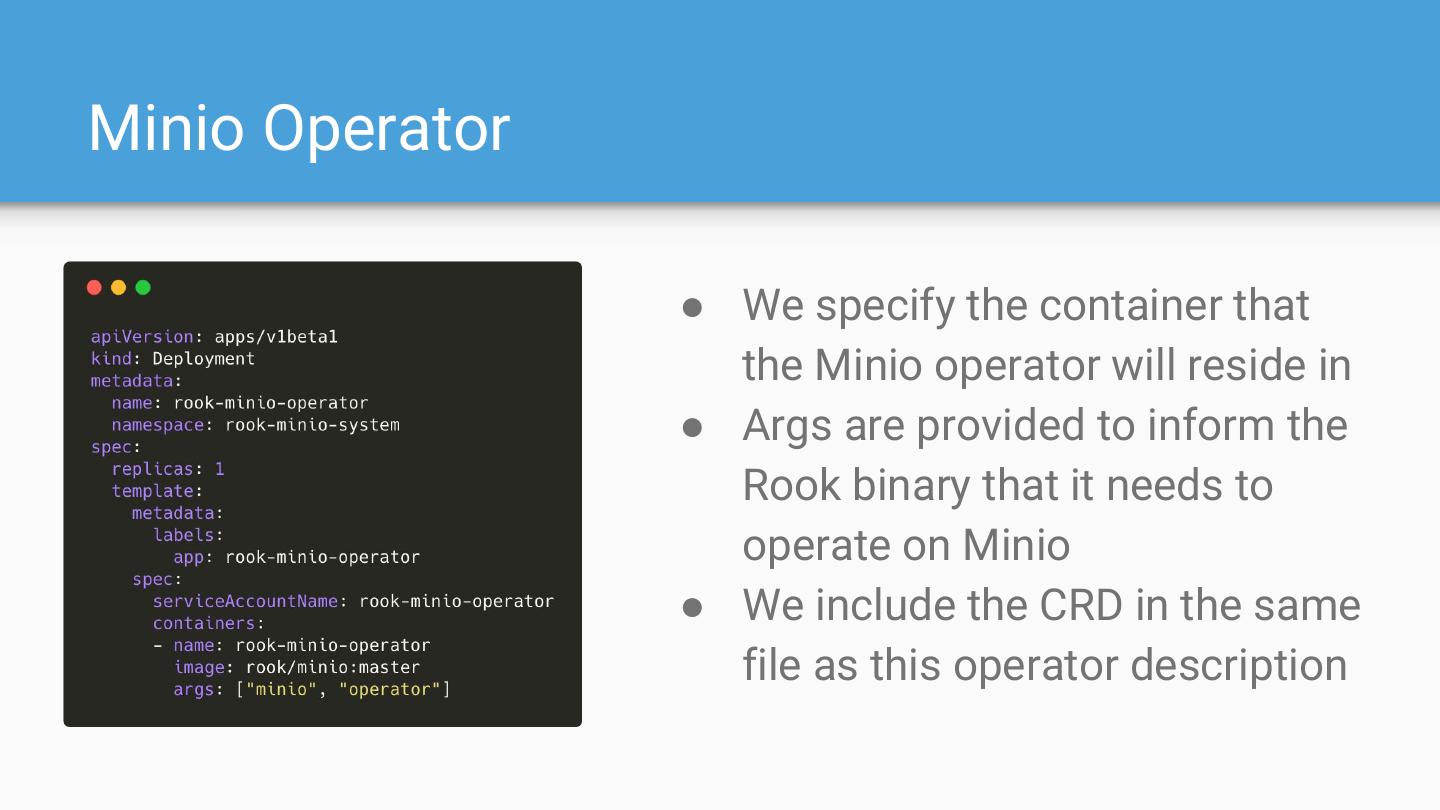
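
In distributed mode, every Minio server is started with the endpoints of all participating servers, so the node count from the common spec is enough to build the command line. The Go sketch assumes pods with stable DNS names of the form `<name>-<ordinal>.<service>.<namespace>.svc.cluster.local`, which a StatefulSet with a headless Service provides. `minioServerArgs` and the example names (`my-store`, `rook-minio`, `/data`) are hypothetical.

```go
package main

import (
	"fmt"
	"strings"
)

// minioServerArgs builds the "minio server" arguments for nodeCount pods,
// listing one endpoint per pod. Every pod runs the same argument list.
func minioServerArgs(name, service, namespace, dataDir string, nodeCount int) []string {
	args := []string{"server"}
	for i := 0; i < nodeCount; i++ {
		args = append(args, fmt.Sprintf("http://%s-%d.%s.%s.svc.cluster.local%s",
			name, i, service, namespace, dataDir))
	}
	return args
}

func main() {
	args := minioServerArgs("my-store", "my-store", "rook-minio", "/data", 4)
	fmt.Println("minio " + strings.Join(args, " "))
}
```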

26 .Minio Operator ● We specify the container that the Minio operator runs in ● Args are provided to tell the Rook binary that it should operate on Minio ● We include the CRD in the same file as the operator description

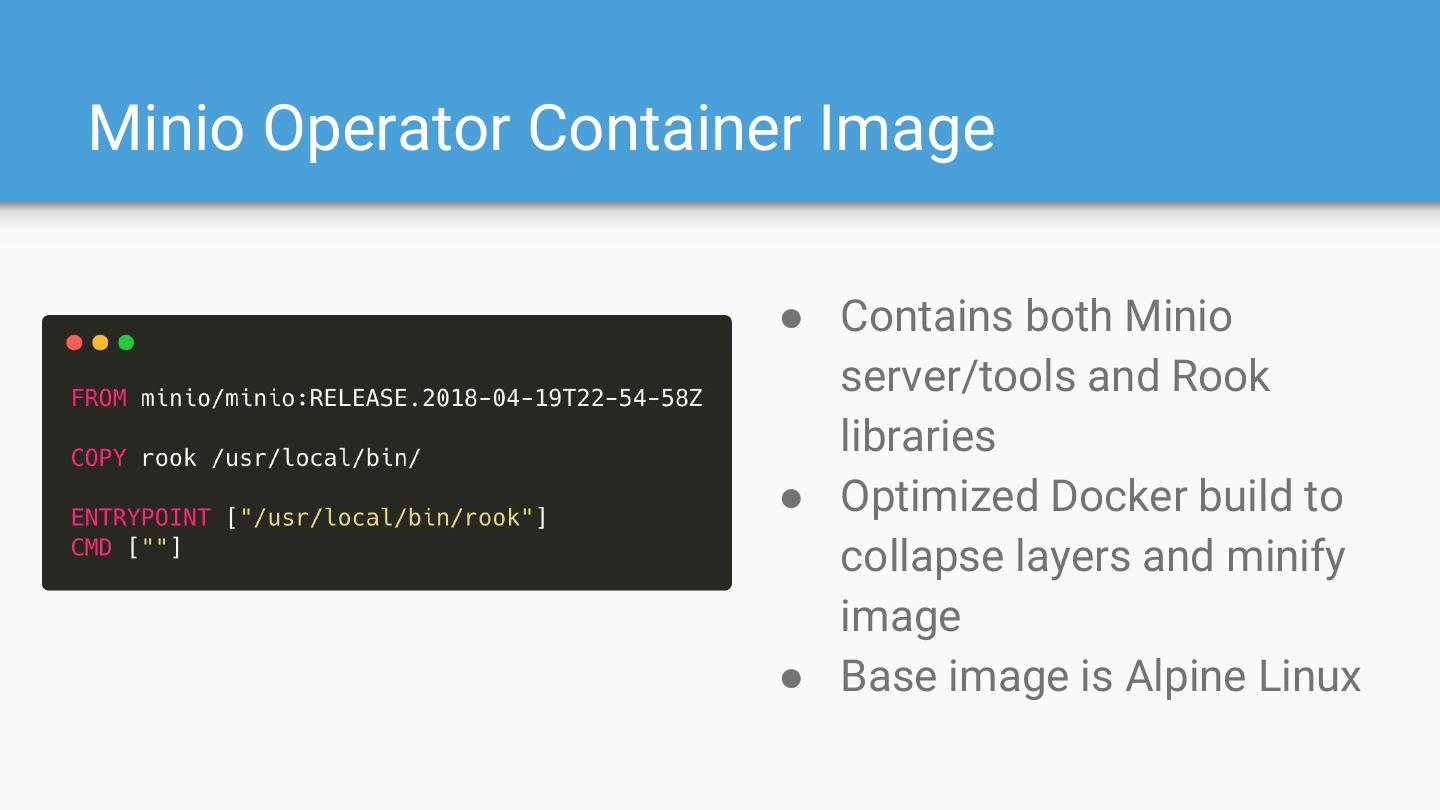
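
A single Rook binary whose arguments select the storage provider can be sketched as a small dispatcher. The `<provider> operator` argument shape and the provider names are assumptions modeled on this slide, not the Rook binary's actual CLI.

```go
package main

import (
	"errors"
	"fmt"
)

// runOperator maps container args such as ["minio", "operator"] to a
// provider-specific operator. The argument shape is an assumption.
func runOperator(args []string) (string, error) {
	if len(args) < 2 || args[1] != "operator" {
		return "", errors.New("usage: <provider> operator")
	}
	switch args[0] {
	case "minio":
		return "starting Minio operator", nil // would start the Minio controllers
	case "ceph":
		return "starting Ceph operator", nil
	default:
		return "", fmt.Errorf("unknown storage provider %q", args[0])
	}
}

func main() {
	// In a container these would come from os.Args[1:], i.e. the args
	// field of the operator's container spec.
	msg, err := runOperator([]string{"minio", "operator"})
	fmt.Println(msg, err)
}
```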

27 .Minio Operator Container Image ● Contains both Minio server/tools and Rook libraries ● Optimized Docker build to collapse layers and minify image ● Base image is Alpine Linux

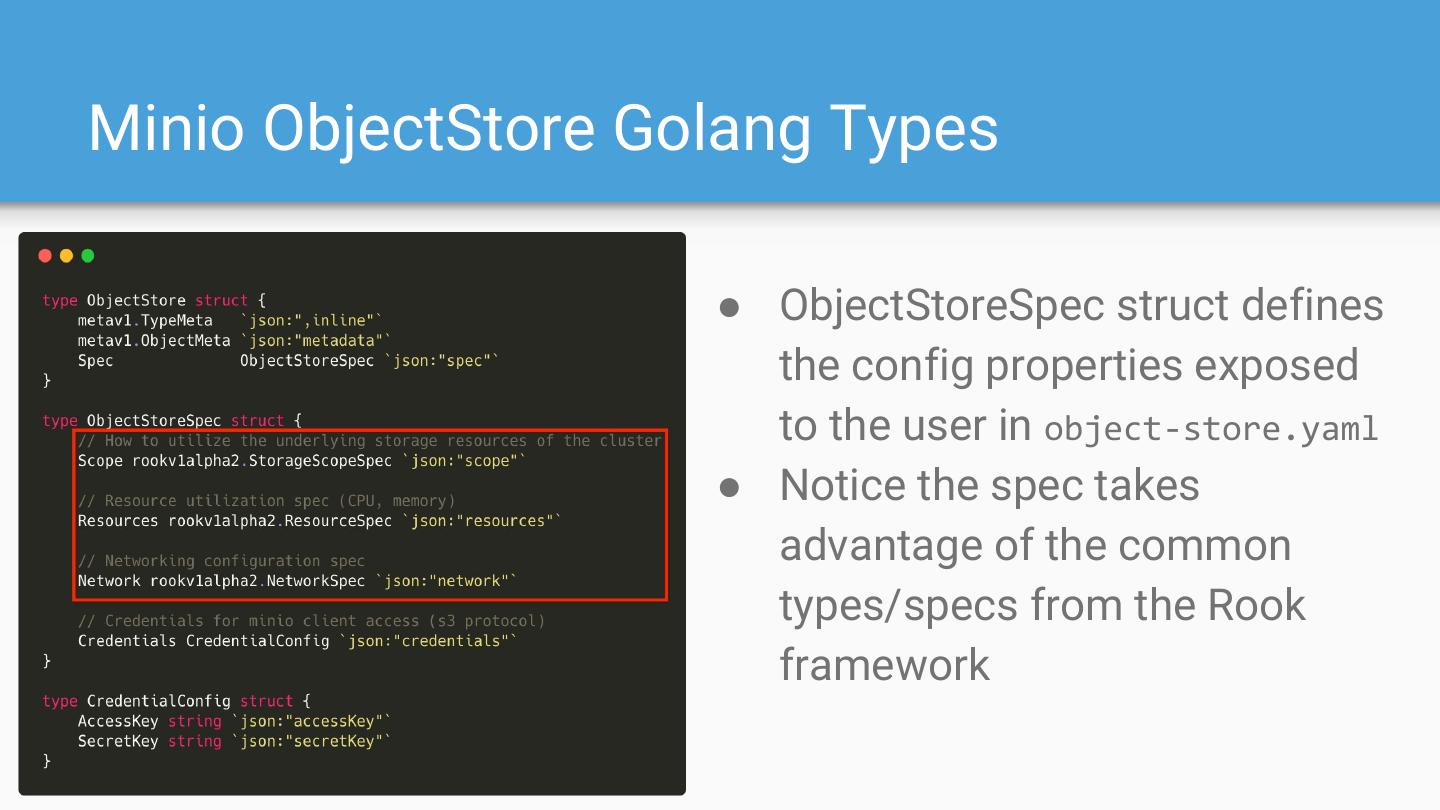

28 .Minio ObjectStore Golang Types ● ObjectStoreSpec struct defines the config properties exposed to the user in object-store.yaml ● Notice the spec takes advantage of the common types/specs from the Rook framework

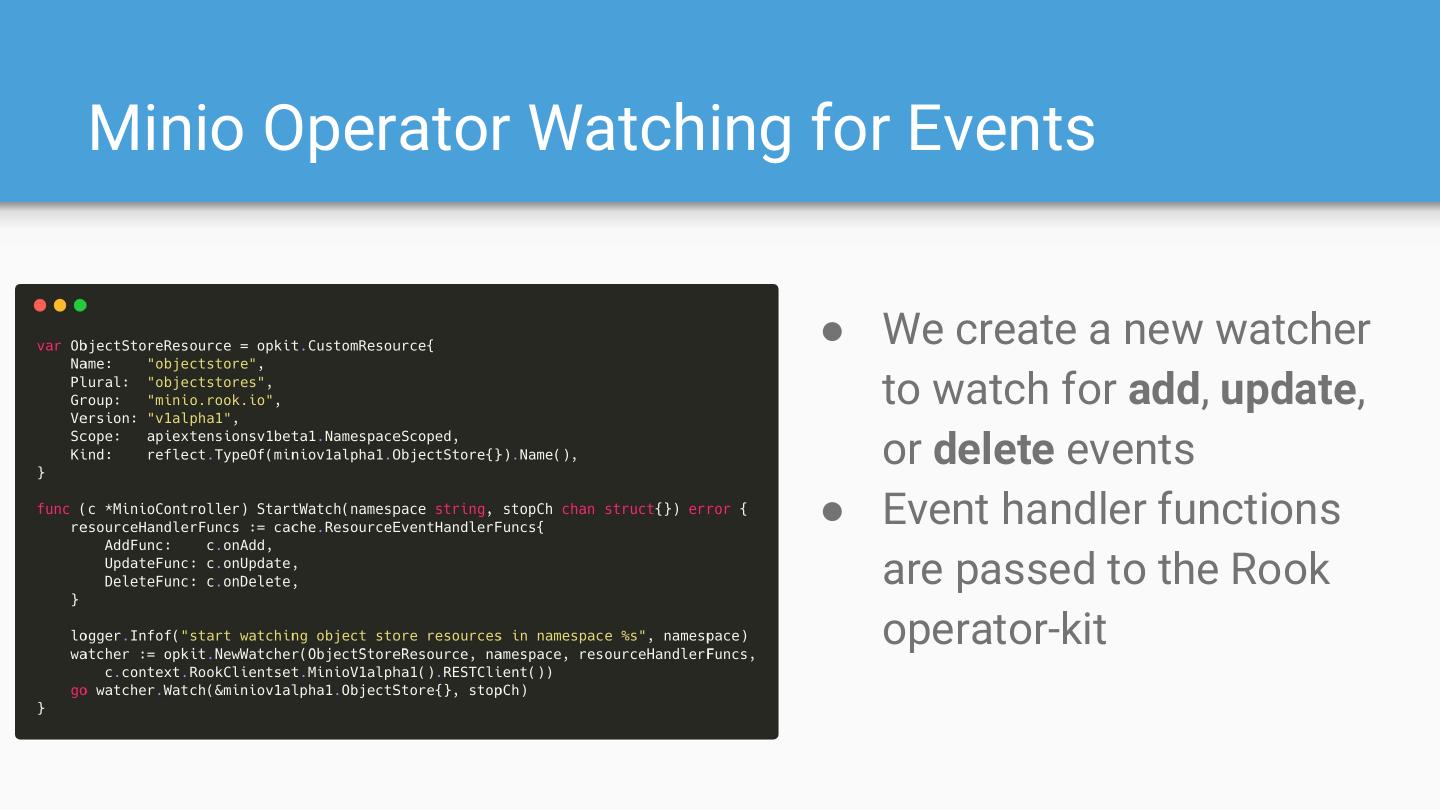

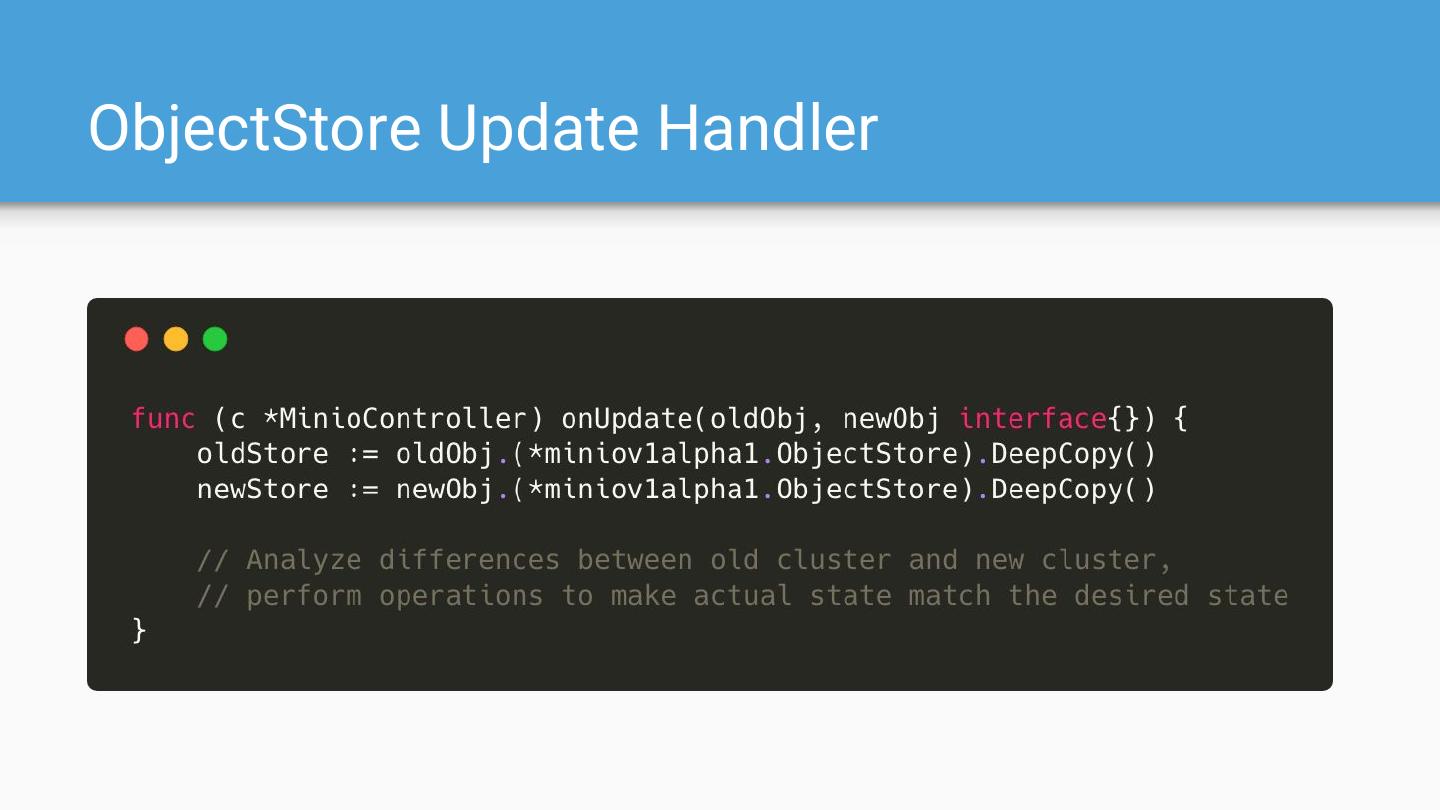
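
Below is a Go sketch of a spec that reuses common framework types and adds only the Minio-specific credentials. `StorageScope`, `Placement` and `SecretRef`, along with their fields and JSON tags, are hypothetical stand-ins, not Rook's actual definitions. Custom objects reach an operator as JSON, so the `json` struct tags define the mapping from `object-store.yaml`.

```go
package main

import (
	"encoding/json"
	"fmt"
)

// StorageScope and Placement stand in for common, provider-neutral types a
// framework can share across operators (hypothetical fields and tags).
type StorageScope struct {
	NodeCount int `json:"nodeCount"`
}

type Placement struct {
	NodeSelector map[string]string `json:"nodeSelector,omitempty"`
}

// SecretRef points at the Kubernetes Secret holding the access keys.
type SecretRef struct {
	Name      string `json:"name"`
	Namespace string `json:"namespace"`
}

// ObjectStoreSpec reuses the common types and adds the only Minio-specific
// part: the credentials.
type ObjectStoreSpec struct {
	Storage     StorageScope `json:"scope"`
	Placement   Placement    `json:"placement"`
	Credentials SecretRef    `json:"credentials"`
}

// parseSpec decodes the spec section of a custom object.
func parseSpec(raw []byte) (ObjectStoreSpec, error) {
	var spec ObjectStoreSpec
	err := json.Unmarshal(raw, &spec)
	return spec, err
}

func main() {
	raw := []byte(`{"scope":{"nodeCount":4},"credentials":{"name":"minio-creds","namespace":"rook-minio"}}`)
	spec, err := parseSpec(raw)
	if err != nil {
		panic(err)
	}
	fmt.Println(spec.Storage.NodeCount, spec.Credentials.Name)
}
```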

29 .Minio Operator Watching for Events ● We create a new watcher to watch for add, update, or delete events ● Event handler functions are passed to the Rook operator-kit
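
The watcher pattern amounts to routing add, update and delete events to user-supplied functions. This stand-alone Go sketch uses hypothetical `EventHandlers`, `Event` and `dispatch` names rather than operator-kit's API. client-go's `cache.ResourceEventHandlerFuncs` (with `AddFunc`, `UpdateFunc` and `DeleteFunc`) follows the same shape.

```go
package main

import "fmt"

// EventHandlers groups the callbacks a watcher invokes; nil ones are skipped.
type EventHandlers struct {
	OnAdd    func(obj interface{})
	OnUpdate func(oldObj, newObj interface{})
	OnDelete func(obj interface{})
}

type EventType int

const (
	Added EventType = iota
	Updated
	Deleted
)

// Event is one watch notification; Old is set for updates and deletes.
type Event struct {
	Type     EventType
	Old, New interface{}
}

// dispatch routes one watch event to the matching handler.
func dispatch(h EventHandlers, e Event) {
	switch e.Type {
	case Added:
		if h.OnAdd != nil {
			h.OnAdd(e.New)
		}
	case Updated:
		if h.OnUpdate != nil {
			h.OnUpdate(e.Old, e.New)
		}
	case Deleted:
		if h.OnDelete != nil {
			h.OnDelete(e.Old)
		}
	}
}

func main() {
	h := EventHandlers{
		OnAdd:    func(o interface{}) { fmt.Println("create Minio cluster for", o) },
		OnUpdate: func(o, n interface{}) { fmt.Println("update", o, "->", n) },
		OnDelete: func(o interface{}) { fmt.Println("tear down", o) },
	}
	for _, e := range []Event{{Type: Added, New: "my-store"}, {Type: Deleted, Old: "my-store"}} {
		dispatch(h, e)
	}
}
```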