- 快召唤伙伴们来围观吧

- 微博 QQ QQ空间 贴吧

- 文档嵌入链接

- 复制

- 微信扫一扫分享

- 已成功复制到剪贴板

Distributed Algorithms Fundamentals + Introduction

展开查看详情

1 .Indranil Gupta (Indy) Lecture 7 Distributed Algorithms Fundamentals + Introduction to Sensor Networks February 7, 2017 CS 525 Advanced Distributed Systems Spring 2017 All Slides © IG

2 .CS 525 and Distributed Systems D.S. Theory Peer to peer systems Cloud Computing Sensor Networks

3 .Distributed Algorithms Fundamentals – Outline Synchronous versus Asynchronous systems Lamport Timestamps Global Snapshots Impossibility of Consensus proof

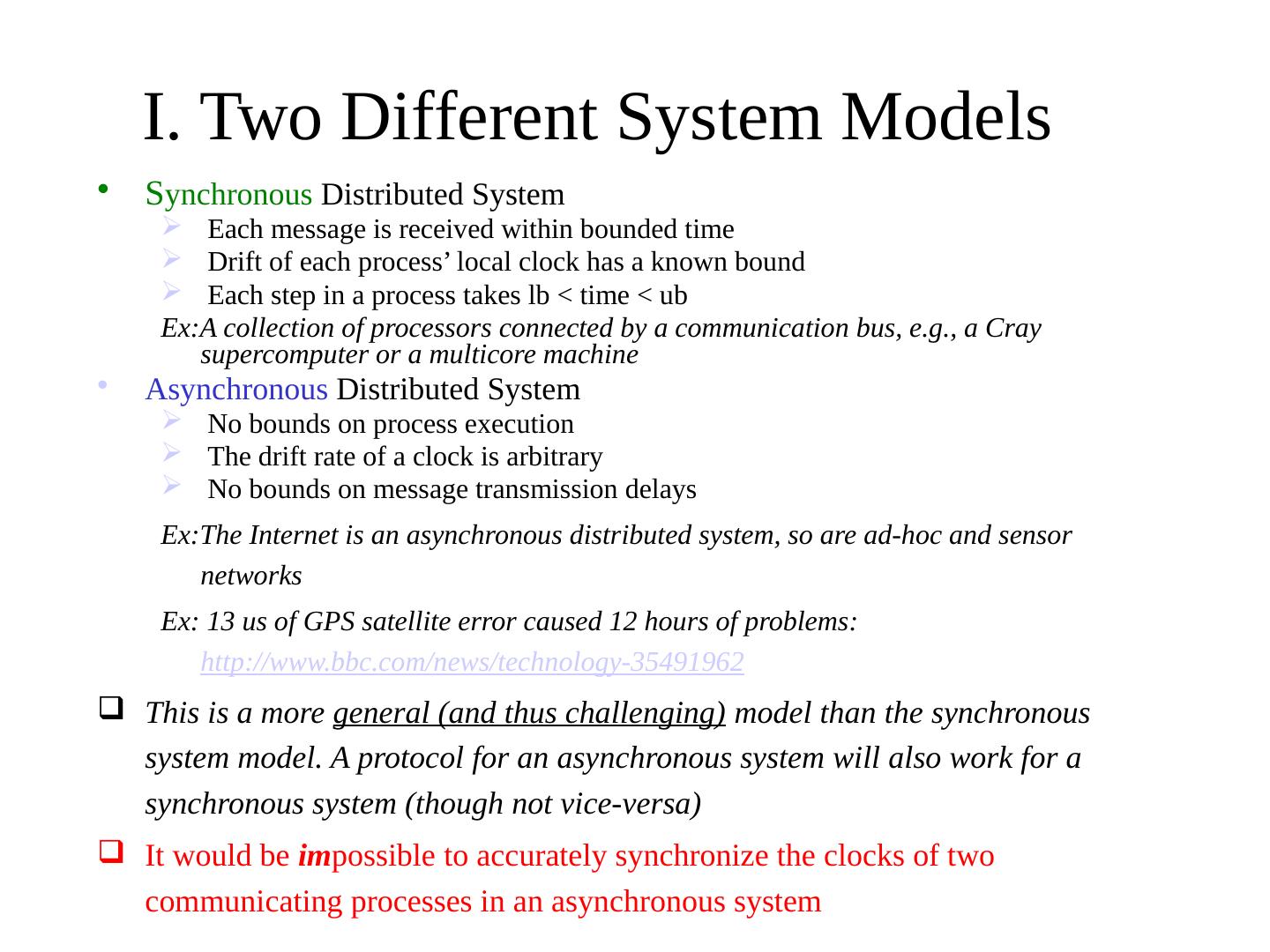

4 .I. Two Different System Models S ynchronous Distributed System Each message is received within bounded time Drift of each process’ local clock has a known bound Each step in a process takes lb < time < ub Ex:A collection of processors connected by a communication bus, e.g., a Cray supercomputer or a multicore machine Asynchronous Distributed System No bounds on process execution The drift rate of a clock is arbitrary No bounds on message transmission delays Ex:The Internet is an asynchronous distributed system, so are ad-hoc and sensor networks Ex: 13 us of GPS satellite error caused 12 hours of problems: http://www.bbc.com/news/technology-35491962 This is a more general (and thus challenging) model than the synchronous system model. A protocol for an asynchronous system will also work for a synchronous system (though not vice-versa) It would be im possible to accurately synchronize the clocks of two communicating processes in an asynchronous system

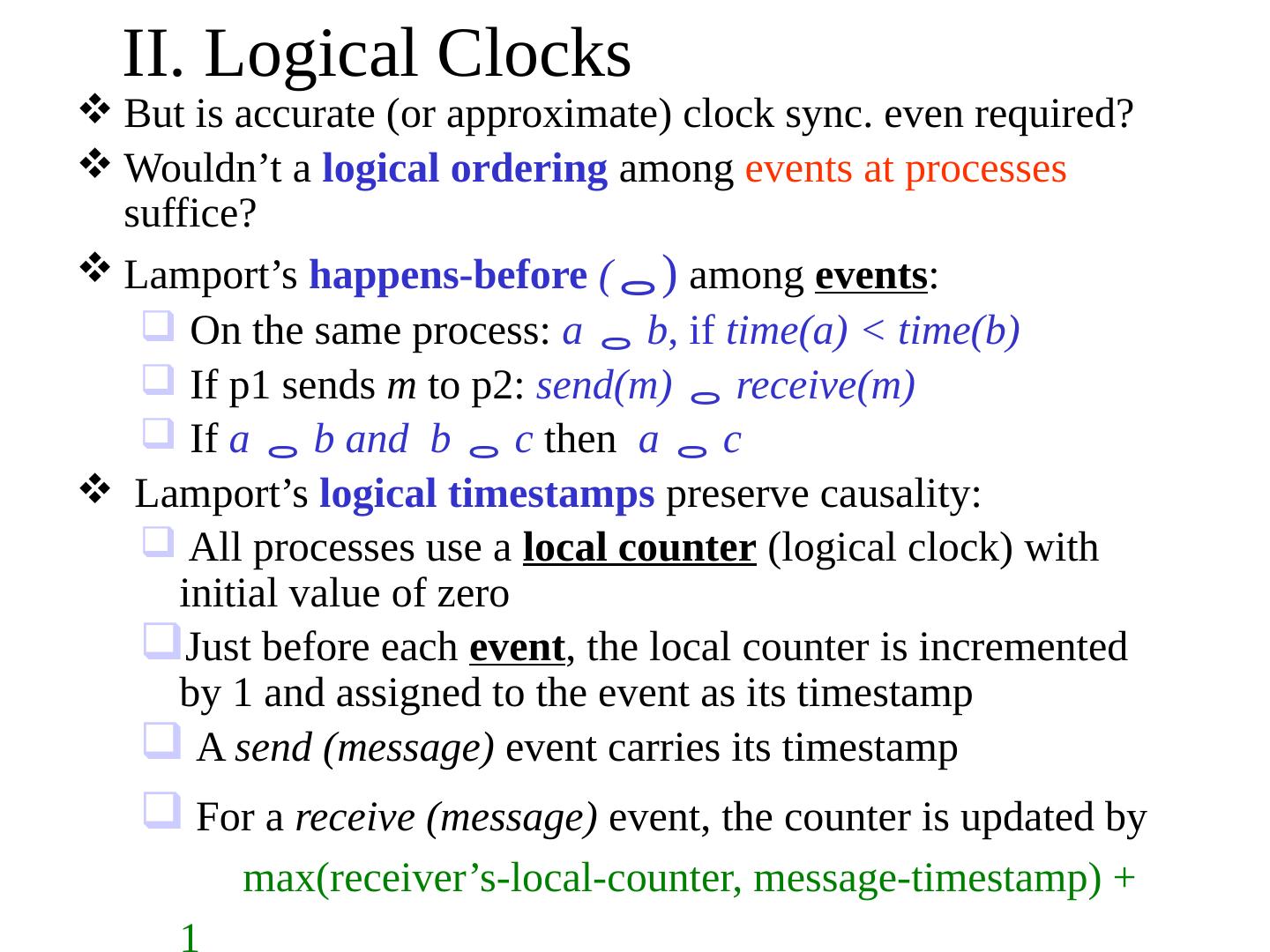

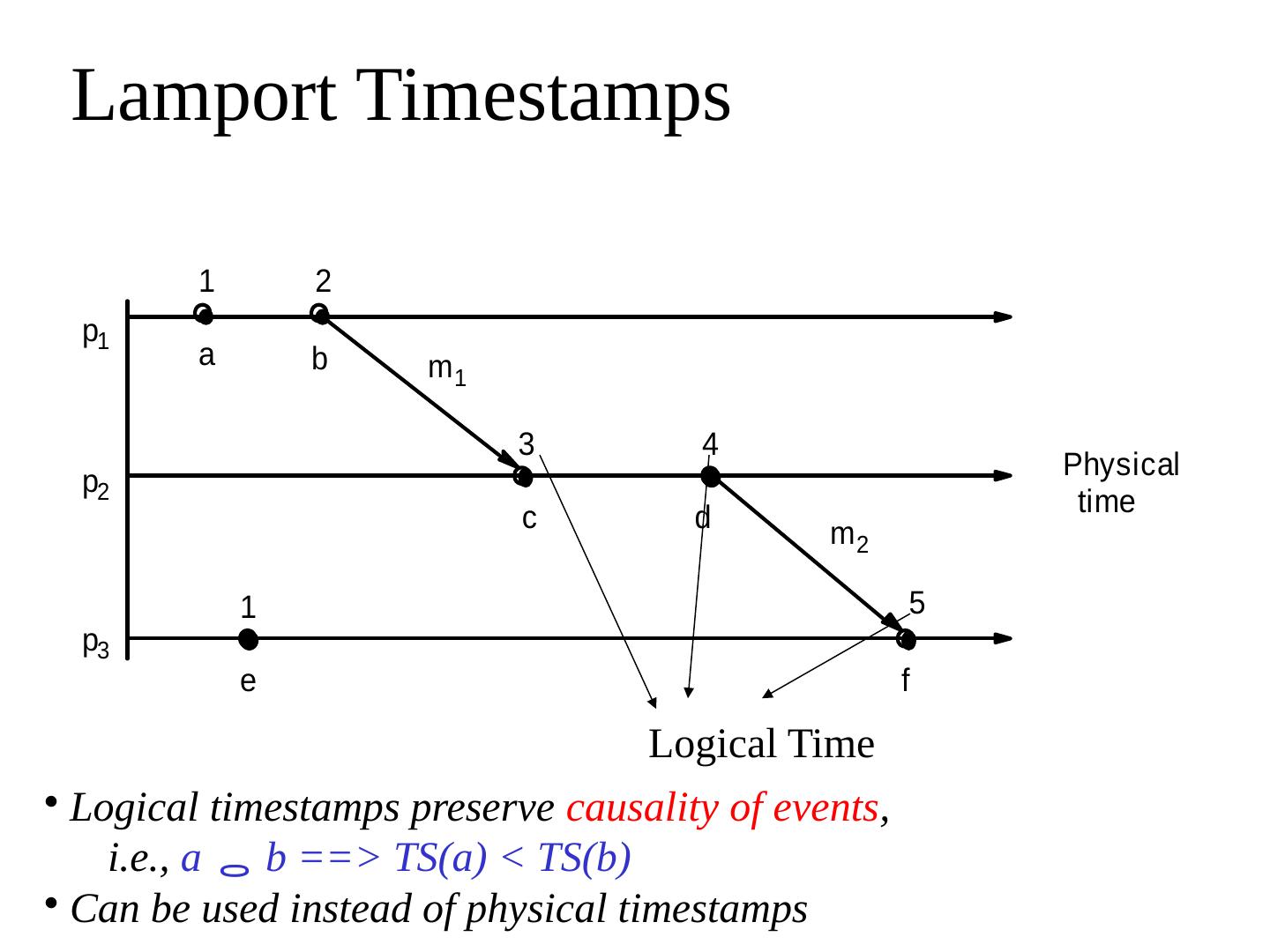

5 .II. Logical Clocks But is accurate (or approximate) clock sync. even required? Wouldn’ t a logical ordering among events at processes suffice? Lamport’ s happens-before ( ) among events : On the same process: a b , if time(a) < time(b) If p1 sends m to p2: send(m) receive(m) If a b and b c then a c Lamport’ s logical timestamps preserve causality: All processes use a local counter (logical clock) with initial value of zero Just before each event , the local counter is incremented by 1 and assigned to the event as its timestamp A send (message) event carries its timestamp For a receive (message) event, the counter is updated by max(receiver’ s-local-counter, message-timestamp) + 1

6 .Example

7 .Lamport Timestamps Logical Time Logical timestamps preserve causality of events , i.e., a b ==> TS(a) < TS(b) Can be used instead of physical timestamps

8 .Lamport Timestamps Logical Time Logical timestamps preserve causality of events , i.e., a b ==> TS(a) < TS(b) Other way implication may not be true! (may be concurrent) logically concurrent events

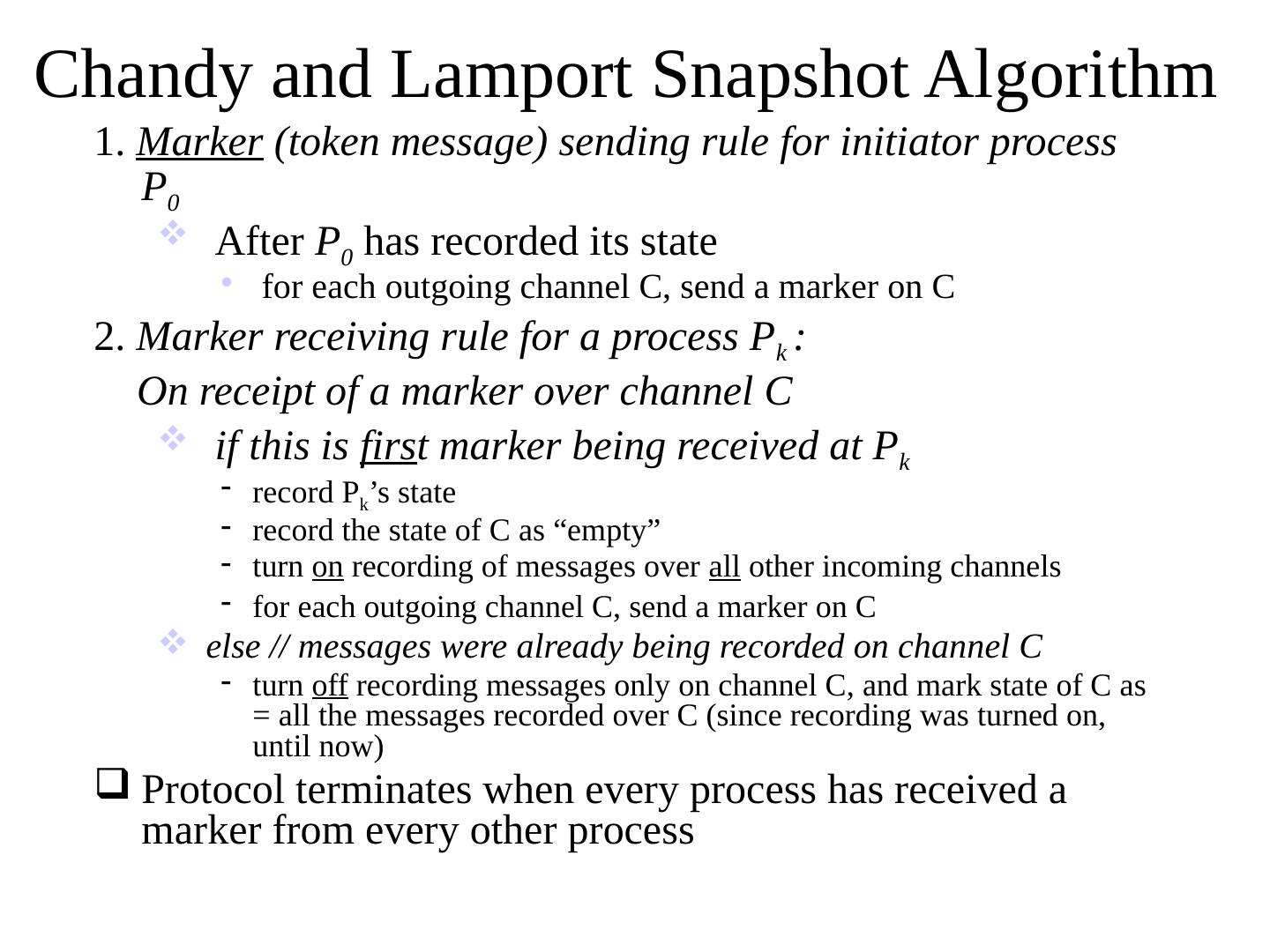

9 .III. Global Snapshot Algorithm Can you capture (record) the states of all processes and communication channels at exactly 10:04:50 am? Is it even necessary to take such an exact snapshot? Chandy and Lamport snapshot algorithm: records a logical (or causal) snapshot of the system. System Model: No failures, all messages arrive intact, exactly once, eventually There is a communication path between every process pair Communication channels are unidirectional and FIFO-ordered

10 .Chandy and Lamport Snapshot Algorithm 1. Marker (token message) sending rule for initiator process P 0 After P 0 has recorded its state for each outgoing channel C, send a marker on C 2. Marker receiving rule for a process P k : On receipt of a marker over channel C if this is firs t marker being received at P k record P k ’ s state record the state of C as “ empty ” turn on recording of messages over all other incoming channels for each outgoing channel C, send a marker on C else // messages were already being recorded on channel C turn off recording messages only on channel C, and mark state of C as = all the messages recorded over C (since recording was turned on, until now) Protocol terminates when every process has received a marker from every other process

11 .Snapshot Example P1 P2 P3 e 1 0 e 2 0 e 2 3 e 3 0 e 1 3 a b M e 1 1,2 M 1- P1 initiates snapshot: records its state (S1); sends Markers to P2 & P3; turns on recording for channels C21 and C31 e 2 1,2,3 M M 2- P2 receives Marker over C12, records its state (S2), sets state(C12) = {} sends Marker to P1 & P3; turns on recording for channel C32 e 1 4 3- P1 receives Marker over C21, sets state(C21) = {a} e 3 2,3,4 M M 4- P3 receives Marker over C13, records its state (S3), sets state(C13) = {} sends Marker to P1 & P2; turns on recording for channel C23 e 2 4 5- P2 receives Marker over C32, sets state(C32) = {b} e 3 1 6- P3 receives Marker over C23, sets state(C23) = {} e 1 3 7- P1 receives Marker over C31, sets state(C31) = {} Consistent Cut Consistent Cut =time-cut across processors and channels so no event to the right of the cut “ happens-before ” an event that is left of the cut

12 .IV. Give it a thought Have you ever wondered why distributed server vendors always only offer solutions that promise five-9’ s reliability, seven-9 ’ s reliability, but never 100% reliable? The fault does not lie with Microsoft Corp. or Apple Inc. or Cisco The fault lies in the impossibility of consensus

13 .What is Consensus? N processes Each process p has input variable xp : initially either 0 or 1 output variable yp : initially b (can be changed only once) Consensus problem : design a protocol so that at the end, either: all processes set their output variables to 0 Or all processes set their output variables to 1 Also, there is at least one initial state that leads to each outcome above (non-triviality) There might be other constraints (Validity=if everyone proposes same value that’s what’ s decided. Integrity = decided value must have been proposed by some process)

14 .Why is Consensus Important Many problems in distributed systems are equivalent to (or harder than) consensus! Agreement (harder than consensus, since it can be used to solve consensus) Leader election (select exactly one leader, and every alive process knows about it) Perfect Failure Detection Consensus using leader election Choose 0 or 1 based on the last bit of the identity of the elected leader. So consensus is a very important problem, and solving it would be really useful!

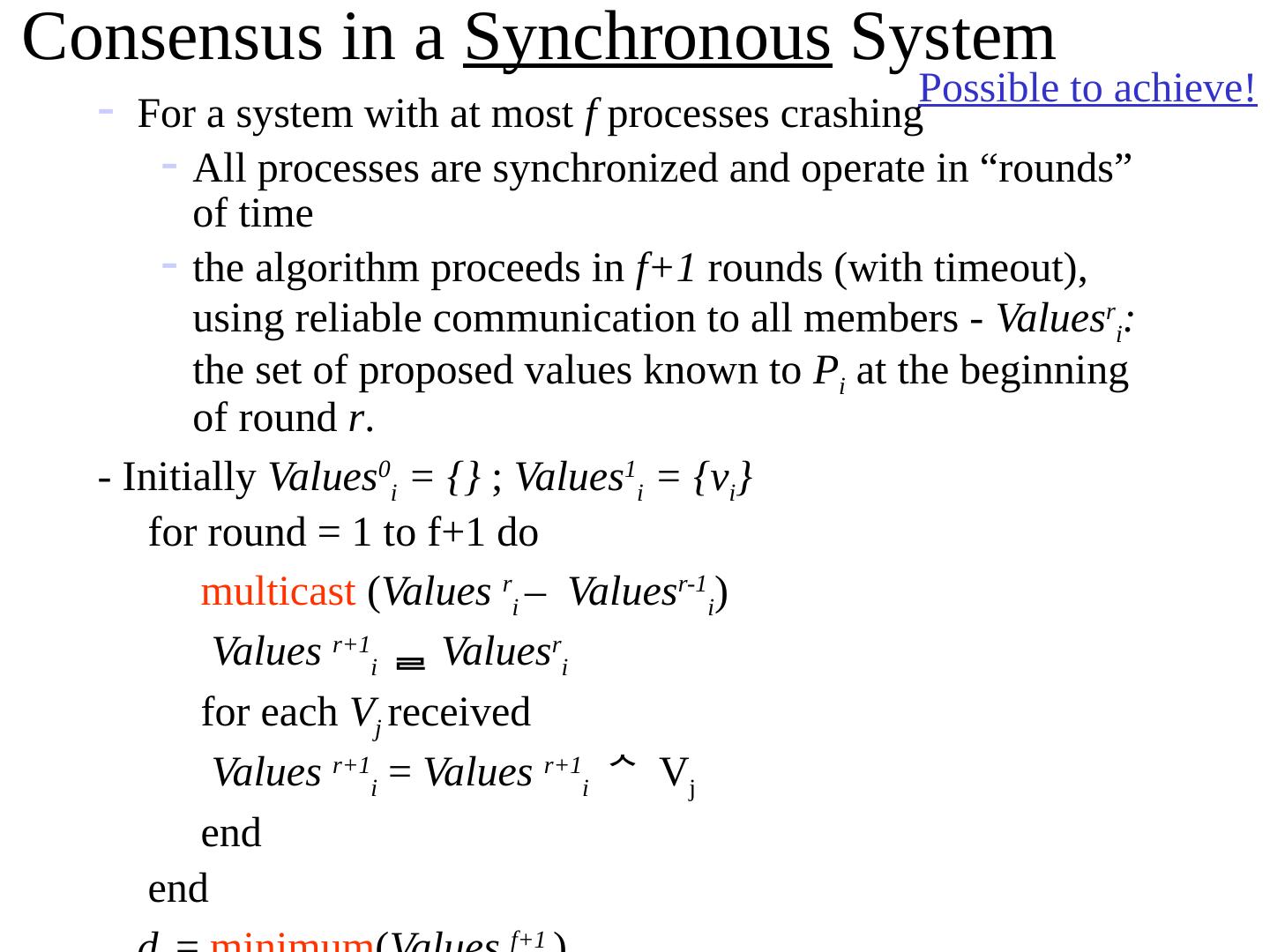

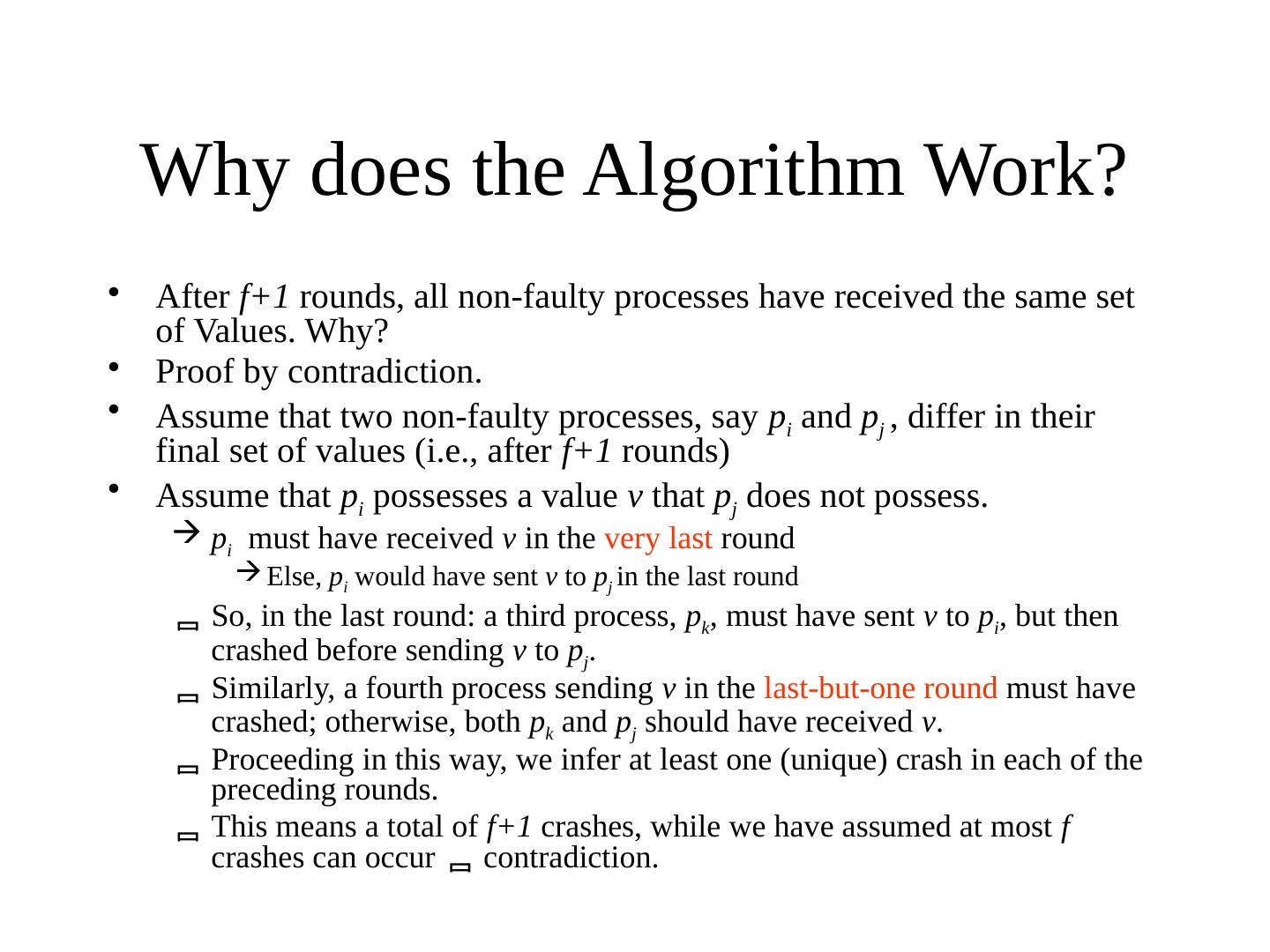

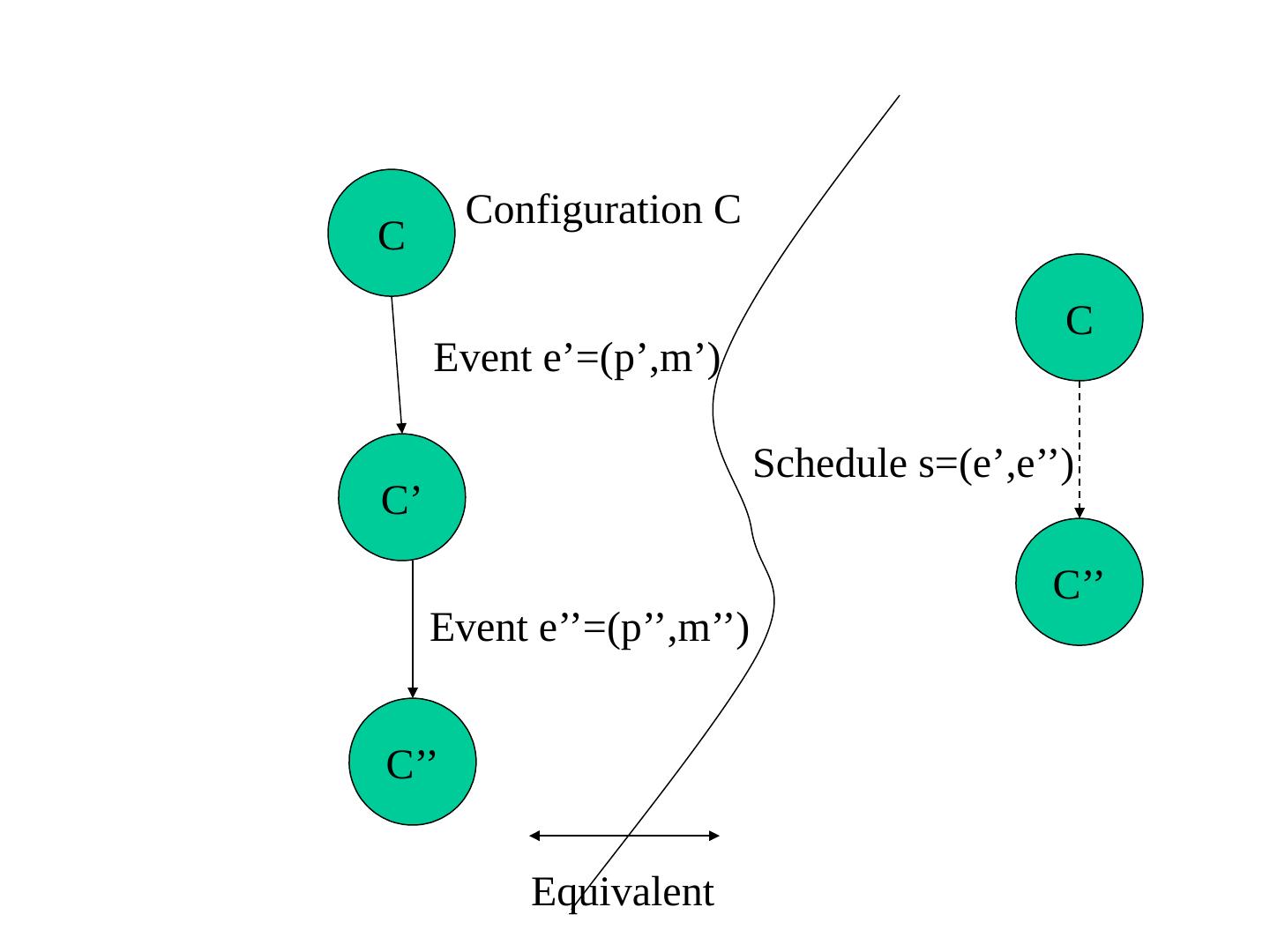

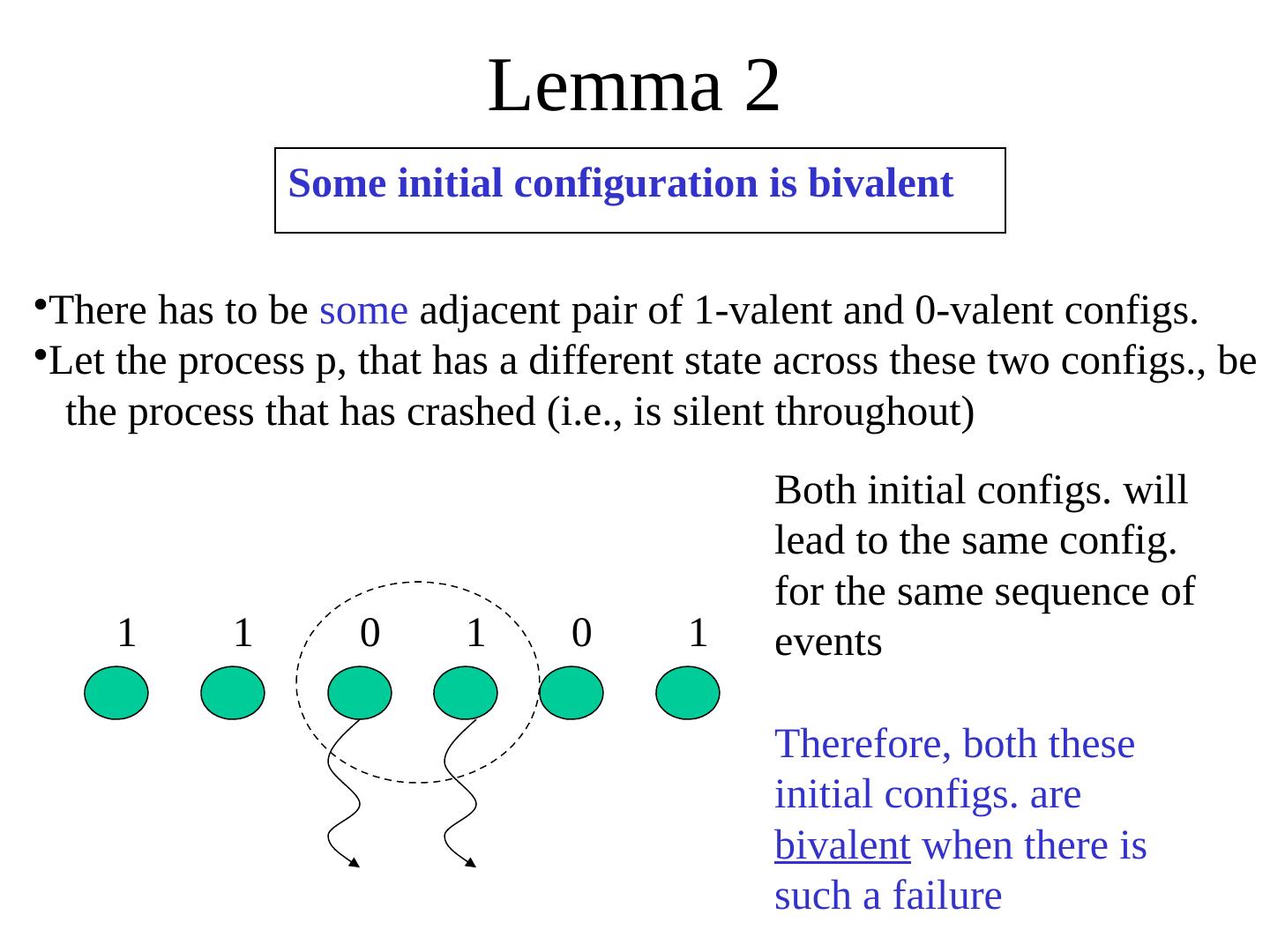

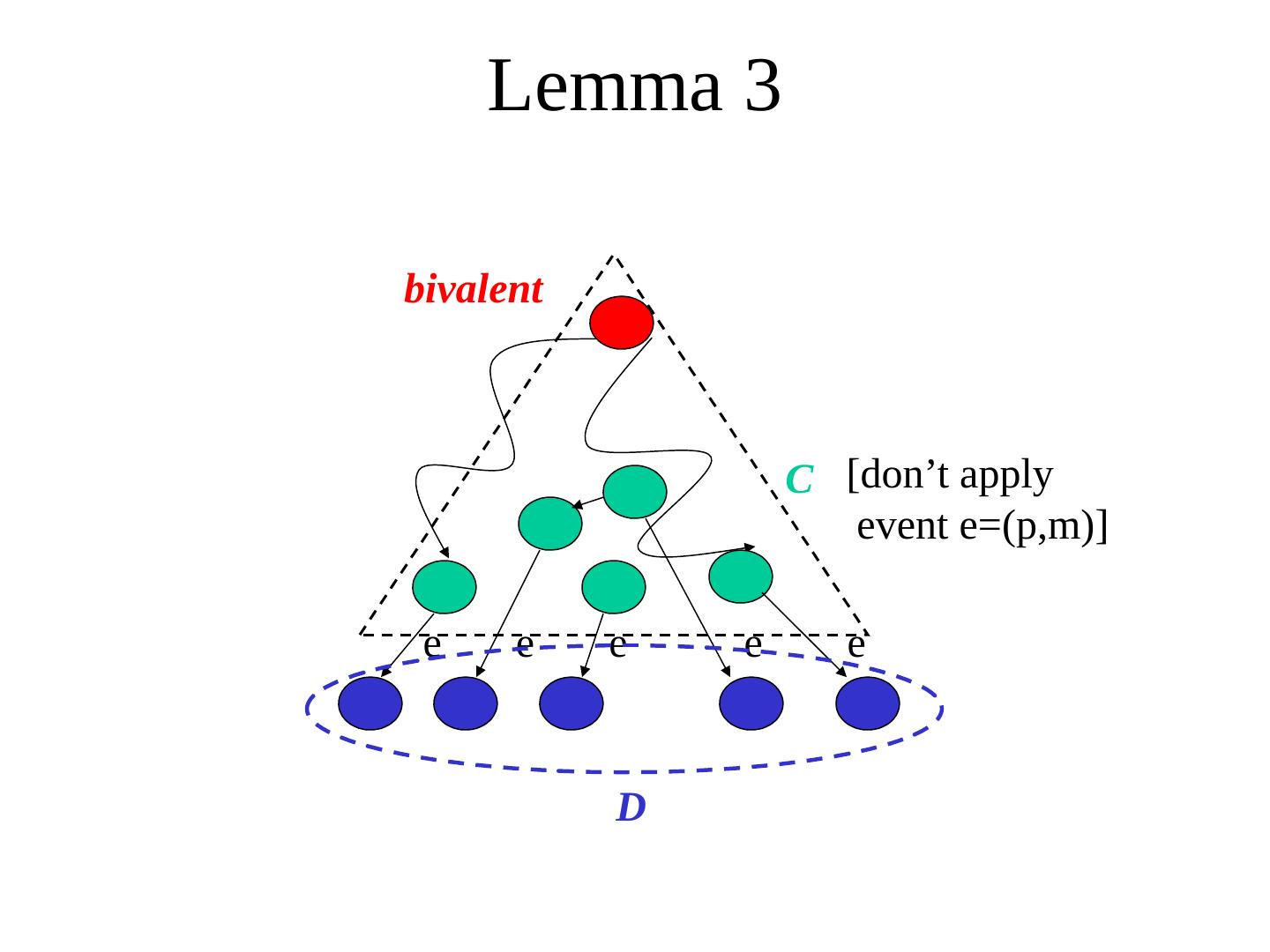

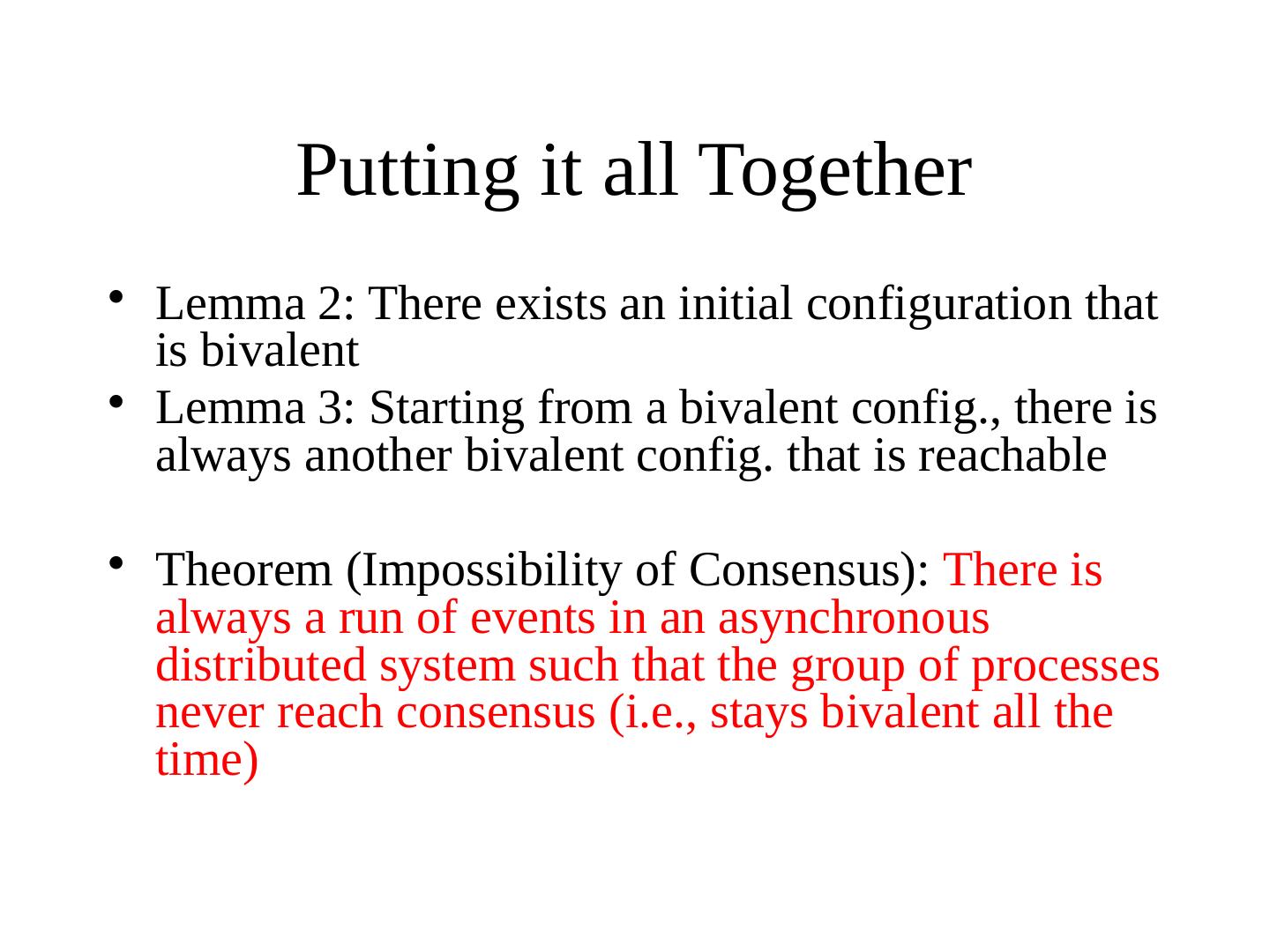

15 .Possible or not In the synchronous system model Consensus is solvable Use a multicast protocol in each round to disseminate all known values, for (N+1) rounds. At the end, everyone has the same value set. In the asynchronous system model Consensus is impossible to solve This means that no matter what protocol/algorithm you suggest, there is always a worst-case possible (with failures and message delays) such that the system is prevented from reaching consensus Powerful result (see the FLP proof in Backup slides of this slide set) Subsequently, safe or probabilistic solutions have become quite popular to consensus or related problems. Paxos is a safe solution (next lecture/session). FLP proof in appendix of slides (peruse in your own time)

16 .Intro to Sensor Networks

17 .A Gram of Gold=How Many Processors? Smallest state-of-the-art transistor today is made of a single Gold atom Still in research, not yet in industry. Pentium P4 contains 42 M transistors Gold atomic weight is 196 ~ 200. 1 g of Au contains 3 X 10^21 atoms => 7.5 X 10^18 P4 processors from a gram of Au => 1 billion P4’ s per person CPU speedup ~ √(# transistors on die)

18 .Sensor Networks Hype, But do we really need this technology? Coal mines have always had CO/CO2 sensors Industry has used sensors for a long time Today… Excessive Information Environmentalists collecting data on an island Army needs to know about enemy troop deployments Humans in society face information overload Sensor Networking technology can help filter and process this information (And then perhaps respond automatically?)

19 .Growth of a technology requires Hardware Operating Systems and Protocols Killer applications Military and Civilian

20 .Sensor Nodes Motivating factors for emergence: applications, Moore’ s Law (or variants), wireless comm., MEMS (micro electro mechanical sensors) Canonical Sensor Node contains Sensor(s) to convert a different energy form to an electrical impulse e.g., to measure temperature Microprocessor Communications link e.g., wireless Power source e.g., battery

21 .Laser diode III-V process Passive CCR comm. MEMS/polysilicon Sensor MEMS/bulk, surface, ... Analog I/O, DSP, Control COTS CMOS Solar cell CMOS or III-V Thick film battery Sol/gel V 2 O 5 Power capacitor Multi-layer ceramic 1-2 mm Example: Berkeley “ Motes ” or “ Smart Dust ” Can you identify the 4 components here?

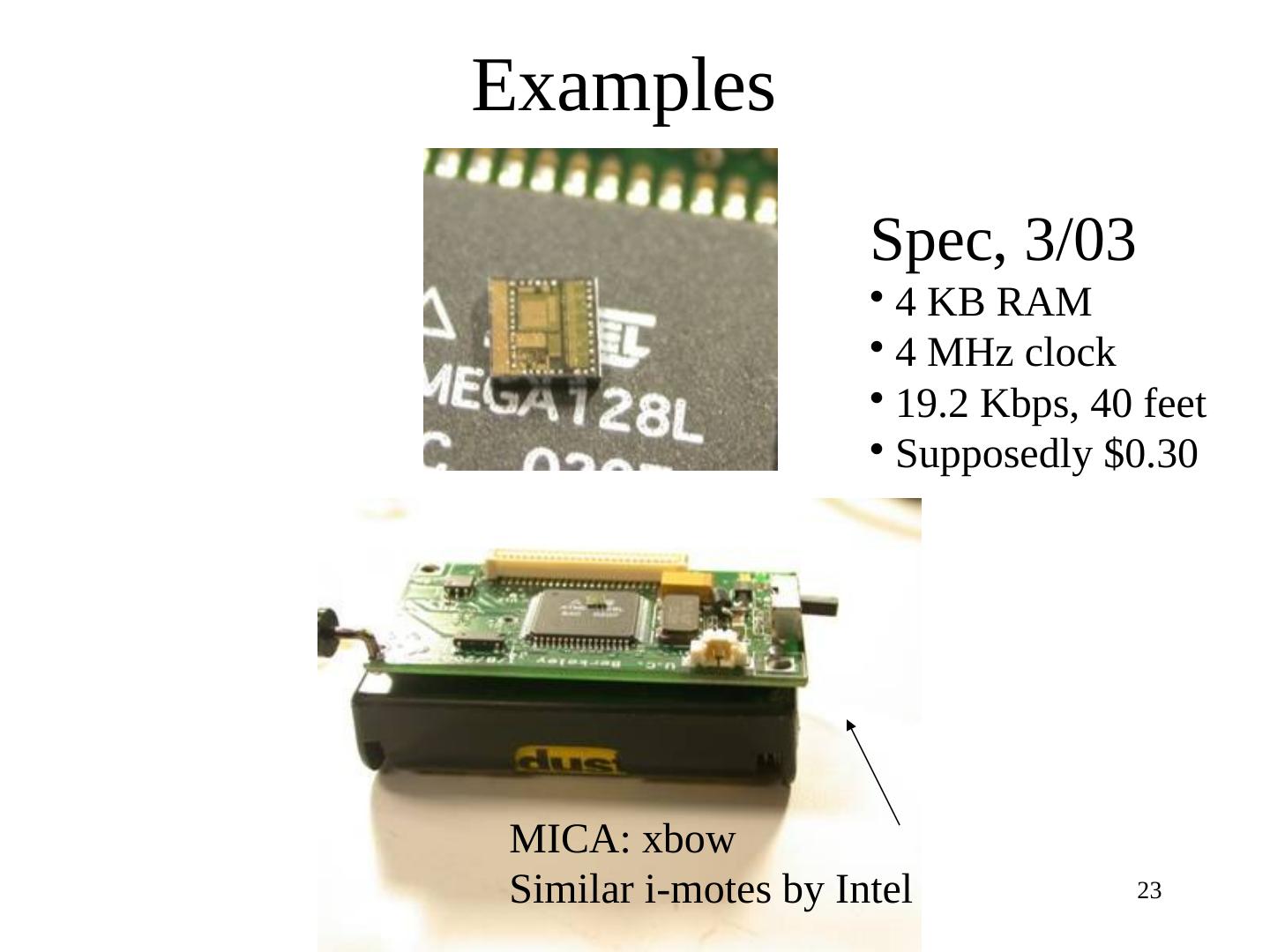

22 .Example Hardware Size Golem Dust: 11.7 cu. mm MICA motes: Few inches Everything on one chip: micro-everything processor, transceiver, battery, sensors, memory, bus MICA: 4 MHz, 40 Kbps, 4 KB SRAM / 512 KB Serial Flash, lasts 7 days at full blast on 2 x AA batteries

23 .Examples Spec, 3/03 4 KB RAM 4 MHz clock 19.2 Kbps, 40 feet Supposedly $0.30 MICA: xbow Similar i-motes by Intel

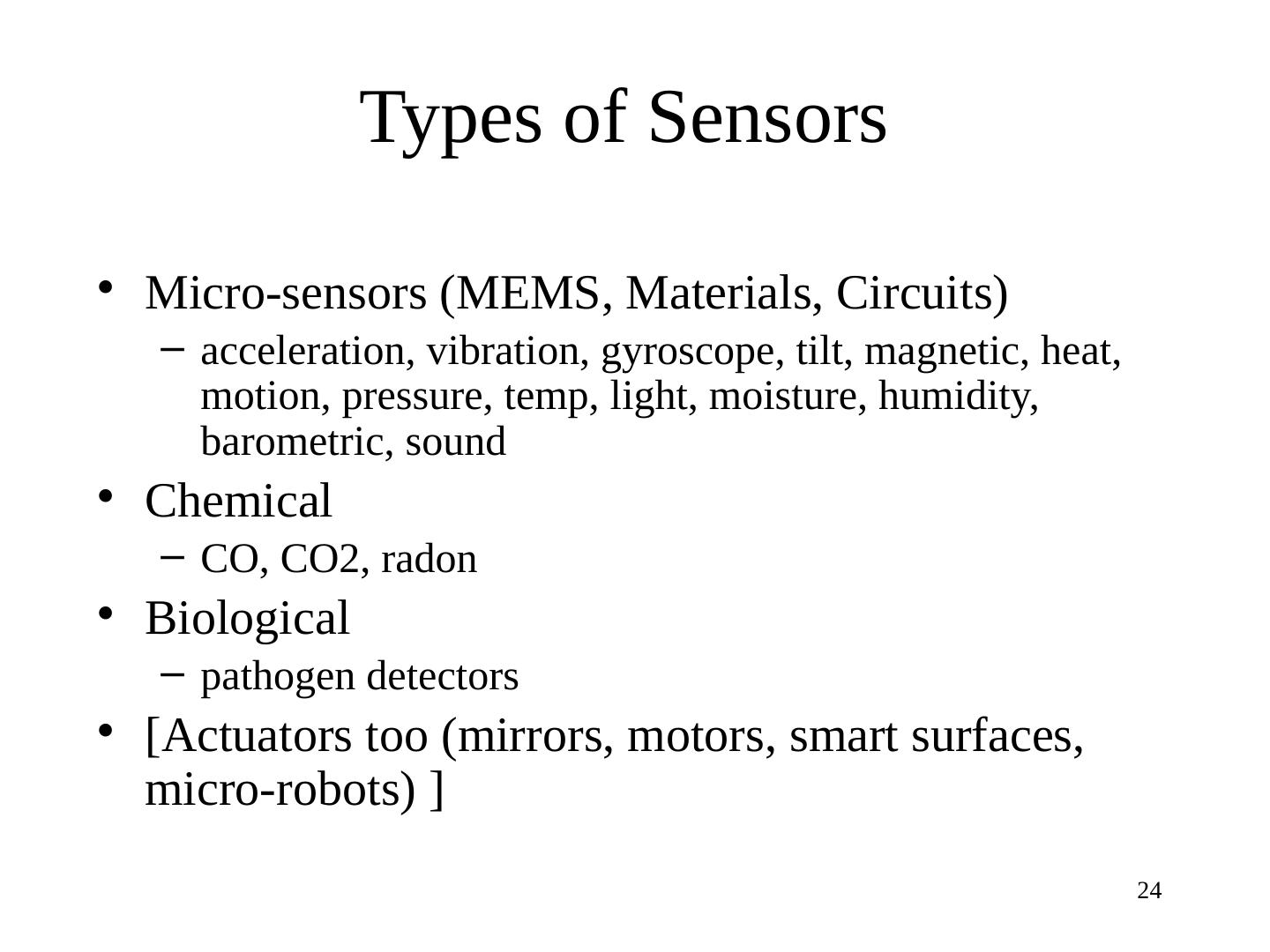

24 .Types of Sensors Micro-sensors (MEMS, Materials, Circuits) acceleration, vibration, gyroscope, tilt, magnetic, heat, motion, pressure, temp, light, moisture, humidity, barometric, sound Chemical CO, CO2, radon Biological pathogen detectors [Actuators too (mirrors, motors, smart surfaces, micro-robots) ]

25 .I2C bus – simple technology Inter-IC connect e.g., connect sensor to microprocessor Simple features Has only 2 wires Bi-directional serial data (SDA) and serial clock (SCL) bus Up to 3.4 Mbps Developed By Philips

26 .Transmission Medium Spec, MICA : Radio Frequency (RF) Broadcast medium, routing is “ store and forward ” , links are bidirectional Smart Dust : smaller size but RF needs high frequency => higher power consumption Optical transmission : simpler hardware, lower power Directional antennas only, broadcast costly Line of sight required Switching links costly : mechanical antenna movements Passive transmission (reflectors) => wormhole routing Unidirectional links

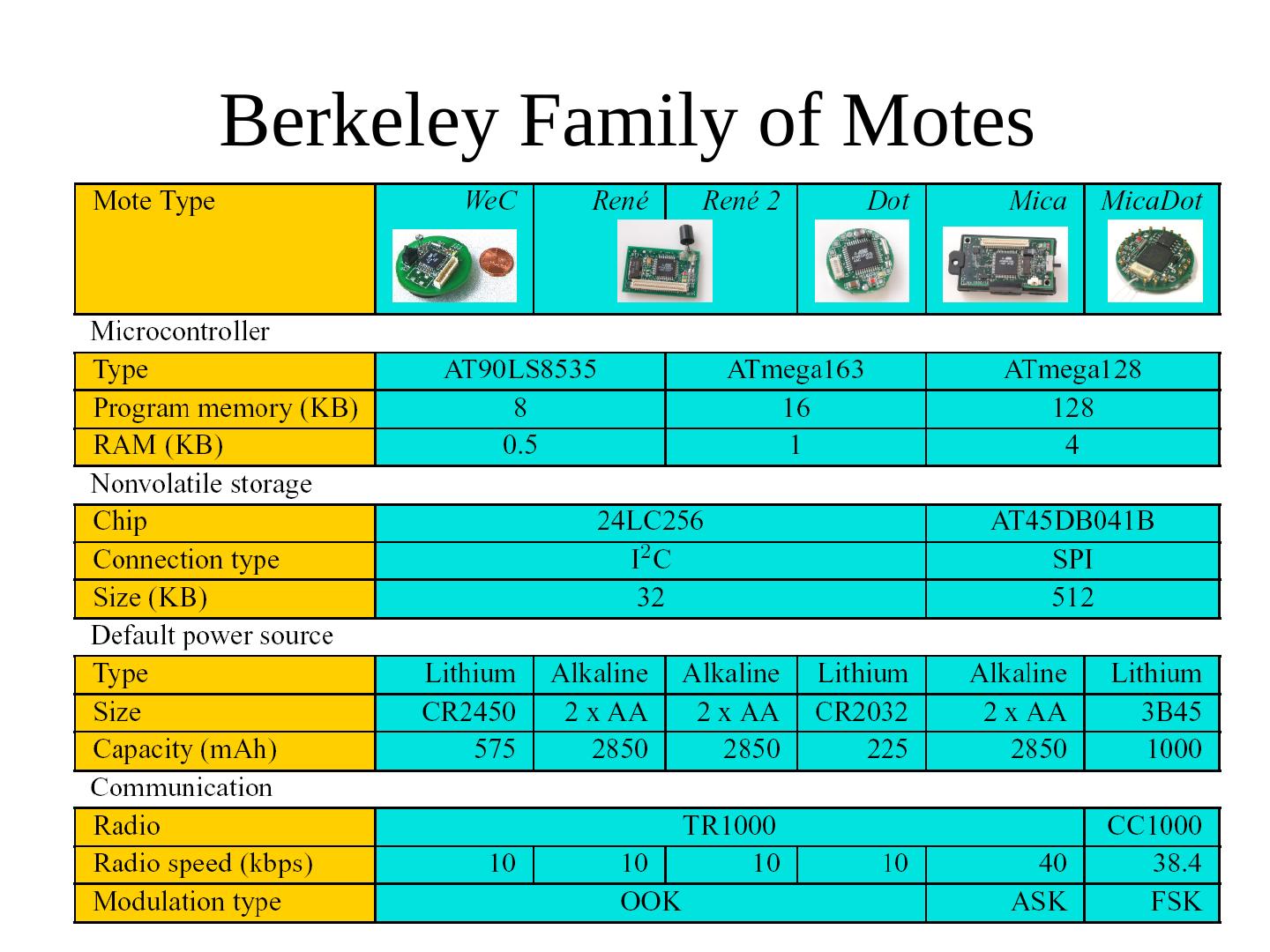

27 .Berkeley Family of Motes

28 .Summary: Sensor Node Small Size : few mm to a few inches Limited processing and communication MhZ clock, MB flash, KB RAM, 100’ s Kbps (wireless) bandwidth Limited power (MICA: 7-10 days at full blast) Failure prone nodes and links (due to deployment, fab, wireless medium, etc.) But easy to manufacture and deploy in large numbers Need to offset this with scalable and fault-tolerant OS’ s and protocols

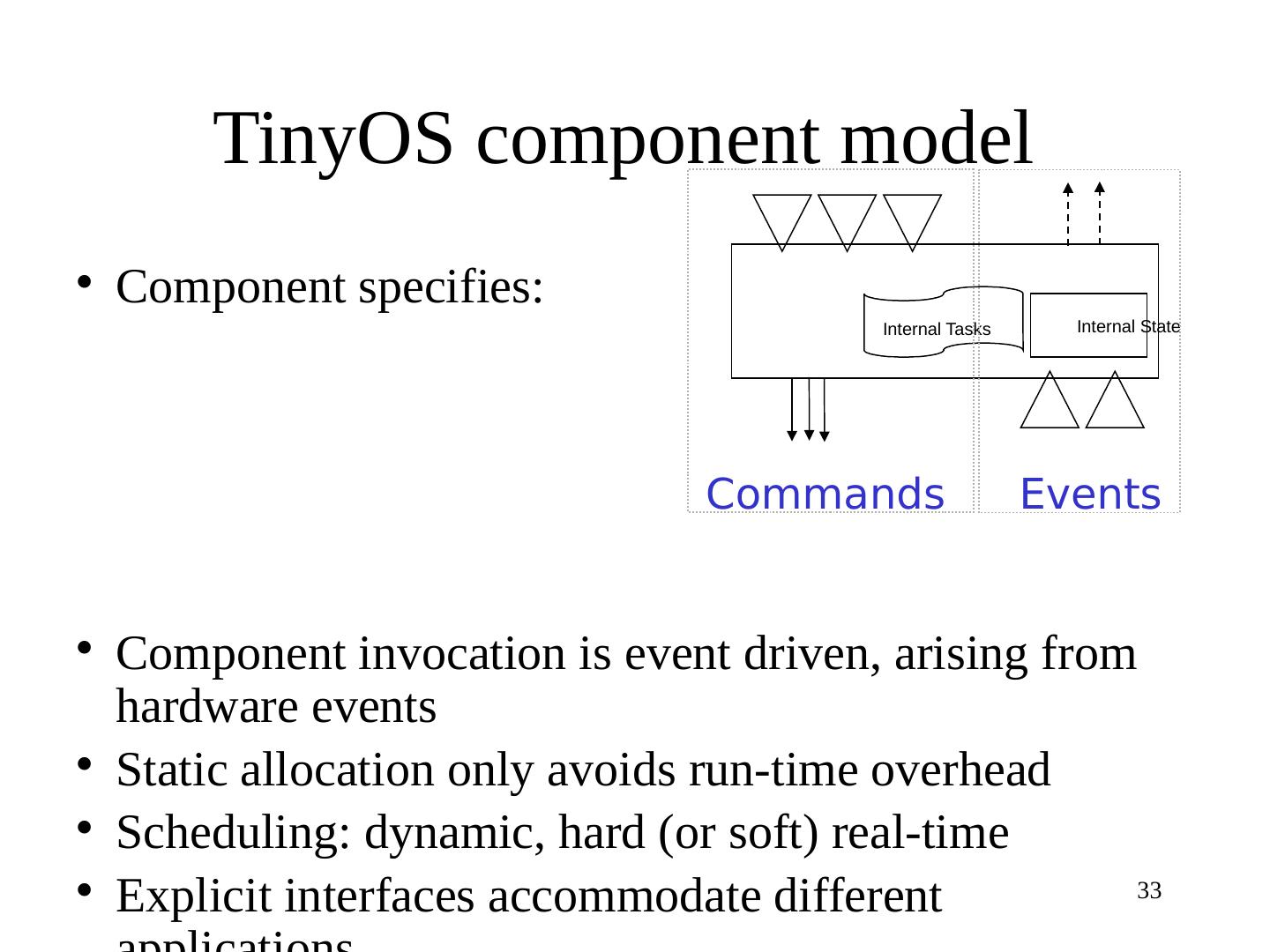

29 .Sensor-node Operating System Issues Size of code and run-time memory footprint Embedded System OS’ s inapplicable: need hundreds of KB ROM Workload characteristics Continuous ? Bursty ? Application diversity Want to reuse sensor nodes Tasks and processes Scheduling Hard and soft real-time Power consumption Communication