- 快召唤伙伴们来围观吧

- 微博 QQ QQ空间 贴吧

- 文档嵌入链接

- 复制

- 微信扫一扫分享

- 已成功复制到剪贴板

09 Transaction Locking and Concurrency #2

展开查看详情

1 . Replicated Data EECS 262a • Replicate data at many nodes – Performance: local reads Advanced Topics in Computer Systems – Fault-tolerance: no data loss unless all replicas fail or become Lecture 9 unreachable – Availability: data still available unless all replicas fail or become unreachable CRDTs and Coordination Avoidance – Scalability: load balance across nodes for reads September 20th, 2018 • Updates – Push to all replicas – Consistency: expensive! John Kubiatowicz Based on slides by Ali Ghodsi and Ion Stoica http://www.eecs.berkeley.edu/~kubitron/cs262 9/20/2018 cs262a-F18 Lecture-09 2 Conflicts Strong Consistency • Updating replicas may lead to different results • All replicas execute updates in same total order inconsistent data – Deterministic updates: same update on same objects same result s1 5 3 7 s1 5 3 3 7 s2 5 3 7 s2 5 3 7 s3 5 7 3 s3 5 7 3 7 coordinate 9/20/2018 cs262a-F18 Lecture-09 3 9/20/2018 cs262a-F18 Lecture-09 4

2 . Strong Consistency CAP theorem • All replicas execute updates in same total order • Can only have two of the three – Deterministic updates: same update on same objects properties in a distributed system same result • Consistency. Always return a consistent • Requires coordination and consensus to decide results (linearizable). As if there was only on total order of operations a single copy of the data. – N-way agreement, basically serialize updates very • Availability. Always return an answer to expensive! requests (faster than really long lived partitions). • Partition-tolerance. Continue operating correctly even if the network partitions. 9/20/2018 cs262a-F18 Lecture-09 5 9/20/2018 cs262a-F18 Lecture-09 6 CAP theorem v2 Eventual Consistency to the rescue • When the networked is partitioned, • If no new updates are made to an object all replicas will eventually you must chose one of these converge to the same value • Consistency. Always return a consistent • Update local and propagate results (linearizable). As if there was only – No consensus in the background scale well for both reads and a single copy of the data. writes – Expose intermediate state • Availability. Always return an answer to – Assume, eventual, reliable delivery requests (faster than really long lived partitions). • On conflict, applications – Arbitrate & Rollback • How can we get around CAP? 9/20/2018 cs262a-F18 Lecture-09 7 9/20/2018 cs262a-F18 Lecture-09 8

3 . Eventual Consistency • If no new updates are made to an object all replicas will eventually converge to the same value • However – High complexity – Unclear semantics if application reads data and then we have a rollback! 9/20/2018 cs262a-F18 Lecture-09 9 9/20/2018 cs262a-F18 Lecture-09 10 • Must be available when partitions happen • Must be available when partitions happen • “For example, customers should be able to view and add items to their shopping cart even if disks • “Many traditional […]. In such systems, writes may be rejected if the data store cannot reach all are failing, network routes are flapping, or data (or a majority of) the replicas at a given time. On centers are being destroyed by tornados. the other hand, Dynamo targets the design space Therefore, the service responsible for managing of an “always writeable” data store (i.e., a data shopping carts requires that it can always write to store that is highly available for writes). […] For and read from its data store, and that its data instance, the shopping cart service must allow needs to be available across multiple data customers to add and remove items from their centers.” shopping cart even amidst network and server failures. This requirement forces us to push the • Handles 3 million checkouts a day (2009). complexity of conflict resolution to the reads in Availability! order to ensure that writes are never rejected” 9/20/2018 cs262a-F18 Lecture-09 11 9/20/2018 cs262a-F18 Lecture-09 12

4 . Today’s Papers • CRDTs: Consistency without concurrency control • Must be available when partitions happen Marc Shapiro, Nuno Preguica, Carlos Baquero, Marek Zawirski Research Report, RR-6956, INRIA, 2009 • “There is a category of applications in Amazon’s platform that can tolerate such inconsistencies and can be constructed to operate under these conditions. For • Coordination Avoidance in Database Systems example, the shopping cart application requires that an Peter Bailis, Alan Fekete, Michael J. Franklin, Ali Ghodsi, Joseph M. “Add to Cart” operation can never be forgotten or Hellerstein, Ion Stoica, rejected. If the most recent state of the cart is Proceedings of VLDB’14 unavailable, and a user makes changes to an older version of the cart, that change is still meaningful and should be preserved. Note that both “add to cart” • Thoughts? and “delete item from cart” operations are translated into put requests to Dynamo. When a customer wants to add an item to (or remove from) a shopping cart and the latest version is not available, the item is added to (or removed from) the older version and the divergent versions are reconciled later. .” 9/20/2018 cs262a-F18 Lecture-09 13 9/20/2018 cs262a-F18 Lecture-09 14 Main idea of CRDTs Strong Eventual Consistency • What does CRDT stand for? • Strong Eventual Consistency (SEC): – Commutative Replicated Data Type? – Eventual Consistency with the guarantee that correct replicas that – Conflict-free Replicated Data Type? have received the same updates (maybe in different order) have an – Both…. equivalent correct state! • In a CRDT data structure: – If concurrent updates to replicas commute and all replicas execute • Like eventual consistency but with deterministic outcomes updates in causal order, then replicas converge of concurrent updates – Leverages simple mathematical properties that ensure absence of conflict such as monotonicity in a semi-lattice and/or commutativity – No need for background consensus • How does CRDTs get around consistency problems of eventual – No need to rollback consistency? – Available, fault-tolerant, scalable – Create many specialized APIs with custom semantics • Shopping cart might need a SET instead of PUT/GET • A search engine might need a distributed DAG • CS Research Trick: assume more semantics. More limited applicability, but can do things that were impossible before! 9/20/2018 cs262a-F18 Lecture-09 15 9/20/2018 cs262a-F18 Lecture-09 16

5 . Treedoc: A CRDT for Wikipedia Treedoc: A CRDT for Wikipedia • A Data structure for storing articles – Many replicas of documents – Document fragments (words/paragraphs) stored in tree » Fragments assembled in depth-first, left-to-right order • Each node in tree has unique ID based on path to node – Elements inserted by adding to tree at proper place (may unbalance tree) – Deletes labeled in tree but not immediately removed – Uniqueness (between replicas) enforced by adding tie-breaker based on replica nameh • Epidemic replication now works – just send all updates (tuple [ID, Text] or [ID, Delete]) to everyone • Periodic, all-replica compression by flattening and rebuilding tree – Requires consensus, but only rarely – Needs to be aborted if ongoing updates at any replica 9/20/2018 cs262a-F18 Lecture-09 17 9/20/2018 cs262a-F18 Lecture-09 18 Treedoc size over time (GWB page) Was This a good paper?? • George W Bush Wikipedia page gets lots of • What were the authors’ goals? editing (read – “hacking”) and becomes good test case: • What about the evaluation / metrics? • Did they convince you that this was a good system /approach? • Were there any red-flags? • What mistakes did they make? • Does the system/approach meet the “Test of Time” challenge? • How would you review this paper today? 9/20/2018 cs262a-F18 Lecture-09 19 9/20/2018 cs262a-F18 Lecture-09 20

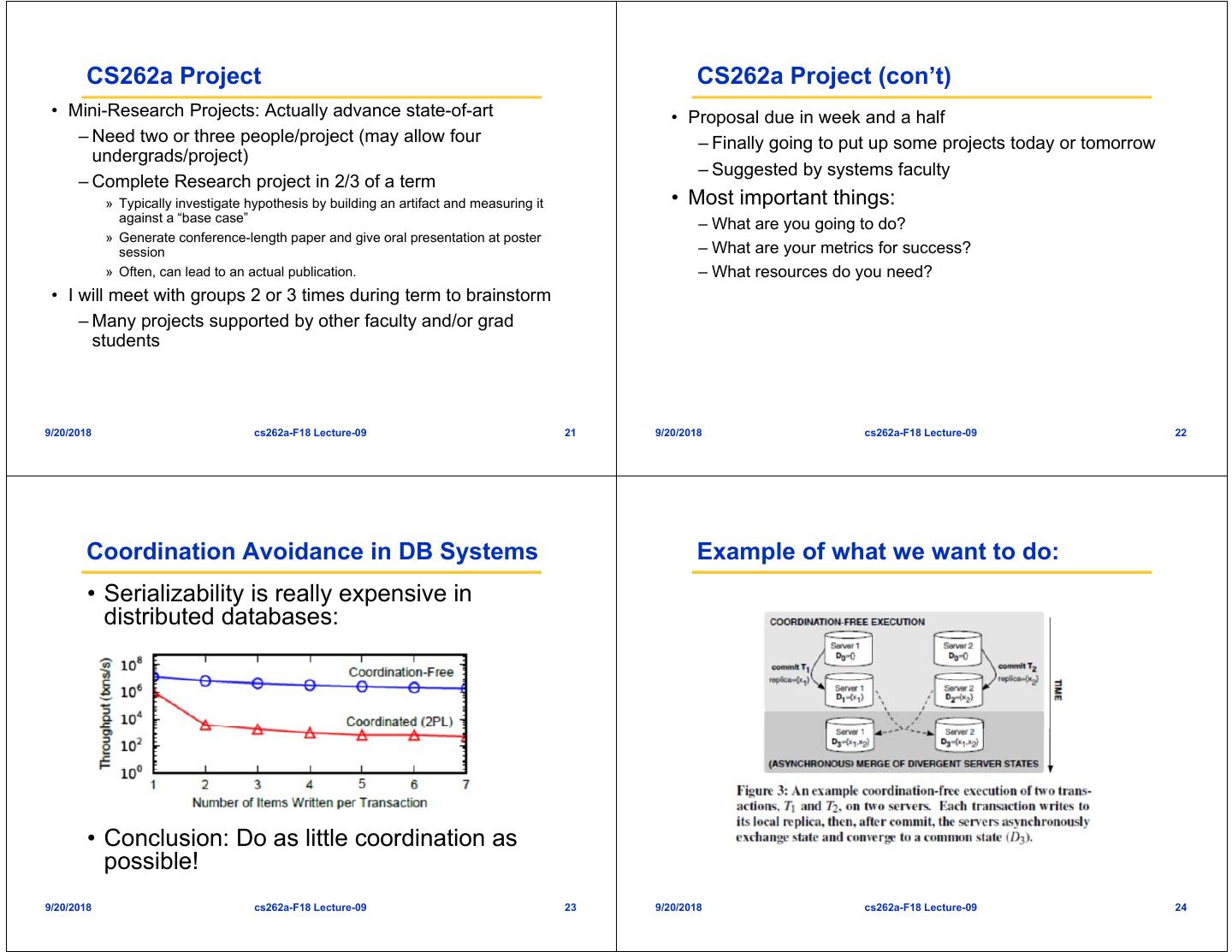

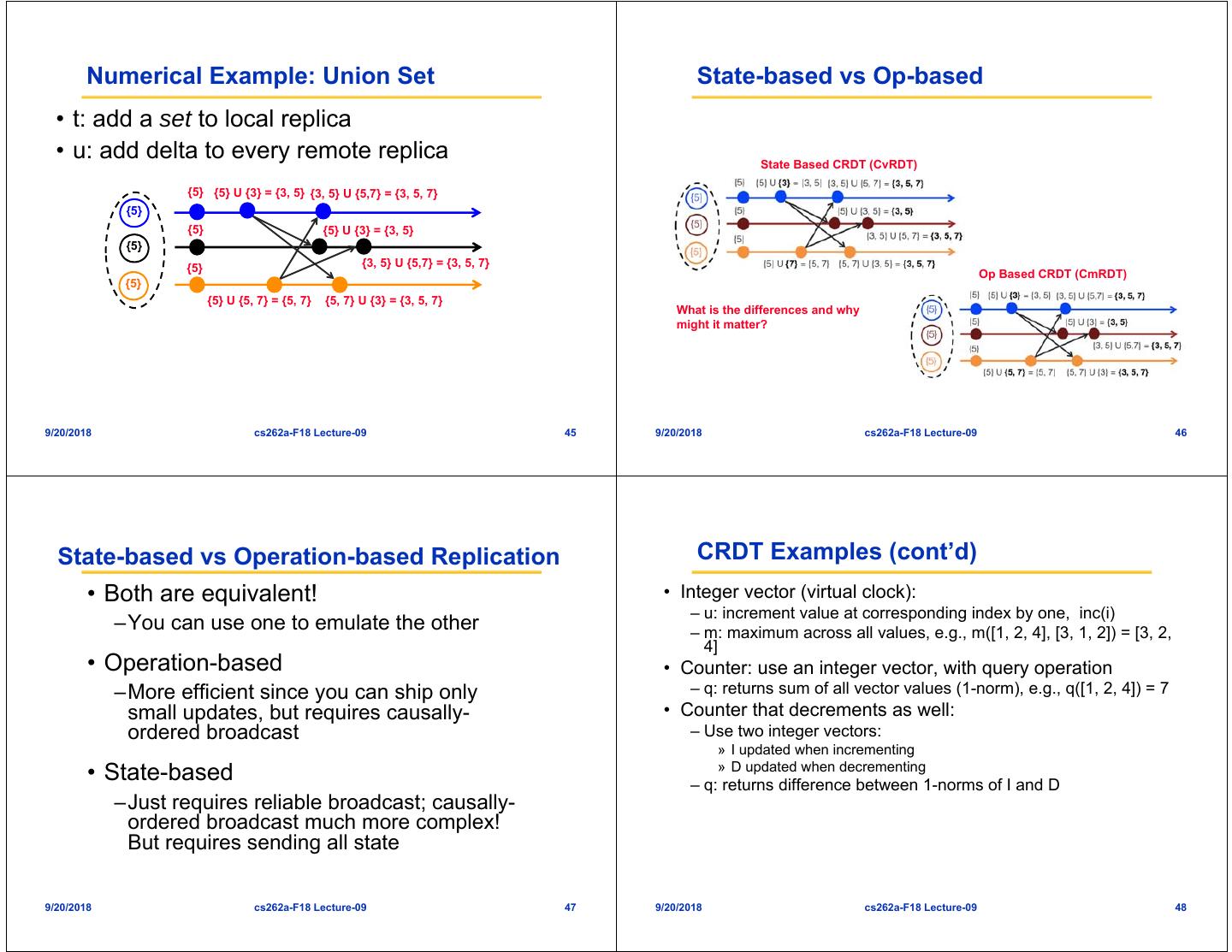

6 . CS262a Project CS262a Project (con’t) • Mini-Research Projects: Actually advance state-of-art • Proposal due in week and a half – Need two or three people/project (may allow four – Finally going to put up some projects today or tomorrow undergrads/project) – Suggested by systems faculty – Complete Research project in 2/3 of a term » Typically investigate hypothesis by building an artifact and measuring it • Most important things: against a “base case” – What are you going to do? » Generate conference-length paper and give oral presentation at poster session – What are your metrics for success? » Often, can lead to an actual publication. – What resources do you need? • I will meet with groups 2 or 3 times during term to brainstorm – Many projects supported by other faculty and/or grad students 9/20/2018 cs262a-F18 Lecture-09 21 9/20/2018 cs262a-F18 Lecture-09 22 Coordination Avoidance in DB Systems Example of what we want to do: • Serializability is really expensive in distributed databases: • Conclusion: Do as little coordination as possible! 9/20/2018 cs262a-F18 Lecture-09 23 9/20/2018 cs262a-F18 Lecture-09 24

7 . System Model: I-confluent execution Useful idea? • A set of trasactions T is -confluent with respect to invariant I if for all reachable states with common ancestor state, merged state is still I-valid: 9/20/2018 cs262a-F18 Lecture-09 25 9/20/2018 cs262a-F18 Lecture-09 26 Result for TPC-C Was This a good paper?? • What were the authors’ goals? • What about the evaluation / metrics? • Did they convince you that this was a good system /approach? • Were there any red-flags? • What mistakes did they make? • Does the system/approach meet the “Test of Time” challenge? • How would you review this paper today? 9/20/2018 cs262a-F18 Lecture-09 27 9/20/2018 cs262a-F18 Lecture-09 28

8 . Generalization (Optional Paper) Partial Order (poset) • Conflict-free Replicated Data Types • Set of objects S and an order relationship ≤ – Marc Shapiro, Nuno Preguica, Carlos Baquero, Marek Zawirski, 2011 between them, such that for all a, b, c in S • What are general properties of such conflict-free data • Reflexive: a ≤ a structures? • Antisymmetric: ( a ≤ b b ≤ a ) ( a = b ) • Two classes of replication: State-based and Operation-based • Transitive: ( a ≤ b b ≤ c ) ( a ≤ c ) 9/20/2018 cs262a-F18 Lecture-09 29 9/20/2018 cs262a-F18 Lecture-09 30 Semi-lattice Example • Partial order ≤ set S with a least upper • Partial order ≤ on set of integers bound (LUB), denoted • : max( ) –m = x y is a LUB of {x, y} under ≤ iff m′ ( x ≤ m′ y ≤ m′) (x≤ m y≤ m m ≤ m′ ) • Then, we have: –commutative: max(x, y) = max(y, x) • The nice thing about semi-lattices is that it follows that is: –idempotent: max(x, x) = x –commutative: x y = y x –associative: max(max(x, y), z) = max(x, –idempotent: x x = x max(y, z)) –associative: ( x y) z = x (y z) 9/20/2018 cs262a-F18 Lecture-09 31 9/20/2018 cs262a-F18 Lecture-09 32

9 . Example Aha! • Partial order on sets • How can this help us in building replicated distributed systems? • : U (set union) • Just use the LUB ⊔ to merge state between replicas • For instance, could build a CRDT using • Supports add(integer) • Then, we have: • • Supports get returns the maximum integer How? –commutative: A U B = B U A • Always correct: available and strongly eventually consistent –idempotent: A U A = A – Can we support remove(integer)? –associative: (A U B) U C = A U (B U C) 9/20/2018 cs262a-F18 Lecture-09 33 9/20/2018 cs262a-F18 Lecture-09 34 State-based Replication State-based Replication • Replicated object: a tuple (S, s0, q, u, m). • Algorithm – Replica at process pi has state si ∈ S – Periodically, replica at pi sends its current state to pj – s0: initial state – Replica pj merges received state into its local state by • Each replica can execute one of following commands executing m – q: query object’s state – u: update object’s state • After receiving all updates (irrespective of order), – m: merge state from a remote replica each replica will have same state 9/20/2018 cs262a-F18 Lecture-09 35 9/20/2018 cs262a-F18 Lecture-09 36

10 . Monotonic Semi-lattice Object Convergent Replicated Data Type (CvRDT) • A state-based object with partial order • Theorem: Assuming eventual delivery ≤, noted (S,≤, s0, q, u, m), that has and termination, any state-based object following properties, is called a that satisfies the monotonic semi-lattice monotonic semi-lattice: property is 1. Set S of values forms a semi-lattice • SEC ordered by ≤ 2. Merging state s with remote state s′ computes the LUB of the two states, i.e., s •m (s′ ) = s s′ 3. State is monotonically non-decreasing across updates, i.e., s ≤ s • u 9/20/2018 cs262a-F18 Lecture-09 37 9/20/2018 cs262a-F18 Lecture-09 38 Why does it work? Numerical Example: Union Set • Don’t care about order: • u: add new element to local replica –Merge is both commutative and associative • q: return entire set • merge: union between remote set and local replica {5} {5} U {3} = {3, 5} {3, 5} U {5, 7} = {3, 5, 7} • Don’t care about delivering more than {5} once {5} {5} {5} U {3, 5} = {3, 5} –Merge is idempotent {5} {3, 5} U {5, 7} = {3, 5, 7} {5} {5} U {7} = {5, 7} {5, 7} U {3, 5} = {3, 5, 7} 9/20/2018 cs262a-F18 Lecture-09 39 9/20/2018 cs262a-F18 Lecture-09 40

11 . Operation-based Replication Operation-based Replication • Algorithm • An op-based object is a tuple (S, s0, q, t, u, P ), where S, s0 and q have same meaning: state domain, initial state and query method – Updates are delivered to all replicas – No merge method; instead an update is split into a pair (t, u ), where – Use causally-ordered broadcast communication protocol, i.e., deliver every message to every node exactly once, consistent with happen- – t: side-effect-free prepare-update method (at local copy) before order – u: effect-free update method (at all copies) – Happen-before: updates from same replica are delivered in the order – P: delivery precondition (see next) they happened to all recipients (effectively delivery precondition, P) – Note: concurrent updates can be delivered in any order 9/20/2018 cs262a-F18 Lecture-09 41 9/20/2018 cs262a-F18 Lecture-09 42 Commutativity Property Commutative Replicated Data Type (CmRDT) • Updates (t, u) and (t′, u′) commute, iff for any • Assuming causal delivery of updates and reachable replica state s where both u and u′ are method termination, any op-based object enabled: that satisfies the commutativity property for – u (resp. u′ ) remains enabled in state s • u′ (resp. s • u ) all concurrent updates is SEC – s • u • u′ ≡ s • u′ • u • Commutativity holds for concurrent updates 9/20/2018 cs262a-F18 Lecture-09 43 9/20/2018 cs262a-F18 Lecture-09 44

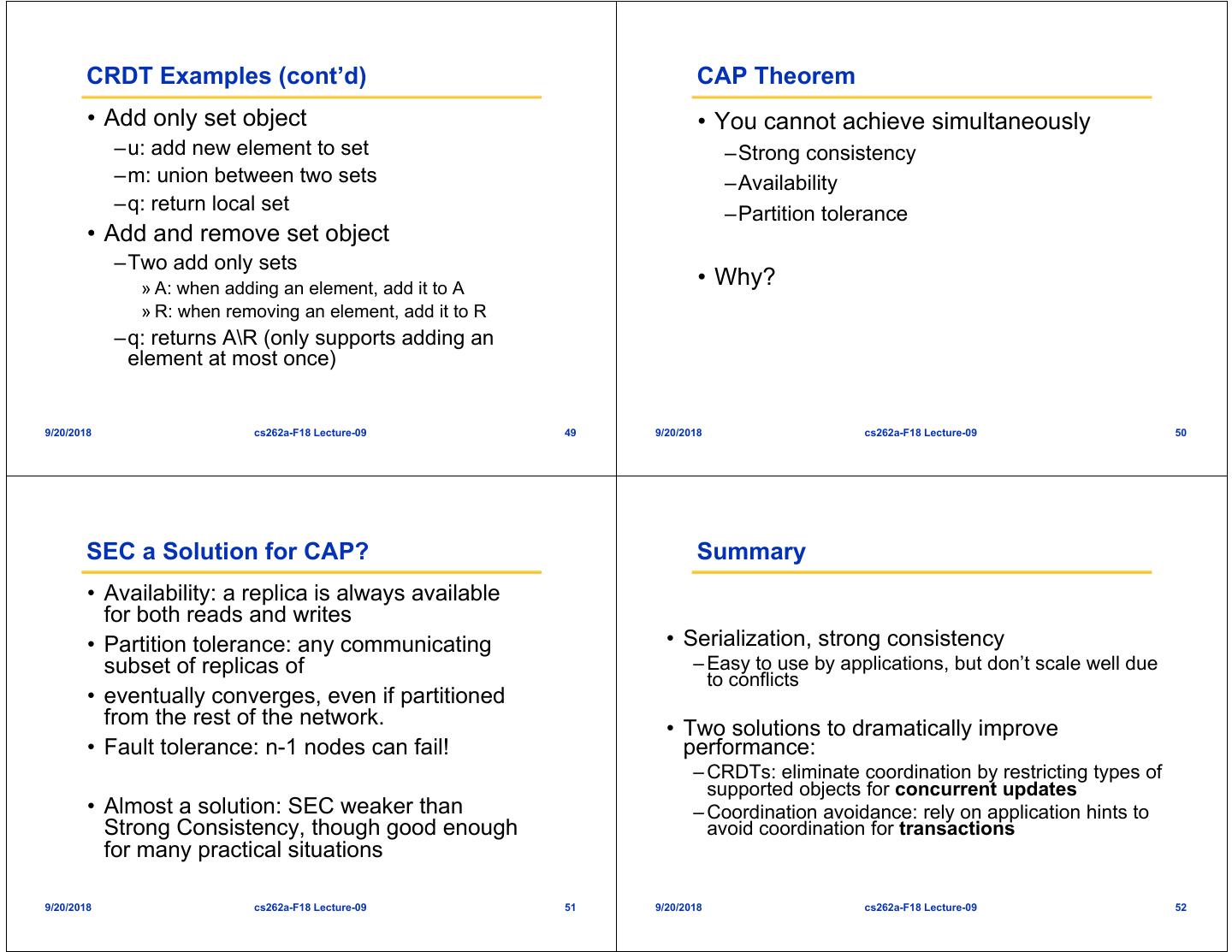

12 . Numerical Example: Union Set State-based vs Op-based • t: add a set to local replica • u: add delta to every remote replica State Based CRDT (CvRDT) {5} {5} U {3} = {3, 5} {3, 5} U {5,7} = {3, 5, 7} {5} {5} {5} U {3} = {3, 5} {5} {5} {3, 5} U {5,7} = {3, 5, 7} Op Based CRDT (CmRDT) {5} {5} U {5, 7} = {5, 7} {5, 7} U {3} = {3, 5, 7} What is the differences and why might it matter? 9/20/2018 cs262a-F18 Lecture-09 45 9/20/2018 cs262a-F18 Lecture-09 46 State-based vs Operation-based Replication CRDT Examples (cont’d) • Both are equivalent! • Integer vector (virtual clock): – u: increment value at corresponding index by one, inc(i) –You can use one to emulate the other – m: maximum across all values, e.g., m([1, 2, 4], [3, 1, 2]) = [3, 2, 4] • Operation-based • Counter: use an integer vector, with query operation –More efficient since you can ship only – q: returns sum of all vector values (1-norm), e.g., q([1, 2, 4]) = 7 small updates, but requires causally- • Counter that decrements as well: ordered broadcast – Use two integer vectors: » I updated when incrementing » D updated when decrementing • State-based – q: returns difference between 1-norms of I and D –Just requires reliable broadcast; causally- ordered broadcast much more complex! But requires sending all state 9/20/2018 cs262a-F18 Lecture-09 47 9/20/2018 cs262a-F18 Lecture-09 48

13 . CRDT Examples (cont’d) CAP Theorem • Add only set object • You cannot achieve simultaneously –u: add new element to set –Strong consistency –m: union between two sets –Availability –q: return local set –Partition tolerance • Add and remove set object –Two add only sets » A: when adding an element, add it to A • Why? » R: when removing an element, add it to R –q: returns A\R (only supports adding an element at most once) 9/20/2018 cs262a-F18 Lecture-09 49 9/20/2018 cs262a-F18 Lecture-09 50 SEC a Solution for CAP? Summary • Availability: a replica is always available for both reads and writes • Partition tolerance: any communicating • Serialization, strong consistency subset of replicas of – Easy to use by applications, but don’t scale well due to conflicts • eventually converges, even if partitioned from the rest of the network. • Two solutions to dramatically improve • Fault tolerance: n-1 nodes can fail! performance: – CRDTs: eliminate coordination by restricting types of supported objects for concurrent updates • Almost a solution: SEC weaker than – Coordination avoidance: rely on application hints to Strong Consistency, though good enough avoid coordination for transactions for many practical situations 9/20/2018 cs262a-F18 Lecture-09 51 9/20/2018 cs262a-F18 Lecture-09 52