15 Edge Computing #1

1 . EECS 262a: Advanced Topics in Computer Systems

Lecture 15: Agile VM Migration / Global Data Plane / DataCapsules
October 11th, 2018
John Kubiatowicz
Electrical Engineering and Computer Sciences
University of California, Berkeley
http://www.eecs.berkeley.edu/~kubitron/cs262

Today’s Papers

• You Can Teach Elephants to Dance
  Kiryong Ha, Yoshihisa Abe, Thomas Eiszler, Zhuo Chen, Wenlu Hu, Brandon Amos, Rohit Upadhyaya, Padmanabhan Pillai, Mahadev Satyanarayanan, 2017
• Toward a Global Data Infrastructure
  Nitesh Mor, Ben Zhang, John Kolb, Douglas S. Chan, Nikhil Goyal, Nicholas Sun, Ken Lutz, Eric Allman, John Wawrzynek, Edward A. Lee, and John Kubiatowicz, 2016
• Thoughts?

10/11/2018 Cs262a-F18 Lecture-15 2

Recall: Edge Computing

• Wikipedia: Edge computing is a distributed computing paradigm in which computation is largely or completely performed on distributed device nodes known as smart devices or edge devices as opposed to primarily taking place in a centralized cloud environment.
• Why compute on the edge?
  – Latency: Importance of human interactivity
  – Privacy: Keep sensitive data local
  – Reliability: Keep computing during network partitions

Why VM Encapsulation at Edge?

• Lightweight mechanisms give performance and scalability
  – Docker, Linux containers, other in-kernel mechanisms
  – Focus on lightweight encapsulation
• Other attributes to consider (that favor VMs):
  – Safety: Protect infrastructure from potentially malicious software
  – Isolation: Hide actions of mutually untrusting executions from one another on multi-tenant cloudlet
  – Transparency: Ability to run unmodified app code without recompiling or relinking. Allow reuse of existing software for rapid deployment
  – Deployability: The ability to easily maintain cloudlets in the field and create mobile apps that have high likelihood of finding software-compatible cloudlet anywhere in the world.
• In short – VMs have many advantages in the edge environment
  – Can you get above advantages without full guest OS?

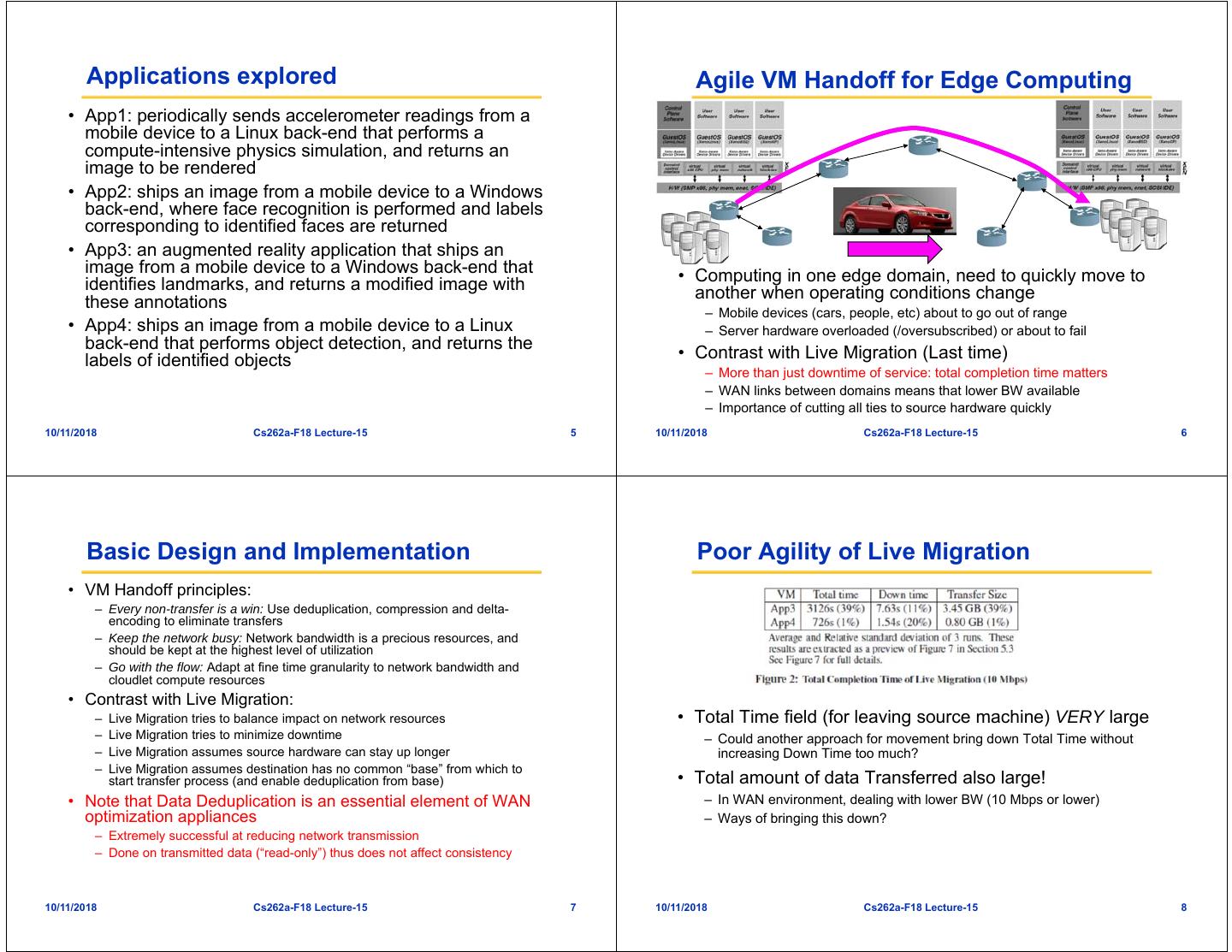

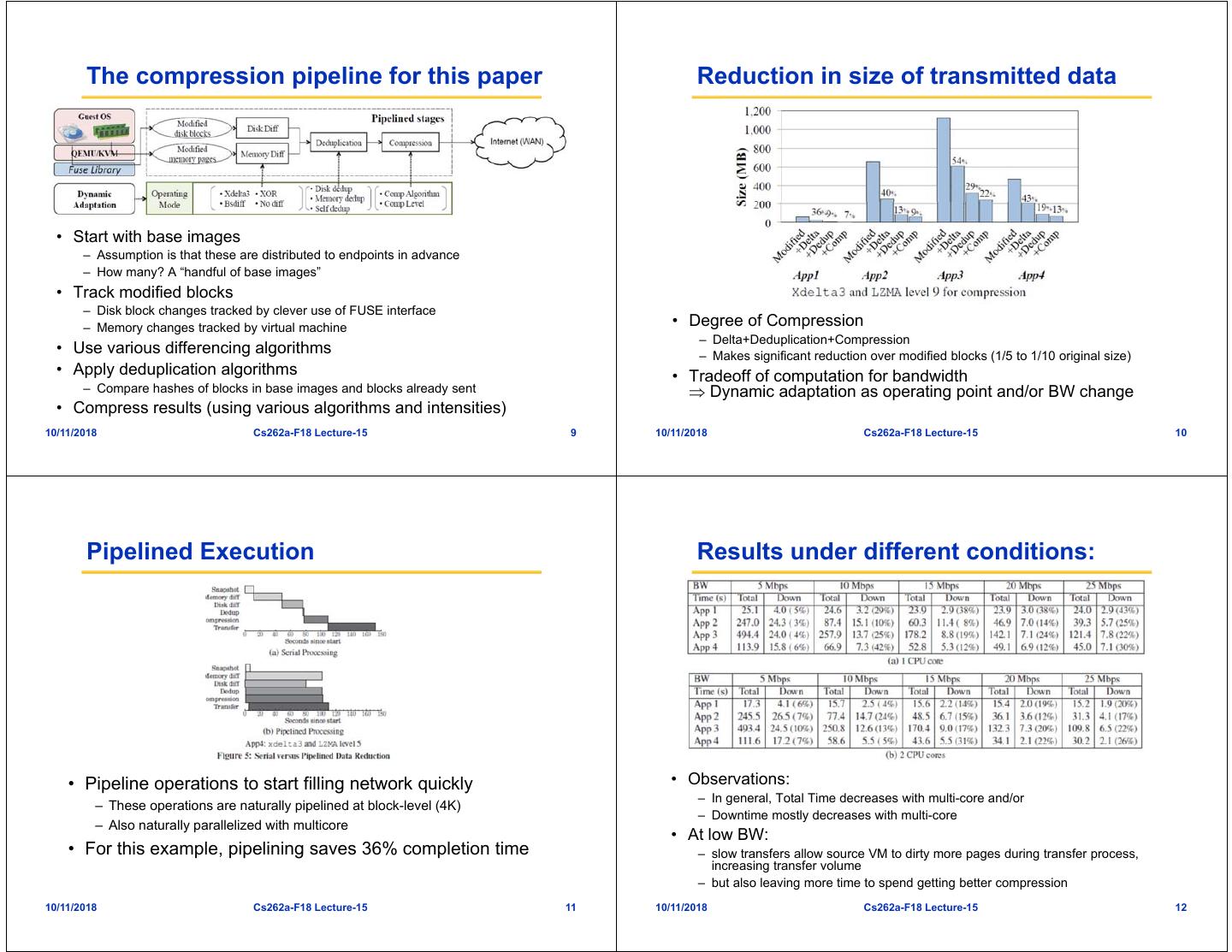

2 . Applications explored

• App1: periodically sends accelerometer readings from a mobile device to a Linux back-end that performs a compute-intensive physics simulation, and returns an image to be rendered
• App2: ships an image from a mobile device to a Windows back-end, where face recognition is performed and labels corresponding to identified faces are returned
• App3: an augmented reality application that ships an image from a mobile device to a Windows back-end that identifies landmarks, and returns a modified image with these annotations
• App4: ships an image from a mobile device to a Linux back-end that performs object detection, and returns the labels of identified objects

Agile VM Handoff for Edge Computing

• Computing in one edge domain, need to quickly move to another when operating conditions change
  – Mobile devices (cars, people, etc) about to go out of range
  – Server hardware overloaded (/oversubscribed) or about to fail
• Contrast with Live Migration (Last time)
  – More than just downtime of service: total completion time matters
  – WAN links between domains mean that lower BW is available
  – Importance of cutting all ties to source hardware quickly

Basic Design and Implementation

• VM Handoff principles:
  – Every non-transfer is a win: Use deduplication, compression and delta-encoding to eliminate transfers
  – Keep the network busy: Network bandwidth is a precious resource, and should be kept at the highest level of utilization
  – Go with the flow: Adapt at fine time granularity to network bandwidth and cloudlet compute resources
• Contrast with Live Migration:
  – Live Migration tries to balance impact on network resources
  – Live Migration tries to minimize downtime
  – Live Migration assumes source hardware can stay up longer
  – Live Migration assumes destination has no common “base” from which to start transfer process (and enable deduplication from base)
• Note that Data Deduplication is an essential element of WAN optimization appliances
  – Extremely successful at reducing network transmission
  – Done on transmitted data (“read-only”) thus does not affect consistency

Poor Agility of Live Migration

• Total Time field (for leaving source machine) VERY large
  – Could another approach for movement bring down Total Time without increasing Down Time too much?
• Total amount of data Transferred also large!
  – In WAN environment, dealing with lower BW (10 Mbps or lower)
  – Ways of bringing this down?

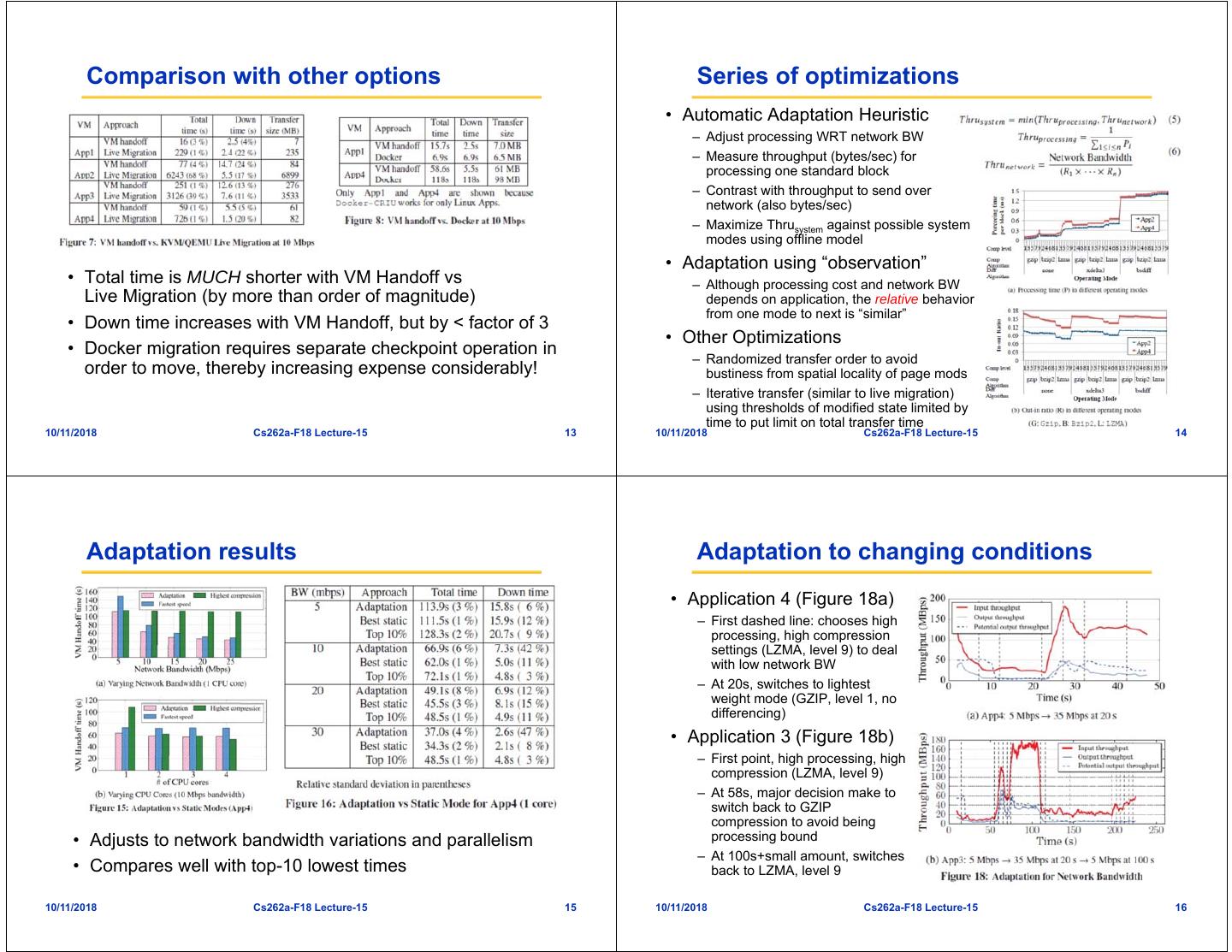

3 . The compression pipeline for this paper

• Start with base images
  – Assumption is that these are distributed to endpoints in advance
  – How many? A “handful of base images”
• Track modified blocks
  – Disk block changes tracked by clever use of FUSE interface
  – Memory changes tracked by virtual machine
• Use various differencing algorithms
• Apply deduplication algorithms
  – Compare hashes of blocks in base images and blocks already sent
• Compress results (using various algorithms and intensities)

Reduction in size of transmitted data

• Degree of Compression
  – Delta+Deduplication+Compression
  – Makes significant reduction over modified blocks (1/5 to 1/10 original size)
• Tradeoff of computation for bandwidth
  – Dynamic adaptation as operating point and/or BW change

Pipelined Execution

• Pipeline operations to start filling network quickly
  – These operations are naturally pipelined at block-level (4K)
  – Also naturally parallelized with multicore
• For this example, pipelining saves 36% completion time

Results under different conditions:

• Observations:
  – In general, Total Time decreases with multi-core and/or
  – Downtime mostly decreases with multi-core
• At low BW:
  – slow transfers allow source VM to dirty more pages during transfer process, increasing transfer volume
  – but also leaving more time to spend getting better compression
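
The pipeline stages above (track modified blocks, difference against the base image, deduplicate by hash, compress) can be sketched in a few lines. This is a minimal illustration rather than the paper's implementation: XOR stands in for a real differencing algorithm, the mode names `"light"` and `"heavy"` are hypothetical, and the generator only models block-at-a-time streaming, not multicore parallelism.

```python
import hashlib
import lzma
import zlib

BLOCK_SIZE = 4096  # 4K blocks, the granularity mentioned for pipelining


def xor_delta(block: bytes, base: bytes) -> bytes:
    """XOR against the base block; a stand-in for a real differencing algorithm.

    XOR is its own inverse, so the same function also undoes the delta.
    """
    return bytes(a ^ b for a, b in zip(block, base))


def _codec(mode: str):
    """Return (compress, decompress) for a hypothetical mode name."""
    if mode == "light":
        return (lambda d: zlib.compress(d, 1)), zlib.decompress
    return (lambda d: lzma.compress(d, preset=9)), lzma.decompress


def encode(base_blocks, modified, mode="light"):
    """Yield (index, kind, payload) for modified blocks only.

    base_blocks: list of equal-sized blocks both endpoints already hold.
    modified:    dict mapping block index -> new block contents.
    """
    compress, _ = _codec(mode)
    # Hashes of everything the receiver can already reconstruct.
    known = {hashlib.sha256(b).digest() for b in base_blocks}
    for index in sorted(modified):
        block = modified[index]
        digest = hashlib.sha256(block).digest()
        if digest in known:
            # Deduplication: send a 32-byte reference instead of the block.
            yield index, "dup", digest
        else:
            known.add(digest)
            delta = xor_delta(block, base_blocks[index])
            yield index, "delta", compress(delta)


def decode(base_blocks, records, mode="light"):
    """Rebuild the block list at the destination from the base image and records."""
    _, decompress = _codec(mode)
    by_hash = {hashlib.sha256(b).digest(): b for b in base_blocks}
    blocks = list(base_blocks)
    for index, kind, payload in records:
        if kind == "dup":
            block = by_hash[payload]
        else:
            block = xor_delta(decompress(payload), base_blocks[index])
            by_hash[hashlib.sha256(block).digest()] = block
        blocks[index] = block
    return blocks
```

Because `encode` is a generator, each record can be handed to the network as soon as it is produced, which is the property the "Pipelined Execution" slide relies on. Unmodified blocks never appear in the stream at all.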

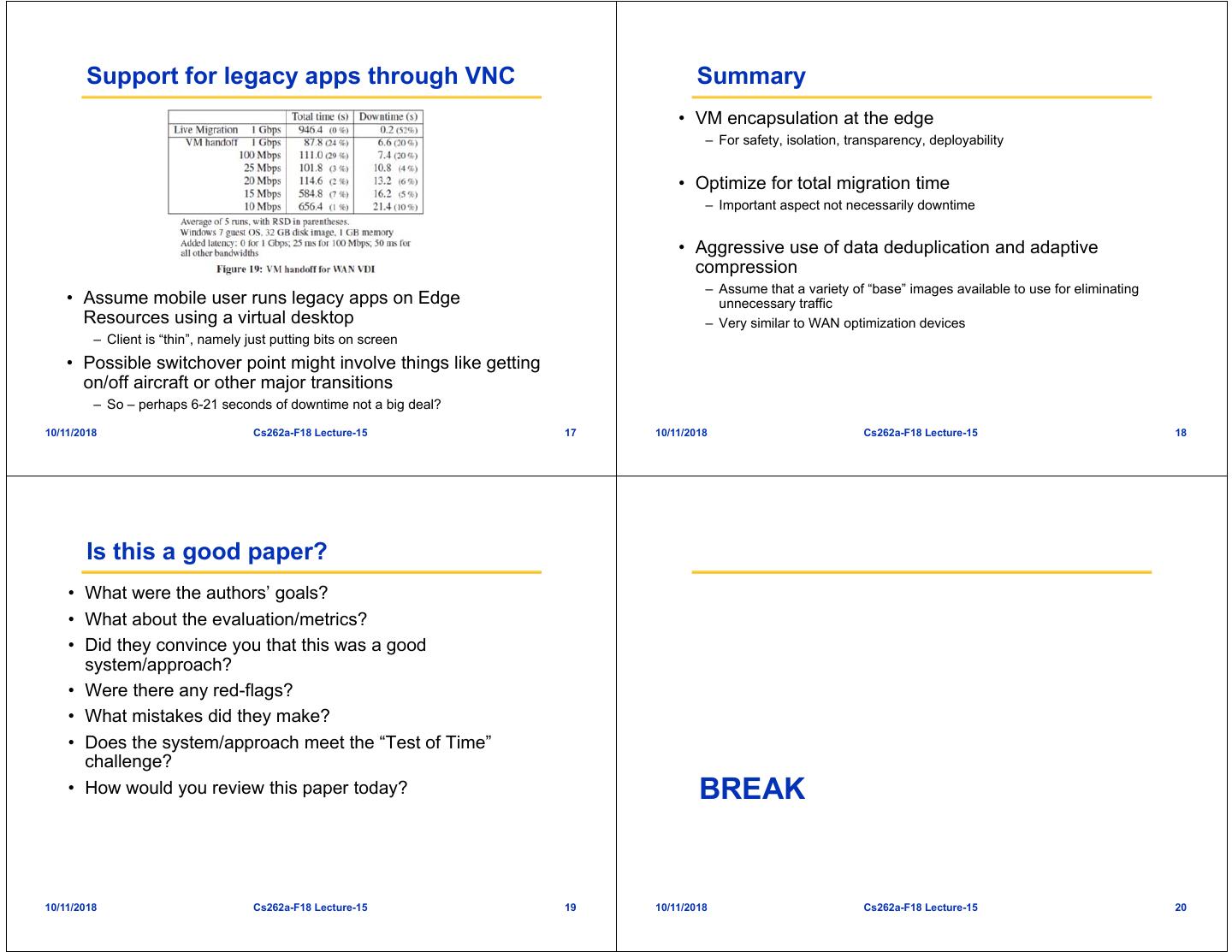

4 . Comparison with other options

• Total time is MUCH shorter with VM Handoff vs Live Migration (by more than an order of magnitude)
• Down time increases with VM Handoff, but by < factor of 3
• Docker migration requires separate checkpoint operation in order to move, thereby increasing expense considerably!

Series of optimizations

• Automatic Adaptation Heuristic
  – Adjust processing WRT network BW
  – Measure throughput (bytes/sec) for processing one standard block
  – Contrast with throughput to send over network (also bytes/sec)
  – Maximize Thru_system against possible system modes using offline model
• Adaptation using “observation”
  – Although processing cost and network BW depend on application, the relative behavior from one mode to next is “similar”
• Other Optimizations
  – Randomized transfer order to avoid burstiness from spatial locality of page mods
  – Iterative transfer (similar to live migration) using thresholds of modified state limited by time to put limit on total transfer time

Adaptation results

• Adjusts to network bandwidth variations and parallelism
• Compares well with top-10 lowest times

Adaptation to changing conditions

• Application 4 (Figure 18a)
  – First dashed line: chooses high processing, high compression settings (LZMA, level 9) to deal with low network BW
  – At 20s, switches to lightest weight mode (GZIP, level 1, no differencing)
• Application 3 (Figure 18b)
  – First point, high processing, high compression (LZMA, level 9)
  – At 58s, major decision made to switch back to GZIP compression to avoid being processing bound
  – Shortly after 100s, switches back to LZMA, level 9
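
The adaptation heuristic can be illustrated with a small model. The sketch below assumes that system throughput for a mode is the minimum of its processing throughput and the effective network throughput (bandwidth divided by the mode's output/input size ratio); that formula is one plausible reading of the slide, and the `Mode` class and all numbers are hypothetical, chosen only to show the switch between a heavy and a light mode.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Mode:
    name: str
    proc_bytes_per_s: float  # input bytes the pipeline can process per second
    out_in_ratio: float      # bytes sent / bytes of modified state


def system_throughput(mode: Mode, bandwidth_bytes_per_s: float) -> float:
    """Input bytes migrated per second: the slower of processing and network."""
    network = bandwidth_bytes_per_s / mode.out_in_ratio
    return min(mode.proc_bytes_per_s, network)


def pick_mode(modes, bandwidth_bytes_per_s: float) -> Mode:
    """Choose the mode that maximizes modeled system throughput."""
    return max(modes, key=lambda m: system_throughput(m, bandwidth_bytes_per_s))


# Hypothetical offline profile of two modes (numbers are illustrative only).
MODES = [
    Mode("gzip-1, no differencing", proc_bytes_per_s=100e6, out_in_ratio=0.40),
    Mode("lzma-9", proc_bytes_per_s=5e6, out_in_ratio=0.15),
]

low_bw = pick_mode(MODES, 1.25e6)    # about 10 Mbps
high_bw = pick_mode(MODES, 12.5e6)   # about 100 Mbps
```

With these made-up figures the heavy mode wins on the slow link, where the network is the bottleneck, and the light mode wins on the fast link, where processing would otherwise be the bottleneck. Re-running `pick_mode` as measured bandwidth changes gives the kind of mode switching described on the "Adaptation to changing conditions" slide.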

5 . Support for legacy apps through VNC

• Assume mobile user runs legacy apps on Edge Resources using a virtual desktop
  – Client is “thin”, namely just putting bits on screen
• Possible switchover point might involve things like getting on/off aircraft or other major transitions
  – So – perhaps 6-21 seconds of downtime not a big deal?

Summary

• VM encapsulation at the edge
  – For safety, isolation, transparency, deployability
• Optimize for total migration time
  – Important aspect not necessarily downtime
• Aggressive use of data deduplication and adaptive compression
  – Assume that a variety of “base” images available to use for eliminating unnecessary traffic
  – Very similar to WAN optimization devices

Is this a good paper?

• What were the authors’ goals?
• What about the evaluation/metrics?
• Did they convince you that this was a good system/approach?
• Were there any red-flags?
• What mistakes did they make?
• Does the system/approach meet the “Test of Time” challenge?
• How would you review this paper today?

BREAK

6 . What is the IoT? Ad Hoc Application Design!

[Figure labels: Warehouse, Home, Factory, Clusters; SSL links; “Really Large TCB”; “Full OS TCB”]

• Data Analytics
• Machine Learning
• Anomaly Detection
• Data Storage???
• Control???

Why do Data Breaches happen frequently?

• AdHoc boundary construction!
  – Security mechanisms all “roll-your-own” and different for each application
  – Use of encrypted channels to “tunnel” across untrusted domains
• Border security rather than Inherent security
  – Large Trusted Computing Base (TCB): huge amount of code must be correct to protect data
  – Make it through the border (firewall, OS, VM, container, etc…) and data is compromised!
• What about data integrity and provenance?
  – Any bits inserted into a “secure” environment get trusted as authentic (think “Fake News”!)

On The Importance of Data Integrity

• Machine-to-Machine (M2M) communication has reached a dangerous tipping point
  – Cyber Physical Systems use models and behaviors that come from elsewhere
  – Firmware, safety protocols, navigation systems, recommendations, …
  – IoT (whatever it is) is everywhere
• Do you know where your data came from? PROVENANCE
• Do you know that it is ordered properly? INTEGRITY
• The rise of Fake News!
  – Much deeper problem than news stories: Fake DATA!
• In July (2015), a team of researchers took total control of a Jeep SUV remotely
• They exploited a firmware update vulnerability and hijacked the vehicle over the Sprint cellular network
• They could make it speed up, slow down and even veer off the road

But: What can we do? Cryptographically Hardened Data Containers

[Figure labels: Fiber, Hole, metadata, Hash Ptr, Signature]

• Inspiration: Shipping Containers
  – Invented in 1956. Changed everything!
  – Ships, trains, trucks, cranes handle standardized format containers
  – Each container has a unique ID
  – Can ship (and store) anything
• Can we use this idea to help?
• DataCapsule (DC):
  – Standardized metadata wrapped around opaque data transactions
  – Uniquely named and globally findable
  – Every transaction explicitly sequenced in a hash-chain history
  – Provenance enforced through signatures
• Underlying infrastructure assists and improves performance
  – Anyone can verify validity, membership, and sequencing of transactions (like blockchain)
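
The DataCapsule bullets (a hash-chain history with provenance enforced through signatures) can be made concrete with a toy model. The sketch below is an illustration, not the GDP record format: `Record`, `append`, and `verify` are hypothetical names, and an HMAC with a shared key stands in for a real public-key signature. With an actual signature scheme, verifiers would need only the writer's public key, which is what lets "anyone" check validity.

```python
import hashlib
import hmac
from dataclasses import dataclass

GENESIS = b"\x00" * 32  # placeholder "previous hash" for the first record


@dataclass(frozen=True)
class Record:
    seqno: int
    prev_hash: bytes  # hash pointer to the previous record
    payload: bytes    # opaque data; the chain never interprets it
    tag: bytes        # writer's authenticator over this record's digest

    def digest(self) -> bytes:
        """Hash of the sequenced content (the tag itself is not covered)."""
        header = self.seqno.to_bytes(8, "big") + self.prev_hash
        return hashlib.sha256(header + self.payload).digest()


def append(chain: list, writer_key: bytes, payload: bytes) -> Record:
    """Add a record that points at the current tail of the chain."""
    prev = chain[-1].digest() if chain else GENESIS
    unsigned = Record(len(chain), prev, payload, b"")
    # ASSUMPTION: HMAC stands in for a public-key signature by the single writer.
    tag = hmac.new(writer_key, unsigned.digest(), hashlib.sha256).digest()
    record = Record(unsigned.seqno, prev, payload, tag)
    chain.append(record)
    return record


def verify(chain: list, writer_key: bytes) -> bool:
    """Check sequencing (hash pointers) and provenance (tags) of every record."""
    prev = GENESIS
    for position, record in enumerate(chain):
        if record.seqno != position or record.prev_hash != prev:
            return False
        expected = hmac.new(writer_key, record.digest(), hashlib.sha256).digest()
        if not hmac.compare_digest(expected, record.tag):
            return False
        prev = record.digest()
    return True
```

Changing any payload, reordering records, or inserting a record without the writer's key makes `verify` return `False`, which is the sense in which the data itself, rather than the border around it, is hardened.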

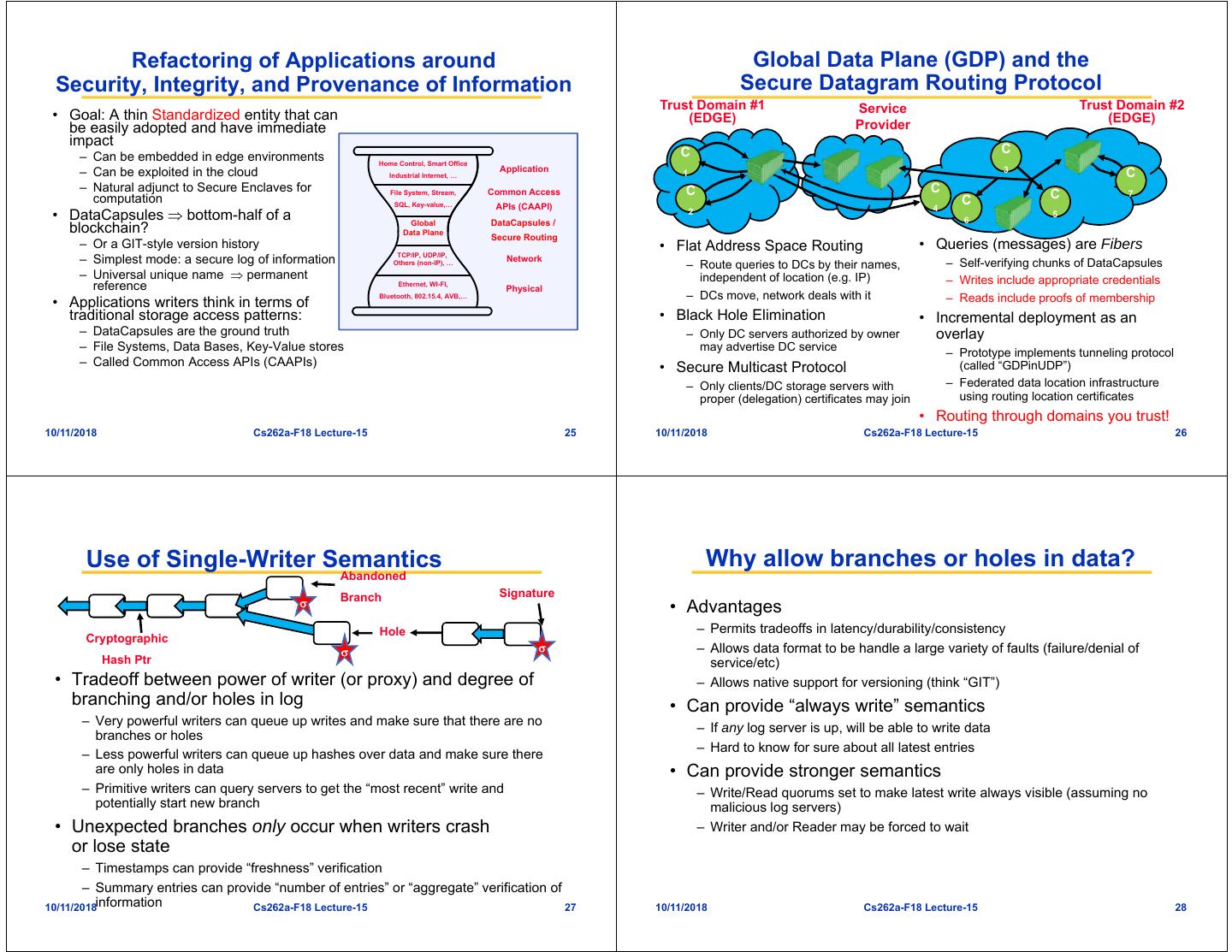

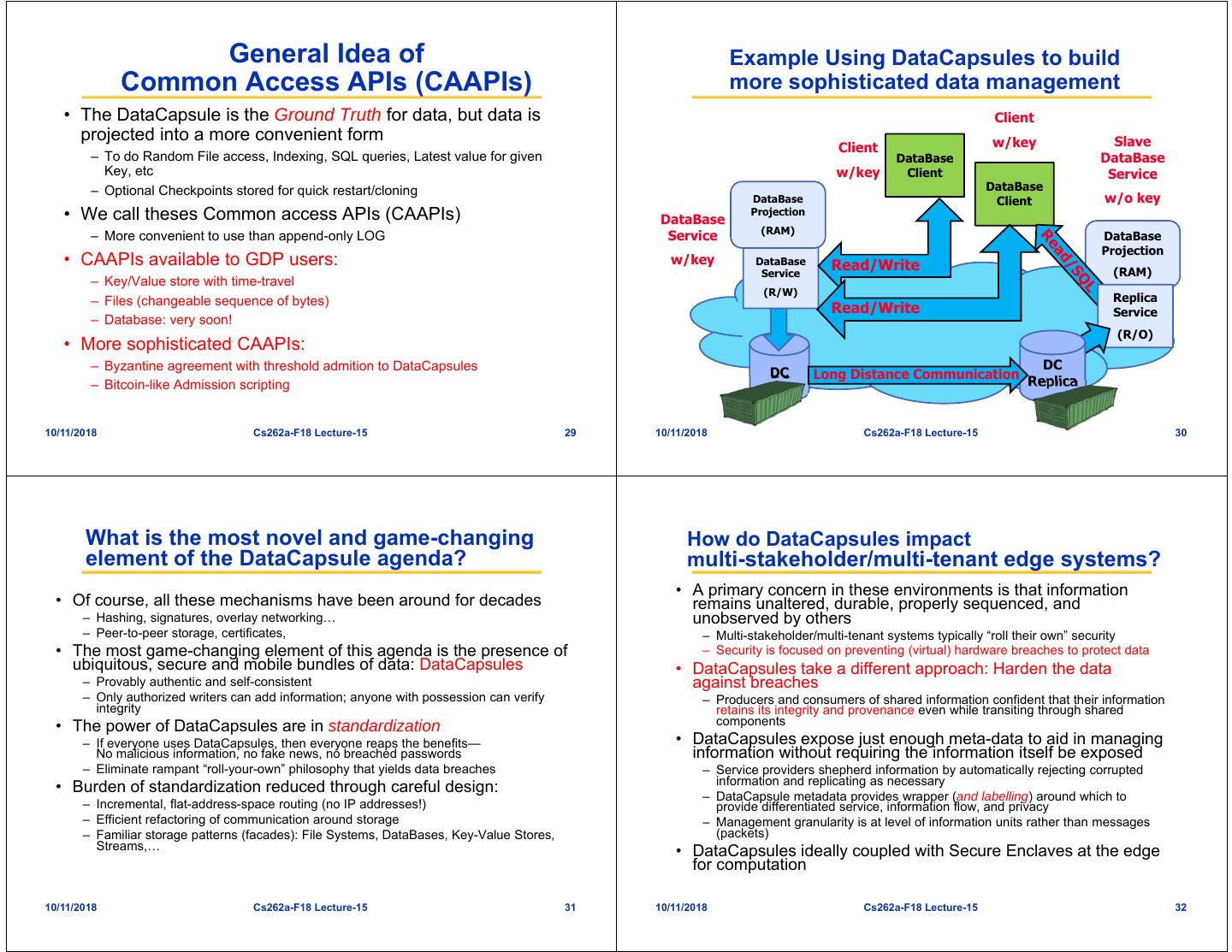

7 . Refactoring of Applications around Security, Integrity, and Provenance of Information

• Goal: A thin Standardized entity that can be easily adopted and have immediate impact
  – Can be embedded in edge environments
  – Can be exploited in the cloud
  – Natural adjunct to Secure Enclaves for computation
• DataCapsules bottom-half of a blockchain?
  – Or a GIT-style version history
  – Simplest mode: a secure log of information
  – Universal unique name permanent reference
• Application writers think in terms of traditional storage access patterns:
  – DataCapsules are the ground truth
  – File Systems, Data Bases, Key-Value stores
  – Called Common Access APIs (CAAPIs)

[Figure: layered stack]
Application: Home Control, Smart Office, Industrial Internet, …
Common Access APIs (CAAPI): File System, Stream, SQL, Key-value, …
Global Data Plane: DataCapsules / Secure Routing
Network: TCP/IP, UDP/IP, Others (non-IP), …
Physical: Ethernet, WI-FI, Bluetooth, 802.15.4, AVB, …

Global Data Plane (GDP) and the Secure Datagram Routing Protocol

[Figure: Trust Domain #1 (EDGE), Service Provider, Trust Domain #2 (EDGE); nodes labeled “C”; numbered steps 1-7]

• Flat Address Space Routing
  – Route queries to DCs by their names, independent of location (e.g. IP)
  – DCs move, network deals with it
• Black Hole Elimination
  – Only DC servers authorized by owner may advertise DC service
• Secure Multicast Protocol
  – Only clients/DC storage servers with proper (delegation) certificates may join
• Queries (messages) are Fibers
  – Self-verifying chunks of DataCapsules
  – Writes include appropriate credentials
  – Reads include proofs of membership
• Incremental deployment as an overlay
  – Prototype implements tunneling protocol (called “GDPinUDP”)
  – Federated data location infrastructure using routing location certificates
• Routing through domains you trust!

Use of Single-Writer Semantics

[Figure labels: Abandoned Branch, Signature, Hole, Cryptographic Hash Ptr]

• Tradeoff between power of writer (or proxy) and degree of branching and/or holes in log
  – Very powerful writers can queue up writes and make sure that there are no branches or holes
  – Less powerful writers can queue up hashes over data and make sure there are only holes in data
  – Primitive writers can query servers to get the “most recent” write and potentially start new branch
• Unexpected branches only occur when writers crash or lose state

Why allow branches or holes in data?

• Advantages
  – Permits tradeoffs in latency/durability/consistency
  – Allows data format to handle a large variety of faults (failure/denial of service/etc)
  – Allows native support for versioning (think “GIT”)
• Can provide “always write” semantics
  – If any log server is up, will be able to write data
  – Hard to know for sure about all latest entries
• Can provide stronger semantics
  – Write/Read quorums set to make latest write always visible (assuming no malicious log servers)
  – Writer and/or Reader may be forced to wait
  – Timestamps can provide “freshness” verification
  – Summary entries can provide “number of entries” or “aggregate” verification of information
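
The contrast between "always write" and quorum-based semantics comes down to whether every read quorum overlaps every write quorum. The brute-force check below is a sketch under simple assumptions (N honest log servers, a write stored on any W of them, a read contacting any R of them); the function name is hypothetical. It confirms the standard rule that the latest write is always visible exactly when R + W > N.

```python
from itertools import combinations


def quorums_intersect(n: int, w: int, r: int) -> bool:
    """True if every set of r servers shares a member with every set of w servers.

    Assumes n honest log servers; a write lands on some w of them and a read
    contacts some r of them.
    """
    servers = range(n)
    for write_set in combinations(servers, w):
        for read_set in combinations(servers, r):
            if not set(write_set) & set(read_set):
                return False  # this read could miss the latest write
    return True
```

For example, with five servers, `quorums_intersect(5, 3, 3)` holds, so readers always see the latest write but may have to wait for three servers. With `w=1` ("if any log server is up, will be able to write data"), a reader must contact all five servers to be sure, which is why always-write semantics make it hard to know about all of the latest entries.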

8 . General Idea of Common Access APIs (CAAPIs)

• The DataCapsule is the Ground Truth for data, but data is projected into a more convenient form
  – To do Random File access, Indexing, SQL queries, Latest value for given Key, etc
  – Optional Checkpoints stored for quick restart/cloning
• We call these Common Access APIs (CAAPIs)
  – More convenient to use than append-only LOG
• CAAPIs available to GDP users:
  – Key/Value store with time-travel
  – Files (changeable sequence of bytes)
  – Database: very soon!
• More sophisticated CAAPIs:
  – Byzantine agreement with threshold admission to DataCapsules
  – Bitcoin-like Admission scripting

Example: Using DataCapsules to build more sophisticated data management

[Figure labels: Client w/key, Client w/o key, Slave, DataBase Service, DataBase Projection (RAM), Read/Write Service (R/W), Replica (R/O), DC, Long Distance Communication]

What is the most novel and game-changing element of the DataCapsule agenda?

• Of course, all these mechanisms have been around for decades
  – Hashing, signatures, overlay networking…
  – Peer-to-peer storage, certificates,
• The most game-changing element of this agenda is the presence of ubiquitous, secure and mobile bundles of data: DataCapsules
  – Provably authentic and self-consistent
  – Only authorized writers can add information; anyone with possession can verify integrity
• The power of DataCapsules is in standardization
  – If everyone uses DataCapsules, then everyone reaps the benefits: no malicious information, no fake news, no breached passwords
  – Eliminate rampant “roll-your-own” philosophy that yields data breaches
• Burden of standardization reduced through careful design:
  – Incremental, flat-address-space routing (no IP addresses!)
  – Efficient refactoring of communication around storage
  – Familiar storage patterns (facades): File Systems, DataBases, Key-Value Stores, Streams,…
• DataCapsules ideally coupled with Secure Enclaves at the edge for computation

How do DataCapsules impact multi-stakeholder/multi-tenant edge systems?

• A primary concern in these environments is that information remains unaltered, durable, properly sequenced, and unobserved by others
  – Multi-stakeholder/multi-tenant systems typically “roll their own” security
  – Security is focused on preventing (virtual) hardware breaches to protect data
• DataCapsules take a different approach: Harden the data against breaches
  – Producers and consumers of shared information confident that their information retains its integrity and provenance even while transiting through shared components
• DataCapsules expose just enough meta-data to aid in managing information without requiring the information itself be exposed
  – Service providers shepherd information by automatically rejecting corrupted information and replicating as necessary
  – DataCapsule metadata provides wrapper (and labelling) around which to provide differentiated service, information flow, and privacy
  – Management granularity is at level of information units rather than messages (packets)
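
The idea of a CAAPI as a projection over an append-only log can be sketched with the "Key/Value store with time-travel" example. In this illustration a plain Python list stands in for the DataCapsule, and the class `TimeTravelKV` and its methods are hypothetical names rather than the GDP API. The in-memory index is derived state: it can be thrown away and rebuilt by replaying the log, which is the sense in which the log remains the ground truth.

```python
from bisect import bisect_right


class TimeTravelKV:
    """Key/value view projected from an append-only log.

    The list `log` stands in for a DataCapsule; it is only ever appended to.
    """

    def __init__(self, log=None):
        self.log = []         # ground truth: (key, value) records in order
        self._positions = {}  # projection: key -> ascending log positions
        for key, value in (log or []):
            self.put(key, value)  # replaying the log rebuilds the projection

    def put(self, key, value) -> int:
        """Append a record and return its sequence number."""
        self.log.append((key, value))
        seqno = len(self.log) - 1
        self._positions.setdefault(key, []).append(seqno)
        return seqno

    def get(self, key, as_of=None):
        """Return the value of `key` as of log position `as_of` (default: latest)."""
        positions = self._positions.get(key, [])
        if as_of is None:
            count = len(positions)
        else:
            # Number of writes to `key` at or before position `as_of`.
            count = bisect_right(positions, as_of)
        if count == 0:
            raise KeyError(key)
        return self.log[positions[count - 1]][1]
```

A call such as `kv.get("temp", as_of=seqno)` answers "what was the value at that point in the history?" without any extra storage, because old records are never overwritten.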

9 . Fog Robotics on the Global Data Plane

[Figure labels: Tier 1 Trust Domain, Top-Level Resolver, Trust Domain 1, Trust Domain 2, Domain Resolver, GDP Routers, DataCapsules, Replica, Edge Computing Domain, Training Data, Neural Network Models]

DataCapsules for Machine Learning/Robotics

• Ground truth for data for ML training + a vehicle for secure dissemination of continuously updating models.
• GDP enables decomposition of tasks into trust domains, operating in different physical locations (edge, cloud).
• No ad-hoc data transfer/sharing across facilities.
• More flexible than “cloud as a rendezvous point”
• Integration with Tensorflow: A minimal filesystem interface.
• Using edge resources is 1-2 orders of magnitude faster to load data than cloud storage. No QoS over public internet.

Preliminary Results: time (s) to load a 255 MB neural net model (non-GPU)
s3 bucket (tensorflow s3://<>): 148.8, 130.6, 116.1
gdp (tensorflow gdp://<>): 11.1, 11.5, 12.0
Local file system: 8.2, 8.6, 8.9

What does short- and long-term success look like?

• Short-term success: DataCapsules in IoT
  – TerraSwarm IoT applications: DataCapsules+GDP were the Great Integrator
  – For low-power devices: simple gateway talks to devices with BlueTooth, ZigBee, WiFi and speaks GDP protocols to infrastructure
• Short-term success: working distributed applications that could be experimentally tested under a variety of conditions and threats
  – Fog Robotics: Robots using and generating models at the edge
  – Smart manufacturing in which a 3D additive fabrication facility (at the edge) is communicating securely with a digital twin, demonstrating secure and timely exchange of data between the facility and the cloud.
• Long-term success: widespread usage
  – Adoption of DataCapsules and the GDP as the underpinning for many devices and applications both on the edge and in the cloud.
  – Example: Adoption of DataCapsules as new standard for IoT devices and applications
  – Similar to the widespread use of shipping containers in ports today

Is this a good paper?

• What were the authors’ goals?
• What about the evaluation/metrics?
• Did they convince you that this was a good system/approach?
• Were there any red-flags?
• What mistakes did they make?
• Does the system/approach meet the “Test of Time” challenge?
• How would you review this paper today?