- 快召唤伙伴们来围观吧

- 微博 QQ QQ空间 贴吧

- 文档嵌入链接

- 复制

- 微信扫一扫分享

- 已成功复制到剪贴板

StackGAN

展开查看详情

1 . StackGAN: Text to Photo-realistic Image Synthesis with Stacked Generative Adversarial Networks Han Zhang1 , Tao Xu2 , Hongsheng Li3 , Shaoting Zhang4 , Xiaogang Wang3 , Xiaolei Huang2 , Dimitris Metaxas1 1 2 3 4 arXiv:1612.03242v2 [cs.CV] 5 Aug 2017 Rutgers University Lehigh University The Chinese University of Hong Kong Baidu Research {han.zhang, dnm}@cs.rutgers.edu, {tax313, xih206}@lehigh.edu {hsli, xgwang}@ee.cuhk.edu.hk, zhangshaoting@baidu.com This bird has a This flower has Abstract This bird is white yellow belly and overlapping pink with some black on tarsus, grey back, pointed petals its head and wings, wings, and brown surrounding a ring Synthesizing high-quality images from text descriptions and has a long throat, nape with of short yellow is a challenging problem in computer vision and has many orange beak a black face filaments practical applications. Samples generated by existing text- (a) StackGAN to-image approaches can roughly reflect the meaning of the Stage-I given descriptions, but they fail to contain necessary details 64x64 images and vivid object parts. In this paper, we propose Stacked Generative Adversarial Networks (StackGAN) to generate 256×256 photo-realistic images conditioned on text de- (b) StackGAN scriptions. We decompose the hard problem into more man- Stage-II ageable sub-problems through a sketch-refinement process. 256x256 images The Stage-I GAN sketches the primitive shape and colors of the object based on the given text description, yield- ing Stage-I low-resolution images. The Stage-II GAN takes Stage-I results and text descriptions as inputs, and gener- (c) Vanilla GAN 256x256 ates high-resolution images with photo-realistic details. It images is able to rectify defects in Stage-I results and add com- pelling details with the refinement process. To improve the diversity of the synthesized images and stabilize the training Figure 1. Comparison of the proposed StackGAN and a vanilla of the conditional-GAN, we introduce a novel Conditioning one-stage GAN for generating 256×256 images. (a) Given text descriptions, Stage-I of StackGAN sketches rough shapes and ba- Augmentation technique that encourages smoothness in the sic colors of objects, yielding low-resolution images. (b) Stage-II latent conditioning manifold. Extensive experiments and of StackGAN takes Stage-I results and text descriptions as inputs, comparisons with state-of-the-arts on benchmark datasets and generates high-resolution images with photo-realistic details. demonstrate that the proposed method achieves significant (c) Results by a vanilla 256×256 GAN which simply adds more improvements on generating photo-realistic images condi- upsampling layers to state-of-the-art GAN-INT-CLS [26]. It is un- tioned on text descriptions. able to generate any plausible images of 256×256 resolution. 1. Introduction GANs [26, 24] are able to generate images that are highly Generating photo-realistic images from text is an im- related to the text meanings. portant problem and has tremendous applications, includ- However, it is very difficult to train GAN to generate ing photo-editing, computer-aided design, etc. Recently, high-resolution photo-realistic images from text descrip- Generative Adversarial Networks (GAN) [8, 5, 23] have tions. Simply adding more upsampling layers in state-of- shown promising results in synthesizing real-world im- the-art GAN models for generating high-resolution (e.g., ages. Conditioned on given text descriptions, conditional- 256×256) images generally results in training instability 1

2 .and produces nonsensical outputs (see Figure 1(c)). The 2. Related Work main difficulty for generating high-resolution images by Generative image modeling is a fundamental problem in GANs is that supports of natural image distribution and im- computer vision. There has been remarkable progress in plied model distribution may not overlap in high dimen- this direction with the emergence of deep learning tech- sional pixel space [31, 1]. This problem is more severe niques. Variational Autoencoders (VAE) [13, 28] for- as the image resolution increases. Reed et al. only suc- mulated the problem with probabilistic graphical models ceeded in generating plausible 64×64 images conditioned whose goal was to maximize the lower bound of data like- on text descriptions [26], which usually lack details and lihood. Autoregressive models (e.g., PixelRNN) [33] that vivid object parts, e.g., beaks and eyes of birds. More- utilized neural networks to model the conditional distri- over, they were unable to synthesize higher resolution (e.g., bution of the pixel space have also generated appealing 128×128) images without providing additional annotations synthetic images. Recently, Generative Adversarial Net- of objects [24]. works (GAN) [8] have shown promising performance for In analogy to how human painters draw, we decompose generating sharper images. But training instability makes the problem of text to photo-realistic image synthesis into it hard for GAN models to generate high-resolution (e.g., two more tractable sub-problems with Stacked Generative 256×256) images. Several techniques [23, 29, 18, 1, 3] Adversarial Networks (StackGAN). Low-resolution images have been proposed to stabilize the training process and are first generated by our Stage-I GAN (see Figure 1(a)). On generate compelling results. An energy-based GAN [38] the top of our Stage-I GAN, we stack Stage-II GAN to gen- has also been proposed for more stable training behavior. erate realistic high-resolution (e.g., 256×256) images con- Built upon these generative models, conditional image ditioned on Stage-I results and text descriptions (see Fig- generation has also been studied. Most methods utilized ure 1(b)). By conditioning on the Stage-I result and the simple conditioning variables such as attributes or class la- text again, Stage-II GAN learns to capture the text infor- bels [37, 34, 4, 22]. There is also work conditioned on im- mation that is omitted by Stage-I GAN and draws more de- ages to generate images, including photo editing [2, 39], do- tails for the object. The support of model distribution gener- main transfer [32, 12] and super-resolution [31, 15]. How- ated from a roughly aligned low-resolution image has better ever, super-resolution methods [31, 15] can only add limited probability of intersecting with the support of image distri- details to low-resolution images and can not correct large bution. This is the underlying reason why Stage-II GAN is defects as our proposed StackGAN does. Recently, several able to generate better high-resolution images. methods have been developed to generate images from un- In addition, for the text-to-image generation task, the structured text. Mansimov et al. [17] built an AlignDRAW limited number of training text-image pairs often results in model by learning to estimate alignment between text and sparsity in the text conditioning manifold and such spar- the generating canvas. Reed et al. [27] used conditional Pix- sity makes it difficult to train GAN. Thus, we propose a elCNN to generate images using the text descriptions and novel Conditioning Augmentation technique to encourage object location constraints. Nguyen et al. [20] used an ap- smoothness in the latent conditioning manifold. It allows proximate Langevin sampling approach to generate images small random perturbations in the conditioning manifold conditioned on text. However, their sampling approach re- and increases the diversity of synthesized images. quires an inefficient iterative optimization process. With The contribution of the proposed method is threefold: conditional GAN, Reed et al. [26] successfully generated (1) We propose a novel Stacked Generative Adversar- plausible 64×64 images for birds and flowers based on text ial Networks for synthesizing photo-realistic images from descriptions. Their follow-up work [24] was able to gener- text descriptions. It decomposes the difficult problem ate 128×128 images by utilizing additional annotations on of generating high-resolution images into more manage- object part locations. able subproblems and significantly improve the state of Besides using a single GAN for generating images, there the art. The StackGAN for the first time generates im- is also work [36, 5, 10] that utilized a series of GANs for im- ages of 256×256 resolution with photo-realistic details age generation. Wang et al. [36] factorized the indoor scene from text descriptions. (2) A new Conditioning Augmen- generation process into structure generation and style gen- tation technique is proposed to stabilize the conditional eration with the proposed S 2 -GAN. In contrast, the second GAN training and also improves the diversity of the gen- stage of our StackGAN aims to complete object details and erated samples. (3) Extensive qualitative and quantitative correct defects of Stage-I results based on text descriptions. experiments demonstrate the effectiveness of the overall Denton et al. [5] built a series of GANs within a Lapla- model design as well as the effects of individual compo- cian pyramid framework. At each level of the pyramid, a nents, which provide useful information for designing fu- residual image was generated conditioned on the image of ture conditional GAN models. Our code is available at the previous stage and then added back to the input image https://github.com/hanzhanggit/StackGAN. to produce the input for the next stage. Concurrent to our

3 .work, Huang et al. [10] also showed that they can generate for learning the generator. To mitigate this problem, we better images by stacking several GANs to reconstruct the introduce a Conditioning Augmentation technique to pro- multi-level representations of a pre-trained discriminative duce additional conditioning variables cˆ. In contrast to the model. However, they only succeeded in generating 32×32 fixed conditioning text variable c in [26, 24], we randomly images, while our method utilizes a simpler architecture to sample the latent variables cˆ from an independent Gaussian generate 256×256 images with photo-realistic details and distribution N (µ(ϕt ), Σ(ϕt )), where the mean µ(ϕt ) and sixty-four times more pixels. diagonal covariance matrix Σ(ϕt ) are functions of the text embedding ϕt . The proposed Conditioning Augmentation 3. Stacked Generative Adversarial Networks yields more training pairs given a small number of image- To generate high-resolution images with photo-realistic text pairs, and thus encourages robustness to small pertur- details, we propose a simple yet effective Stacked Genera- bations along the conditioning manifold. To further enforce tive Adversarial Networks. It decomposes the text-to-image the smoothness over the conditioning manifold and avoid generative process into two stages (see Figure 2). overfitting [6, 14], we add the following regularization term - Stage-I GAN: it sketches the primitive shape and ba- to the objective of the generator during training, sic colors of the object conditioned on the given text description, and draws the background layout from a DKL (N (µ(ϕt ), Σ(ϕt )) || N (0, I)), (2) random noise vector, yielding a low-resolution image. which is the Kullback-Leibler divergence (KL divergence) between the standard Gaussian distribution and the condi- - Stage-II GAN: it corrects defects in the low-resolution tioning Gaussian distribution. The randomness introduced image from Stage-I and completes details of the object in the Conditioning Augmentation is beneficial for model- by reading the text description again, producing a high- ing text to image translation as the same sentence usually resolution photo-realistic image. corresponds to objects with various poses and appearances. 3.1. Preliminaries 3.3. Stage-I GAN Generative Adversarial Networks (GAN) [8] are com- Instead of directly generating a high-resolution image posed of two models that are alternatively trained to com- conditioned on the text description, we simplify the task to pete with each other. The generator G is optimized to re- first generate a low-resolution image with our Stage-I GAN, produce the true data distribution pdata by generating im- which focuses on drawing only rough shape and correct col- ages that are difficult for the discriminator D to differentiate ors for the object. from real images. Meanwhile, D is optimized to distinguish Let ϕt be the text embedding of the given description, real images and synthetic images generated by G. Overall, which is generated by a pre-trained encoder [25] in this pa- the training procedure is similar to a two-player min-max per. The Gaussian conditioning variables cˆ0 for text embed- game with the following objective function, ding are sampled from N (µ0 (ϕt ), Σ0 (ϕt )) to capture the min max V (D, G) = Ex∼pdata [log D(x)] + meaning of ϕt with variations. Conditioned on cˆ0 and ran- G D (1) dom variable z, Stage-I GAN trains the discriminator D0 Ez∼pz [log(1 − D(G(z)))], and the generator G0 by alternatively maximizing LD0 in where x is a real image from the true data distribution pdata , Eq. (3) and minimizing LG0 in Eq. (4), and z is a noise vector sampled from distribution pz (e.g., LD0 = E(I0 ,t)∼pdata [log D0 (I0 , ϕt )] + uniform or Gaussian distribution). (3) Conditional GAN [7, 19] is an extension of GAN where Ez∼pz ,t∼pdata [log(1 − D0 (G0 (z, cˆ0 ), ϕt ))], both the generator and discriminator receive additional con- LG0 = Ez∼pz ,t∼pdata [log(1 − D0 (G0 (z, cˆ0 ), ϕt ))] + ditioning variables c, yielding G(z, c) and D(x, c). This formulation allows G to generate images conditioned on λDKL (N (µ0 (ϕt ), Σ0 (ϕt )) || N (0, I)), variables c. (4) where the real image I0 and the text description t are from 3.2. Conditioning Augmentation the true data distribution pdata . z is a noise vector randomly As shown in Figure 2, the text description t is first en- sampled from a given distribution pz (Gaussian distribution coded by an encoder, yielding a text embedding ϕt . In in this paper). λ is a regularization parameter that balances previous works [26, 24], the text embedding is nonlinearly the two terms in Eq. (4). We set λ = 1 for all our ex- transformed to generate conditioning latent variables as the periments. Using the reparameterization trick introduced input of the generator. However, latent space for the text in [13], both µ0 (ϕt ) and Σ0 (ϕt ) are learned jointly with the embedding is usually high dimensional (> 100 dimen- rest of the network. sions). With limited amount of data, it usually causes dis- Model Architecture. For the generator G0 , to obtain continuity in the latent data manifold, which is not desirable text conditioning variable cˆ0 , the text embedding ϕt is first

4 . Conditioning Stage-I Generator G0 Stage-I Discriminator D0 64 x 64 Augmentation (CA) for sketch results Text description t Embedding ϕt μ0 128 ĉ0 This bird is grey with 512 Down- white on its chest and Upsampling {0, 1} has a very short beak sampling 4 z ~ N(0, I) 4 σ0 Compression and 64 x 64 ε ~ N(0, I) real images Spatial Replication Embedding ϕt Embedding ϕt ĉ 256 x 256 Compression and Conditioning real images Spatial Replication Augmentation 128 128 64 x 64 512 512 Stage-I results Down- Residual Down- Upsampling {0, 1} sampling 16 blocks sampling 4 16 4 256 x 256 Stage-II Generator G for refinement results Stage-II Discriminator D Figure 2. The architecture of the proposed StackGAN. The Stage-I generator draws a low-resolution image by sketching rough shape and basic colors of the object from the given text and painting the background from a random noise vector. Conditioned on Stage-I results, the Stage-II generator corrects defects and adds compelling details into Stage-I results, yielding a more realistic high-resolution image. fed into a fully connected layer to generate µ0 and σ0 (σ0 D and generator G in Stage-II GAN are trained by alter- are the values in the diagonal of Σ0 ) for the Gaussian distri- natively maximizing LD in Eq. (5) and minimizing LG in bution N (µ0 (ϕt ), Σ0 (ϕt )). cˆ0 are then sampled from the Eq. (6), Gaussian distribution. Our Ng dimensional conditioning vector cˆ0 is computed by cˆ0 = µ0 + σ0 (where is LD = E(I,t)∼pdata [log D(I, ϕt )] + (5) the element-wise multiplication, ∼ N (0, I)). Then, cˆ0 is Es0 ∼pG0 ,t∼pdata [log(1 − D(G(s0 , cˆ), ϕt ))], concatenated with a Nz dimensional noise vector to gener- ate a W0 × H0 image by a series of up-sampling blocks. LG = Es0 ∼pG0 ,t∼pdata [log(1 − D(G(s0 , cˆ), ϕt ))] + (6) For the discriminator D0 , the text embedding ϕt is first λDKL (N (µ(ϕt ), Σ(ϕt )) || N (0, I)), compressed to Nd dimensions using a fully-connected layer Different from the original GAN formulation, the random and then spatially replicated to form a Md × Md × Nd noise z is not used in this stage with the assumption that tensor. Meanwhile, the image is fed through a series of the randomness has already been preserved by s0 . Gaus- down-sampling blocks until it has Md × Md spatial dimen- sian conditioning variables cˆ used in this stage and cˆ0 used sion. Then, the image filter map is concatenated along the in Stage-I GAN share the same pre-trained text encoder, channel dimension with the text tensor. The resulting ten- generating the same text embedding ϕt . However, Stage- sor is further fed to a 1×1 convolutional layer to jointly I and Stage-II Conditioning Augmentation have different learn features across the image and the text. Finally, a fully- fully connected layers for generating different means and connected layer with one node is used to produce the deci- standard deviations. In this way, Stage-II GAN learns to sion score. capture useful information in the text embedding that is omitted by Stage-I GAN. 3.4. Stage-II GAN Model Architecture. We design Stage-II generator as Low-resolution images generated by Stage-I GAN usu- an encoder-decoder network with residual blocks [9]. Sim- ally lack vivid object parts and might contain shape distor- ilar to the previous stage, the text embedding ϕt is used tions. Some details in the text might also be omitted in the to generate the Ng dimensional text conditioning vector cˆ, first stage, which is vital for generating photo-realistic im- which is spatially replicated to form a Mg ×Mg ×Ng tensor. ages. Our Stage-II GAN is built upon Stage-I GAN results Meanwhile, the Stage-I result s0 generated by Stage-I GAN to generate high-resolution images. It is conditioned on is fed into several down-sampling blocks (i.e., encoder) un- low-resolution images and also the text embedding again to til it has a spatial size of Mg × Mg . The image features correct defects in Stage-I results. The Stage-II GAN com- and the text features are concatenated along the channel di- pletes previously ignored text information to generate more mension. The encoded image features coupled with text photo-realistic details. features are fed into several residual blocks, which are de- Conditioning on the low-resolution result s0 = signed to learn multi-modal representations across image G0 (z, cˆ0 ) and Gaussian latent variables cˆ, the discriminator and text features. Finally, a series of up-sampling layers

5 .(i.e., decoder) are used to generate a W ×H high-resolution 4.1. Datasets and evaluation metrics image. Such a generator is able to help rectify defects in the CUB [35] contains 200 bird species with 11,788 images. input image while add more details to generate the realistic Since 80% of birds in this dataset have object-image size high-resolution image. ratios of less than 0.5 [35], as a pre-processing step, we For the discriminator, its structure is similar to that of crop all images to ensure that bounding boxes of birds have Stage-I discriminator with only extra down-sampling blocks greater-than-0.75 object-image size ratios. Oxford-102 [21] since the image size is larger in this stage. To explicitly en- contains 8,189 images of flowers from 102 different cat- force GAN to learn better alignment between the image and egories. To show the generalization capability of our ap- the conditioning text, rather than using the vanilla discrimi- proach, a more challenging dataset, MS COCO [16] is also nator, we adopt the matching-aware discriminator proposed utilized for evaluation. Different from CUB and Oxford- by Reed et al. [26] for both stages. During training, the 102, the MS COCO dataset contains images with multiple discriminator takes real images and their corresponding text objects and various backgrounds. It has a training set with descriptions as positive sample pairs, whereas negative sam- 80k images and a validation set with 40k images. Each ple pairs consist of two groups. The first is real images with image in COCO has 5 descriptions, while 10 descriptions mismatched text embeddings, while the second is synthetic are provided by [25] for every image in CUB and Oxford- images with their corresponding text embeddings. 102 datasets. Following the experimental setup in [26], 3.5. Implementation details we directly use the training and validation sets provided The up-sampling blocks consist of the nearest-neighbor by COCO, meanwhile we split CUB and Oxford-102 into upsampling followed by a 3×3 stride 1 convolution. Batch class-disjoint training and test sets. normalization [11] and ReLU activation are applied after Evaluation metrics. It is difficult to evaluate the per- every convolution except the last one. The residual blocks formance of generative models (e.g., GAN). We choose a consist of 3×3 stride 1 convolutions, Batch normalization recently proposed numerical assessment approach “incep- and ReLU. Two residual blocks are used in 128×128 Stack- tion score” [29] for quantitative evaluation, GAN models while four are used in 256×256 models. The I = exp(Ex DKL (p(y|x) || p(y))), (7) down-sampling blocks consist of 4×4 stride 2 convolutions, Batch normalization and LeakyReLU, except that the first where x denotes one generated sample, and y is the label one does not have Batch normalization. predicted by the Inception model [30]. The intuition behind By default, Ng = 128, Nz = 100, Mg = 16, Md = 4, this metric is that good models should generate diverse but Nd = 128, W0 = H0 = 64 and W = H = 256. For train- meaningful images. Therefore, the KL divergence between ing, we first iteratively train D0 and G0 of Stage-I GAN the marginal distribution p(y) and the conditional distribu- for 600 epochs by fixing Stage-II GAN. Then we iteratively tion p(y|x) should be large. In our experiments, we directly train D and G of Stage-II GAN for another 600 epochs by use the pre-trained Inception model for COCO dataset. For fixing Stage-I GAN. All networks are trained using ADAM fine-grained datasets, CUB and Oxford-102, we fine-tune solver with batch size 64 and an initial learning rate of an Inception model for each of them. As suggested in [29], 0.0002. The learning rate is decayed to 1/2 of its previous we evaluate this metric on a large number of samples (i.e., value every 100 epochs. 30k randomly selected samples) for each model. Although the inception score has shown to well correlate 4. Experiments with human perception on visual quality of samples [29], it To validate our method, we conduct extensive quantita- cannot reflect whether the generated images are well con- tive and qualitative evaluations. Two state-of-the-art meth- ditioned on the given text descriptions. Therefore, we also ods on text-to-image synthesis, GAN-INT-CLS [26] and conduct human evaluation. We randomly select 50 text de- GAWWN [24], are compared. Results by the two compared scriptions for each class of CUB and Oxford-102 test sets. methods are generated using the code released by their au- For COCO dataset, 4k text descriptions are randomly se- thors. In addition, we design several baseline models to lected from its validation set. For each sentence, 5 im- investigate the overall design and important components of ages are generated by each model. Given the same text de- our proposed StackGAN. For the first baseline, we directly scriptions, 10 users (not including any of the authors) are train Stage-I GAN for generating 64×64 and 256×256 im- asked to rank the results by different methods. The average ages to investigate whether the proposed stacked structure ranks by human users are calculated to evaluate all com- and Conditioning Augmentation are beneficial. Then we pared methods. modify our StackGAN to generate 128×128 and 256×256 images to investigate whether larger images by our method 4.2. Quantitative and qualitative results result in higher image quality. We also investigate whether We compare our results with the state-of-the-art text-to- inputting text at both stages of StackGAN is useful. image methods [24, 26] on CUB, Oxford-102 and COCO

6 . A small bird A small yellow This small bird The bird is A bird with a This small with varying bird with a has a white This bird is red short and medium orange black bird has shades of black crown breast, light Text and brown in stubby with bill white body a short, slightly brown with and a short grey head, and description color, with a yellow on its gray wings and curved bill and white under the black pointed black wings stubby beak body webbed feet long legs eyes beak and tail 64x64 GAN-INT-CLS 128x128 GAWWN 256x256 StackGAN Figure 3. Example results by our StackGAN, GAWWN [24], and GAN-INT-CLS [26] conditioned on text descriptions from CUB test set. This flower is This flower has This flower is This flower has pink, white, petals that are white and Eggs fruit A street sign Text a lot of small and yellow in dark pink with yellow in color, A group of candy nuts on a stoplight description purple petals in color, and has white edges with petals that A picture of a people on skis and meat pole in the a dome-like petals that are and pink are wavy and very clean stand in the served on middle of a configuration striped stamen smooth living room snow white dish day 64x64 GAN-INT-CLS 256x256 StackGAN Figure 4. Example results by our StackGAN and GAN-INT-CLS [26] conditioned on text descriptions from Oxford-102 test set (leftmost four columns) and COCO validation set (rightmost four columns). Metric Dataset GAN-INT-CLS GAWWN Our StackGAN CUB 2.88 ± .04 3.62 ± .07 3.70 ± .04 erage human rank on all three datasets. Compared with Inception score Oxford 2.66 ± .03 / 3.20 ± .01 GAN-INT-CLS [26], StackGAN achieves 28.47% improve- COCO 7.88 ± .07 / 8.45 ± .03 CUB 2.81 ± .03 1.99 ± .04 1.37 ± .02 ment in terms of inception score on CUB dataset (from 2.88 Human to 3.70), and 20.30% improvement on Oxford-102 (from Oxford 1.87 ± .03 / 1.13 ± .03 rank COCO 1.89 ± .04 / 1.11 ± .03 2.66 to 3.20). The better average human rank of our Stack- Table 1. Inception scores and average human ranks of our Stack- GAN also indicates our proposed method is able to generate GAN, GAWWN [24], and GAN-INT-CLS [26] on CUB, Oxford- more realistic samples conditioned on text descriptions. 102, and MS-COCO datasets. As shown in Figure 3, the 64×64 samples generated by datasets. The inception scores and average human ranks GAN-INT-CLS [26] can only reflect the general shape and for our proposed StackGAN and compared methods are re- color of the birds. Their results lack vivid parts (e.g., beak ported in Table 1. Representative examples are compared in and legs) and convincing details in most cases, which make Figure 3 and Figure 4. them neither realistic enough nor have sufficiently high res- Our StackGAN achieves the best inception score and av- olution. By using additional conditioning variables on loca-

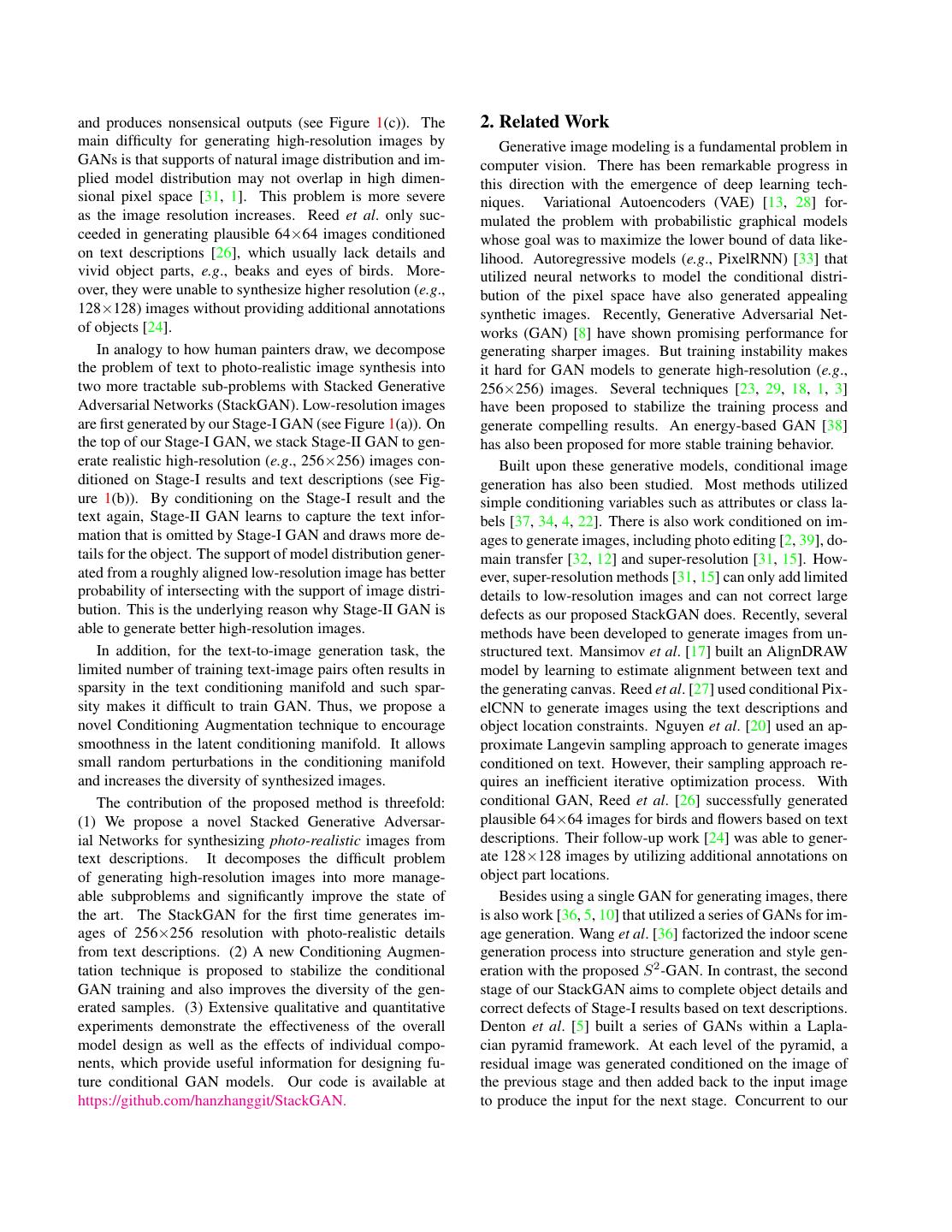

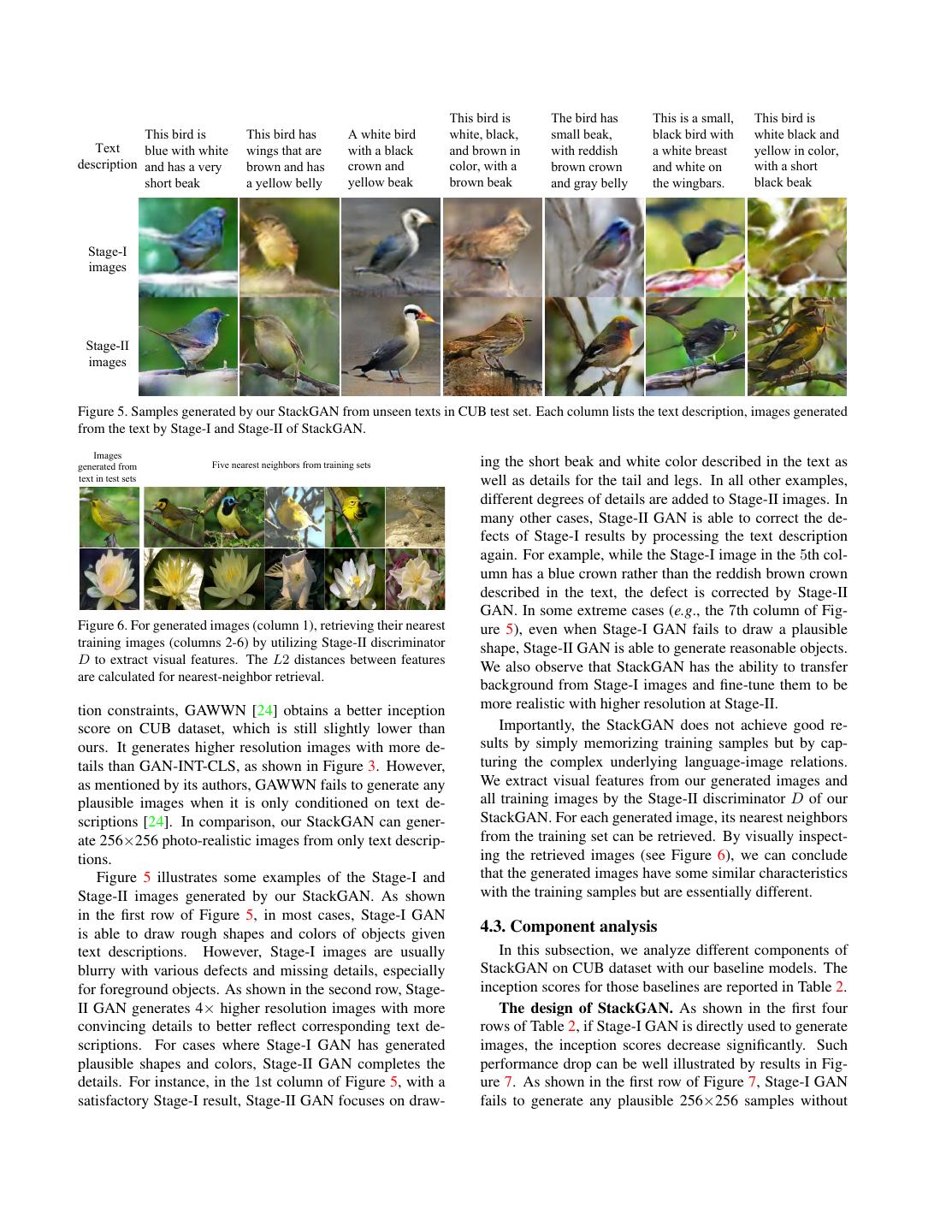

7 . This bird is The bird has This is a small, This bird is This bird is This bird has A white bird white, black, small beak, black bird with white black and Text blue with white wings that are with a black and brown in with reddish a white breast yellow in color, description and has a very brown and has crown and color, with a brown crown and white on with a short short beak a yellow belly yellow beak brown beak and gray belly the wingbars. black beak Stage-I images Stage-II images Figure 5. Samples generated by our StackGAN from unseen texts in CUB test set. Each column lists the text description, images generated from the text by Stage-I and Stage-II of StackGAN. Images generated from Five nearest neighbors from training sets ing the short beak and white color described in the text as text in test sets well as details for the tail and legs. In all other examples, different degrees of details are added to Stage-II images. In many other cases, Stage-II GAN is able to correct the de- fects of Stage-I results by processing the text description again. For example, while the Stage-I image in the 5th col- umn has a blue crown rather than the reddish brown crown described in the text, the defect is corrected by Stage-II GAN. In some extreme cases (e.g., the 7th column of Fig- Figure 6. For generated images (column 1), retrieving their nearest ure 5), even when Stage-I GAN fails to draw a plausible training images (columns 2-6) by utilizing Stage-II discriminator shape, Stage-II GAN is able to generate reasonable objects. D to extract visual features. The L2 distances between features We also observe that StackGAN has the ability to transfer are calculated for nearest-neighbor retrieval. background from Stage-I images and fine-tune them to be tion constraints, GAWWN [24] obtains a better inception more realistic with higher resolution at Stage-II. score on CUB dataset, which is still slightly lower than Importantly, the StackGAN does not achieve good re- ours. It generates higher resolution images with more de- sults by simply memorizing training samples but by cap- tails than GAN-INT-CLS, as shown in Figure 3. However, turing the complex underlying language-image relations. as mentioned by its authors, GAWWN fails to generate any We extract visual features from our generated images and plausible images when it is only conditioned on text de- all training images by the Stage-II discriminator D of our scriptions [24]. In comparison, our StackGAN can gener- StackGAN. For each generated image, its nearest neighbors ate 256×256 photo-realistic images from only text descrip- from the training set can be retrieved. By visually inspect- tions. ing the retrieved images (see Figure 6), we can conclude Figure 5 illustrates some examples of the Stage-I and that the generated images have some similar characteristics Stage-II images generated by our StackGAN. As shown with the training samples but are essentially different. in the first row of Figure 5, in most cases, Stage-I GAN is able to draw rough shapes and colors of objects given 4.3. Component analysis text descriptions. However, Stage-I images are usually In this subsection, we analyze different components of blurry with various defects and missing details, especially StackGAN on CUB dataset with our baseline models. The for foreground objects. As shown in the second row, Stage- inception scores for those baselines are reported in Table 2. II GAN generates 4× higher resolution images with more The design of StackGAN. As shown in the first four convincing details to better reflect corresponding text de- rows of Table 2, if Stage-I GAN is directly used to generate scriptions. For cases where Stage-I GAN has generated images, the inception scores decrease significantly. Such plausible shapes and colors, Stage-II GAN completes the performance drop can be well illustrated by results in Fig- details. For instance, in the 1st column of Figure 5, with a ure 7. As shown in the first row of Figure 7, Stage-I GAN satisfactory Stage-I result, Stage-II GAN focuses on draw- fails to generate any plausible 256×256 samples without

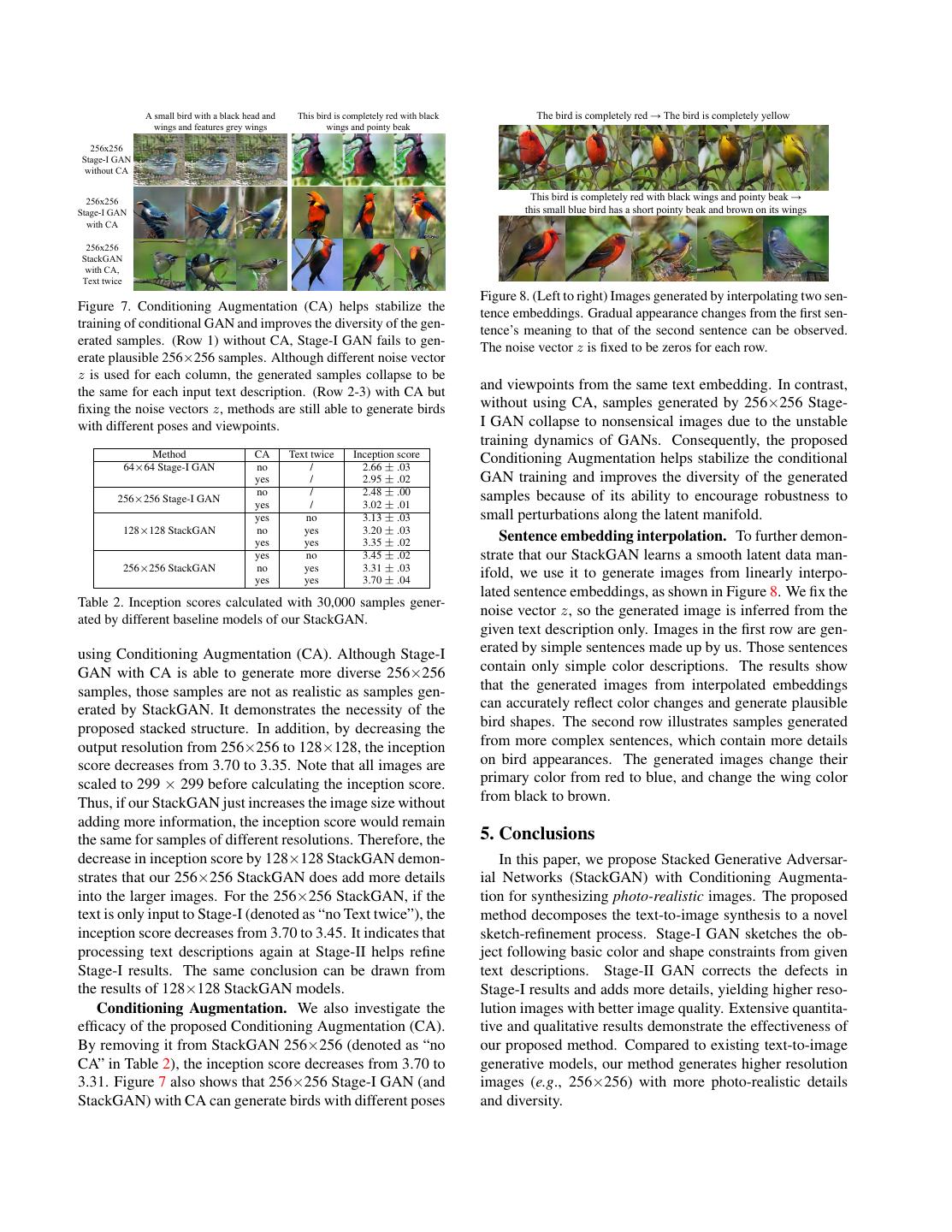

8 . A small bird with a black head and This bird is completely red with black The bird is completely red → The bird is completely yellow wings and features grey wings wings and pointy beak 256x256 Stage-I GAN without CA 256x256 This bird is completely red with black wings and pointy beak → Stage-I GAN this small blue bird has a short pointy beak and brown on its wings with CA 256x256 StackGAN with CA, Text twice Figure 8. (Left to right) Images generated by interpolating two sen- Figure 7. Conditioning Augmentation (CA) helps stabilize the tence embeddings. Gradual appearance changes from the first sen- training of conditional GAN and improves the diversity of the gen- tence’s meaning to that of the second sentence can be observed. erated samples. (Row 1) without CA, Stage-I GAN fails to gen- The noise vector z is fixed to be zeros for each row. erate plausible 256×256 samples. Although different noise vector z is used for each column, the generated samples collapse to be the same for each input text description. (Row 2-3) with CA but and viewpoints from the same text embedding. In contrast, fixing the noise vectors z, methods are still able to generate birds without using CA, samples generated by 256×256 Stage- with different poses and viewpoints. I GAN collapse to nonsensical images due to the unstable training dynamics of GANs. Consequently, the proposed Method CA Text twice Inception score 64×64 Stage-I GAN no / 2.66 ± .03 Conditioning Augmentation helps stabilize the conditional yes / 2.95 ± .02 GAN training and improves the diversity of the generated no / 2.48 ± .00 samples because of its ability to encourage robustness to 256×256 Stage-I GAN yes / 3.02 ± .01 yes no 3.13 ± .03 small perturbations along the latent manifold. 128×128 StackGAN no yes 3.20 ± .03 yes yes 3.35 ± .02 Sentence embedding interpolation. To further demon- yes no 3.45 ± .02 strate that our StackGAN learns a smooth latent data man- 256×256 StackGAN no yes 3.31 ± .03 yes yes 3.70 ± .04 ifold, we use it to generate images from linearly interpo- lated sentence embeddings, as shown in Figure 8. We fix the Table 2. Inception scores calculated with 30,000 samples gener- noise vector z, so the generated image is inferred from the ated by different baseline models of our StackGAN. given text description only. Images in the first row are gen- using Conditioning Augmentation (CA). Although Stage-I erated by simple sentences made up by us. Those sentences GAN with CA is able to generate more diverse 256×256 contain only simple color descriptions. The results show samples, those samples are not as realistic as samples gen- that the generated images from interpolated embeddings erated by StackGAN. It demonstrates the necessity of the can accurately reflect color changes and generate plausible proposed stacked structure. In addition, by decreasing the bird shapes. The second row illustrates samples generated output resolution from 256×256 to 128×128, the inception from more complex sentences, which contain more details score decreases from 3.70 to 3.35. Note that all images are on bird appearances. The generated images change their scaled to 299 × 299 before calculating the inception score. primary color from red to blue, and change the wing color Thus, if our StackGAN just increases the image size without from black to brown. adding more information, the inception score would remain the same for samples of different resolutions. Therefore, the 5. Conclusions decrease in inception score by 128×128 StackGAN demon- In this paper, we propose Stacked Generative Adversar- strates that our 256×256 StackGAN does add more details ial Networks (StackGAN) with Conditioning Augmenta- into the larger images. For the 256×256 StackGAN, if the tion for synthesizing photo-realistic images. The proposed text is only input to Stage-I (denoted as “no Text twice”), the method decomposes the text-to-image synthesis to a novel inception score decreases from 3.70 to 3.45. It indicates that sketch-refinement process. Stage-I GAN sketches the ob- processing text descriptions again at Stage-II helps refine ject following basic color and shape constraints from given Stage-I results. The same conclusion can be drawn from text descriptions. Stage-II GAN corrects the defects in the results of 128×128 StackGAN models. Stage-I results and adds more details, yielding higher reso- Conditioning Augmentation. We also investigate the lution images with better image quality. Extensive quantita- efficacy of the proposed Conditioning Augmentation (CA). tive and qualitative results demonstrate the effectiveness of By removing it from StackGAN 256×256 (denoted as “no our proposed method. Compared to existing text-to-image CA” in Table 2), the inception score decreases from 3.70 to generative models, our method generates higher resolution 3.31. Figure 7 also shows that 256×256 Stage-I GAN (and images (e.g., 256×256) with more photo-realistic details StackGAN) with CA can generate birds with different poses and diversity.

9 .References [21] M.-E. Nilsback and A. Zisserman. Automated flower classi- fication over a large number of classes. In ICCVGIP, 2008. [1] M. Arjovsky and L. Bottou. Towards principled methods for 5 training generative adversarial networks. In ICLR, 2017. 2 [22] A. Odena, C. Olah, and J. Shlens. Conditional image synthe- [2] A. Brock, T. Lim, J. M. Ritchie, and N. Weston. Neural photo sis with auxiliary classifier gans. In ICML, 2017. 2 editing with introspective adversarial networks. In ICLR, [23] A. Radford, L. Metz, and S. Chintala. Unsupervised repre- 2017. 2 sentation learning with deep convolutional generative adver- [3] T. Che, Y. Li, A. P. Jacob, Y. Bengio, and W. Li. Mode sarial networks. In ICLR, 2016. 1, 2 regularized generative adversarial networks. In ICLR, 2017. [24] S. Reed, Z. Akata, S. Mohan, S. Tenka, B. Schiele, and 2 H. Lee. Learning what and where to draw. In NIPS, 2016. 1, [4] X. Chen, Y. Duan, R. Houthooft, J. Schulman, I. Sutskever, 2, 3, 5, 6, 7 and P. Abbeel. Infogan: Interpretable representation learning by information maximizing generative adversarial nets. In [25] S. Reed, Z. Akata, B. Schiele, and H. Lee. Learning deep NIPS, 2016. 2 representations of fine-grained visual descriptions. In CVPR, 2016. 3, 5 [5] E. L. Denton, S. Chintala, A. Szlam, and R. Fergus. Deep generative image models using a laplacian pyramid of adver- [26] S. Reed, Z. Akata, X. Yan, L. Logeswaran, B. Schiele, and sarial networks. In NIPS, 2015. 1, 2 H. Lee. Generative adversarial text-to-image synthesis. In [6] C. Doersch. Tutorial on variational autoencoders. ICML, 2016. 1, 2, 3, 5, 6 arXiv:1606.05908, 2016. 3 [27] S. Reed, A. van den Oord, N. Kalchbrenner, V. Bapst, [7] J. Gauthier. Conditional generative adversarial networks for M. Botvinick, and N. de Freitas. Generating interpretable convolutional face generation. Technical report, 2015. 3 images with controllable structure. Technical report, 2016. [8] I. J. Goodfellow, J. Pouget-Abadie, M. Mirza, B. Xu, 2 D. Warde-Farley, S. Ozair, A. C. Courville, and Y. Bengio. [28] D. J. Rezende, S. Mohamed, and D. Wierstra. Stochastic Generative adversarial nets. In NIPS, 2014. 1, 2, 3 backpropagation and approximate inference in deep genera- [9] K. He, X. Zhang, S. Ren, and J. Sun. Deep residual learning tive models. In ICML, 2014. 2 for image recognition. In CVPR, 2016. 4 [29] T. Salimans, I. J. Goodfellow, W. Zaremba, V. Cheung, [10] X. Huang, Y. Li, O. Poursaeed, J. Hopcroft, and S. Belongie. A. Radford, and X. Chen. Improved techniques for training Stacked generative adversarial networks. In CVPR, 2017. 2, gans. In NIPS, 2016. 2, 5 3 [30] C. Szegedy, V. Vanhoucke, S. Ioffe, J. Shlens, and Z. Wojna. [11] S. Ioffe and C. Szegedy. Batch normalization: Accelerating Rethinking the inception architecture for computer vision. In deep network training by reducing internal covariate shift. In CVPR, 2016. 5 ICML, 2015. 5 [31] C. K. Snderby, J. Caballero, L. Theis, W. Shi, and F. Huszar. [12] P. Isola, J.-Y. Zhu, T. Zhou, and A. A. Efros. Image-to-image Amortised map inference for image super-resolution. In translation with conditional adversarial networks. In CVPR, ICLR, 2017. 2 2017. 2 [32] Y. Taigman, A. Polyak, and L. Wolf. Unsupervised cross- [13] D. P. Kingma and M. Welling. Auto-encoding variational domain image generation. In ICLR, 2017. 2 bayes. In ICLR, 2014. 2, 3 [33] A. van den Oord, N. Kalchbrenner, and K. Kavukcuoglu. [14] A. B. L. Larsen, S. K. Sønderby, H. Larochelle, and Pixel recurrent neural networks. In ICML, 2016. 2 O. Winther. Autoencoding beyond pixels using a learned [34] A. van den Oord, N. Kalchbrenner, O. Vinyals, L. Espeholt, similarity metric. In ICML, 2016. 3 A. Graves, and K. Kavukcuoglu. Conditional image genera- [15] C. Ledig, L. Theis, F. Huszar, J. Caballero, A. Aitken, A. Te- tion with pixelcnn decoders. In NIPS, 2016. 2 jani, J. Totz, Z. Wang, and W. Shi. Photo-realistic single im- [35] C. Wah, S. Branson, P. Welinder, P. Perona, and S. Belongie. age super-resolution using a generative adversarial network. The Caltech-UCSD Birds-200-2011 Dataset. Technical Re- In CVPR, 2017. 2 port CNS-TR-2011-001, California Institute of Technology, [16] T.-Y. Lin, M. Maire, S. Belongie, J. Hays, P. Perona, D. Ra- 2011. 5 manan, P. Dollr, and C. L. Zitnick. Microsoft coco: Common [36] X. Wang and A. Gupta. Generative image modeling using objects in context. In ECCV, 2014. 5 style and structure adversarial networks. In ECCV, 2016. 2 [17] E. Mansimov, E. Parisotto, L. J. Ba, and R. Salakhutdinov. [37] X. Yan, J. Yang, K. Sohn, and H. Lee. Attribute2image: Con- Generating images from captions with attention. In ICLR, ditional image generation from visual attributes. In ECCV, 2016. 2 2016. 2 [18] L. Metz, B. Poole, D. Pfau, and J. Sohl-Dickstein. Unrolled [38] J. Zhao, M. Mathieu, and Y. LeCun. Energy-based generative generative adversarial networks. In ICLR, 2017. 2 adversarial network. In ICLR, 2017. 2 [19] M. Mirza and S. Osindero. Conditional generative adversar- [39] J. Zhu, P. Kr¨ahenb¨uhl, E. Shechtman, and A. A. Efros. Gen- ial nets. arXiv:1411.1784, 2014. 3 erative visual manipulation on the natural image manifold. [20] A. Nguyen, J. Yosinski, Y. Bengio, A. Dosovitskiy, and In ECCV, 2016. 2 J. Clune. Plug & play generative networks: Conditional iter- ative generation of images in latent space. In CVPR, 2017. 2

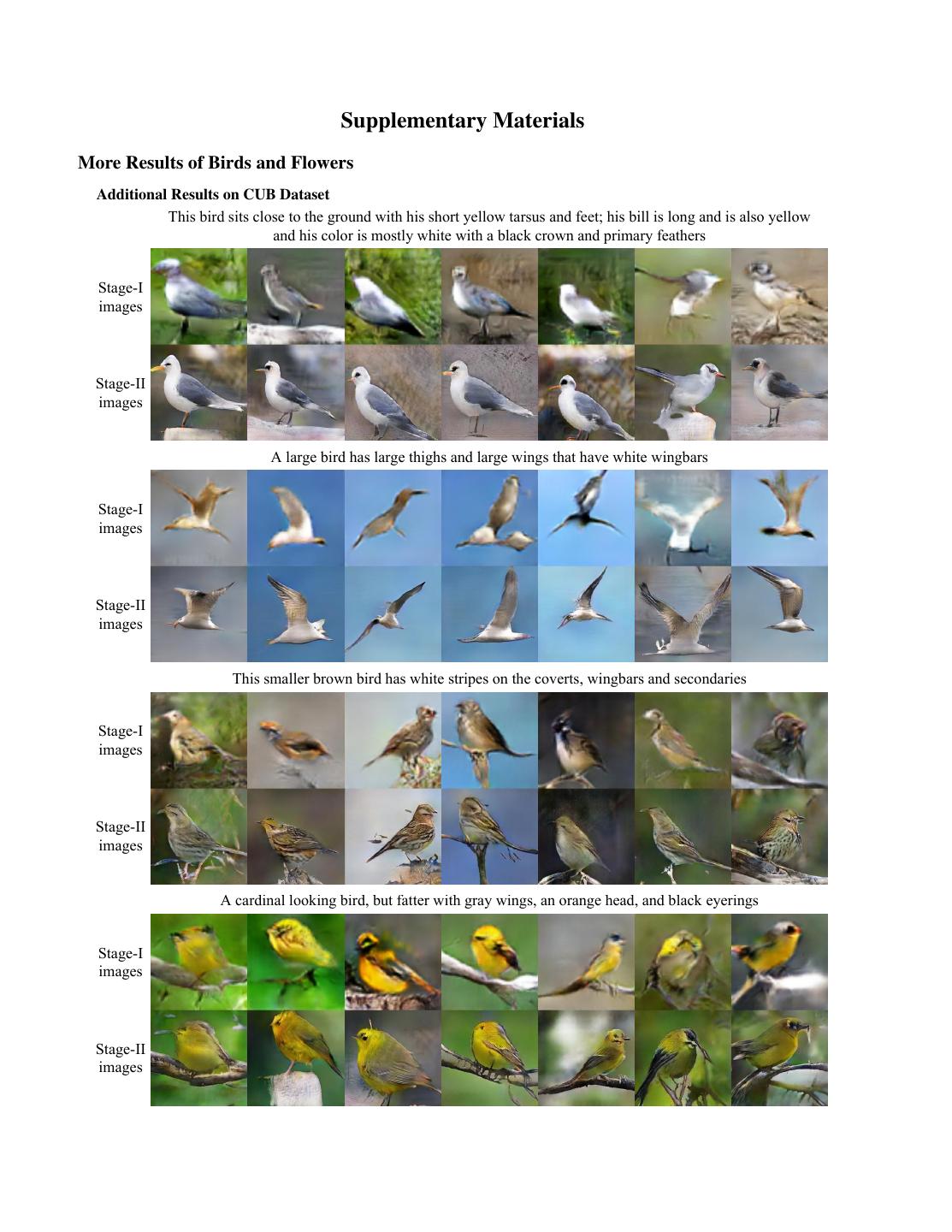

10 . Supplementary Materials More Results of Birds and Flowers Additional Results on CUB Dataset This bird sits close to the ground with his short yellow tarsus and feet; his bill is long and is also yellow and his color is mostly white with a black crown and primary feathers Stage-I images Stage-II images A large bird has large thighs and large wings that have white wingbars Stage-I images Stage-II images This smaller brown bird has white stripes on the coverts, wingbars and secondaries Stage-I images Stage-II images A cardinal looking bird, but fatter with gray wings, an orange head, and black eyerings Stage-I images Stage-II images

11 . The small bird has a red head with feathers that fade from red to gray from head to tail Stage-I images Stage-II images This bird is black with green and has a very short beak Stage-I images Stage-II images This bird is light brown, gray, and yellow in color, with a light colored beak Stage-I images Stage-II images This bird has wings that are black and has a white belly Stage-I images Stage-II images

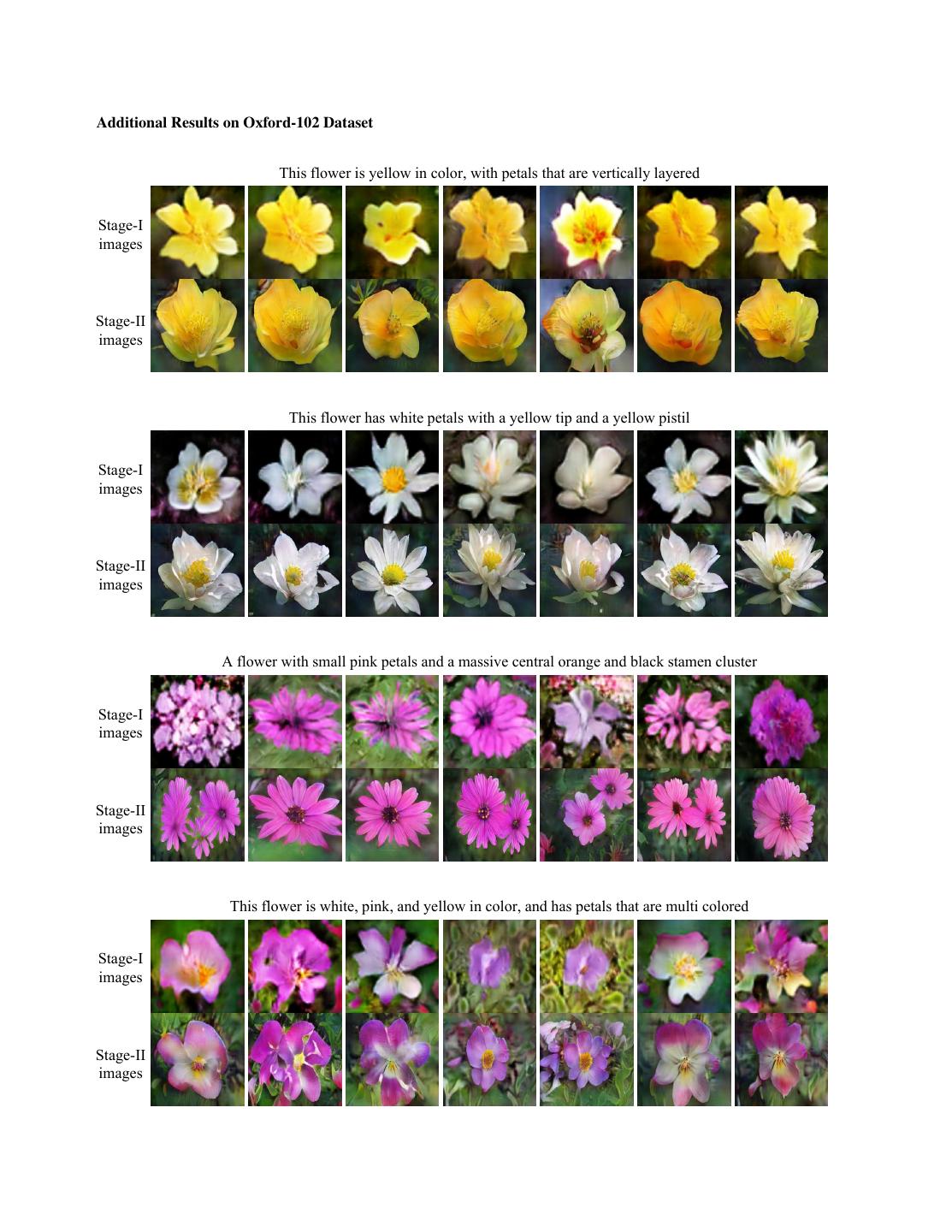

12 .Additional Results on Oxford-102 Dataset This flower is yellow in color, with petals that are vertically layered Stage-I images Stage-II images This flower has white petals with a yellow tip and a yellow pistil Stage-I images Stage-II images A flower with small pink petals and a massive central orange and black stamen cluster Stage-I images Stage-II images This flower is white, pink, and yellow in color, and has petals that are multi colored Stage-I images Stage-II images

13 . This flower has petals that are yellow with shades of orange Stage-I images Stage-II images Failure Cases The main reason for failure cases is that Stage-I GAN fails to generate plausible rough shapes or colors of the objects. CUB failure cases: This medium sized bird is This bird has a primarily black The medium Colored bill dark brown and has a large Bird has brown sized bird has a with a white overall body wingspan and a This particular Grey bird with body feathers, dark grey color, a ring around color, with a long black bill Text bird has a black flat beak brown breast black downward it on the small white with a strip of description brown body with grey and feathers, and curved beak, and upper part patch around the white at the and brown bill white big wings brown beak long wings near the bill base of the bill beginning of it Stage-I images Stage-II images Oxford-102 failure cases: This flower The flower A flower that A unique yellow This flower is This is a light is yellow have large has white petals The petals of flower with no pink and yellow colored flower and green in petals that are with some Text this flower are visible pistils in color, with with many color, with pink with tones of yellow description white with a protruding from petals that are different petals petals that yellow on some and green large stigma the center oddly shaped on a green stem are ruffled of the petals filaments Stage-I images Stage-II images

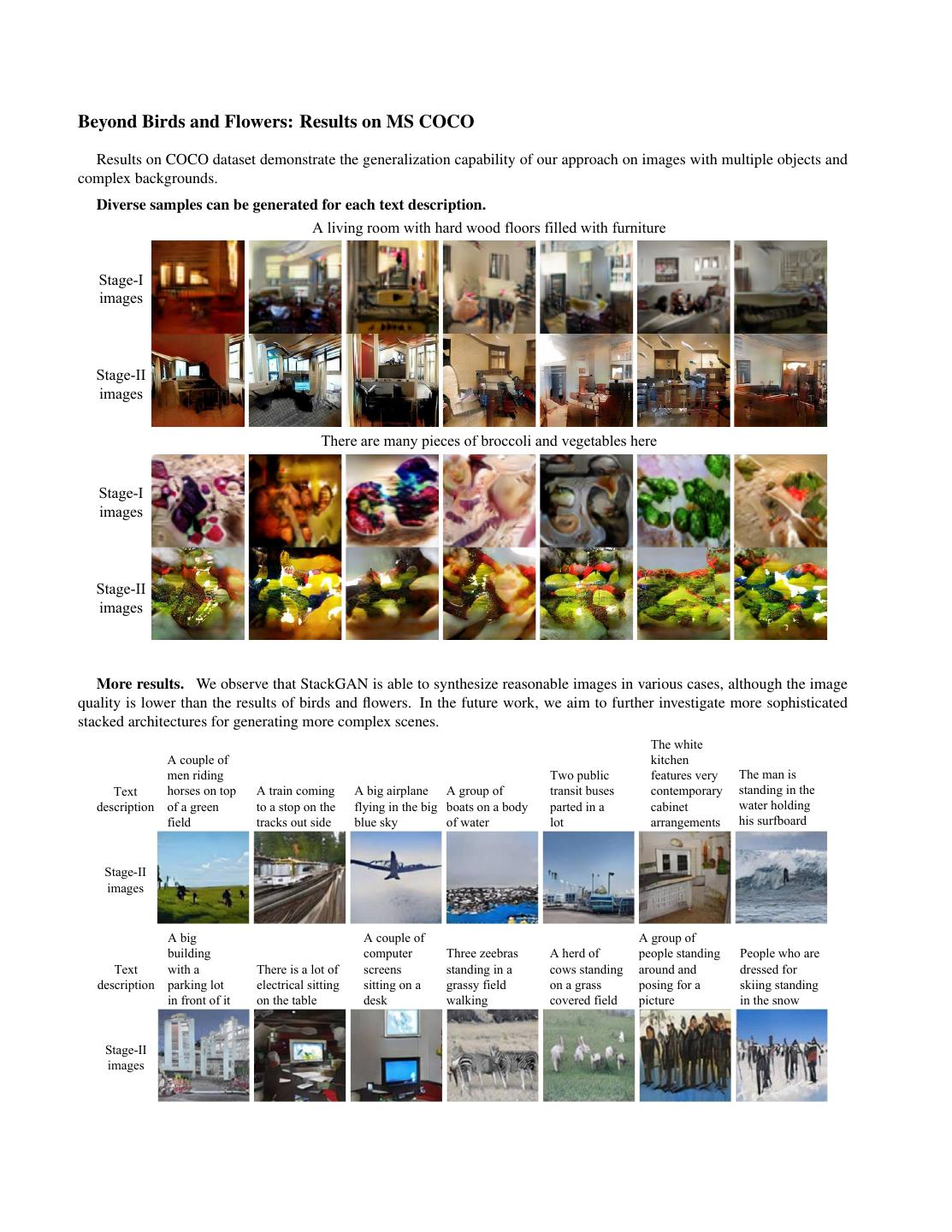

14 .Beyond Birds and Flowers: Results on MS COCO Results on COCO dataset demonstrate the generalization capability of our approach on images with multiple objects and complex backgrounds. Diverse samples can be generated for each text description. A living room with hard wood floors filled with furniture Stage-I images Stage-II images There are many pieces of broccoli and vegetables here Stage-I images Stage-II images More results. We observe that StackGAN is able to synthesize reasonable images in various cases, although the image quality is lower than the results of birds and flowers. In the future work, we aim to further investigate more sophisticated stacked architectures for generating more complex scenes. The white A couple of kitchen men riding Two public features very The man is Text horses on top A train coming A big airplane A group of transit buses contemporary standing in the description of a green to a stop on the flying in the big boats on a body parted in a cabinet water holding field tracks out side blue sky of water lot arrangements his surfboard Stage-II images A big A couple of A group of building computer Three zeebras A herd of people standing People who are Text with a There is a lot of screens standing in a cows standing around and dressed for description parking lot electrical sitting sitting on a grassy field on a grass posing for a skiing standing in front of it on the table desk walking covered field picture in the snow Stage-II images