- 快召唤伙伴们来围观吧

- 微博 QQ QQ空间 贴吧

- 文档嵌入链接

- 复制

- 微信扫一扫分享

- 已成功复制到剪贴板

MVPNet

展开查看详情

1 . MVPNet: Multi-View Point Regression Networks for 3D Object Reconstruction from A Single Image Jinglu Wang† Bo Sun‡∗ Yan Lu† † ‡ Microsoft Research Peking University {jinglwa, v-bosu, yanlu}@microsoft.com arXiv:1811.09410v1 [cs.CV] 23 Nov 2018 GT surface 2D Projection GT 1-VPC GT MVPC Abstract In this paper, we address the problem of reconstructing an ob- N Views ject’s surface from a single image using generative networks. Triangulate First, we represent a 3D surface with an aggregation of dense 𝑥 point clouds from multiple views. Each point cloud is em- 𝑦 Lift to 3D 𝑧 bedded in a regular 2D grid aligned on an image plane of a 𝑣 Geometric Loss viewpoint, making the point cloud convolution-favored and 2D grid 3D mesh ordered so as to fit into deep network architectures. The point (a) Multi-View Point Clouds (MVPC) clouds can be easily triangulated by exploiting connectivities of the 2D grids to form mesh-based surfaces. Second, we pro- Predicted 1-VPC pose an encoder-decoder network that generates such kind Triangulate View 1 of multiple view-dependent point clouds from a single im- Input image Lift to 3D Predicted MVPC age by regressing their 3D coordinates and visibilities. We also introduce a novel geometric loss that is able to inter- View 2 MVPNet pret discrepancy over 3D surfaces as opposed to 2D projec- tive planes, resorting to the surface discretization on the con- structed meshes. We demonstrate that the multi-view point regression network outperforms state-of-the-art methods with View N a significant improvement on challenging datasets. (b) Multi-View Point Network (MVPNet) Figure 1: (a) A surface is represented by MVPC. Each pixel Introduction in a 1-VPC stores the backprojected surface point (x, y, z) 3D object reconstruction from a single RGB image is an in- from this pixel and its visibility v. The stored 3D points are herently ill-posed problem as many configurations of shape, triangulated according to the 2D grid on the image plane texture, lighting, and camera can give rise to the same ob- and their normals are shown to indicate surface orientation. served image. Recently, the advanced deep learning mod- (b) Given an RGB image, the MVPNet generates a set of els allow for the rethinking of this task as generating re- 1-VPCs and their union forms the predicted MVPC. The ge- alistic samples from underlying distributions. Regular rep- ometric loss measures discrepancy between predicted and resentations are favored by deep convolutional neural net- groundtruth MVPC. works for dense data sampling, weight sharing, etc. Al- though meshes are the predominant representations for 3D geometries, their irregular structures are not easy for encod- In order to depict dense and detailed surfaces, we in- ing and decoding. Most extant deep nets (Choy et al. 2016; troduce an efficient and expressive view-based represen- Tulsiani et al. 2017; Wu et al. 2016; Zhu et al. 2017; tation inspired by recent studies on multi-view projec- Girdhar et al. 2016) employ 3D volumetric grids. However, tions (Kalogerakis et al. 2017; Soltani et al. 2017; Shin, they suffer from high computational complexity for dense Fowlkes, and Hoiem 2018). In particular, we propose to rep- sampling. A few recent methods (Fan, Su, and Guibas 2017; resent a surface by dense point clouds visible from multiple Diamanti, Mitliagkas, and Guibas 2017) advocate the un- viewpoints. The arrangement of viewpoints are configured ordered point cloud representation. The unordered property to cover most of the surface. The multi-view point clouds requires additional computation to establish a one-to-one (MVPC) are illustrated in Fig. 1 (a). Each point cloud is mapping for point pairs. It often yields sparse results be- stored in a 2D grid embedded in a viewpoint’s image plane. cause of costly mapping algorithms. A 1-view point cloud (1-VPC) looks like a depth map, but ∗ The work was done when Bo Sun was an intern at MSR. each pixel stores the 3D coordinates and visibility informa- Copyright c 2019, Association for the Advancement of Artificial tion rather than the depth of the backprojected surface point Intelligence (www.aaai.org). All rights reserved. from this pixel. The backprojection transformation offers a

2 .one-to-one mapping of point sets in 1-VPCs with equal cam- View-based methods. As the drawbacks of voxel-based era parameters. Meanwhile, local connectivities of the 3D CNNs are obvious, some methods adopt view-based repre- points are introduced from the 2D grids, which facilitate to sentations. They project surfaces on image planes with reg- form a triangular mesh based on such backprojected points. ular 2D grids that allows planar convolution. A few meth- Accordingly, the surface reconstruction problem is for- ods (Park et al. 2017; Zhou et al. 2016; Chen et al. 2018; mulated as the regression of values stored in MVPC. We 2017) achieve impressive results in synthesizing novel views employ an encoder-decoder network as a conditional sam- from a single view. Tatarchenko et al.(Tatarchenko, Dosovit- pler to generate the underlying MVPC, as shown in Fig. 1 skiy, and Brox 2016) utilize CNNs to infer images and depth (b). The encoder extracts image features and combines them maps of arbitrary views given an RGB image, and then fuse with different viewpoints’ features respectively. The decoder the depth maps to yield a 3D surface. Soltani et al.(Soltani consists of multiple weight-shared branches, each of which et al. 2017) synthesize multi-view depth maps from a single generates a view-dependent point cloud. The union of all or multiple depth maps. Since depth maps inherently con- 1-VPCs forms the final MVPC. We propose a novel geomet- tain geometric information, our task, which takes a single ric loss that measures discrepancies over real 3D surfaces RGB image as an input is much more challenging. Lin et as opposed to 2D planes. Unlike previous view-based meth- al.(Lin, Kong, and Lucey 2018) also generate points of mul- ods processing features in 2D projective spaces (i.e., image tiple views with a generative network. These methods all fo- planes) and neglecting the information loss through dimen- cus on predicting the intermediate information in 2D projec- sion reduction from 3D to 2D, the proposed MVPC allow tive planar spaces yet ignore real 3D spatial correlation and us to discretize integrals of surface variations over the con- multi-view consistency. Our method incorporates the spatial structed triangular mesh. The geometric loss integrating vol- correlation of surface points and further enforces multi-view ume variations, prediction confidences and multi-view con- consistency to achieve more accurate and robust reconstruc- sistencies contributes to high reconstruction performance. tions. Point-based methods. Some methods generate an un- Related Work ordered point cloud from an image by deep learning. Su et al.(Fan, Su, and Guibas 2017) are the first to study the prob- Mesh-based methods. Mesh representation has been ex- lem. The unordered property of a point cloud enjoys high tensively used to improve and manipulate surface interfaces. flexibility (Qi et al. 2017), but it increases computational In particular, surface reconstruction is usually posed to de- complexity due to lack of correspondences. This makes such form an initial mesh to minimize a variational energy func- methods not scalable, resulting in sparse points. tional in the spirit of data fidelity. (Delaunoy and Prados 2011; Pons, Keriven, and Faugeras 2007; Wang et al. ; Approach Liu et al. 2015) are the pioneers of reconstructing mesh- based surface from multi-views. These deformable mesh In this section, we first formally introduce the MVPC rep- methods calculate the integral over the whole surface and resentation for depicting 3D surfaces efficiently and expres- thus capture complete properties on the surface. However, sively. Then, we detail the MVPNet architecture and the geo- irregular connectivities of mesh representation make it diffi- metric loss for generating the underlying MVPC conditioned cult to leverage the advance of convolutional architectures. on an input image. Recent methods (Pontes et al. 2017) use linear combinations MVPC Representation of a dictionary of CAD models and learn the parameters of the combination to represent the models, which are limited An object’s surface S is considered as an aggregation of par- N to the capacity of the constructed dictionary. We are inspired tial surfaces i=1 Si visible from a set of predefined view- by variational methods that have geometric interpretations points {ci |i = 1, ..., N }. Each partial surfaces Si is dis- for optimization formulation. Important geometric clues are cretized and parameterized by the aligned 2D grid on the integrated into the loss function, which contributes a supe- image plane of ci , as shown in Fig. 1 (a). Each pixel xk on rior performance significantly. the grid stores the 3D point xk = (xk , yk , zk ) backprojected from xk onto S and the visibility vki of xk from ci . vki is Voxel-based methods. When learning methods dominate set to 1 if xk is visible from ci , otherwise 0. The visible the recognition tasks, volumetric representation (Girdhar et 3D points are triangulated by connecting them with the 2D al. 2016; Wu et al. 2016; Choy et al. 2016; Wu et al. 2017; grid’s horizontal, vertical, and one of the diagonal edges to Tulsiani et al. 2017) is more favored because of its regu- form a mesh-based surface. Such multiple view-dependent lar grid-like structure that suites convolutional operations. parameterized surfaces are named multi-view point clouds, N Tulsiani and Zhou (Tulsiani et al. 2017) formulate a differ- MVPC in short, M = i=0 Mi , where Mi denotes a 1-view entiable ray consistency term to enforce view consistency point cloud, 1-VPC in short. Let X = {xk } denote all the on the voxels with the supervision of multi-view observa- 3D points in M. tion. 3D-R2N2 (Choy et al. 2016) learns to aggregate voxel MVPC inherit the advantage of efficiency from general occupancy from sequential input images and can obtain ro- view-based representations. Unlike volumetric representa- bust results. Voxel-based methods are limited by the cubic tion using costly 3D convolution, 2D convolution is per- growth rate of both memory and computation time, leading formed on the 2D grids, which encourages higher reso- to low-resolution of grids. lutions for denser surface sampling. Meanwhile, MVPC

3 . N MVPC M = i=1 Mi is of shape N × H × W × 4, where Decoder 𝑣𝑘𝑖 , 𝐱𝑘 H and W denote the height and width of a 1-VPC. The last channel corresponds to a 3D coordinate xk = (xk , yk , zk ) Encoder and visibility vki of a point xk . 𝐼 𝑧 The encoder is a composition of convolution and leaky fc ReLU layers. The camera parameters are encoded with fully connected layers. The decoder contains a sequence 𝑁 𝑀𝑖=1,…,𝑁 Share weights of transposed-convolution and leaky ReLU layers. The last layer is activated with the tanh functions, responsible for fc regressing 3D coordinates and visibilities of points. Imple- fc 𝐜𝑖 mentation details are described in the experiment section. 𝑐𝑖=1,…,𝑁 Geometric Loss While most point generation methods (Fan, Su, and Guibas 2017; Lin, Kong, and Lucey 2018; Soltani et al. 2017) adopt point-wise distance metrics, they disregard geometric char- Figure 2: MVPNet architecture. Given an input image I, acteristics of surfaces. These networks attempt to predict MVPNet consisting of an encoder and a decoder regresses “mean” shapes (Fan, Su, and Guibas 2017), failing to pre- the N 1-VPCs {Mi } for {ci }, i = 1, .., N respectively. N serve fine details. concatenated features (z, ci ) are fed into N branches of the We propose a geometric loss (GeoLoss) that is able to decoder, of which the branches share weights. capture variances over 3D surfaces rather than over sparse point sets or 2D projective planes. We expand the GeoLoss to be differentiable for neuron networks and also to be ro- encode one-to-one mapping of predicted points X and bust against noise and incompletion. The GeoLoss is made groudtruth points X explicitly. Induced by the same view- up of three components: point ci , pixels with the same 2D coordinates xk are de- LGeo = Lptd + αLvol + βLmv (1) fined to store the same surface point xk . In other words, the groundtruth and predicted 1-VPC of the same viewpoint where Lptd is the sum of distances between corresponding store the points in the same order. Compared to unordered point pairs, Lvol denotes the quasi-volume term measuring point cloud representations that require additional computa- discrepancy of local volumes, and Lmv is the multi-view tion to construct point-wise mapping, MVPC have superior consistency term. Coefficients α and β are the weights bal- performance in computation. ancing different losses. MVPC express not a simple combination of multi-view projections but a discrete approximation to a real 3D surface. Point-wise distance term. The points in groundtruth and On the one hand, A triangular mesh is constructed for each predicted 1-VPC have a one-to-one mapping according to 1-VPC, and thus we can formulate losses based on geome- the definition of MVPC, illustrated in Fig. 3 (a). 2D pix- tries on 3D surfaces rather than on 2D projections. Note that els with equal 2D coordinates are defined to store the same the edges inherited from 2D grids are not all real in 3D, e.g., surface point induced by the same viewpoint. Therefore, the edges connecting points on depth discontinuities are fake. sum of point-wise distances for groundtruth and predicted We deal with the fake edges by penalizing them largely in 1-VPC is the L2 loss. The total sum of point-wise distances the loss formulation. On the other hand, we carefully select of MVPC is given by: relatively few yet evenly distributed viewpoints on a viewing sphere that can cover most of the targeting surface. Different N numbers of viewpoints are discussed in the experiment sec- tion. We also consider multi-view consistency constraints in Lptd = ||Mi (x) − Mi (x)||2 (2) overlap regions to improve the expressiveness of MVPC. i x∈Mi MVPNet Architecture where x is a 2D pixel, Mi (x) and Mi (x) denotes the 3D coordinates stored in predicted 1-VPC Mi and groundtruth We exploit an encoder-decoder generative network architec- ture and incorporate camera parameters into the network to 1-VPC Mi at x. Here we take the visibility into account by generate view-dependent point clouds. The network archi- setting Mi (x) to an infinite point F3 (a point at the far clip- tecture is illustrated in Fig. 2. The encoder learns to map ping plane practically). an image I to an embedding space to obtain a latent feature Neural networks tend to predict a mean shape averaging z. Each camera matrix ci is first transformed to a higher- out the space of uncertainty using L2 or L1 loss. Point-wise dimensional hidden representation ci , serving as a view in- distances neglecting local interactions do not fully express dicator, and then is concatenated with z to get (z, ci ). The geometric discrepancy between surfaces. Moreover, this decoder that converts (z, ci ) to a 1-VPC Mi indicated ci metric may give rise to erroneous reconstructions around learns the projective transformation and space completion. occluding contours, because minor errors on 2D projective The decoder shares weights among N branches. The output planes lead to large 3D deviations at depth continuities.

4 . 𝑖𝑗 𝑖𝑗 Camera 𝑀𝑖 𝑂෨𝑖 𝑀𝑖 𝑂෨𝑖 GT Surface 𝐱1′ 𝐱 𝑘 ෩𝑖 GT 1-VPC 𝑀 ෩𝑗 (𝑥′൯ 𝛱𝑖 ∘ 𝑀 𝑥𝑘 𝑥 𝐜 𝐱1′ 𝔽3 Visible surface 𝐱 3′ ෩𝑗 𝑀 𝑂෨𝑗 𝑖𝑗 𝑖𝑗 𝑂෨𝑗 𝑀𝑗 Predicted 1-VPC 𝑀𝑖 Point-wise distance 𝐱3 𝐱𝑘 𝑥′ 𝔽3 Terminate point Far clipping plane 𝔽3 𝛱𝑗 ∘ 𝑀𝑖 (𝑥൯ (b) Quasi-volume discrepancy (a) Point-wise distance term. (b) Quasi-volume term. (c) Multi-view consistency term. Figure 3: Loss functions. (a) Point-wise distances for 1-VPC. Induced by the same viewpoint, groundtruth and predicted points (xk and xk ) stored in xk indicate the same surface point. (b) Quasi-volume discrepancy for typical examples. The local volume discrepancies of points around depth discontinuities, e.g., x2 and x4 , are largely penalized by keeping fake connectivities (red dashed lines). (c) Oiij is the projection on view i of the overlap region visible from view i and j. Ojij is the projected overlap region on view j. Considering the overlap region, the multi-view consistency term minimizes the sum of distances between 3D points stored in pixels x ∈ Mi and its reprojected pixel Πj ◦ Mi (x) ∈ Mj , and verse visa. Quasi-volume term. The limitation of point-wise dis- loss, e.g., x2 in Fig. 3 (b). The quasi-volume term implicitly tance metrics motivates us to formulate discrepancies over handles the challenges introduced by occluding contours. surfaces. Inspired by the volume-preserving constraints used Multi-view consistency term. Partial surfaces of an ob- in variational surface deformation (Eckstein et al. 2007), ject visible from different viewpoints may have overlap, we propose a quasi-volume discrepancy metric to better de- which can be reached by letting points from different views scribe the surface discrepancy. This term is able to charac- attract one another. The consistency serves as links between terize fine details and deal with occluding contours. groundtruth and predicted 3D points stored in a pair of cor- Let us first define the volume discrepancy between pre- responding pixels from two different views. Fig. 3 (c) shows dicted and groundtruth continuous surfaces: an example for two views. Note that the consistency only ex- ists at overlap regions. We first compute the projected over- Lvol (S, S) = (x − x) · ndx (3) S lap region Oiij on view i by rendering groundtruth 1-VPC where x and x are 3D points on predicted surface S and Mj on view i, and get Ojij by rendering Mi on view j. We groundtruth surface S, dx is an area element of a surface minimize the sum of two distances between the stored 3D and n is the outward normal to the surface at point x, (·) points in two corresponding pixels and their reprojected pix- denotes the inner product operator. els in the other view. For each pixel x in Oiij , the predicted The discrete volume discrepancy of the MVPC represen- 3D coordinate is Mi (x). The reprojected pixel on view j is tation can be deduced as: Πj ◦ Mi (x), where Πj denotes the projection matrix of view N j. Similarly, the pixel x at groundtruth 1-VPC Mj corre- Lvol = Vi (x)(Mi (x) − Mi (x)) · Ni (x) (4) sponds to the pixel Πi ◦ Mj (x ) in the predicted 1-VPC Mi . i x∈Mi Therefore, the multi-view consistency term takes the form: where Vi is the groundtruth visibility map and Ni is the Lmv = ( ||Mi (x) − Mj (Πj ◦ Mi (x))||2 groundtruth area-weighted normal map of view i. Formally, i,j x∈Oiij for each pixel x with its backprojected surface point x, the normal map of view i is Ni (x) = ∆∈Ω(x) |∆|n(x), where + ||Mj (x) − Mi (Πi ◦ Mj (x))||2 ) (5) ∆ denotes a mesh triangle, Ω(x) contains the 1-ring trian- x∈Ojij gles around point x, n(x) is the outward normal at point x. The multi-view consistency term does not directly mini- A detailed proof is presented in the supplemental material. mize distances between two predicted 1-VPCs but leverages Note that we use the groundtruth visibility Vi (·), and thus the correspondences between predictions and groundtruths. add a cross entropy loss accounting for the visibilities. This is because erroneous 3D coordinates in predictions will Equation 4 formulates the volume discrepancy for visible introduce false correspondences, resulting in divergence or parts. We complement it with invisible parts, and name it falling into a trivial solution. as quasi-volume discrepancy, as illustrated in Fig. 3 (b). We assign the background pixels with a terminate point F3 at Experiment far clipping plane. Thus, the points at boundaries of Mi will achieve a large discrepancy gain, which means they are of Implementation high weights in the loss function, e.g., x4 in Fig. 3 (b). Sim- We show the architecture of MVPNet in Fig. 2. The input ilarly, points at occluding contours experience large volume RGB image is of size 128 × 128. The output surface coor-

5 . Table 1: Quantitative comparison to the state-of-the-arts with per-category voxel IoU. plane bench cabinet car chair display lamp speaker firearm couch table phone vessel mean R2N2(Choy et al. 2016)(1 view) 0.513 0.421 0.716 0.798 0.466 0.468 0.381 0.662 0.544 0.628 0.513 0.661 0.513 0.56 voxel R2N2(Choy et al. 2016)(5 views) 0.561 0.527 0.772 0.836 0.550 0.565 0.421 0.717 0.600 0.706 0.580 0.754 0.610 0.630 PTN-Comb(Yan et al. 2016) 0.584 0.508 0.711 0.738 0.470 0.547 0.422 0.587 0.610 0.653 0.515 0.773 0.551 0.590 CNN-Vol(Yan et al. 2016) 0.575 0.514 0.697 0.735 0.445 0.539 0.386 0.548 0.603 0.647 0.514 0.769 0.5445 0.578 MVPNet Depth point Soltani(Soltani et al. 2017) 0.587 0.524 0.698 0.743 0.529 0.679 0.480 0.586 0.635 0.59 0.593 0.789 0.604 0.618 Su(Fan, Su, and Guibas 2017) 0.601 0.55 0.771 0.831 0.544 0.552 0.462 0.737 0.604 0.708 0.606 0.749 0.611 0.640 GeoLoss(N =4) 0.655 0.578 0.664 0.709 0.546 0.653 0.486 0.573 0.676 0.630 0.561 0.783 0.633 0.627 GeoLoss(N =6) 0.624 0.579 0.677 0.719 0.543 0.636 0.498 0.578 0.682 0.636 0.548 0.800 0.643 0.628 GeoLoss(N =8) 0.622 0.576 0.691 0.724 0.540 0.643 0.501 0.590 0.684 0.647 0.534 0.788 0.640 0.629 PtLoss(N =6) 0.474 0.459 0.573 0.704 0.436 0.558 0.375 0.496 0.519 0.567 0.432 0.691 0.558 0.526 GeoLoss(N =4) 0.666 0.622 0.693 0.786 0.616 0.653 0.510 0.599 0.696 0.690 0.635 0.811 0.663 0.665 GeoLoss(N =6) 0.678 0.623 0.685 0.788 0.627 0.681 0.523 0.602 0.693 0.701 0.652 0.814 0.659 0.671 GeoLoss(N =8) 0.667 0.610 0.686 0.782 0.609 0.667 0.507 0.596 0.688 0.686 0.641 0.809 0.661 0.662 Table 2: Quantitative comparison to point-based methods using the chamfer distance metric. All numbers are scaled by 0.01. plane bench cabinet car chair display lamp speaker firearm couch table phone vessel mean Su(Fan, Su, and Guibas 2017) 1.395 1.899 2.454 1.927 2.121 2.127 2.280 3.000 1.337 2.688 2.052 1.753 2.064 2.084 Lin(Lin, Kong, and Lucey 2018) 1.418 1.622 1.443 1.254 1.964 1.640 3.547 2.039 1.400 1.670 1.655 1.569 1.682 1.761 Soltani(Soltani et al. 2017) 0.167 0.165 0.122 0.026 0.277 0.085 1.814 0.163 0.107 0.138 0.226 0.258 0.102 0.28 MVPNet(N =4) 0.045 0.084 0.063 0.042 0.086 0.065 0.561 0.163 0.104 0.082 0.070 0.046 0.060 0.113 MVPNet(N =6) 0.041 0.079 0.060 0.041 0.085 0.053 0.421 0.152 0.093 0.070 0.069 0.038 0.050 0.096 MVPNet(N =8) 0.044 0.085 0.058 0.040 0.103 0.050 0.494 0.153 0.113 0.083 0.075 0.039 0.059 0.107 dinate maps is of shape N × 128 × 128 × 4. The encoder training and testing sets with the fraction 0.8/0.2. consists of five convolution (conv) layers with numbers of To obtain input RGB images, we render each 3D model channels {32, 64, 128, 256, 512}, kernel sizes {3, 3, 3, 3, for 24 viewpoints which are randomly sampled with an ele- 3}, and strides {2, 2, 2, 2, 2}, and two fully connected (fc) vation ranging from (-20, 20), an azimuth ranging from (0, layers with numbers of neurons {4096, 2048}. The cam- 360) degrees, and a radius ranging from (0.6, 2.3). Note that era matrix c is encoded with two fc layers with numbers all models are normalized by their bounding spheres’ radius. of neurons {64, 512}. The decoder part takes the concate- nated feature (z, ci ) as input and generates a surface coordi- Viewpoint arrangement. For the viewpoint arrangement nate map for each viewpoint. The structure of the decoder is of the output MVPC, we approximately maximize the cov- mirrored to the encoder, consisting of two fc layers and five erage of the “mean” shape (the unit sphere) of all objects transposed-convolution (also known as “deconv”) layers for with respect to N . The N (4, 6, 8) viewpoints are located at up-sampling. We add the last conv layer with the number of vertices of a tetrahedron, octahedron, and cube, respectively. channels 4 and kernel size 1 to generate 4-channel output. All the viewpoints look at the origin. Orthogonal projection Batch normalization (Ioffe and Szegedy 2015) is not per- is used to avoid additional perspective distortion. We calcu- formed because we observe the training process is smooth. late the average surface coverage by counting the number Leaky ReLU activation with a negative slope of 0.2 is ap- of visible points in groundtruth models, which are 97.2%, plied after all conv layers except the last one which is fol- 97.7% and 98.0% for N =4, 6, 8 respectively. The perfor- lowed by the tanh layer. mances of different viewpoint settings are reported. We train the network with Tensorflow (Abadi et al. 2016) on a Nvidia TitanX GPU with a minibatch of 32. We use Reconstruction Result Adam optimizer (Kingma and Ba 2014) with a learning rate Both qualitative and quantitative results of the reconstruc- of 0.0001. The training procedure takes 100,000 iterations. tion are presented. We compare our method to two collec- The coefficients α and β of GeoLoss is set to 100 and 1 tions of state-of-the-art methods according to the final result respectively after 10000 iterations and both to 0 before, be- representations, namely, point clouds and volumetric grids. cause the initial point clouds are noisy and the computed volume discrepancy and consistency term are not reliable. Comparison to point generation methods. We compare our method to the state-of-the-art point generation methods using both an unordered point cloud representation (Fan, Su, Dataset and Guibas 2017) and view-based representations (Soltani et We leverage the ShapeNet (Chang et al. 2015) dataset, which al. 2017; Lin, Kong, and Lucey 2018) on the ShapeNet-13 contains a large volume of clean CAD models for our ex- dataset. Note that the methods proposed by Su et al. (Fan, periments. We setup two datasets for single-class and multi- Su, and Guibas 2017), Lin et al. (Lin, Kong, and Lucey class cases. The chair category (ShapeNet-Chair) is used for 2018) and us take a single RGB image as the input, while single-class processing since it is ubiquitously evaluated in Soltani et al. (Soltani et al. 2017) use depth maps as in- previous methods. For the multi-class dataset, we use 13 ma- put which may contain more geometric information. We use jor classes as the 3D-R2N2 (Choy et al. 2016) set, listed in Intersection-of-Union (IoU) of voxel occupancy for evalu- Table 1, named as ShapeNet-13. The datasets are split into ating the reconstruction accuracy as most methods do. The

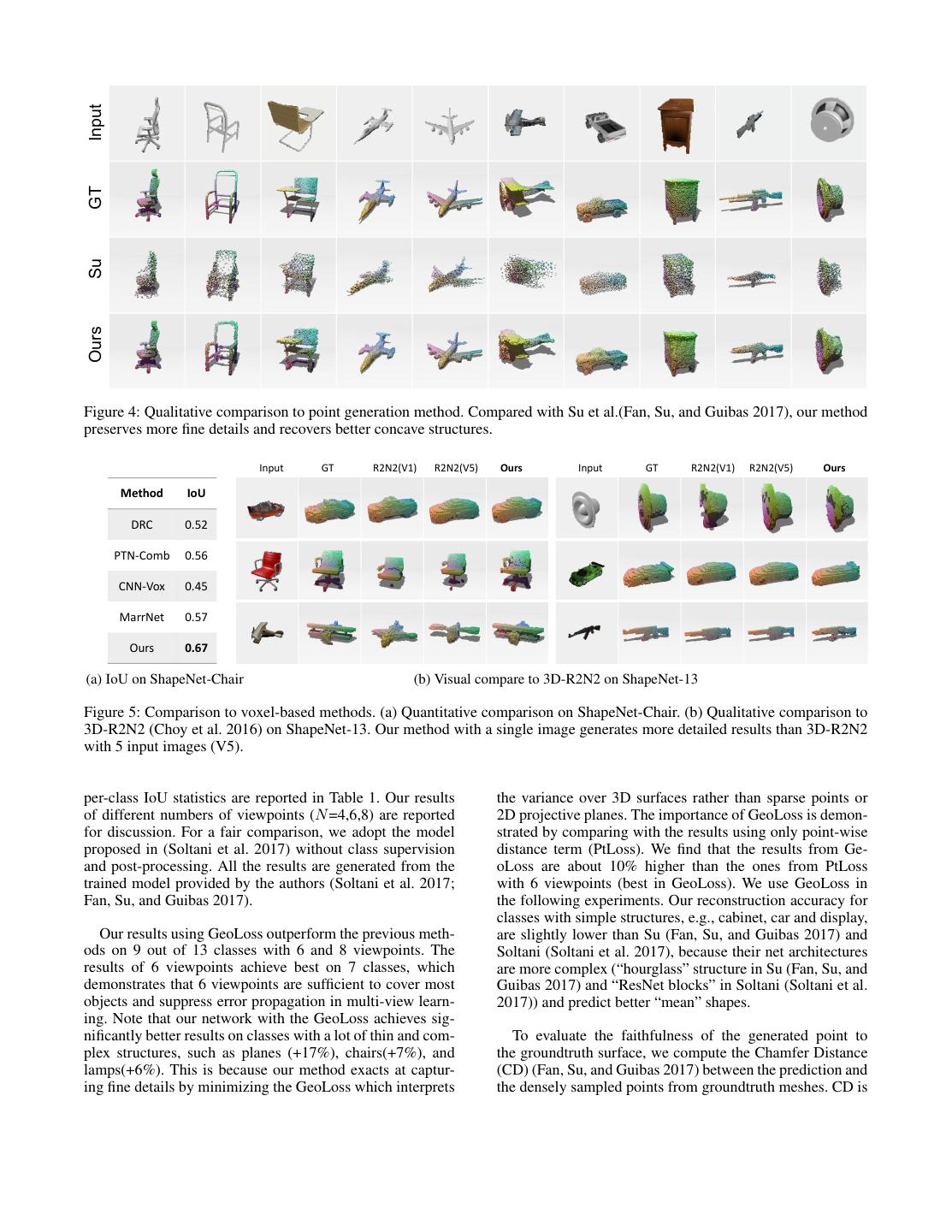

6 .Input GT Su Ours Figure 4: Qualitative comparison to point generation method. Compared with Su et al.(Fan, Su, and Guibas 2017), our method preserves more fine details and recovers better concave structures. Input GT R2N2(V1) R2N2(V5) Ours Input GT R2N2(V1) R2N2(V5) Ours Method IoU DRC 0.52 PTN-Comb 0.56 CNN-Vox 0.45 MarrNet 0.57 Ours 0.67 (a) IoU on ShapeNet-Chair (b) Visual compare to 3D-R2N2 on ShapeNet-13 Figure 5: Comparison to voxel-based methods. (a) Quantitative comparison on ShapeNet-Chair. (b) Qualitative comparison to 3D-R2N2 (Choy et al. 2016) on ShapeNet-13. Our method with a single image generates more detailed results than 3D-R2N2 with 5 input images (V5). per-class IoU statistics are reported in Table 1. Our results the variance over 3D surfaces rather than sparse points or of different numbers of viewpoints (N =4,6,8) are reported 2D projective planes. The importance of GeoLoss is demon- for discussion. For a fair comparison, we adopt the model strated by comparing with the results using only point-wise proposed in (Soltani et al. 2017) without class supervision distance term (PtLoss). We find that the results from Ge- and post-processing. All the results are generated from the oLoss are about 10% higher than the ones from PtLoss trained model provided by the authors (Soltani et al. 2017; with 6 viewpoints (best in GeoLoss). We use GeoLoss in Fan, Su, and Guibas 2017). the following experiments. Our reconstruction accuracy for classes with simple structures, e.g., cabinet, car and display, Our results using GeoLoss outperform the previous meth- are slightly lower than Su (Fan, Su, and Guibas 2017) and ods on 9 out of 13 classes with 6 and 8 viewpoints. The Soltani (Soltani et al. 2017), because their net architectures results of 6 viewpoints achieve best on 7 classes, which are more complex (“hourglass” structure in Su (Fan, Su, and demonstrates that 6 viewpoints are sufficient to cover most Guibas 2017) and “ResNet blocks” in Soltani (Soltani et al. objects and suppress error propagation in multi-view learn- 2017)) and predict better “mean” shapes. ing. Note that our network with the GeoLoss achieves sig- nificantly better results on classes with a lot of thin and com- To evaluate the faithfulness of the generated point to plex structures, such as planes (+17%), chairs(+7%), and the groundtruth surface, we compute the Chamfer Distance lamps(+6%). This is because our method exacts at captur- (CD) (Fan, Su, and Guibas 2017) between the prediction and ing fine details by minimizing the GeoLoss which interprets the densely sampled points from groundtruth meshes. CD is

7 .a common measure of the distance between two point sets, chair which is defined by summing up the distances between each = source point to its nearest point in the target point set. The plane groundtruth points of size 100,000 are uniformly sampled = car on the surface. The CD evaluation is reported in Table 2. Our method is superior to the previous methods on most classes (12/13) by a large margin. Same as in IoU evalu- Figure 6: Reconstruction results on real word data. ation, 6 viewpoints get the best on 10 classes. The multi- view point clouds generated by our network possess high density and the geometric loss enforces local spatial coher- ence. The unordered point generation method (Fan, Su, and Within-Class Guibas 2017) gets sparse point clouds which are limited to characterizing enough details, leading to large chamfer dis- tances. The method proposed by Soltani et al.(Soltani et al. 2017) also obtains small distances since it generates points with many more (20) depth maps. Cross-Class For qualitative comparison, we present several typical ex- amples in Fig. 4. Our method is able to produce much denser points (∼15k), while the method proposed by Su (Fan, Su, and Guibas 2017) limits the point cloud size to 1024. Our method is superior in recovering fine details (see chair backs, Figure 7: Reconstructions for linear interpolation of two plane tails and car wheels) and dealing with concave struc- learned latent features within and across classes respectively. tures, such as car trunk and two layers of plane wings. The geometric loss that handles occlusion encourages the improvement on concave shapes. More results of ours are depth needs an extremely large move in the fixed direction. shown in supplemental materials. Thus, 3D coordinates are easier to learn than depths. In ad- dition, the complexity does not grow much because only the Comparison to voxel-based methods. We compare the last layer of the decoder is different. proposed method to the state-of-the-art voxel-based meth- Results on real dataset. We show our model works well ods, i.e., 3D-R2N2 (Choy et al. 2016), DRC (Tulsiani et al. on natural images without additional input. To adjust our 2017), two models of PTN (Yan et al. 2016) (PTN-Comb, model to real-world images, we synthesize the training data CNN-Vox), and MarreNet (Wu et al. 2017). These methods by augmenting the input images with random crops from directly use 3D volumetric representation and usually com- the PASCAL VOC 2012 dataset (Everingham et al. 2011) pute the IoU for evaluation. Since our results form dense as (Tatarchenko, Dosovitskiy, and Brox 2016) do. We show point clouds, we convert them to (32 × 32 × 32) grids as that the proposed method yields reasonable results in Fig. 6. Su et al. (Fan, Su, and Guibas 2017) do. For single class model, our method achieves much higher IoU (0.667) than Application. We show the generative representation of the the highest IoU (0.57) among the state-of-the-art methods learned features using linear interpolation in Fig. 7. We on the ShapeNet-Chair dataset, shown in Fig. 5 (a). For the can see clear and gradual transitions of the generated point multi-class results, we report per-category IoU in Table 1 clouds, indicating the learned feature space to be sufficiently on ShapeNet-13 dataset. The qualitative comparison to 3D- representative and smooth. More results of discriminative R2N2 is shown in Fig. 5 (b). We show that our method pre- representations are presented in the supplemental material. serves more fine details, such as legs of chairs, wings of planes, and holders of firearms. Conclusions Comparison to depth regression. Here we show our find- We have presented the MVPNet for regressing dense 3D ings that directly regressing 3D coordinates has advantages point clouds of an object from a single image. The point over regressing depths. To compare 3D coordinates and regression achieves state-of-the-art performance resorting depth regression, we adopt the same network architecture to the MVPC representation and the geometric loss. The but the last layer and use the same GeoLoss. The channel MVPC express an object’s surface with view-dependent numbers of the last layers are 3 and 1 for regressing co- point clouds that are embedded in regular 2D grids, which ordinates and depths respectively. As reported in Table 1, easily fit into CNN-based architectures. Also, the one-to- the depth regression generates reasonable results, but the ac- one mapping from 2D pixels to reprojected 3D points makes curacy is about 4% lower than coordinate regression. This these points in 1-VPC ordered, which accelerate the loss is because searching a gradient decent move in 3D space computation. Although the dimension of the data embedding with an arbitrary direction is more flexible and stable than space is reduced from 3D space to 2D projective planes, we searching in one fixed direction considering the loss of the propose the geometric loss that integrates variances over the 3D space (rather than 1D depth loss), especially on occlud- 3D surfaces instead of the 2D projective planes. The experi- ing contours. With the same 3D volume loss descent, the 3D ments demonstrate the geometric loss significantly improves point needs a small move in the steepest direction, while the the reconstruction accuracy.

8 . References surfaces. In IEEE International Conference on Computer Vision. Abadi, M.; Agarwal, A.; Barham, P.; Brevdo, E.; Chen, Z.; Citro, C.; Corrado, G. S.; Davis, A.; Dean, J.; Devin, Park, E.; Yang, J.; Yumer, E.; Ceylan, D.; and Berg, A. C. M.; et al. 2016. Tensorflow: Large-scale machine learn- 2017. Transformation-grounded image generation network ing on heterogeneous distributed systems. arXiv preprint for novel 3d view synthesis. In IEEE Conference on Com- arXiv:1603.04467. puter Vision and Pattern Recognition (CVPR), 702–711. IEEE. Chang, A. X.; Funkhouser, T.; Guibas, L.; Hanrahan, P.; Huang, Q.; Li, Z.; Savarese, S.; Savva, M.; Song, S.; Su, Pons, J.-P.; Keriven, R.; and Faugeras, O. 2007. Multi-view H.; et al. 2015. Shapenet: An information-rich 3d model stereo reconstruction and scene flow estimation with a global repository. arXiv preprint arXiv:1512.03012. image-based matching score. International Journal of Com- puter Vision (IJCV) 72(2):179–193. Chen, D.; Yuan, L.; Liao, J.; Yu, N.; and Hua, G. 2017. Stylebank: An explicit representation for neural image style Pontes, J. K.; Kong, C.; Sridharan, S.; Lucey, S.; Eriksson, transfer. In CVPR 2018. A.; and Fookes, C. 2017. Image2mesh: A learning frame- work for single image 3d reconstruction. arXiv preprint Chen, D.; Yuan, L.; Liao, J.; Yu, N.; and Hua, G. 2018. arXiv:1711.10669. Stereoscopic neural style transfer. CVPR 2018. Qi, C. R.; Su, H.; Mo, K.; and Guibas, L. J. 2017. Pointnet: Choy, C. B.; Xu, D.; Gwak, J.; Chen, K.; and Savarese, S. Deep learning on point sets for 3d classification and segmen- 2016. 3d-r2n2: A unified approach for single and multi-view tation. IEEE Conference on Computer Vision and Pattern 3d object reconstruction. In ECCV. Recognition (CVPR). Delaunoy, A., and Prados, E. 2011. Gradient flows for Shin, D.; Fowlkes, C. C.; and Hoiem, D. 2018. Pix- optimizing triangular mesh-based surfaces: Applications to els, voxels, and views: A study of shape representations 3d reconstruction problems dealing with visibility. Interna- for single view 3d object shape prediction. arXiv preprint tional journal of computer vision (IJCV) 95(2):100–123. arXiv:1804.06032. Diamanti, P. A. O.; Mitliagkas, I.; and Guibas, L. J. 2017. Soltani, A. A.; Huang, H.; Wu, J.; Kulkarni, T. D.; and Representation learning and adversarial generation of 3d Tenenbaum, J. B. 2017. Synthesizing 3d shapes via model- point clouds. CoRR. ing multi-view depth maps and silhouettes with deep gener- Eckstein, I.; Pons, J.-P.; Tong, Y.; Kuo, C.-C.; and Desbrun, ative networks. In CVPR 2017. M. 2007. Generalized surface flows for mesh processing. In Tatarchenko, M.; Dosovitskiy, A.; and Brox, T. 2016. Multi- Proceedings of the fifth Eurographics symposium on Geom- view 3d models from single images with a convolutional net- etry processing. Eurographics Association. work. In European Conference on Computer Vision (ECCV). Everingham, M.; Van Gool, L.; Williams, C.; Winn, Tulsiani, S.; Zhou, T.; Efros, A. A.; and Malik, J. 2017. J.; and Zisserman, A. 2011. The pascal vi- Multi-view supervision for single-view reconstruction via sual object classes challenge 2012 (voc2012) re- differentiable ray consistency. IEEE Conference on Com- sults (2012). In URL http://www. pascal-network. puter Vision and Pattern Recognition (CVPR). org/challenges/VOC/voc2011/workshop/index. html. Wang, J.; Fang, T.; Su, Q.; Zhu, S.; Liu, J.; Cai, S.; Tai, C.- Fan, H.; Su, H.; and Guibas, L. 2017. A point set generation L.; and Quan, L. Image-based building regularization using network for 3d object reconstruction from a single image. structural linear features. IEEE Transactions on Visualiza- IEEE International Conference on Computer Vision (ICCV). tion Computer Graphics. Girdhar, R.; Fouhey, D. F.; Rodriguez, M.; and Gupta, A. Wu, J.; Zhang, C.; Xue, T.; Freeman, B.; and Tenenbaum, J. 2016. Learning a predictable and generative vector repre- 2016. Learning a probabilistic latent space of object shapes sentation for objects. In ECCV. via 3d generative-adversarial modeling. In Advances in Neu- ral Information Processing Systems (NIPS). Ioffe, S., and Szegedy, C. 2015. Batch normalization: Ac- celerating deep network training by reducing internal covari- Wu, J.; Wang, Y.; Xue, T.; Sun, X.; Freeman, B.; and Tenen- ate shift. In International Conference on Machine Learning baum, J. 2017. Marrnet: 3d shape reconstruction via 2.5 (ICML). d sketches. In Advances in Neural Information Processing Systems (NIPS). Kalogerakis, E.; Averkiou, M.; Maji, S.; and Chaudhuri, S. Yan, X.; Yang, J.; Yumer, E.; Guo, Y.; and Lee, H. 2016. 2017. 3d shape segmentation with projective convolutional Perspective transformer nets: Learning single-view 3d ob- networks. CVPR 2017. ject reconstruction without 3d supervision. In Advances in Kingma, D., and Ba, J. 2014. Adam: A method for stochastic Neural Information Processing Systems(NIPS). optimization. arXiv preprint arXiv:1412.6980. Zhou, T.; Tulsiani, S.; Sun, W.; Malik, J.; and Efros, A. A. Lin, C.-H.; Kong, C.; and Lucey, S. 2018. Learning efficient 2016. View synthesis by appearance flow. In ECCV. point cloud generation for dense 3d object reconstruction. In Zhu, R.; Galoogahi, H. K.; Wang, C.; and Lucey, S. 2017. AAAI Conference on Artificial Intelligence (AAAI). Rethinking reprojection: Closing the loop for pose-aware Liu, J.; Wang, J.; Fang, T.; Tai, C.-L.; and Quan, L. 2015. shape reconstruction from a single image. In IEEE Inter- Higher-order crf structural segmentation of 3d reconstructed national Conference on Computer Vision (ICCV). IEEE.