- 快召唤伙伴们来围观吧

- 微博 QQ QQ空间 贴吧

- 文档嵌入链接

- 复制

- 微信扫一扫分享

- 已成功复制到剪贴板

MADE: Masked Autoencoder for Distribution Estimation

展开查看详情

1 . MADE: Masked Autoencoder for Distribution Estimation Mathieu Germain MATHIEU . GERMAIN 2@ USHERBROOKE . CA Universit´e de Sherbrooke, Canada Karol Gregor KAROL . GREGOR @ GMAIL . COM Google DeepMind Iain Murray I . MURRAY @ ED . AC . UK arXiv:1502.03509v2 [cs.LG] 5 Jun 2015 University of Edinburgh, United Kingdom Hugo Larochelle HUGO . LAROCHELLE @ USHERBROOKE . CA Universit´e de Sherbrooke, Canada Abstract et al., 2009), denoising or missing input imputation (Poon There has been a lot of recent interest in designing & Domingos, 2011; Dinh et al., 2014), data (e.g. speech) neural network models to estimate a distribution synthesis (Uria et al., 2015) and many others. The very from a set of examples. We introduce a simple nature of distribution estimation also makes it a particular modification for autoencoder neural networks that challenge for machine learning. In essence, the curse of yields powerful generative models. Our method dimensionality has a distinct impact because, as the number masks the autoencoder’s parameters to respect of dimensions of the input space of x grows, the volume of autoregressive constraints: each input is recon- space in which the model must provide a good answer for structed only from previous inputs in a given or- p(x) exponentially increases. dering. Constrained this way, the autoencoder Fortunately, recent research has made substantial progress outputs can be interpreted as a set of conditional on this task. Specifically, learning algorithms for a vari- probabilities, and their product, the full joint prob- ety of neural network models have been proposed (Bengio ability. We can also train a single network that & Bengio, 2000; Larochelle & Murray, 2011; Gregor & can decompose the joint probability in multiple LeCun, 2011; Uria et al., 2013; 2014; Kingma & Welling, different orderings. Our simple framework can be 2014; Rezende et al., 2014; Bengio et al., 2014; Gregor applied to multiple architectures, including deep et al., 2014; Goodfellow et al., 2014; Dinh et al., 2014). ones. Vectorized implementations, such as on These algorithms are showing great potential in scaling to GPUs, are simple and fast. Experiments demon- high-dimensional distribution estimation problems. In this strate that this approach is competitive with state- work, we focus our attention on autoregressive models (Sec- of-the-art tractable distribution estimators. At test tion 3). Computing p(x) exactly for a test example x is time, the method is significantly faster and scales tractable with these models. However, the computational better than other autoregressive estimators. cost of this operation is still larger than typical neural net- work predictions for a D-dimensional input. For previous deep autoregressive models, evaluating p(x) costs O(D) 1. Introduction times more than a simple neural network point predictor. Distribution estimation is the task of estimating a joint distri- This paper’s contribution is to describe and explore a simple bution p(x) from a set of examples {x(t) }Tt=1 , which is by way of adapting autoencoder neural networks that makes definition a general problem. Many tasks in machine learn- them competitive tractable distribution estimators that are ing can be formulated as learning only specific properties of faster than existing alternatives. We show how to mask the a joint distribution. Thus a good distribution estimator can weighted connections of a standard autoencoder to convert it be used in many scenarios, including classification (Schmah into a distribution estimator. The key is to use masks that are designed in such a way that the output is autoregressive for a Proceedings of the 32 nd International Conference on Machine given ordering of the inputs, i.e. that each input dimension is Learning, Lille, France, 2015. JMLR: W&CP volume 37. Copy- right 2015 by the author(s). reconstructed solely from the dimensions preceding it in the

2 . MADE: Masked Autoencoder for Distribution Estimation ordering. The resulting Masked Autoencoder Distribution and output layers. Its main disadvantage is that the repre- Estimator (MADE) preserves the efficiency of a single pass sentation it learns can be trivial. For instance, if the hidden through a regular autoencoder. Implementation on a GPU is layer is at least as large as the input, hidden units can each straightforward, making the method scalable. learn to “copy” a single input dimension, so as to recon- struct all inputs perfectly at the output layer. One obvious The single hidden layer version of MADE corresponds to the consequence of this observation is that the loss function previously proposed autoregressive neural network of Ben- of Equation 3 isn’t in fact a proper log-likelihood func- gio & Bengio (2000). Here, we go further by exploring tion. Indeed, since perfect reconstruction could be achieved, deep variants of the model. We also explore training MADE the implied data ‘distribution’ q(x) = d xxd d (1−xd )1−xd to work simultaneously with multiple orderings of the in- could be learned to be 1 for any x and thus not be properly put observations and hidden layer connectivity structures. normalized ( x q(x) = 1). We test these extensions across a range of binary datasets with hundreds of dimensions, and compare its statistical performance and scaling to comparable methods. 3. Distribution Estimation as Autoregression An interesting question is what property we could impose 2. Autoencoders on the autoencoder, such that its output can be used to obtain valid probabilities. Specifically, we’d like to be able to write A brief description of the basic autoencoder, on which this p(x) in such a way that it could be computed based on the work builds upon, is required to clearly grasp what follows. output of a properly corrected autoencoder. In this paper, we assume that we are given a training set of examples {x(t) }Tt=1 . We concentrate on the case of binary First, we can use the fact that, for any distribution, the prob- observations, where for every D-dimensional input x, each ability product rule implies that we can always decompose input dimension xd belongs in {0, 1}. The motivation is it into the product of its nested conditionals to learn hidden representations of the inputs that reveal the statistical structure of the distribution that generated them. D An autoencoder attempts to learn a feed-forward, hidden p(x) = p(xd | x<d ), (4) d=1 representation h(x) of its input x such that, from it, we can obtain a reconstruction x which is as close as possible to x. where x<d = [x1 , . . . , xd−1 ] . Specifically, we have By defining p(xd = 1 | x<d ) = x ˆd , and thus p(xd = h(x) = g(b + Wx) (1) 0 | x<d ) = 1− xˆd , the loss of Equation 3 becomes a valid x = sigm(c + Vh(x)) , (2) negative log-likelihood: D where W and V are matrices, b and c are vectors, g is a non- linear activation function and sigm(a) = 1/(1 + exp(−a)). − log p(x) = − log p(xd | x<d ) d=1 Thus, W represents the connections from the input to the D hidden layer, and V represents the connections from the = −xd log p(xd = 1 | x<d ) (5) hidden to the output layer. d=1 To train the autoencoder, we must first specify a training − (1−xd ) log p(xd = 0 | x<d ) loss function. For binary observations, a natural choice is = (x) . the cross-entropy loss: D This connection provides a way to define autoencoders (x) = −xd log xd − (1−xd ) log(1−xd ) . (3) that can be used for distribution estimation. Each output d=1 xd = p(xd | x<d ) must be a function taking as input x<d By treating xd as the model’s probability that xd is 1, the only and outputting the probability of observing value xd cross-entropy can be understood as taking the form of a at the dth dimension. In particular, the autoencoder forms negative log-likelihood function. Training the autoencoder a proper distribution if each output unit xˆd only depends corresponds to optimizing the parameters {W, V, b, c} to on the previous input units x<d , and not the other units reduce the average loss on the training examples, usually x≥d = [xd , . . . , xD ] . with (mini-batch) stochastic gradient descent. We refer to this property as the autoregressive property, One advantage of the autoencoder paradigm is its flexibility. because computing the negative log-likelihood (5) is equiv- In particular, it is straightforward to obtain a deep autoen- alent to sequentially predicting (regressing) each dimension coder by inserting more hidden layers between the input of input x.

3 . MADE: Masked Autoencoder for Distribution Estimation 4. Masked Autoencoders MdV,W ,d is the number of network paths between output unit x ˆd and input unit xd . Thus, to demonstrate the autoregres- The question now is how to modify the autoencoder so as sive property, we need to show that MV,W is strictly lower to satisfy the autoregressive property. Since output x ˆd must diagonal, i.e. MdV,W ,d is 0 if d ≤ d. By definition of the depend only on the preceding inputs x<d , it means that matrix product, we have: there must be no computational path between output unit x ˆd and any of the input units xd , . . . , xD . In other words, K K for each of these paths, at least one connection (in matrix MdV,W ,d = MdV,k Mk,d W = 1d >m(k) 1m(k)≥d . (10) W or V) must be 0. k=1 k=1 A convenient way of zeroing connections is to elementwise- If d ≤ d, then there are no values for m(k) such that it is multiply each matrix by a binary mask matrix, whose entries both strictly less than d and greater or equal to d. Thus that are set to 0 correspond to the connections we wish to MdV,W ,d is indeed 0. remove. For a single hidden layer autoencoder, we write Constructing the masks MV and MW only requires an as- W h(x) = g(b + (W M )x) (6) signment of the m(k) values to each hidden unit. One could ˆ = sigm(c + (V MV )h(x)) x (7) imagine trying to assign an (approximately) equal number of units to each legal value of m(k). In our experiments, we where MW and MV are the masks for W and V respec- instead set m(k) by sampling from a uniform discrete dis- tively. It is thus left to the masks MW and MV to satisfy tribution defined on integers from 1 to D−1, independently the autoregressive property. for each of the K hidden units. To impose the autoregressive property we first assign each Previous work on autoregressive neural networks have also unit in the hidden layer an integer m between 1 and D−1 found it advantageous to use direct connections between the inclusively. The k th hidden unit’s number m(k) gives the input and output layers (Bengio & Bengio, 2000). In this maximum number of input units to which it can be con- context, the reconstruction becomes: nected. We disallow m(k) = D since this hidden unit would depend on all inputs and could not be used in modelling ˆ = sigm(c + (V x MV )h(x) + (A MA )x) , (11) any of the conditionals p(xd | x<d ). Similarly, we exclude m(k) = 0, as it would create constant hidden units. where A is the parameter connection matrix and MA is its mask matrix. To satisfy the autoregressive property, MA The constraints on the maximum number of inputs to each simply needs to be a strictly lower diagonal matrix, filled hidden unit are encoded in the matrix masking the connec- otherwise with ones. We used such direct connections in tions between the input and hidden units: our experiments as well. W 1 if m(k) ≥ d Mk,d = 1m(k)≥d = (8) 4.1. Deep MADE 0 otherwise, One advantage of the masked autoencoder framework de- for d ∈ {1, . . . , D} and k ∈ {1, . . . , K}. Overall, we need scribed in the previous section is that it naturally generalizes to encode the constraint that the dth output unit is only to deep architectures. Indeed, as we’ll see, by assigning a connected to x<d (and thus not to x≥d ). Therefore the maximum number of connected inputs to all units across output weights can only connect the dth output to hidden the deep network, masks can be similarly constructed so as units with m(k) < d, i.e. units that are connected to at most to satisfy the autoregressive property. d−1 input units. These constraints are encoded in the output mask matrix: For networks with L > 1 hidden layers, we use superscripts 1 if d > m(k) to index the layers. The first hidden layer matrix (previously V Md,k = 1d>m(k) = (9) W) will be denoted W1 , the second hidden layer matrix will 0 otherwise, be W2 , and so on. The number of hidden units (previously again for d ∈ {1, . . . , D} and k ∈ {1, . . . , K}. Notice that, K) in each hidden layer will be similarly indexed as K l , from this rule, no hidden units will be connected to the first where l is the hidden layer index. We will also generalize output unit x ˆ1 , as desired. the notation for the maximum number of connected inputs of the k th unit in the lth layer to ml (k). From these mask constructions, we can easily demonstrate that the corresponding masked autoencoder satisfies the au- We’ve already discussed how to define the first layer’s mask toregressive property. First, we note that, since the masks matrix such that it ensures that its k th unit is connected to MV and MW represent the network’s connectivity, their at most m(k) (now m1 (k)) inputs. To impose the same matrix product MV,W = MV MW represents the connec- property on the second hidden layer, we must simply make tivity between the input and the output layer. Specifically, sure that each unit k is only connected to first layer units

4 . MADE: Masked Autoencoder for Distribution Estimation p(x1 |x2 , x3 ) p(x2 ) p(x3 |x2 ) to be greater than or equal to the minimum connectivity at x1 x2 x3 the previous layer, i.e. mink ml−1 (k ). 3 1 2 V = MV 4.2. Order-agnostic training 1 2 2 1 So far, we’ve assumed that the conditionals modelled by W 2 =M W2 MADE were consistent with the natural ordering of the dimensions of x. However, we might be interested in mod- 2 1 2 2 elling the conditionals associated with an arbitrary ordering W1 = MW 1 of the input’s dimensions. 3 1 2 Specifically, Uria et al. (2014) have shown that training x1 x2 x3 x1 x2 x3 an autoregressive model on all orderings can be beneficial. Autoencoder x Masks MADE We refer to this approach as order-agnostic training. It can be achieved by sampling an ordering before each stochas- Figure 1. Left: Conventional three hidden layer autoencoder. tic/minibatch gradient update of the model. There are two Input in the bottom is passed through fully connected layers and advantages of this approach. Firstly, missing values in par- point-wise nonlinearities. In the final top layer, a reconstruction tially observed input vectors can be imputed efficiently: we specified as a probability distribution over inputs is produced. invoke an ordering where observed dimensions are all be- As this distribution depends on the input itself, a standard au- fore unobserved ones, making inference straightforward. toencoder cannot predict or sample new data. Right: MADE. Secondly, an ensemble of autoregressive models can be con- The network has the same structure as the autoencoder, but a set structed on the fly, by exploiting the fact that the conditionals of connections is removed such that each input unit is only pre- dicted from the previous ones, using multiplicative binary masks for two different orderings are not guaranteed to be exactly 1 2 (MW , MW , MV ). In this example, the ordering of the input consistent (and thus technically correspond to slightly dif- is changed from 1,2,3 to 3,1,2. This change is explained in sec- ferent models). An ensemble is then easily obtained by tion 4.2, but is not necessary for understanding the basic principle. sampling a set of orderings, computing the probability of x The numbers in the hidden units indicate the maximum number under each ordering and averaging. of inputs on which the kth unit of layer l depends. The masks are constructed based on these numbers (see Equations 12 and 13). Conveniently, in MADE, the ordering is simply represented These masks ensure that MADE satisfies the autoregressive prop- by the vector m0 = [m0 (1), . . . , m0 (D)]. Specifically, erty, allowing it to form a probabilistic model, in this example m0 (d) corresponds to the position of the original dth dimen- p(x) = p(x2 ) p(x3 |x2 ) p(x1 |x2 , x3 ). Connections in light gray sion of x in the product of conditionals. Thus, a random correspond to paths that depend only on 1 input, while the dark ordering can be obtained by randomly permuting the or- gray connections depend on 2 inputs. dered vector [1, . . . , D]. From these values of each m0 , the first hidden layer mask matrix can then be created. During order-agnostic training, randomly permuting the last value connected to at most m2 (k ) inputs, i.e. the first layer units of m0 again is sufficient to obtain a new random ordering. such that m1 (k) ≤ m2 (k ). One can generalize this rule to any layer l, as follows: 4.3. Connectivity-agnostic training l l−1 l 1 if m (k ) ≥ m (k) One advantage of order-agnostic training is that it effectively MkW,k = 1ml (k )≥ml−1 (k) = 0 otherwise. allows us to train as many models as there are orderings, (12) using a common set of parameters. This can be exploited Also, taking l = 0 to mean the input layer and defining by creating ensembles of models at test time. m0 (d) = d (which is intuitive, since the dth input unit in- deed takes its values from the d first inputs), this definition In MADE, in addition to choosing an ordering, we also have also applies for the first hidden layer weights. As for the to choose each hidden unit’s connectivity constraint ml (k). output mask, we simply need to adapt its definition by using Thus, we could imaging training MADE to also be agnostic the connectivity constraints of the last hidden layer mL (k) of the connectivity pattern generated by these constraints. To instead of the first: achieve this, instead of sampling the values of ml (k) for all units and layers once and for all before training, we actually V 1 if d > mL (k) resample them for each training example or minibatch. This Md,k = 1d>mL (k) = (13) 0 otherwise. is still practical, since the operation of creating the masks is easy to parallelize. Denoting ml = [ml (1), . . . , ml (K l )], Like for the single hidden layer case, the values for ml (k) and assuming an element-wise and parallel implementation for each hidden layer l ∈ {1, . . . , L} are sampled uniformly. of the operation 1a≥b for vectors, such that 1a≥b is a matrix To avoid unconnected units, the value for ml (k) is sampled

5 . MADE: Masked Autoencoder for Distribution Estimation Algorithm 1 Computation of p(x) and learning gradients were providing input with binary indicator variables, con- for MADE with order and connectivity sampling. D is the nected with additional learnable weights. We considered size of the input, L the number of hidden layers and K the applying a similar strategy, using companion weight matri- l number of hidden units. ces Ul , that are also masked by MW but connected to a Input: training observation vector x constant one-valued vector: Output: p(x) and gradients of − log p(x) on parameters l l hl (x) = g(bl + (Wl MW )hl−1 (x) + (Ul MW )1) l # Sampling m vectors (14) m0 ← shuffle([1, . . . , D]) An analogous parametrization of the output layer was also for l from 1 to L do employed. These connectivity conditioning weights were for k from 1 to K l do only sometimes useful. In our experiments, we treated the ml (k) ← Uniform([mink ml−1 (k ), . . . , D−1]) choice of using them as a hyperparameter. end for end for Moreover, we’ve found in our experiments that sampling masks for every example could sometimes over-regularize # Constructing masks for each layer MADE and provoke underfitting. To fix this issue, we also for l from 1 to L do considered sampling from only a finite list of masks. During l MW ← 1ml ≥ml−1 training, MADE cycles through this list, using one for every end for update. At test time, we then average probabilities obtained MV ← 1m0 >mL for all masks in the list. Algorithm 1 details how p(x) is computed by MADE, as # Computing p(x) well as how to obtain the gradient of (x) for stochastic h0 (x) ← x gradient descent training. For simplicity, the pseudocode for l from 1 to L do assumes order-agnostic and connectivity-agnostic training, l hl (x) ← g(bl + (Wl MW )hl−1 (x)) doesn’t assume the use of conditioning weight matrices or end for of direct input/output connections. Figure 1 also illustrates x ← sigm(c + (V MV )hL (x)) an example of such a two-layer MADE network, along with D p(x) ← exp d=1 xd log xd + (1−xd ) log(1−xd ) its ml (k) values and its masks. # Computing gradients of − log p(x) 5. Related Work tmp ← x − x δc ← tmp There has been a lot of recent work on exploring the use δV ← tmp hL (x) MV of feed-forward, autoencoder-like neural networks as prob- tmp ← (tmp (V M )) V abilistic generative models. Part of the motivation behind for l from L to 1 do this research is to test the common assumption that the l tmp ← tmp g (bl + (Wl MW )hl−1 (x)) use of models with probabilistic latent variables and in- δbl ← tmp tractable partition functions (such as the restricted Boltz- l δWl ← tmp hl−1 (x) MW mann machine (Salakhutdinov & Murray, 2008)), is a nec- l essary evil in designing powerful generative models for tmp ← (tmp (Wl MW )) high-dimensional data. end for return p(x), δb1 , . . . , δbL , δW1 , . . . , δWL , δc, δV The work on the neural autoregressive distribution estimator or NADE (Larochelle & Murray, 2011) has illustrated that feed-forward architectures can in fact be used to form state- of-the-art and even tractable distribution estimators. whose i, j element is 1ai ≥bj , then the hidden layer masks l Recently, a deep extension of NADE was proposed, improv- are simply MW = 1ml ≥ml−1 . ing even further the state-of-the-art in distribution estima- By resampling the connectivity of hidden units for every tion (Uria et al., 2014). This work introduced a randomized update, each hidden unit will have a constantly changing training procedure, which (like MADE) has nearly the same number of incoming inputs during training. However, the cost per iteration as a standard autoencoder. Unfortunately, absence of a connection is indistinguishable from an instan- deep NADE models still require D feed-forward passes tiated connection to a zero-valued unit, which could confuse through the network to evaluate the probability p(x) of a the neural network during training. In a similar situation, D-dimensional test vector. The computation of the first Uria et al. (2014) informed each hidden unit which units hidden layer’s activations can be shared across these passes,

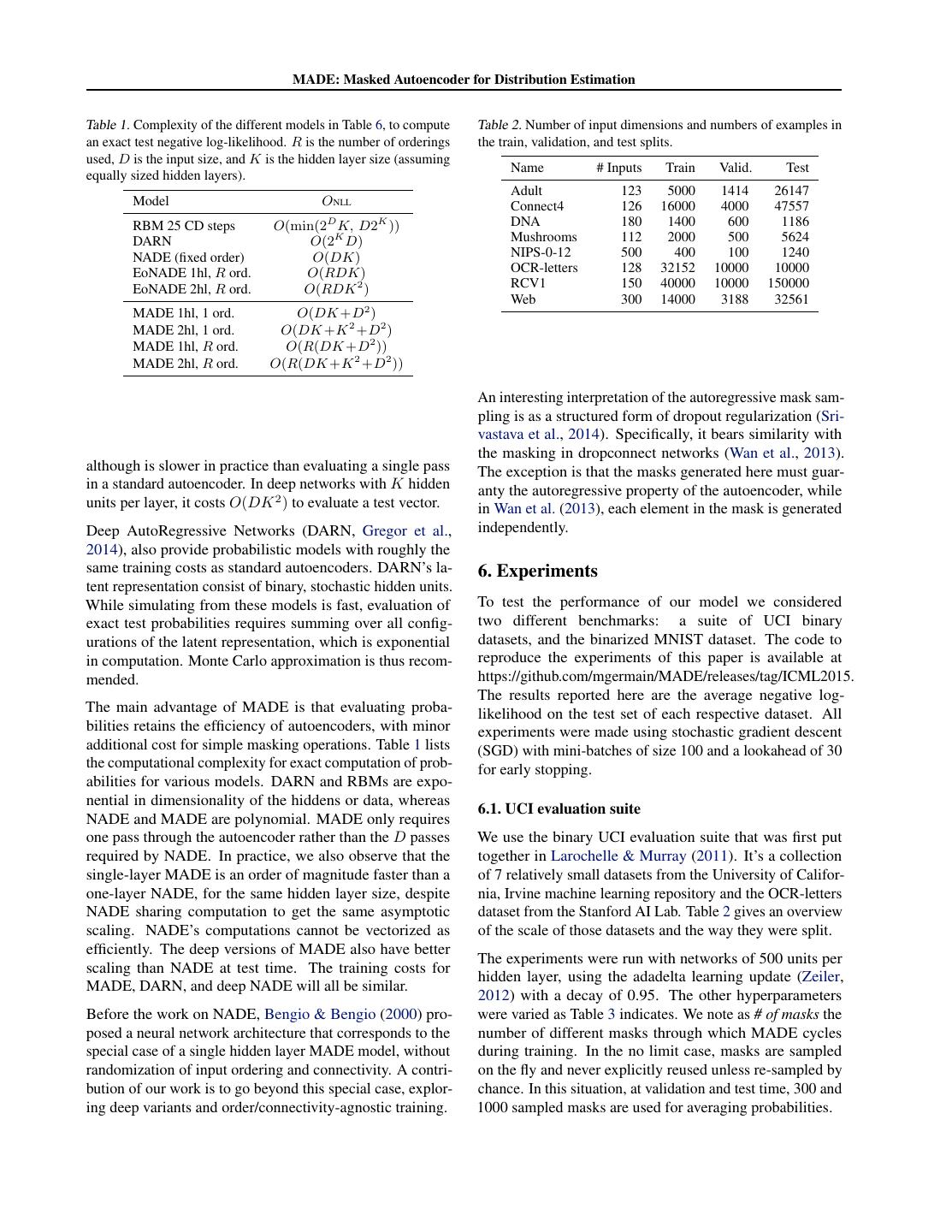

6 . MADE: Masked Autoencoder for Distribution Estimation Table 1. Complexity of the different models in Table 6, to compute Table 2. Number of input dimensions and numbers of examples in an exact test negative log-likelihood. R is the number of orderings the train, validation, and test splits. used, D is the input size, and K is the hidden layer size (assuming Name # Inputs Train Valid. Test equally sized hidden layers). Adult 123 5000 1414 26147 Model ONLL Connect4 126 16000 4000 47557 RBM 25 CD steps O(min(2D K, D2K )) DNA 180 1400 600 1186 DARN O(2K D) Mushrooms 112 2000 500 5624 NADE (fixed order) O(DK) NIPS-0-12 500 400 100 1240 EoNADE 1hl, R ord. O(RDK) OCR-letters 128 32152 10000 10000 EoNADE 2hl, R ord. O(RDK 2 ) RCV1 150 40000 10000 150000 Web 300 14000 3188 32561 MADE 1hl, 1 ord. O(DK +D2 ) MADE 2hl, 1 ord. O(DK +K 2 +D2 ) MADE 1hl, R ord. O(R(DK +D2 )) MADE 2hl, R ord. O(R(DK +K 2 +D2 )) An interesting interpretation of the autoregressive mask sam- pling is as a structured form of dropout regularization (Sri- vastava et al., 2014). Specifically, it bears similarity with the masking in dropconnect networks (Wan et al., 2013). although is slower in practice than evaluating a single pass The exception is that the masks generated here must guar- in a standard autoencoder. In deep networks with K hidden anty the autoregressive property of the autoencoder, while units per layer, it costs O(DK 2 ) to evaluate a test vector. in Wan et al. (2013), each element in the mask is generated Deep AutoRegressive Networks (DARN, Gregor et al., independently. 2014), also provide probabilistic models with roughly the same training costs as standard autoencoders. DARN’s la- 6. Experiments tent representation consist of binary, stochastic hidden units. While simulating from these models is fast, evaluation of To test the performance of our model we considered exact test probabilities requires summing over all config- two different benchmarks: a suite of UCI binary urations of the latent representation, which is exponential datasets, and the binarized MNIST dataset. The code to in computation. Monte Carlo approximation is thus recom- reproduce the experiments of this paper is available at mended. https://github.com/mgermain/MADE/releases/tag/ICML2015. The results reported here are the average negative log- The main advantage of MADE is that evaluating proba- likelihood on the test set of each respective dataset. All bilities retains the efficiency of autoencoders, with minor experiments were made using stochastic gradient descent additional cost for simple masking operations. Table 1 lists (SGD) with mini-batches of size 100 and a lookahead of 30 the computational complexity for exact computation of prob- for early stopping. abilities for various models. DARN and RBMs are expo- nential in dimensionality of the hiddens or data, whereas 6.1. UCI evaluation suite NADE and MADE are polynomial. MADE only requires one pass through the autoencoder rather than the D passes We use the binary UCI evaluation suite that was first put required by NADE. In practice, we also observe that the together in Larochelle & Murray (2011). It’s a collection single-layer MADE is an order of magnitude faster than a of 7 relatively small datasets from the University of Califor- one-layer NADE, for the same hidden layer size, despite nia, Irvine machine learning repository and the OCR-letters NADE sharing computation to get the same asymptotic dataset from the Stanford AI Lab. Table 2 gives an overview scaling. NADE’s computations cannot be vectorized as of the scale of those datasets and the way they were split. efficiently. The deep versions of MADE also have better The experiments were run with networks of 500 units per scaling than NADE at test time. The training costs for hidden layer, using the adadelta learning update (Zeiler, MADE, DARN, and deep NADE will all be similar. 2012) with a decay of 0.95. The other hyperparameters Before the work on NADE, Bengio & Bengio (2000) pro- were varied as Table 3 indicates. We note as # of masks the posed a neural network architecture that corresponds to the number of different masks through which MADE cycles special case of a single hidden layer MADE model, without during training. In the no limit case, masks are sampled randomization of input ordering and connectivity. A contri- on the fly and never explicitly reused unless re-sampled by bution of our work is to go beyond this special case, explor- chance. In this situation, at validation and test time, 300 and ing deep variants and order/connectivity-agnostic training. 1000 sampled masks are used for averaging probabilities.

7 . MADE: Masked Autoencoder for Distribution Estimation Table 3. UCI Grid Search Table 5. Binarized MNIST Grid Search Hyperparameter Values tried Hyperparameter Values tried # Hidden Layer 1, 2 # Hidden Layer 1, 2 Activation function ReLU, Softplus Learning Rate 0.1, 0.05, 0.01, 0.005 Adadelta epsilon 10−5 , 10−7 , 10−9 # of masks 1, 2, 4, 8, 16, 32, 64 Conditioning Weights True, False # of orderings 1, 8, 16, 32, No Limit 97.0 Test error The results are reported in Table 4. We see that MADE is 96.5 among the best performing models on half of the datasets 96.0 and is competitive otherwise. To reduce clutter, we have not reported standard deviations, which were fairly small and 95.5 consistent across models. However, for completeness we NLL report standard deviations in a separate table in the supple- 95.0 mentary materials. 94.5 An analysis of the hyperparameters selected for each dataset reveals no clear winner. However, we do see from Table 4 94.0 that when the mask sampling helps, it helps quite a bit and 93.5 when it does not, the impact is negligible on all but OCR- letters. Another interesting note is that the conditioning 93.0 0 10 20 30 40 50 60 70 weights had almost no influence except on NIPS-0-12 where # Masks used during training it helped. Figure 2. Impact of the number of masks used with a single hidden 6.2. Binarized MNIST evaluation layer, 500 hidden units network, on binarized MNIST. The version of MNIST we used is the one binarized by Salakhutdinov & Murray (2008). MNIST is a set of 70,000 hand written digits of 28×28 pixels. We use the same split taken from the literature. Again, despite its tractability, as in Larochelle & Murray (2011), consisting of 50,000 for MADE is competitive with other models. Of note is the the training set, 10,000 for the validation set and 10,000 for fact that the best MADE model outperforms the single layer the test set. NADE network, which was otherwise the best model among Experiments were run using the adagrad learning up- those requiring only a single feed-forward pass to compute date (Duchi et al., 2010), with an epsilon of 10−6 . Since log probabilities. MADE is much more efficient than NADE, we considered In these experiments, we clearly observed the over- varying the hidden layer size from 500 to 8000 units. Seeing regularization phenomenon from using too many masks. that increasing the number of units tended to always help, When more than four orderings were used, the deeper vari- we used 8000. Even with such a large hidden layer, our ant of MADE always yielded better results. For the two GPU implementation of MADE was quite efficient. Using layer model, adding masks during training helped up to 64, a single mask, one training epoch requires about 14 and 44 at which point the negative log-likelihood started to increase. seconds, for one hidden layer and two hidden layer MADE We observed a similar pattern for the single layer model, but respectively. Using 32 sampled masks, training time in- in this case the dip was around 8 masks. Figure 2 illustrates creases to 33 and 100 respectively. These timings are all this behaviour more precisely for a single layer MADE with less than our GPU implementation of the 500 hidden units 500 hidden units, trained by only varying the number of NADE model, which requires about 130 seconds per epoch. masks used and the size of the mini-batches (83, 100, 128). These timings were obtained on a K20 NVIDIA GPU. We randomly sampled 100 digits from our best performing Building on what we learned on the UCI experiments, we model from Table 6 and compared them with their nearest set the activation function to be ReLU and the conditioning neighbor in the training set (Figure 3), to ensure that the weights were not used. The hyperparameters that were generated samples are not simple memorization. Each row varied are in Table 5. of digits uses a different mask that was seen at training time The results are reported in Table 6, alongside other results by the network.

8 . MADE: Masked Autoencoder for Distribution Estimation Table 4. Negative log-likelihood test results of different models on multiple datasets. The best result as well as any other result with an overlapping confidence interval is shown in bold. Note that since the variance of DARN was not available, we considered it to be zero. Model Adult Connect4 DNA Mushrooms NIPS-0-12 OCR-letters RCV1 Web MoBernoullis 20.44 23.41 98.19 14.46 290.02 40.56 47.59 30.16 RBM 16.26 22.66 96.74 15.15 277.37 43.05 48.88 29.38 FVSBN 13.17 12.39 83.64 10.27 276.88 39.30 49.84 29.35 NADE (fixed order) 13.19 11.99 84.81 9.81 273.08 27.22 46.66 28.39 EoNADE 1hl (16 ord.) 13.19 12.58 82.31 9.69 272.39 27.32 46.12 27.87 DARN 13.19 11.91 81.04 9.55 274.68 ≈28.17 ≈46.10 ≈28.83 MADE 13.12 11.90 83.63 9.68 280.25 28.34 47.10 28.53 MADE mask sampling 13.13 11.90 79.66 9.69 277.28 30.04 46.74 28.25 Figure 3. Left: Samples from a 2 hidden layer MADE. Right: Nearest neighbour in binarized MNIST. 7. Conclusion Table 6. Negative log-likelihood test results of different models on the binarized MNIST dataset. We proposed MADE, a simple modification of autoencoders Model − log p allowing them to be used as distribution estimators. MADE demonstrates that it is possible to get direct, cheap estimates RBM (500 h, 25 CD steps) ≈ 86.34 of high-dimensional joint probabilities, from a single pass Intractable DBM 2hl ≈ 84.62 DBN 2hl ≈ 84.55 through an autoencoder. Like standard autoencoders, our ex- DARN nh =500 ≈ 84.71 tension is easy to vectorize and implement on GPUs. MADE DARN nh =500, adaNoise ≈ 84.13 can evaluate high-dimensional probably distributions with MoBernoullis K=10 168.95 better scaling than before, while maintaining state-of-the-art MoBernoullis K=500 137.64 statistical performance. NADE 1hl (fixed order) 88.33 Tractable EoNADE 1hl (128 orderings) 87.71 EoNADE 2hl (128 orderings) 85.10 Acknowledgments MADE 1hl (1 mask) 88.40 We thank Marc-Alexandre Cˆot´e for helping to implement MADE 2hl (1 mask) 89.59 MADE 1hl (32 masks) 88.04 NADE in Theano and the whole Theano (Bastien et al., MADE 2hl (32 masks) 86.64 2012; Bergstra et al., 2010) team of contributors. We also thank NSERC, Calcul Qu´ebec and Compute Canada.

9 . MADE: Masked Autoencoder for Distribution Estimation References Larochelle, Hugo and Murray, Iain. The neural autore- gressive distribution estimator. In Proceedings of the Bastien, Fr´ed´eric, Lamblin, Pascal, Pascanu, Razvan, 14th International Conference on Artificial Intelligence Bergstra, James, Goodfellow, Ian J., Bergeron, Arnaud, and Statistics (AISTATS 2011), volume 15, pp. 29–37, Ft. Bouchard, Nicolas, and Bengio, Yoshua. Theano: new Lauderdale, USA, 2011. JMLR W&CP. features and speed improvements. Deep Learning and Unsupervised Feature Learning NIPS 2012 Workshop, Poon, Hoifung and Domingos, Pedro. Sum-product net- 2012. works: A new deep architecture. In Proceedings of the 20th Conference on Uncertainty in Artificial Intelligence Bengio, Yoshua and Bengio, Samy. Modeling high- (UAI 2011), pp. 337–346, 2011. dimensional discrete data with multi-layer neural net- works. In Advances in Neural Information Processing Rezende, Danilo Jimenez, Mohamed, Shakir, and Wierstra, Systems 12 (NIPS 1999), pp. 400–406. MIT Press, 2000. Daan. Stochastic backpropagation and approximate in- ference in deep generative models. In Proceedings of Bengio, Yoshua, Laufer, Eric, Alain, Guillaume, and Yosin- the 31th International Conference on Machine Learning ski, Jason. Deep generative stochastic networks trainable (ICML 2014), pp. 1278–1286, 2014. by backprop. In Proceedings of the 31th Annual Inter- Salakhutdinov, Ruslan and Murray, Iain. On the quantita- national Conference on Machine Learning (ICML 2014), tive analysis of deep belief networks. In Proceedings of pp. 226–234. JMLR.org, 2014. the 25th Annual International Conference on Machine Learning (ICML 2008), pp. 872–879. Omnipress, 2008. Bergstra, James, Breuleux, Olivier, Bastien, Fr´ed´eric, Lam- blin, Pascal, Pascanu, Razvan, Desjardins, Guillaume, Schmah, Tanya, Hinton, Geoffrey E., Zemel, Richard S., Turian, Joseph, Warde-Farley, David, and Bengio, Yoshua. Small, Steven L., and Strother, Stephen C. Generative Theano: a CPU and GPU math expression compiler. In versus discriminative training of RBMs for classification Proceedings of the Python for Scientific Computing Con- of fMRI images. In Advances in Neural Information Pro- ference (SciPy), June 2010. Oral Presentation. cessing Systems 21 (NIPS 2008), pp. 1409–1416, 2009. Dinh, Laurent, Krueger, David, and Bengio, Yoshua. NICE: Srivastava, Nitish, Hinton, Geoffrey, Krizhevsky, Alex, non-linear independent components estimation, 2014. Sutskever, Ilya, and Salakhutdinov, Ruslan. Dropout: arXiv:1410.8516v2. A simple way to prevent neural networks from overfitting. Journal of Machine Learning Research, 15:1929–1958, Duchi, John, Hazan, Elad, and Singer, Yoram. Adaptive 2014. subgradient methods for online learning and stochastic Uria, Benigno, Murray, Iain, and Larochelle, Hugo. optimization. Technical report, EECS Department, Uni- RNADE: The real-valued neural autoregressive density- versity of California, Berkeley, Mar 2010. estimator. In Advances in Neural Information Processing Systems 26 (NIPS 2013), pp. 2175–2183, 2013. Goodfellow, Ian, Pouget-Abadie, Jean, Mirza, Mehdi, Xu, Bing, Warde-Farley, David, Ozair, Sherjil, Courville, Uria, Benigno, Murray, Iain, and Larochelle, Hugo. A deep Aaron, and Bengio, Yoshua. Generative adversarial nets. and tractable density estimator. In Proceedings of the 31th In Advances in Neural Information Processing Systems International Conference on Machine Learning, (ICML 27 (NIPS 2014), pp. 2672–2680. Curran Associates, Inc., 2014), pp. 467–475, 2014. 2014. Uria, Benigno, Murray, Iain, Renals, Steve, and Valentini- Botinhao, Cassia. Modelling acoustic feature dependen- Gregor, Karol and LeCun, Yann. Learning representations cies with artificial neural networks: Trajectory-RNADE. by maximizing compression, 2011. arXiv:1108.1169v1. In Proceedings of the 40th IEEE International Conference Gregor, Karol, Danihelka, Ivo, Mnih, Andriy, Blundell, on Acoustics, Speech and Signal Processing (ICASSP Charles, and Wierstra, Daan. Deep AutoRegressive Net- 2015). IEEE Signal Processing Society, 2015. works. In Proceedings of the 31th Annual International Wan, Li, Zeiler, Matthew D., Zhang, Sixin, LeCun, Yann, Conference on Machine Learning (ICML 2014), pp. 1242– and Fergus, Rob. Regularization of neural networks us- 1250. JMLR.org, 2014. ing dropconnect. In Proceedings of the 30th Interna- tional Conference on Machine Learning, (ICML 2013), Kingma, Diederik P. and Welling, Max. Auto-encoding pp. 1058–1066, 2013. variational bayes. In Proceedings of the 2nd International Conference on Learning Representations (ICLR 2014), Zeiler, Matthew D. ADADELTA: an adaptive learning rate 2014. method, 2012. arXiv:1212.5701v1.

10 . MADE: Masked Autoencoder for Distribution Estimation. Supplementary Material Mathieu Germain MATHIEU . GERMAIN 2@ USHERBROOKE . CA Universit´e de Sherbrooke, Canada Karol Gregor KAROL . GREGOR @ GMAIL . COM Google DeepMind Iain Murray I . MURRAY @ ED . AC . UK University of Edinburgh, United Kingdom Hugo Larochelle HUGO . LAROCHELLE @ USHERBROOKE . CA Universit´e de Sherbrooke, Canada Table 7. Negative log-likelihood and 95% confidence intervals for Table 4 in the main document. MADE EoNADE Dataset Fixed mask Mask sampling 16 ord. Adult 13.12±0.05 13.13±0.05 13.19±0.04 Connect4 11.90±0.01 11.90±0.01 12.58±0.01 DNA 83.63±0.52 79.66±0.63 82.31±0.46 Mushrooms 9.68±0.04 9.69±0.03 9.69±0.03 NIPS-0-12 280.25±1.05 275.92±1.01 272.39±1.08 Ocr-letters 28.34±0.22 30.04±0.22 27.32±0.19 RCV1 47.10±0.11 46.74±0.11 46.12±0.11 Web 28.53±0.20 28.25±0.20 27.87±0.20 Table 8. Binarized MNIST negative log-likelihood and 95% confi- dence intervals for Table 6 in the main document. Model MADE 1hl (1 mask) 88.40±0.45 MADE 2hl (1 mask) 89.59±0.46 MADE 1hl (32 masks) 88.04±0.44 MADE 2hl (32 masks) 86.64±0.44