- 快召唤伙伴们来围观吧

- 微博 QQ QQ空间 贴吧

- 文档嵌入链接

- 复制

- 微信扫一扫分享

- 已成功复制到剪贴板

Non-Autoregressive Machine Translation with Auxiliary Regularization

展开查看详情

1 . Non-Autoregressive Machine Translation with Auxiliary Regularization 1 Yiren Wang∗ , 2 Fei Tian, 3 Di He, 2 Tao Qin, 1 ChengXiang Zhai, 2 Tie-Yan Liu 1 University of Illinois at Urbana-Champaign, Urbana, IL, USA 2 Microsoft Research, Beijing, China 3 Key Laboratory of Machine Perception, MOE, School of EECS, Peking University, Beijing, China 1 {yiren,czhai}@illinois.edu 2 {fetia, taoqin, tie-yan.liu}@microsoft.com 3 di he@pku.edu.cn Abstract Therefore, during the inference process, we have to sequen- tially translate each target-side word one by one, which sub- As a new neural machine translation approach, Non- stantially hampers the inference efficiency of NMT systems. Autoregressive machine Translation (NAT) has attracted at- tention recently due to its high efficiency in inference. How- To alleviate the latency of inference brought by auto- ever, the high efficiency has come at the cost of not capturing regressive decoding, recently the community has turned to the sequential dependency on the target side of translation, Non-Autoregressive Translation (NAT) systems (Gu et al. which causes NAT to suffer from two kinds of translation er- 2018; Lee, Mansimov, and Cho 2018; Kaiser et al. 2018). rors: 1) repeated translations (due to indistinguishable adja- A basic NAT model has the same encoder-decoder archi- cent decoder hidden states), and 2) incomplete translations tecture as the autoregressive translation (AT) model, except (due to incomplete transfer of source side information via the that the sequential dependency within the target side sen- decoder hidden states). In this paper, we propose to address tence is omitted. In this way, all the tokens can be gen- these two problems by improving the quality of decoder hid- den representations via two auxiliary regularization terms in erated in parallel, and the inference speed is thus signifi- the training process of an NAT model. First, to make the hid- cantly boosted. However, it comes at the cost that the trans- den states more distinguishable, we regularize the similarity lation quality is largely sacrificed since the intrinsic depen- between consecutive hidden states based on the correspond- dency within the natural language sentence is abandoned. To ing target tokens. Second, to force the hidden states to contain mitigate such performance degradation, previous work has all the information in the source sentence, we leverage the tried different ways to insert intermediate discrete variables dual nature of translation tasks (e.g., English to German and to the basic NAT model, so as to incorporate some light- German to English) and minimize a backward reconstruction weighted sequential information into the non-autoregressive error to ensure that the hidden states of the NAT decoder are decoder. The discrete variables include the autoregressively able to recover the source side sentence. Extensive experi- generated latent variables (Kaiser et al. 2018), and the fer- ments conducted on several benchmark datasets show that both regularization strategies are effective and can alleviate tility information brought by a third-party word alignment the issues of repeated translations and incomplete translations model (Gu et al. 2018). However, leveraging such discrete in NAT models. The accuracy of NAT models is therefore variables not only brings additional difficulty for optimiza- improved significantly over the state-of-the-art NAT models tion, but also slows down the translation by introducing extra with even better efficiency for inference. computational cost for producing such discrete variables. In this paper, we propose a new solution to the problem Introduction that does not rely on any discrete variables and makes lit- tle revision to the basic NAT model, thus retaining most of Neural Machine Translation (NMT) based on deep neural the benefit of an NAT model. Our approach was motivated networks has gained rapid progress over recent years (Cho et by the following result we obtained from carefully analyz- al. 2014; Bahdanau, Cho, and Bengio 2014; Wu et al. 2016; ing the key issues existed in the basic NAT model. We em- Vaswani et al. 2017; Hassan et al. 2018). NMT systems are pirically observed that the two types of translation errors typically implemented in an encoder-decoder framework, frequently made by the basic NAT model are: 1) repeated in which the encoder network feeds the representations of translation, where the same token is generated repeatedly at source side sentence x into the decoder network to gener- consecutive time steps; 2) incomplete translation, where the ate the tokens in target sentence y. The decoder typically semantics of several tokens/sentence pieces from the source works in an auto-regressive manner: the generation of the t- sentence are not adequately translated. Both issues suggest th token yt follows a conditional distribution P (yt |x, y<t ), that the decoder hidden states in NAT model, i.e., the hid- where y<t represents all the generated tokens before yt . den states output in the topmost layer of the decoder, are of ∗ The work was done when the author was an intern at Microsoft low quality: the repeated translation shows that two adjacent Research Asia. hidden states are indistinguishable, leading to the same to- Copyright c 2019, Association for the Advancement of Artificial kens decoded out, while the incomplete translation reflects Intelligence (www.aaai.org). All rights reserved. that the hidden states in the decoder are incomplete in repre-

2 .senting source side information. machine translation system specifies a conditional distribu- Such a poor quality of decoder hidden states is in fact a tion P (y|x) of the likelihood of translating source side sen- direct consequence of the non-autoregressive nature of the tence x into target sentence y. There are typically two parts model: at each time step, the hidden states have no access in NMT: the encoder network and the decoder network. The to their prior decoded states, making them in a ‘chaotic’ encoder reads the source side sentence x = (x1 , · · · , xTx ) state being unaware of what has and has not been translated. with Tx tokens, and processes it into context vectors which Therefore, it is difficult for the neural network to learn good are then fed into the decoder network. The decoder builds hidden representations all by itself. Thus, in order to im- the conditional distribution P (yt |y<t , x) at each decoding prove the quality of the decoder representations, we must go time-step t, where y<t represents the set of generated tokens beyond the pure non-autoregressive models. The challenge, before time-step t. The final distribution P (y|x) is then in Ty though, is how to improve the quality of decoder representa- the factorized form P (y|x) = t=1 P (yt |y<t , x), with y tions to address the two problems identified above while still containing Ty target side tokens y = (y1 , · · · , yTy ). keeping the benefit of efficient inference of the NAT models. The NMT suffers from the high inference latency, which We propose to address this challenge by directly regular- limits its application in the real world scenarios. The main izing the learning of the decoder representations using two bottleneck comes from its autoregressive nature of the se- auxiliary regularization terms for model training. First, to quence generation: each target side token is generated one overcome the problem of repeated translation, we propose to by one, which prevents parallelism during inference, and force the similarity of two neighboring hidden state vectors thus the computational power of GPU cannot be fully ex- to be well aligned with the similarity of the embedding vec- ploited. Recent research efforts have been resorted to solve tors representing the two corresponding target tokens they the huge latency issue in the decoding process. In the do- aim to predict. We call this regularization strategy similar- main of speech synthesis, parallel wavenet (van den Oord et ity regularization. Second, to overcome the problem of in- al. 2018) successfully achieves the parallel sampling based complete translation, inspired by the dual nature of machine on inverse autoregressive flows (Kingma et al. 2016) and translation task (He et al. 2016), we propose to impose that knowledge distillation (Hinton, Vinyals, and Dean 2015). the hidden representations should be able to reconstruct the In NMT, the non-autoregressive neural machine translation source side sentence, which can be achieved by putting an (NAT) model has been developed recently (Gu et al. 2018; auto-regressive backward translation model on top of the de- Lee, Mansimov, and Cho 2018; Kaiser et al. 2018). In NAT, coder; the backward translation model would “demand” the all the tokens within one target side sentence are gener- decoder hidden representations of NAT to contain enough ated in parallel, without any limitation of sequential de- information about the source. We call this regularization pendency. The inference speed has thus been significantly strategy reconstruction regularization. Both regularization boosted (e.g., tens of times faster than the typical autore- terms only appear in the training process and have no ef- gressive NMT model) and for the sake of maintaining trans- fect during inference, thus bringing no additional computa- lation quality, there are several technical innovations in the tional/time cost to inference and allowing us to retain the NAT model design from previous exploration: major benefit of an NAT model. Meanwhile, the direct reg- ularization on the hidden states effectively improves their • Sequence level knowledge distillation. NAT model is typ- representation. In contrast, the existing approaches would ically trained with the help from an autoregressive trans- need either a third-party word alignment module (Gu et al. lation (AT) model as its teacher. The knowledge of the 2018) (hindering end-to-end learning), or a special compo- AT model is distilled to the NAT model via the sequence nent handling the discrete variables (e.g., several softmax level distillation technique (Kim and Rush 2016) which is operators in (Lee, Mansimov, and Cho 2018)) which brings essentially using the sampled translation from the teacher additional latency for decoding. model, out from the source side sentences, as the bilin- gual training data. It has been reported previously (Gu We evaluate the proposed regularization strategies by con- et al. 2018), and is also consistent with our empirical ducting extensive experiments on several benchmark ma- studies that training NAT with such distilled data per- chine translation datasets. The experiment results show that forms much better than the original ground truth data, both regularization strategies are effective and they can al- or the mixture of ground truth and distilled translations. leviate the issues of repeated translations and incomplete There has been no precise theoretical justification, but translations in NAT models, leading to improved NAT mod- an intuitive explanation is that the NAT model suffers els that can improve accuracy substantially over the state- from the ‘multimodality’ problem (Gu et al. 2018) – there of-the-art NAT models without sacrificing efficiency. We might be multiple valid translations for the same source set a new state-of-the-art performance of non-autoregressive word/phrase/sentence. The distilled data via the teacher translation models on the WMT14 datasets, with 24.61 model eschew such problem since the output of a neural BLEU in En-De and 28.90 in De-En. network is less noisy and more ‘deterministic’. Background • Model architecture modification. The NAT model is sim- ilar in the framework of encoder and decoder but has sev- Neural machine translation (Bahdanau, Cho, and Bengio eral differences from the autoregressive model, including 2014) (NMT) has been the widely adopted machine transla- (a) the causal mask of decoder, which prevents the ear- tion approach within both academia and industry. A neural lier decoding steps from accessing later information, is re-

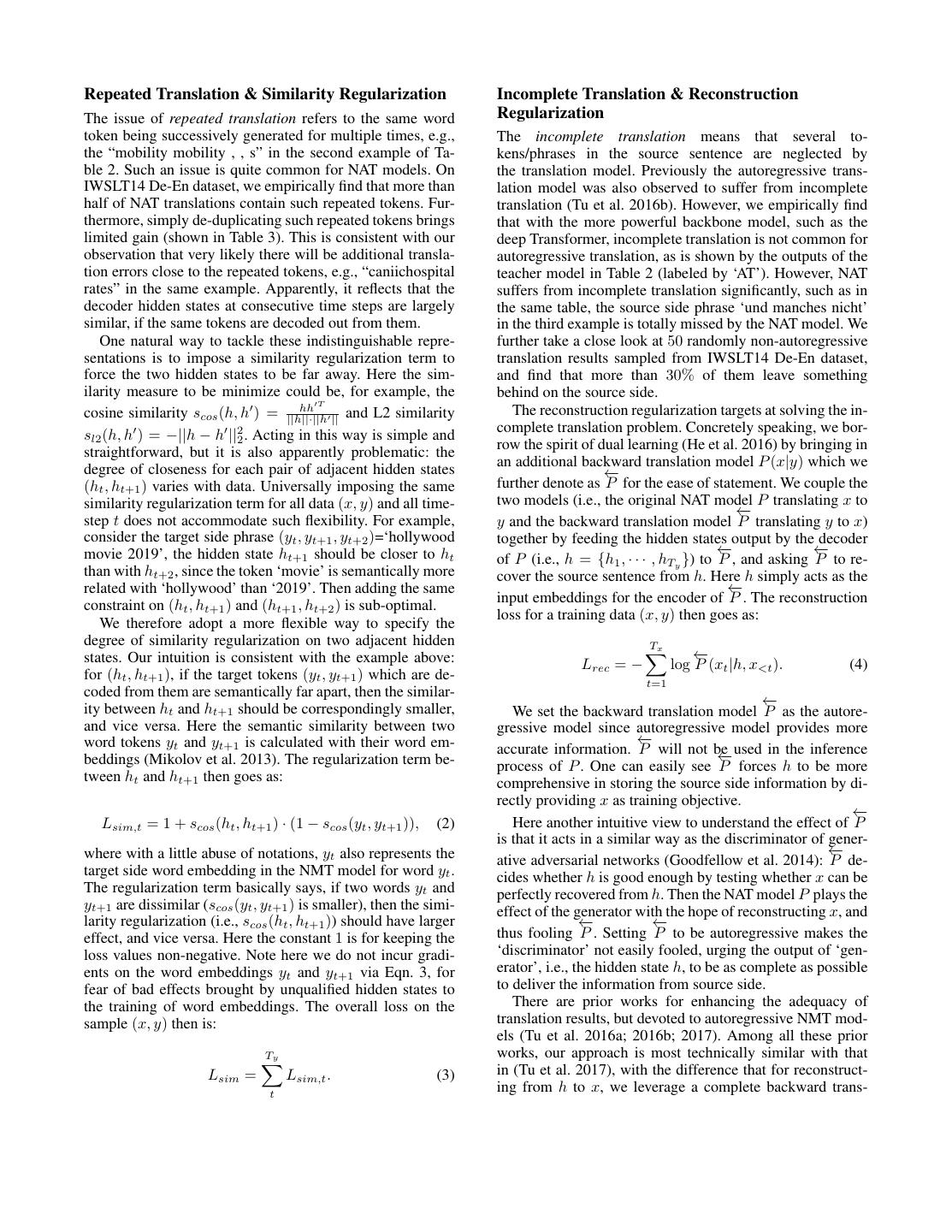

3 .Figure 1: The overall architecture of NAT model with auxiliary regularization terms. AT stands for autoregressive translation. Each sublayer of the encoder/decoder contains a residual connection and layer normalization following (Vaswani et al. 2017). moved; (b) positional attention is leveraged in the decoder prior works with two simple regularization terms. As a pre- to enhance position information, where the positional em- requisite, since there are no discrete variables available to beddings (Vaswani et al. 2017) are used as query and key, indicate some sequential information, we need new mecha- and hidden representations from the previous layers are nisms to predict the target length during inference and gen- used as value (Gu et al. 2018). erate inputs for the decoder, which are necessary for the • Discrete variables to aid NAT model training and infer- NAT model since the information fed into the decoder (i.e., ence. There are intermediate discrete variables in previous the word embeddings of previous target side tokens in au- NAT models, which aim to make up the loss of sequen- toregressive model) is unknown due to parallel decoding. tial information in the non-autoregressive decoding. The Target length prediction during inference. Instead of esti- representative examples include the fertility value to in- mating target length Ty with fertility values (Gu et al. 2018), dicate the number of copying each source side token (Gu or with a separate model p(Ty |x) (Lee, Mansimov, and Cho et al. 2018), and the discrete latent variables autoregres- 2018), we use a simple yet effective method as (Anony- sively generated (Kaiser et al. 2018). Additional difficulty mous 2019) that leverages source length to determine target arises due to the discreteness, and thus specially designed length. During inference, the target side length Ty is pre- optimization processes are necessary such as variational dicted as Ty = Tx + ∆T , where Tx is the length of source method (Gu et al. 2018; Lee, Mansimov, and Cho 2018) sentence, ∆T is a constant bias term that can be set accord- and vector quantization (Kaiser et al. 2018). ing to the overall length statistics of the training data. Generate decoder input with uniform mapping. Different from other works relying on the fertility (Gu et al. 2018), Model we adopt a simple way of uniformly mapping the source We introduce the details of our proposed approaches to non- side word embedding as the inputs to the decoder. Specif- autoregressive neural machine translation in this section. ically, given source sentence x = {x1 , x2 , ..., xTx } and tar- The overall model architecture is depicted in Fig. 1. get length Ty , we uniformly map the embeddings (denoted We use the basic NAT model with the same encoder- as E(·)) of the source tokens to generate decoder input with: decoder architecture as the AT model, and follow two of Tx the aforementioned techniques of training NAT models. zk = E(xi ), i = t , t = 1, 2, ..., Ty . (1) First, we train our NAT models based on the sequence- Ty level knowledge distillation. Second, we obey the aforemen- We use this variant of the basic NAT model as our back- tioned model variants specially designed for NAT, such as bone model (denoted as “NAT-BASE”). We now turn to ana- the positional attention and removing causal mask in the lyze the two commonly observed translation issues of NAT- decoder (Gu et al. 2018). What makes our solution unique BASE and introduce the corresponding solutions to tackle is that we replace the hard-to-optimize discrete variables in them.

4 .Repeated Translation & Similarity Regularization Incomplete Translation & Reconstruction The issue of repeated translation refers to the same word Regularization token being successively generated for multiple times, e.g., The incomplete translation means that several to- the “mobility mobility , , s” in the second example of Ta- kens/phrases in the source sentence are neglected by ble 2. Such an issue is quite common for NAT models. On the translation model. Previously the autoregressive trans- IWSLT14 De-En dataset, we empirically find that more than lation model was also observed to suffer from incomplete half of NAT translations contain such repeated tokens. Fur- translation (Tu et al. 2016b). However, we empirically find thermore, simply de-duplicating such repeated tokens brings that with the more powerful backbone model, such as the limited gain (shown in Table 3). This is consistent with our deep Transformer, incomplete translation is not common for observation that very likely there will be additional transla- autoregressive translation, as is shown by the outputs of the tion errors close to the repeated tokens, e.g., “caniichospital teacher model in Table 2 (labeled by ‘AT’). However, NAT rates” in the same example. Apparently, it reflects that the suffers from incomplete translation significantly, such as in decoder hidden states at consecutive time steps are largely the same table, the source side phrase ‘und manches nicht’ similar, if the same tokens are decoded out from them. in the third example is totally missed by the NAT model. We One natural way to tackle these indistinguishable repre- further take a close look at 50 randomly non-autoregressive sentations is to impose a similarity regularization term to translation results sampled from IWSLT14 De-En dataset, force the two hidden states to be far away. Here the sim- and find that more than 30% of them leave something ilarity measure to be minimize could be, for example, the behind on the source side. hh T The reconstruction regularization targets at solving the in- cosine similarity scos (h, h ) = ||h||·||h || and L2 similarity complete translation problem. Concretely speaking, we bor- sl2 (h, h ) = −||h − h ||22 . Acting in this way is simple and row the spirit of dual learning (He et al. 2016) by bringing in straightforward, but it is also apparently problematic: the an additional backward translation model P (x|y) which we degree of closeness for each pair of adjacent hidden states ← − (ht , ht+1 ) varies with data. Universally imposing the same further denote as P for the ease of statement. We couple the similarity regularization term for all data (x, y) and all time- two models (i.e., the original NAT model P translating x to ←− step t does not accommodate such flexibility. For example, y and the backward translation model P translating y to x) consider the target side phrase (yt , yt+1 , yt+2 )=‘hollywood together by feeding the hidden states output by the decoder movie 2019’, the hidden state ht+1 should be closer to ht ← − ← − of P (i.e., h = {h1 , · · · , hTy }) to P , and asking P to re- than with ht+2 , since the token ‘movie’ is semantically more cover the source sentence from h. Here h simply acts as the related with ‘hollywood’ than ‘2019’. Then adding the same ←− input embeddings for the encoder of P . The reconstruction constraint on (ht , ht+1 ) and (ht+1 , ht+2 ) is sub-optimal. loss for a training data (x, y) then goes as: We therefore adopt a more flexible way to specify the degree of similarity regularization on two adjacent hidden Tx states. Our intuition is consistent with the example above: ← − Lrec = − log P (xt |h, x<t ). (4) for (ht , ht+1 ), if the target tokens (yt , yt+1 ) which are de- t=1 coded from them are semantically far apart, then the similar- ← − ity between ht and ht+1 should be correspondingly smaller, We set the backward translation model P as the autore- and vice versa. Here the semantic similarity between two gressive model since autoregressive model provides more word tokens yt and yt+1 is calculated with their word em- ← − accurate information. P will not be − used in the inference ← beddings (Mikolov et al. 2013). The regularization term be- process of P . One can easily see P forces h to be more tween ht and ht+1 then goes as: comprehensive in storing the source side information by di- rectly providing x as training objective. ←− Lsim,t = 1 + scos (ht , ht+1 ) · (1 − scos (yt , yt+1 )), (2) Here another intuitive view to understand the effect of P is that it acts in a similar way as the discriminator of gener- where with a little abuse of notations, yt also represents the ←− ative adversarial networks (Goodfellow et al. 2014): P de- target side word embedding in the NMT model for word yt . cides whether h is good enough by testing whether x can be The regularization term basically says, if two words yt and perfectly recovered from h. Then the NAT model P plays the yt+1 are dissimilar (scos (yt , yt+1 ) is smaller), then the simi- effect of the generator with the hope of reconstructing x, and larity regularization (i.e., scos (ht , ht+1 )) should have larger ←− ←− effect, and vice versa. Here the constant 1 is for keeping the thus fooling P . Setting P to be autoregressive makes the loss values non-negative. Note here we do not incur gradi- ‘discriminator’ not easily fooled, urging the output of ‘gen- ents on the word embeddings yt and yt+1 via Eqn. 3, for erator’, i.e., the hidden state h, to be as complete as possible fear of bad effects brought by unqualified hidden states to to deliver the information from source side. the training of word embeddings. The overall loss on the There are prior works for enhancing the adequacy of sample (x, y) then is: translation results, but devoted to autoregressive NMT mod- els (Tu et al. 2016a; 2016b; 2017). Among all these prior Ty works, our approach is most technically similar with that Lsim = Lsim,t . (3) in (Tu et al. 2017), with the difference that for reconstruct- t ing from h to x, we leverage a complete backward trans-

5 .lation model based on encoder-decoder framework, while Baselines We include three latest NAT works as our base- they adopt a simple decoder without encoder. We empiri- lines, the NAT with fertility (NAT-FT) (Gu et al. 2018), the cally find that such an encoder cannot be dropped in the sce- NAT with iterative refinement (NAT-IR) (Lee, Mansimov, nario of helping NAT models, which we conjecture is due and Cho 2018) and the NAT with discrete latent variables to the better expressiveness brought by the encoder. Another (LT) (Kaiser et al. 2018). For all our four tasks, we obtain difference is that they furthermore leverage the reconstruc- the baseline performance by either directly using the per- tion score to assist the inference process via re-ranking dif- formance figures reported in the previous works if they are ferent candidates, while we do not perform such a step since available or producing them by using the open source imple- it hurts the efficiency of inference. mentation of baseline algorithms on our datasets. Joint Training Model Settings For the teacher model, we follow the same setup with previous NAT works to adopt the Trans- In the training process, all the two regularization terms are former model (Vaswani et al. 2017) as teacher model for combined with the commonly used cross-entropy loss Lce . both sequence-level knowledge distillation and inference re- For a training data (x, y) in which y is the sampled transla- scoring. We use the same model size and hyperparameters tion of x from the teacher model, the total loss is: for each NAT model and its respective AT teacher. Here we note that several baseline results are fairly weak prob- L = Lce + αLsim + βLrec (5) ably due to the weak teacher models. For example, (Gu et al. 2018) reports an AT teacher with 23.45 BLEU for Ty WMT14 En-De task, while the official number of Trans- Lce = log P (yt |x) (6) former is 27.3 (Vaswani et al. 2017). For fair comparison, t=1 we bring in a weakened AT teacher model with the same where α and β are the trade-off parameters, Lsim and Lrec model architecture, yet sub-optimal performances similar to respectively denotes the similarity regularization in Eqn.3 the teacher models in previous works. We rerun our algo- and the reconstruction regularization in Eqn. 4. We denote rithms with such weakened teacher models. our model with the two regularization terms as “NAT-REG” For the NAT model, we similarly adopt the Transformer architecture. For WMT datasets, we use the hyperparame- Evaluation ter settings of base Transformer model in (Vaswani et al. 2017). For IWSLT14 DE-EN, we use the small Trans- We use multiple benchmark datasets to evaluate the effec- former setting with a 5-layer encoder and 5-layer decoder tiveness of the proposed approach. We compare the pro- (size of hidden states and embeddings is 256, and the num- posed approach with multiple baseline approaches that rep- ber of attention heads is 4). For IWSLT16 EN-DE, we use resent the state-of-the-art in terms of both translation accu- a slightly different version of small settings with 5 layers racy and efficiency, and analyze the effectiveness of the two from (Gu et al. 2018), where size of hidden states and em- regularization strategies. As we will show, the strategies are beddings are 278, number of attention heads is 2. For our effective and can lead to substantial improvements of trans- models with reconstruction regularization, the backward AT lation accuracy with even better efficiency. models share word embeddings and the same model size with the NAT model. Our models are implemented based Experiment Design on the official TensorFlow implementation of Transformer2 . Datasets We use several widely adopted benchmark For sequence-level distillation, we set beam size to be 4. datasets to evaluate the effectiveness of our proposed For our model NAT-REG, we determine the trade-off param- method: the IWSLT14 German to English translation eters, i.e, α and β in Eqn. 5 by the BLEU on the IWSLT14 (IWSLT14 De-En), the IWSLT16 English to German De-En dev set, and use the same values for all other datasets. (IWSLT16 En-De), and the WMT14 English to German The optimal values are α = 2 and β = 0.5. (WMT14 En-De) and German to English (WMT14 De-En) which share the same dataset. For IWSLT16 En-De, we fol- Training and Inference low the dataset construction in (Gu et al. 2018; Lee, Man- We train all models using Adam following the optimizer set- simov, and Cho 2018) with roughly 195k/1k/1k parallel tings and learning rate schedule in Transformer(Vaswani et sentences as training/dev/test sets respectively. We use the al. 2017). We run the training procedure on 8/1 Nvidia M40 fairseq recipes1 for preprocessing data and creating dataset GPUs for WMT and IWSLT datasets respectively. Distilla- split for the rest datasets. The IWSLT14 De-En contains tion and inference are run on 1 GPU. roughly 153k/7k/7k parallel sentences as training/dev/test For inference, we follow the common practice of noisy sets. The WMT14 En-De/De-En dataset is much larger with parallel decoding (Gu et al. 2018), which generates a number 4.5M parallel training pairs. We use Newstest2014 as test of decoding candidates in parallel and selects the best trans- set and Newstest2013 as dev set. We use byte pair encoding lation via re-scoring using AT teacher. In our scenario, we (BPE) (Sennrich, Haddow, and Birch 2016) to segment word generate multiple translation candidates by predicting differ- tokens into subword units, forming a 32k-subword vocabu- ent target lengths Ty ∈ [Tx +∆T −B, Tx +∆T +B], which lary shared by source and target languages for each dataset. 2 https://github.com/tensorflow/ 1 https://github.com/pytorch/fairseq tensor2tensor.

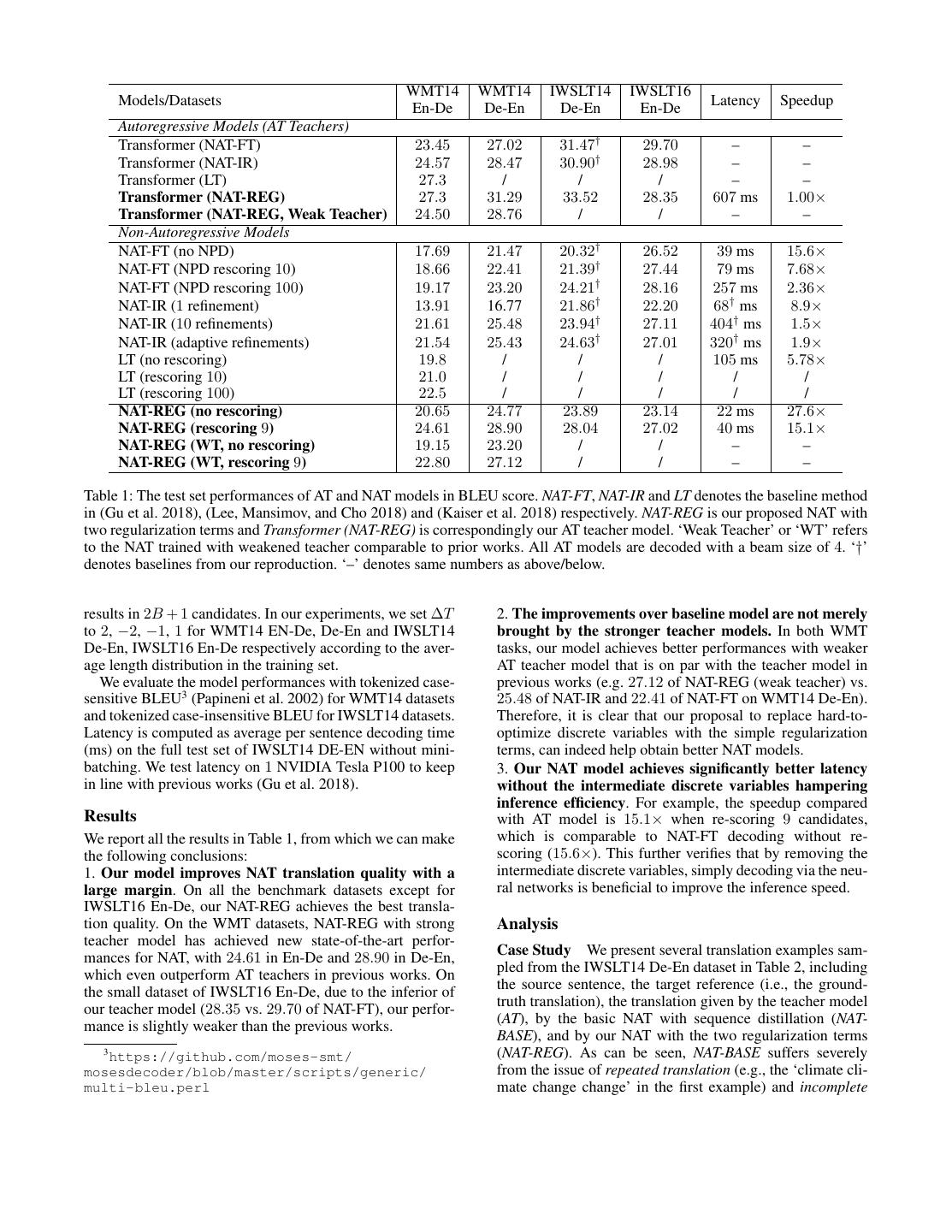

6 . WMT14 WMT14 IWSLT14 IWSLT16 Models/Datasets Latency Speedup En-De De-En De-En En-De Autoregressive Models (AT Teachers) Transformer (NAT-FT) 23.45 27.02 31.47† 29.70 – – Transformer (NAT-IR) 24.57 28.47 30.90† 28.98 – – Transformer (LT) 27.3 / / / – – Transformer (NAT-REG) 27.3 31.29 33.52 28.35 607 ms 1.00× Transformer (NAT-REG, Weak Teacher) 24.50 28.76 / / – – Non-Autoregressive Models NAT-FT (no NPD) 17.69 21.47 20.32† 26.52 39 ms 15.6× NAT-FT (NPD rescoring 10) 18.66 22.41 21.39† 27.44 79 ms 7.68× NAT-FT (NPD rescoring 100) 19.17 23.20 24.21† 28.16 257 ms 2.36× NAT-IR (1 refinement) 13.91 16.77 21.86† 22.20 68† ms 8.9× NAT-IR (10 refinements) 21.61 25.48 23.94† 27.11 404† ms 1.5× NAT-IR (adaptive refinements) 21.54 25.43 24.63† 27.01 320† ms 1.9× LT (no rescoring) 19.8 / / / 105 ms 5.78× LT (rescoring 10) 21.0 / / / / / LT (rescoring 100) 22.5 / / / / / NAT-REG (no rescoring) 20.65 24.77 23.89 23.14 22 ms 27.6× NAT-REG (rescoring 9) 24.61 28.90 28.04 27.02 40 ms 15.1× NAT-REG (WT, no rescoring) 19.15 23.20 / / – – NAT-REG (WT, rescoring 9) 22.80 27.12 / / – – Table 1: The test set performances of AT and NAT models in BLEU score. NAT-FT, NAT-IR and LT denotes the baseline method in (Gu et al. 2018), (Lee, Mansimov, and Cho 2018) and (Kaiser et al. 2018) respectively. NAT-REG is our proposed NAT with two regularization terms and Transformer (NAT-REG) is correspondingly our AT teacher model. ‘Weak Teacher’ or ‘WT’ refers to the NAT trained with weakened teacher comparable to prior works. All AT models are decoded with a beam size of 4. ‘†’ denotes baselines from our reproduction. ‘–’ denotes same numbers as above/below. results in 2B + 1 candidates. In our experiments, we set ∆T 2. The improvements over baseline model are not merely to 2, −2, −1, 1 for WMT14 EN-De, De-En and IWSLT14 brought by the stronger teacher models. In both WMT De-En, IWSLT16 En-De respectively according to the aver- tasks, our model achieves better performances with weaker age length distribution in the training set. AT teacher model that is on par with the teacher model in We evaluate the model performances with tokenized case- previous works (e.g. 27.12 of NAT-REG (weak teacher) vs. sensitive BLEU3 (Papineni et al. 2002) for WMT14 datasets 25.48 of NAT-IR and 22.41 of NAT-FT on WMT14 De-En). and tokenized case-insensitive BLEU for IWSLT14 datasets. Therefore, it is clear that our proposal to replace hard-to- Latency is computed as average per sentence decoding time optimize discrete variables with the simple regularization (ms) on the full test set of IWSLT14 DE-EN without mini- terms, can indeed help obtain better NAT models. batching. We test latency on 1 NVIDIA Tesla P100 to keep 3. Our NAT model achieves significantly better latency in line with previous works (Gu et al. 2018). without the intermediate discrete variables hampering inference efficiency. For example, the speedup compared Results with AT model is 15.1× when re-scoring 9 candidates, We report all the results in Table 1, from which we can make which is comparable to NAT-FT decoding without re- the following conclusions: scoring (15.6×). This further verifies that by removing the 1. Our model improves NAT translation quality with a intermediate discrete variables, simply decoding via the neu- large margin. On all the benchmark datasets except for ral networks is beneficial to improve the inference speed. IWSLT16 En-De, our NAT-REG achieves the best transla- tion quality. On the WMT datasets, NAT-REG with strong Analysis teacher model has achieved new state-of-the-art perfor- mances for NAT, with 24.61 in En-De and 28.90 in De-En, Case Study We present several translation examples sam- which even outperform AT teachers in previous works. On pled from the IWSLT14 De-En dataset in Table 2, including the small dataset of IWSLT16 En-De, due to the inferior of the source sentence, the target reference (i.e., the ground- our teacher model (28.35 vs. 29.70 of NAT-FT), our perfor- truth translation), the translation given by the teacher model mance is slightly weaker than the previous works. (AT), by the basic NAT with sequence distillation (NAT- BASE), and by our NAT with the two regularization terms 3 https://github.com/moses-smt/ (NAT-REG). As can be seen, NAT-BASE suffers severely mosesdecoder/blob/master/scripts/generic/ from the issue of repeated translation (e.g., the ‘climate cli- multi-bleu.perl mate change change’ in the first example) and incomplete

7 . Source: bei der coalergy sehen wir klimaver¨anderung als eine ernste gefahr f¨ur unser gesch¨aft . Reference: at coalergy we view climate change as a very serious threat to our business . AT: in coalergy , we see climate change as a serious threat to our business . NAT-BASE: in the coalergy , we 'll see climate climate change change as a most serious danger for our business . NAT-REG: at coalergy , we 're seeing climate change as a serious threat to our business . Source: dies ist die großartigste zeit , die es je auf diesem planeten gab , egal , welchen maßstab sie anlegen :gesundheit , reichtum , mobilit¨at , gelegenheiten , sinkende krankheitsraten . Reference: this is the greatest time there 's ever been on this planet by any measure that you wish to choose : health , wealth , mobility , opportunity , declining rates of disease . AT: this is the greatest time you 've ever had on this planet , no matter what scale you 're putting : health , wealth , mobility , opportunities , declining disease rates . NAT-BASE: this is the most greatest time that ever existed on this planet no matter what scale they 're imsi : : , , mobility mobility , , scaniichospital rates . NAT-REG: this is the greatest time that we 've ever been on this planet no matter what scale they 're ianition : health , wealth , mobility , opportunities , declining disease rates . Source: und manches davon hat funktioniert und manches nicht . Reference: and some of it worked , and some of it didn 't . AT: and some of it worked and some of it didn 't work . NAT-BASE: and some of it worked . NAT-REG: and some of it worked and some not . Table 2: Translation examples from IWSLT14 De-En task. The AT result is decoded with a beam size of 4 and NAT results are generated by re-scoring 9 candidates. We use the italic fonts to indicate the translation pieces where the NAT has the issue of incomplete translation, and bond fonts to indicate the issue of repeated translation. Model variants BLEU errors (repeated translation and incomplete translation) can NAT-BASE 28.73 be correlated to some extent and tackling one might help the NAT-BASE + de-duplication 29.45 alleviation of the other. For example, intuitively, given the NAT-BASE + universally penalize similarity 28.32 fixed target side length Ty , if repeated tokens are removed, NAT-BASE + similarity regularization 30.02 more ‘valid’ tokens will occupy the position, consequently NAT-BASE + reconstruction regularization 30.21 reducing the possibility of incomplete translation. NAT-BASE + both regularizations 30.84 Furthermore, we evaluate the effectiveness of alleviating repeated translations with our proposed approach. We count Table 3: Ablation study on IWLST14 De-En dev set. Results the number of de-duplication (de-dup) operations (for ex- are BLEU scores with teacher rescoring 9 candidates. ample, there are 2 de-dup operations for “we 'll see climate climate change change”). The per-sentence de-dup operations in IWSLT14 De-En dev set are 2.3 with NAT- translation (e.g., incomplete translation of ‘and some of it BASE, which has dropped to 0.9 with the introduction of didn 't’ in the third example), while with the two similarity regularization, clearly indicating the effectiveness auxiliary regularization terms brought in, the two issues are of the similarity regularization. largely alleviated. Ablation Study To further study the effects brought by Conclusions different techniques, we show in Table 3 the translation per- In this paper, we proposed two simple regularization strate- formance of different NAT model variants for the IWSLT14 gies to improve the performance of non-autoregressive ma- De-En translation task. We see that the BLEU of the basic chine translation (NAT) models, the similarity regularization NAT model could be enhanced via either of the two reg- and reconstruction regularization, which have been shown ularization terms by about 1 point. As a comparison, sim- to be effective for addressing two major problems of NAT ply de-duplicating the repeated tokens brings certain level models, i.e., the repeated translation and incomplete transla- of gain, and with the universal regularization that simply tion, respectively, consequently leading to quite strong per- penalizes the cosine similarity of all (ht , ht+1 ), the perfor- formance with fast decoding speed. mance even drops (from 28.73 to 28.32). When combining While the two regularization strategies were proposed to both regularization strategies, the BLEU score goes higher, improve NAT models, they may be generally applicable to which shows that the two regularization strategies are some- other sequence generation models. Exploring such poten- what complementary to each other. A noticeable fact is that tial would be an interesting direction for future research. the gain of combining both regularization strategies (about For example, we can apply our methods to other sequence 2.1) is lower than the sum of each individual gain (about generation tasks such as image caption and text summa- 2.8). One possible explanation may be that the two types of rization, with the hope of successfully deploying the non-

8 .autoregressive models into various real-world applications. autoregressive neural sequence modeling by iterative refine- We also plan to break the upper bound of the autoregressive ment. arXiv preprint arXiv:1802.06901. teacher model and obtain better performance than the au- Mikolov, T.; Sutskever, I.; Chen, K.; Corrado, G. S.; and toregressive NMT model, which is possible since there is no Dean, J. 2013. Distributed representations of words and gap between training and inference (i.e., the exposure bias phrases and their compositionality. In Advances in neural problem for autoregressive sequence generation (Ranzato et information processing systems, 3111–3119. al. 2015)) in NAT models. Papineni, K.; Roukos, S.; Ward, T.; and Zhu, W.-J. 2002. Bleu: a method for automatic evaluation of machine transla- References tion. In Proceedings of the 40th annual meeting on associa- Anonymous. 2019. Hint-based training for non- tion for computational linguistics, 311–318. Association for autoregressive translation. In Submitted to International Computational Linguistics. Conference on Learning Representations. under review. Ranzato, M.; Chopra, S.; Auli, M.; and Zaremba, W. 2015. Bahdanau, D.; Cho, K.; and Bengio, Y. 2014. Neural ma- Sequence level training with recurrent neural networks. chine translation by jointly learning to align and translate. arXiv preprint arXiv:1511.06732. arXiv preprint arXiv:1409.0473. Sennrich, R.; Haddow, B.; and Birch, A. 2016. Neural ma- Cho, K.; van Merrienboer, B.; Gulcehre, C.; Bahdanau, D.; chine translation of rare words with subword units. In Pro- Bougares, F.; Schwenk, H.; and Bengio, Y. 2014. Learning ceedings of the 54th Annual Meeting of the Association for phrase representations using rnn encoder–decoder for statis- Computational Linguistics (Volume 1: Long Papers), vol- tical machine translation. In Proceedings of the 2014 Con- ume 1, 1715–1725. ference on Empirical Methods in Natural Language Pro- Tu, Z.; Liu, Y.; Lu, Z.; Liu, X.; and Li, H. 2016a. Con- cessing (EMNLP), 1724–1734. Doha, Qatar: Association text gates for neural machine translation. arXiv preprint for Computational Linguistics. arXiv:1608.06043. Goodfellow, I.; Pouget-Abadie, J.; Mirza, M.; Xu, B.; Tu, Z.; Lu, Z.; Liu, Y.; Liu, X.; and Li, H. 2016b. Model- Warde-Farley, D.; Ozair, S.; Courville, A.; and Bengio, Y. ing coverage for neural machine translation. In Proceedings 2014. Generative adversarial nets. In Advances in neural of the 54th Annual Meeting of the Association for Computa- information processing systems, 2672–2680. tional Linguistics (Volume 1: Long Papers), volume 1, 76– 85. Gu, J.; Bradbury, J.; Xiong, C.; Li, V. O.; and Socher, R. 2018. Non-autoregressive neural machine translation. In Tu, Z.; Liu, Y.; Shang, L.; Liu, X.; and Li, H. 2017. Neural International Conference on Learning Representations. machine translation with reconstruction. In Proceedings of the Thirty-First AAAI Conference on Artificial Intelligence, Hassan, H.; Aue, A.; Chen, C.; Chowdhary, V.; Clark, J.; February 4-9, 2017, San Francisco, California, USA., 3097– Federmann, C.; Huang, X.; Junczys-Dowmunt, M.; Lewis, 3103. W.; Li, M.; et al. 2018. Achieving human parity on au- tomatic chinese to english news translation. arXiv preprint van den Oord, A.; Li, Y.; Babuschkin, I.; Simonyan, K.; arXiv:1803.05567. Vinyals, O.; Kavukcuoglu, K.; van den Driessche, G.; Lock- hart, E.; Cobo, L.; Stimberg, F.; Casagrande, N.; Grewe, He, D.; Xia, Y.; Qin, T.; Wang, L.; Yu, N.; Liu, T.; and Ma, D.; Noury, S.; Dieleman, S.; Elsen, E.; Kalchbrenner, N.; W.-Y. 2016. Dual learning for machine translation. In Ad- Zen, H.; Graves, A.; King, H.; Walters, T.; Belov, D.; and vances in Neural Information Processing Systems, 820–828. Hassabis, D. 2018. Parallel WaveNet: Fast high-fidelity Hinton, G.; Vinyals, O.; and Dean, J. 2015. Distill- speech synthesis. In Dy, J., and Krause, A., eds., Pro- ing the knowledge in a neural network. arXiv preprint ceedings of the 35th International Conference on Machine arXiv:1503.02531. Learning, volume 80 of Proceedings of Machine Learning Kaiser, L.; Bengio, S.; Roy, A.; Vaswani, A.; Parmar, N.; Research, 3918–3926. Stockholmsm¨assan, Stockholm Swe- Uszkoreit, J.; and Shazeer, N. 2018. Fast decoding in den: PMLR. sequence models using discrete latent variables. In Pro- Vaswani, A.; Shazeer, N.; Parmar, N.; Uszkoreit, J.; Jones, ceedings of the 35th International Conference on Machine L.; Gomez, A. N.; Kaiser, L.; and Polosukhin, I. 2017. At- Learning, ICML 2018, Stockholmsm¨assan, Stockholm, Swe- tention is all you need. arXiv preprint arXiv:1706.03762. den, July 10-15, 2018, 2395–2404. Wu, Y.; Schuster, M.; Chen, Z.; Le, Q. V.; Norouzi, M.; Kim, Y., and Rush, A. M. 2016. Sequence-level knowl- Macherey, W.; Krikun, M.; Cao, Y.; Gao, Q.; Macherey, edge distillation. In Proceedings of the 2016 Conference on K.; Klingner, J.; Shah, A.; Johnson, M.; Liu, X.; Kaiser, Empirical Methods in Natural Language Processing, 1317– Ł.; Gouws, S.; Kato, Y.; Kudo, T.; Kazawa, H.; Stevens, K.; 1327. Kurian, G.; Patil, N.; Wang, W.; Young, C.; Smith, J.; Riesa, Kingma, D. P.; Salimans, T.; Jozefowicz, R.; Chen, X.; J.; Rudnick, A.; Vinyals, O.; Corrado, G.; Hughes, M.; and Sutskever, I.; and Welling, M. 2016. Improved variational Dean, J. 2016. Google’s Neural Machine Translation Sys- inference with inverse autoregressive flow. In Advances in tem: Bridging the Gap between Human and Machine Trans- Neural Information Processing Systems, 4743–4751. lation. ArXiv e-prints. Lee, J.; Mansimov, E.; and Cho, K. 2018. Deterministic non-