Introduction to TensorFlow

1 .TensorFlow: An Introduction

Content largely based on "Hands-On Machine Learning with Scikit-Learn and TensorFlow".

Gene Olafsen

2 .AI, ML Beginnings

LISP: the OG of AI languages. Symbolics and Lisp Machines ran LISP natively.

Python: has been the language/platform of choice for machine learning for many years.

3 .Theano

Theano is an academic project and is the inspiration for TensorFlow. It is a Python library that lets you define, optimize, and evaluate mathematical expressions, especially ones with multi-dimensional arrays (numpy.ndarray). Theano 1.0.0 was released in November 2017, and the project announced that development would stop with that release.

4 .Google Brain

Google Brain is a deep learning artificial intelligence research project at Google. It combines open-ended machine learning research, systems engineering, and Google-scale computing resources.

5 .DistBelief

DistBelief is Google Brain's first machine learning system; TensorFlow is its second-generation machine learning technology. DistBelief was built using proprietary algorithms implementing deep learning neural networks. Backpropagation was later introduced, significantly enhancing neural network performance.

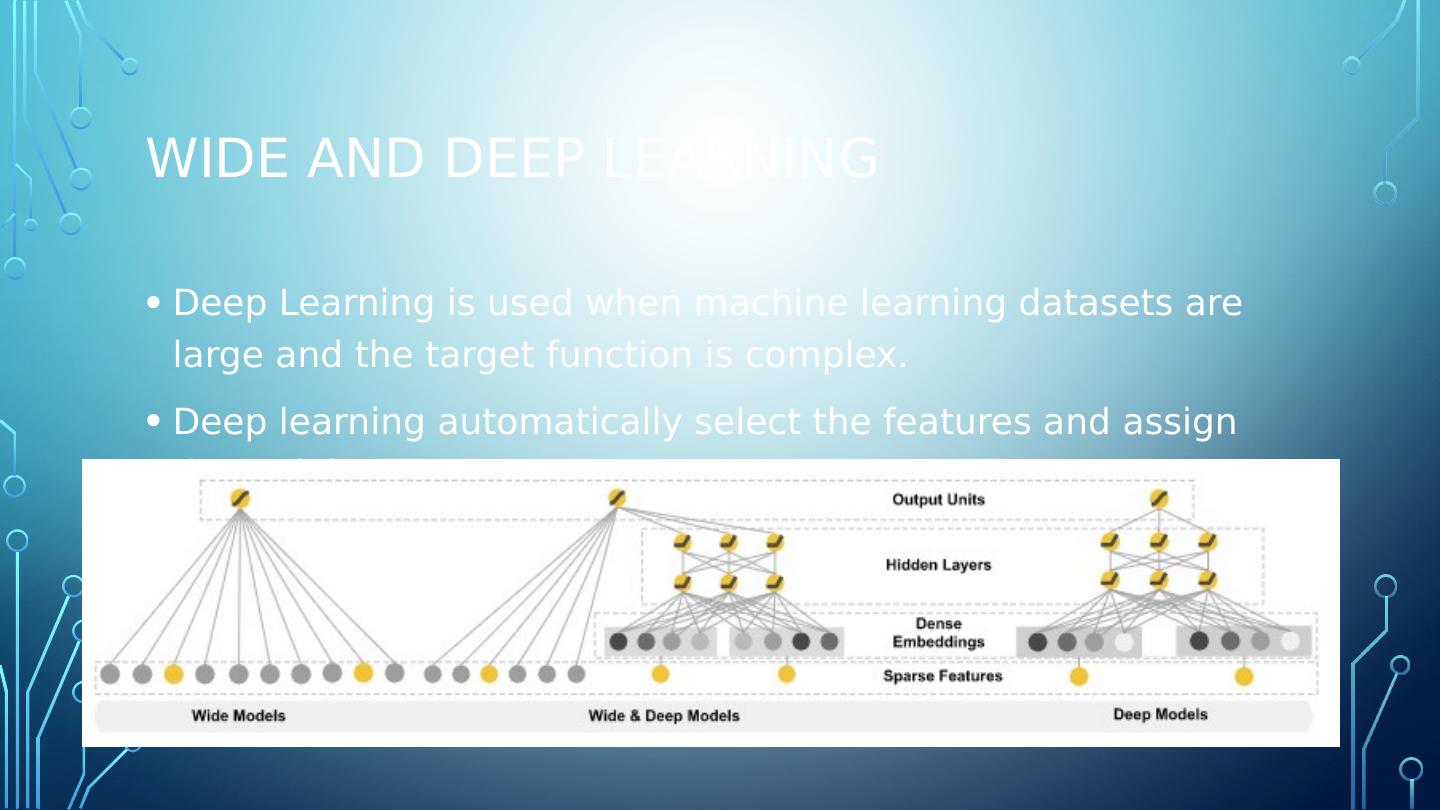

7 .Wide and Deep Learning

Deep learning is used when machine learning datasets are large and the target function is complex. Deep learning automatically selects the features and assigns the weights.

8 .Licensing

TensorFlow is backed by Google and designed to operate on highly distributed systems. Version 1.0.0 was released on February 11, 2017. It is licensed under the Apache License, Version 2.0.

10 .Independent APIs on Top of TensorFlow

Keras: a high-level neural networks API, written in Python and capable of running on top of TensorFlow, CNTK, or Theano. It was developed with a focus on enabling fast experimentation.

Pretty Tensor: provides thin wrappers on Tensors so that you can easily build multi-layer neural networks.

11 .TPU: Tensor Processing Unit

The Tensor Processing Unit (TPU) is an ASIC built specifically for machine learning and tailored for TensorFlow. The TPU is a programmable AI accelerator designed to provide high throughput of low-precision arithmetic. It is used for running models rather than training them.

12 .TPU v2

The second-generation TPUs deliver up to 180 teraflops of performance and, when organized into clusters of 64 TPUs, provide up to 11.5 petaflops.
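
The two figures on this slide are consistent with each other, which a quick calculation confirms. The sketch below assumes that the 180 teraflops figure is a per-TPU peak and that the cluster figure is simply 64 times that peak; the constant names are illustrative only.

```python
# Peak figures quoted on the slide (assumed to be per-TPU and per-cluster peaks).
TFLOPS_PER_TPU = 180
TPUS_PER_CLUSTER = 64

cluster_tflops = TFLOPS_PER_TPU * TPUS_PER_CLUSTER
cluster_pflops = cluster_tflops / 1000  # 1 petaflop = 1,000 teraflops

print(f"{cluster_tflops} teraflops = {cluster_pflops} petaflops")
```

The product is 11,520 teraflops, which matches the quoted "up to 11.5 petaflops" after rounding.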

13 .Packaging

The downloadable TensorFlow package supports both GPU-enabled and non-GPU calculation. Graphics processing units, such as those produced by NVIDIA, excel at the matrix operations on which TensorFlow relies. Note: AMD offers a new line of EPYC-architecture processors which will support TensorFlow. (TensorFlow is not supported on legacy processors.)

14 .GCE: Google Compute Engine

TensorFlow is hosted by GCE. Google Compute Engine (GCE) is the Infrastructure as a Service (IaaS) component of Google Cloud Platform, which is built on the global infrastructure that runs Google's search engine, Gmail, YouTube and other services. Google Compute Engine enables users to launch virtual machines (VMs) on demand.

15 .TensorFlow at Scale

TensorFlow can distribute processing across CPU cores, GPU cores, or multiple devices such as multiple GPUs. It can train a network with millions of parameters on a training set composed of billions of instances with millions of features each. Distributing tensors across multiple processors and/or machines carries a performance cost. TensorFlow addresses the cost of moving tensor data around the system by employing a lossy compression algorithm: 32-bit and 64-bit floating point numbers are reduced to 16-bit resolution.
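
To get a feel for what reducing a value to 16-bit resolution costs, the sketch below round-trips a few numbers through a 16-bit float using only the Python standard library. It does not call TensorFlow and is not TensorFlow's transfer code; it assumes the 16-bit format is IEEE 754 half precision, which the slide does not specify, and `to_float16` is a hypothetical helper name.

```python
import struct

def to_float16(x: float) -> float:
    """Round-trip a Python float through IEEE 754 half precision (assumed format)."""
    return struct.unpack("<e", struct.pack("<e", x))[0]

# Bytes needed per value in each format: half, single, double precision.
sizes = {fmt: struct.calcsize("<" + fmt) for fmt in ("e", "f", "d")}
print("bytes per value:", sizes)

for v in (0.5, 0.1, 3.14159265, 1000.123):
    h = to_float16(v)
    print(f"{v} -> {h} (absolute error {abs(v - h):.2e})")
```

Values such as 0.5 that are exactly representable survive unchanged, while most others pick up a small rounding error in exchange for a payload that is half or a quarter of the original size.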

16 .TensorFlow Lite

In May 2017 Google announced a software stack specifically for Android development. TensorFlow Lite supports a set of core operators, both quantized and float, which have been tuned for mobile platforms. It enables on-device machine learning inference with low latency and a small binary size. TensorFlow Lite also supports hardware acceleration with the Android Neural Networks API.

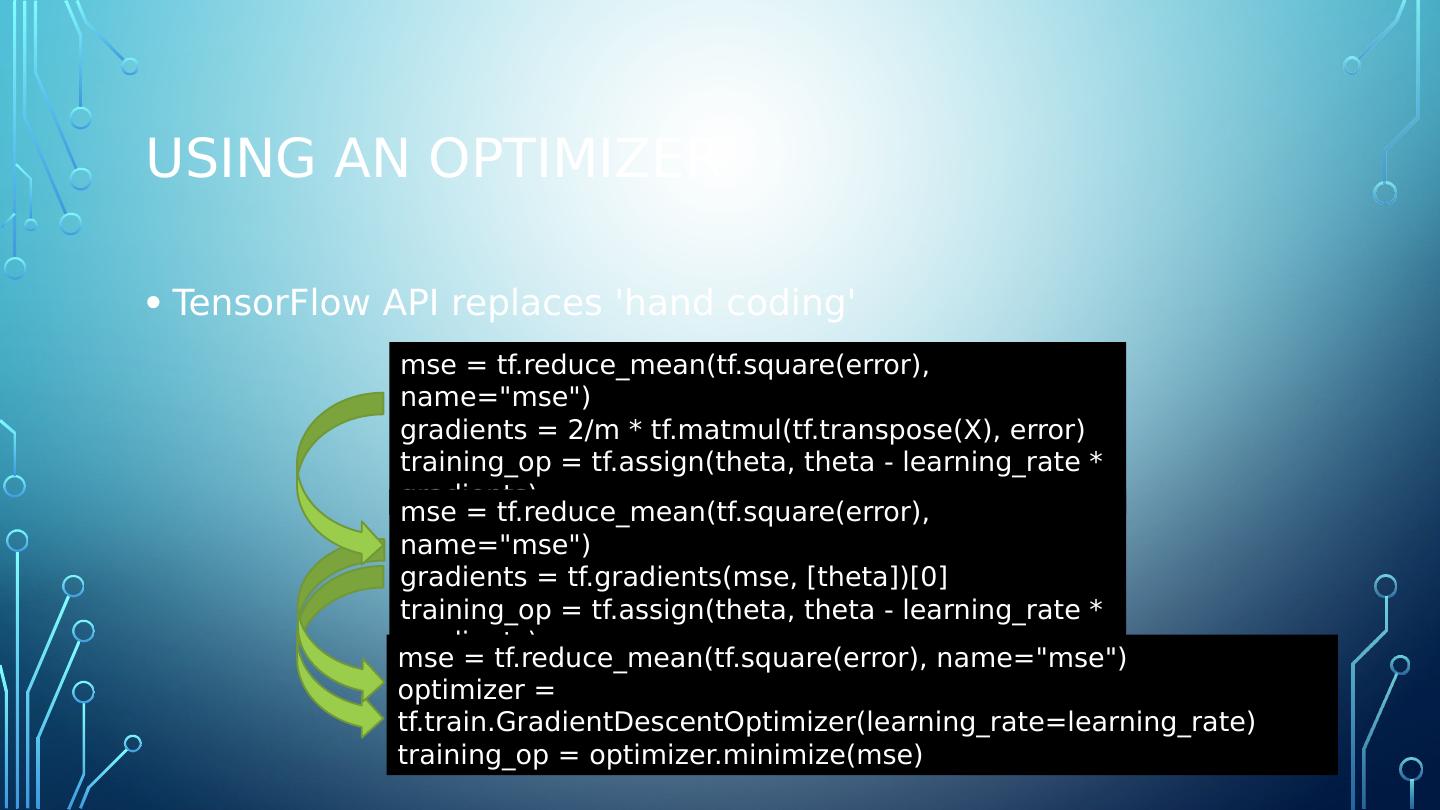

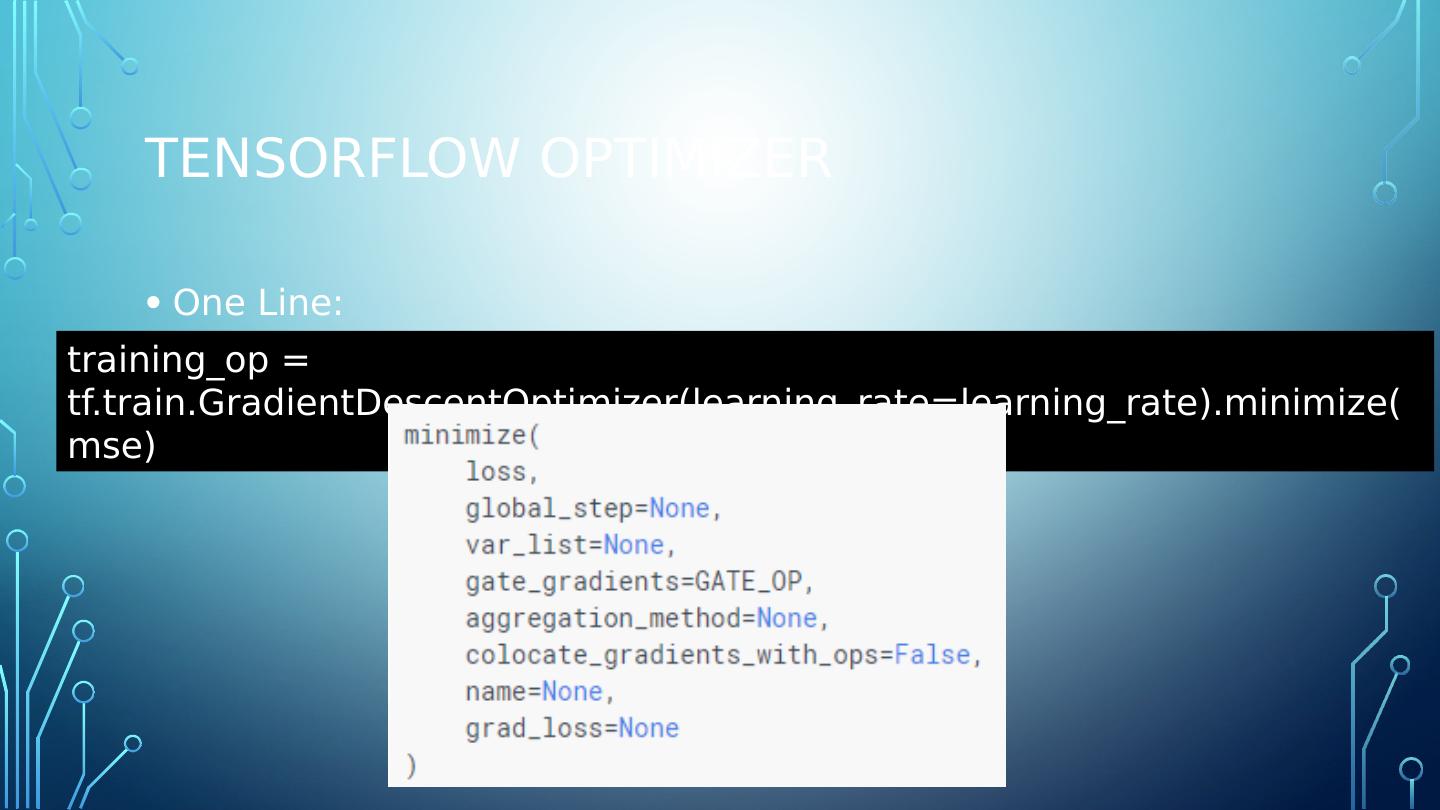

18 .TensorFlow Advantage

TF-Learn is the TensorFlow Python API that is compatible with Scikit-Learn. The TF-slim API simplifies building, training and evaluating neural networks. Automatic differentiation (autodiff).
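
Automatic differentiation computes exact derivatives by applying the chain rule to each elementary operation as the program runs, instead of using symbolic algebra or finite differences. The sketch below is a minimal illustration of the idea in plain Python using forward-mode dual numbers. This is a deliberately simpler technique than the reverse-mode differentiation TensorFlow performs over its computation graph, and the names `Dual`, `_lift`, and `derivative` are hypothetical, not part of any TensorFlow API.

```python
from dataclasses import dataclass

@dataclass
class Dual:
    """A value paired with its derivative with respect to the input."""
    value: float
    deriv: float

    def __add__(self, other):
        other = _lift(other)
        # Sum rule: (u + v)' = u' + v'
        return Dual(self.value + other.value, self.deriv + other.deriv)
    __radd__ = __add__

    def __mul__(self, other):
        other = _lift(other)
        # Product rule: (u * v)' = u' * v + u * v'
        return Dual(self.value * other.value,
                    self.deriv * other.value + self.value * other.deriv)
    __rmul__ = __mul__

def _lift(x):
    """Treat plain numbers as constants, whose derivative is zero."""
    return x if isinstance(x, Dual) else Dual(float(x), 0.0)

def derivative(f, x):
    """Evaluate f'(x) by seeding the input with derivative 1."""
    return f(Dual(float(x), 1.0)).deriv

def f(x):
    return 3 * x * x + 2 * x + 1  # analytically, f'(x) = 6x + 2

print(derivative(f, 4.0))
```

Because `f` is written with ordinary `+` and `*`, no separate derivative function has to be coded by hand; this is the convenience that autodiff provides when training neural networks.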

19 .Namespace

The TensorFlow API is vast, composed of over 2,500 methods/objects/symbols. The contrib namespace/module contains volatile or experimental code. That doesn't mean it doesn't work, but expect change: parameters, hierarchy, etc.

21 .CUDA

CUDA® is a parallel computing platform and programming model developed by NVIDIA for general computing on graphical processing units (GPUs). With CUDA, developers are able to dramatically speed up computing applications by harnessing the power of GPUs.

The NVIDIA CUDA® Deep Neural Network library (cuDNN) is a GPU-accelerated library of primitives for deep neural networks. cuDNN provides highly tuned implementations for standard routines such as forward and backward convolution, pooling, normalization, and activation layers.

Text from NVIDIA website.

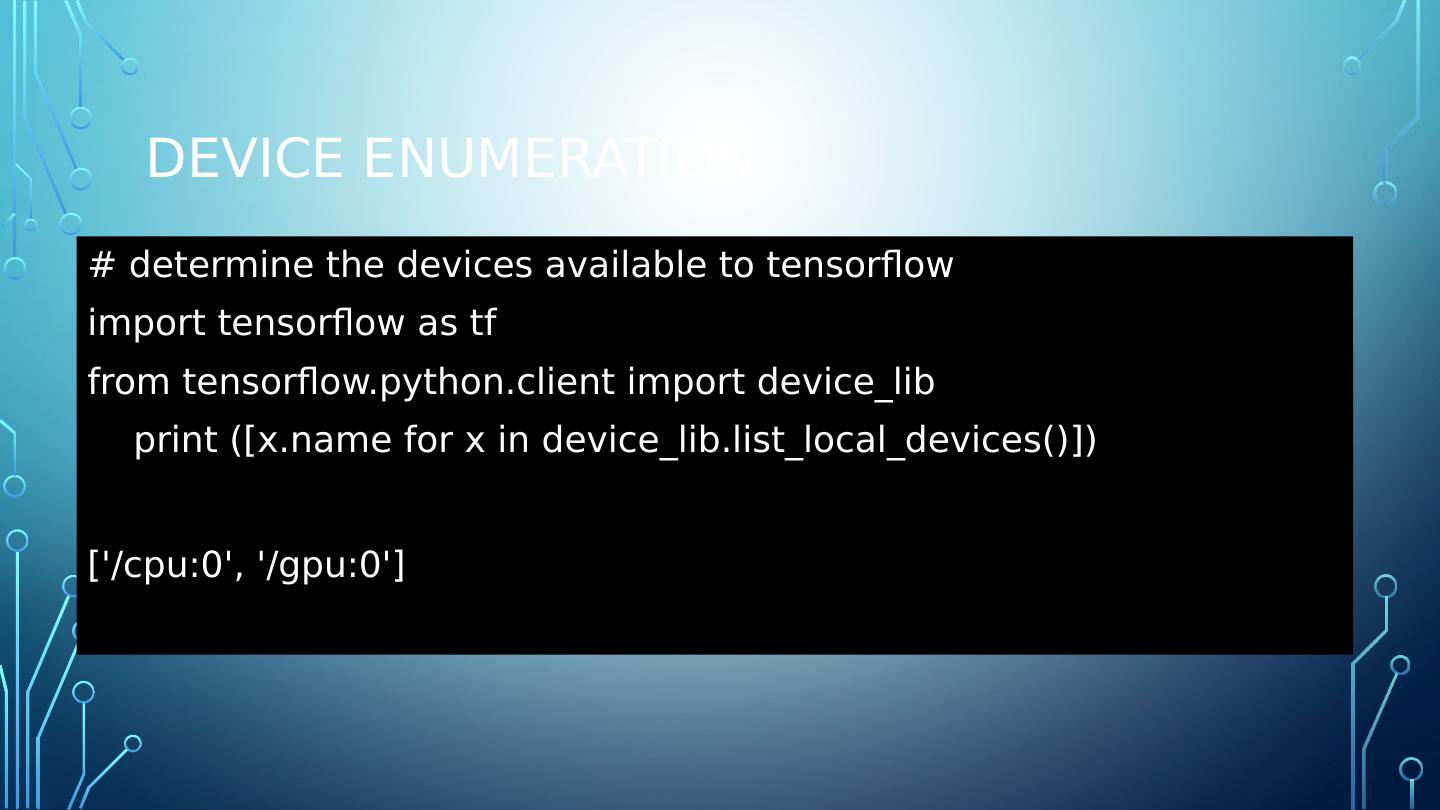

22 .Device Enumeration

    # determine the devices available to tensorflow
    import tensorflow as tf
    from tensorflow.python.client import device_lib
    print([x.name for x in device_lib.list_local_devices()])
    # example output: [/cpu:0, /gpu:0]

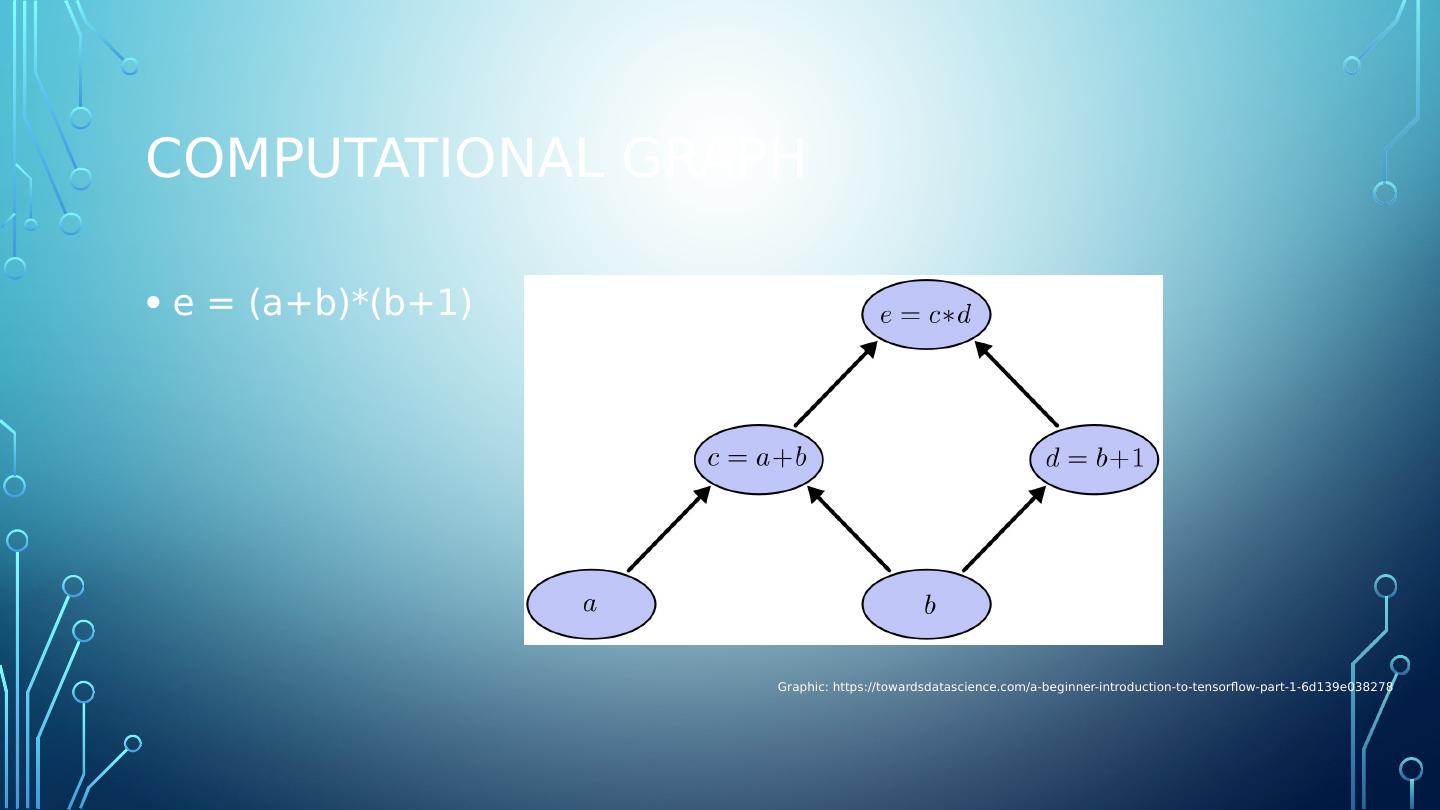

23 .Tensor

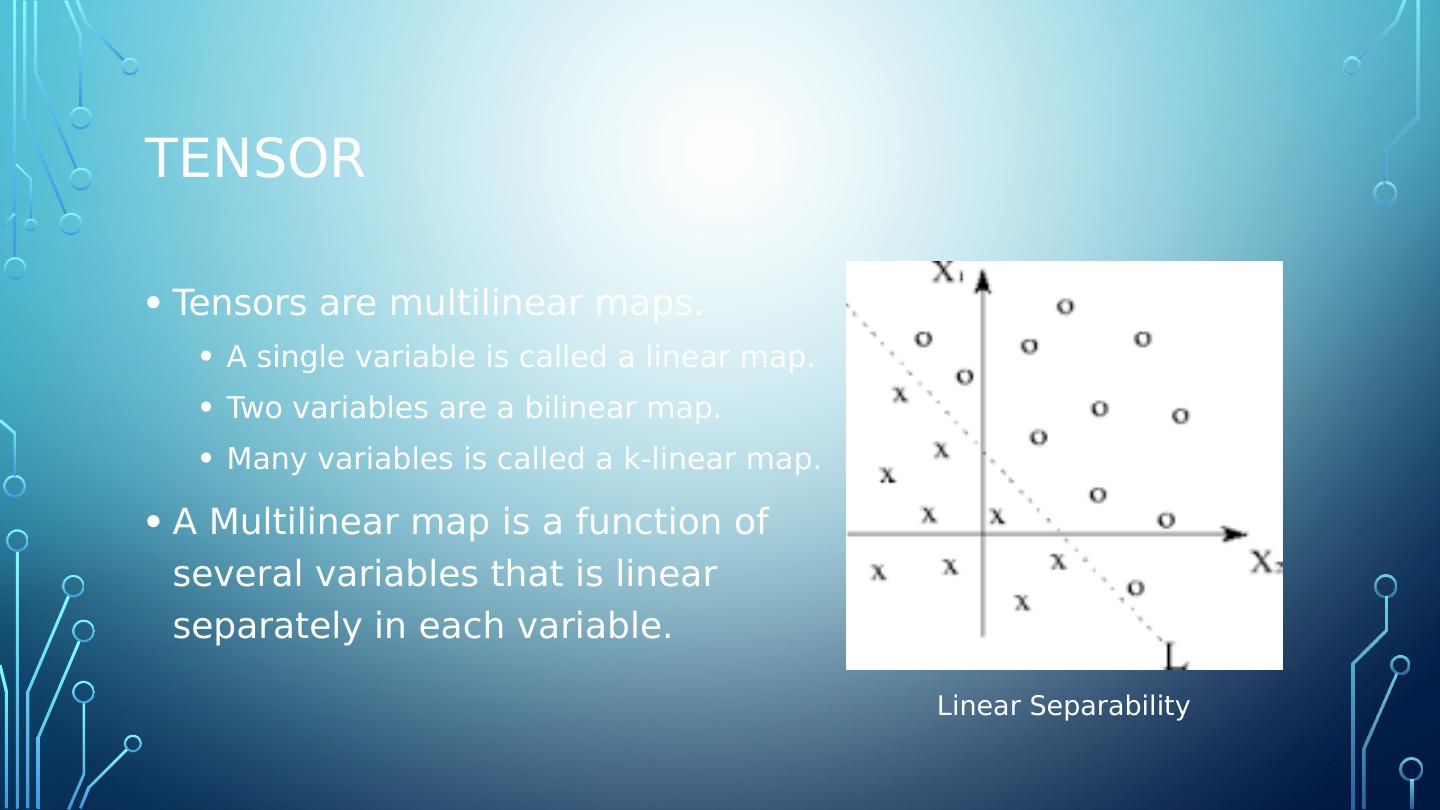

24 .Tensor

Tensors are multilinear maps. A multilinear map is a function of several variables that is linear separately in each variable. With a single variable it is called a linear map, with two variables a bilinear map, and with k variables a k-linear map.

Linear Separability
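
"Linear separately in each variable" can be checked numerically on a familiar bilinear map, the dot product of two vectors. The sketch below uses plain Python lists with integer entries so the comparisons are exact; the helpers `dot`, `add`, and `scale` are illustrative names, not library functions.

```python
def dot(u, v):
    """Dot product: a map of two vector arguments."""
    return sum(a * b for a, b in zip(u, v))

def add(u, v):
    return [a + b for a, b in zip(u, v)]

def scale(c, u):
    return [c * a for a in u]

u, v, w = [1, 2, 3], [4, 5, 6], [7, 8, 9]
c = 2

# Linear in the first argument while the second is held fixed.
additive_first = dot(add(u, v), w) == dot(u, w) + dot(v, w)
homogeneous_first = dot(scale(c, u), w) == c * dot(u, w)

# Linear in the second argument while the first is held fixed.
additive_second = dot(w, add(u, v)) == dot(w, u) + dot(w, v)
homogeneous_second = dot(w, scale(c, u)) == c * dot(w, u)

# Not linear in both arguments jointly: scaling both scales the result by c * c.
joint_scaling = dot(scale(c, u), scale(c, w)) == c * c * dot(u, w)

print(additive_first, homogeneous_first, additive_second, homogeneous_second)
print(joint_scaling)
```

The last check shows why "multilinear" is a weaker condition than "linear": the map respects sums and scalings one argument at a time, not in all arguments at once.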

25 .Tensor Visual

A tensor is an N-dimensional vector.

https://towardsdatascience.com/a-beginner-introduction-to-tensorflow-part-1-6d139e038278

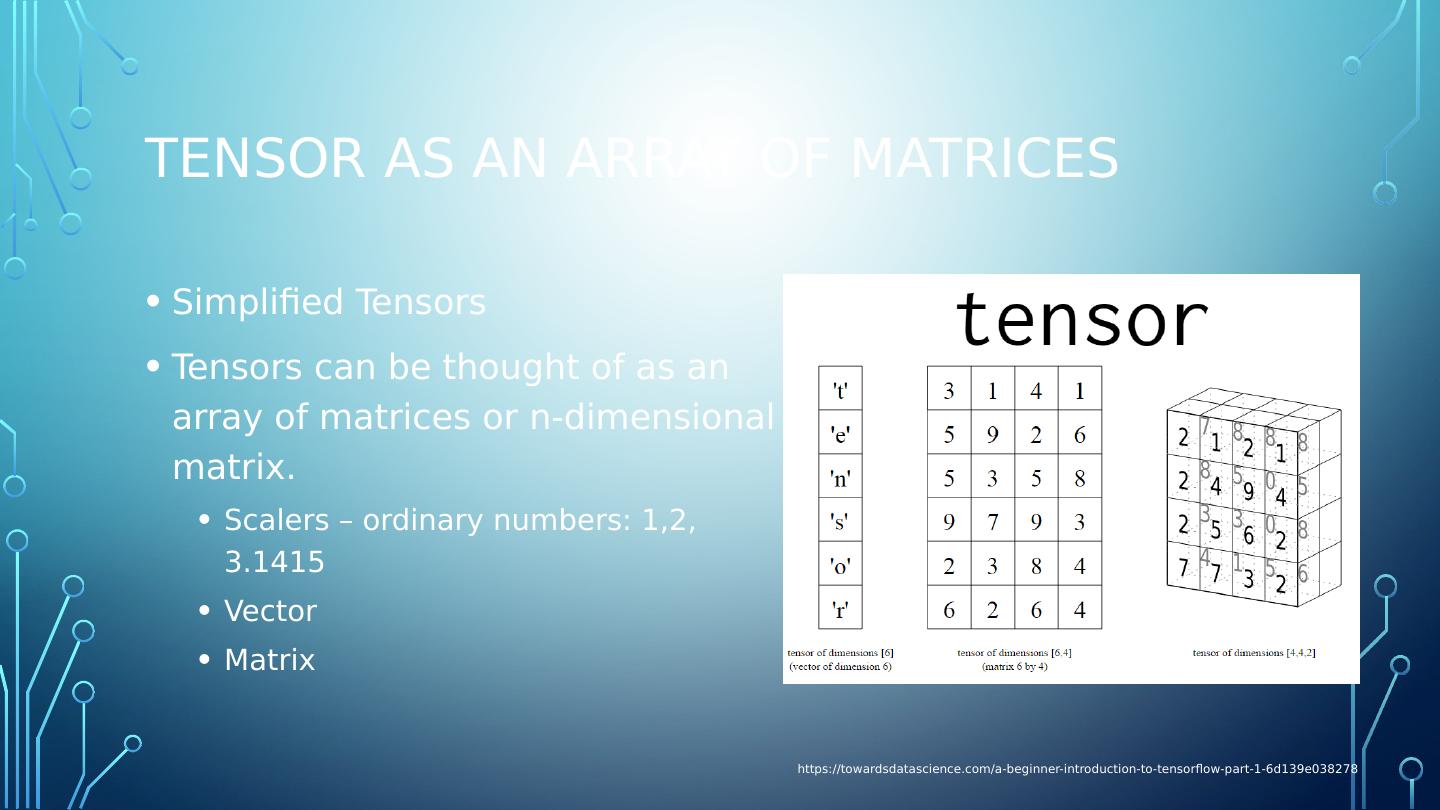

26 .Tensor as an Array of Matrices

Simplified tensors: tensors can be thought of as an array of matrices, or as an n-dimensional matrix. Scalars (ordinary numbers: 1, 2, 3.1415), vector, matrix.

https://towardsdatascience.com/a-beginner-introduction-to-tensorflow-part-1-6d139e038278

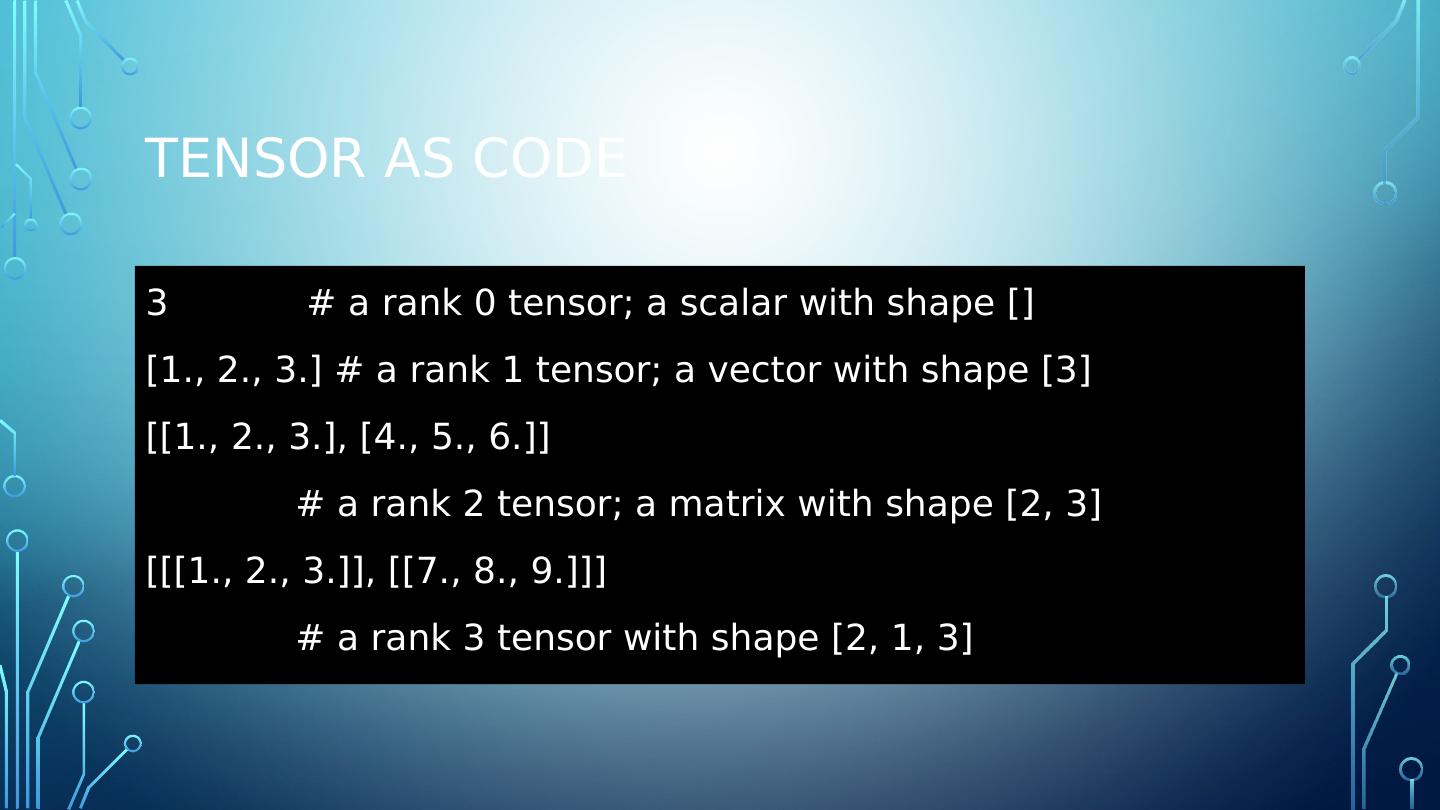

27 .Tensor as Code

    3 # a rank 0 tensor; a scalar with shape []
    [1., 2., 3.] # a rank 1 tensor; a vector with shape [3]
    [[1., 2., 3.], [4., 5., 6.]] # a rank 2 tensor; a matrix with shape [2, 3]
    [[[1., 2., 3.]], [[7., 8., 9.]]] # a rank 3 tensor with shape [2, 1, 3]
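
The rank and shape annotations on this slide can be recovered mechanically from the nesting of the lists. The following is a minimal sketch in plain Python, assuming regular (non-ragged) nesting; `shape` is a hypothetical helper, not a TensorFlow function.

```python
def shape(t):
    """Return the shape of a regularly nested list; a bare number has shape []."""
    dims = []
    while isinstance(t, list):
        dims.append(len(t))
        if not t:  # an empty list has no first element to descend into
            break
        t = t[0]
    return dims

examples = [
    3,
    [1., 2., 3.],
    [[1., 2., 3.], [4., 5., 6.]],
    [[[1., 2., 3.]], [[7., 8., 9.]]],
]

for t in examples:
    s = shape(t)
    # The rank is the number of dimensions, i.e. the length of the shape.
    print(f"rank {len(s)}, shape {s}")
```

The rank equals the nesting depth, and each entry of the shape is the list length at that depth.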

28 .Tensors... in TensorFlow

In TensorFlow, there are three primary types of tensors: tf.Variable, tf.constant, and tf.placeholder.

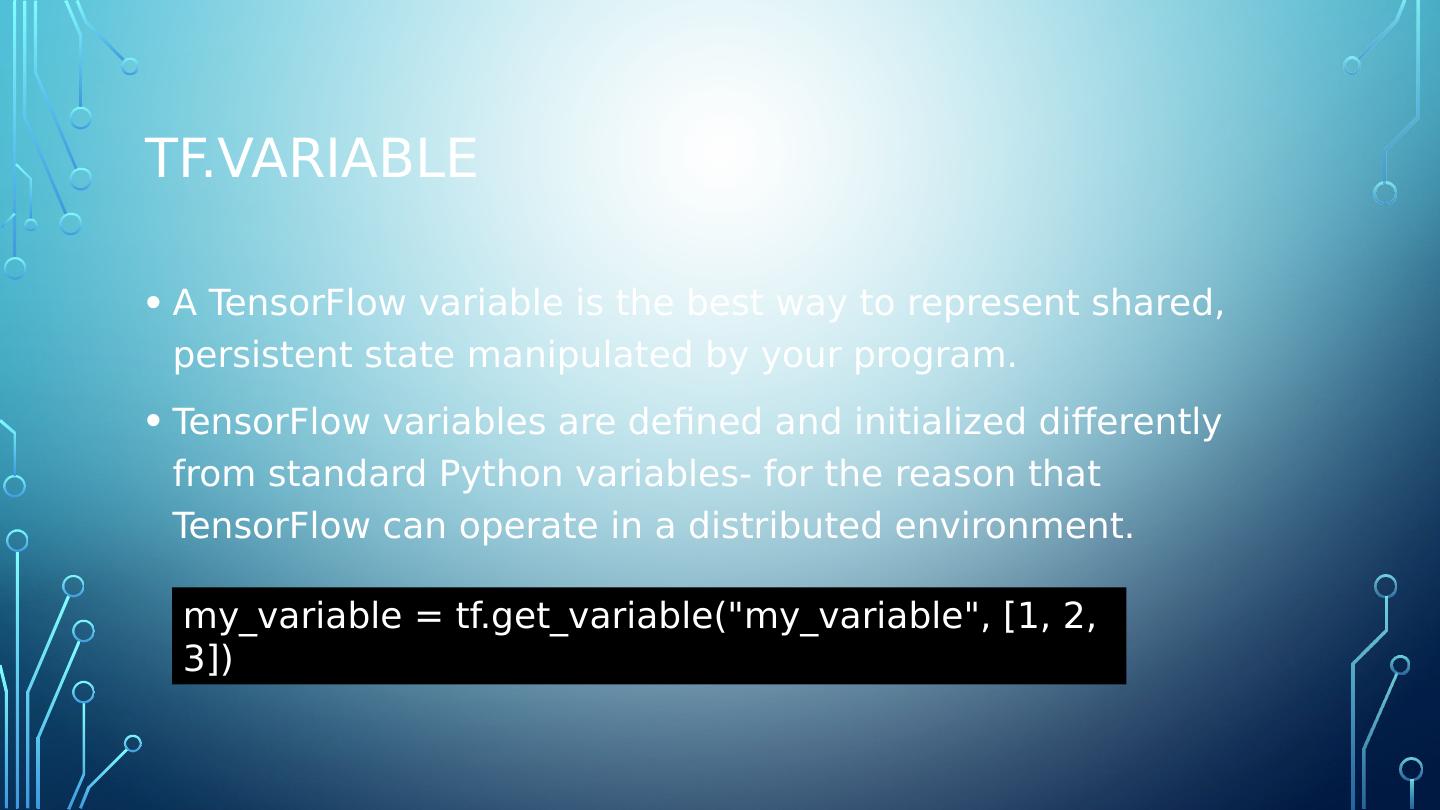

29 .tf.Variable

A TensorFlow variable is the best way to represent shared, persistent state manipulated by your program. TensorFlow variables are defined and initialized differently from standard Python variables because TensorFlow can operate in a distributed environment.

    my_variable = tf.get_variable("my_variable", [1, 2, 3])