Deep learning challenges

1. Deep learning challenges: Deep learning is a statistical technique... (Gene Olafsen)

2. Deep learning works well... "Deep learning systems work less well when there are limited amounts of training data available, or when the test set differs importantly from the training set, or when the space of examples is broad and filled with novelty." Source: https://arxiv.org/ftp/arxiv/papers/1801/1801.00631.pdf

3. Abstraction/generalization: "Human beings can learn abstract relationships in a few trials."

4. Requires too much data: "Deep learning currently lacks a mechanism for learning abstractions through explicit, verbal definition, and works best when there are thousands, millions or even billions of training examples."

5. "Deep" is "shallow": "Deep" refers to the number of so-called hidden layers, not to the capacity of a neural network to comprehend abstract concepts. In this sense, depth is an architectural property.

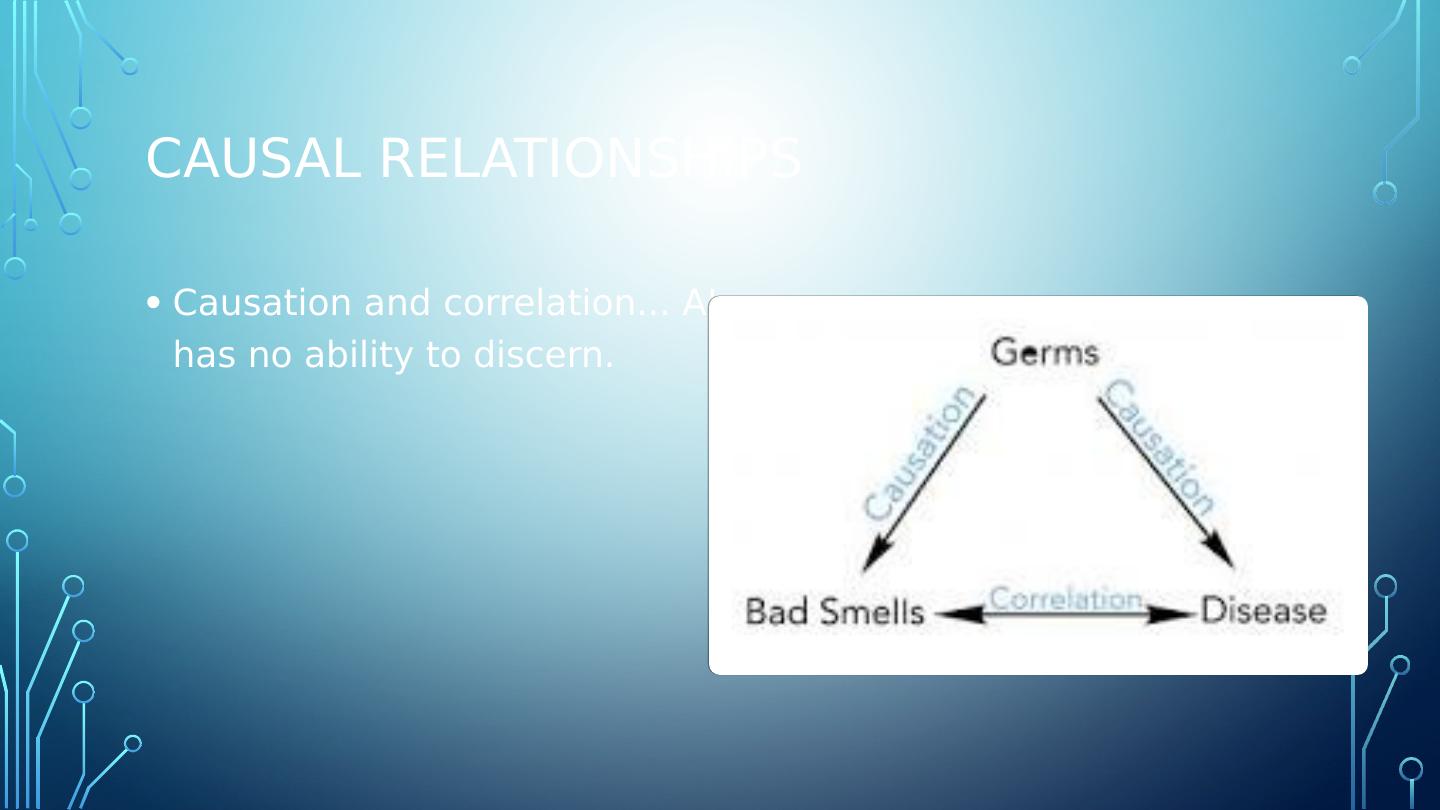
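To make the point concrete, here is a minimal pure-Python sketch in which "depth" is literally the number of hidden layers in a list of layer sizes. The helper names `make_mlp`, `forward`, `hidden_depth` and `parameter_count` are hypothetical and do not come from the deck. Adding layers changes the architecture and the parameter count, but nothing in the code represents an abstract concept.

```python
import random


def make_mlp(layer_sizes, seed=0):
    """Random weights for a fully connected net.

    layer_sizes = [n_inputs, hidden_1, ..., hidden_k, n_outputs]
    """
    rng = random.Random(seed)
    layers = []
    for n_in, n_out in zip(layer_sizes, layer_sizes[1:]):
        weights = [[rng.gauss(0.0, 1.0) for _ in range(n_in)] for _ in range(n_out)]
        biases = [0.0] * n_out
        layers.append((weights, biases))
    return layers


def forward(layers, x):
    """Affine map per layer, ReLU on every layer except the output layer."""
    for i, (weights, biases) in enumerate(layers):
        x = [sum(w * xi for w, xi in zip(row, x)) + b for row, b in zip(weights, biases)]
        if i < len(layers) - 1:
            x = [max(0.0, v) for v in x]
    return x


def hidden_depth(layer_sizes):
    """'Depth' in the deep-learning sense: the number of hidden layers."""
    return len(layer_sizes) - 2


def parameter_count(layer_sizes):
    """Weights plus biases across all layers."""
    return sum(n_in * n_out + n_out for n_in, n_out in zip(layer_sizes, layer_sizes[1:]))


shallow = [4, 16, 2]
deep = [4, 16, 16, 16, 2]
print(hidden_depth(shallow), parameter_count(shallow))      # 1 114
print(hidden_depth(deep), parameter_count(deep))            # 3 658
print(len(forward(make_mlp(deep), [1.0, 0.5, -0.5, 2.0])))  # 2
```

The "deep" network differs from the "shallow" one only in how many times the same affine-plus-ReLU step is repeated.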

6. Causal relationships: Causation versus correlation. AI has no ability to discern the difference between the two.
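A small simulation shows why correlation alone cannot settle causation. The setup is hypothetical and not taken from the deck: a hidden variable Z drives both X and Y, so a model fit on observational data finds X a strong predictor of Y, yet setting X directly (an intervention) leaves Y unrelated to it. The observational data by themselves look the same as data from a world where X does cause Y.

```python
import random


def pearson(xs, ys):
    """Sample Pearson correlation coefficient."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    vx = sum((x - mx) ** 2 for x in xs)
    vy = sum((y - my) ** 2 for y in ys)
    return cov / (vx * vy) ** 0.5


def observational(n, rng):
    """Hidden cause Z drives both X and Y; X has no effect on Y at all."""
    xs, ys = [], []
    for _ in range(n):
        z = rng.gauss(0.0, 1.0)
        xs.append(z + rng.gauss(0.0, 0.3))
        ys.append(2.0 * z + rng.gauss(0.0, 0.3))
    return xs, ys


def interventional(n, rng):
    """Same system, but X is set at random (an intervention), cutting its link to Z."""
    xs, ys = [], []
    for _ in range(n):
        z = rng.gauss(0.0, 1.0)
        xs.append(rng.gauss(0.0, 1.0))
        ys.append(2.0 * z + rng.gauss(0.0, 0.3))
    return xs, ys


rng = random.Random(42)
r_observed = pearson(*observational(5000, rng))
r_intervened = pearson(*interventional(5000, rng))
print(f"corr(X, Y) observed:    {r_observed:.2f}")    # strong
print(f"corr(X, Y) after do(X): {r_intervened:.2f}")  # near zero
```

A purely correlational learner trained on the first dataset would happily use X to predict Y; only the intervention reveals that X does nothing.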

7. Hierarchical structures have no representation: Deep learning learns correlations between sets of features that are themselves “flat” or nonhierarchical, as if in a simple, unstructured list, with every feature on equal footing.
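The slide's claim concerns learned representations in general. The sketch below uses the crudest possible flat encoding, a bag of words, only to illustrate what "every feature on equal footing" can throw away; many networks do consume word order, so treat it as an illustration of the flat-list idea rather than a model of any particular architecture. The function name `flat_features` and the hand-written trees are hypothetical.

```python
from collections import Counter


def flat_features(sentence):
    """Bag of words: one equal-weight feature per token, no order, no structure."""
    return Counter(sentence.lower().split())


a = flat_features("The dog bit the man")
b = flat_features("The man bit the dog")
print(a == b)  # True: the flat encoding cannot tell who bit whom

# A hand-written hierarchical (predicate-argument) encoding keeps the roles apart.
tree_a = ("bit", ("agent", "dog"), ("patient", "man"))
tree_b = ("bit", ("agent", "man"), ("patient", "dog"))
print(tree_a == tree_b)  # False
```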

8. Nuance: "[...] (Bowman, Angeli, Potts, & Manning, 2015; Williams, Nangia, & Bowman, 2017) have taken some important steps in this direction, there is, at present, no deep learning system that can draw open-ended inferences based on real world knowledge with anything like human-level accuracy".

9. Opaque: Deep learning systems have millions or even billions of parameters. Most observers would acknowledge that neural networks as a whole remain something of a black box. What does that mean for mission-critical adoption? How do you quantify system bias?

10. Knowledge silos: With the exception of "transfer learning", in which knowledge is sourced from an existing deep learning system, "It is also not straightforward in general how to integrate prior knowledge into a deep learning system": mathematical concepts, equations describing the physical world, and so on. There is no commonsense reasoning.

11. ML models expect stability: Per-instance predictions achieve acceptable outcomes only if the rules of the game have not changed since the model was trained.
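A toy regression makes this dependence on stable rules visible. The sketch below (plain Python; the rules, noise level and function names are all hypothetical) fits a line while the data follow one rule, then scores the same model on data drawn after the rule has changed for part of the input range. Error on data from the unchanged rule stays near the noise floor; once the rule changes, error rises sharply even though the model itself is untouched.

```python
import random


def fit_line(xs, ys):
    """Ordinary least squares for y = slope * x + intercept."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    slope = sum((x - mx) * (y - my) for x, y in zip(xs, ys)) / sum((x - mx) ** 2 for x in xs)
    return slope, my - slope * mx


def mse(model, xs, ys):
    """Mean squared error of a fitted line on a dataset."""
    slope, intercept = model
    return sum((slope * x + intercept - y) ** 2 for x, y in zip(xs, ys)) / len(xs)


def sample(rule, n, rng):
    """Draw n noisy (x, y) pairs from the given rule with x in [0, 10]."""
    xs = [rng.uniform(0.0, 10.0) for _ in range(n)]
    ys = [rule(x) + rng.gauss(0.0, 0.5) for x in xs]
    return xs, ys


def old_rule(x):
    return 2.0 * x + 1.0


def new_rule(x):
    # Hypothetical change after deployment: above x = 5 the trend reverses.
    return 2.0 * x + 1.0 if x < 5.0 else 21.0 - 2.0 * x


rng = random.Random(0)
model = fit_line(*sample(old_rule, 1000, rng))
err_unchanged = mse(model, *sample(old_rule, 1000, rng))
err_changed = mse(model, *sample(new_rule, 1000, rng))
print(f"MSE, same rules:    {err_unchanged:.2f}")
print(f"MSE, rules changed: {err_changed:.2f}")
```

Nothing inside the fitted model signals that the world has changed; only fresh labelled data exposes it.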

12. Vulnerability: Deep learning systems provide approximations of answers. There are numerous examples of (visual) inputs to deep learning systems, altered in ways that range from subtle to extreme, on which classification fails in egregious ways.
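One well-known mechanism behind such failures can be shown without a deep network at all. The sketch below applies a fast-gradient-sign-style perturbation to a hypothetical linear classifier with random weights (all names, sizes and seeds are assumptions): every feature moves by the same amount epsilon, each in the direction that lowers the score. Because the effect accumulates over 1,000 dimensions, a per-feature change that is small relative to the features' unit scale is enough to flip the predicted class.

```python
import random


def sign(v):
    """-1, 0 or +1."""
    return (v > 0) - (v < 0)


def score(w, x):
    """Linear decision score; predict class 1 when it is positive."""
    return sum(wi * xi for wi, xi in zip(w, x))


def fgsm_linear(w, x, epsilon):
    """Fast-gradient-sign step against a linear scorer: shift every feature by
    epsilon in whichever direction lowers w . x (the gradient of the score is w)."""
    return [xi - epsilon * sign(wi) for wi, xi in zip(w, x)]


rng = random.Random(7)
dim = 1000
w = [rng.gauss(0.0, 1.0) for _ in range(dim)]  # hypothetical trained weights
x = [rng.gauss(0.0, 1.0) for _ in range(dim)]  # hypothetical clean input, features ~ N(0, 1)
if score(w, x) < 0:
    x = [-xi for xi in x]                       # make the clean input a class-1 example

# A shift of epsilon per feature lowers the score by epsilon * sum(|w_i|);
# use 1.5x the budget that would exactly cancel the clean score.
l1 = sum(abs(wi) for wi in w)
epsilon = 1.5 * score(w, x) / l1
x_adv = fgsm_linear(w, x, epsilon)

print(f"per-feature change: {epsilon:.3f}")
print(f"clean score {score(w, x):.1f} -> adversarial score {score(w, x_adv):.1f}")
```

Deep networks are not linear, but this accumulation of many tiny, aligned changes is one commonly cited explanation for why imperceptible image perturbations can fool them.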

13. Continuity and repeatability: As a team of authors at Google put it in 2014, in the title of an important, and as yet unanswered, essay (Sculley, Phillips, Ebner, Chaudhary, & Young, 2014), machine learning is “the high-interest credit card of technical debt”, meaning that it is comparatively easy to make systems that work in some limited set of circumstances (short-term gain), but quite difficult to guarantee that they will work in alternative circumstances with novel data that may not resemble previous training data (long-term debt, particularly if one system is used as an element in another larger system).

14. Continuity and repeatability: Deep learning fails to support incremental development; it is all or nothing. Perhaps finally achieving training success, after successive failures, leads to inappropriate adoption of models. Think of the enterprise hierarchy: engineers need to show success, managers need to deliver successful projects...
