- 快召唤伙伴们来围观吧

- 微博 QQ QQ空间 贴吧

- 文档嵌入链接

- 复制

- 微信扫一扫分享

- 已成功复制到剪贴板

2_Intro_to_Concurrency

展开查看详情

1 .Intro to Concurrency 1 Lecture 2: Introduction to Concurrency Learning Goals Describe the differences between single-tasking and multi-tasking Identify challenges introduced by adding concurrency Describe an example of a multitasking system (perhaps with a diagram) Identify whether or not a program will benefit from concurrency, either in terms of efficiency or scalability Describe various methods of implementing multitasking, and list some advantages/disadvantages of each Define and describe time-slicing and how it differs from pseudo-multitasking © Paul Davies, C. Antonio Sanchez. Not to be copied, used, or revised without explicit written permission from the copyright owner.

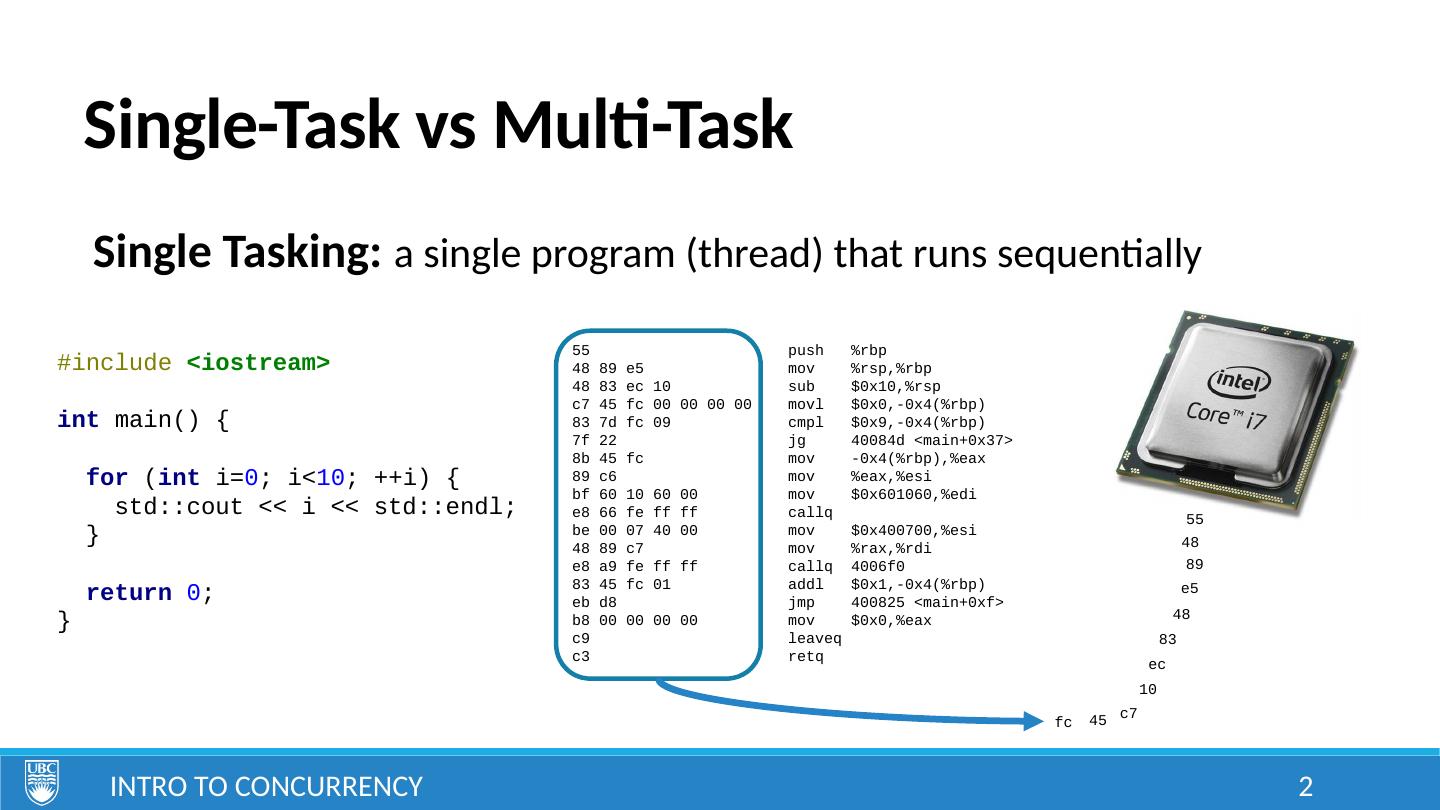

2 .Single-Task vs Multi-Task Intro to Concurrency 2 Single Tasking: a single program (thread) that runs sequentially #include <iostream> int main() { for ( int i= 0 ; i< 10 ; ++i) { std::cout << i << std::endl; } return 0 ; } 55 push %rbp 48 89 e5 mov %rsp,%rbp 48 83 ec 10 sub $0x10,%rsp c7 45 fc 00 00 00 00 movl $0x0,-0x4(%rbp) 83 7d fc 09 cmpl $0x9,-0x4(%rbp) 7f 22 jg 40084d <main+0x37> 8b 45 fc mov -0x4(%rbp),%eax 89 c6 mov %eax,%esi bf 60 10 60 00 mov $0x601060,%edi e8 66 fe ff ff callq be 00 07 40 00 mov $0x400700,%esi 48 89 c7 mov %rax,%rdi e8 a9 fe ff ff callq 4006f0 83 45 fc 01 addl $0x1,-0x4(%rbp) eb d8 jmp 400825 <main+0xf> b8 00 00 00 00 mov $0x0,%eax c9 leaveq c3 retq 55 48 89 e5 48 83 ec 10 c7 45 fc

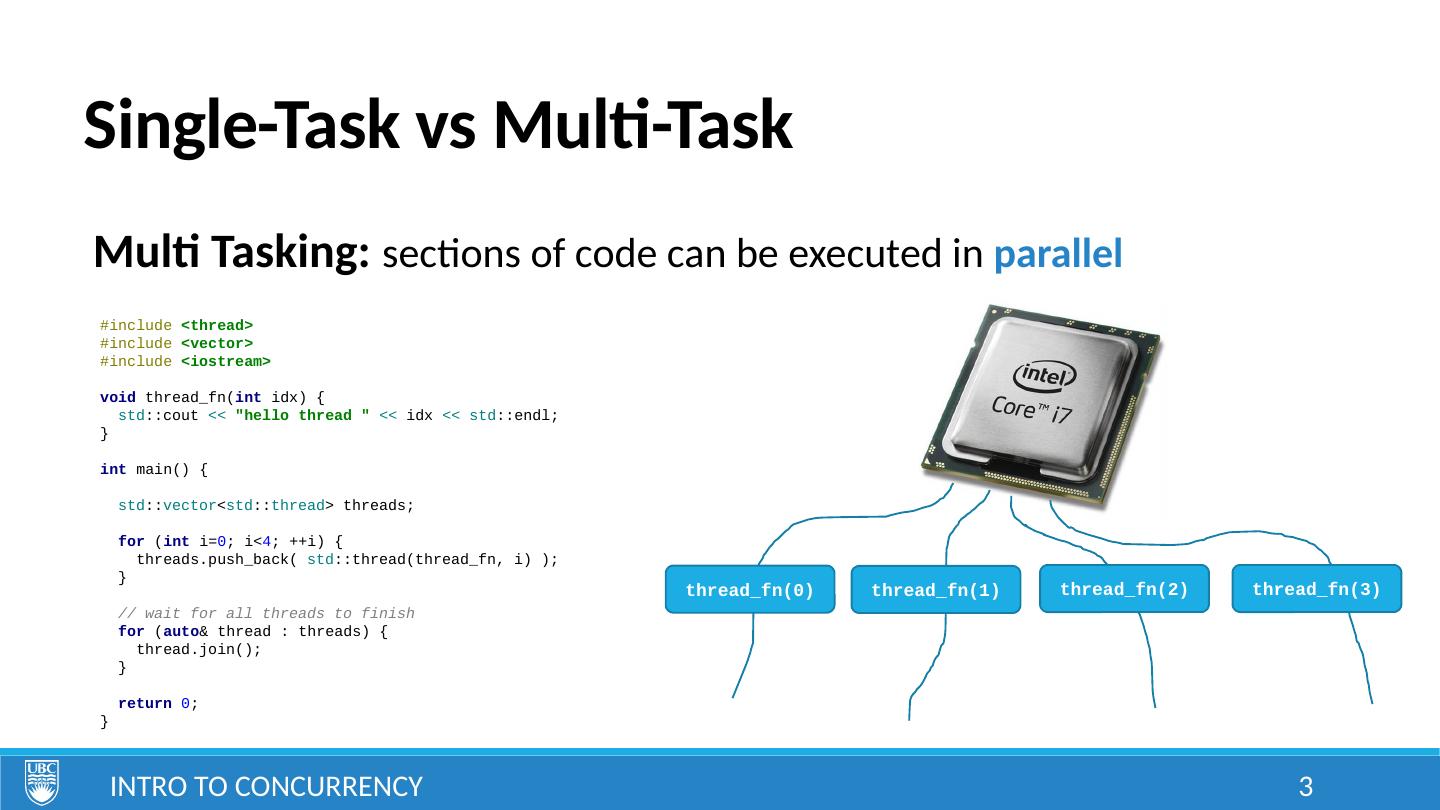

3 .Single-Task vs Multi-Task Intro to Concurrency 3 Multi Tasking: sections of code can be executed in parallel #include <thread> #include <vector> #include <iostream> void thread_fn( int idx) { std ::cout << "hello thread " << idx << std ::endl; } int main() { std :: vector < std :: thread > threads; for ( int i= 0 ; i< 4 ; ++i) { threads.push_back( std ::thread(thread_fn, i) ); } // wait for all threads to finish for ( auto & thread : threads) { thread.join(); } return 0 ; } thread_fn(0) thread_fn(1) thread_fn(2) thread_fn(3)

4 .Multi-Tasking Intro to Concurrency 4 Advantages: greater flexibility – system can be distributed across several servers greater scalability – a single program can be instantiated as many times as needed Disadvantages: challenges in decomposing system into appropriate concurrent units challenges in communication between parallel units challenges in synchronization between parallel units challenges in debugging and testing

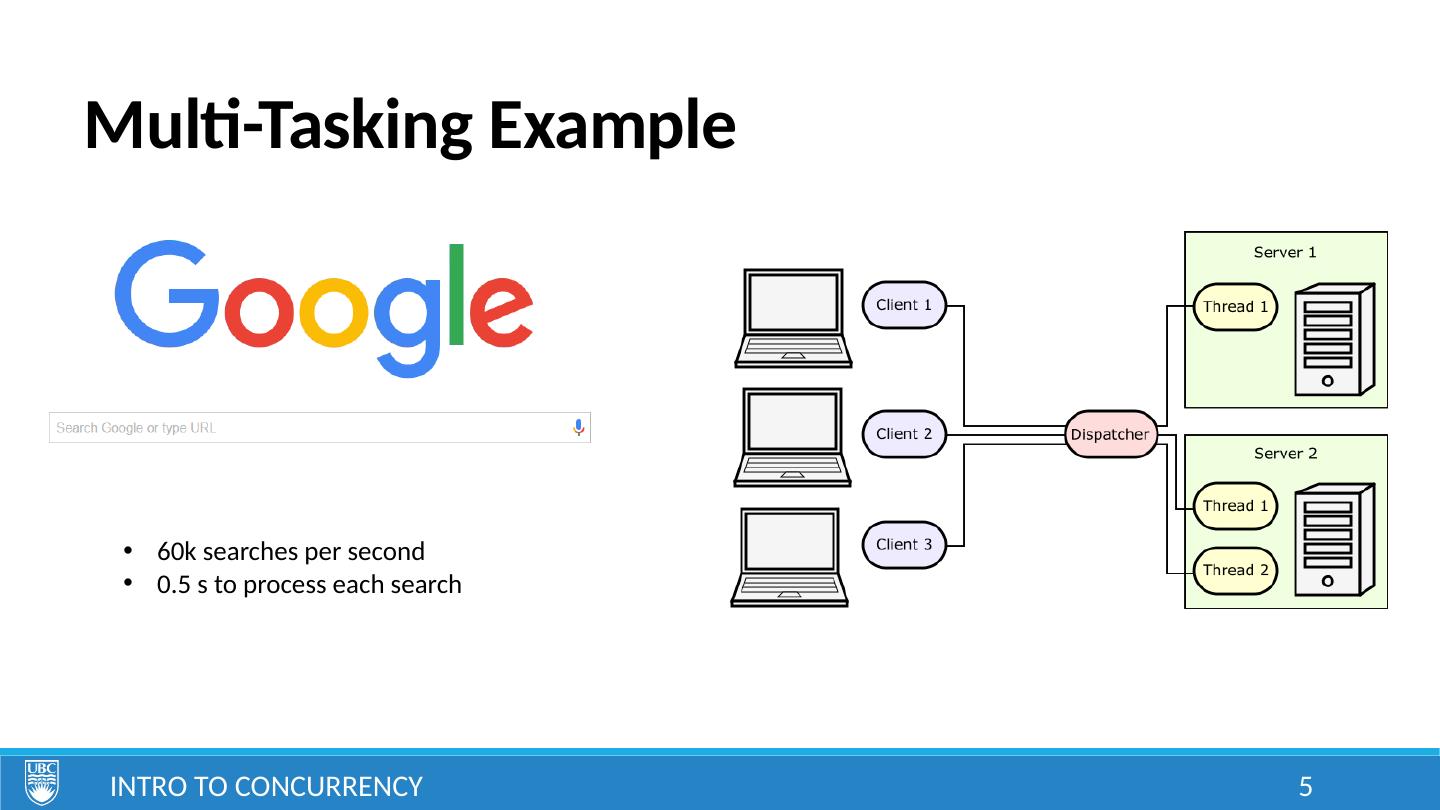

5 .Multi-Tasking Example Intro to Concurrency 5 60k searches per second 0.5 s to process each search

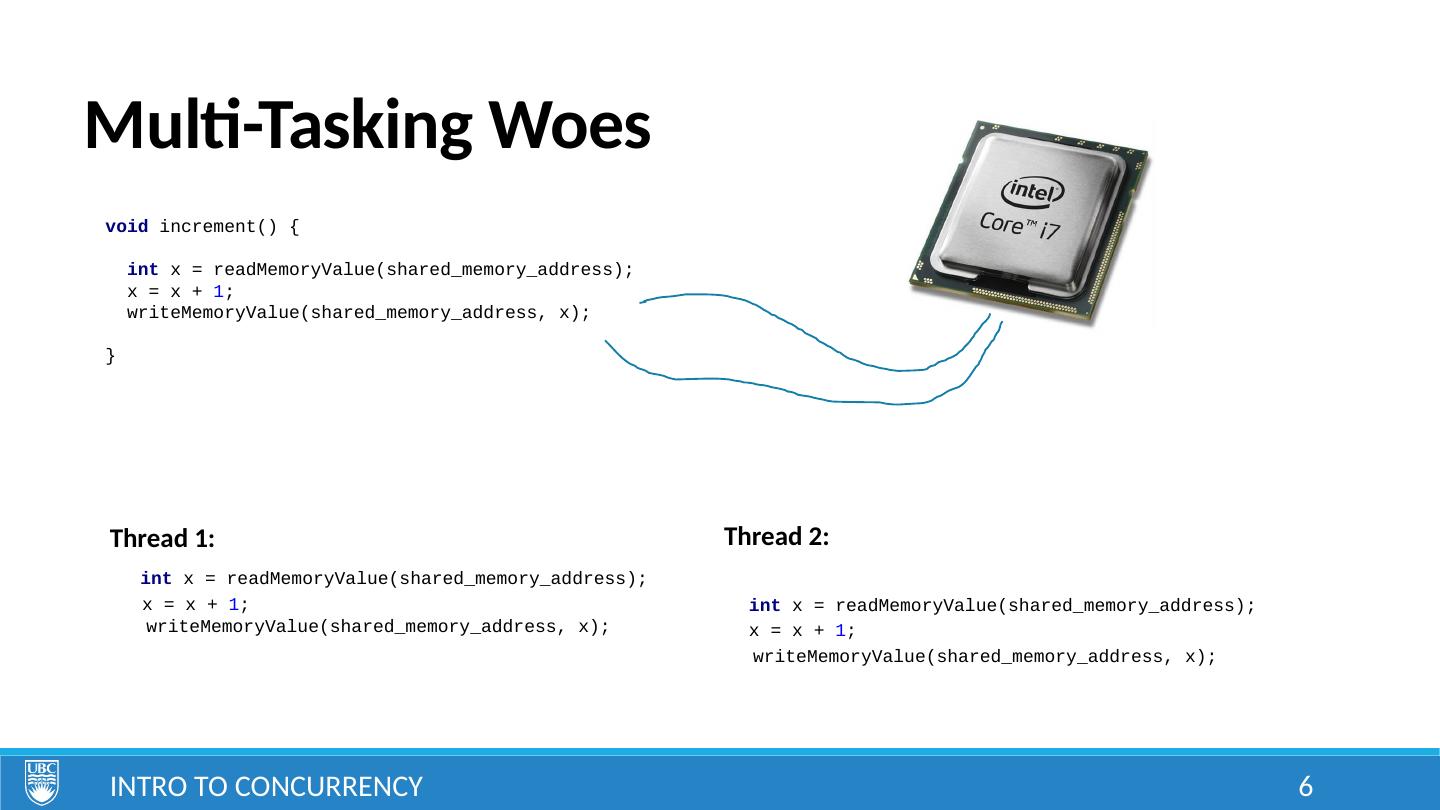

6 .Multi-Tasking Woes Intro to Concurrency 6 void increment() { int x = readMemoryValue(shared_memory_address); x = x + 1 ; writeMemoryValue(shared_memory_address, x); } int x = readMemoryValue(shared_memory_address ); x = x + 1 ; int x = readMemoryValue(shared_memory_address ); writeMemoryValue(shared_memory_address, x); x = x + 1 ; writeMemoryValue(shared_memory_address, x); Thread 1: Thread 2:

7 .Parallel Programming Intro to Concurrency 7 Multi-tasking has the potential to make your software faster Parallel Programming: specialized area of concurrent programming that focuses on designing faster algorithms using concurrency. e.g. parallel merge sort

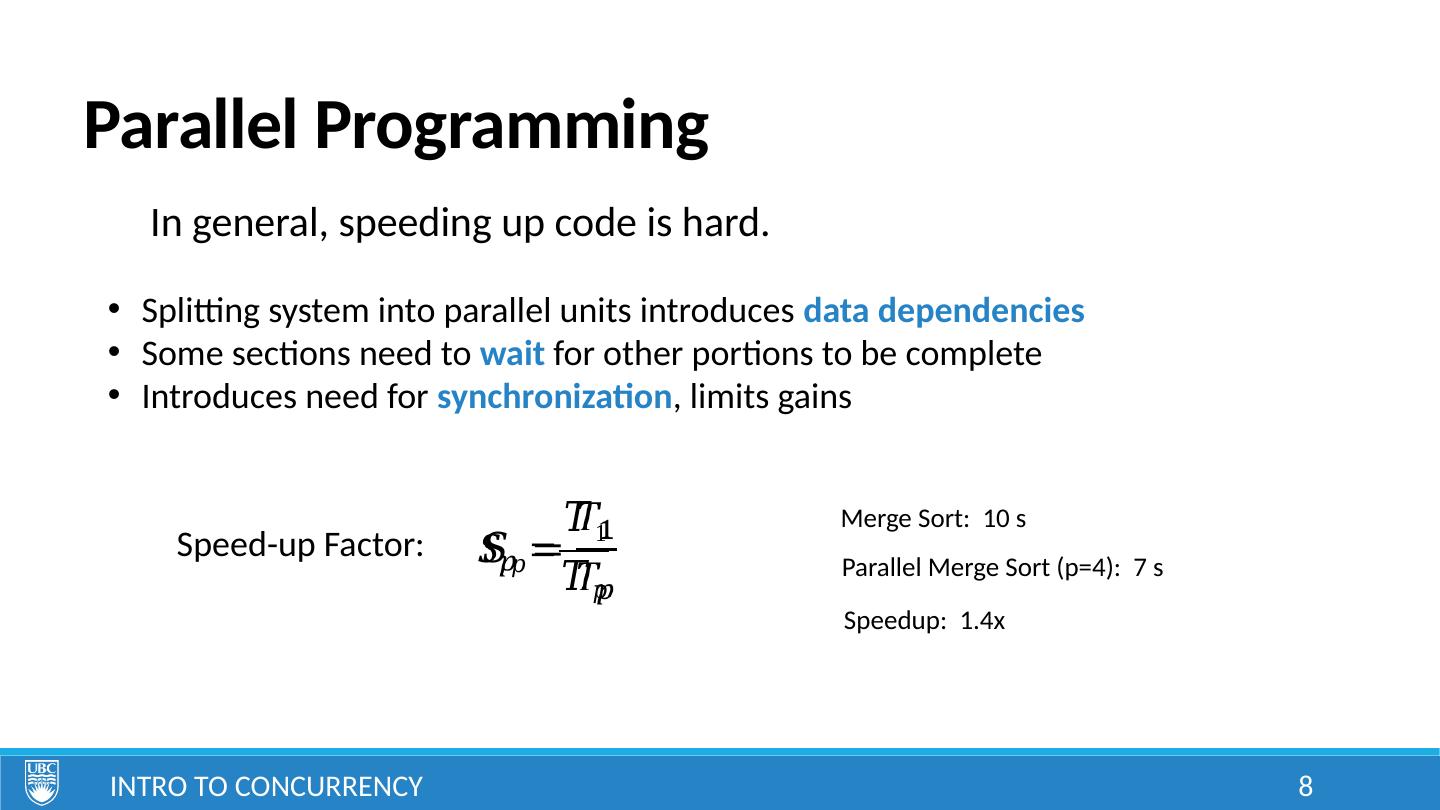

8 .Parallel Programming Intro to Concurrency 8 In general, speeding up code is hard. Splitting system into parallel units introduces data dependencies Some sections need to wait for other portions to be complete Introduces need for synchronization , limits gains Speed-up Factor: Merge Sort: 10 s Parallel Merge Sort (p=4): 7 s Speedup: 1.4x

9 .Multitasking Implementations Intro to Concurrency 9 Pseudo-multitasking Multiple dedicated processors/cores Distributed systems Time-sliced processors/cores

10 .Pseudo Multitasking Intro to Concurrency 10 S imply switch between a bunch of sequential tasks fast enough that it looks like they are running in parallel. int main () { // continuously cycle through set of monitors // to give appearance of parallel operation while ( deviceIsOn () ) { monitorTemperature (); monitorHumidity (); monitorAtmosphericPressure (); updateDisplays (); } return 0 ; }

11 .Pseudo Multitasking Intro to Concurrency 11 Advantages: simple to implement and debug no knowledge of operating system required communication and synchronization is trivial Disadvantages: tasks must be brief , preferably with guaranteed maximum execution times tasks must never loop on a condition or wait for input if a task crashes, brings down the entire system

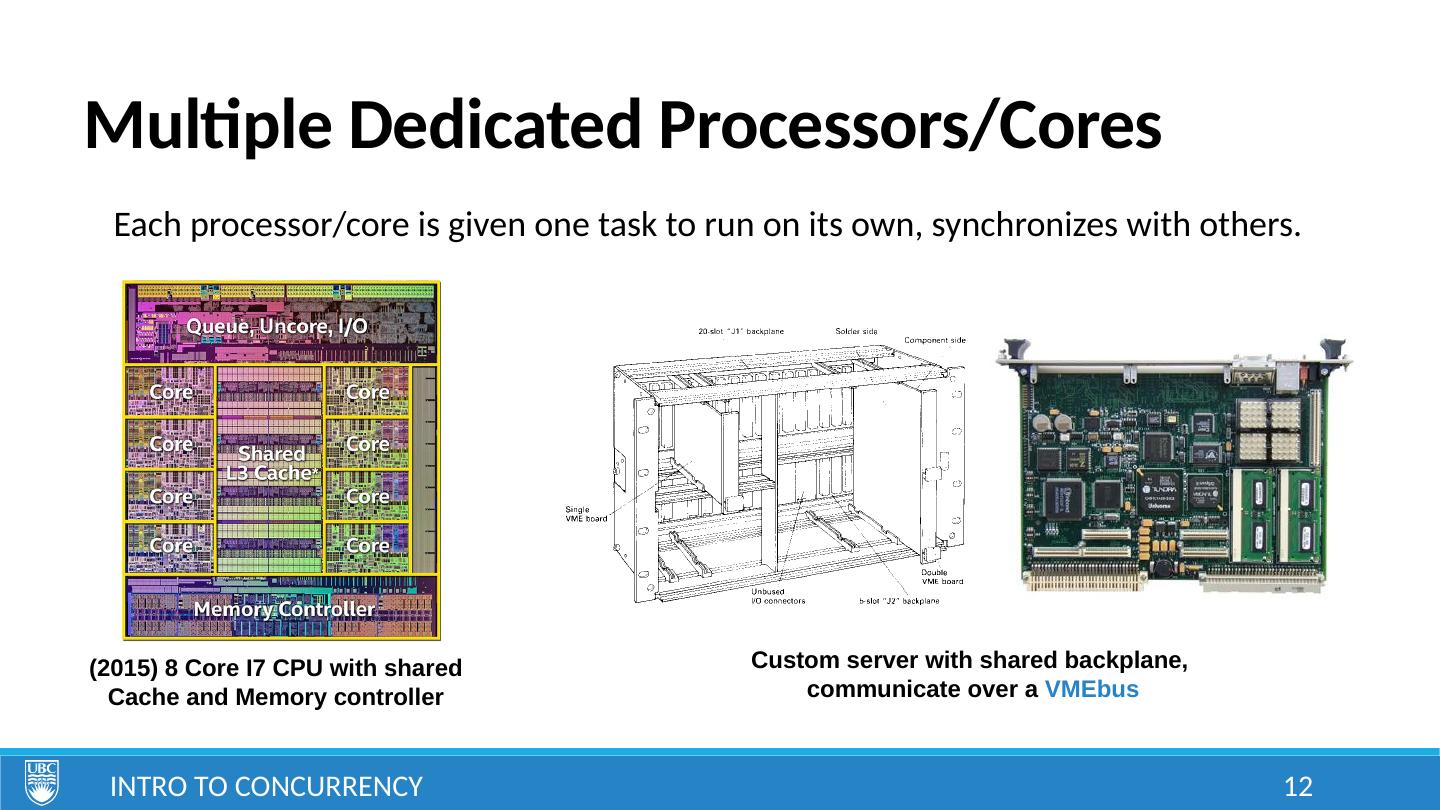

12 .Multiple Dedicated Processors/Cores Intro to Concurrency 12 Each processor/core is given one task to run on its own, synchronizes with others. (2015) 8 Core I7 CPU with shared Cache and Memory controller Custom server with shared backplane, communicate over a VMEbus

13 .Multiple Dedicated Processors/Cores Intro to Concurrency 13 Advantages: customizable to the application extremely high performance Disadvantages: highly expensive requires complex hardware inflexible , cannot accommodate dynamic process creation difficult to debug

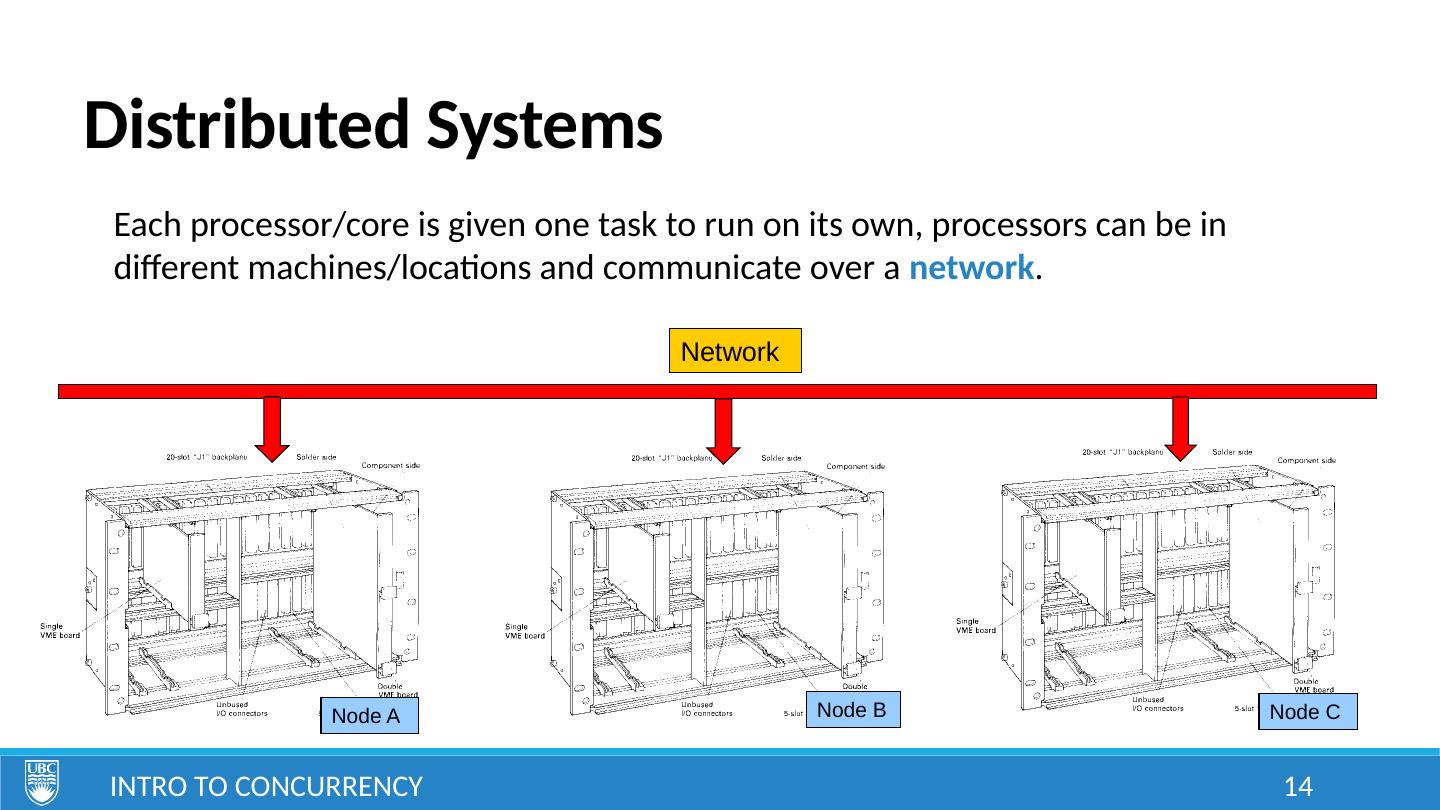

14 .Distributed Systems Intro to Concurrency 14 Each processor/core is given one task to run on its own, processors can be in different machines/locations and communicate over a network . Network Node A Node B Node C

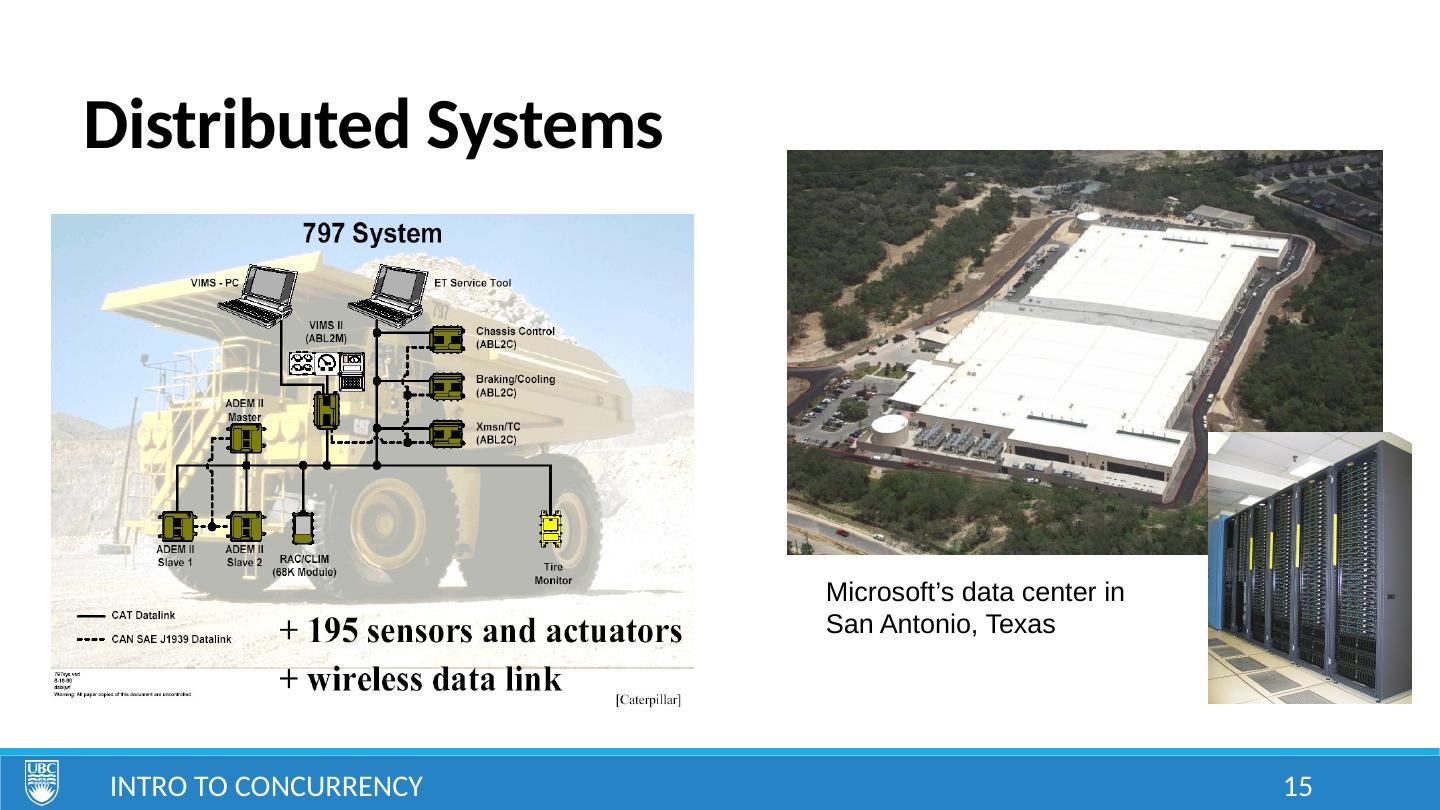

15 .Distributed Systems Intro to Concurrency 15 Microsoft’s data center in San Antonio, Texas

16 .Distributed Systems Intro to Concurrency 16 Advantages: flexibility in adding/removing resources if one node goes down, the rest can continue and pick up the slack Disadvantages: network communication is slower and less reliable complexity in design, requires redundancy in case of disconnection or errors

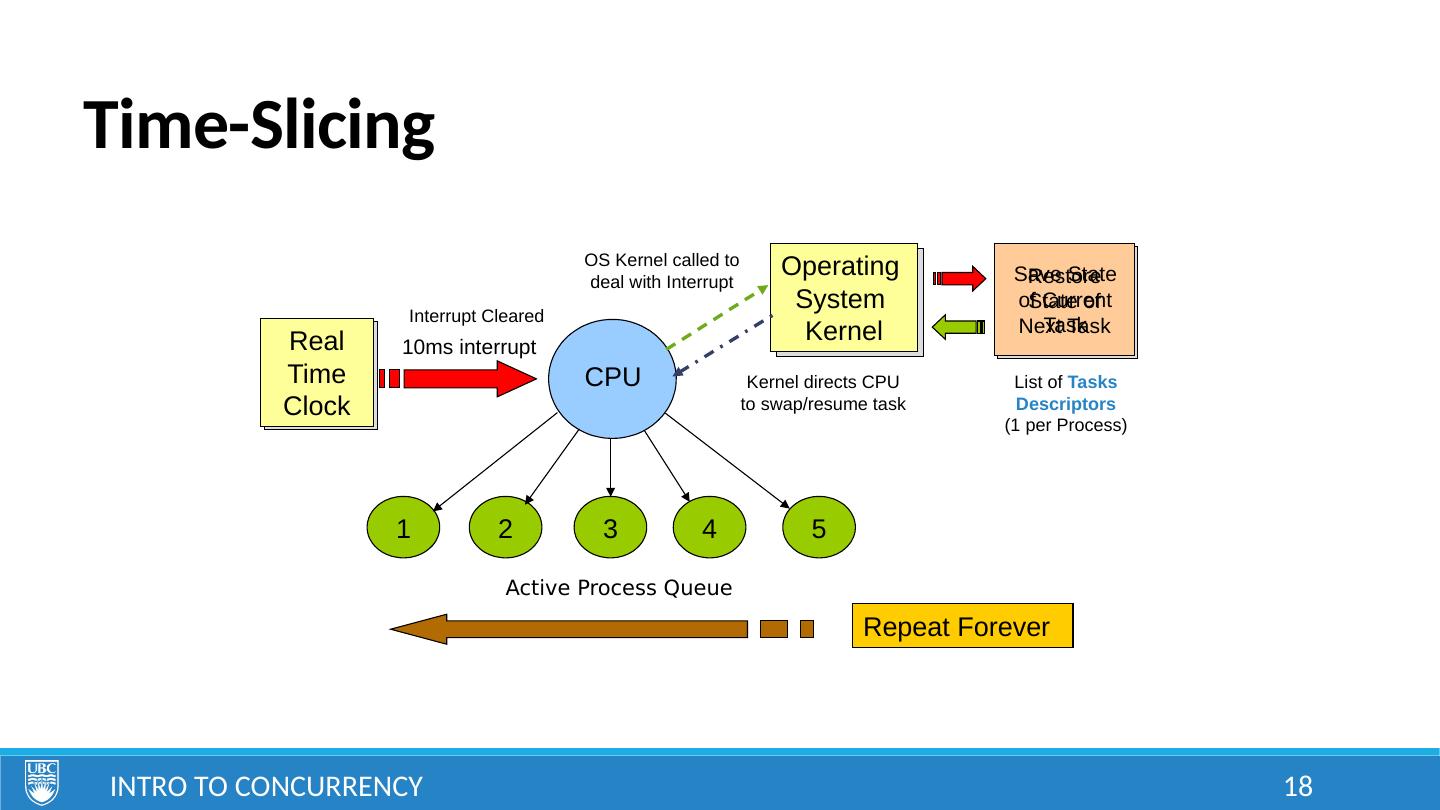

17 .Time-Slicing Intro to Concurrency 17 The more practical and cheaper approach, used in most systems today. Relies on a Multi-Tasking Operating System (MTOS) in conjunction with a time-sliced CPU/core. p rocessor’s computation time is divided into time-slices OS assigns slices to programs/threads processor swaps between programs/threads rapidly, executing one time slice after the other, sequentially

18 .Time-Slicing Intro to Concurrency 18 Real Time Clock Operating System Kernel Operating System Kernel CPU 5 4 3 2 1 Real Time Clock OS Kernel called to deal with Interrupt Kernel directs CPU to swap/resume task 10ms interrupt Repeat Forever Interrupt Cleared Save State of Current Task Restore State of Next Task List of Tasks Descriptors (1 per Process) Active Process Queue

19 .Time-Slicing Intro to Concurrency 19 Advantages: splitting of execution is handled automatically by the OS sub-tasks no longer need to be short, can wait on conditions or for input if one task goes down, others can still run Disadvantages: context switch (saving current task’s state and restoring next) adds overhead

20 .Homework: Intro to Concurrency 20 Read Introduction to C++ lecture notes Read Introduction to Git lecture notes Bring or borrow an iClicker (or alternative?) Create an ECE account for labs https ://help.ece.ubc.ca/How_To_Get_An_Account https://cpen333.github.io/