- 快召唤伙伴们来围观吧

- 微博 QQ QQ空间 贴吧

- 文档嵌入链接

- 复制

- 微信扫一扫分享

- 已成功复制到剪贴板

Dremel: Interactive Analysis Of Web-Scale Datasets

展开查看详情

1 .Dremel: Interactive Analysis of Web- Scale Datasets S E R G E Y M E L N I K , A N D R E Y G U B A R E V, J I N G J I N G L O N G , G E O F F R E Y R O M E R , S H I V A S H I V A K U M A R , M AT T T O L T O N ,T H E O V A S S I L A K I S PRESENTED BY D I PA N N I TA D E Y

2 .Outline • Problem • Existing technology • Dremel • Basic features • Applications • Infrastructure & details • Experiments • Evaluations 2

3 .Problem: Latency Matters Trends Interactive Spam Detection Tools Real-time Web Network Dashboards Optimization 3

4 .Existing Technologies • Map-Reduce - Record-oriented data - Does not work with data in-situ - Suitable for batch-processing Inherent Latency between submitting query and getting • Pig result • Hive 4

5 .Dremel • Interactive ad-hoc query system • Scales to thousands of nodes • Fault tolerant and handles stragglers • SQL like query language and multi-level execution trees • Nested data model • Columnar storage of nested (non-relational) data • Tree like architecture similar to web search • Interoperability with data • Access data in situ (Eg. – GFS, Bigtable) • MapReduce Pipelines 5

6 .Widely used inside Google since 2010 • Analysis of crawled web documents • Tracking install data for applications on Android Market • Crash reporting for Google products • Spam analysis • Debugging of map tiles on Google Maps • Tablet migrations in managed Bigtable instances • Results of tests run on Google's distributed build system • Disk I/O statistics for hundreds of thousands of disks • Resource monitoring for jobs run in Google's data centers 6

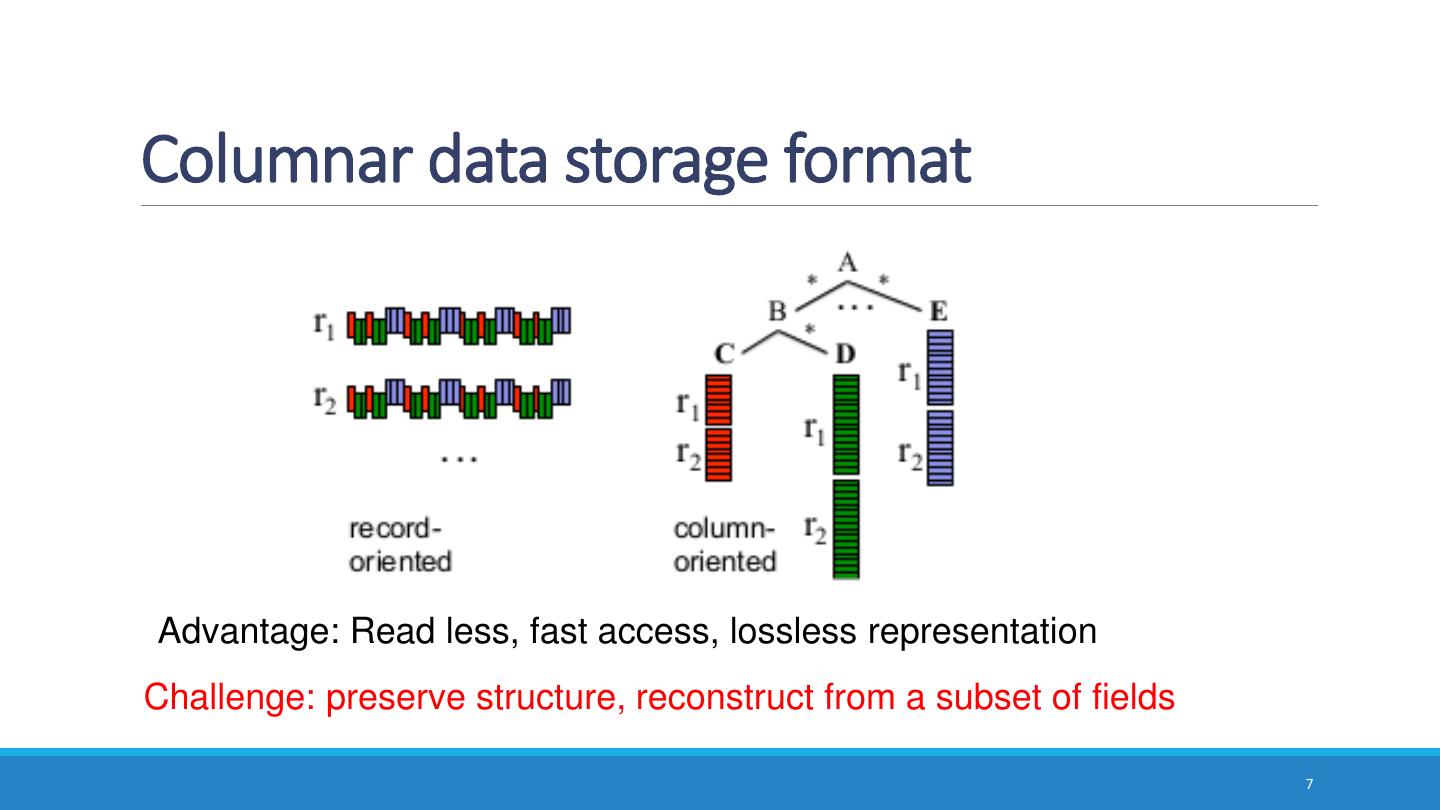

7 .Columnar data storage format Advantage: Read less, fast access, lossless representation Challenge: preserve structure, reconstruct from a subset of fields 7

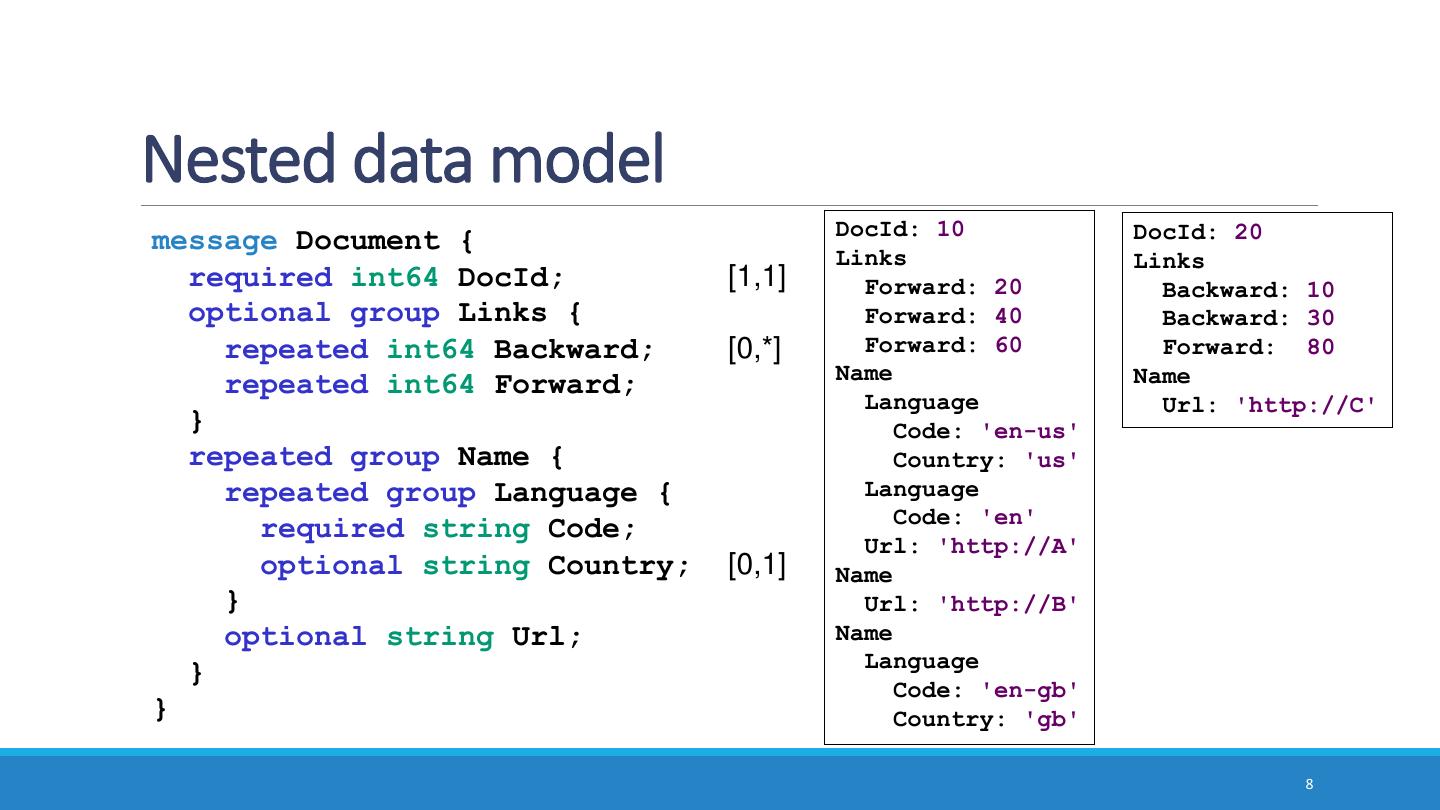

8 .Nested data model DocId: 10 DocId: 20 message Document { Links Links required int64 DocId; [1,1] Forward: 20 Backward: 10 optional group Links { Forward: 40 Backward: 30 repeated int64 Backward; [0,*] Forward: 60 Forward: 80 Name Name repeated int64 Forward; Language Url: 'http://C' } Code: 'en-us' repeated group Name { Country: 'us' repeated group Language { Language Code: 'en' required string Code; Url: 'http://A' optional string Country; [0,1] Name } Url: 'http://B' optional string Url; Name Language } Code: 'en-gb' } Country: 'gb' 8

9 . Repetition and definition levels r=1 r=2 (non-repeating) DocId: 10 r 1 DocId: 20 r 2 Links Links Name.Language.Code Forward: 20 Backward: 10 Forward: 40 Backward: 30 value r d Forward: 60 Forward: 80 en-us 0 2 Name Name Language Url: 'http://C' en 2 2 Code: 'en-us' Country: 'us' NULL 1 1 Language en-gb 1 2 Code: 'en' Url: 'http://A' NULL 0 1 Name Url: 'http://B' r: At what repeated field in the field's path Name the value has repeated Language d: How many fields in paths that could be Code: 'en-gb' Country: 'gb' undefined (opt. or rep.) are actually present 9

10 .Column-striped representation DocId Name.Url Links.Forward Links.Backward value r d value r d value r d value r d 10 0 0 http://A 0 2 20 0 2 NULL 0 1 20 0 0 http://B 1 2 40 1 2 10 0 2 NULL 1 1 60 1 2 30 1 2 Name.Language.Code http://C 0 2 80 0 2 Name.Language.Country value r d value r d en-us 0 2 us 0 3 en 2 2 NULL 2 2 NULL 1 1 NULL 1 1 en-gb 1 2 gb 1 3 NULL 0 1 NULL 0 1 10

11 .Record assembly FSM Transitions DocId 0 labeled with 0 1 repetition levels 1 Links.Backward Links.Forward 0 0,1,2 Name.Language.Code Name.Language.Country 2 Name.Ur 0,1 1 l 0 For record-oriented data processing (e.g., MapReduce) 11

12 .SQL dialect for nested data SELECT DocId AS Id, COUNT(Name.Language.Code) WITHIN Name AS Cnt, Name.Url + ',' + Name.Language.Code AS Str FROM t WHERE REGEXP(Name.Url, '^http') AND DocId < 20; Output schema Output table Id: 10 message QueryResult { Name t1 required int64 Id; Cnt: 2 repeated group Name { Language optional uint64 Cnt; Str: 'http://A,en-us' repeated group Language { Str: 'http://A,en' optional string Str; Name } Cnt: 0 } } No record assembly during query processing 12

13 .Serving tree root server client • Parallelizes scheduling and aggregation • Fault tolerance intermediate ... • Stragglers servers • Designed for "small" results leaf servers ... (<1M records) (with local ... storage) histogram of response times storage layer (e.g., GFS) 13

14 .Example: count() 0 SELECT A, COUNT(B) FROM T SELECT A, SUM(c) GROUP BY A FROM (R11 UNION ALL R110) T = {/gfs/1, /gfs/2, …, /gfs/100000} GROUP BY A R11 R12 SELECT A, COUNT(B) AS c SELECT A, COUNT(B) AS c 1 FROM T11 GROUP BY A FROM T12 GROUP BY A ... T11 = {/gfs/1, …, /gfs/10000} T12 = {/gfs/10001, …, /gfs/20000} ... SELECT A, COUNT(B) AS c 3 FROM T31 GROUP BY A ... T31 = {/gfs/1} Data access ops 14

15 .Experiments • 1 PB of real data (uncompressed, non-replicated) • 100K-800K tablets per table • Experiments run during business hours Table Number of Size (unrepl., Number Data Repl. name records compressed) of fields center factor T1 85 billion 87 TB 270 A 3× T2 24 billion 13 TB 530 A 3× T3 4 billion 70 TB 1200 A 3× T4 1+ trillion 105 TB 50 B 3× T5 1+ trillion 20 TB 30 B 2× 15

16 .Read from disk time (sec) (e) parse as from records C++ objects objects (d) read + records decompress from columns columns (c) parse as C++ objects (b) assemble records (a) read + decompress number of fields Table partition: 375 MB (compressed), 300K rows, 125 columns 16

17 .MR and Dremel execution Avg # of terms in specific field in table T1 execution time (sec) on 3000 nodes 87 TB 0.5 TB 0.5 TB Q1: SELECT SUM(count_words(txtField)) / COUNT(*) FROM T1 MR overheads: launch jobs, schedule 0.5M tasks, assemble records 17

18 .Impact of serving tree depth execution time (sec) Q2: SELECT country, SUM(item.amount) FROM T2 GROUP BY country Q3: SELECT domain, SUM(item.amount ) FROM T2 WHERE domain (returns 100s of records) (returns 1M records) CONTAINS ’.net’ GROUP BY domain 18

19 .Scalability execution time (sec) number of leaf servers Q5 on a trillion-row table T4: SELECT TOP(aids, 20), COUNT(*) FROM T4 19

20 .Interactive speed percentage of queries Monthly query workload of one 3000-node Dremel instance execution time (sec) Most queries complete under 10 sec 20

21 .Outcome • Google Big-Query - Web Service (pay-per-query) BigQuery • Apache Drill - Open source Implementation of BigQuery 21

22 .Take Away • Map-Reduce can benefit from columnar storage like a parallel DBMS - Record assembly is expensive - Dremel complements MR and together produces best results • Parallel DBMS can benefit from serving tree architecture 22

23 .Thank You 23