Visual Analytics of Deep Learning

1 . Visual Analytics of Deep Learning
Mengchen Liu, Microsoft
2018 China Big Data Technology Conference (BDTC)

2 . Deep learning

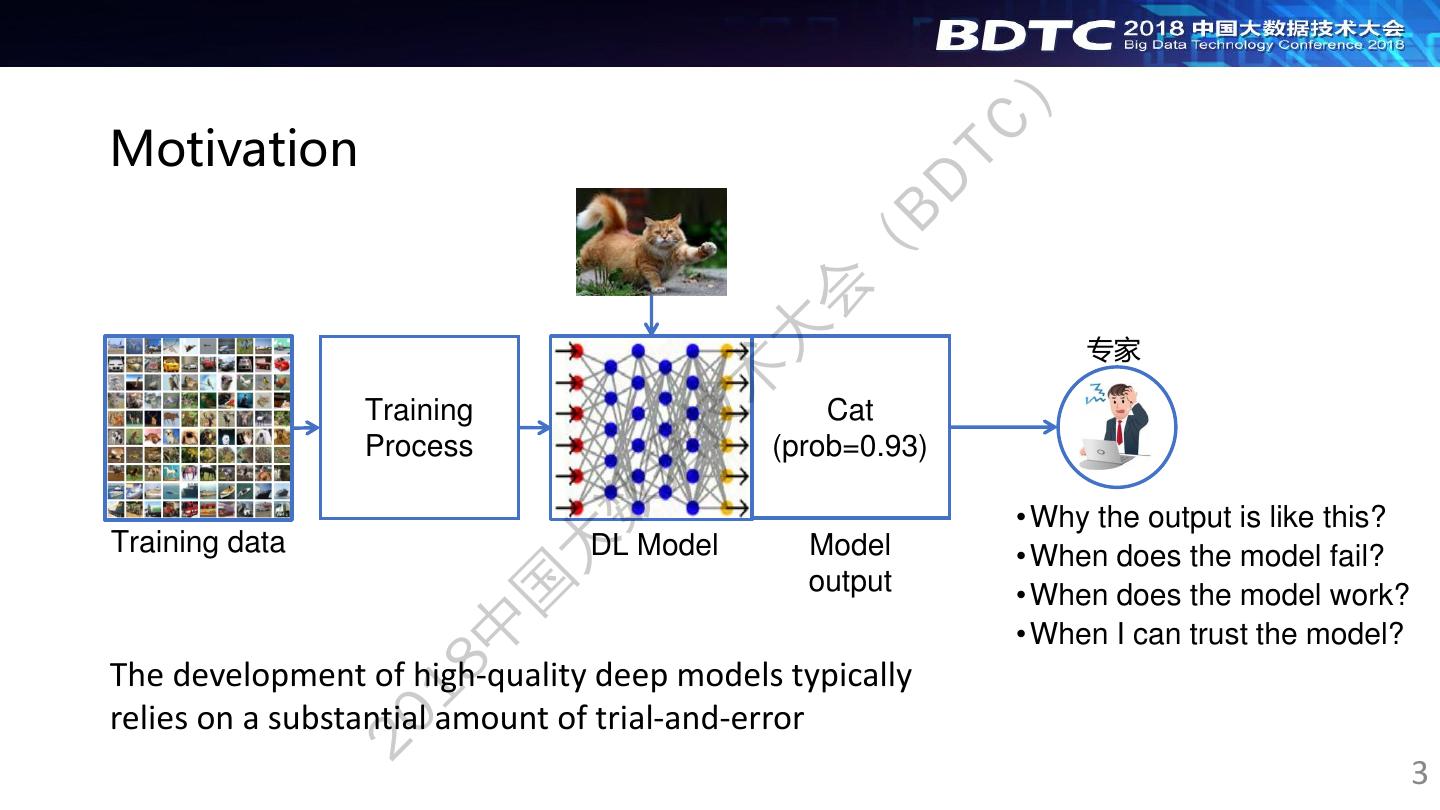

3 . Motivation
Diagram labels: Expert; Training data → Training process → DL model → Model output: Cat (prob=0.93)
• Why is the output like this?
• When does the model fail?
• When does the model work?
• When can I trust the model?
The development of high-quality deep models typically relies on a substantial amount of trial and error.

4 . Explainable Deep Learning
Diagram labels: Expert; Training data → Explainable deep learning → "Cat, because: Fur + Ear"
• Understand the model output
• Understand when it works
• Understand when it fails
• Understand why it fails
Two approaches:
• Explainable models: explain the model outputs by statistical approaches, e.g., ICML 2017 best paper
• Visual analytics of deep models: visualize the workings of the model and support interactive exploration, e.g., VAST 2017 best paper

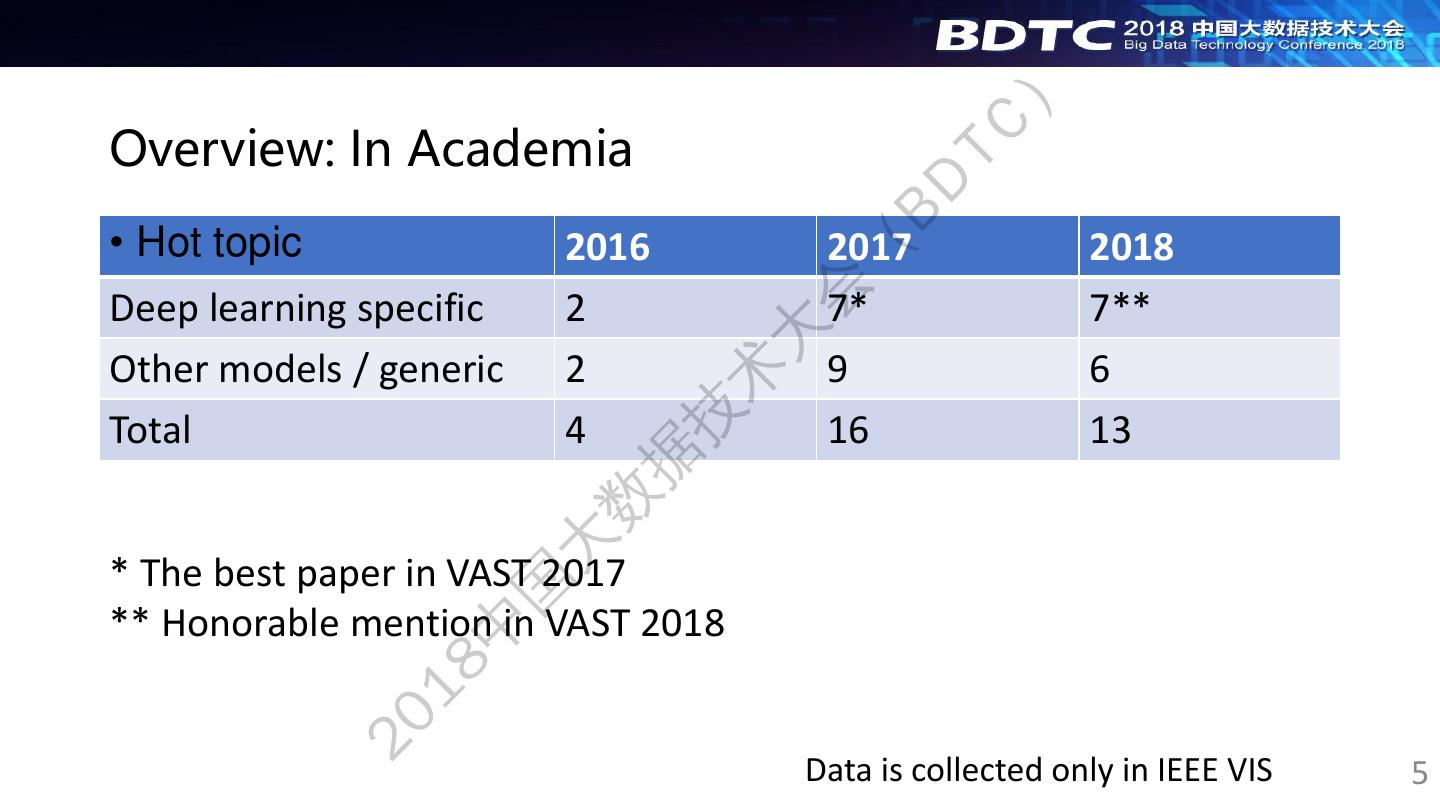

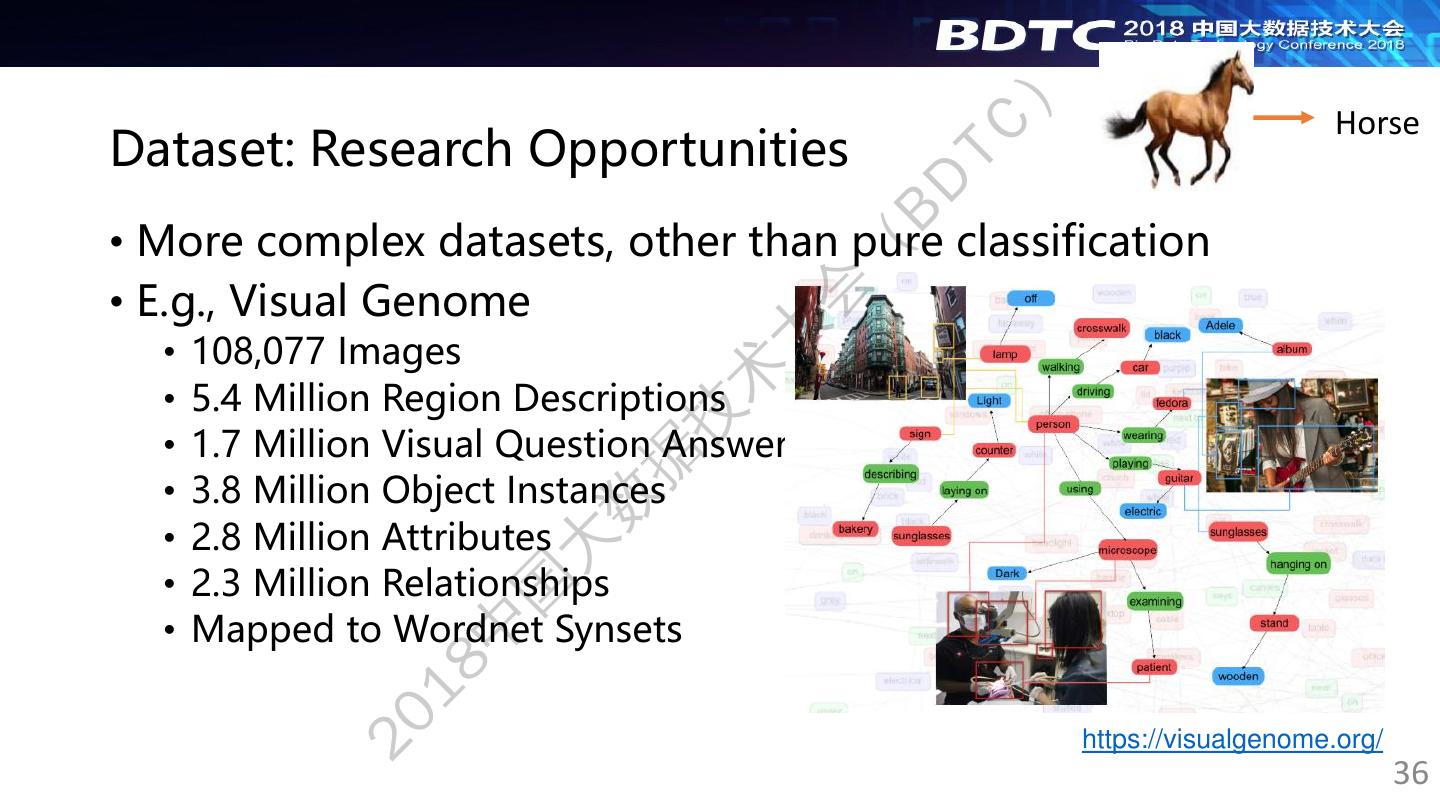

5 . Overview: In Academia
• Hot topic

| Category | 2016 | 2017 | 2018 |
|---|---|---|---|
| Deep learning specific | 2 | 7* | 7** |
| Other models / generic | 2 | 9 | 6 |
| Total | 4 | 16 | 13 |

Notes: (*) the best paper in VAST 2017; (**) honorable mention in VAST 2018. Data is collected only from IEEE VIS.

6 . Overview: In Industry
• Google
  • TensorBoard
  • AutoML
• Microsoft
  • CustomVision
  • Machine learning service
  • Machine learning studio
• IBM
  • Visual Recognition
• Facebook
  • FB Learner

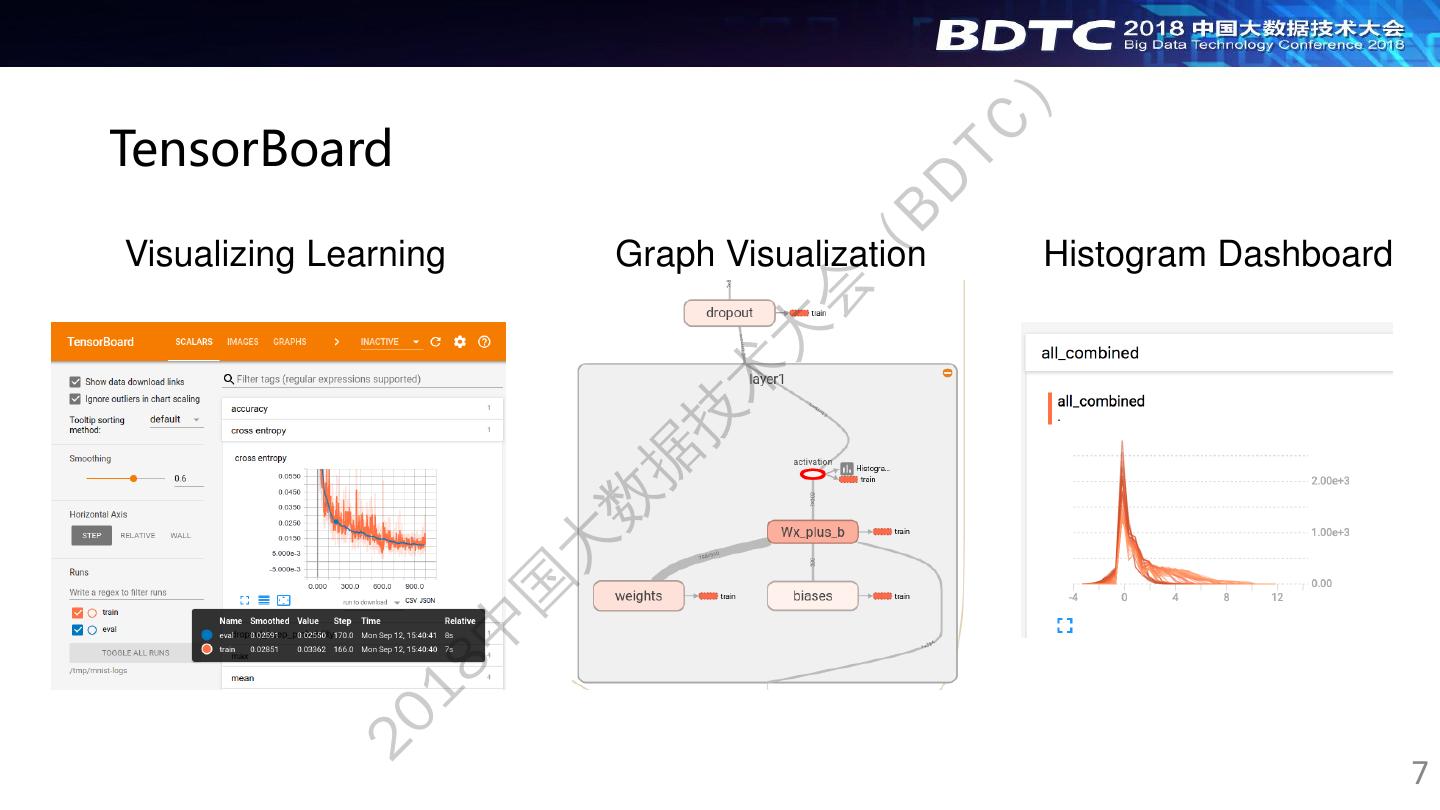

7 . TensorBoard
• Visualizing Learning
• Graph Visualization
• Histogram Dashboard

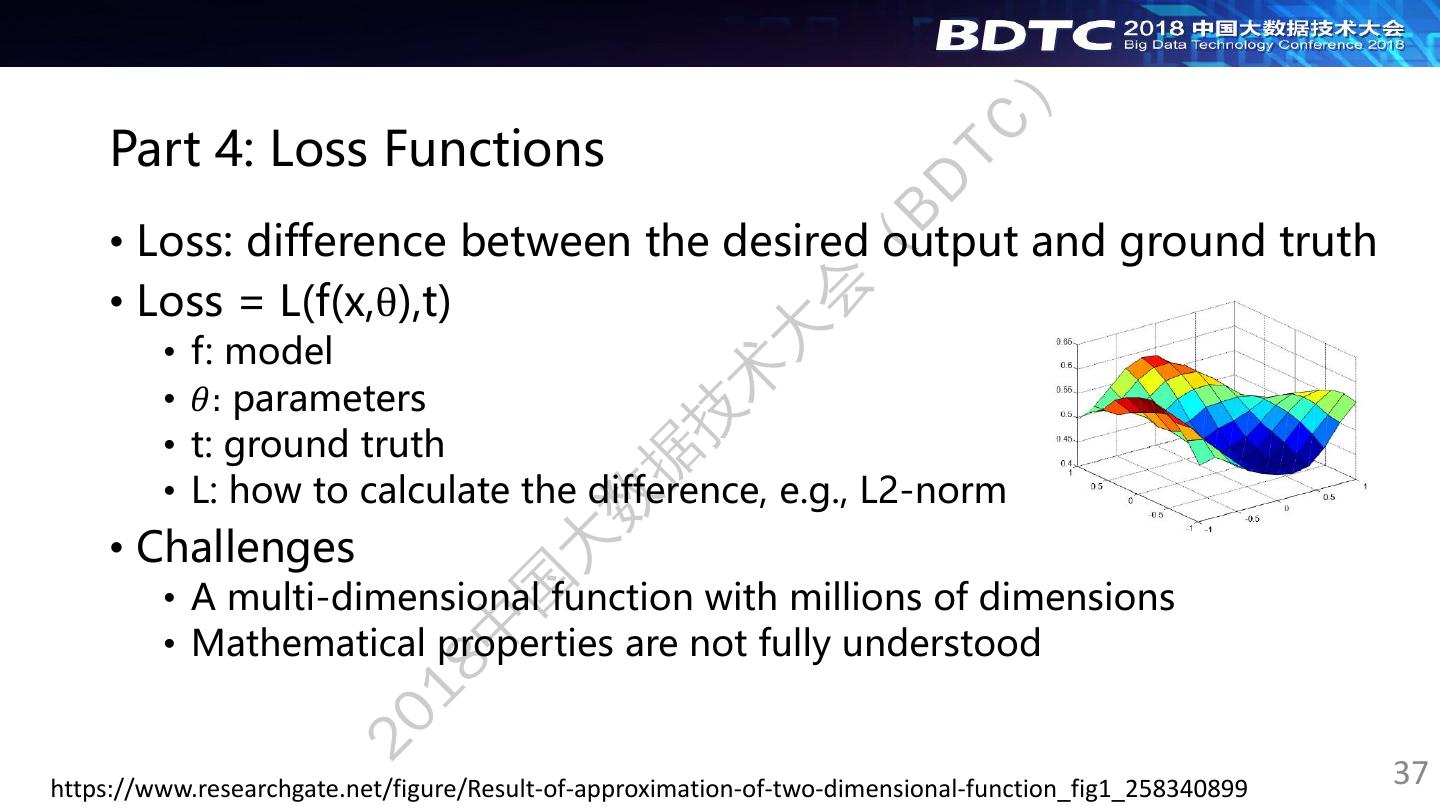

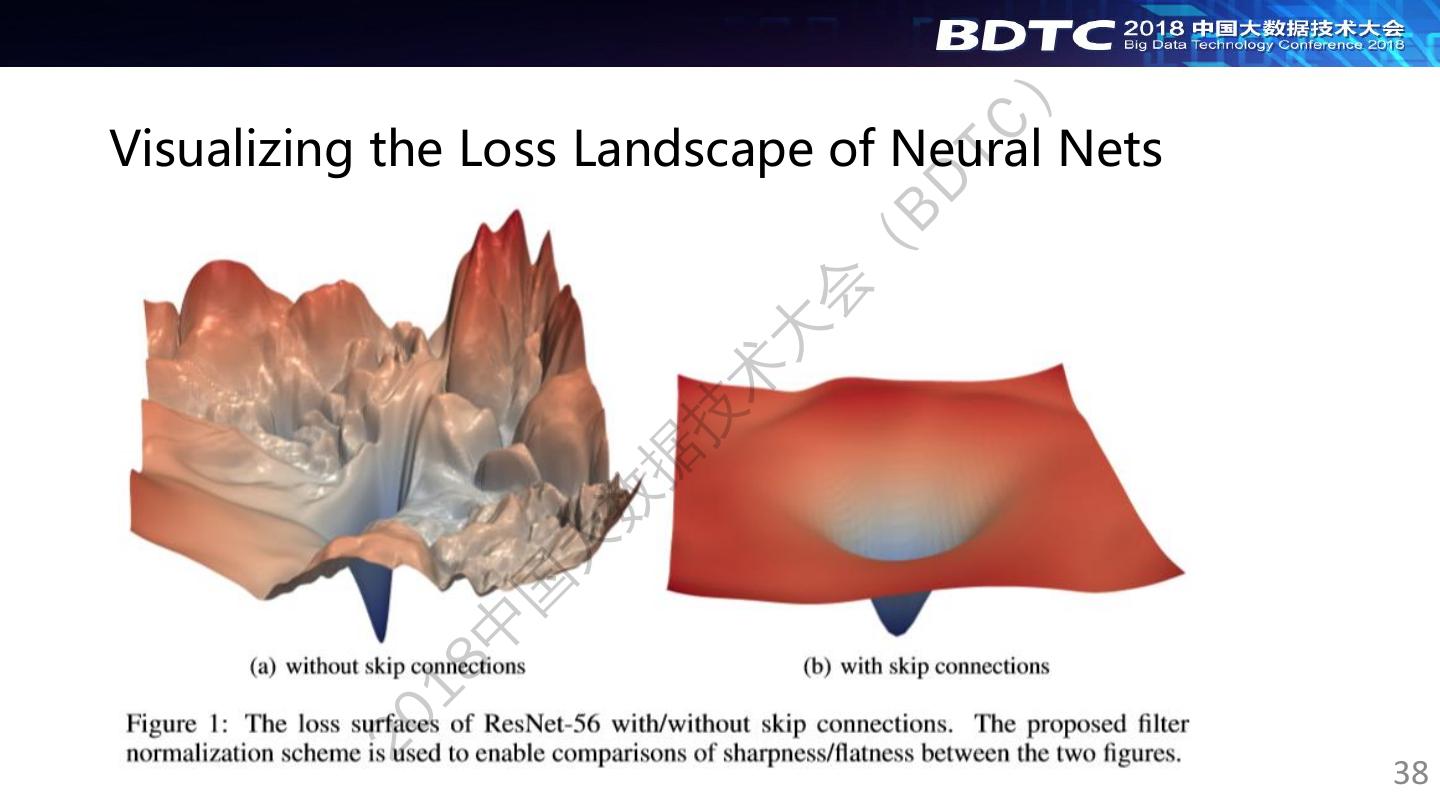

8 . Outline
• Part 1: Model
• Part 2: Training
• Part 3: Dataset
• Part 4: Cost function
"Nearly all deep learning algorithms can be described as particular instances of a fairly simple recipe: combine a specification of a dataset, a cost function, an optimization procedure, and a model." (From the "Deep Learning" book)

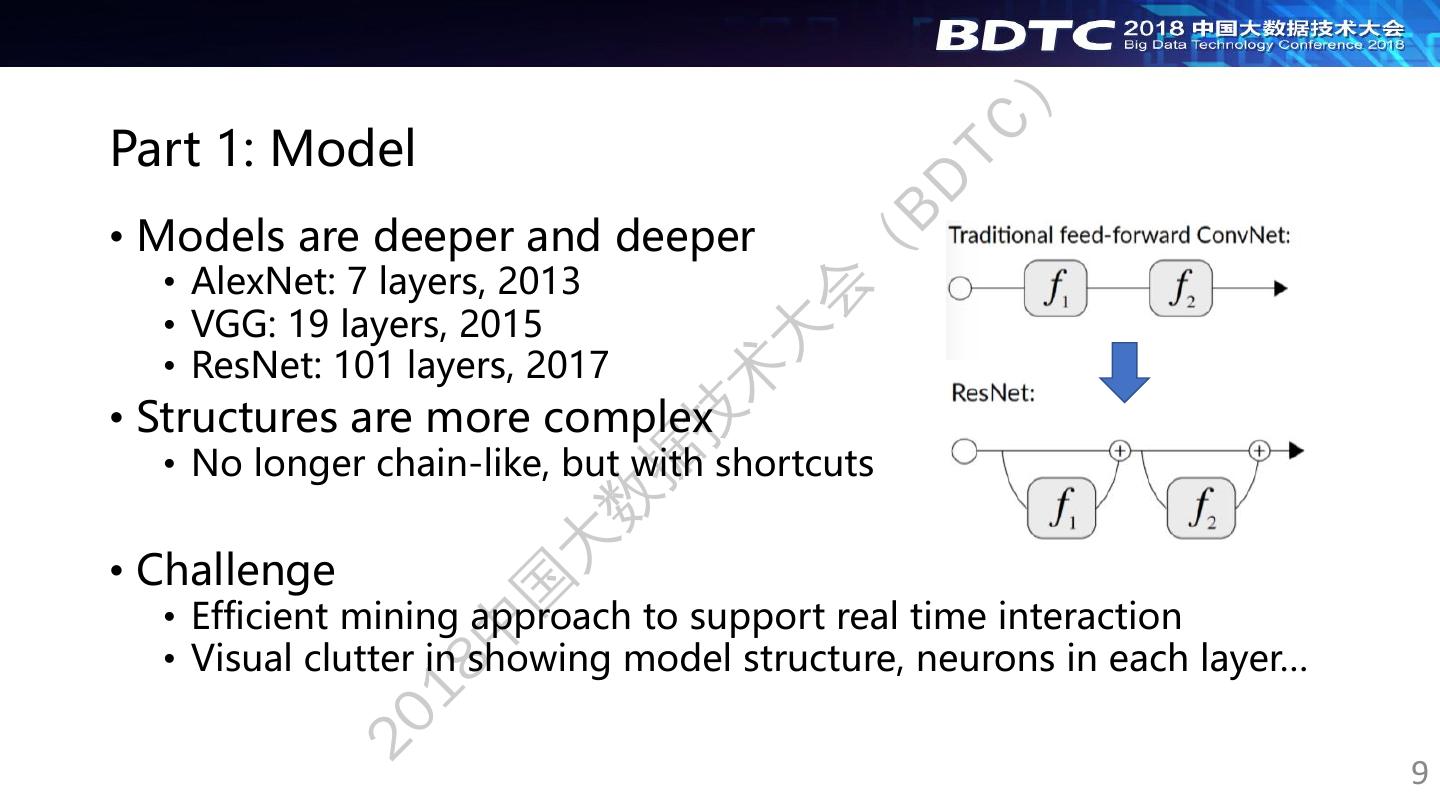

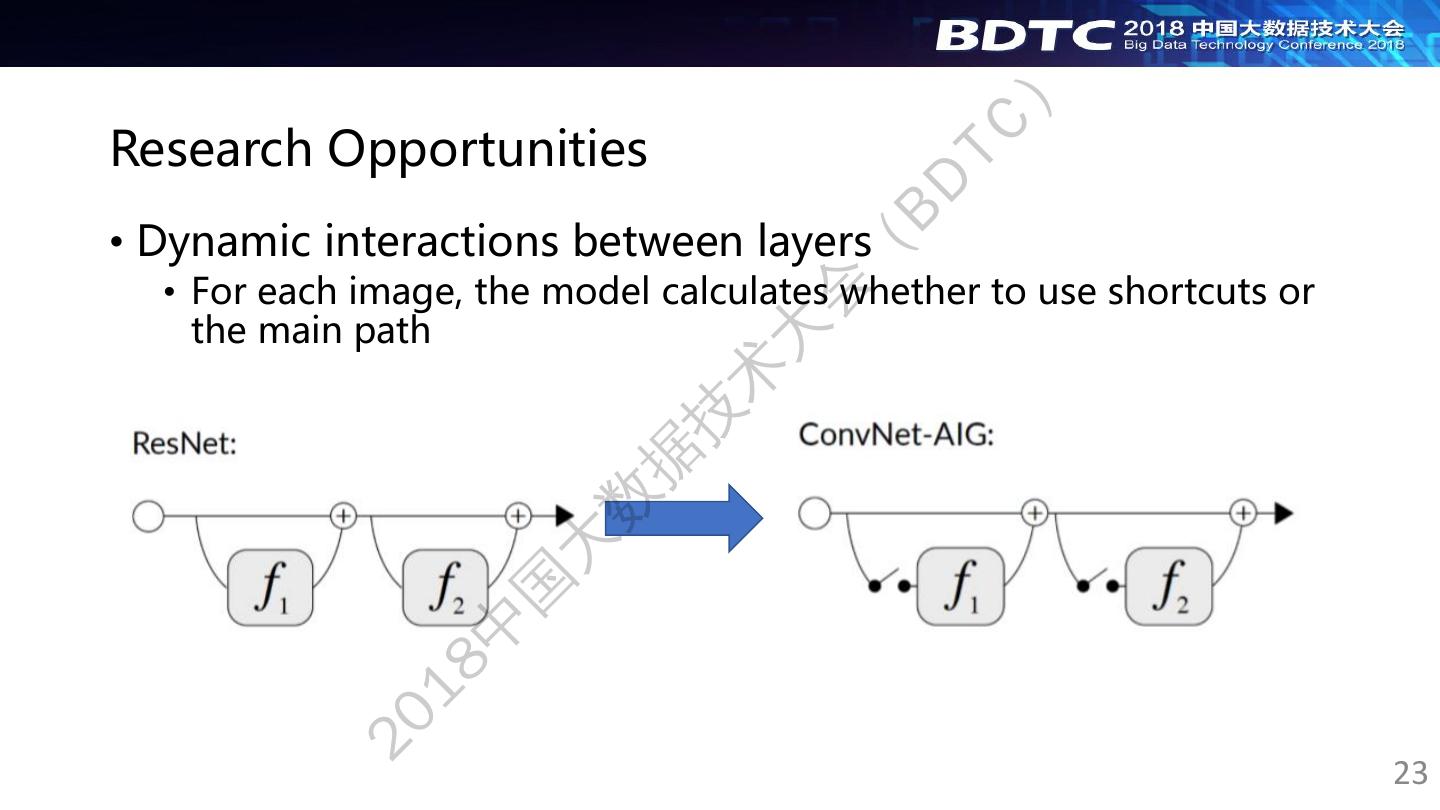

9 . Part 1: Model
• Models are deeper and deeper
  • AlexNet: 7 layers, 2013
  • VGG: 19 layers, 2015
  • ResNet: 101 layers, 2017
• Structures are more complex
  • No longer chain-like, but with shortcuts
• Challenge
  • Efficient mining approach to support real-time interaction
  • Visual clutter in showing model structure, neurons in each layer…

10 . Analyzing the Noise Robustness of Deep Neural Networks
Mengchen Liu, Shixia Liu, Hang Su, Kelei Cao, Jun Zhu
VAST 2018

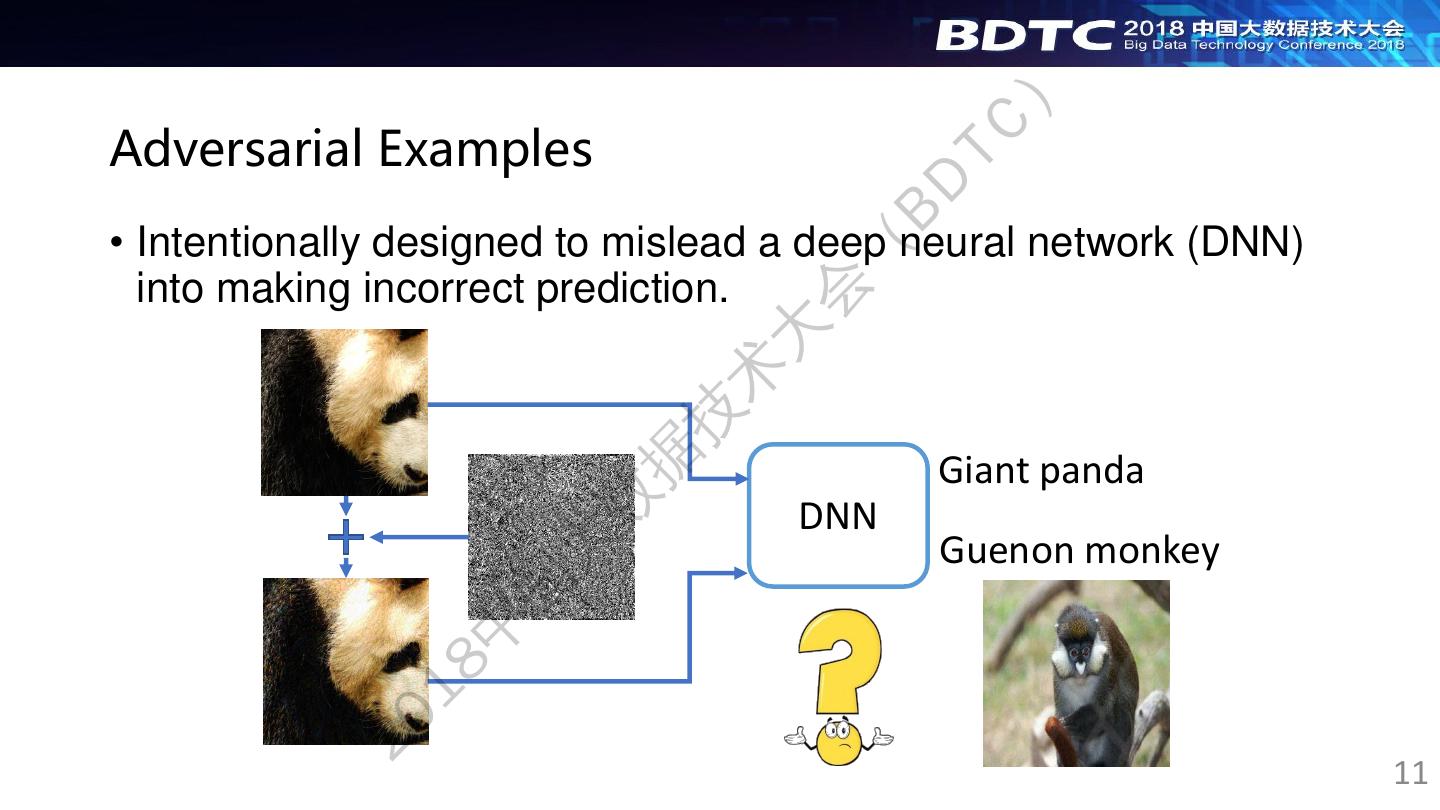

11 . Adversarial Examples
• Intentionally designed to mislead a deep neural network (DNN) into making an incorrect prediction.
Figure labels: Giant panda → DNN → Guenon monkey
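To make the definition concrete, here is a minimal sketch of one well-known way to craft an adversarial example, the fast gradient sign method (FGSM). It is not claimed to be the attack used in the talk; the logistic-regression "model", its weights `w`, and the step size `eps` are illustrative assumptions standing in for a DNN.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def predict(x, w, b):
    """Probability of class 1 under a toy logistic-regression model."""
    return sigmoid(w @ x + b)

def fgsm(x, w, b, y_true, eps):
    """Shift every input dimension by eps in the sign of the loss gradient."""
    # Gradient of the cross-entropy loss w.r.t. the input for this toy model.
    grad_x = (predict(x, w, b) - y_true) * w
    return x + eps * np.sign(grad_x)

# Hypothetical weights and input, chosen only for illustration.
w = np.array([2.0, -3.0, 1.0])
b = 0.0
x = np.array([0.5, -0.2, 0.3])

x_adv = fgsm(x, w, b, y_true=1.0, eps=0.4)
p_clean = predict(x, w, b)
p_adv = predict(x_adv, w, b)
print(f"clean: {p_clean:.3f}, adversarial: {p_adv:.3f}")
```

The perturbation is bounded by `eps` in every dimension, yet the predicted class changes, which is the behavior the slide describes.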

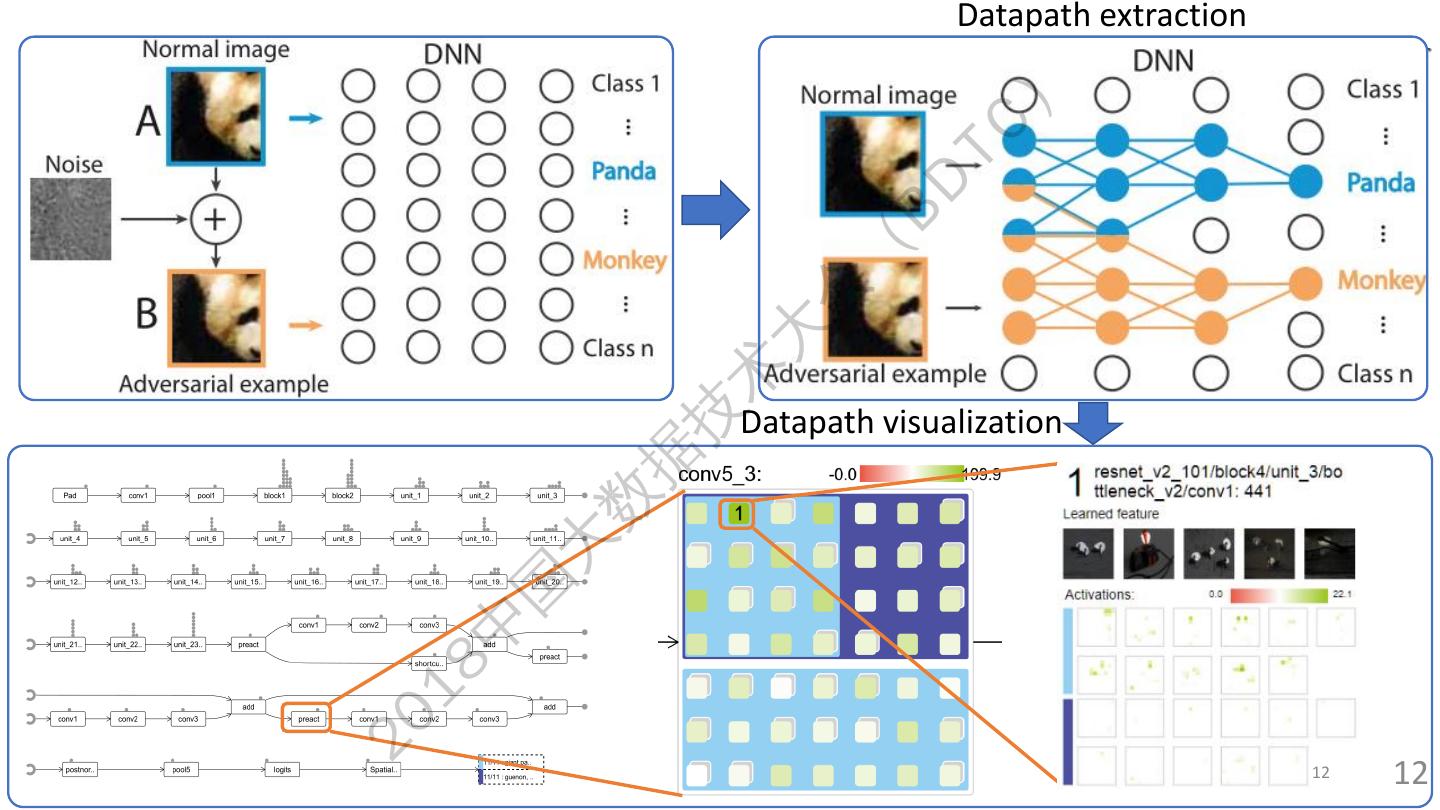

12 .
• Datapath extraction
• Datapath visualization

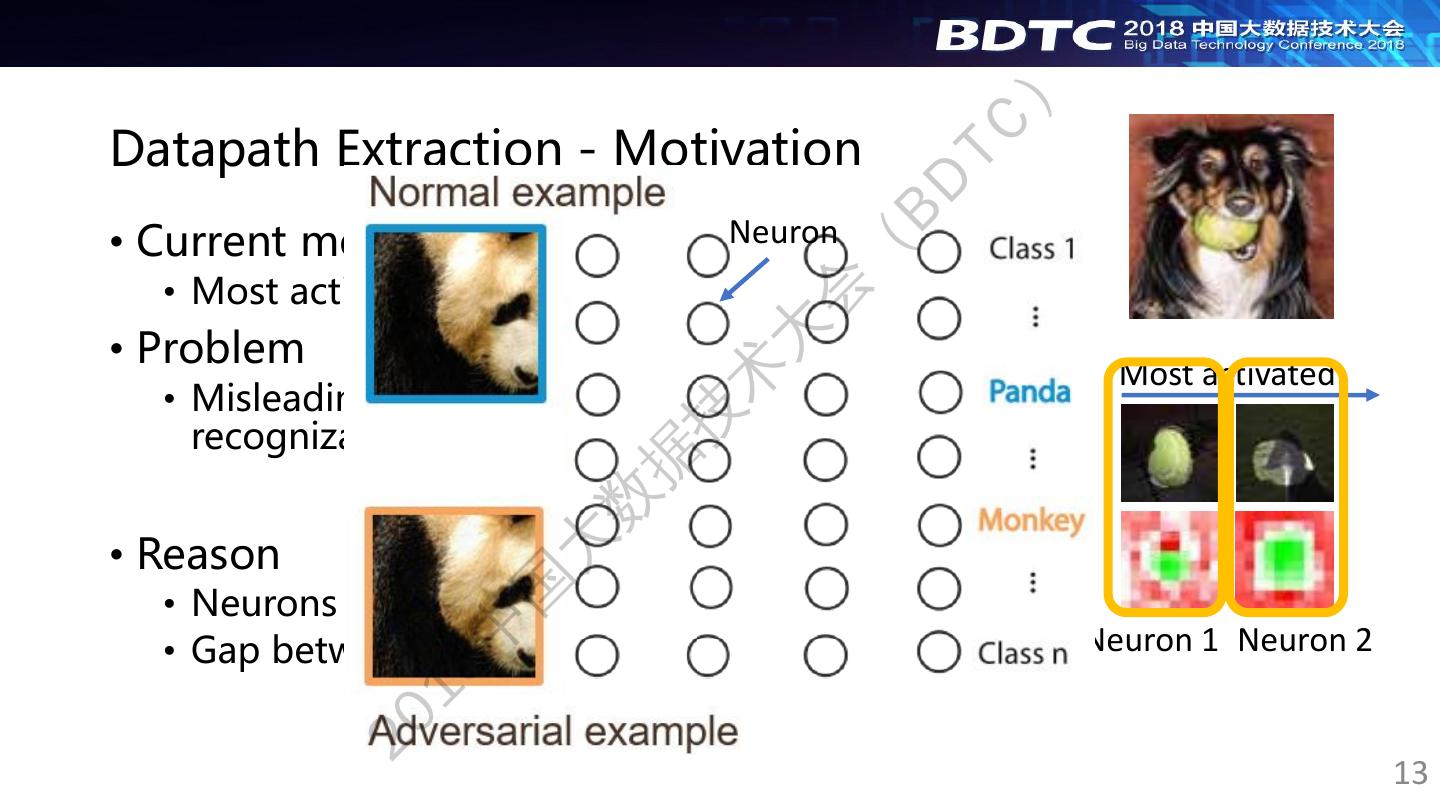

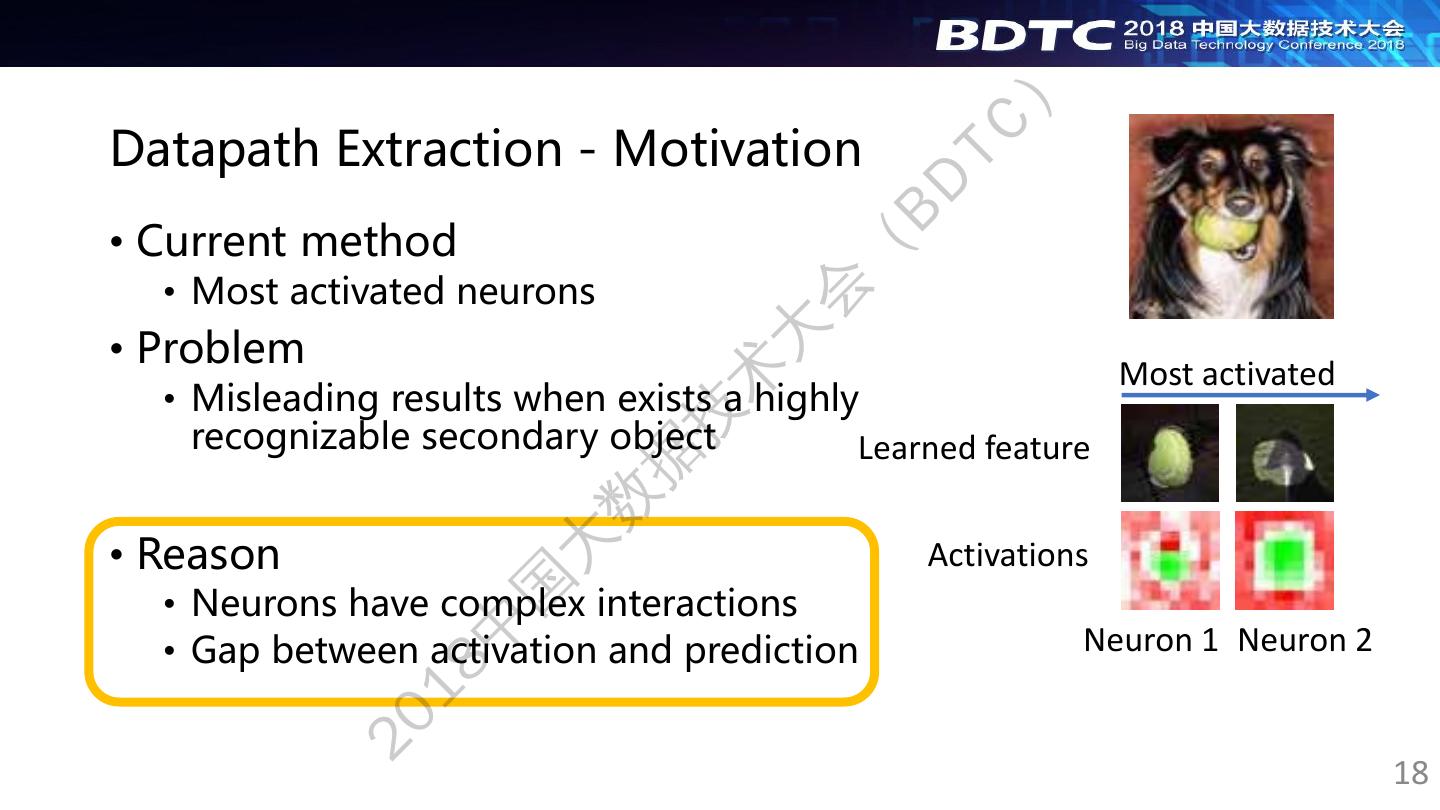

13 . Datapath Extraction - Motivation
• Current method
  • Most activated neurons
• Problem
  • Misleading results when a highly recognizable secondary object exists
• Reason
  • Neurons have complex interactions
  • Gap between activation and prediction
Figure labels: Neuron, Most activated, Learned feature, Activations, Neuron 1, Neuron 2

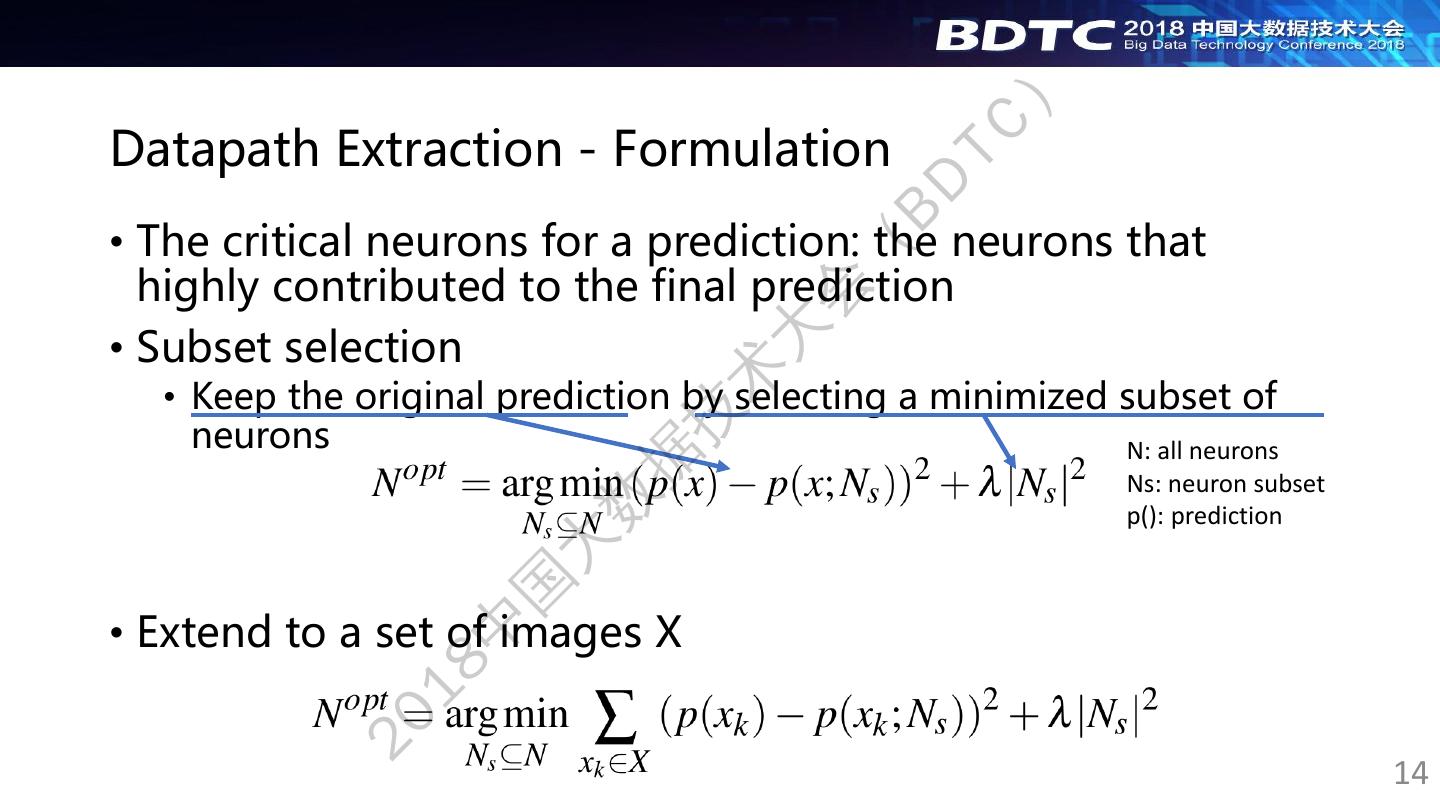

14 . Datapath Extraction - Formulation
• The critical neurons for a prediction: the neurons that highly contributed to the final prediction
• Subset selection
  • Keep the original prediction by selecting a minimized subset of neurons
  • N: all neurons; Ns: neuron subset; p(): prediction
• Extend to a set of images X
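One way to write this subset selection as an optimization problem, offered as a sketch: it uses the slide's symbols $N$, $N_s$, and $p(\cdot)$, while the squared-difference form, the size penalty, and the trade-off weight $\lambda$ are assumptions rather than content taken from the slide. Here $p(x; N_s)$ denotes the prediction for image $x$ when only the neurons in $N_s$ are kept.

```latex
% Single image x
N_s^{\ast} = \arg\min_{N_s \subseteq N} \; \bigl(p(x) - p(x; N_s)\bigr)^2 + \lambda \, |N_s|

% Extension to a set of images X (averaging over X is an assumption)
N_s^{\ast} = \arg\min_{N_s \subseteq N} \; \frac{1}{|X|} \sum_{x \in X} \bigl(p(x) - p(x; N_s)\bigr)^2 + \lambda \, |N_s|
```

The first term keeps the original prediction; the second pushes the subset to be small.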

15 . Datapath Extraction - Solution
• Directly solving: time-consuming
  • NP-complete
  • Large search space due to the large number of layers and neurons in a CNN
• Quadratic approximation + divide-and-conquer-based search space reduction
• Result: an accurate approximation in a smaller search space

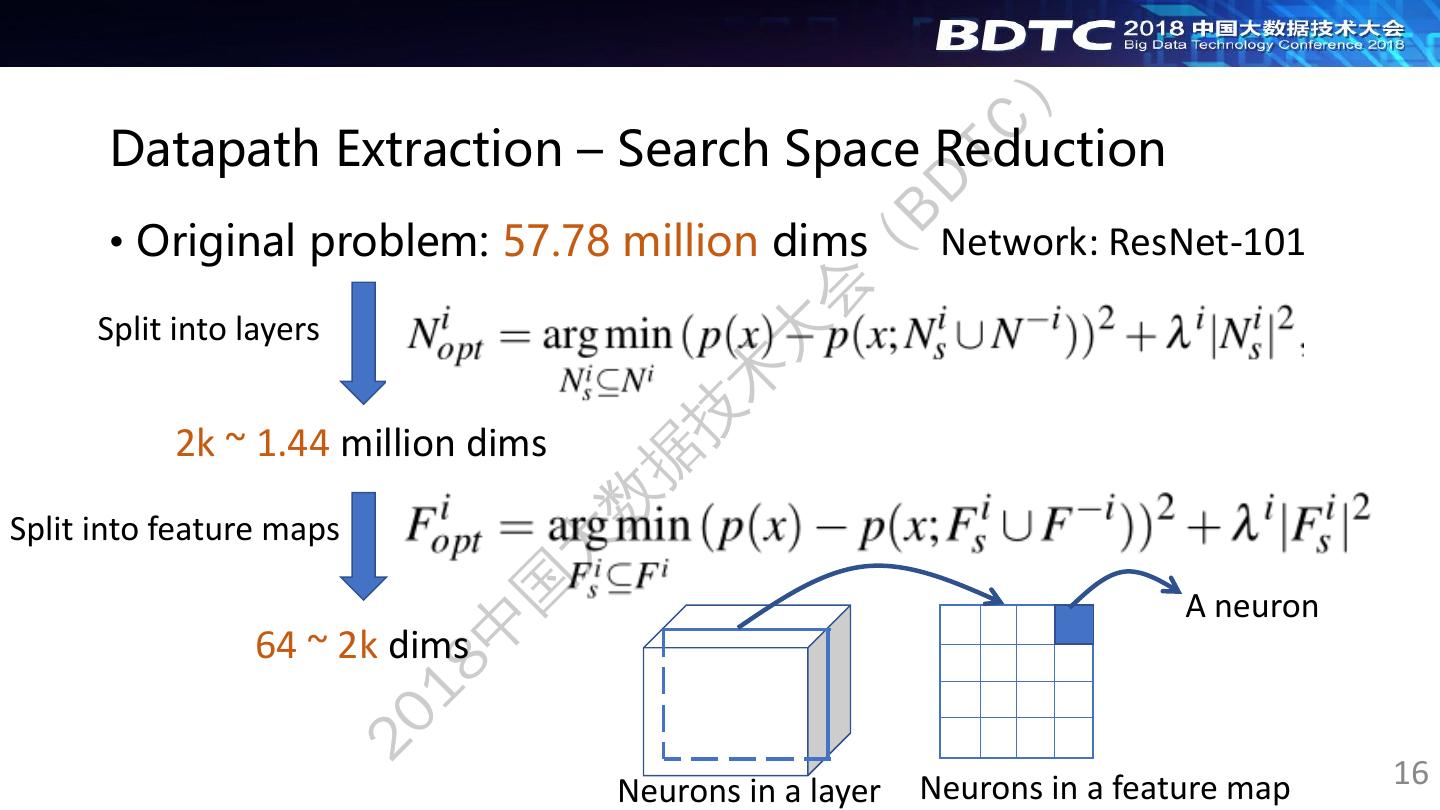

16 . Datapath Extraction – Search Space Reduction
• Network: ResNet-101
• Original problem: 57.78 million dims
• Split into layers (processing layer by layer): 2k ~ 1.44 million dims
• Split into feature maps (aggregate neurons into feature maps): 64 ~ 2k dims
Figure labels: A neuron, Neurons in a layer, Neurons in a feature map
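The dimension counts follow from how many keep/drop decisions each formulation needs. The sketch below assumes a convolutional layer's activations have shape (channels, height, width). The two layer shapes are hypothetical, picked so the counts land on the endpoints of the slide's ranges, and are not claimed to be actual ResNet-101 layers.

```python
import numpy as np

def selection_dims(shape):
    """Return (#neuron-level, #feature-map-level) decision variables."""
    channels, height, width = shape
    return channels * height * width, channels

def expand_map_mask(map_mask, shape):
    """Broadcast one keep/drop flag per feature map to every neuron in it."""
    return np.broadcast_to(map_mask[:, None, None], shape)

# Hypothetical layer shapes (channels, height, width).
layers = {"early": (64, 150, 150), "late": (2048, 1, 1)}
for name, shape in layers.items():
    neurons, maps = selection_dims(shape)
    print(f"{name}: {neurons} neuron dims -> {maps} feature-map dims")

# Keep only the first 8 feature maps of the hypothetical early layer.
shape = layers["early"]
map_mask = np.zeros(shape[0], dtype=bool)
map_mask[:8] = True
neuron_mask = expand_map_mask(map_mask, shape)
```

Deciding per feature map rather than per neuron trades granularity for a search space that is smaller by a factor of height × width in each layer.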

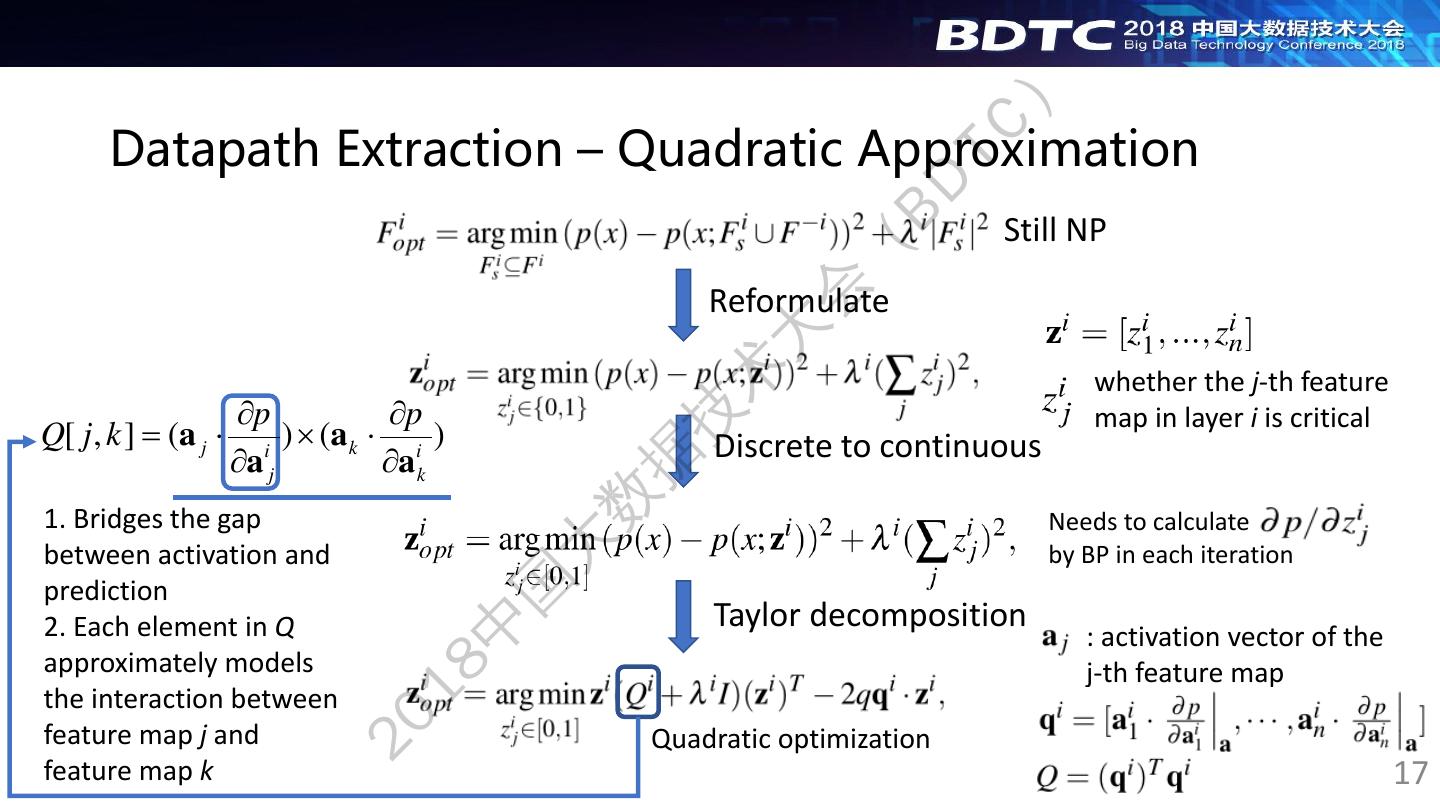

17 . Datapath Extraction – Quadratic Approximation
• Still NP
• Reformulate: use an indicator of whether the j-th feature map in layer i is critical
• Discrete to continuous
• Taylor decomposition
• Quadratic optimization with

$$Q[j,k] = \left(\frac{\partial p}{\partial a_j^i} \cdot a_j^i\right)\left(\frac{\partial p}{\partial a_k^i} \cdot a_k^i\right)$$

where $a_j^i$ is the activation vector of the j-th feature map. The gradient $\partial p / \partial a_j^i$ needs to be calculated by BP in each iteration.
1. Bridges the gap between activation and prediction
2. Each element in Q approximately models the interaction between feature map j and feature map k
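A minimal NumPy sketch of the quadratic form on this slide, assuming each entry of Q is the product of two gradient-times-activation terms and that the squared first-order Taylor estimate of the prediction change is the quantity being minimized. The toy prediction function `p`, the weights `w`, and the relaxed indicator `z` are illustrative assumptions; in a real network the gradients would come from backpropagation.

```python
import numpy as np

def p(acts, w):
    """Toy prediction: sigmoid of a weighted sum over all feature maps."""
    return 1.0 / (1.0 + np.exp(-np.sum(w * acts)))

def grad_p(acts, w):
    """Analytic gradient of p w.r.t. every activation (stand-in for BP)."""
    out = p(acts, w)
    return out * (1.0 - out) * w

def contribution_matrix(acts, grads):
    """Q[j, k] = (grad_j . a_j) * (grad_k . a_k) for feature maps j and k."""
    c = np.einsum("jd,jd->j", grads, acts)  # per-map first-order contribution
    return np.outer(c, c), c

rng = np.random.default_rng(0)
acts = rng.random((4, 5))      # 4 feature maps with 5 neurons each
w = rng.normal(size=(4, 5))    # hypothetical weights of the toy model
Q, c = contribution_matrix(acts, grad_p(acts, w))

# Relaxed indicator: z[j] in [0, 1]; 1 keeps feature map j, 0 drops it.
z = np.array([1.0, 0.5, 0.0, 1.0])
linear_change = np.sum((1.0 - z) * c)         # first-order estimate of p(x) - p(x; z)
quadratic_cost = (1.0 - z) @ Q @ (1.0 - z)    # the same estimate, squared
```

Because Q is an outer product of per-map contributions, the quadratic cost equals the square of the first-order change, which is how the activations get tied to their effect on the prediction.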

18 . Datapath Extraction - Motivation
• Current method
  • Most activated neurons
• Problem
  • Misleading results when a highly recognizable secondary object exists
• Reason
  • Neurons have complex interactions
  • Gap between activation and prediction
Figure labels: Most activated, Learned feature, Activations, Neuron 1, Neuron 2

19 .

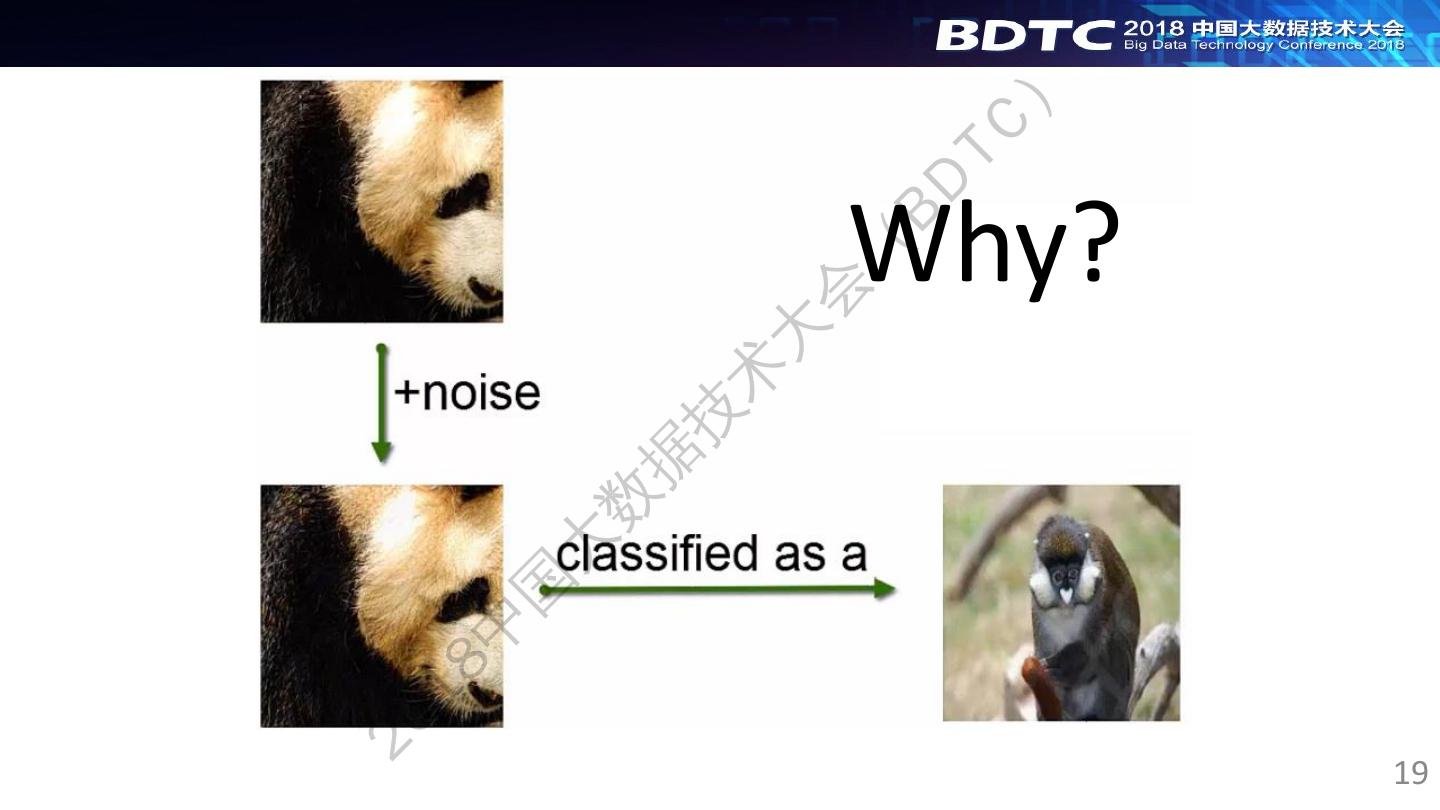

20 . Why?

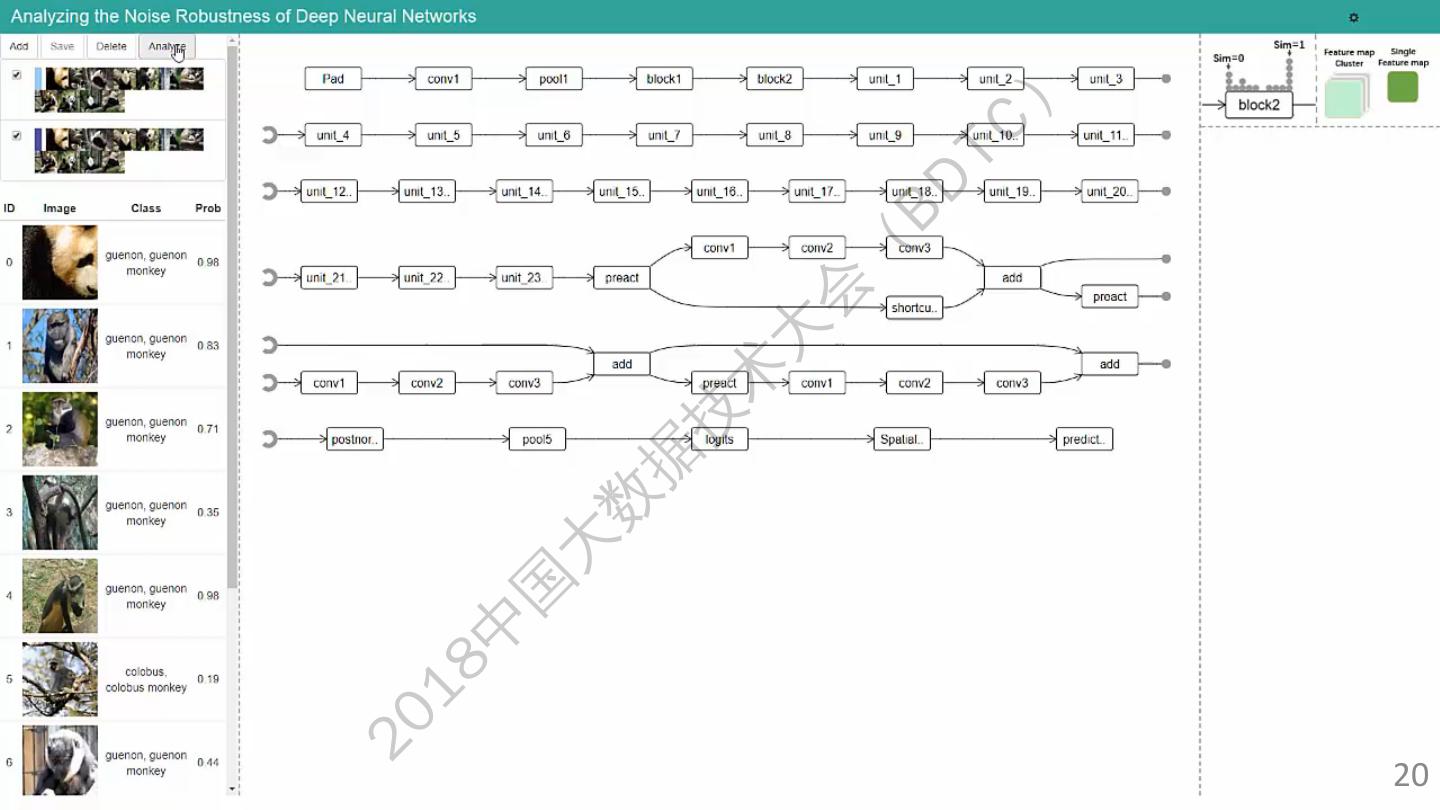

21 .

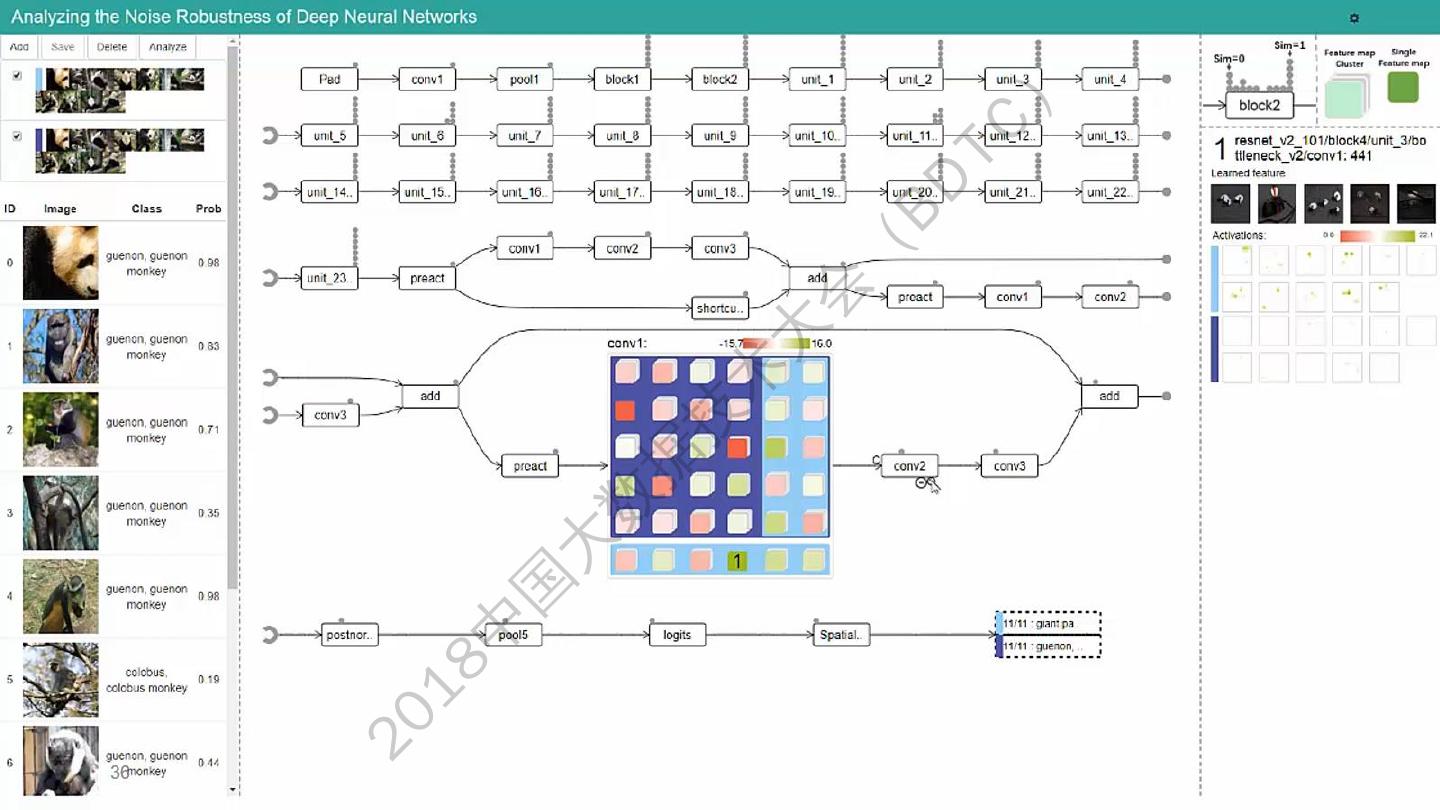

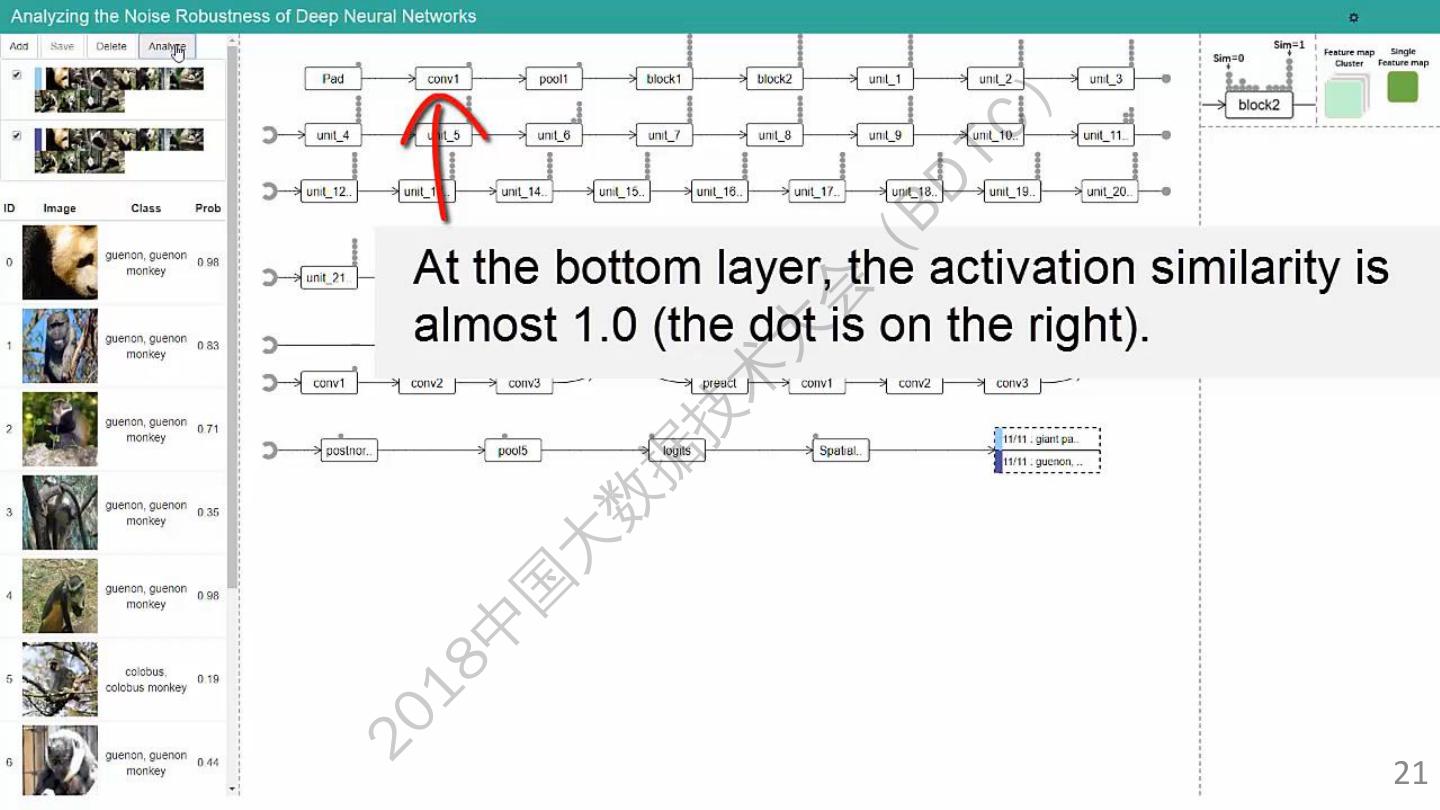

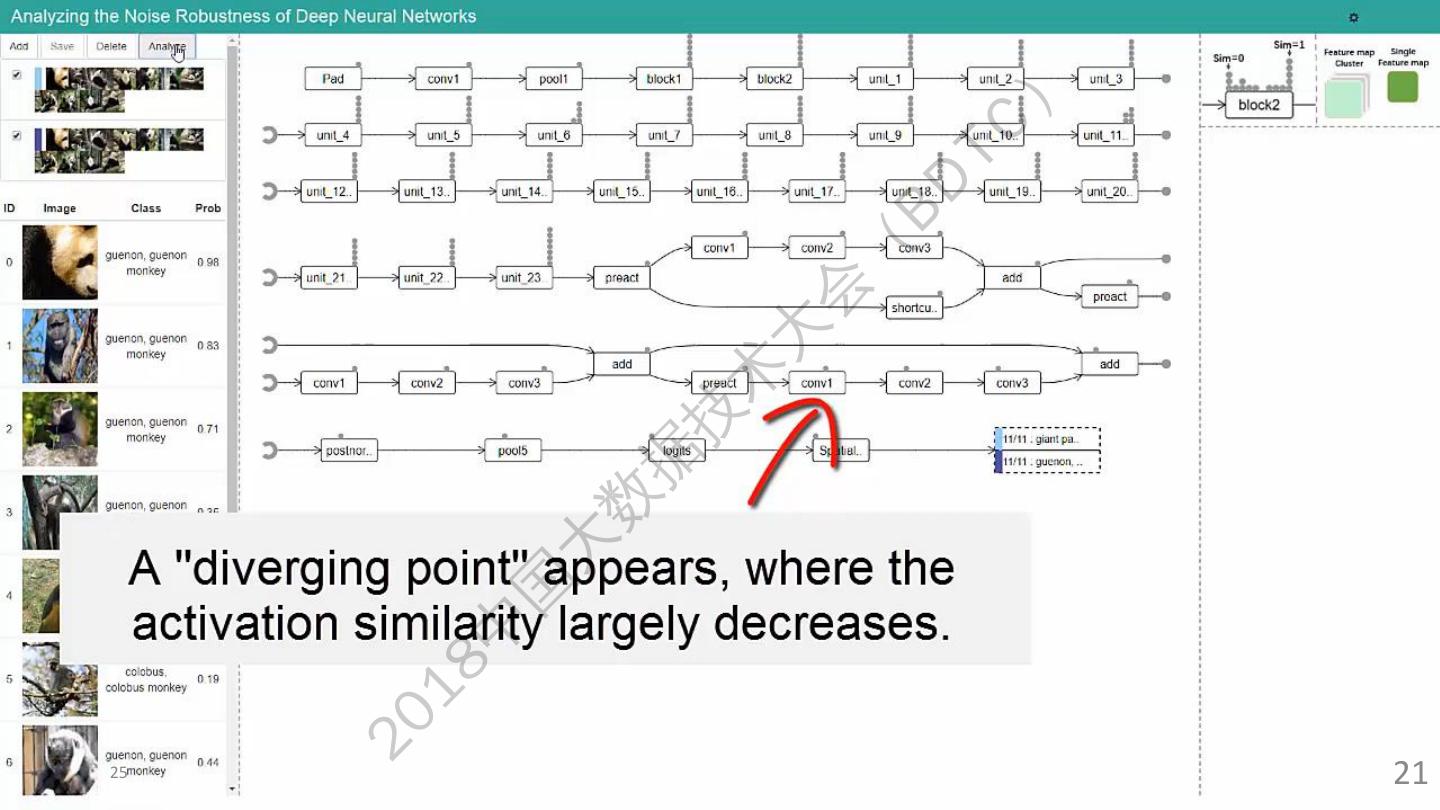

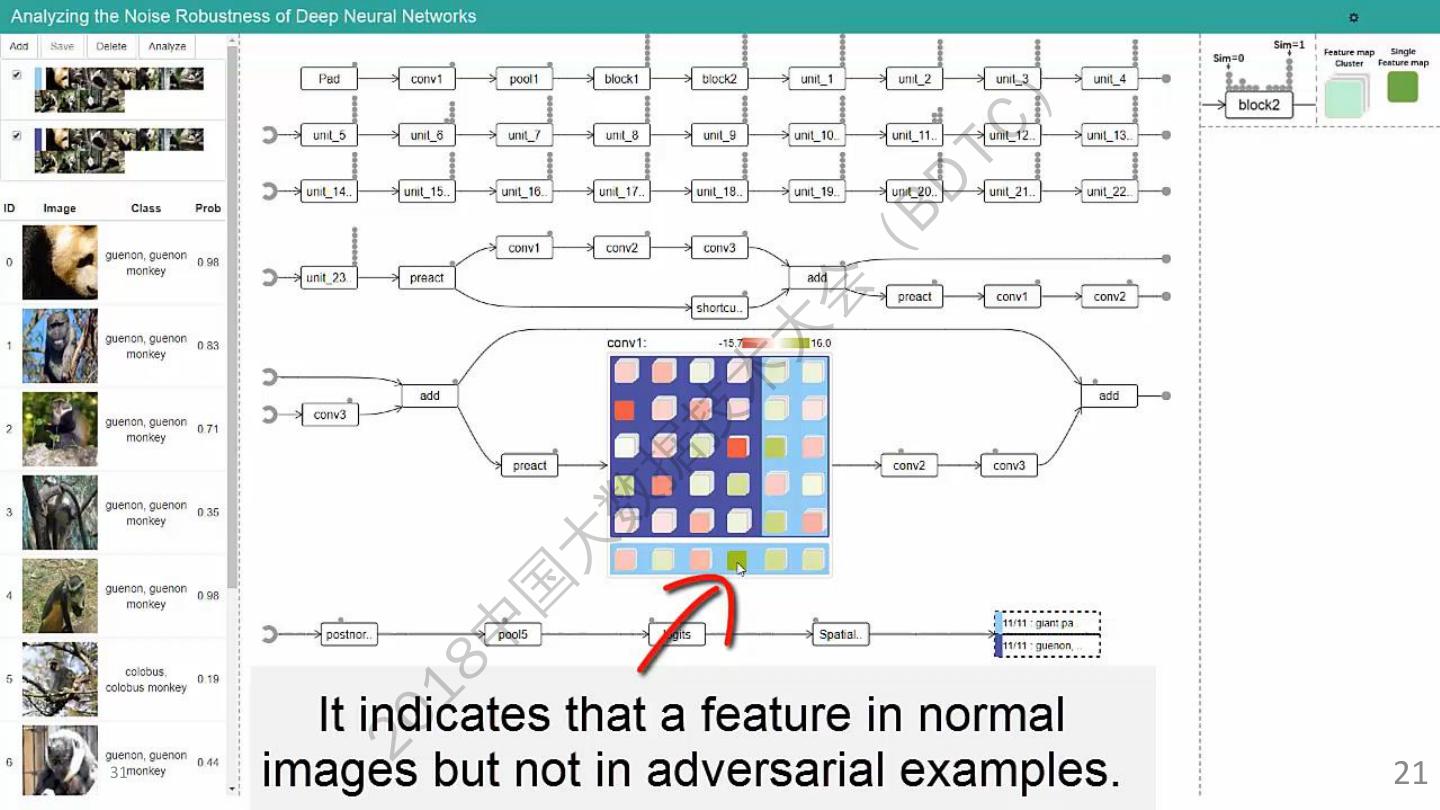

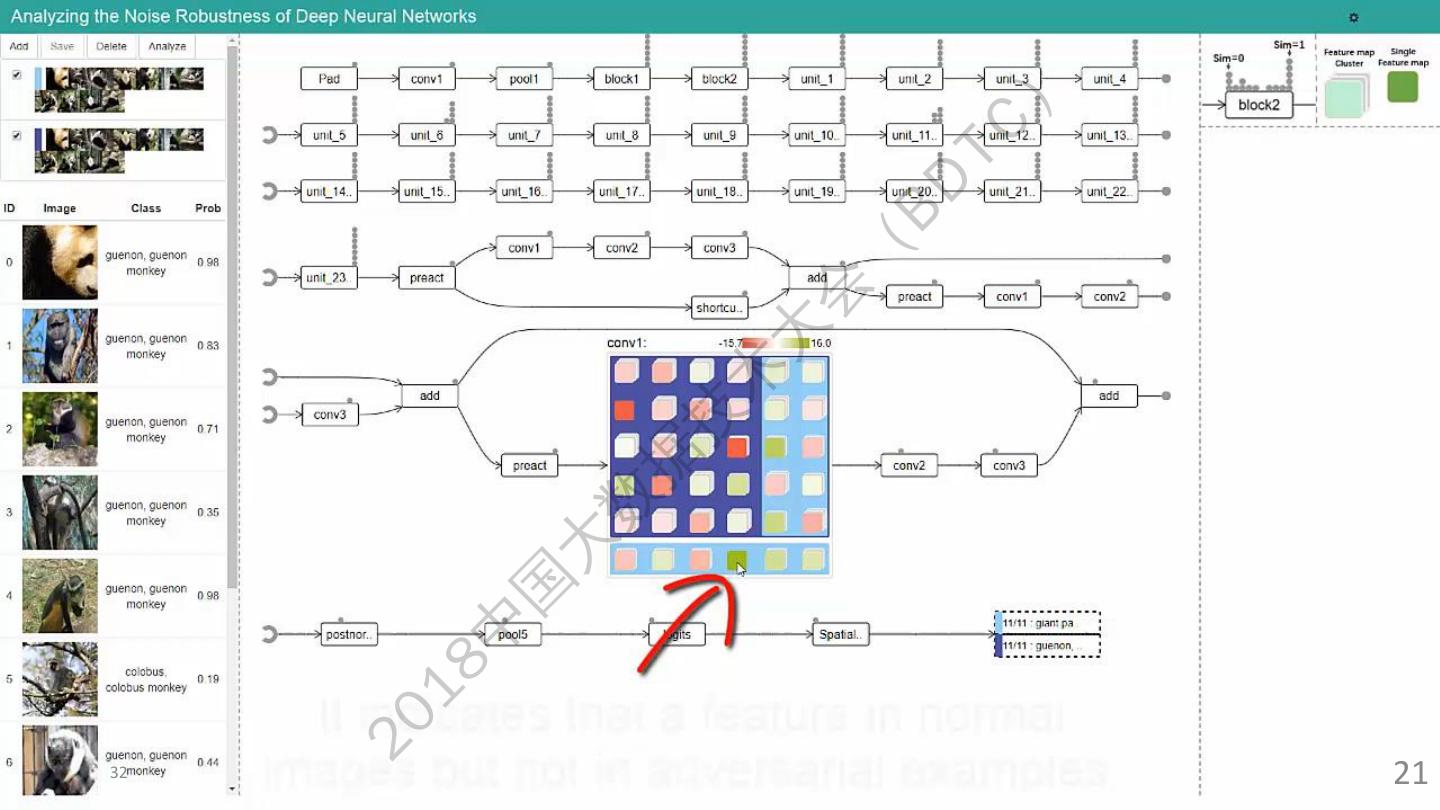

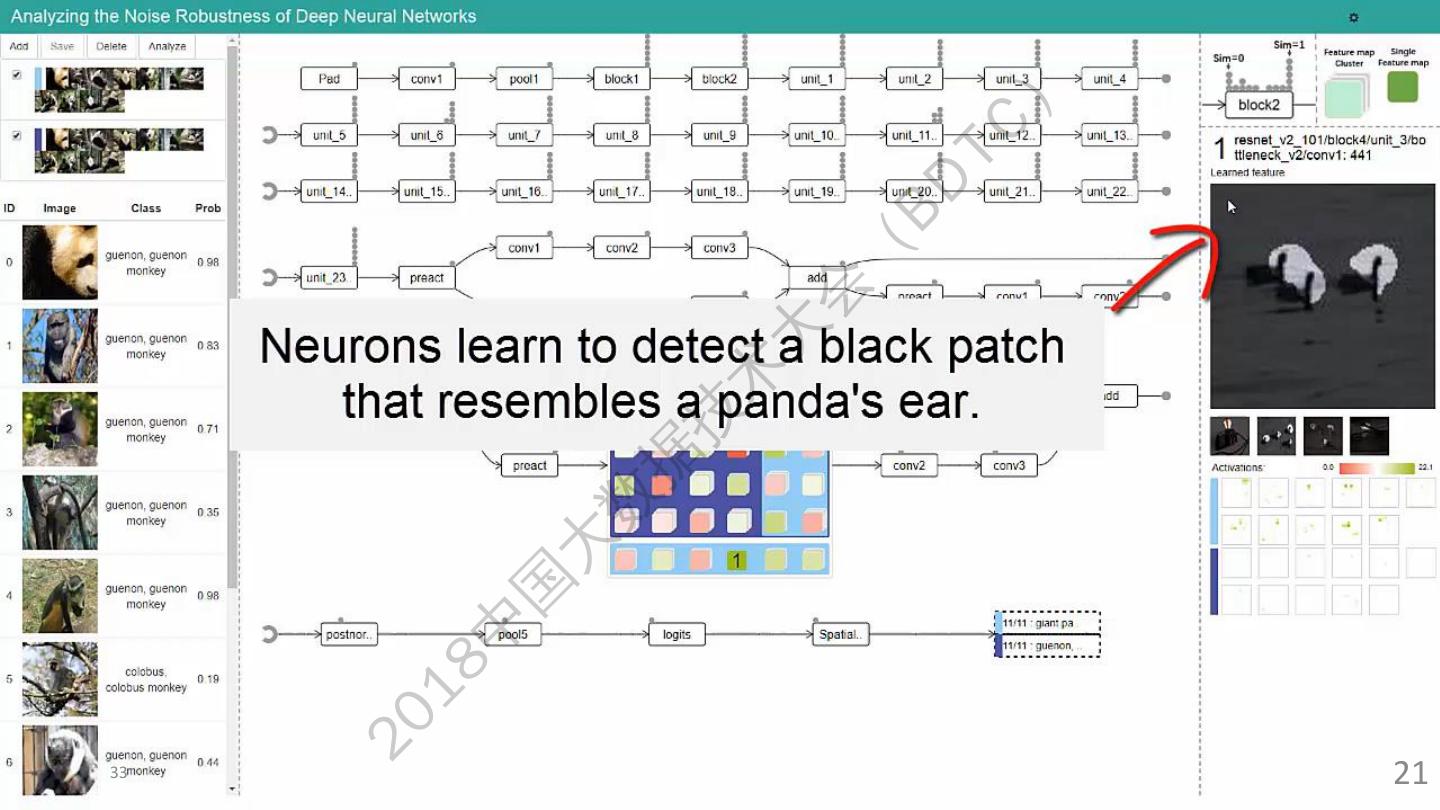

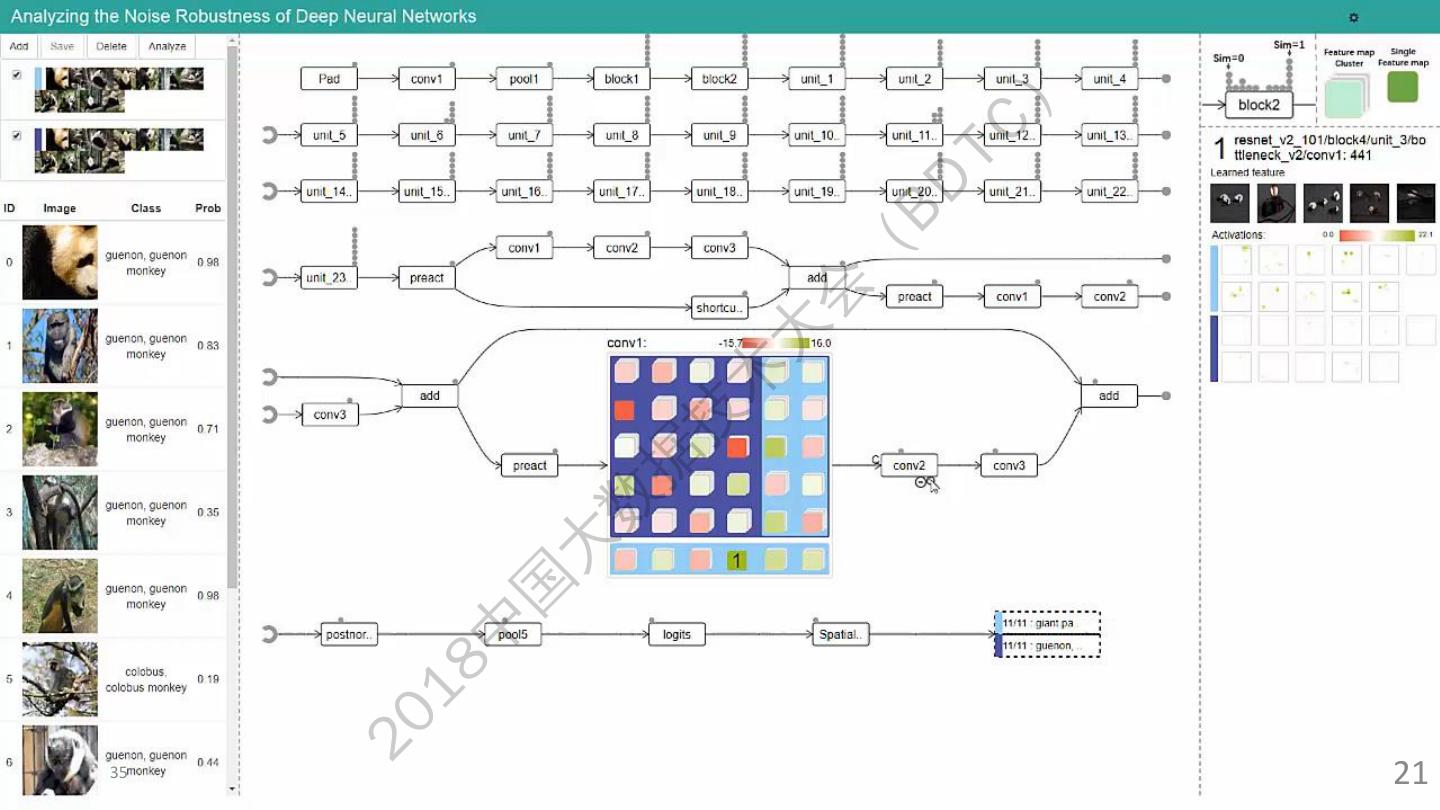

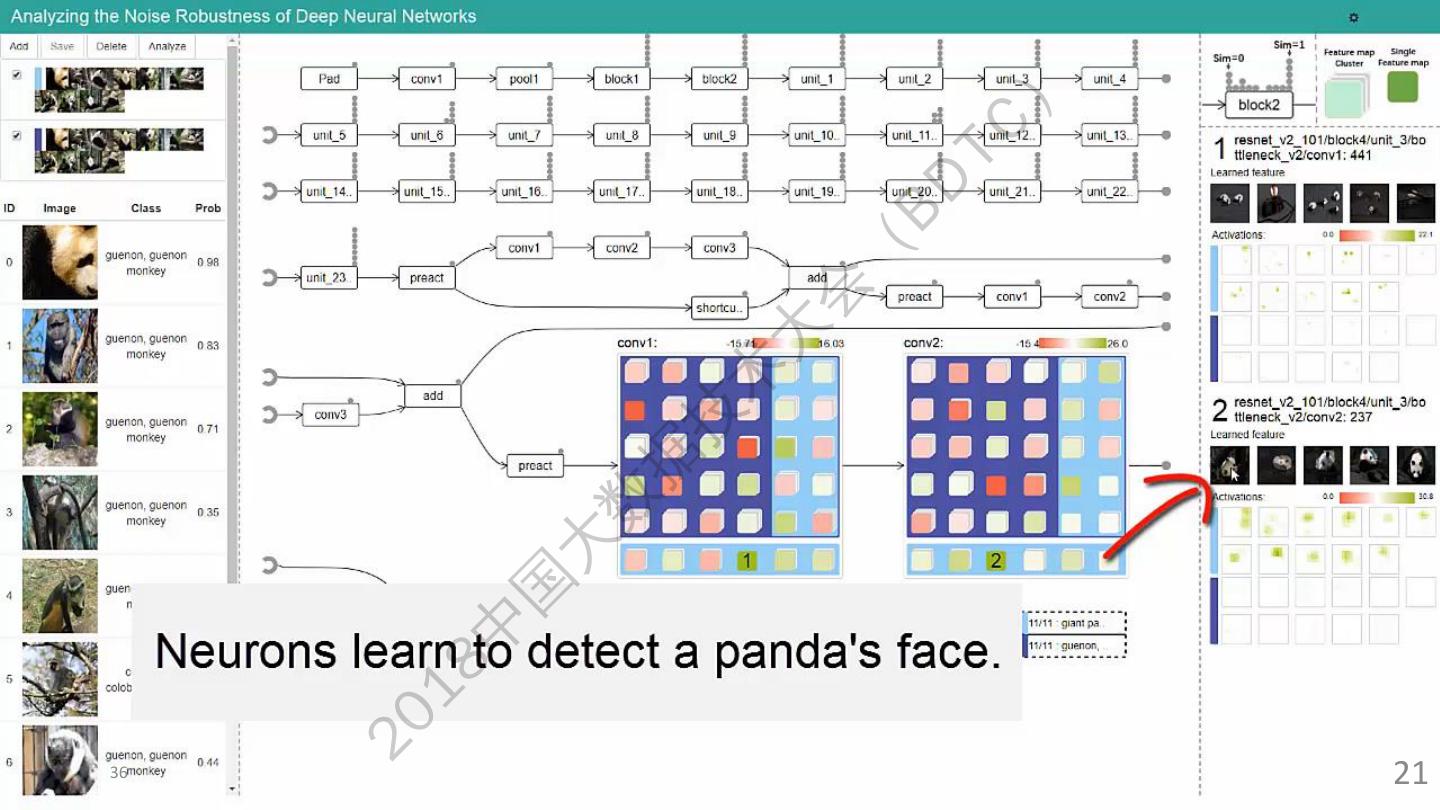

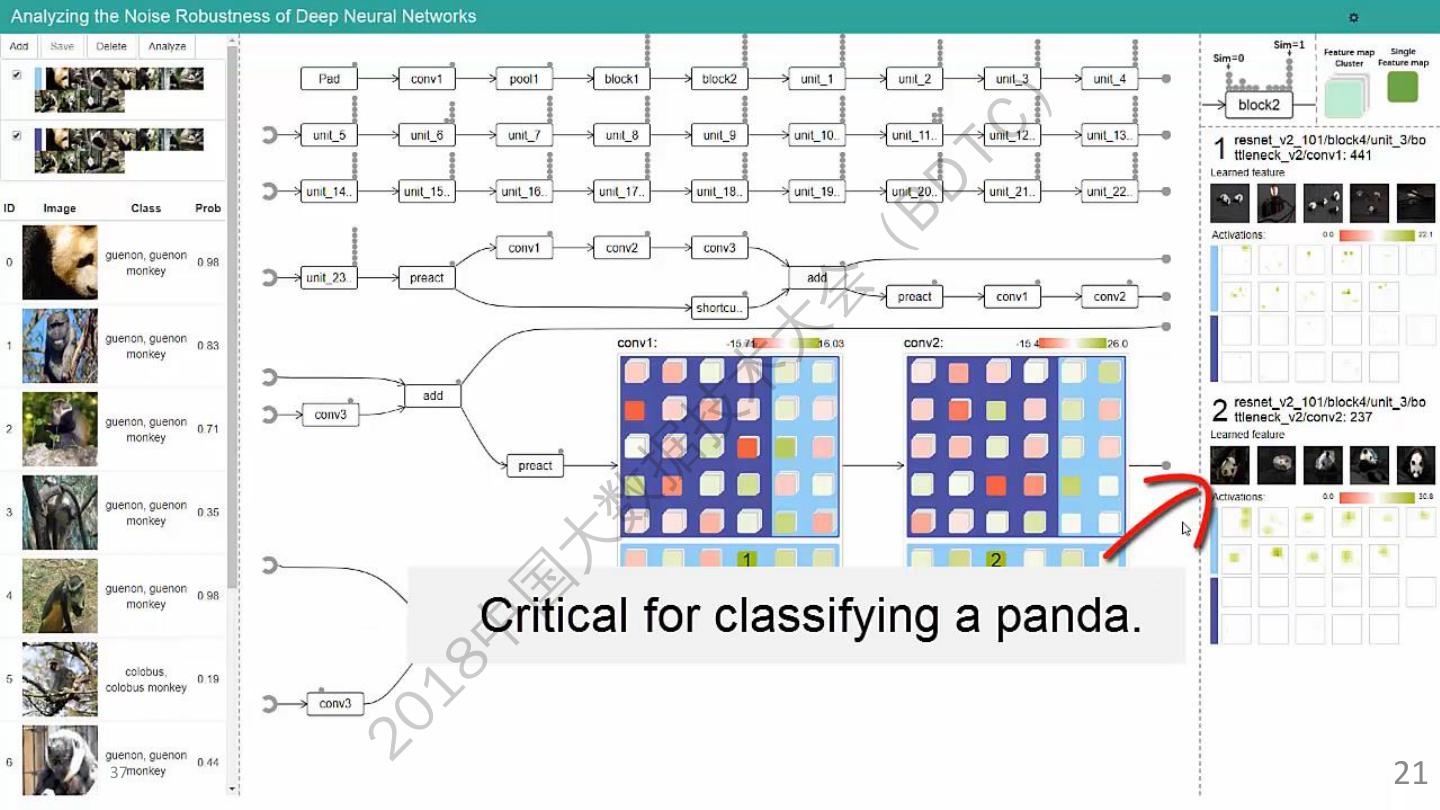

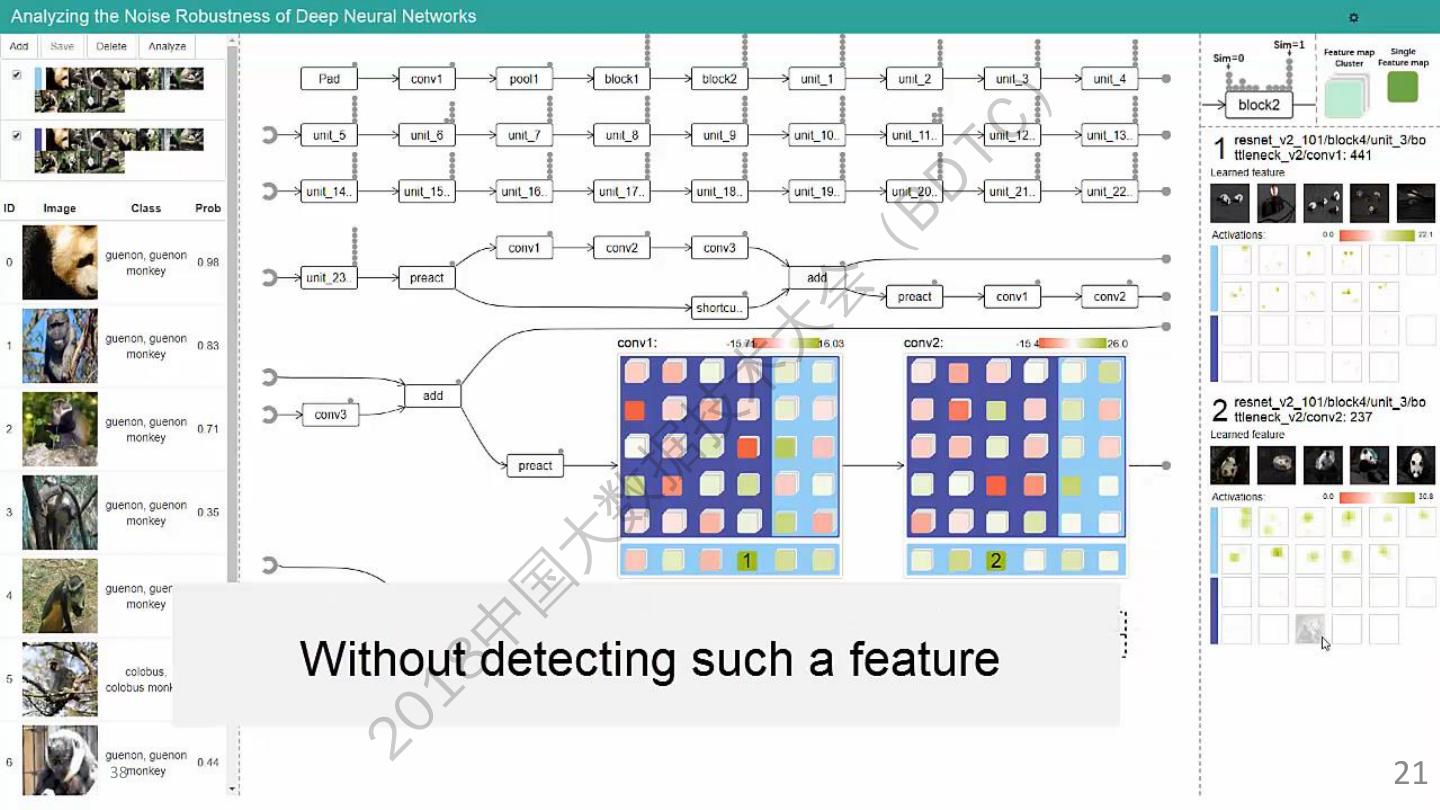

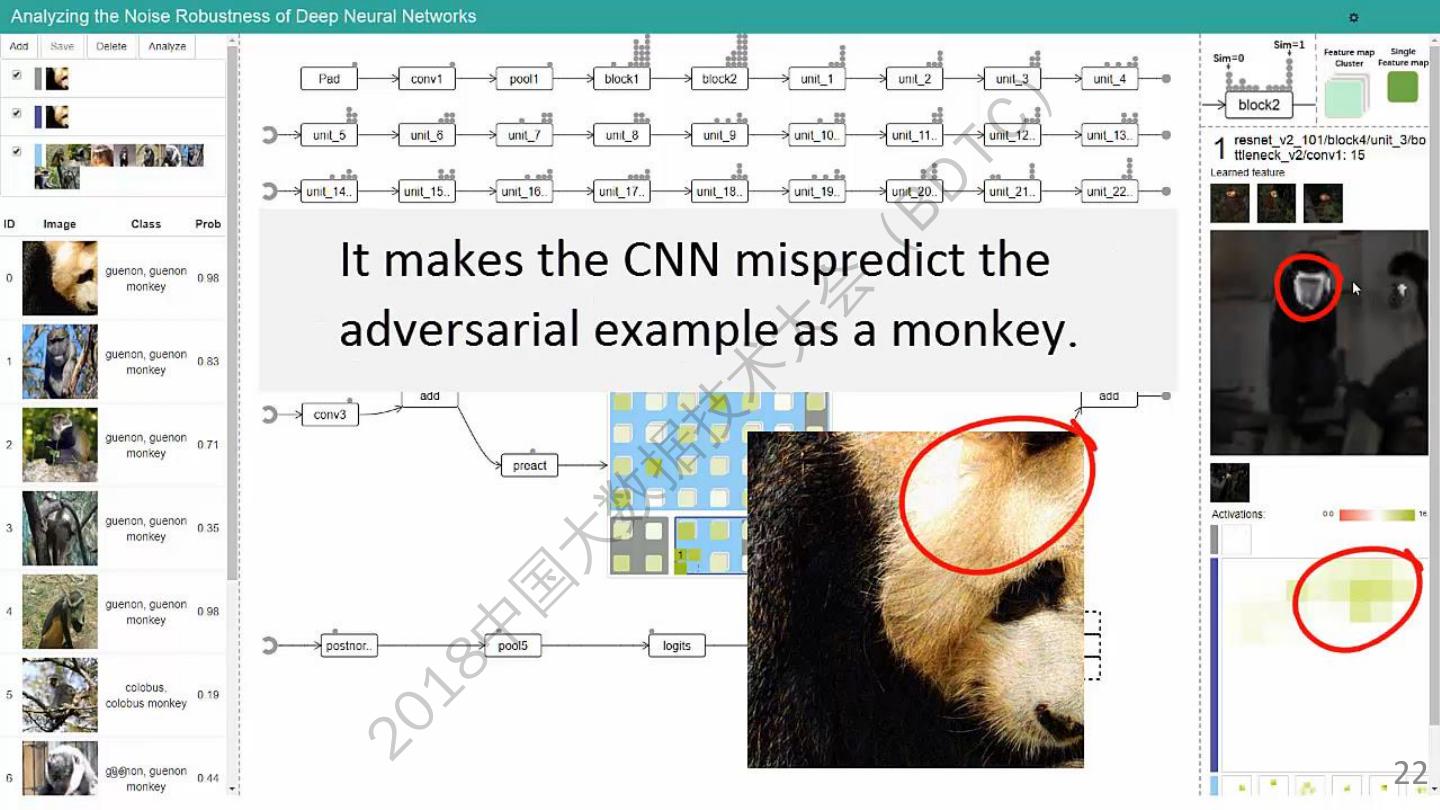

22 .

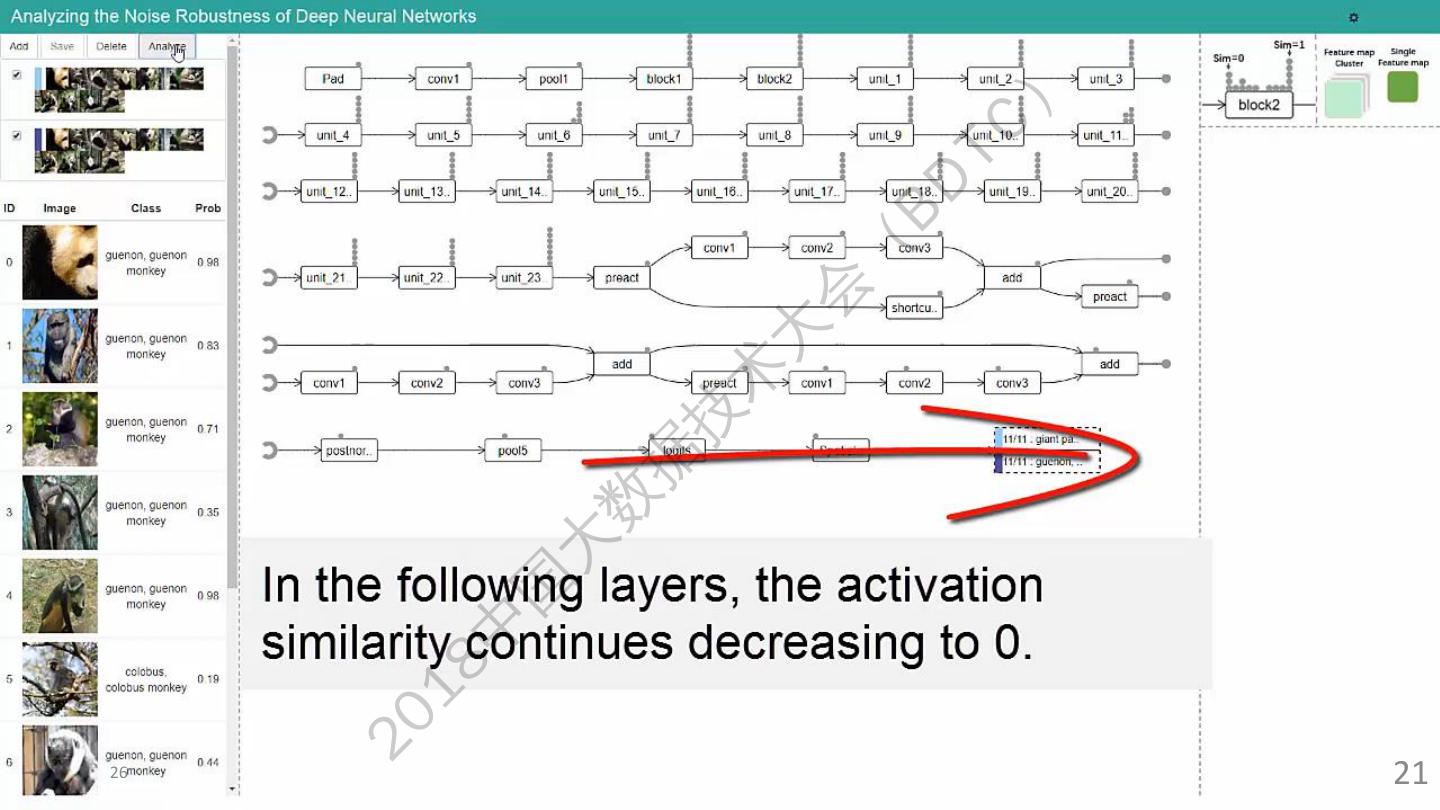

23 .

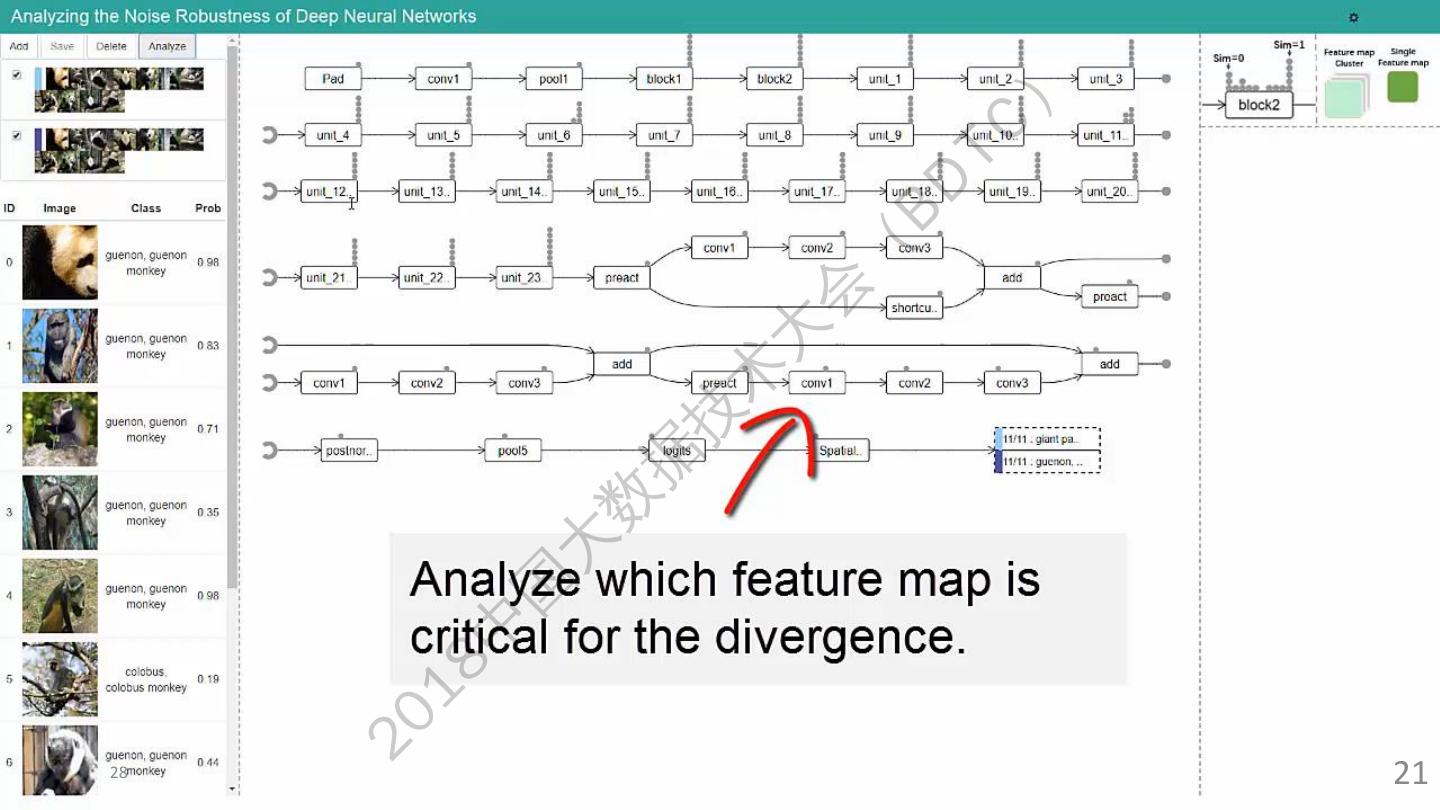

24 .

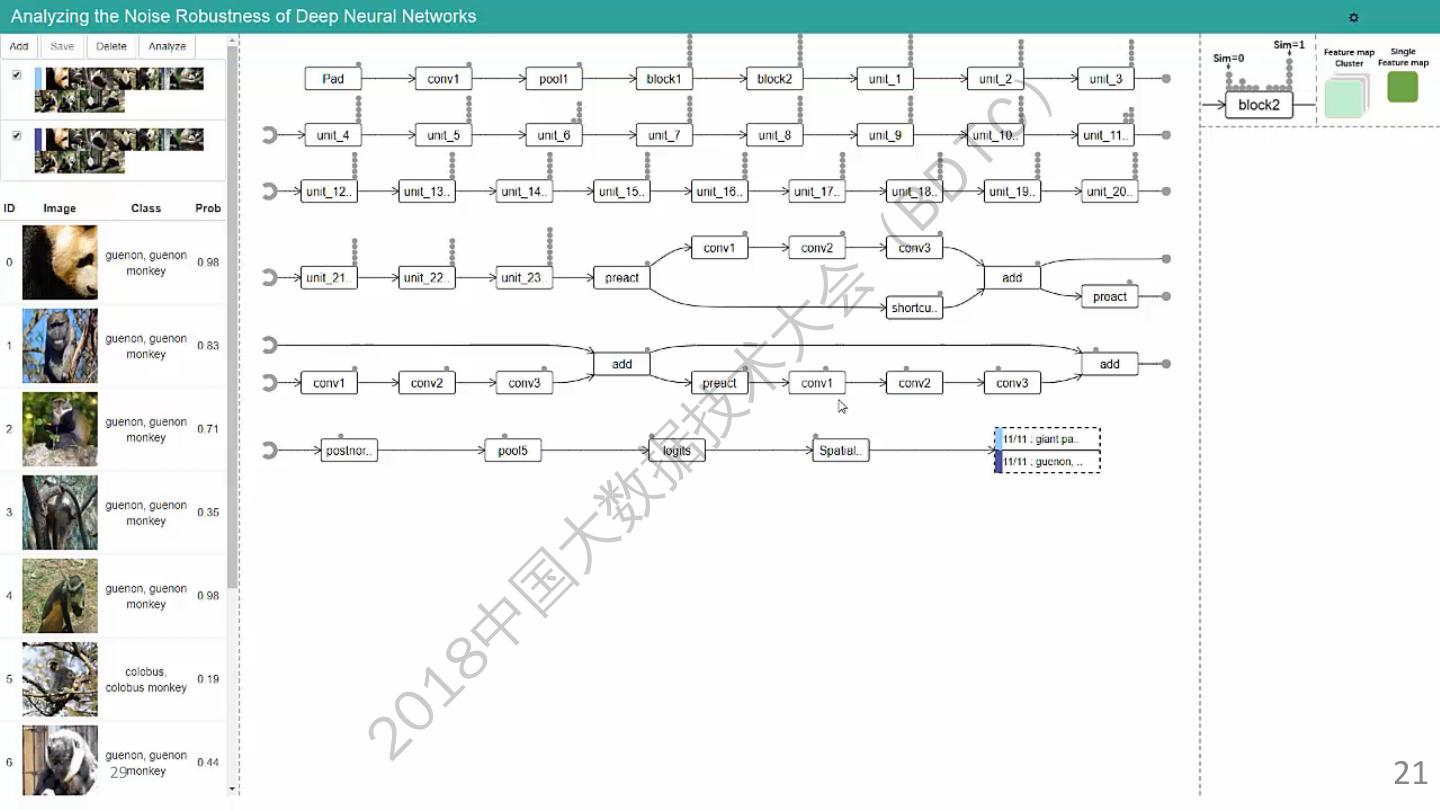
