- 快召唤伙伴们来围观吧

- 微博 QQ QQ空间 贴吧

- 文档嵌入链接

- 复制

- 微信扫一扫分享

- 已成功复制到剪贴板

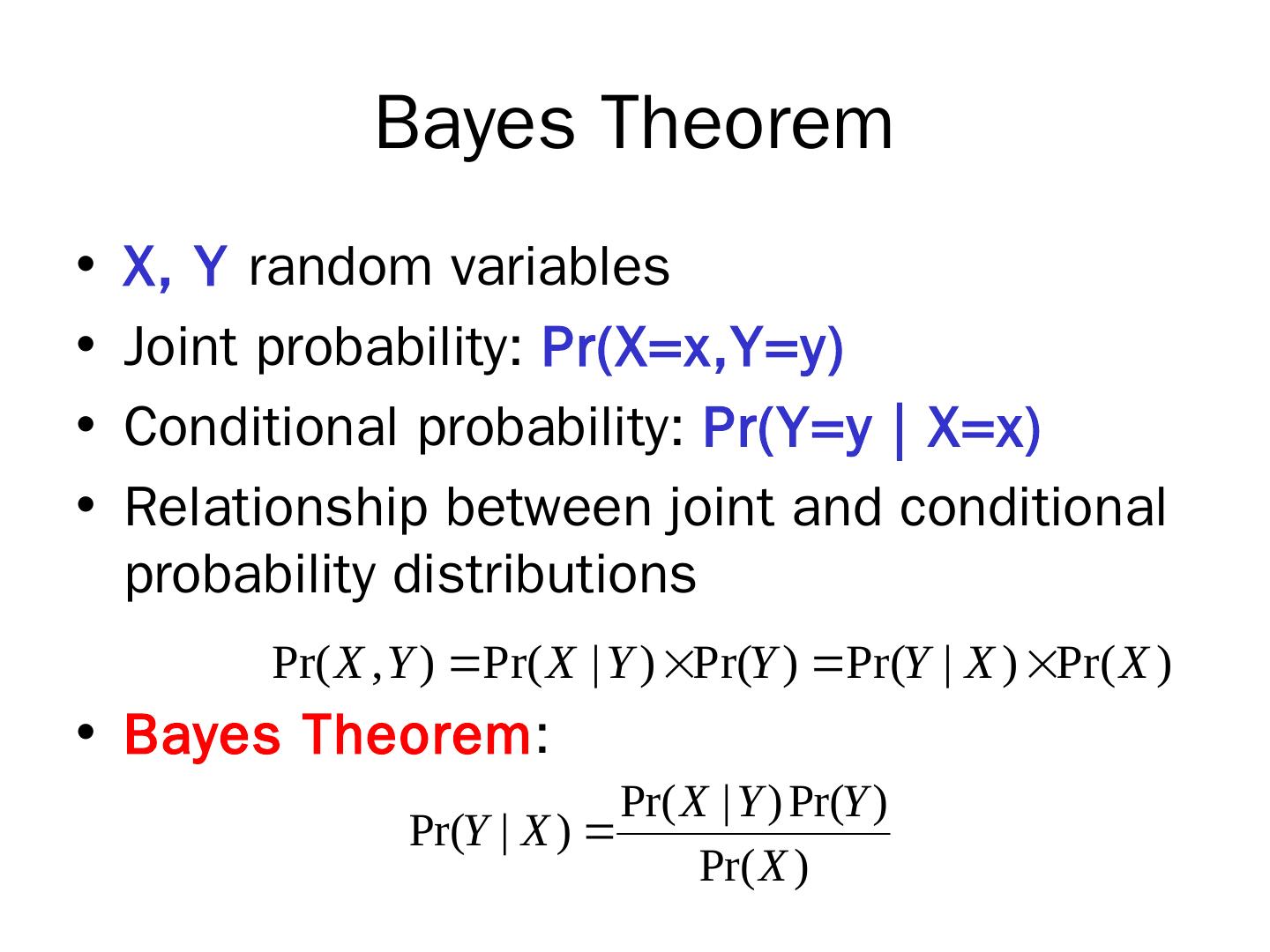

Bayes Theorem

展开查看详情

1 .Lecture outline Classification Naïve Bayes c lassifier Nearest-neighbor classifier

2 .Eager vs Lazy learners Eager learners: learn the model as soon as the training data becomes available Lazy learners: delay model-building until testing data needs to be classified Rote classifier: memorizes the entire training data

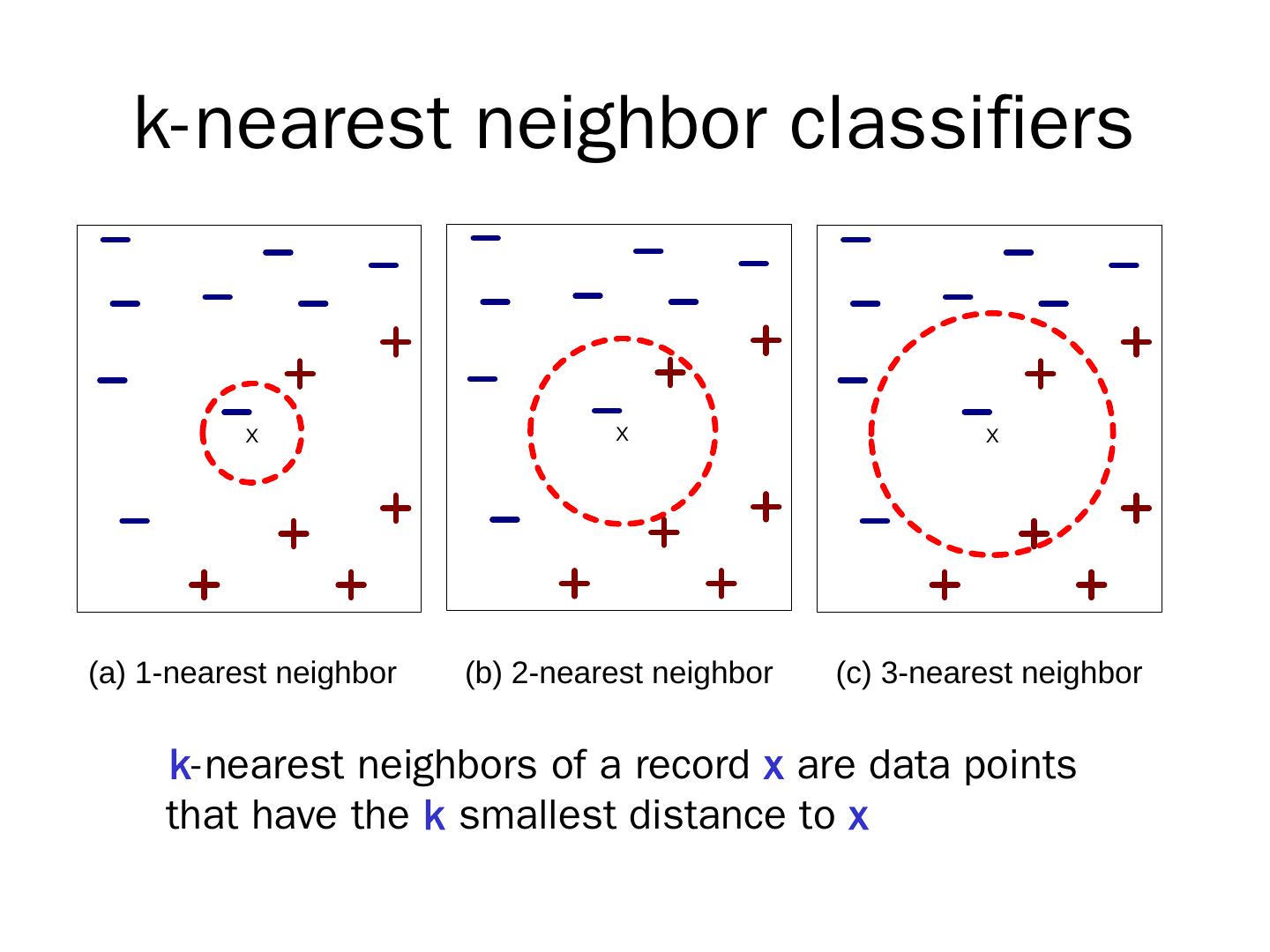

3 .k-nearest neighbor classifiers k -nearest neighbors of a record x are data points that have the k smallest distance to x

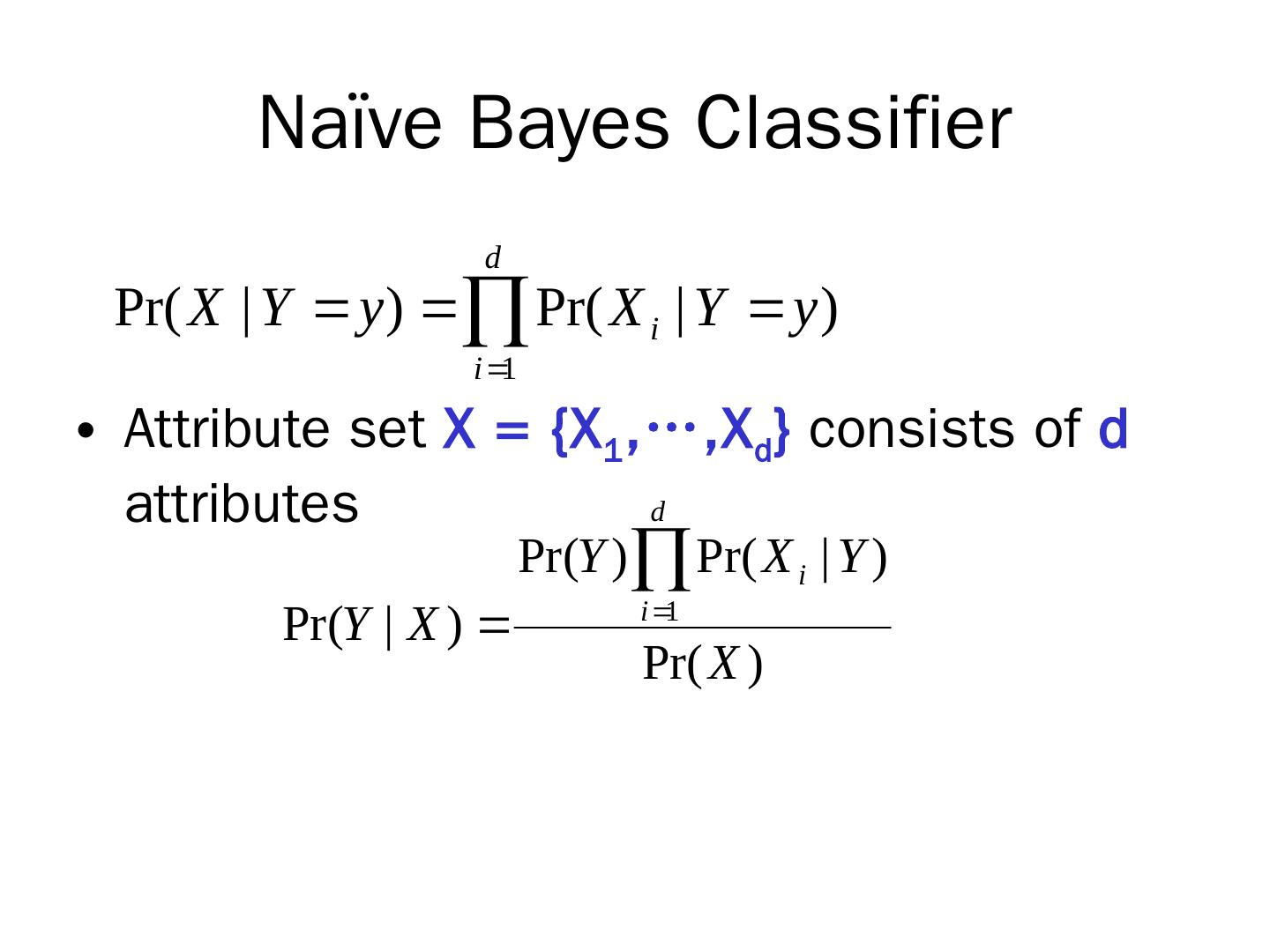

4 .k -nearest neighbor classification Given a data record x find its k closest points Closeness: Euclidean, Hamming, Jaccard distance Determine the class of x based on the classes in the neighbor list Majority vote Weigh the vote according to distance e.g., weight factor, w = 1/d 2 Probabilistic voting

5 .Characteristics of nearest-neighbor classifiers Instance of instance-based learning No model building (lazy learners) Lazy learners: computational time in classification Eager learners: computational time in model building Decision trees try to find global models, k-NN take into account local information K-NN classifiers depend a lot on the choice of proximity measure

6 .Bayes Theorem X, Y random variables Joint probability: Pr(X= x,Y =y) Conditional probability: Pr(Y=y | X=x) Relationship between joint and conditional probability distributions Bayes Theorem :

7 .Bayes Theorem for Classification X : attribute set Y : class variable Y depends on X in a non- determininstic way We can capture this dependence using Pr( Y |X) : Posterior probability vs Pr( Y ) : Prior probability

8 .Building the Classifier Training phase: Learning the posterior probabilities Pr(Y|X) for every combination of X and Y based on training data Test phase: For test record X’ , compute the class Y’ that maximizes the posterior probability Pr(Y’|X’)

9 .Bayes Classification: Example X’=(Home Owner=No, Marital Status=Married, AnnualIncome =120K) Compute: Pr( Yes|X ’) , Pr( No|X ’) pick No or Yes with max Prob. How can we compute these probabilities??

10 .Computing posterior probabilities Bayes Theorem P(X) is constant and can be ignored P(Y): estimated from training data; compute the fraction of training records in each class P(X|Y) ?

11 .Naïve Bayes Classifier Attribute set X = {X 1 ,…, X d } consists of d attributes Conditional independence: X conditionally independent of Y , given X : Pr(X|Y,Z) = Pr(X|Z) Pr(X,Y|Z) = Pr(X|Z) xPr (Y|Z)

12 .Naïve Bayes Classifier Attribute set X = {X 1 ,…, X d } consists of d attributes

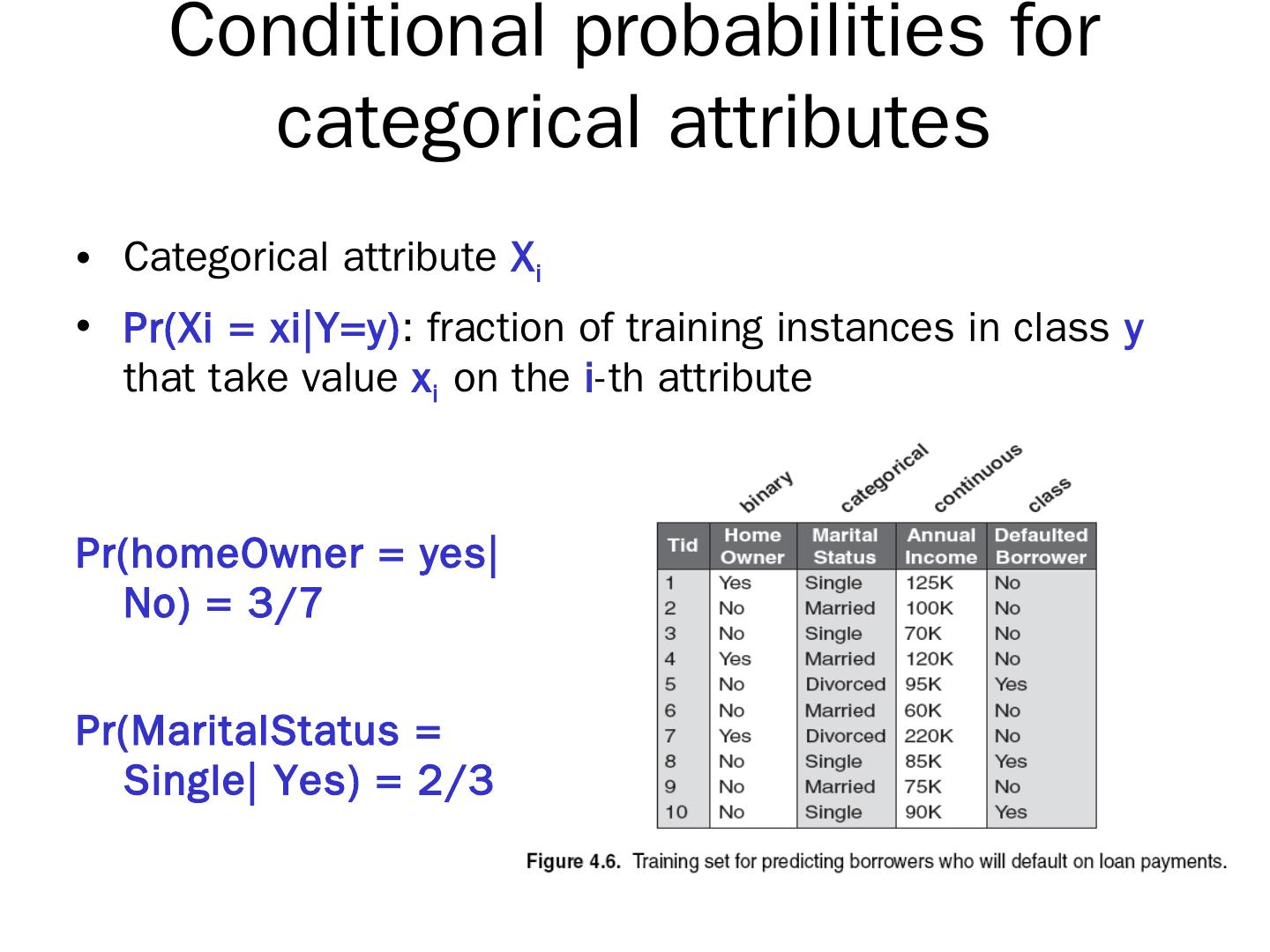

13 .Conditional probabilities for categorical attributes Categorical attribute X i Pr(Xi = xi|Y =y) : fraction of training instances in class y that take value x i on the i -th attribute Pr( homeOwner = yes|No ) = 3/7 Pr( MaritalStatus = Single| Yes ) = 2/3

14 .Estimating conditional probabilities for continuous attributes? Discretization ? How can we discretize ?

15 .Naïve Bayes Classifier: Example X’ = ( HomeOwner = No, MaritalStatus = Married, Income=120K) Need to compute Pr(Y|X’) or Pr(Y) xPr (X’|Y) But Pr(X’|Y) is Y = No : Pr(HO= No|No ) xPr (MS= Married|No ) xPr (Inc=120K|No) = 4/7x4/7x0.0072 = 0.0024 Y=Yes : Pr(HO= No|Yes ) xPr (MS= Married|Yes ) xPr (Inc=120K|Yes) = 1x0x1.2x10 -9 = 0

16 .Naïve Bayes Classifier: Example X’ = ( HomeOwner = No, MaritalStatus = Married, Income=120K) Need to compute Pr(Y|X’) or Pr(Y) xPr (X’|Y) But Pr(X’|Y = Yes) is 0 ? Correction process: n c : number of training examples from class y j that take value x i n: total number of instances from class y j m: equivalent sample size (balance between prior and posterior) p: user-specified parameter (prior probability)

17 .Characteristics of Naïve Bayes Classifier Robust to isolated noise points noise points are averaged out Handles missing values Ignoring missing-value examples Robust to irrelevant attributes If X i is irrelevant, P( X i |Y ) becomes almost uniform Correlated attributes degrade the performance of NB classifier