- 快召唤伙伴们来围观吧

- 微博 QQ QQ空间 贴吧

- 文档嵌入链接

- 复制

- 微信扫一扫分享

- 已成功复制到剪贴板

Design and Evaluation of Main Memory Hash Join Algorithms

展开查看详情

1 . Design and Evaluation of Main Memory Hash Join Algorithms for Multi-core CPUs Spyros Blanas Yinan Li Jignesh M. Patel University of Wisconsin–Madison {sblanas, yinan, jignesh}@cs.wisc.edu ABSTRACT 1. INTRODUCTION The focus of this paper is on investigating efficient hash join Large scale multi-core processors are imminent. Modern algorithms for modern multi-core processors in main mem- processors today already have four or more cores, and for the ory environments. This paper dissects each internal phase past few years Intel has been introducing two more cores of a typical hash join algorithm and considers different al- per processor roughly every 15 months. At this rate, it ternatives for implementing each phase, producing a family is not hard to imagine running database management sys- of hash join algorithms. Then, we implement these main tems (DBMSs) on processors with hundreds of cores in the memory algorithms on two radically different modern multi- near future. In addition, memory prices are continuing to processor systems, and carefully examine the factors that drop. Today 1TB of memory costs as little as $25,000. Con- impact the performance of each method. sequently, many databases now either fit entirely in main Our analysis reveals some interesting results – a very sim- memory, or their working set is main memory resident. As ple hash join algorithm is very competitive to the other a result, many DBMSs are becoming CPU bound. more complex methods. This simple join algorithm builds a In this evolving architectural landscape, DBMSs have the shared hash table and does not partition the input relations. unique opportunity to leverage the inherent parallelism that Its simplicity implies that it requires fewer parameter set- is provided by the relational data model. Data is exposed tings, thereby making it far easier for query optimizers and by declarative query languages to user applications and the execution engines to use it in practice. Furthermore, the DBMS is free to choose its execution strategy. Coupled performance of this simple algorithm improves dramatically with the trend towards impending very large multi-cores, as the skew in the input data increases, and it quickly starts this implies that DBMSs must carefully rethink how they to outperform all other algorithms. Based on our results, can exploit the parallelism that is provided by the modern we propose that database implementers consider adding this multi-core processors, or DBMS performance will stall. simple join algorithm to their repertoire of main memory A natural question to ask then is whether there is anything join algorithms, or adapt their methods to mimic the strat- new here. Beginning about three decades ago, at the incep- egy employed by this algorithm, especially when joining in- tion of the field of parallel DBMSs, the database community puts with skewed data distributions. thoroughly examined how a DBMS can use various forms of parallelism. These forms of parallelism include pure shared- nothing, shared-memory, and shared disk architectures [17]. Categories and Subject Descriptors If the modern multi-core architectures resemble any of these H.2.4. [Database Management]: Systems—Query pro- architectural templates, then we can simply adopt the meth- cessing, Relational databases ods that have already been designed. In fact, to a large extent this is the approach that DBMSs General Terms have haven taken towards dealing with multi-core machines. Many commercial DBMSs simply treat a multi-core proces- Algorithms, Design, Performance sor as a symmetric multi-processor (SMP) machine, lever- aging previous work that was done by the DBMS vendors Keywords in reaction to the increasing popularity of SMP machines hash join, multi-core, main memory decades ago. These methods break up the task of a single operation, such as an equijoin, into disjoint parts and allow each processor (in an SMP box) to work on each part in- dependently. At a high-level, these methods resemble vari- ations of query processing techniques that were developed for parallel shared-nothing architectures [6], but adapted Permission to make digital or hard copies of all or part of this work for for SMP machines. In most commercial DBMSs, this ap- personal or classroom use is granted without fee provided that copies are proach is reflected across the entire design process, ranging not made or distributed for profit or commercial advantage and that copies from system internals (join processing, for example) to their bear this notice and the full citation on the first page. To copy otherwise, to republish, to post on servers or to redistribute to lists, requires prior specific pricing model, which is frequently done by scaling the SMP permission and/or a fee. pricing model. On the other hand, open-source DBMSs have SIGMOD’11, June 12–16, 2011, Athens, Greece. Copyright 2011 ACM 978-1-4503-0661-4/11/06 ...$10.00. 37

2 .largely ignored multi-core processing and generally dedicate Finally, we show that the simple “no-partitioning” hash a single thread/process to each query. join algorithm takes advantage of intrinsic hardware opti- The design space for modern high performance main mem- mizations to handle skew. As a result, this simple hash join ory join algorithms has two extremes. One extreme of this technique often benefits from skew and its relative perfor- design space focuses on minimizing the number of proces- mance increases as the skew increases! This property is a sor cache misses. The radix-based hash join algorithm [2] is big advancement over the state-of-the-art methods, as it is an example of a method in this design class. The other ex- important to have methods that can gracefully handle skew treme is to focus on minimizing processor synchronization in practice [8]. costs. In this paper we propose a “no partitioning” hash The remainder of this paper is organized as follows: The join algorithm that does not partition the input relations to next section covers background information. The hash join embody an example of a method in this later design space. variants are presented in Section 3. Experimental results are A crucial question that we ask and answer in this paper described in Section 4, and related work is discussed in Sec- is what is the impact of these two extreme design points in tion 5. Finally, Section 6 contains our concluding remarks. modern multi-core processors for main memory hash join al- gorithms. A perhaps surprising answer is that for modern 2. THE MULTI-CORE LANDSCAPE multi-core architectures, in many cases the right approach is In the last few years alone, more than a dozen different to focus on reducing the computation and synchronization multi-core CPU families have been introduced by CPU ven- costs, as modern processors are very effective in hiding cache dors. These new CPUs have ranged from powerful dual-CPU miss latencies via simultaneous multi-threading. For exam- systems on the same die to prototype systems of hundreds ple, in our experiments, the “no partitioning” hash join algo- of simple RISC cores. rithm far outperforms the radix join algorithm when there This new level of integration has lead to architectural is skew in the data (which is often the case in practice), even changes with deep impact on algorithm design. Although while it incurs many more processor cache and TLB misses. the first multi-core CPUs had dedicated caches for each core, Even with uniform data, the radix join algorithm only out- we now see a shift towards more sharing at the lower levels performs the “no partitioning” algorithm on a modern Intel of the cache hierarchy and consequently the need for access Xeon when the parameters for the radix join algorithm are arbitration to shared caches within the chip. A shared cache set at or near their optimal setting. In contrast, the non- means better single-threaded performance, as one core can partitioned algorithm is “parameter-free”, which is another utilize the whole cache, and more opportunities for sharing important practical advantage. among cores. However, shared caches also increase conflict Reflecting on the previous work in this area, one can ob- cache misses due to false sharing, and may increase capacity serve that the database community has focused on optimiz- cache misses, if the cache sizes don’t increase proportionally ing query processing methods to reduce the number of pro- to the number of cores. cessor cache and TLB misses. We hope that this paper opens One idea that is employed to combat the diminishing re- up a new discussion on the entire design space for multi-core turns of instruction-level parallelism is simultaneous multi- query processing techniques, and incites a similar examina- threading (SMT). Multi-threading attempts to find inde- tion of other aspects of query processing beyond the single pendent instructions across different threads of execution, hash join operation that we discuss in this paper. instead of detecting independent instructions in the same This paper makes three main contributions. First, we sys- thread. This way, the CPU will schedule instructions from tematically examine the design choices available for each in- each thread and achieve better overall utilization, increasing ternal phase of a canonical main memory hash join algorithm throughput at the expense of per-thread latency. – namely, the partition, build, and probe phases – and enu- We briefly consider two modern architectures that we sub- merate a number of possible multi-core hash join algorithms sequently use for evaluation. At one end of the spectrum, based on different choices made in each of these phases. We the Intel Nehalem family is an instance of Intel’s latest mi- then evaluate these join algorithms on two radically differ- croarchitecture that offers high single-threaded performance ent architectures and show how the architectural differences because of its out-of-order execution and on-demand fre- can affect performance. Unlike previous work that has often quency scaling (TurboBoost). Multi-threaded performance focused on just one architecture, our use of two radically dif- is increased by using simultaneous multi-threading (Hyper- ferent architectures lets us gain deeper insights about hash Threading). At the other end of the spectrum, the Sun join processing on multi-core processors. To the best of our UltraSPARC T2 has 8 simple cores that all share a sin- knowledge, this is the first systematic exploration of multiple gle cache. This CPU can execute instructions from up to hash join techniques that spans multi-core architectures. 8 threads per core, or a total of 64 threads for the entire Second, we show that an algorithm that does not do any chip, and extensively relies on simultaneous multi-threading partitioning, but simply constructs a single shared hash ta- to achieve maximum throughput. ble on the build relation often outperforms more complex al- 3. HASH JOIN IMPLEMENTATION gorithms. This simple “no-partitioning” hash join algorithm In this section, we consider the anatomy of a canoni- is robust to sub-optimal parameter choices by the optimizer, cal hash join algorithm, and carefully consider the design and does not require any knowledge of the characteristics of choices that are available in each internal phase of a hash the input to work well. To the best of our knowledge, this join algorithm. Then using these design choices, we cat- simple hash join technique differs from what is currently egorize various previous proposals for multi-core hash join implemented in existing DBMSs for multi-core hash join processing. In the following discussion we also present infor- processing, and offers a tantalizingly simple, efficient, and mation about some of the implementation details, as they robust technique for implementing the hash join operation. often have a significant impact on the performance of the technique that is described. 38

3 . A hash join operator works on two input relations, R and empty output buffer at the start of the list, and we make its S. We assume that |R| < |S|. A typical hash join algorithm next pointer point to the buffer that overflowed. Locating has three phases: partition, build, and probe. The partition free space is a matter of checking the first buffer in the list. phase is optional and divides tuples into distinct sets using Let p denote the desired number of partitions and n de- a hash function on the join key attribute. The build phase note the number of threads that are processing the hash join scans the relation R and creates an in-memory hash table on operation. During the partitioning phase, all threads start the join key attribute. The probe phase scans the relation reading tuples from the relation R, via a cursor. Each thread S, looks up the join key of each tuple in the hash table, and works on a large batch of tuples at a time, so as to minimize in the case of a match creates the output tuple(s). synchronization overheads on the input scan cursor. Each Before we discuss the alternative techniques that are avail- thread examines a tuple, then extracts the key k, and fi- able in each phase of the join algorithm, we briefly digress nally computes the partitioning hash function hp (k). Next, to discuss the impact of the latch implementation on the it then writes the tuple to partition Rhp (k) using one of the join techniques. As a general comment, we have found that algorithms we describe below. When the R cursor runs out the latch implementation has a crucial impact on the over- of tuples, the partitioning operation proceeds to process the all join performance. In particular, when using the pthreads tuples from the S relation. Again, each tuple is examined, mutex implementation, several instructions are required to the join key k is extracted and the tuple is written to the acquire and release an uncontended latch. If there are mil- partition Shp (k) . The partitioning phase ends when all the lions of buckets in a hash table, then the hash collision rate S tuples have been partitioned. is small, and one can optimize for the expected case: latches Note that we classify the partitioning algorithms as “non- being free. Furthermore, pthread mutexes have significant blocking” if they produce results on-the-fly and scan the in- memory footprint as each requires approximately 40 bytes. put once, in contrast to a “blocking” algorithm that produces If each bucket stores a few <key, record-id> pairs, then the results after buffering the entire input and scanning it more size of the latch array may be greater than the size of the than once. We acknowledge that the join operator overall hash table itself. These characteristics make mutexes a pro- is never truly non-blocking, as it will block during the build hibitively expensive synchronization primitive for buckets phase. The distinction is that the non-blocking algorithms in a hash table. Hence, we implemented our own 1-byte only block for the time that is needed to scan and process latch for both the Intel and the Sun architectures, using the the smaller input, and, as we will see in Section 4.3, this a atomic primitives xchgb and ldstub, respectively. Protect- very small fraction of the overall join time. ing multiple hash buckets with a single latch to avoid cache thrashing did not result in significant performance improve- 3.1.1 Non-blocking algorithms ments even when the number of partitions was high. The first partitioning algorithm creates p shared partitions among all the threads. The threads need to synchronize via 3.1 Partition phase a latch to make sure that the writes to a shared partition The partition phase is an optional step of a hash join al- are isolated from each other. gorithm, if the hash table for the relation R fits in main The second partitioning algorithm creates p ∗ n partitions memory. If one partitions both the R and S relations such in total and each thread is assigned a private set of p parti- that each partition fits in the CPU cache, then the cache tions. Each thread then writes to its local partitions without misses that are otherwise incurred during the subsequent any synchronization overhead. When the input relation is build and probe phases are almost eliminated. The cost depleted, all threads synchronize at a barrier to consolidate for partitioning both input relations is incurring additional the p ∗ n partitions into p partitions. memory writes for each tuple. Work by Shatdal et al. [16] The benefit of creating private partitions is that there is has shown that the runtime cost of the additional memory no synchronization overhead on each access. The drawbacks, writes during partitioning phase is less than the cost of miss- however, are (a) many partitions are created, possibly so ing in the cache – as a consequence partitioning improves many that the working set of the algorithm no longer fits in overall performance. Recent work by Cieslewicz and Ross the data cache and the TLB; (b) at the end of the partition [4] has explored partitioning performance in detail. They phase some thread has to chain n private partitions together introduce two algorithms that process the input once in a to form a single partition, but this operation is quick and serial fashion and do not require any kind of global knowl- can be parallelized. edge about the characteristics of the input. Another recent paper [11] describes a parallel implementation of radix par- 3.1.2 Blocking algorithm titioning [2] which gives impressive performance improve- Another partitioning technique is the parallel multi-pass ments on a modern multi-core system. This implementation radix partitioning algorithm described by Kim et al. [11]. requires that the entire input is available upfront and will The algorithm begins by having the entire input available in not produce any output until the last input tuple has been a contiguous block of memory. Each thread is responsible seen. We experiment with all of these three partitioning al- for a specific memory region in that contiguous block. A gorithms, and we briefly summarize each implementation in histogram with p ∗ n bins is allocated and the input is then Sections 3.1.1 and 3.1.2. scanned twice. During the first scan, each thread scans all In our implementation, a partition is a linked list of output the tuples in the memory region assigned to it, extracts the buffers. An output buffer is fully described by four elements: key k and then computes the exact histogram of the hash an integer specifying the size of the data block, a pointer to values hp (k) for this region. Thread i ∈ [0, n − 1] stores the the start of the data block, a pointer to the free space inside number of tuples it encountered that will hash to partition the data block and a pointer to the next output buffer that j ∈ [0, p−1] in histogram bin j ∗n+i. At the end of the scan, is initially set to zero. If a buffer overflows, then we add an all the n threads compute the prefix sum on the histogram 39

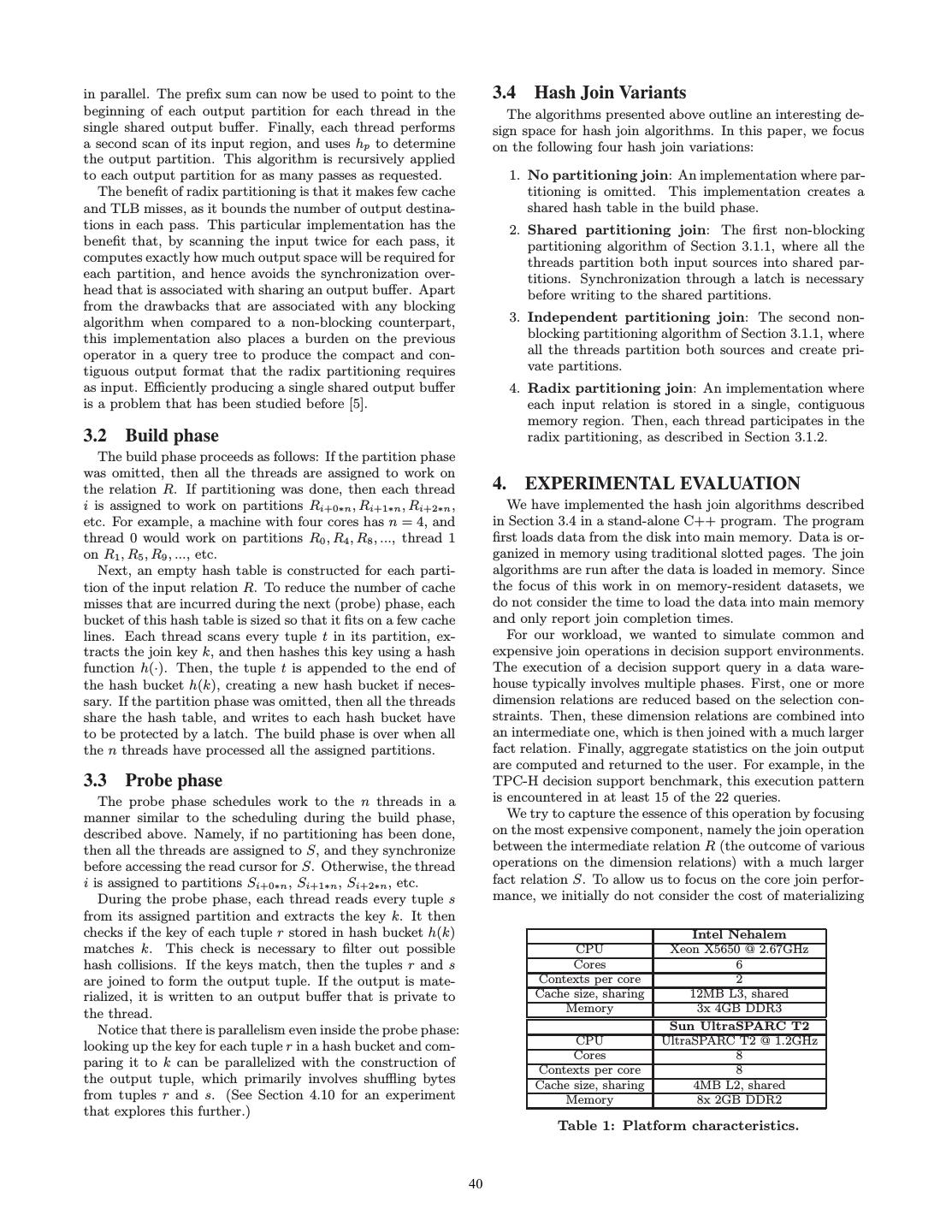

4 .in parallel. The prefix sum can now be used to point to the 3.4 Hash Join Variants beginning of each output partition for each thread in the The algorithms presented above outline an interesting de- single shared output buffer. Finally, each thread performs sign space for hash join algorithms. In this paper, we focus a second scan of its input region, and uses hp to determine on the following four hash join variations: the output partition. This algorithm is recursively applied to each output partition for as many passes as requested. 1. No partitioning join: An implementation where par- The benefit of radix partitioning is that it makes few cache titioning is omitted. This implementation creates a and TLB misses, as it bounds the number of output destina- shared hash table in the build phase. tions in each pass. This particular implementation has the 2. Shared partitioning join: The first non-blocking benefit that, by scanning the input twice for each pass, it partitioning algorithm of Section 3.1.1, where all the computes exactly how much output space will be required for threads partition both input sources into shared par- each partition, and hence avoids the synchronization over- titions. Synchronization through a latch is necessary head that is associated with sharing an output buffer. Apart before writing to the shared partitions. from the drawbacks that are associated with any blocking algorithm when compared to a non-blocking counterpart, 3. Independent partitioning join: The second non- this implementation also places a burden on the previous blocking partitioning algorithm of Section 3.1.1, where operator in a query tree to produce the compact and con- all the threads partition both sources and create pri- tiguous output format that the radix partitioning requires vate partitions. as input. Efficiently producing a single shared output buffer 4. Radix partitioning join: An implementation where is a problem that has been studied before [5]. each input relation is stored in a single, contiguous memory region. Then, each thread participates in the 3.2 Build phase radix partitioning, as described in Section 3.1.2. The build phase proceeds as follows: If the partition phase was omitted, then all the threads are assigned to work on the relation R. If partitioning was done, then each thread 4. EXPERIMENTAL EVALUATION i is assigned to work on partitions Ri+0∗n , Ri+1∗n , Ri+2∗n , We have implemented the hash join algorithms described etc. For example, a machine with four cores has n = 4, and in Section 3.4 in a stand-alone C++ program. The program thread 0 would work on partitions R0 , R4 , R8 , ..., thread 1 first loads data from the disk into main memory. Data is or- on R1 , R5 , R9 , ..., etc. ganized in memory using traditional slotted pages. The join Next, an empty hash table is constructed for each parti- algorithms are run after the data is loaded in memory. Since tion of the input relation R. To reduce the number of cache the focus of this work in on memory-resident datasets, we misses that are incurred during the next (probe) phase, each do not consider the time to load the data into main memory bucket of this hash table is sized so that it fits on a few cache and only report join completion times. lines. Each thread scans every tuple t in its partition, ex- For our workload, we wanted to simulate common and tracts the join key k, and then hashes this key using a hash expensive join operations in decision support environments. function h(·). Then, the tuple t is appended to the end of The execution of a decision support query in a data ware- the hash bucket h(k), creating a new hash bucket if neces- house typically involves multiple phases. First, one or more sary. If the partition phase was omitted, then all the threads dimension relations are reduced based on the selection con- share the hash table, and writes to each hash bucket have straints. Then, these dimension relations are combined into to be protected by a latch. The build phase is over when all an intermediate one, which is then joined with a much larger the n threads have processed all the assigned partitions. fact relation. Finally, aggregate statistics on the join output are computed and returned to the user. For example, in the 3.3 Probe phase TPC-H decision support benchmark, this execution pattern The probe phase schedules work to the n threads in a is encountered in at least 15 of the 22 queries. manner similar to the scheduling during the build phase, We try to capture the essence of this operation by focusing described above. Namely, if no partitioning has been done, on the most expensive component, namely the join operation then all the threads are assigned to S, and they synchronize between the intermediate relation R (the outcome of various before accessing the read cursor for S. Otherwise, the thread operations on the dimension relations) with a much larger i is assigned to partitions Si+0∗n , Si+1∗n , Si+2∗n , etc. fact relation S. To allow us to focus on the core join perfor- During the probe phase, each thread reads every tuple s mance, we initially do not consider the cost of materializing from its assigned partition and extracts the key k. It then checks if the key of each tuple r stored in hash bucket h(k) Intel Nehalem matches k. This check is necessary to filter out possible CPU Xeon X5650 @ 2.67GHz hash collisions. If the keys match, then the tuples r and s Cores 6 are joined to form the output tuple. If the output is mate- Contexts per core 2 rialized, it is written to an output buffer that is private to Cache size, sharing 12MB L3, shared the thread. Memory 3x 4GB DDR3 Notice that there is parallelism even inside the probe phase: Sun UltraSPARC T2 looking up the key for each tuple r in a hash bucket and com- CPU UltraSPARC T2 @ 1.2GHz Cores 8 paring it to k can be parallelized with the construction of Contexts per core 8 the output tuple, which primarily involves shuffling bytes Cache size, sharing 4MB L2, shared from tuples r and s. (See Section 4.10 for an experiment Memory 8x 2GB DDR2 that explores this further.) Table 1: Platform characteristics. 40

5 . 600 180 partition build probe partition build probe 160 500 140 Cycles per output tuple Cycles per output tuple 400 120 100 300 80 200 60 40 100 20 0 0 1 16 64 256 512 1K 2K 4K 8K 32K 128K 16 64 256 512 1K 2K 4K 8K 32K 128K 16 64 256 512 1K 2K 4K 8K 32K 128K 1 64 256 512 1K 2K 4K 8K 32K 128K 64 256 512 1K 2K 64 256 512 1K 2K 4K 8K 32K 128K No Shared Independent Radix-best No Shared Independent Radix-best Number of partitions Number of partitions (a) Intel Nehalem (b) Sun UltraSPARC T2 Figure 1: Cycles per output tuple for the uniform dataset. the output in memory, adopting a similar method as pre- popular key appears in the low skew dataset 8% of the time, vious work [7, 11]. In later experiments (see Section 4.8), and the ten most popular keys account for 24% of the keys. we consider the effect of materializing the join result – in In comparison, in the high skew dataset, the most popular these cases, the join result is created in main memory and key appears 22% of the time, and the ten most popular keys not flushed to disk. appear 52% of the time. We describe the synthetic datasets that we used in the In all the experiments, the hash buckets that are created next section (Section 4.1). In Section 4.2 we give details during the build phase have a fixed size: they always have about the hardware that we used for our experiments. We 32 bytes of space for the payload, and 8 bytes are reserved continue with a presentation of the results in Sections 4.3 for the pointer that points to the next hash bucket in case of and 4.4. We analyze the results further in Sections 4.5 overflow. These numbers were picked so that each bucket fits through 4.7. We present results investigating the effect of in a single, last-level cache line for both the architectures. output materialization, and the sensitivity to input sizes and We size the hash table appropriately so that no overflow selectivities in Sections 4.8 through 4.10. occurs. 4.1 Dataset We experimented with three different datasets, which we 4.2 Platforms denote as uniform, low skew and high skew, respectively. We We evaluated our methods on two different architectures: assume that the relation R contains the primary key and the the Intel Nehalem and the Sun UltraSPARC T2. We de- relation S contains a foreign key referencing tuples in R. In scribe the characteristics of each architecture in detail below, all the datasets we fix the cardinalities of R to 16M tuples and we summarize key parameters in Table 1. and S to 256M tuples1 . We picked the ratio of R to S to The Intel Nehalem microarchitecture is the successor of be 1:16 to mimic the common decision support settings. We the Intel Core microarchitecture. All Nehalem-based CPUs experiment with different ratios in Section 4.9. are superscalar processors and exploit instruction-level par- In our experiments both keys and payloads are eight bytes allelism by using out-of-order execution. The Nehalem fam- each. Each tuple is simply a <key, payload> pair, so tuples ily supports multi-threading, and allows two contexts to ex- are 16 bytes long. Keys can either be the values themselves, ecute per core. if the key is numeric, or an 8-byte hash of the value in the For our experiments, we use the six-core Intel Xeon X5650 case of strings. We chose to represent payloads as 8 bytes for that was released in Q1 of 2010. This CPU has a unified two reasons: (a) Given that columnar storage is commonly 12MB, 16-way associative L3 cache with a line size of 64 used in data warehouses, we want to simulate storing <key, bytes. This L3 cache is shared by all twelve contexts ex- value> or <key, record-id> pairs in the hash table, and (b) ecuting on the six cores. Each core has a private 256KB, make comparisons with existing work (i.e. [11, 4]) easier. 8-way associative L2 cache, with a line size of 64 bytes. Fi- Exploring alternative ways of constructing hash table entries nally, private 32KB instruction and data L1 caches connect is not a focus of this work, but has been explored before [15]. to each core’s load/store units. For the uniform dataset, we create tuples in the relation The Sun UltraSPARC T2 was introduced in 2007 and re- S such that each tuple matches every key in the relation R lies heavily on multi-threading to achieve maximum through- with equal probability. For the skewed datasets, we added put. An UltraSPARC T2 chip has eight cores and each core skew to the distribution of the foreign keys in the relation S. has hardware support for eight contexts. UltraSPARC T2 (Adding skew to the relation R would violate the primary does not feature out-of-order execution. Each core has a key constraint.) We created two skewed datasets, for two single instruction fetch unit, a single floating point unit, a different s values of the Zipf distribution: low skew with single memory unit and two arithmetic units. At every cy- s = 1.05 and high skew with s = 1.25. Intuitively, the most cle, each core executes at most two instructions, each taken from two different contexts. Each context is scheduled in a 1 Throughout the paper, M=220 and K=210 . round-robin fashion every cycle, unless the context has ini- 41

6 . 600 500 partition build probe partition build probe 450 500 Cycles per output tuple 400 Cycles per output tuple 350 400 300 300 250 200 200 150 100 100 50 0 0 1 16 64 256 512 1K 2K 4K 8K 32K 128K 16 64 256 512 1K 2K 4K 8K 32K 128K 16 64 256 512 1K 2K 4K 8K 32K 128K 1 64 256 512 1K 2K 4K 8K 32K 128K 64 256 512 1K 2K 64 256 512 1K 2K 4K 8K 32K 128K No Shared Independent Radix-best No Shared Independent Radix-best Number of partitions Number of partitions (a) Intel Nehalem (b) Sun UltraSPARC T2 Figure 2: Cycles per output tuple for the low skew dataset. tiated a long-latency operation, such as a memory load that As can be observed in Figure 1(a) for the Intel Nehalem caused a cache miss, and has to wait for the outcome. architecture, the performance of the non-partitioned join al- At the bottom of the cache hierarchy of the UltraSPARC gorithm is comparable to the optimal performance achieved T2 chip lies a shared 4MB, 16-way associative write-back L2 by the partition-based algorithms. The shared partitioning cache, with a line size of 64 bytes. To maximize through- algorithm performs best when sizing partitions so that they put, the shared cache is physically split into eight banks. fit in the last level cache. This figure reveals a problem with Therefore, up to eight cache requests can be handled concur- the independent partitioning algorithm. For a high number rently, provided that each request hits a different bank. Each of partitions, say 128K, each thread will create its own pri- core connects to this shared cache through a non-blocking, vate buffer, for a total of 128K ∗ 12 ≈ 1.5 million output pipelined crossbar. Finally, each core has a 8KB, 4-way buffers. This high number of temporary buffers introduces associative write-through L1 data cache with 16 bytes per two problems. First, it results in poor space utilization, as cache line that is shared by all the eight hardware contexts. most of these buffers are filled with very few tuples. Sec- Overall, in the absence of arbitration delays, the L2 cache ond, the working set of the algorithm grows tremendously, hit latency is 20 cycles. and keeping track of 1.5 million cache lines requires a cache whose capacity is orders of magnitude larger than the 12MB L3 cache. The radix partitioning algorithm is not affected 4.3 Results by this problem, because it operates in multiple passes and We start with the uniform dataset. In Figure 1, we plot limits the number of partition output buffers in each pass. the average number of CPU cycles that it takes to produce Next, we experimented with the Sun UltraSPARC T2 ar- one output tuple, without actually writing the output, for chitecture. In Figure 1(b) we see that doing no partitioning a varying number of partitions. (Note that to convert the is at least 1.5X faster compared to all the other algorithms. CPU cycles to wall clock time, we simply divide the CPU The limited memory on this machine prevented us from run- cycles by the corresponding clock rate shown in Table 1). ning experiments with a high number of partitions for the The horizontal axis shows the different join algorithms (bars independent partitioning algorithm because of the signifi- “No”, “Shared”, “Independent”), corresponding to the first cant memory overhead discussed in the previous paragraph. three hash join variants described in Section 3.4. For the As this machine supports nearly five times more hardware radix join algorithm, we show the best result across any contexts than the Intel machine, the memory that is required number of passes (bars marked “Radix-best”). Notice that for bookkeeping is five times higher as well. we assume that the optimizer will always be correct and pick To summarize our results with the uniform dataset, we the optimal number of passes. see that on the Intel architecture the performance of the no Overall, the build phase takes a very small fraction of partitioning join algorithm is comparable to the performance the overall time, regardless of the partitioning strategy that of all the other algorithms. For the Sun UltraSPARC T2, is being used, across all architectures (see Figure 1). The we see that the no partitioning join algorithm outperforms reason for this behavior is two-fold. First and foremost, the the other algorithms by at least 1.5X. Additionally, the no smaller cardinality of the R relation translates into less work partitioning algorithm is more robust, as the performance during the build phase. (We experiment with different car- of the other algorithms degrades if the query optimizer does dinality ratios in Section 4.9.) Second, building a hash table not pick the optimal value for the number of partitions. is a really simple operation: it merely involves copying the input data into the appropriate hash bucket, which incurs a 4.4 Effect of skew lot less computation than the other steps, such as the out- We now consider the case when the distribution of foreign put tuple reconstruction that must take place in the probe keys in the relation S is skewed. We again plot the average phase. The performance of the join operation is therefore time to produce each tuple of the join (in machine cycles) mostly determined by the time spent partitioning the input in Figure 2 for the low skew dataset, and in Figure 3 for the relations and probing the hash table. high skew dataset. 42

7 . 600 700 partition build probe partition build probe 600 500 500 Cycles per output tuple Cycles per output tuple 400 400 300 300 200 200 100 100 0 0 1 16 64 256 512 1K 2K 4K 8K 32K 128K 16 64 256 512 1K 2K 4K 8K 32K 128K 16 64 256 512 1K 2K 4K 8K 32K 128K 1 64 256 512 1K 2K 4K 8K 32K 128K 64 256 512 1K 2K 64 256 512 1K 2K 4K 8K 32K 128K No Shared Independent Radix-best No Shared Independent Radix-best Number of partitions Number of partitions (a) Intel Nehalem (b) Sun UltraSPARC T2 Figure 3: Cycles per output tuple for the high skew dataset. Intel Sun TLB TLB Nehalem UltraSPARC T2 Cycles L3 Instruc- load store miss -tions miss miss NO No / 1 No / 1 partition 0 0 0 0 0 SN Indep. / 16 Indep. / 64 NO build 322 2 2,215 1 0 L2-S Shared / 2048 Shared / 2048 probe 15,829 862 54,762 557 0 L2-R Radix / 2048 Radix / 2048 partition 3,578 18 29,096 6 2 SN build 328 8 2,064 0 0 Table 2: Shorthand notation and corresponding par- probe 21,717 866 54,761 505 0 partition 11,778 103 31,117 167 257 titioning strategy / number of partitions. L2-S build 211 1 2,064 0 0 probe 6,144 35 54,762 1 0 partition 6,343 221 34,241 7 237 By comparing Figure 1 with Figure 2, we notice that, L2-R build 210 1 2,064 0 0 when using the shared hash table (bar “No” in all graphs), probe 6,152 36 54,761 1 0 performance actually improves in the presence of skew! On the other hand, the performance of the shared partitioning Table 3: Performance counter averages for the uni- algorithm degrades rapidly with increasing skew, while the form dataset (millions). performance of the independent partitioning and the radix partitioning algorithms shows little change on the Intel Ne- halem and degrades on the Sun UltraSPARC T2. Mov- 4.5 Performance counters ing to Figure 3, we see that the relative performance of Due to space constraints, we focus on specific partitioning the non-partitioned join algorithm increases rapidly under configurations from this section onward. We use “NO” to higher skew, compared to the other algorithms. The non- denote the no partitioning strategy where the hash table is partitioned algorithm is generally 2X faster than the other shared by all threads, and we use “SN” to denote the case algorithms on the Intel Nehalem, and more than 4X faster when we create as many partitions as hardware contexts than the other algorithms on the Sun UltraSPARC T2. (join threads), except we round the number of partitions up To summarize these results, skew in the underlying join to the next power of two as is required for the radix par- key values (data skew) manifests itself as partition size skew titioning algorithm. We use “L2” to denote the case when when using partitioning. For the shared partitioning algo- we create partitions to fit in the last level cache, appending rithm, during the partition phase, skew causes latch con- “-S” when partitioning with shared output buffers, and “-R” tention on the partition with the most popular key(s). For for radix partitioning. We summarize this notation in Table all partitioning-based algorithms, during the probe phase, 2. Notice that the L2 numbers correspond to the best per- skew translates into a skewed work distribution per thread. forming configuration settings in the experiment with the Therefore, the overall join completion time is determined by uniform dataset (see Figure 1). the completion time of the partition with the most popular We now use the hardware performance counters to un- key. (We explore this behavior further in Section 4.7.1.) On derstand the characteristics of these join algorithms. In the the other hand, skew improves performance when sharing interest of space, we only present our findings from a single the hash table and not doing partitioning for two reasons. architecture: the Intel Nehalem. We first show the results First, the no partitioning approach ensures an even work from the uniform dataset in Table 3. Each row indicates one distribution per thread as all the threads are working con- particular partitioning algorithm and join phase, and each currently on the single partition. This greedy scheduling column shows a different architectural event. First, notice strategy proves to be effective in hiding data skew. Second, the code path length. It takes, on average, about 55 billion performance increases because the hardware handles skew a instructions to complete the probe phase and an additional lot more efficiently, as skewed memory access patterns cause 50% to 65% of that for partitioning, depending on the al- significantly fewer cache misses. gorithm of choice. The NO algorithm pays a high cost in 43

8 . TLB TLB 12 NO Cycles L3 Instruc- load store SN miss -tions miss miss 10 L2-S L2-R partition 0 0 0 0 0 NO build 323 3 2,215 1 0 probe 6,433 98 54,762 201 0 8 partition 3,577 17 29,096 6 1 SN build 329 8 2,064 0 0 Speedup probe 13,241 61 54,761 80 0 6 partition 36,631 79 34,941 67 106 L2-S build 210 5 2,064 0 0 4 probe 8,024 13 54,762 1 0 partition 5,344 178 34,241 5 72 L2-R build 209 4 2,064 0 0 2 probe 8,052 13 54,761 1 0 0 Table 4: Performance counter averages for the high 0 2 4 6 8 10 12 skew dataset (millions). Number of threads Figure 4: Speedup over single threaded execution, terms of the L3 cache misses during the probe phase. The uniform dataset. partitioning phase of the SN algorithm is fast but fails to contain the memory reference patterns that arise during the probe phase in the cache. The L2-S algorithm manages to 30% lower than the number of the cache misses observed minimize these memory references, but incurs a high L3 and during the uniform experiment. However, partitioning per- TLB miss ratio during the partition phase compared to the formance worsens by more than 3X when creating shared NO and SN algorithms. The L2-R algorithm uses multiple partitions under high skew! passes to partition the input and carefully controls the L3 The performance counters don’t provide clean insights and TLB misses during these phases. Once the cache-sized into why the non-partitioned algorithm exhibits similar or partitions have been created, we see that both the L2-S and better performance than the other cache-efficient algorithms L2-R algorithms avoid incurring many L3 and TLB misses across all datasets. Although a cycle breakdown is still fea- during the probe phase. In general, we see fewer cache and sible at a macroscopic level where the assumption of no con- TLB misses across all algorithms when adding skew (in Ta- tention holds (for example as in Ailamaki et al. [1]), this ex- ble 4). periment reveals that blindly assigning fixed cycle penalties Unfortunately, interpreting performance counters is much to architectural events can lead to misleading conclusions. more challenging with modern multi-core processors and will likely get worse. Processors have become a lot more com- plex over the last ten years, yet the events that counters 4.6 Speedup from SMT capture have hardly changed. This trend causes a grow- Modern processors improve the overall efficiency with hard- ing gap between the high-level algorithmic insights the user ware multithreading. Simultaneous multi-threading (SMT) expects and the specific causes that trigger some proces- permits multiple independent threads of execution to better sor state that the performance counters can capture. In a utilize the resources provided by modern processor architec- uniprocessor, for example, a cache miss is an indication that tures. We now evaluate the impact of SMT on the hash join the working set exceeds the cache’s capacity. The penalty algorithms. is bringing the data from memory, an operation the costs We first show a speedup experiment for the Intel Nehalem hundreds of cycles. However, in a multi-core processor, a on the uniform dataset in Figure 4. We start by dedicating memory load might miss in the cache because the operation each thread to a core, and once we exceed the number of touches memory that some other core has just modified. The available physical cores (six for our Intel Nehalem), we then penalty in this case is looking in some other cache for the start assigning threads in a round-robin fashion to the avail- data. Although a neighboring cache lookup can be ten or a able hardware contexts. We observe that the algorithms be- hundred times faster than bringing the data from memory, have very differently when some cores are idle (fewer than six both scenarios will simply increment the cache miss counter threads) versus in the SMT region (more than six threads). and not record the cause of this event. With fewer than six threads all the algorithms scale linearly, To illustrate this point, let’s turn our attention to a case in and the NO algorithm has optimal speedup. With more Table 3 where the performance counter results can be mis- than six threads, the NO algorithm continues to scale, be- leading: The probe phase of the SN algorithm has slightly coming almost 11X faster than the single-threaded version fewer L3 and TLB misses than the probe phase of the NO when using all available contexts. The partitioning-based algorithm and equal path length, so the probe phase of the algorithms SN, L2-S and L2-R, however, do not exhibit this SN algorithm should be comparable or faster than probe behavior. The speedup curve for these three algorithms in phase of the NO algorithm. However, the probe phase of the SMT region either flattens completely (SN algorithm), or the NO algorithm is almost 25% faster! Another issue is increases at a reduced rate (L2-R algorithm) than the non- latch contention, which causes neither L3 cache misses nor SMT region. In fact, performance drops for all partitioning TLB misses, and therefore is not reported in the perfor- algorithms for seven threads because of load imbalance: a mance counters. For example, when comparing the uniform single core has to do the work for two threads. (This imbal- and high skew numbers for the L2-S algorithm, the number ance can be ameliorated through load balancing, a technique of the L3 cache misses during the high skew experiment is that we explore in Section 4.7.1.) 44

9 . Uniform 4.7 Synchronization 6 threads 12 threads Improvement Synchronization is used in multithreaded programs to guar- NO 28.23 16.15 1.75X antee the consistency of shared data structures. In our join SN 34.04 25.62 1.33X implementations, we use barrier synchronization when all L2-S 19.27 18.13 1.06X the threads wait for tasks to be completed before they can L2-R 14.46 12.71 1.14X proceed to the next task. (For example, at the end of each High skew pass of the radix partition phase, each thread has to wait 6 threads 12 threads Improvement until all other threads complete before proceeding.) In this NO 9.34 6.76 1.38X section, we study the effect of barrier synchronization on SN 19.50 17.15 1.14X the performance of the hash join algorithm. In the interest L2-S 38.37 44.87 0.86X of space, we only present results for the Intel Nehalem ma- L2-R 15.04 13.61 1.11X chine. Since the radix partitioning algorithm wins over the other partitioning algorithms across all datasets, our discus- Table 5: Simultaneous multi-threading experiment sion only focuses on results for the non-partitioned algorithm on the Intel Nehalem, showing billions of cycles to (NO) and the radix partitioning algorithm (L2-R). join completion and relative improvement. Synchronization has little impact on the non-partitioned (NO) algorithm for both the uniform and the high skew datasets, regardless of the number of threads that are run- Uniform ning. The reason for this behavior is the simplicity of the 8 threads 64 threads Improvement NO algorithm. First, there is no partition phase at all, and NO 37.30 12.64 2.95X each thread can proceed independently in the probe phase. SN 55.70 22.25 2.50X Therefore synchronization is only necessary during the build L2-S 51.62 23.86 2.16X phase, a phase that takes less than 2% of the total time (see L2-R 46.62 18.88 2.47X Figure 1). Second, by dispensing with partitioning, this High skew algorithm ensures an even distribution of work across the 8 threads 64 threads Improvement threads, as all the threads are working concurrently on the NO 23.92 11.67 2.05X single shared hash table. SN 70.52 49.54 1.42X We now turn our attention to the radix partitioning al- gorithm, and break down the time spent by each thread. L2-S 73.91 221.01 0.33X Unlike the non-partitioned algorithm, the radix partitioning L2-R 66.01 43.16 1.53X algorithm is significantly impacted by synchronization on both the uniform and the high skew datasets. Figure 5(a) Table 6: Simultaneous multi-threading experiment shows the time breakdown for the L2-R algorithm when run- on the Sun UltraSPARC T2, showing billions of cy- ning 12 threads on the Intel Nehalem machine with the high cles to join completion and relative improvement. skew dataset. Each histogram in this figure represents the execution flow of a thread. The vertical axis can be viewed We summarize the benefit of SMT in Table 5 for the In- as a time axis (in machine cycles). White rectangles in these tel architecture, and in Table 6 for the Sun architecture. histograms represent tasks, the position of each rectangle in- For the Intel Nehalem and the uniform dataset, the NO al- dicates the beginning time of the task, and the height repre- gorithm benefits significantly from SMT, becoming 1.75X sents the completion time of this task for each thread. The faster. This algorithm is not optimized for cache perfor- gray rectangles represent the waiting time that is incurred mance, and as seen in Section 4.5, causes many cache misses. by a thread that completes its task but needs to synchro- As a result, it provides more opportunities for SMT to ef- nize with the other threads before continuing. In the radix ficiently overlap the memory accesses. On the other hand, join algorithm, we can see five expensive operations that are the other three algorithms are optimized for cache perfor- synchronized through barriers: (1) computing the thread- mance to different degrees. Their computation is a large private histogram, (2) computing the global histogram, (3) fraction of the total execution time, therefore they do not doing radix partitioning, (4) building a hash table for each benefit significantly from using SMT. In addition, we notice partition of the relation R, and (5) probing each hash table that the NO algorithm is around 2X slower than the L2-R with a partition from the relation S. The synchronization algorithm without SMT, but its performance increases to cost of the radix partitioning algorithm accounts for nearly almost match the L2-R algorithm performance with SMT. half of the total join completion time for some threads. For the Sun UltraSPARC T2, the NO algorithm also ben- The synchronization cost is so high under skew primar- efits the most from SMT. In this architecture the code path ily because it is hard to statically divide work items into length (i.e. instructions executed) has a direct impact on the equally-sized subtasks. As a result, faster threads have to join completion time, and therefore the NO algorithm per- wait for slower threads. For example, if threads are stat- forms best both with and without SMT. As the Sun machine ically assigned to work on partitions in the probe phase, cannot exploit instruction parallelism at all, we see increased the distribution of the work assigned to the threads will in- benefits from SMT compared to the Intel architecture. variably also be skewed. Thus, the thread processing the When comparing the high skew dataset with the uniform partition with the most popular key becomes a bottleneck dataset across both architectures, we see that the improve- and the overall completion time is determined by the com- ment of SMT is reduced. The skewed key distribution in- pletion time of the partition with the most popular keys. In curs fewer cache misses, therefore SMT loses opportunities Figure 5(a), this is thread 3. to hide processor pipeline stalls. 45

10 . work wait work wait 14 14 12 12 10 10 Cycles (billions) Cycles (billions) 8 8 6 6 4 4 2 2 0 0 1 2 3 4 5 6 7 8 9 10 11 12 1 2 3 4 5 6 7 8 9 10 11 12 Thread ID Thread ID (a) High skew dataset (b) High skew dataset with work stealing Figure 5: Time breakdown of the radix join. 4.7.1 Load balancing Machine NO SN L2-S L2-R If static work allocation is the problem, then how would Intel Nehalem 23% 4% 7% 10% the radix join algorithm perform under a dynamic work al- Sun UltraSPARC T2 29% 21% 20% 23% location policy and highly skewed input? To answer this question, we tweaked the join algorithm to allow the faster Table 7: Additional overhead of materialization with threads that have completed their probe phase to steal work respect to total cycles without materialization on from other slower threads. In our implementation, the unit the uniform dataset. of work is a single partition. In doing so, we slightly increase Scale 0.5 Scale 1 Scale 2 the synchronization cost because work queues need to now NO 7.65 (0.47X) 16.15 (1.00X) 62.27 (3.86X) be protected with latches, but we balance the load better. SN 11.76 (0.46X) 25.62 (1.00X) 98.82 (3.86X) In Figure 5(b) we plot the breakdown of the radix par- L2-S 8.47 (0.47X) 18.13 (1.00X) 68.48 (3.78X) titioning algorithm (L2-R) using this work stealing policy L2-R 5.82 (0.46X) 12.71 (1.00X) DNF when running on the Intel Nehalem machine with the high skew dataset. Although the work is now balanced almost Table 8: Join sensitivity with varying input cardi- perfectly for the smaller partitions, the partitions with the nalities for the uniform dataset on Intel Nehalem. most popular keys are still a bottleneck. In the high skew The table shows the cycles for computing the join dataset, the most popular key appears 22% of the time, and (in billions) and the relative difference to scale 1. thread 3 in this case has been assigned only a single par- tition which happened to correspond to the most popular discarded. Recent work by Cieslewicz et al. [3] highlighted key. In comparison, for this particular experiment, the NO the trade-offs involved when materializing the output. algorithm can complete the join in under 7 billion cycles In Table 7 we report the increase in the total join comple- (Table 4), and hence is 1.9X faster. An interesting area for tion time when we materialize the output in memory for the future work is load balancing techniques that permit work uniform dataset and the partitioning strategies described in stealing at a finer granularity than an entire partition with Table 2. If the join operator is part of a complex query a reasonable synchronization cost. plan, it is unlikely that the entire join output will ever need To summarize, under skew, a load balancing technique to be written in one big memory block, but, even in this improves the performance of the probe phase but does not extreme case, we see that no algorithm is being significantly address the inherent inefficiency of all the partitioning-based impacted by materialization. algorithms. In essence, there is a coordination cost to be paid for load balancing, as thread synchronization is neces- 4.9 Cardinality experiments sary. Skew in this case causes contention, stressing the cache We now explore how sensitive our findings are to varia- coherence protocol and increasing memory traffic. On the tions in the cardinalities of the two input relations. Table 8 other hand, the no partitioning algorithm does skewed mem- shows the results when running the join algorithms on the ory loads of read-only data, which is handled very efficiently Intel Nehalem machine. The numbers obtained from the uni- by modern CPUs through caching. form dataset (described in detail in Section 4.1) are shown in the middle column. We first created one uniform dataset where both relations are half the size (scale 0.5). This means 4.8 Effect of output materialization the relation R has 8M tuples and the relation S has 128M tu- Early work in main memory join processing [7] did not ples. We also created a uniform dataset where both relations take into account the cost of materialization. This decision are twice the size (scale 2), i.e. the relation R has 32M tu- was justified by pointing out that materialization comes at a ples and the relation S has 512M tuples. The scale 2 dataset fixed price for all algorithms and, therefore, a join algorithm occupies 9GB out of the 12GB of memory our system has will be faster regardless of the output being materialized or (Table 1) and leaves little working memory, but the serial 46

11 . 800 partition build probe insensitive to different join selectivities, because its out-of- 700 order execution manages to overlap the data transfer with 600 the byte shuffling that is required to assemble the output tuple. On the other hand, for the Sun UltraSPARC T2 ma- Cycles per output tuple 500 chine, there is a strong linear correlation between the code 400 path length and the cycles that are required for the probe phase to complete. The in-order Sun UltraSPARC T2 can- 300 not automatically extract the instruction-level parallelism of 200 the probe phase, unless the programmer explicitly expresses it by using multiple threads. 100 0 4.11 Implications 1 16 64 256 512 1K 2K 4K 8K 32K 128K 16 64 256 512 1K 2K 4K 8K 32K 128K 16 64 256 512 1K 2K 4K 8K 32K 128K These results imply that DBMSs must reconsider their join algorithms for current and future multi-core processors. No Shared Independent Radix-best First, modern processors are very effective in hiding cache Number of partitions miss latencies through multi-threading (SMT), as it is shown in Tables 5 and 6. Second, optimizing for cache performance Figure 6: Experiment on Intel Nehalem with uni- requires partitioning, and this has additional computation form dataset and |R|=|S|. and synchronization overheads, and necessitates elaborate load balancing techniques to deal with skew. These costs of access pattern allows performance to degrade gracefully for partitioning on a modern multi-core machine can be higher all algorithms but the L2-R algorithm. The main memory than the benefit of an increased cache hit rate, especially on optimizations of the L2-R algorithm cause many random ac- skewed datasets (as shown in Figures 2 and 3.) To fully cesses which hurt performance. We therefore mark the L2-R leverage the current and future CPUs, high performance algorithm as not finished (DNF). main memory designs have to achieve good cache and TLB We now examine the impact of the relative size of the rela- performance, while fully exploiting SMT, and minimizing tions R and S. We fixed the cardinality of the relation S to synchronization costs. be 16M tuples, making |R| = |S|, and we plot the cycles per output tuple for the uniform dataset when running on the 5. RELATED WORK Intel Nehalem in Figure 6. First, the partitioning time in- There is a rich history of studying hash join performance creases proportionally to |R| + |S|. Second, the build phase for main memory database systems, starting with the early becomes significant, taking at least 25% of the total join work of DeWitt et al. [7]. A decade later Shatdal et al. [16] completion time. The probe phase, however, is at most 30% studied cache-conscious algorithms for query execution and slower, and less affected by the cardinality of the relation R. discovered that the probe phase dominates the overall hash Overall, all the algorithms are slower when |R| = |S| because join processing time. They also showed that hash join com- they have to process more data, but the no partitioning algo- putation can be sped up if both the build and probe relations rithm is slightly favored because it avoids partitioning both are partitioned so as to fit in the cache. input relations. Ailamaki et al. [1] studied where DBMSs spend their time The results show that no join algorithm is particularly sen- on modern processors, whereas Manegold et al. [12] inspected sitive to our selection of input relation cardinalities, there- the time breakdown for a hash join operation. Both papers fore our findings are expected to hold across a broader spec- break down the query execution time by examining perfor- trum of cardinalities. The outcome of the experiments for mance counters, and single out cache and TLB misses as the the Sun UltraSPARC T2 is similar, and is omitted. two primary culprits for suboptimal performance in main memory processing. A follow-up paper [13] presented a cost 4.10 Selectivity experiment model on how to optimize the performance of the radix join We now turn our attention to how join selectivity affects algorithm on a uniprocessor [2]. performance. As all our original datasets are examples of Ross [15] presented a more efficient way to improve the joins between primary and foreign keys, all the experiments performance of hash joins by using cuckoo hashing [14] and that have been presented so far have a selectivity of 100%. SIMD instructions. Garcia and Korth [9] have studied the For this experiment we created two different S relations that benefits of using simultaneous multi-threading for hash join have the same cardinality but only 50% and 12.5% of the tu- processing. Graefe et al. [10] described how hash-based algo- ples join with a tuple in the relation R. The key distribution rithms can improve the performance of a commercial DBMS. is uniform. Finally, there has been prior work in handling skew during Results for the Intel Nehalem are shown Figure 7(a). De- hash join processing. The experiments with a high number creasing join selectivity has a marginal benefit on the probe of partitions that we presented in Section 4.4 are an exten- phase, but the other two phases are unaffected. The out- sion of an idea by DeWitt et al. [8] for a main memory, come of the same experiment on Sun UltraSPARC T2 is multi-core environment. shown in Figure 7(b). In this architecture, the benefit of a small join selectivity on the probe phase is significant. Inspecting the performance counters in this experiment 6. CONCLUSIONS AND FUTURE WORK revealed additional insights. Across all the architectures, The rapidly evolving multi-core landscape requires that the code path length (i.e. instructions executed) increases as DBMSs carefully consider the interactions between query join selectivity increases. The Intel Nehalem is practically processing algorithms and the underlying hardware. In this 47

12 . 100 100 partition build probe partition build probe 90 90 80 80 Cycles per output tuple Cycles per output tuple 70 70 60 60 50 50 40 40 30 30 20 20 10 10 0 0 NO SN L2-S L2-R NO SN L2-S L2-R NO SN L2-S L2-R NO SN L2-S L2-R NO SN L2-S L2-R NO SN L2-S L2-R 12% 50% 100% 12% 50% 100% Join selectivity Join selectivity (a) Intel Nehalem (b) Sun UltraSPARC T2 Figure 7: Sensitivity to join selectivity. Increasing join selectivity impacts the critical path for the Sun UltraSPARC T2, while the out-of-order execution on Intel Nehalem overlaps computation with data transfer. paper we examine these interactions when executing a hash 7. REFERENCES join operation in a main memory DBMS. We implement [1] A. Ailamaki, D. J. DeWitt, M. D. Hill, and D. A. Wood. a family of main memory hash join algorithms that vary in DBMSs on a modern processor: Where does time go? In the way that they implement the partition, build, and probe VLDB, pages 266–277, 1999. phases of a canonical hash join algorithm. [2] P. A. Boncz, S. Manegold, and M. L. Kersten. Database We also evaluate our algorithms on two different multi- architecture optimized for the new bottleneck: Memory core processor architectures. Our results show that a simple access. In VLDB, pages 54–65, 1999. hash join technique that does not do any partitioning of the [3] J. Cieslewicz, W. Mee, and K. A. Ross. Cache-conscious input relations often outperforms the other more complex buffering for database operators with state. In DaMoN, pages 43–51, 2009. partitioning-based join alternatives. In addition, the relative [4] J. Cieslewicz and K. A. Ross. Data partitioning on chip performance of this simple hash join technique rapidly im- multiprocessors. In DaMoN, pages 25–34, 2008. proves with increasing skew, and it outperforms every other [5] J. Cieslewicz, K. A. Ross, and I. Giannakakis. Parallel algorithm in the presence of even small amounts of skew. buffers for chip multiprocessors. In DaMoN, 2007. Minimizing cache misses requires additional computation, [6] D. J. DeWitt and J. Gray. Parallel database systems: The synchronization and load balancing to cope with skew. As future of database processing or a passing fad? SIGMOD our experiments show, these costs on a modern multi-core Record, 19(4):104–112, 1990. machine can be higher than the benefit of an increased cache [7] D. J. DeWitt, R. H. Katz, F. Olken, L. D. Shapiro, M. Stonebraker, and D. A. Wood. Implementation hit rate. To fully leverage the current and future CPUs, high techniques for main memory database systems. In performance main memory designs have to consider how to SIGMOD Conference, pages 1–8, 1984. minimize computation and synchronization costs, and fully [8] D. J. DeWitt, J. F. Naughton, D. A. Schneider, and exploit simultaneous multi-threading, in addition to main- S. Seshadri. Practical skew handling in parallel joins. In taining good cache and TLB behavior. While a large part of VLDB, pages 27–40, 1992. the previous work in this area has mostly focused on mini- [9] P. Garcia and H. F. Korth. Database hash-join algorithms mizing cache and TLB misses for database query processing on multithreaded computer architectures. In Conf. Computing Frontiers, pages 241–252, 2006. tasks, our work here suggests that paying attention to the [10] G. Graefe, R. Bunker, and S. Cooper. Hash joins and hash computation and synchronization costs is also very impor- teams in Microsoft SQL Server. In VLDB, pages 86–97, tant in modern processors. This work points to a rich direc- 1998. tion for future work in exploring the design of more complex [11] C. Kim, E. Sedlar, J. Chhugani, T. Kaldewey, A. D. query processing techniques (beyond single joins) that con- Nguyen, A. D. Blas, V. W. Lee, N. Satish, and P. Dubey. sider the joint impact of computation, synchronization costs, Sort vs. hash revisited: Fast join implementation on load balancing, and cache behavior. modern multi-core CPUs. PVLDB, 2(2):1378–1389, 2009. [12] S. Manegold, P. A. Boncz, and M. L. Kersten. What happens during a join? Dissecting CPU and memory optimization effects. In VLDB, pages 339–350, 2000. Acknowledgments [13] S. Manegold, P. A. Boncz, and M. L. Kersten. Optimizing We thank David DeWitt for his deeply insightful comments main-memory join on modern hardware. IEEE Trans. on this paper. We also thank the reviewers of this paper Knowl. Data Eng., 14(4):709–730, 2002. and Willis Lang for their feedback on an earlier draft of this [14] R. Pagh and F. F. Rodler. Cuckoo hashing. J. Algorithms, paper. David Wood and the Wisconsin Multifacet project 51(2):122–144, 2004. were invaluable supporters of this project and gave us ex- [15] K. A. Ross. Efficient hash probes on modern processors. In ICDE, pages 1297–1301, 2007. clusive access to their hardware, and we thank them. This [16] A. Shatdal, C. Kant, and J. F. Naughton. Cache conscious work was supported in part by a grant from the Microsoft algorithms for relational query processing. In VLDB, pages Jim Gray Systems Lab, and in part by the National Science 510–521, 1994. Foundation under grants IIS-0963993 and CNS-0551401. [17] M. Stonebraker. The case for shared nothing. In HPTS, 1985. 48