General I/O Systems

1 . CS162 Operating Systems and Systems Programming
Lecture 16: General I/O
October 18th, 2017
Neeraja J. Yadwadkar
http://cs162.eecs.Berkeley.edu

The Requirements of I/O
• So far in this course:
– We have learned how to manage CPU and memory
• What about I/O?
– Without I/O, computers are useless (disembodied brains?)
– But… thousands of devices, each slightly different
» How can we standardize the interfaces to these devices?
– Devices unreliable: media failures and transmission errors
» How can we make them reliable???
– Devices unpredictable and/or slow
» How can we manage them if we don’t know what they will do or how they will perform?

10/18/17 CS162 © UCB Spring 2017 Lec 16.2

OS Basics: I/O
[Figure: layered view of the system. Labels: Threads, Address Spaces, Windows, Processes, Files, Sockets; OS Hardware Virtualization; Software / Hardware ISA; Processor, Memory, Protection Boundary; OS; Ctrlr; Networks, storage, Displays, Inputs]

In a Picture
[Figure: Processor Core, Main Memory (DRAM), and I/O Controllers joined by Read / Write wires; interrupts; DMA transfer; Secondary Storage (Disk), Secondary Storage (SSD)]
• I/O devices you recognize are supported by I/O Controllers
• The processor accesses them by reading and writing I/O registers as if they were memory
– Write commands and arguments, read status and results

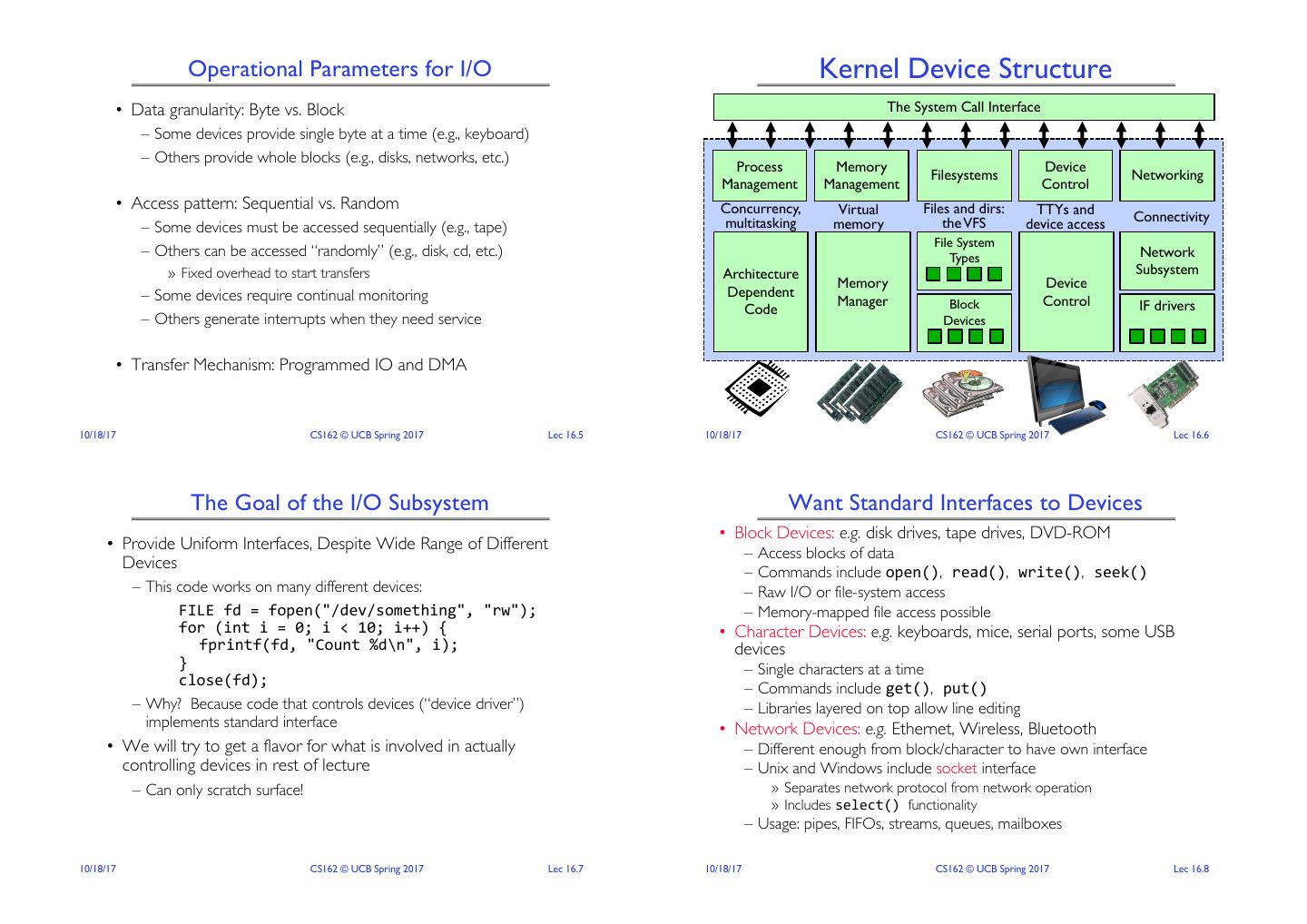

2 . Operational Parameters for I/O
• Data granularity: Byte vs. Block
– Some devices provide single byte at a time (e.g., keyboard)
– Others provide whole blocks (e.g., disks, networks, etc.)
• Access pattern: Sequential vs. Random
– Some devices must be accessed sequentially (e.g., tape)
– Others can be accessed “randomly” (e.g., disk, cd, etc.)
» Fixed overhead to start transfers
– Some devices require continual monitoring
– Others generate interrupts when they need service
• Transfer Mechanism: Programmed IO and DMA

Kernel Device Structure
[Figure: The System Call Interface on top of five kernel areas: Process Management (Concurrency, multitasking; Architecture Dependent Code), Memory Management (Virtual memory; Memory Manager), Filesystems (Files and dirs: the VFS; File System Types, Block Devices), Device Control (TTYs and device access; Device Control), Networking (Connectivity; Network Subsystem, IF drivers)]

The Goal of the I/O Subsystem
• Provide Uniform Interfaces, Despite Wide Range of Different Devices
– This code works on many different devices:

    FILE *fd = fopen("/dev/something", "r+");
    for (int i = 0; i < 10; i++) {
        fprintf(fd, "Count %d\n", i);
    }
    fclose(fd);

– Why? Because code that controls devices (“device driver”) implements standard interface
• We will try to get a flavor for what is involved in actually controlling devices in rest of lecture
– Can only scratch surface!

Want Standard Interfaces to Devices
• Block Devices: e.g. disk drives, tape drives, DVD-ROM
– Access blocks of data
– Commands include open(), read(), write(), seek()
– Raw I/O or file-system access
– Memory-mapped file access possible
• Character Devices: e.g. keyboards, mice, serial ports, some USB devices
– Single characters at a time
– Commands include get(), put()
– Libraries layered on top allow line editing
• Network Devices: e.g. Ethernet, Wireless, Bluetooth
– Different enough from block/character to have own interface
– Unix and Windows include socket interface
» Separates network protocol from network operation
» Includes select() functionality
– Usage: pipes, FIFOs, streams, queues, mailboxes

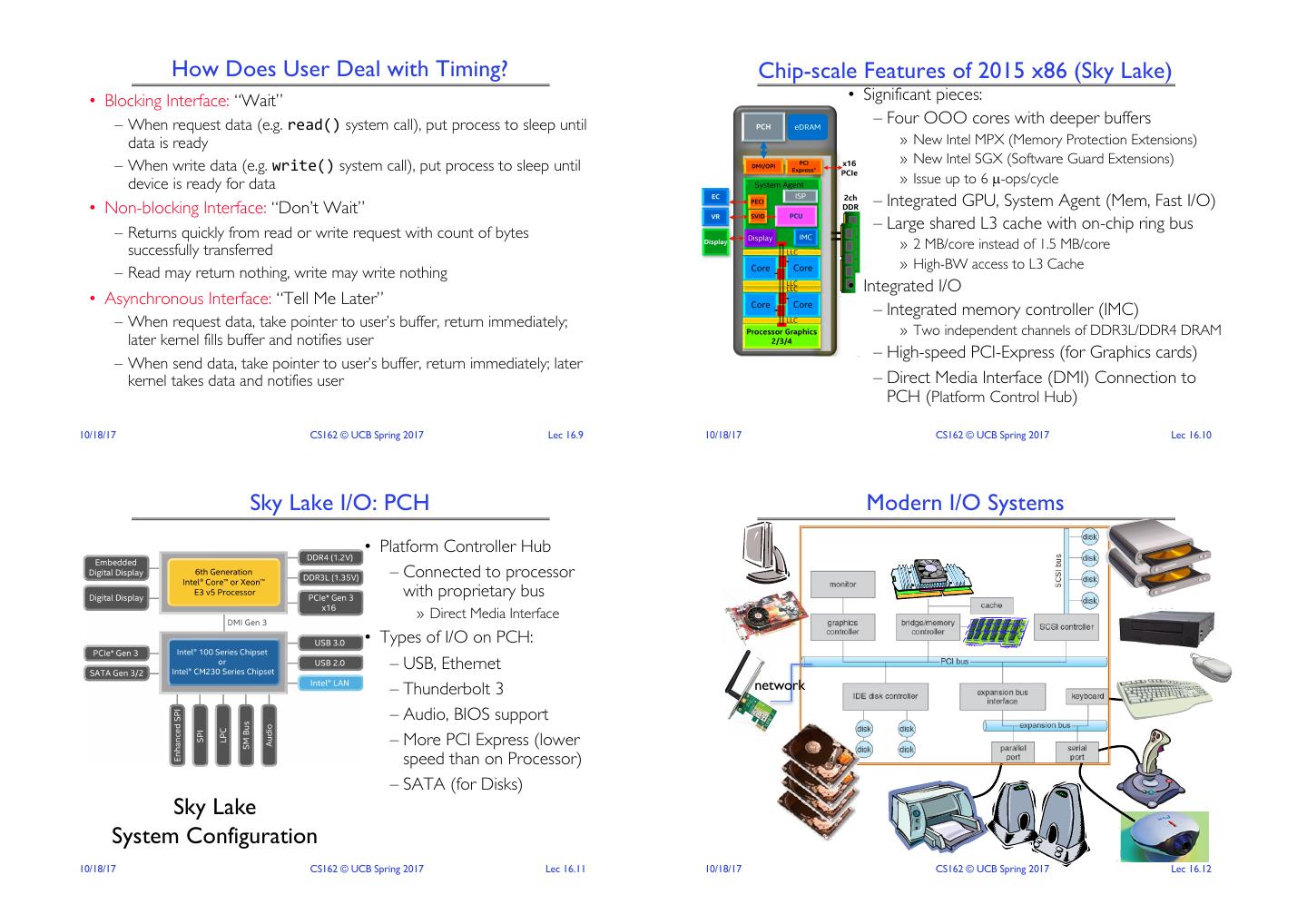

3 . How Does User Deal with Timing?
• Blocking Interface: “Wait”
– When request data (e.g. read() system call), put process to sleep until data is ready
– When write data (e.g. write() system call), put process to sleep until device is ready for data
• Non-blocking Interface: “Don’t Wait”
– Returns quickly from read or write request with count of bytes successfully transferred
– Read may return nothing, write may write nothing
• Asynchronous Interface: “Tell Me Later”
– When request data, take pointer to user’s buffer, return immediately; later kernel fills buffer and notifies user
– When send data, take pointer to user’s buffer, return immediately; later kernel takes data and notifies user

Chip-scale Features of 2015 x86 (Skylake)
• Significant pieces:
– Four OOO cores with deeper buffers
» New Intel MPX (Memory Protection Extensions)
» New Intel SGX (Software Guard Extensions)
» Issue up to 6 µ-ops/cycle
– Integrated GPU, System Agent (Mem, Fast I/O)
– Large shared L3 cache with on-chip ring bus
» 2 MB/core instead of 1.5 MB/core
» High-BW access to L3 Cache
• Integrated I/O
– Integrated memory controller (IMC)
» Two independent channels of DDR3L/DDR4 DRAM
– High-speed PCI-Express (for Graphics cards)
– Direct Media Interface (DMI) Connection to PCH (Platform Controller Hub)

Skylake I/O: PCH
• Platform Controller Hub
– Connected to processor with proprietary bus
» Direct Media Interface
• Types of I/O on PCH:
– USB, Ethernet
– Thunderbolt 3
– Audio, BIOS support
– More PCI Express (lower speed than on Processor)
– SATA (for Disks)
[Figure: Skylake System Configuration]

Modern I/O Systems
[Figure: modern I/O system; the only surviving label is “network”]
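The “Don’t Wait” interface can be tried out on any POSIX system. The following is a minimal sketch, assuming a POSIX environment; the helper names `set_nonblocking` and `try_read` are made up for illustration and are not from the lecture. It shows a read that returns immediately with nothing when no data is ready, instead of putting the caller to sleep.

```c
#include <assert.h>
#include <errno.h>
#include <fcntl.h>
#include <unistd.h>

/* Hypothetical helper: switch a descriptor to non-blocking mode.
 * Returns 0 on success, -1 on error. */
int set_nonblocking(int fd) {
    int flags = fcntl(fd, F_GETFL, 0);
    if (flags == -1) return -1;
    return fcntl(fd, F_SETFL, flags | O_NONBLOCK) == -1 ? -1 : 0;
}

/* Hypothetical helper: attempt a read without sleeping.
 * Returns the number of bytes read (0 means end of file), or -1.
 * When -1 is returned because no data is ready yet, *would_block is
 * set to 1; this is the "read may return nothing" case. */
ssize_t try_read(int fd, void *buf, size_t len, int *would_block) {
    assert(buf != NULL && would_block != NULL);
    ssize_t n = read(fd, buf, len);
    *would_block = (n == -1 && (errno == EAGAIN || errno == EWOULDBLOCK));
    return n;
}
```

A caller would typically create a descriptor (for example with `pipe()`), call `set_nonblocking` on the read end, and then loop on `try_read`, doing other work whenever `would_block` comes back as 1.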

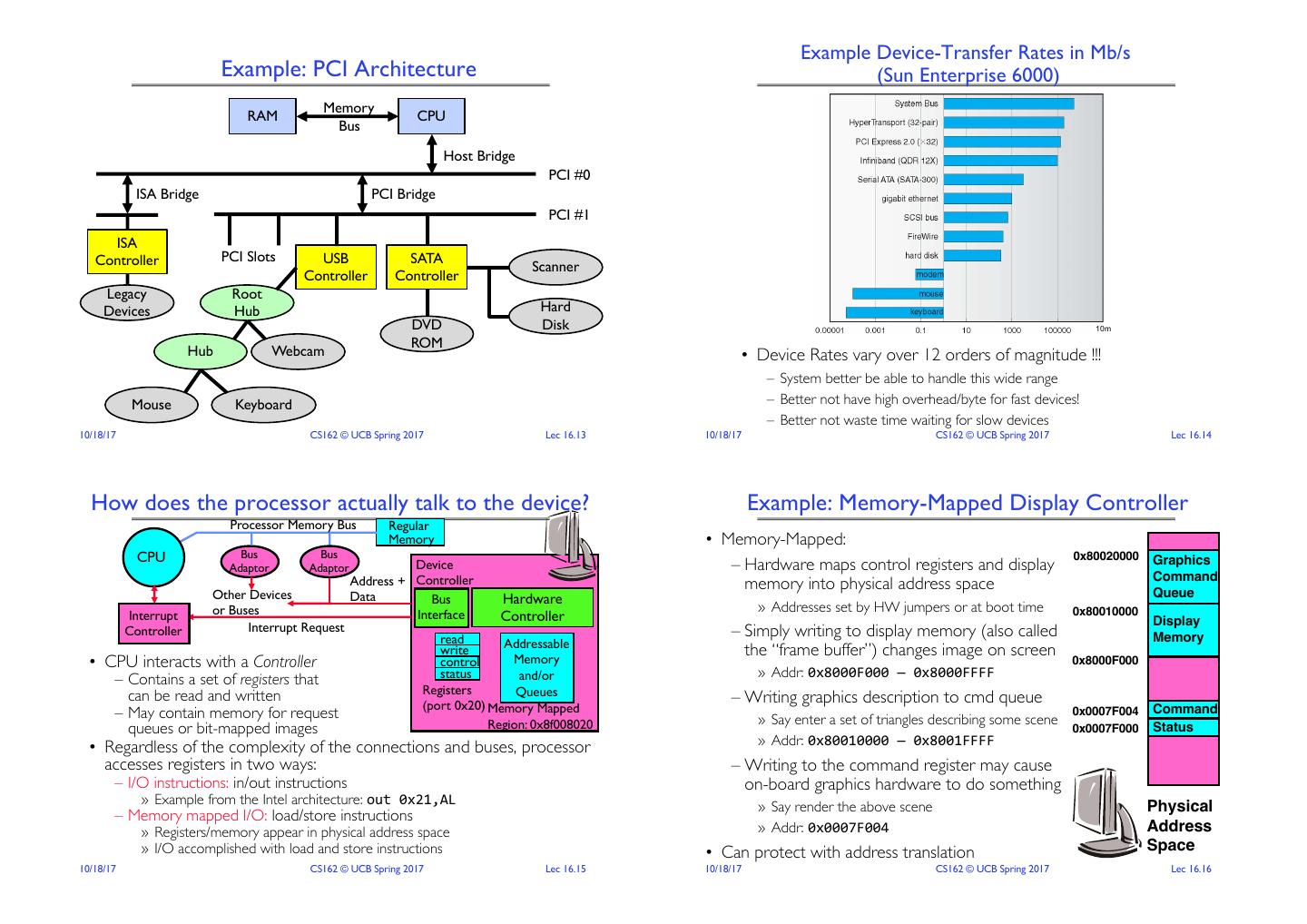

4 . Example Device-Transfer Rates in Mb/s (Sun Enterprise 6000)
[Figure: bar chart of device transfer rates]
• Device Rates vary over 12 orders of magnitude !!!
– System better be able to handle this wide range
– Better not have high overhead/byte for fast devices!
– Better not waste time waiting for slow devices

Example: PCI Architecture
[Figure: CPU and Memory (RAM) on the memory bus; Host Bridge to PCI #0; ISA Bridge to ISA Controller and Legacy Devices; PCI Bridge to PCI #1; PCI Slots, Scanner, USB Controller, SATA Controller; Root Hub and Hub with Webcam, Mouse, Keyboard; Hard Disk, DVD ROM]

How does the processor actually talk to the device?
[Figure: Processor and Regular Memory on the Memory Bus; CPU, Bus Adaptors, Other Devices or Buses; Address + Data; Interrupt Controller and Interrupt Request; Device Controller with Bus Interface, Hardware Controller, read / write / control / status Registers (port 0x20), Addressable Memory and/or Queues; Memory Mapped Region: 0x8f008020]
• CPU interacts with a Controller
– Contains a set of registers that can be read and written
– May contain memory for request queues or bit-mapped images
• Regardless of the complexity of the connections and buses, processor accesses registers in two ways:
– I/O instructions: in/out instructions
» Example from the Intel architecture: out 0x21,AL
– Memory mapped I/O: load/store instructions
» Registers/memory appear in physical address space
» I/O accomplished with load and store instructions

Example: Memory-Mapped Display Controller
• Memory-Mapped:
– Hardware maps control registers and display memory into physical address space
» Addresses set by HW jumpers or at boot time
– Simply writing to display memory (also called the “frame buffer”) changes image on screen
» Addr: 0x8000F000 to 0x8000FFFF
– Writing graphics description to cmd queue
» Say enter a set of triangles describing some scene
» Addr: 0x80010000 to 0x8001FFFF
– Writing to the command register may cause on-board graphics hardware to do something
» Say render the above scene
» Addr: 0x0007F004
• Can protect with address translation
[Figure: Physical Address Space with Graphics Command Queue (0x80010000 to 0x80020000), Display Memory (from 0x8000F000), Command (0x0007F004), Status (0x0007F000)]

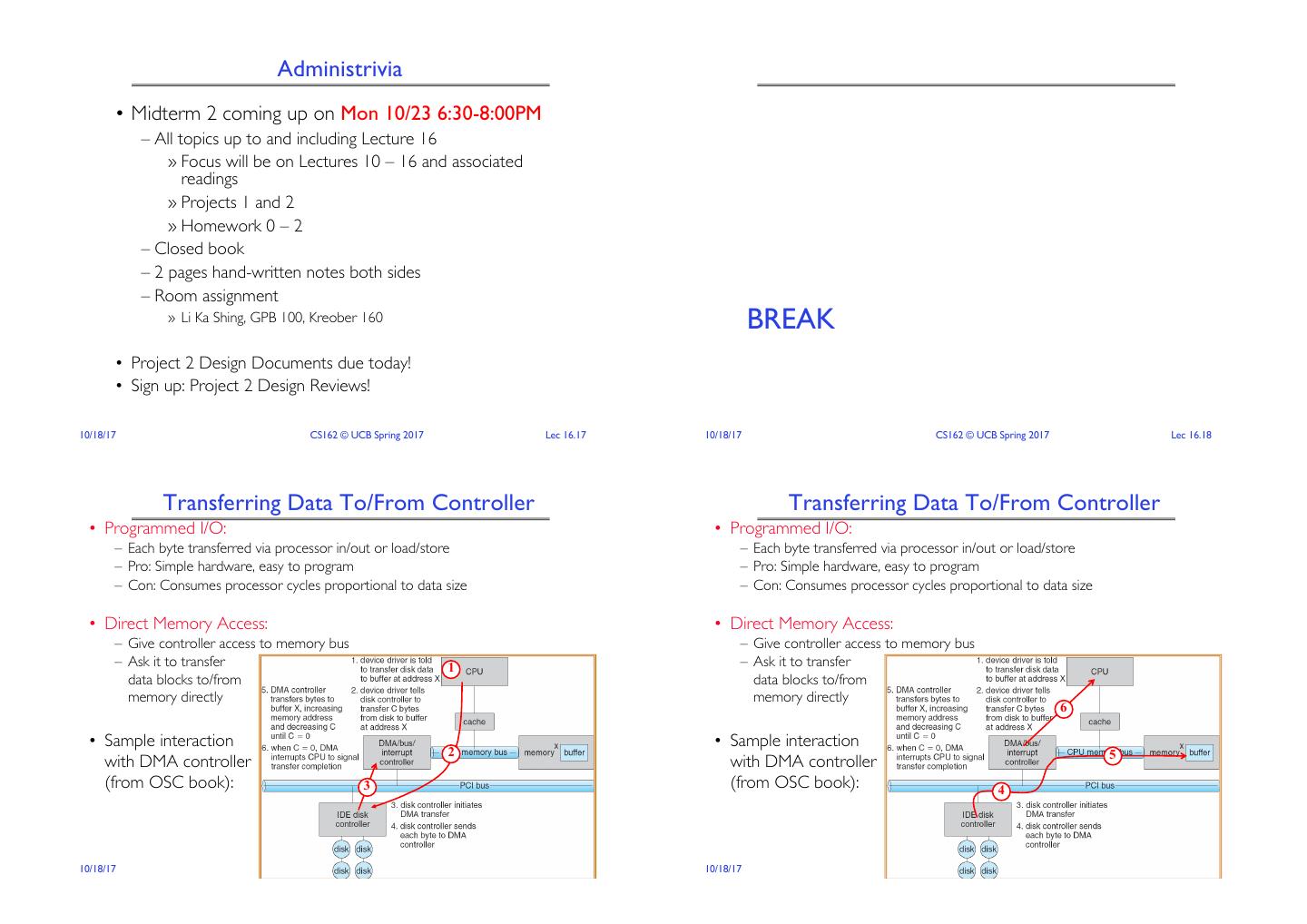

5 . Administrivia
• Midterm 2 coming up on Mon 10/23 6:30-8:00PM
– All topics up to and including Lecture 16
» Focus will be on Lectures 10 – 16 and associated readings
» Projects 1 and 2
» Homework 0 – 2
– Closed book
– 2 pages hand-written notes both sides
– Room assignment
» Li Ka Shing, GPB 100, Kroeber 160
• Project 2 Design Documents due today!
• Sign up: Project 2 Design Reviews!

BREAK

Transferring Data To/From Controller
• Programmed I/O:
– Each byte transferred via processor in/out or load/store
– Pro: Simple hardware, easy to program
– Con: Consumes processor cycles proportional to data size
• Direct Memory Access:
– Give controller access to memory bus
– Ask it to transfer data blocks to/from memory directly
• Sample interaction with DMA controller (from OSC book):
[Figure: DMA transfer shown as six numbered steps, 1 to 6]

6 . I/O Device Notifying the OS
• The OS needs to know when:
– The I/O device has completed an operation
– The I/O operation has encountered an error
• I/O Interrupt:
– Device generates an interrupt whenever it needs service
– Pro: handles unpredictable events well
– Con: interrupts relatively high overhead
• Polling:
– OS periodically checks a device-specific status register
» I/O device puts completion information in status register
– Pro: low overhead
– Con: may waste many cycles on polling if infrequent or unpredictable I/O operations
• Actual devices combine both polling and interrupts
– For instance, a high-bandwidth network adapter:
» Interrupt for first incoming packet
» Poll for following packets until hardware queues are empty

Device Drivers
• Device Driver: Device-specific code in the kernel that interacts directly with the device hardware
– Supports a standard, internal interface
– Same kernel I/O system can interact easily with different device drivers
– Special device-specific configuration supported with the ioctl() system call
• Device Drivers typically divided into two pieces:
– Top half: accessed in call path from system calls
» implements a set of standard, cross-device calls like open(), close(), read(), write(), ioctl(), strategy()
» This is the kernel’s interface to the device driver
» Top half will start I/O to device, may put thread to sleep until finished
– Bottom half: run as interrupt routine
» Gets input or transfers next block of output
» May wake sleeping threads if I/O now complete

Life Cycle of An I/O Request
[Figure: request path through User Program, Kernel I/O Subsystem, Device Driver Top Half, Device Driver Bottom Half, Device Hardware]

Basic Performance Concepts
• Response Time or Latency: Time to perform an operation (s)
• Bandwidth or Throughput: Rate at which operations are performed (op/s)
– Files: MB/s, Networks: Mb/s, Arithmetic: GFLOP/s
• Start up or “Overhead”: time to initiate an operation
• Most I/O operations are roughly linear in n bytes
– Latency(n) = Overhead + n/TransferCapacity

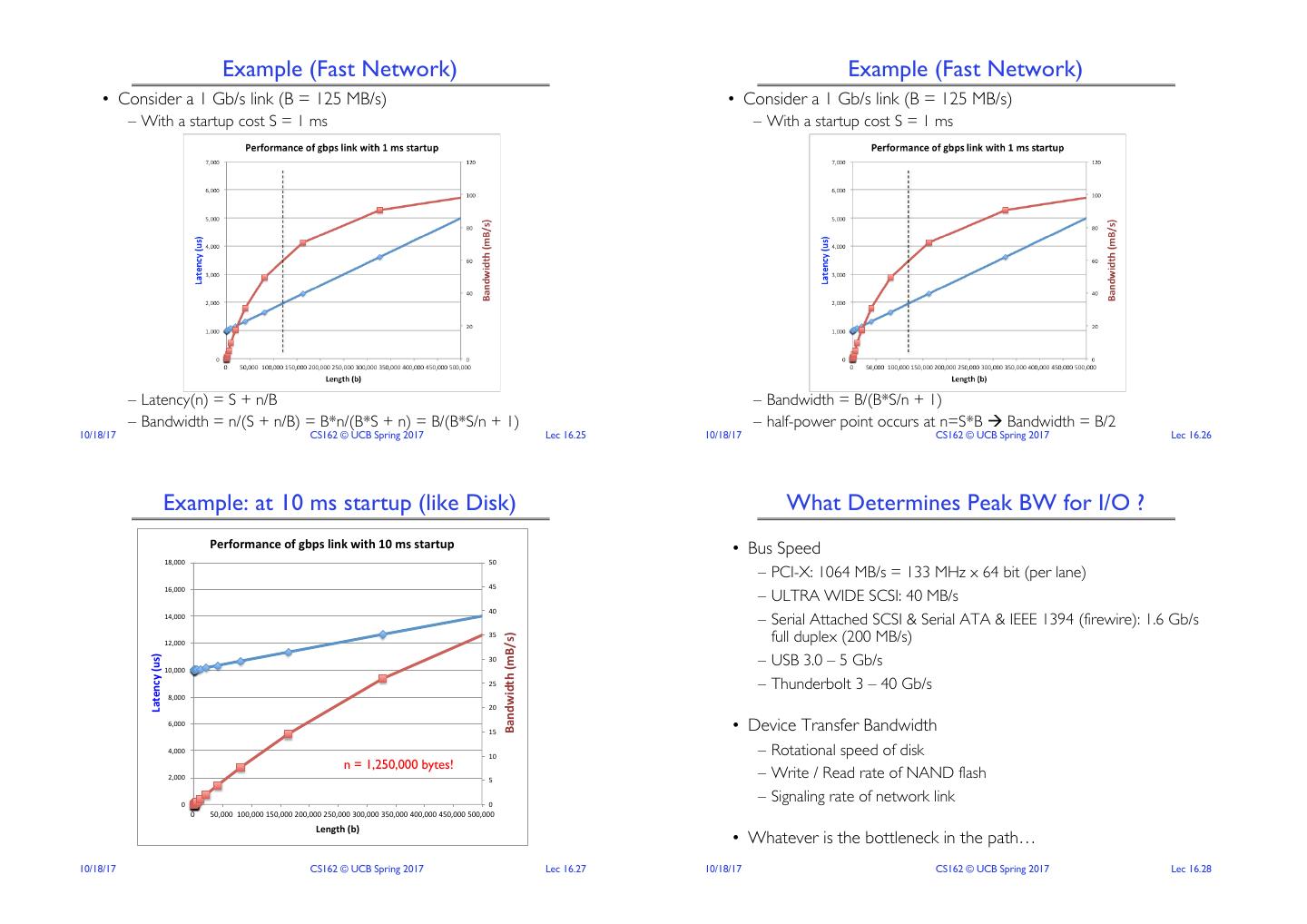

7 . Example (Fast Network)
• Consider a 1 Gb/s link (B = 125 MB/s)
– With a startup cost S = 1 ms
– Latency(n) = S + n/B
– Bandwidth = n/(S + n/B) = B*n/(B*S + n) = B/(B*S/n + 1)
– half-power point occurs at n = S*B → Bandwidth = B/2

Example: at 10 ms startup (like Disk)
[Figure: Performance of gbps link with 10 ms startup; Bandwidth (MB/s) and Latency (µs) plotted against Length (bytes)]
• Half-power point: n = 1,250,000 bytes!

What Determines Peak BW for I/O?
• Bus Speed
– PCI-X: 1064 MB/s = 133 MHz x 64 bit (per lane)
– ULTRA WIDE SCSI: 40 MB/s
– Serial Attached SCSI & Serial ATA & IEEE 1394 (firewire): 1.6 Gb/s full duplex (200 MB/s)
– USB 3.0 – 5 Gb/s
– Thunderbolt 3 – 40 Gb/s
• Device Transfer Bandwidth
– Rotational speed of disk
– Write / Read rate of NAND flash
– Signaling rate of network link
• Whatever is the bottleneck in the path…
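The latency and bandwidth formulas above are easy to check numerically. The sketch below simply transcribes Latency(n) = S + n/B and Bandwidth = n/Latency(n); the function names are invented for illustration, and units are assumed to be seconds, bytes, and bytes per second.

```c
#include <assert.h>

/* Latency(n) = S + n/B, in seconds.
 * startup_s is S, peak_bytes_per_s is B, n_bytes is n. */
double io_latency(double startup_s, double peak_bytes_per_s, double n_bytes) {
    assert(peak_bytes_per_s > 0.0);
    return startup_s + n_bytes / peak_bytes_per_s;
}

/* Effective bandwidth = n / Latency(n), in bytes per second. */
double effective_bandwidth(double startup_s, double peak_bytes_per_s,
                           double n_bytes) {
    return n_bytes / io_latency(startup_s, peak_bytes_per_s, n_bytes);
}

/* Transfer size at which effective bandwidth reaches B/2: n = S*B. */
double half_power_point(double startup_s, double peak_bytes_per_s) {
    return startup_s * peak_bytes_per_s;
}
```

For the 1 Gb/s link with S = 1 ms, `half_power_point(1e-3, 125e6)` comes out at about 125,000 bytes, and `effective_bandwidth` at that size is about 62.5 MB/s, which is B/2. Raising S to 10 ms moves the half-power point to about 1,250,000 bytes, the value marked on the disk-like plot.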

8 . Storage Devices
• Magnetic disks
– Storage that rarely becomes corrupted
– Large capacity at low cost
– Block level random access (except for SMR – later!)
– Slow performance for random access
– Better performance for sequential access
• Flash memory
– Storage that rarely becomes corrupted
– Capacity at intermediate cost (5-20x disk)
– Block level random access
– Good performance for reads; worse for random writes
– Erasure requirement in large blocks
– Wear patterns issue

The Amazing Magnetic Disk
• Unit of Transfer: Sector
– Ring of sectors form a track
– Stack of tracks form a cylinder
– Heads position on cylinders
• Disk Tracks ~ 1µm (micron) wide
– Wavelength of light is ~ 0.5µm
– Resolution of human eye: 50µm
– 100K tracks on a typical 2.5” disk
• Separated by unused guard regions
– Reduces likelihood neighboring tracks are corrupted during writes (still a small non-zero chance)
[Figure: disk anatomy with Spindle, Head, Arm, Arm Assembly, Platter, Surface, Track, Sector, Motor]

Review: Magnetic Disks
• Cylinders: all the tracks under the head at a given point on all surfaces
• Read/write data is a three-stage process:
– Seek time: position the head/arm over the proper track
– Rotational latency: wait for desired sector to rotate under r/w head
– Transfer time: transfer a block of bits (sector) under r/w head
• Seek time = 4-8ms
• One rotation = 8-16ms (3600-7200 RPM)
• Disk Latency = Queueing Time + Controller time + Seek Time + Rotation Time + Xfer Time
[Figure: Request enters the Software Queue (Device Driver), passes through the Hardware Controller, spends Media Time (Seek+Rot+Xfer), and returns a Result; also labels Track, Sector, Head, Cylinder, Platter]

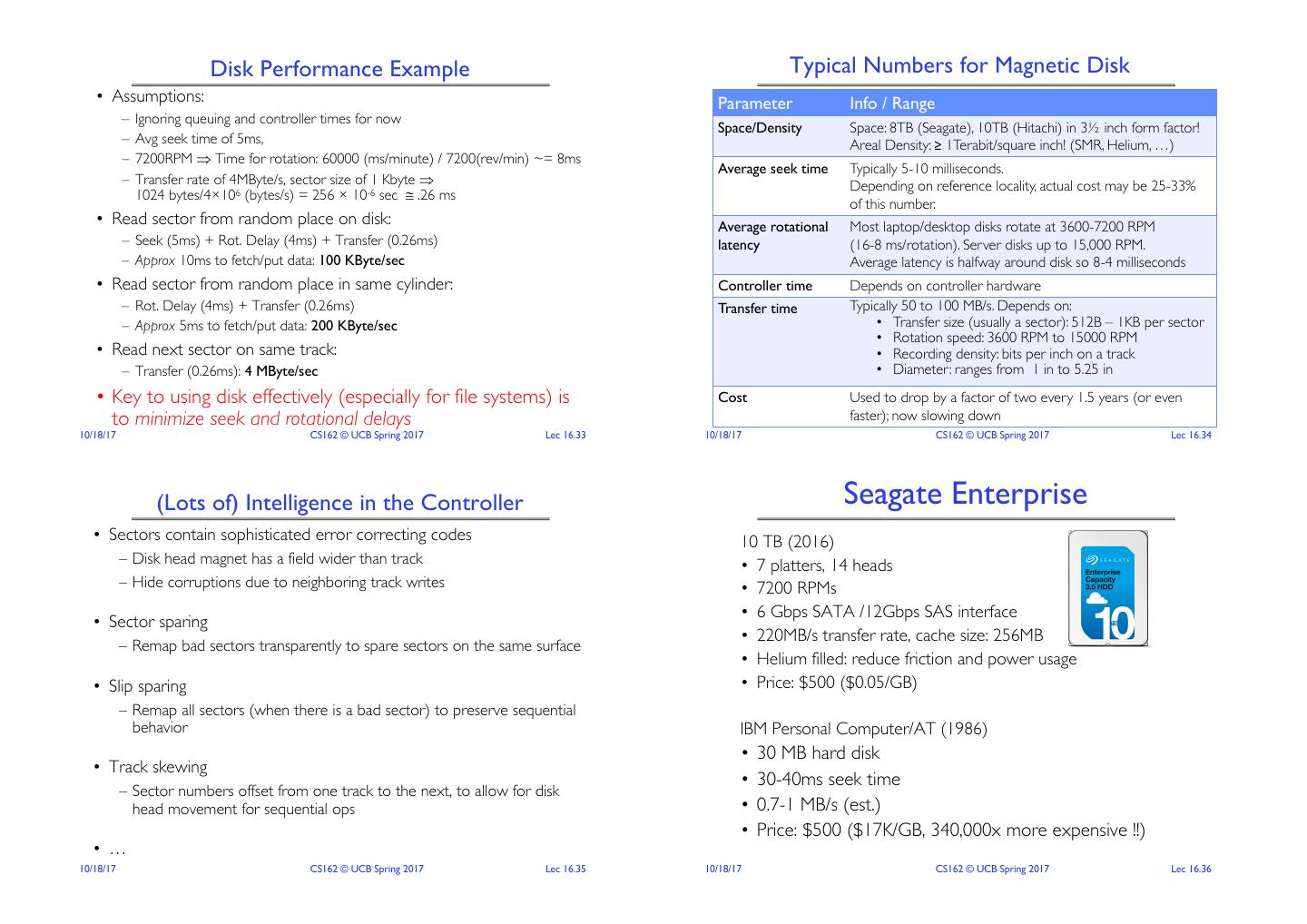

9 . Disk Performance Example
• Assumptions:
– Ignoring queuing and controller times for now
– Avg seek time of 5ms
– 7200RPM ⇒ Time for rotation: 60000 (ms/minute) / 7200 (rev/min) ~= 8ms
– Transfer rate of 4MByte/s, sector size of 1 Kbyte ⇒ 1024 bytes / 4×10^6 (bytes/s) = 256 × 10^-6 sec ≅ .26 ms
• Read sector from random place on disk:
– Seek (5ms) + Rot. Delay (4ms) + Transfer (0.26ms)
– Approx 10ms to fetch/put data: 100 KByte/sec
• Read sector from random place in same cylinder:
– Rot. Delay (4ms) + Transfer (0.26ms)
– Approx 5ms to fetch/put data: 200 KByte/sec
• Read next sector on same track:
– Transfer (0.26ms): 4 MByte/sec
• Key to using disk effectively (especially for file systems) is to minimize seek and rotational delays

Typical Numbers for Magnetic Disk

| Parameter | Info / Range |
| --- | --- |
| Space/Density | Space: 8TB (Seagate), 10TB (Hitachi) in 3½ inch form factor! Areal Density: ≥ 1 Terabit/square inch! (SMR, Helium, …) |
| Average seek time | Typically 5-10 milliseconds. Depending on reference locality, actual cost may be 25-33% of this number. |
| Average rotational latency | Most laptop/desktop disks rotate at 3600-7200 RPM (16-8 ms/rotation). Server disks up to 15,000 RPM. Average latency is halfway around disk so 8-4 milliseconds |
| Controller time | Depends on controller hardware |
| Transfer time | Typically 50 to 100 MB/s. Depends on: transfer size (usually a sector): 512B – 1KB per sector; rotation speed: 3600 RPM to 15000 RPM; recording density: bits per inch on a track; diameter: ranges from 1 in to 5.25 in |
| Cost | Used to drop by a factor of two every 1.5 years (or even faster); now slowing down |

(Lots of) Intelligence in the Controller
• Sectors contain sophisticated error correcting codes
– Disk head magnet has a field wider than track
– Hide corruptions due to neighboring track writes
• Sector sparing
– Remap bad sectors transparently to spare sectors on the same surface
• Slip sparing
– Remap all sectors (when there is a bad sector) to preserve sequential behavior
• Track skewing
– Sector numbers offset from one track to the next, to allow for disk head movement for sequential ops
• …

Seagate Enterprise 10 TB (2016)
• 7 platters, 14 heads
• 7200 RPMs
• 6 Gbps SATA / 12 Gbps SAS interface
• 220MB/s transfer rate, cache size: 256MB
• Helium filled: reduce friction and power usage
• Price: $500 ($0.05/GB)

IBM Personal Computer/AT (1986)
• 30 MB hard disk
• 30-40ms seek time
• 0.7-1 MB/s (est.)
• Price: $500 ($17K/GB, 340,000x more expensive !!)

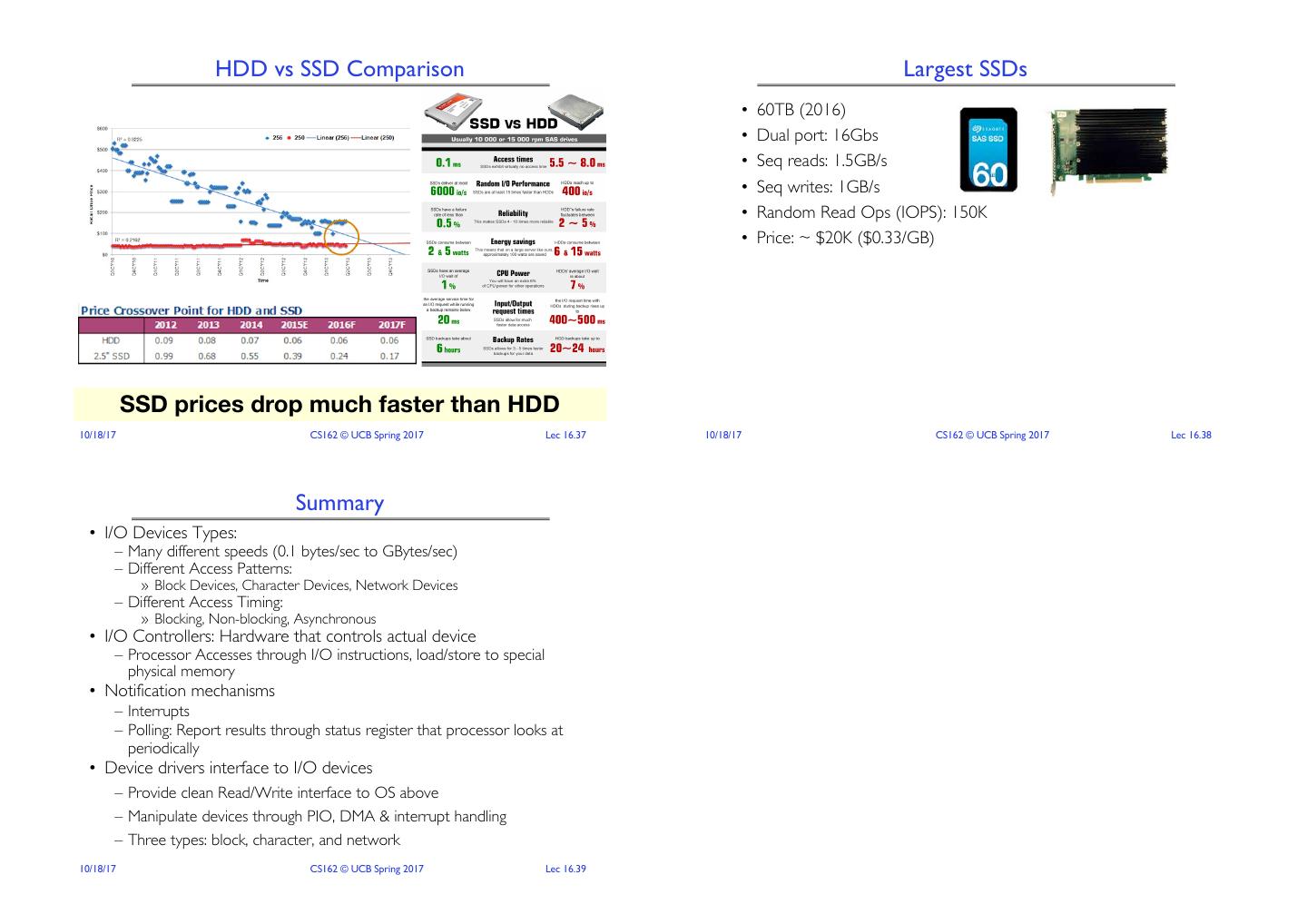

10 . HDD vs SSD Comparison
[Figure: HDD vs SSD comparison]
• SSD prices drop much faster than HDD

Largest SSDs
• 60TB (2016)
• Dual port: 16Gbs
• Seq reads: 1.5GB/s
• Seq writes: 1GB/s
• Random Read Ops (IOPS): 150K
• Price: ~ $20K ($0.33/GB)

Summary
• I/O Devices Types:
– Many different speeds (0.1 bytes/sec to GBytes/sec)
– Different Access Patterns:
» Block Devices, Character Devices, Network Devices
– Different Access Timing:
» Blocking, Non-blocking, Asynchronous
• I/O Controllers: Hardware that controls actual device
– Processor Accesses through I/O instructions, load/store to special physical memory
• Notification mechanisms
– Interrupts
– Polling: Report results through status register that processor looks at periodically
• Device drivers interface to I/O devices
– Provide clean Read/Write interface to OS above
– Manipulate devices through PIO, DMA & interrupt handling
– Three types: block, character, and network