DevOps for Applications in Azure Databricks: Creating Continuous Integration



2 .Creating continuous integration pipelines on Azure using Azure Databricks and Azure DevOps. Premal Shah, Microsoft. #UnifiedAnalytics #SparkAISummit

3 .What is DevOps?

4 .What is DevOps? People. Process. Products. “DevOps is the union of people, process, and products to enable continuous delivery of value to your end users.” Donovan Brown, MSFT PM. Continuous Delivery cycle: • Plan & Track • Develop • Build & Test • Deploy • Operate • Monitor & Learn

5 .What is Azure Databricks? Built with your needs in mind • Increase productivity • Build on a secure, trusted cloud • Scale without limits • Enterprise-grade Azure security • Native integration with Azure services • Live collaboration • Enterprise-grade SLAs • E2E data pipelines using ADF • Integrated billing

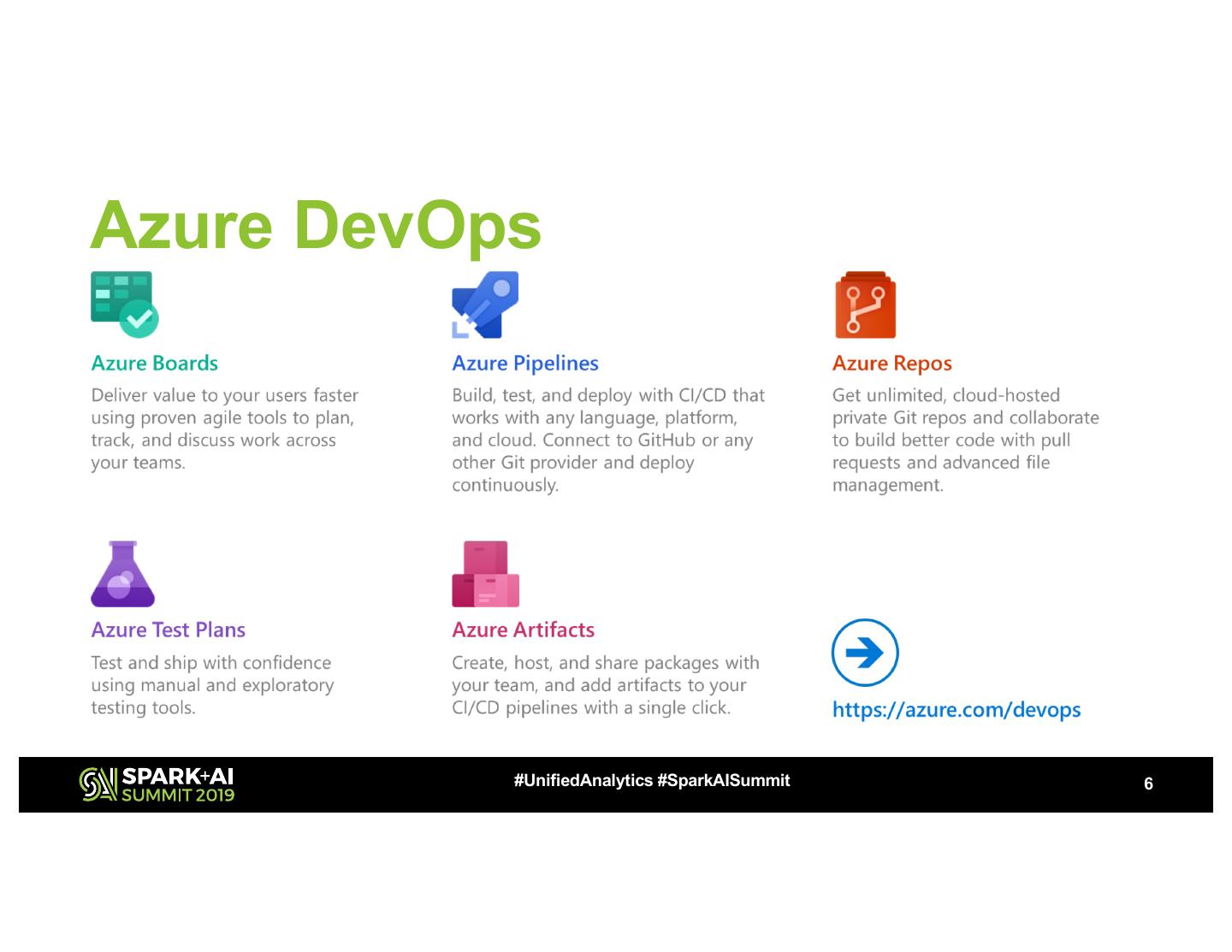

6 .Azure DevOps

7 .Azure DevOps: Choose what you like. Any language, any platform.

8 .Implementation in Azure Databricks Notebooks • Push notebooks from the Azure Databricks Dev WS to the Azure DevOps Repo • Build Pipeline produces an Artifact • Release Pipeline: deploy notebook to staging (Azure Databricks Staging WS) • Execute tests • Deploy notebook to prod (Azure Databricks Prod WS)

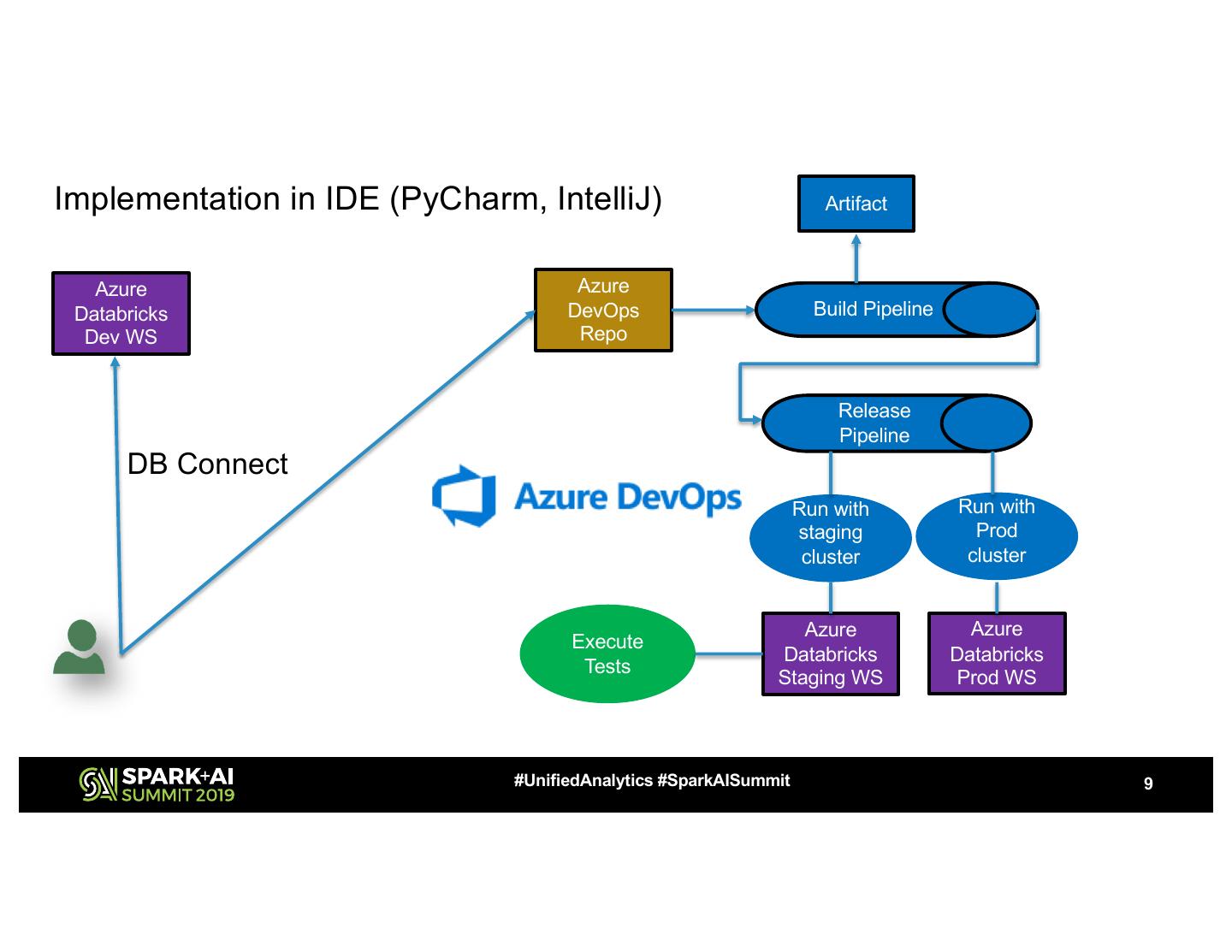
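
The release flow on slide 8 (deploy to staging, execute tests, then deploy to prod) can be sketched as a small gate. This is a minimal illustration, not the pipeline shown in the talk: `promote`, `deploy`, and `run_tests` are hypothetical names, and in a real setup the two callables would wrap Azure DevOps release tasks or Databricks API calls.

```python
from typing import Callable, List


def promote(deploy: Callable[[str], None],
            run_tests: Callable[[str], bool]) -> List[str]:
    """Deploy to staging, test there, and deploy to prod only if tests pass.

    `deploy` and `run_tests` are hypothetical hooks supplied by the caller.
    Returns the list of steps that actually ran, in order.
    """
    steps = []

    deploy("staging")
    steps.append("deploy:staging")

    if not run_tests("staging"):
        # Stop here: a failing staging run must never reach prod.
        steps.append("tests:failed")
        return steps

    steps.append("tests:passed")
    deploy("prod")
    steps.append("deploy:prod")
    return steps
```

Passing the deployment and test steps in as functions keeps the gate itself easy to unit test without touching any workspace.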

9 .Implementation in IDE (PyCharm, IntelliJ) • Develop in the IDE against the Azure Databricks Dev WS using DB Connect • Commit to the Azure DevOps Repo • Build Pipeline produces an Artifact • Release Pipeline: run with staging cluster (Azure Databricks Staging WS) • Execute tests • Run with prod cluster (Azure Databricks Prod WS)

10 .Azure Databricks REST API/CLI • Provides an easy-to-use interface to the Azure Databricks platform. The CLI (an open source project) is built on top of the REST APIs. – Workspace API: deploy notebooks from Azure DevOps to Azure Databricks – DBFS API: deploy libraries from Azure DevOps to Azure Databricks – Jobs API: execute notebooks and Spark code once deployed
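
The three APIs on slide 10 map onto three REST calls. The sketch below only builds the requests, using the standard library, so it can be inspected without a workspace. The endpoint paths and field names follow the Databricks REST API 2.0 as I understand it; the host, token, paths, cluster ID, and all function names are placeholders chosen for illustration, not values from the talk.

```python
import base64
import json
import urllib.request

API_PREFIX = "/api/2.0"


def _post(host, token, endpoint, payload):
    """Build (but do not send) an authenticated JSON POST request."""
    return urllib.request.Request(
        url=host.rstrip("/") + API_PREFIX + endpoint,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": "Bearer " + token,
            "Content-Type": "application/json",
        },
        method="POST",
    )


def import_notebook_request(host, token, source_code, workspace_path):
    """Workspace API: import a Python notebook from source text."""
    payload = {
        "path": workspace_path,
        "format": "SOURCE",
        "language": "PYTHON",
        # The API expects the notebook body base64-encoded.
        "content": base64.b64encode(source_code.encode("utf-8")).decode("ascii"),
        "overwrite": True,
    }
    return _post(host, token, "/workspace/import", payload)


def upload_library_request(host, token, file_bytes, dbfs_path):
    """DBFS API: upload a small file (for example a wheel or JAR) inline."""
    payload = {
        "path": dbfs_path,
        "contents": base64.b64encode(file_bytes).decode("ascii"),
        "overwrite": True,
    }
    return _post(host, token, "/dbfs/put", payload)


def run_notebook_request(host, token, cluster_id, notebook_path):
    """Jobs API: submit a one-time run of a deployed notebook."""
    payload = {
        "run_name": "ci-notebook-run",  # hypothetical run name
        "existing_cluster_id": cluster_id,
        "notebook_task": {"notebook_path": notebook_path},
    }
    return _post(host, token, "/jobs/runs/submit", payload)
```

A pipeline step would send each request with `urllib.request.urlopen(req)` and check the response. Inline DBFS uploads are size-limited, so larger libraries would likely need the streaming upload calls or the CLI instead.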

11 .Demo

12 .DevOps for ML: Goals • Repeatability of model creation & behavior • Evaluation of model predictions • Managing different model versions and files • Operationalization of the model • Monitoring of training and scoring pipelines
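
Two of these goals, evaluating predictions and managing model versions, often meet in a promotion check inside the pipeline. The sketch below is one possible shape for such a check, not the approach demonstrated in the talk: `ModelVersion`, `should_promote`, and the use of accuracy as the single metric are all assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class ModelVersion:
    """Hypothetical record of a trained model and its evaluation score."""
    name: str
    version: int
    accuracy: float


def should_promote(candidate: ModelVersion,
                   current: Optional[ModelVersion],
                   min_gain: float = 0.0) -> bool:
    """Return True if the candidate should replace the current model.

    With no model deployed yet, the candidate is always promoted.
    Otherwise it must beat the current accuracy by at least `min_gain`.
    """
    if current is None:
        return True
    return candidate.accuracy >= current.accuracy + min_gain
```

A release stage could call `should_promote` after the scoring run and skip the deployment step when it returns False.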


14 .Demo

15 .Summary • Two approaches – Implementation in Azure Databricks notebooks – Implementation in IDE • Azure DevOps to build CI/CD pipelines (you can use its services selectively) • REST/CLI APIs • Model CI/CD on Azure – Azure Databricks: data preparation and model training – Azure ML: model deployment and management – Azure DevOps: CI/CD pipeline

16 .Call to action • Build a CI/CD pipeline using Azure DevOps • Azure Databricks documentation • Azure DevOps pipelines • Incorporate DevOps in your Azure Databricks implementation

17 .Thank you! “It is not the answer that enlightens, but the question.” Eugène Ionesco
