HBase Off heaping

1. HBase Off heaping
Anoop Sam John (Intel), Ramkrishna S Vasudevan (Intel)

2. HBase Cache
HBase caches data for better read performance, keeping it local to the RegionServer process.
- L1: on-heap LRU cache
  - Larger cache sizes require a larger Java heap, which leads to GC issues
- L2: BucketCache
  - LRU eviction
  - Backed by off-heap memory or a file
  - Can be larger than the L1 allocation
  - Not constrained by the Java heap size
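To make the L1 trade-off concrete, here is a minimal sketch of a byte-bounded LRU block cache. `OnHeapLruCache` is a hypothetical class, not HBase's `LruBlockCache`; it only shows that every cached block is a `byte[]` on the Java heap, so raising the cache budget raises the heap the GC has to manage.

```java
import java.util.Iterator;
import java.util.LinkedHashMap;
import java.util.Map;

// Hypothetical, simplified L1-style block cache (not HBase's LruBlockCache).
// Blocks are byte[] objects on the Java heap, bounded by a total byte budget.
class OnHeapLruCache {
    private final long maxBytes;
    private long usedBytes = 0;
    // accessOrder = true: iteration runs from least to most recently accessed
    private final LinkedHashMap<String, byte[]> blocks = new LinkedHashMap<>(16, 0.75f, true);

    OnHeapLruCache(long maxBytes) {
        this.maxBytes = maxBytes;
    }

    synchronized void put(String key, byte[] block) {
        byte[] old = blocks.put(key, block);
        if (old != null) {
            usedBytes -= old.length;
        }
        usedBytes += block.length;
        // Evict least recently used blocks until the cache fits its budget again
        Iterator<Map.Entry<String, byte[]>> it = blocks.entrySet().iterator();
        while (usedBytes > maxBytes && it.hasNext()) {
            Map.Entry<String, byte[]> eldest = it.next();
            usedBytes -= eldest.getValue().length;
            it.remove();
        }
    }

    synchronized byte[] get(String key) {
        return blocks.get(key); // a hit also marks the block as most recently used
    }

    synchronized long usedBytes() {
        return usedBytes;
    }
}
```

A larger `maxBytes` here translates directly into more live heap objects, which is the pressure the off-heap L2 BucketCache is meant to remove.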

3. Bucket Cache - Reads
Diagram: read requests to Region1 and Region2 pass through the scanner layers, which read from the off-heap BucketCache (bucket slots held in Java ByteBuffers) block by block, copying each block into an on-heap block.
- Less throughput than L1
- GC impacts
- Latency is not predictable

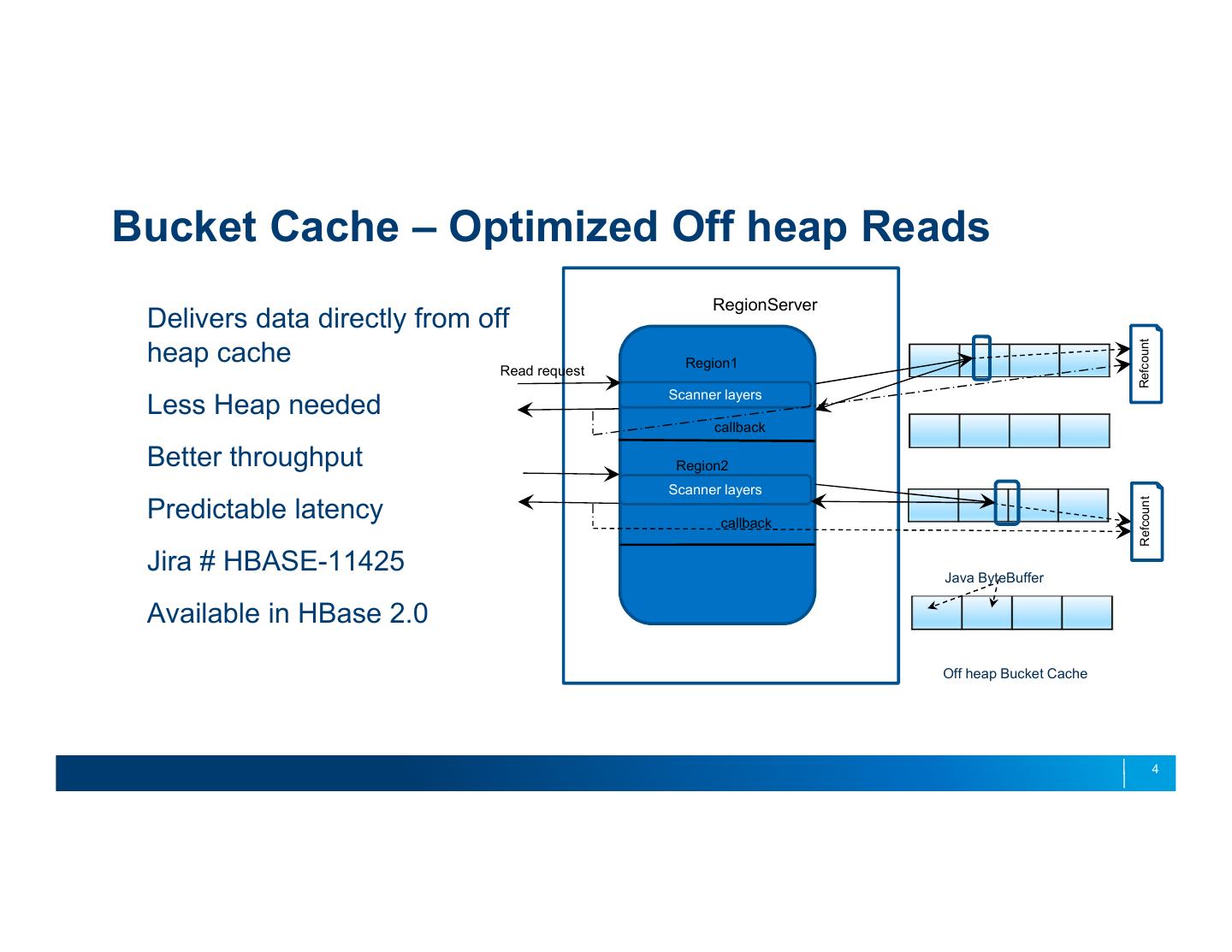
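The copy-on-read behavior can be sketched as follows, assuming a single direct `ByteBuffer` split into fixed-size slots. `CopyingBucketCache` and its methods are hypothetical names, not HBase's `BucketCache` API; the `bytesCopiedToHeap` counter makes visible the on-heap garbage that every read produces.

```java
import java.nio.ByteBuffer;
import java.util.ArrayDeque;
import java.util.HashMap;
import java.util.Map;

// Hypothetical, simplified off-heap cache with fixed-size slots (not HBase's BucketCache).
// It models the read path on the slide: each read copies the block onto the heap.
class CopyingBucketCache {
    private final ByteBuffer arena; // one direct (off-heap) buffer split into slots
    private final int slotSize;
    private final ArrayDeque<Integer> freeSlots = new ArrayDeque<>();
    private final Map<String, int[]> index = new HashMap<>(); // key -> {slot, length}
    long bytesCopiedToHeap = 0; // on-heap garbage produced by reads

    CopyingBucketCache(int slotSize, int slots) {
        this.slotSize = slotSize;
        this.arena = ByteBuffer.allocateDirect(slotSize * slots);
        for (int i = 0; i < slots; i++) {
            freeSlots.add(i);
        }
    }

    boolean cache(String key, byte[] block) {
        if (index.containsKey(key) || block.length > slotSize || freeSlots.isEmpty()) {
            return false;
        }
        int slot = freeSlots.poll();
        ByteBuffer dst = arena.duplicate(); // own position/limit, shared off-heap memory
        dst.position(slot * slotSize);
        dst.put(block);
        index.put(key, new int[] {slot, block.length});
        return true;
    }

    // Copies the block out of its off-heap slot into a fresh on-heap buffer
    ByteBuffer read(String key) {
        int[] loc = index.get(key);
        if (loc == null) {
            return null;
        }
        byte[] onHeap = new byte[loc[1]];
        ByteBuffer src = arena.duplicate();
        src.position(loc[0] * slotSize);
        src.get(onHeap);
        bytesCopiedToHeap += onHeap.length;
        return ByteBuffer.wrap(onHeap);
    }
}
```

Reading the same block twice allocates two on-heap copies, so read-heavy workloads keep feeding the young generation even though the data itself lives off heap.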

4. Bucket Cache - Optimized Off heap Reads
Diagram: the scanner layers for Region1 and Region2 read blocks directly from the off-heap BucketCache (Java ByteBuffers); each block is reference counted, and a callback releases the reference when the reader is done.
- Delivers data directly from the off-heap cache
- Less heap needed
- Better throughput
- Predictable latency
- Jira HBASE-11425, available in HBase 2.0
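A minimal sketch of the refcount-plus-callback idea, assuming fixed-size slots in one direct buffer. `RefCountedBucketCache`, `read`, `release`, and `evict` are hypothetical names and this is not the HBASE-11425 implementation. Readers receive a read-only view of the off-heap bytes instead of a copy, and a slot whose eviction is requested while readers hold it is freed only after the last `release`.

```java
import java.nio.ByteBuffer;
import java.util.ArrayDeque;
import java.util.HashMap;
import java.util.Map;

// Hypothetical sketch of zero-copy, reference-counted reads (not HBase's BucketCache API).
class RefCountedBucketCache {
    private static final class Entry {
        final int slot;
        final int length;
        int refCount = 0;
        boolean evictRequested = false;

        Entry(int slot, int length) {
            this.slot = slot;
            this.length = length;
        }
    }

    private final ByteBuffer arena; // one direct (off-heap) buffer split into slots
    private final int slotSize;
    private final ArrayDeque<Integer> freeSlots = new ArrayDeque<>();
    private final Map<String, Entry> index = new HashMap<>();

    RefCountedBucketCache(int slotSize, int slots) {
        this.slotSize = slotSize;
        this.arena = ByteBuffer.allocateDirect(slotSize * slots);
        for (int i = 0; i < slots; i++) {
            freeSlots.add(i);
        }
    }

    synchronized boolean cache(String key, byte[] block) {
        if (index.containsKey(key) || block.length > slotSize || freeSlots.isEmpty()) {
            return false;
        }
        int slot = freeSlots.poll();
        ByteBuffer dst = arena.duplicate();
        dst.position(slot * slotSize);
        dst.put(block);
        index.put(key, new Entry(slot, block.length));
        return true;
    }

    // No copy: returns a read-only view; the caller must call release(key) when done
    synchronized ByteBuffer read(String key) {
        Entry e = index.get(key);
        if (e == null || e.evictRequested) {
            return null;
        }
        e.refCount++;
        ByteBuffer view = arena.duplicate();
        view.position(e.slot * slotSize);
        view.limit(e.slot * slotSize + e.length);
        return view.slice().asReadOnlyBuffer();
    }

    // The callback a reader invokes when finished; completes any deferred eviction
    synchronized void release(String key) {
        Entry e = index.get(key);
        if (e == null || e.refCount == 0) {
            throw new IllegalStateException("release without matching read: " + key);
        }
        e.refCount--;
        if (e.refCount == 0 && e.evictRequested) {
            free(key, e);
        }
    }

    // Frees the slot now if unused, otherwise defers until the last reader releases it
    synchronized boolean evict(String key) {
        Entry e = index.get(key);
        if (e == null) {
            return false;
        }
        if (e.refCount == 0) {
            free(key, e);
            return true;
        }
        e.evictRequested = true;
        return false;
    }

    synchronized int freeSlotCount() {
        return freeSlots.size();
    }

    private void free(String key, Entry e) {
        index.remove(key);
        freeSlots.add(e.slot);
    }
}
```

The refcount is what makes the zero-copy view safe: without it, an evicted slot could be reused and overwritten while a scanner still holds a view into it.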

5. Alibaba: Old Performance Data With L2 Cache
- From an A/B test cluster with 400+ nodes, on simulated data
- 12 GB off-heap L2 cache
- Inconsistent throughput and latency, with dips
- HBase reads from L2 copy data onto the heap, creating more garbage and more GC work

6. Performance Data After Off heaping the Read Path
- Patches backported to an hbase-1.2 based codebase
- Average throughput improved from 18 to 25 million QPS (30% improvement)
- Linear throughput and predictable latency
- In production since October 2016
- Used during Singles' Day 2016, with peaks of 100K QPS on a single RegionServer

7. HBase Writes
- Cells accumulate in the memstore
  - By default, a region flushes when its memstore size reaches 128 MB
- Global memstore heap size
  - Defaults to 40% of Xmx
- MSLAB chunks and pool
  - On-heap chunks of 2 MB
  - Cell data in the memstore is copied into chunks
  - Reduces fragmentation
  - A pool lets chunks be reused
Diagram: a RegionServer holds Regions 1 to N; each Store has a memstore whose cells live in chunks drawn from the shared MSLAB chunk pool.
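The MSLAB idea can be sketched as a bump-pointer allocator over large chunks plus a pool that hands chunks back out after a flush. `ChunkPool` and `MemStoreLab` are hypothetical names, not HBase's MSLAB classes, and the sketch simply rejects cells larger than a chunk. The `offHeap` flag is an assumption added so the same sketch also covers the off-heap chunk pool described later.

```java
import java.nio.ByteBuffer;
import java.util.ArrayDeque;
import java.util.ArrayList;
import java.util.List;

// Hypothetical, simplified MSLAB: cell bytes are copied into large chunks with a
// bump pointer, and retired chunks go back to a shared pool for reuse.
class ChunkPool {
    final int chunkSize; // the slide uses 2 MB chunks; the test uses tiny ones
    private final boolean offHeap;
    private final ArrayDeque<ByteBuffer> pool = new ArrayDeque<>();
    int chunksCreated = 0;

    ChunkPool(int chunkSize, boolean offHeap) {
        this.chunkSize = chunkSize;
        this.offHeap = offHeap;
    }

    synchronized ByteBuffer getChunk() {
        ByteBuffer chunk = pool.poll();
        if (chunk == null) {
            chunksCreated++;
            chunk = offHeap ? ByteBuffer.allocateDirect(chunkSize) : ByteBuffer.allocate(chunkSize);
        }
        chunk.clear(); // reset the bump pointer (position) to 0
        return chunk;
    }

    synchronized void putBack(ByteBuffer chunk) {
        pool.add(chunk);
    }
}

class MemStoreLab {
    private final ChunkPool pool;
    private final List<ByteBuffer> usedChunks = new ArrayList<>();
    private ByteBuffer current;

    MemStoreLab(ChunkPool pool) {
        this.pool = pool;
    }

    // Copies the cell bytes into the current chunk and returns a view over the copy
    ByteBuffer copyCell(byte[] cell) {
        if (cell.length > pool.chunkSize) {
            throw new IllegalArgumentException("cell larger than a chunk");
        }
        if (current == null || current.remaining() < cell.length) {
            current = pool.getChunk();
            usedChunks.add(current);
        }
        int offset = current.position();
        current.put(cell);
        ByteBuffer view = current.duplicate();
        view.position(offset);
        view.limit(offset + cell.length);
        return view.slice();
    }

    // Call only after the memstore is flushed: views into these chunks become invalid
    void close() {
        for (ByteBuffer chunk : usedChunks) {
            pool.putBack(chunk);
        }
        usedChunks.clear();
        current = null;
    }
}
```

Because many small cells share a few large, long-lived chunks, the heap sees a handful of uniform allocations instead of many scattered ones, which is where the reduced fragmentation comes from.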

8. Write Path Offheaping (HBASE-15179, in HBase 2.0)
- Request data is read into reusable off-heap ByteBuffers from a ByteBuffer pool
  - Response cell blocks already use this pool; requests now use the same off-heap ByteBuffers, each 64 KB in size
  - Reduces garbage and GC
  - Using off-heap buffers avoids the temporary off-heap copy that NIO makes for heap buffers
- Protobuf upgrade
  - The latest PB 3.x version supports off-heap ByteBuffers throughout
  - Shaded PB jars avoid possible version conflicts
  - A new PB ByteStream works over one or more off-heap ByteBuffers
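A minimal sketch of reading one request into pooled 64 KB direct buffers, assuming a blocking `ReadableByteChannel` and a request length already known from the RPC header. `DirectBufferPool`, `take`, `giveBack`, and `readRequest` are hypothetical names, not HBase's own pool API. A request larger than 64 KB simply spans several buffers, which is why the parsing layer must work over one or more ByteBuffers.

```java
import java.io.IOException;
import java.nio.ByteBuffer;
import java.nio.channels.ReadableByteChannel;
import java.util.ArrayDeque;
import java.util.ArrayList;
import java.util.List;

// Hypothetical pool of fixed-size direct ByteBuffers. Reading straight into direct
// buffers lets NIO skip its temporary direct-buffer copy for channel reads.
class DirectBufferPool {
    static final int BUFFER_SIZE = 64 * 1024;
    private final ArrayDeque<ByteBuffer> free = new ArrayDeque<>();
    int allocated = 0;

    synchronized ByteBuffer take() {
        ByteBuffer b = free.poll();
        if (b == null) {
            b = ByteBuffer.allocateDirect(BUFFER_SIZE);
            allocated++;
        }
        b.clear();
        return b;
    }

    synchronized void giveBack(ByteBuffer b) {
        free.add(b);
    }

    // Reads exactly requestLength bytes into as many pooled buffers as needed.
    // Assumes a blocking channel (a non-blocking one could return 0 and spin here).
    List<ByteBuffer> readRequest(ReadableByteChannel ch, int requestLength) throws IOException {
        List<ByteBuffer> bufs = new ArrayList<>();
        int remaining = requestLength;
        try {
            while (remaining > 0) {
                ByteBuffer b = take();
                b.limit(Math.min(BUFFER_SIZE, remaining));
                bufs.add(b);
                while (b.hasRemaining()) {
                    if (ch.read(b) < 0) {
                        throw new IOException("unexpected end of stream");
                    }
                }
                b.flip(); // ready for the request parser
                remaining -= b.remaining();
            }
        } catch (IOException e) {
            for (ByteBuffer b : bufs) {
                giveBack(b); // do not leak pooled buffers on failure
            }
            throw e;
        }
        return bufs;
    }
}
```

Once the request has been processed, the buffers go back to the pool, so steady-state request handling allocates no new buffers.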

9. Write Path Offheaping (HBASE-15179)
- Off-heap MSLAB chunk pool
  - 2 MB off-heap chunks
- Global off-heap memstore size
  - New config: hbase.regionserver.offheap.global.memstore.size
- Cell data size and heap overhead are tracked separately
  - Region flush decisions use cell data size alone (128 MB default flush threshold)
  - Global-level checks use both data size and heap overhead
Diagram: a RegionServer holds Regions 1 to N; each memstore takes chunks from the shared off-heap MSLAB chunk pool.

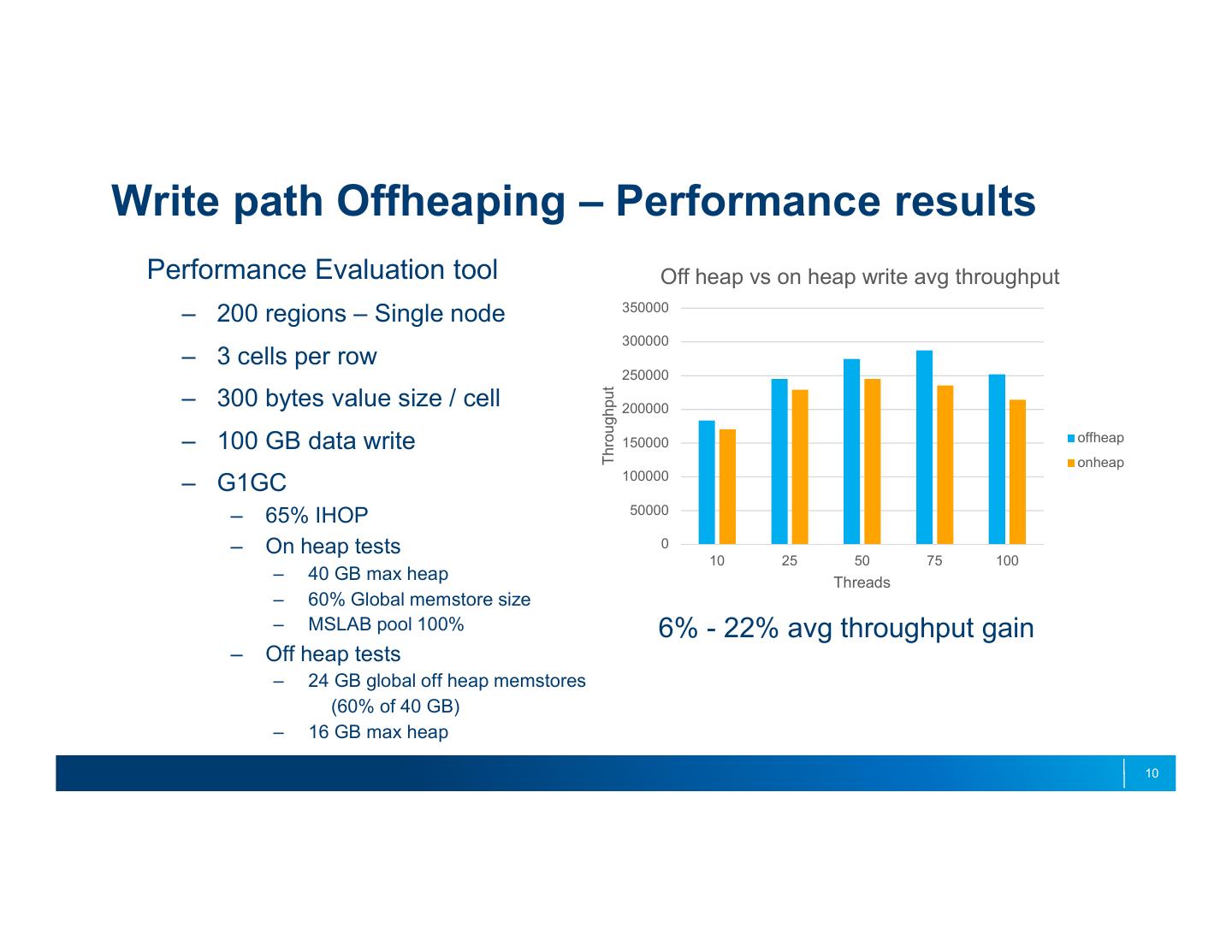
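The split accounting can be sketched as follows. `MemStoreAccounting` and its nested `Region` are hypothetical names, not HBase's internal accounting classes. The sketch assumes that the off-heap limit comes from hbase.regionserver.offheap.global.memstore.size (which, as far as we know, is given in MB) and that a separate on-heap budget bounds the per-cell heap overhead.

```java
// Hypothetical accounting sketch: off-heap cell data and on-heap overhead are
// tracked separately, per region and globally.
class MemStoreAccounting {
    static final long MB = 1024L * 1024;
    static final long REGION_FLUSH_SIZE = 128 * MB; // default per-region flush threshold

    static final class Region {
        long dataSize;     // cell bytes living in off-heap MSLAB chunks
        long heapOverhead; // on-heap bookkeeping (cell objects, index entries)
    }

    private final long globalOffHeapLimit; // assumed: offheap.global.memstore.size converted from MB
    private final long globalOnHeapLimit;  // assumed: on-heap budget for memstore overhead
    private long globalDataSize;
    private long globalHeapOverhead;

    MemStoreAccounting(long globalOffHeapLimit, long globalOnHeapLimit) {
        this.globalOffHeapLimit = globalOffHeapLimit;
        this.globalOnHeapLimit = globalOnHeapLimit;
    }

    void add(Region r, long cellDataBytes, long cellHeapOverhead) {
        r.dataSize += cellDataBytes;
        r.heapOverhead += cellHeapOverhead;
        globalDataSize += cellDataBytes;
        globalHeapOverhead += cellHeapOverhead;
    }

    // Region-level decision: cell data size alone
    boolean shouldFlushRegion(Region r) {
        return r.dataSize >= REGION_FLUSH_SIZE;
    }

    // Global decision: off-heap data size or on-heap overhead can each force flushes
    boolean aboveGlobalLimit() {
        return globalDataSize >= globalOffHeapLimit || globalHeapOverhead >= globalOnHeapLimit;
    }

    void flushed(Region r) {
        globalDataSize -= r.dataSize;
        globalHeapOverhead -= r.heapOverhead;
        r.dataSize = 0;
        r.heapOverhead = 0;
    }
}
```

Keeping the two sizes apart means a workload of many tiny cells can still trigger a global flush on heap overhead, even when the off-heap data is far below its limit.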

10. Write Path Offheaping - Performance Results
[Chart: off heap vs on heap write average throughput; x axis: threads (10, 25, 50, 75, 100); y axis: throughput; series: offheap, onheap]
Setup (Performance Evaluation tool):
- 200 regions on a single node
- 3 cells per row, 300-byte value per cell
- 100 GB of data written
- G1GC with 65% IHOP
- On-heap tests
  - 40 GB max heap
  - 60% global memstore size
  - MSLAB pool 100%
- Off-heap tests
  - 24 GB global off-heap memstores (60% of 40 GB)
  - 16 GB max heap
Result: 6% to 22% average throughput gain for off heap.

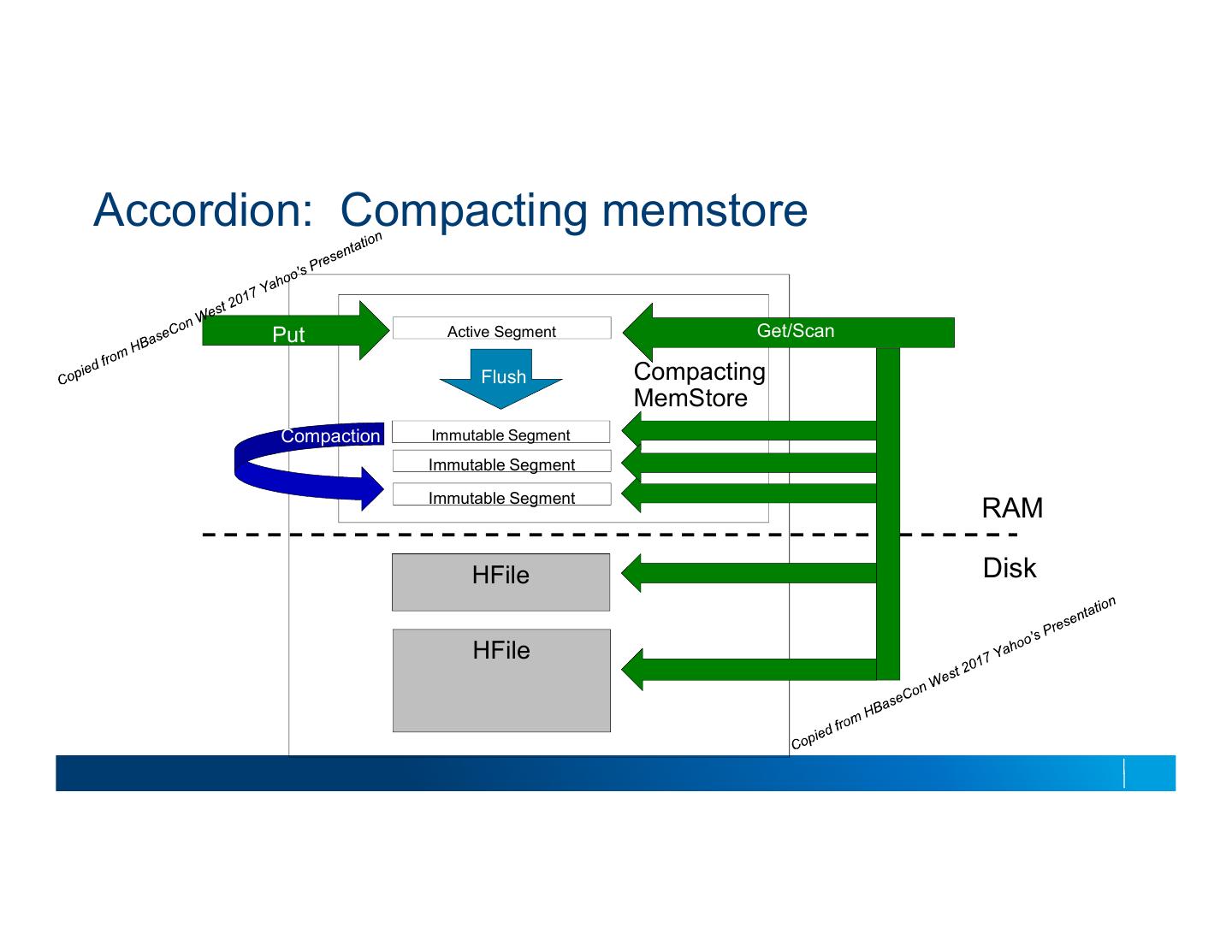
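The memory settings above line up as follows. This is only arithmetic on the slide's numbers; `WriteBenchmarkBudget` is a hypothetical helper, and the MB unit for hbase.regionserver.offheap.global.memstore.size is an assumption.

```java
// Worked arithmetic for the benchmark memory settings (whole GB only).
final class WriteBenchmarkBudget {
    // Memstore share of the heap, e.g. 60% of a 40 GB heap
    static long memstoreGb(long maxHeapGb, long memstorePercent) {
        return maxHeapGb * memstorePercent / 100;
    }

    // GB to MB (the unit assumed for hbase.regionserver.offheap.global.memstore.size)
    static long toMb(long gb) {
        return gb * 1024;
    }

    public static void main(String[] args) {
        long onHeapMemstore = memstoreGb(40, 60); // 24 GB of memstore inside a 40 GB heap
        long onHeapRest = 40 - onHeapMemstore;     // 16 GB of heap for everything else
        long offHeapMemstore = 24;                 // the same 24 GB, now outside the heap
        long offHeapMaxHeap = 16;                  // equals what the on-heap run left over
        System.out.println("on heap:  memstore " + onHeapMemstore + " GB, rest " + onHeapRest + " GB");
        System.out.println("off heap: memstore " + offHeapMemstore + " GB, heap " + offHeapMaxHeap
                + " GB, total " + (offHeapMemstore + offHeapMaxHeap) + " GB");
        System.out.println("hbase.regionserver.offheap.global.memstore.size = " + toMb(offHeapMemstore));
    }
}
```

Running `main` prints a 24 GB memstore with 16 GB left in the on-heap case, 24 GB + 16 GB = 40 GB in the off-heap case, and a config value of 24576. Both runs therefore have the same total memory budget; the difference is whether the GC has to manage the 24 GB of memstore data.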

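11. Accordion: Compacting Memstore
Diagram: Puts go to the active segment of the compacting memstore. The active segment becomes an immutable segment, and immutable segments in RAM are merged by in-memory compaction. Get/Scan reads from all segments. A flush writes the data from RAM to HFiles on disk.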
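The segment life cycle in the diagram can be sketched as a toy model. `ToyCompactingMemStore` is hypothetical and heavily simplified: it is not HBase's `CompactingMemStore`, it keeps only the latest value per key, and its "HFiles" are in-memory maps. It shows the active segment turning into immutable segments, in-memory compaction merging them, and reads checking newer data first.

```java
import java.util.ArrayList;
import java.util.Collections;
import java.util.Iterator;
import java.util.LinkedList;
import java.util.List;
import java.util.NavigableMap;
import java.util.TreeMap;
import java.util.concurrent.ConcurrentSkipListMap;

// Hypothetical, heavily simplified model of an Accordion-style compacting memstore.
class ToyCompactingMemStore {
    private final int inMemoryFlushThreshold; // active-segment entries before an in-memory flush
    private ConcurrentSkipListMap<String, String> active = new ConcurrentSkipListMap<>();
    // Immutable segments, newest first
    private final LinkedList<NavigableMap<String, String>> pipeline = new LinkedList<>();
    // Stand-in for HFiles on disk, oldest first
    final List<NavigableMap<String, String>> hfiles = new ArrayList<>();

    ToyCompactingMemStore(int inMemoryFlushThreshold) {
        this.inMemoryFlushThreshold = inMemoryFlushThreshold;
    }

    void put(String key, String value) {
        active.put(key, value);
        if (active.size() >= inMemoryFlushThreshold) {
            inMemoryFlush();
        }
    }

    // The active segment becomes an immutable segment at the head of the pipeline
    void inMemoryFlush() {
        if (active.isEmpty()) {
            return;
        }
        pipeline.addFirst(Collections.unmodifiableNavigableMap(active));
        active = new ConcurrentSkipListMap<>();
    }

    // In-memory compaction: merge immutable segments, keeping only the newest value per key
    void compactPipeline() {
        TreeMap<String, String> merged = new TreeMap<>();
        Iterator<NavigableMap<String, String>> oldestFirst = pipeline.descendingIterator();
        while (oldestFirst.hasNext()) {
            merged.putAll(oldestFirst.next()); // newer segments overwrite older values
        }
        pipeline.clear();
        if (!merged.isEmpty()) {
            pipeline.add(Collections.unmodifiableNavigableMap(merged));
        }
    }

    // Get: active segment, then immutable segments newest to oldest, then "disk" newest to oldest
    String get(String key) {
        String v = active.get(key);
        if (v != null) {
            return v;
        }
        for (NavigableMap<String, String> segment : pipeline) {
            v = segment.get(key);
            if (v != null) {
                return v;
            }
        }
        for (int i = hfiles.size() - 1; i >= 0; i--) {
            v = hfiles.get(i).get(key);
            if (v != null) {
                return v;
            }
        }
        return null;
    }

    // Flush to disk: everything in memory is written out as one new "HFile"
    void flushToDisk() {
        inMemoryFlush();
        compactPipeline();
        if (!pipeline.isEmpty()) {
            hfiles.add(pipeline.removeFirst());
        }
    }

    int pipelineSegments() {
        return pipeline.size();
    }
}
```

Overwritten values are dropped during in-memory compaction, so repeated updates to the same keys shrink before they ever reach disk.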

12. Write Path Offheaping - Performance Results: Off Heap with Compacting Memstore
- PE tool with 50 threads writing 25 GB
- In-memory flushes and flattening reduce heap overhead, which lets the memstore grow bigger
- The off-heap MSLAB pool combined with compacting memstores performs best
[Chart: average throughput vs value size per cell (100, 500, 1024); series: On heap; On heap + Compacting Memstore; On heap + Compacting Memstore + MSLAB off; offheap + Compacting Memstore]
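Flattening, as we understand it in Accordion, replaces an immutable segment's skip-list index with a flat sorted array, which removes the per-entry skip-list node objects from the heap. `FlatSegment` below is a hypothetical illustration of that idea, not HBase's own flat index class, and it assumes the input segment is no longer being modified.

```java
import java.util.Arrays;
import java.util.Map;
import java.util.NavigableMap;

// Hypothetical illustration of flattening: an immutable segment's sorted map is
// copied into two parallel sorted arrays and searched with binary search.
final class FlatSegment {
    private final String[] keys;
    private final String[] values;

    // The segment must be immutable: its size and contents may not change during the copy
    FlatSegment(NavigableMap<String, String> immutableSegment) {
        int n = immutableSegment.size();
        keys = new String[n];
        values = new String[n];
        int i = 0;
        for (Map.Entry<String, String> e : immutableSegment.entrySet()) { // ascending key order
            keys[i] = e.getKey();
            values[i] = e.getValue();
            i++;
        }
    }

    String get(String key) {
        int idx = Arrays.binarySearch(keys, key); // same natural ordering as the source map
        return idx >= 0 ? values[idx] : null;
    }

    int size() {
        return keys.length;
    }
}
```

Lookups stay O(log n), but the index becomes two arrays instead of a graph of nodes, which is the kind of heap-overhead reduction that lets the memstore grow larger before flushing.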

13. References
- https://blogs.apache.org/hbase/
- https://blogs.apache.org/hbase/entry/offheaping_the_read_path_in
Questions?
