16/07 - Cassandra backups and restorations using Ansible

1 .Cassandra backups and restorations using Ansible
Dr. Joshua Wickman, Database Engineer, Knewton

2 .Relevant technologies
● AWS infrastructure
● Deployment and configuration management with Ansible
  ○ Ansible is built on:
    ■ Python
    ■ YAML
    ■ SSH
    ■ Jinja2 templating
  ○ Agentless: less complexity

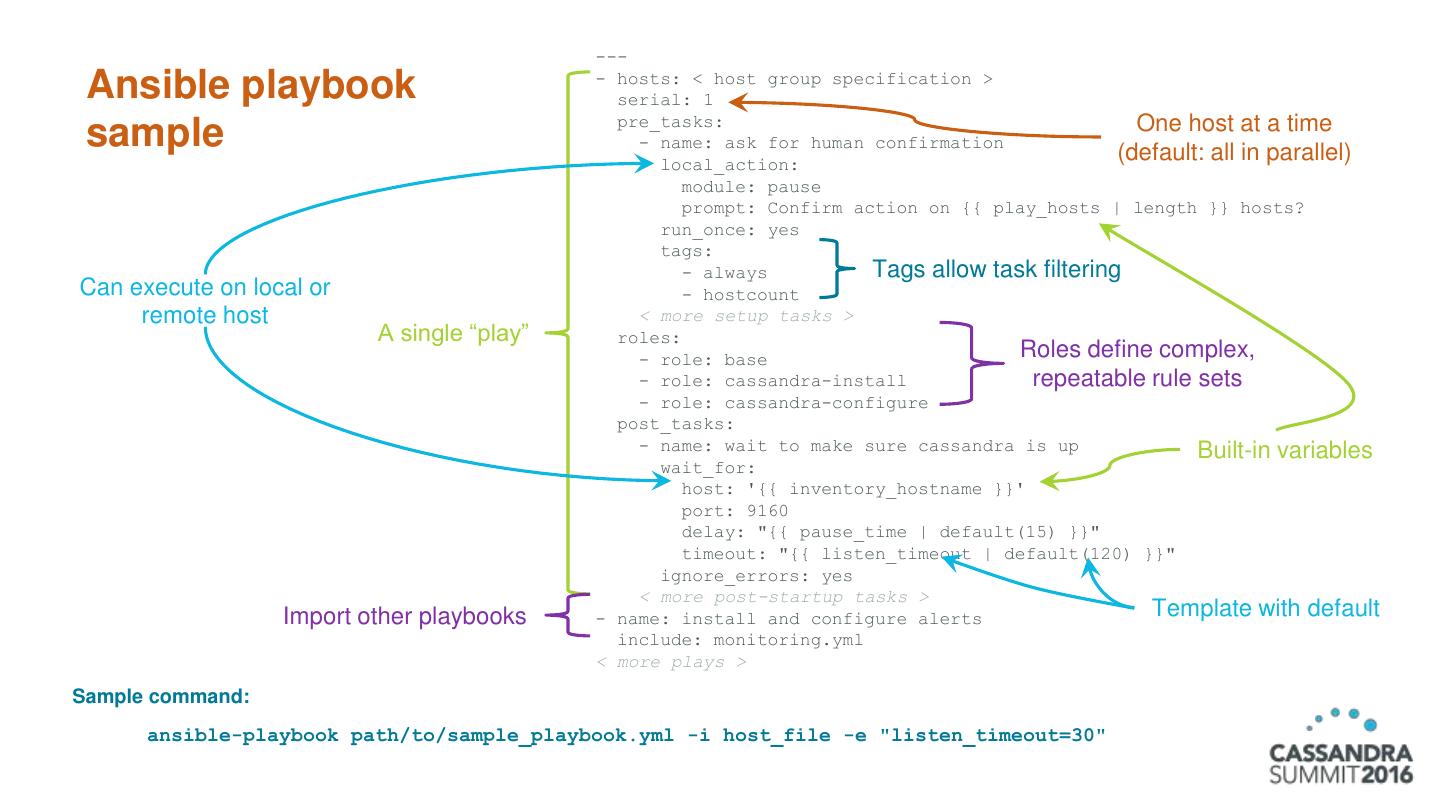

3 .Ansible playbook sample

    ---
    - hosts: <host group specification>       # a single "play"
      serial: 1                               # one host at a time (default: all in parallel)
      pre_tasks:
        - name: ask for human confirmation
          local_action:                       # can execute on local or remote host
            module: pause
            prompt: Confirm action on {{ play_hosts | length }} hosts?
          run_once: yes
          tags:                               # tags allow task filtering
            - always
            - hostcount
        < more setup tasks >
      roles:                                  # roles define complex, repeatable rule sets
        - role: base
        - role: cassandra-install
        - role: cassandra-configure
      post_tasks:
        - name: wait to make sure cassandra is up
          wait_for:
            host: '{{ inventory_hostname }}'  # built-in variables
            port: 9160
            delay: "{{ pause_time | default(15) }}"          # template with default
            timeout: "{{ listen_timeout | default(120) }}"
          ignore_errors: yes
        < more post-startup tasks >

    - name: install and configure alerts      # import other playbooks
      include: monitoring.yml

    < more plays >

Sample command:

    ansible-playbook path/to/sample_playbook.yml -i host_file -e "listen_timeout=30"

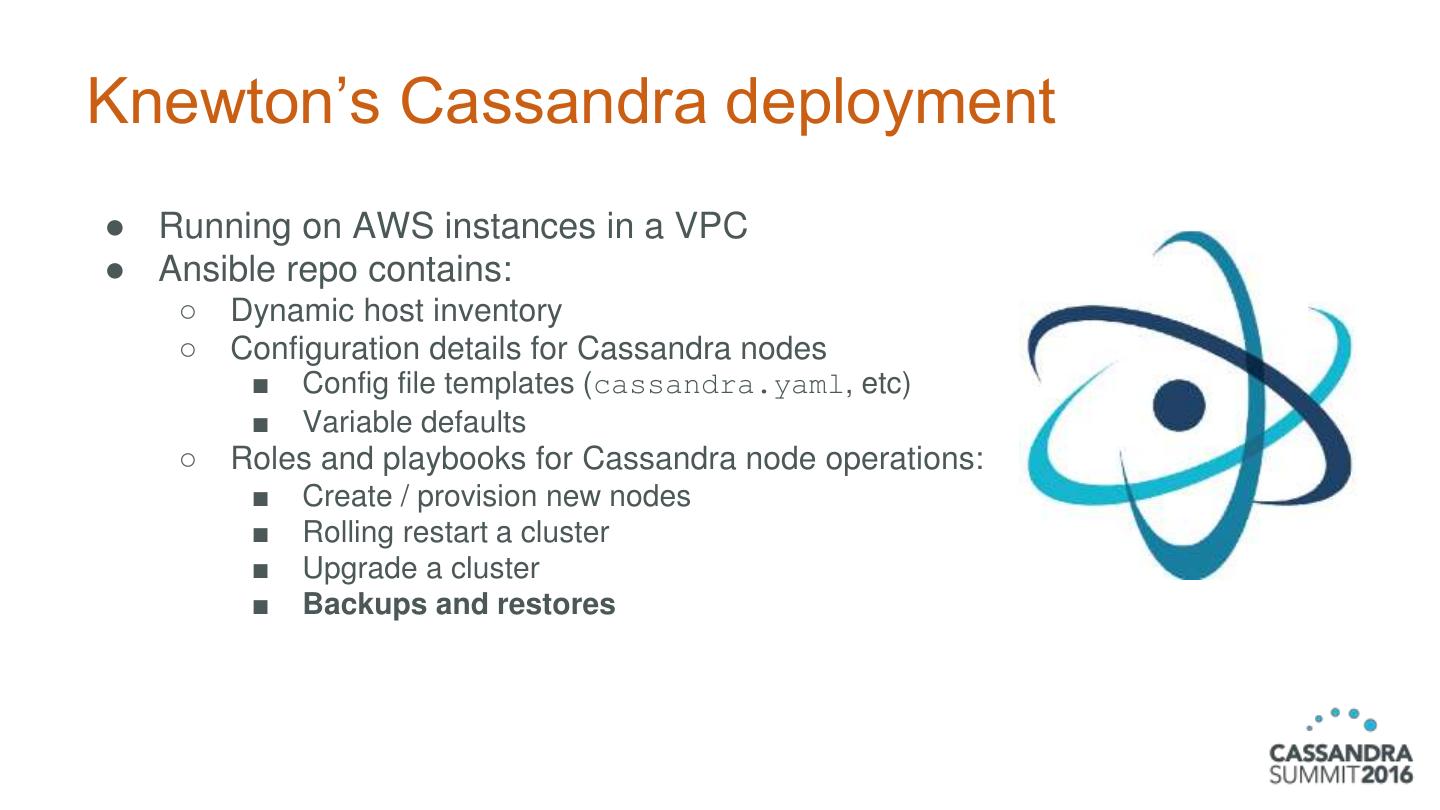

4 .Knewton’s Cassandra deployment
● Running on AWS instances in a VPC
● Ansible repo contains:
  ○ Dynamic host inventory
  ○ Configuration details for Cassandra nodes
    ■ Config file templates (cassandra.yaml, etc.)
    ■ Variable defaults
  ○ Roles and playbooks for Cassandra node operations:
    ■ Create / provision new nodes
    ■ Rolling restart of a cluster
    ■ Upgrade a cluster
    ■ Backups and restores

5 .Backups for disaster recovery
● Data loss
● Data corruption
● AZ/rack loss
● Data center loss

6 .But that’s not all...
Restored backups are also useful for:
● Benchmarking
● Data warehousing
● Batch jobs
● Load testing
● Corruption testing
● Tracking down incident causes

7 .Backups
Those sound like a good idea. I can get those for you, no sweat!

8 .Backups: requirements
● Simple to use
● Centralized, yet distributed (easy with Ansible)
● Low impact
● Built with restores in mind (obvious, but super important to get right!)

9 .Backup playbook
1. Ansible run initiated
2. Commands sent to each Cassandra node over SSH
3. nodetool snapshot on each node
4. Snapshot uploaded to S3 via AWS CLI
5. Metadata gathered centrally by Ansible and uploaded to S3
6. Backup retention policies enforced by a separate process

Diagram labels: Ansible, SSH, Cassandra cluster, AWS CLI, AWS S3, retention enforcement.
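Steps 3 and 4 boil down to two commands per node. The sketch below builds them as argument lists in Python. It is only an illustration: the S3 key layout (`cluster/snapshot_id/hostname/keyspace/table`), the bucket name, and the function names are assumptions, not details from the talk. The `nodetool snapshot -t` and `aws s3 cp --recursive` invocations are standard CLI usage.

```python
from pathlib import PurePosixPath
from typing import List


def snapshot_command(snapshot_id: str) -> List[str]:
    """argv for snapshotting all keyspaces on a node, tagged with the snapshot ID."""
    return ["nodetool", "snapshot", "-t", snapshot_id]


def upload_command(table_dir: str, snapshot_id: str, bucket: str,
                   cluster: str, hostname: str) -> List[str]:
    """argv for copying one table's snapshot directory to S3 with the AWS CLI.

    The destination layout is a hypothetical convention chosen for this sketch.
    """
    table = PurePosixPath(table_dir)
    # Cassandra keeps snapshots under <table dir>/snapshots/<tag>.
    source = table / "snapshots" / snapshot_id
    # The last two path components of a table directory are keyspace and table.
    keyspace, table_name = table.parts[-2], table.parts[-1]
    dest = f"s3://{bucket}/{cluster}/{snapshot_id}/{hostname}/{keyspace}/{table_name}/"
    return ["aws", "s3", "cp", str(source), dest, "--recursive"]
```

In an Ansible run, commands like these would be executed on each node over SSH, so the upload work stays distributed while control stays central.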

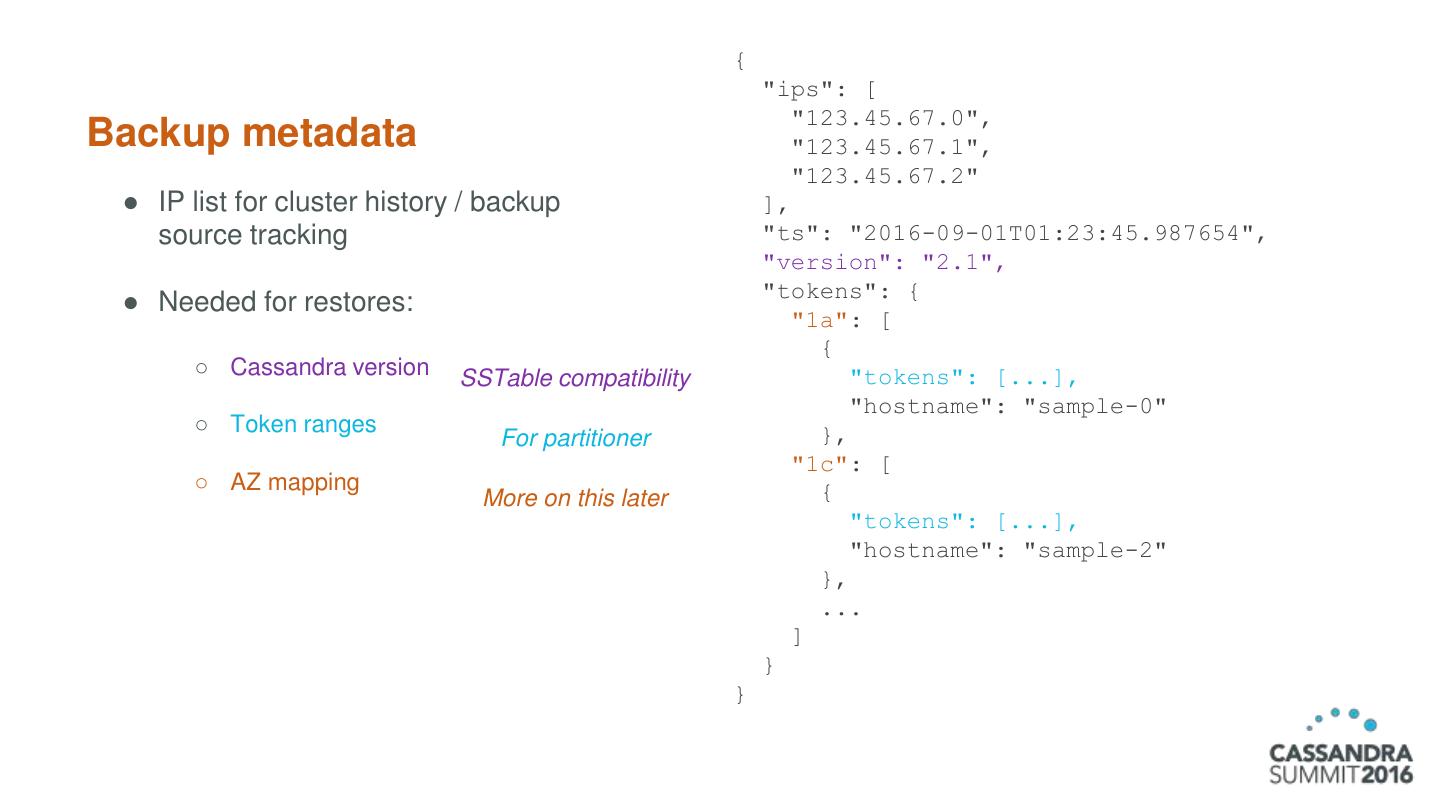

10 .Backup metadata
● IP list for cluster history / backup source tracking
● Needed for restores:
  ○ Cassandra version (SSTable compatibility)
  ○ Token ranges (for partitioner)
  ○ AZ mapping (more on this later)

    {
      "ips": [
        "123.45.67.0",
        "123.45.67.1",
        "123.45.67.2"
      ],
      "ts": "2016-09-01T01:23:45.987654",
      "version": "2.1",
      "tokens": {
        "1a": [
          {
            "tokens": [...],
            "hostname": "sample-0"
          }
        ],
        "1c": [
          {
            "tokens": [...],
            "hostname": "sample-2"
          },
          ...
        ]
      }
    }
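As a rough illustration of how a manifest with this shape could be assembled, the following Python sketch groups per-node facts by AZ. The input format (a list of dicts with `ip`, `hostname`, `az`, and `tokens` keys) and the function name are assumptions made for this example. The talk does not show how Ansible gathers or merges these facts.

```python
from datetime import datetime
from typing import Dict, List


def build_metadata(nodes: List[dict], version: str, started: datetime) -> dict:
    """Assemble a backup manifest shaped like the sample on this slide."""
    tokens_by_az: Dict[str, List[dict]] = {}
    for node in nodes:
        # Group token ranges by AZ so a restore can rebuild the rack layout.
        tokens_by_az.setdefault(node["az"], []).append(
            {"tokens": node["tokens"], "hostname": node["hostname"]}
        )
    return {
        "ips": [node["ip"] for node in nodes],
        "ts": started.isoformat(),  # start of backup
        "version": version,         # Cassandra version, for SSTable compatibility
        "tokens": tokens_by_az,
    }
```

The result can be serialized with `json.dumps` and uploaded alongside the snapshot data.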

11 .Backups: results
● Simple and predictable
● Clusterwide snapshots
● Low impact
● Automation-ready
Everything’s good! ...right?

12 .Restores
Oh, you actually wanted to use that data again? That’s… harder.

13 .Restores: requirements
● Primary
  ○ Data consistency across nodes
  ○ Data integrity maintained
  ○ Time to recovery
● Secondary
  ○ Multiple snapshots at a time
  ○ Can be automated or run on-demand
  ○ Versatile end state
Approach: spin up a new cluster using restored data.

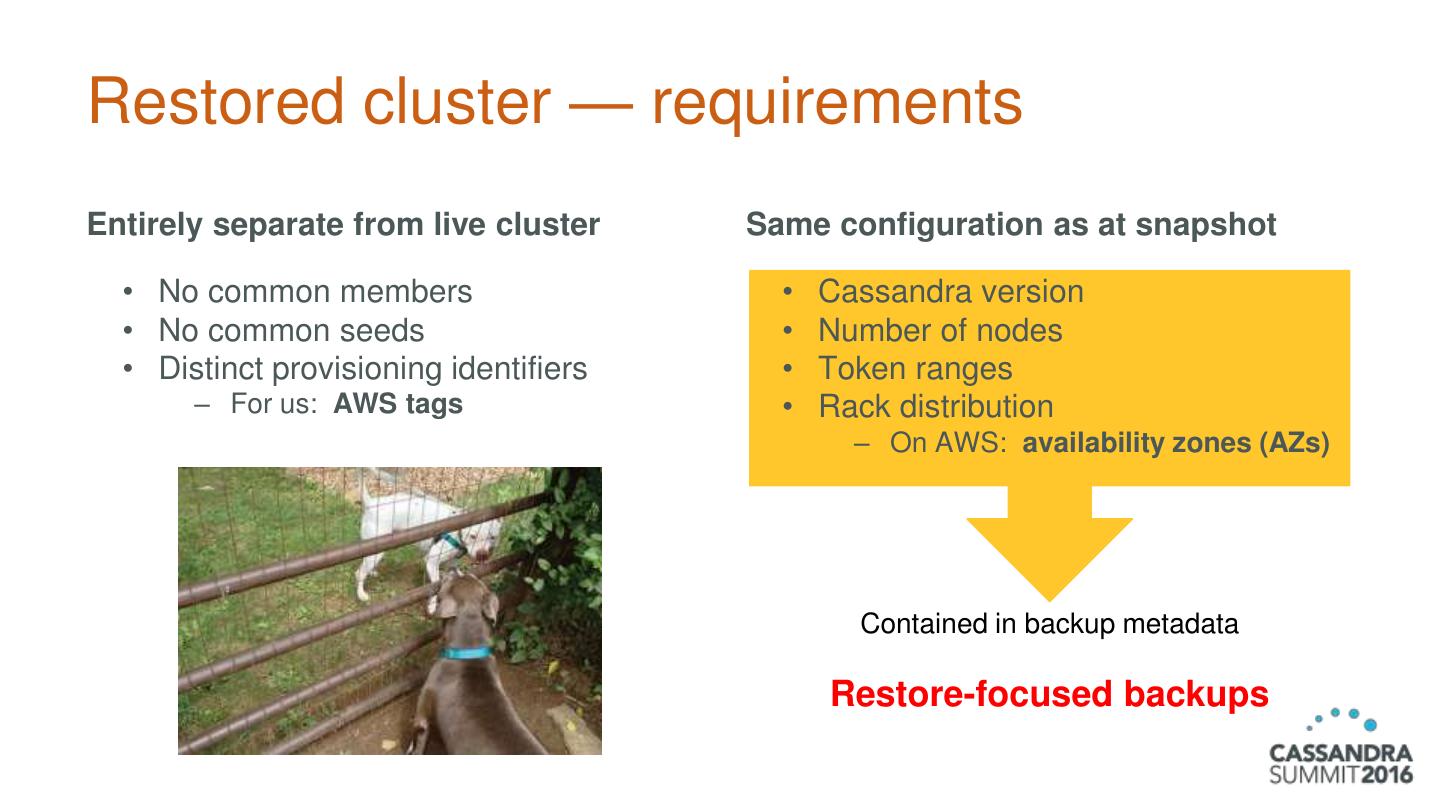

14 .Restored cluster: requirements
Entirely separate from live cluster
• No common members
• No common seeds
• Distinct provisioning identifiers
  – For us: AWS tags
Same configuration as at snapshot (contained in backup metadata: restore-focused backups)
• Cassandra version
• Number of nodes
• Token ranges
• Rack distribution
  – On AWS: availability zones (AZs)

15 .Ansible in the cloud: a caveat
Programmatic launch of servers
+ Ansible host discovery happens once per playbook
= Launching a cluster requires 2 steps:
1. Create instances
2. Provision instances as Cassandra nodes

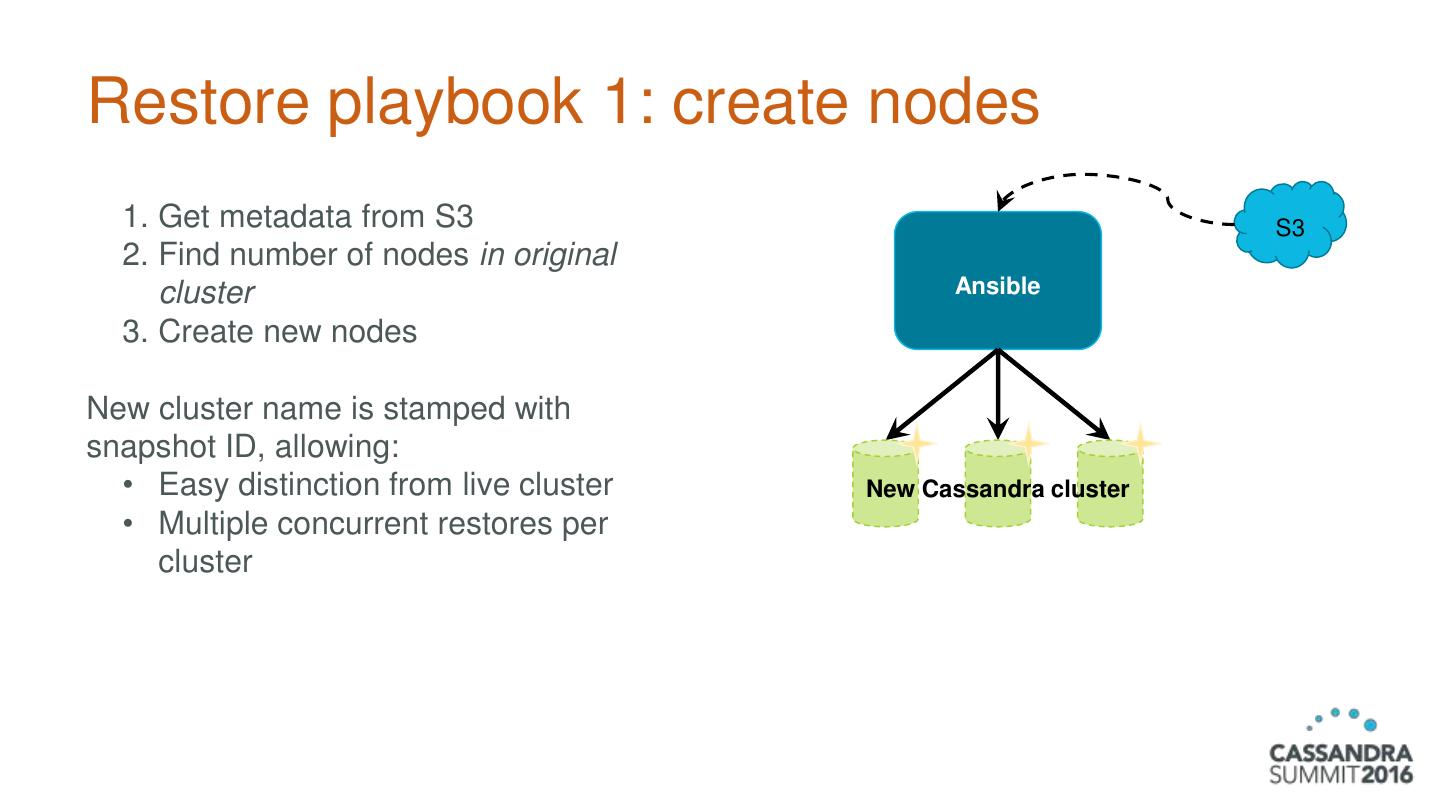

16 .Restore playbook 1: create nodes
1. Get metadata from S3
2. Find number of nodes in original cluster
3. Create new nodes
New cluster name is stamped with snapshot ID, allowing:
• Easy distinction from live cluster
• Multiple concurrent restores per cluster

Diagram labels: S3, Ansible, new Cassandra cluster.
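Steps 2 and 3 can be sketched in Python against the metadata format shown earlier. The `<cluster>-<snapshot_id>` naming scheme and the function names are assumptions for illustration only. The talk says the name is stamped with the snapshot ID but does not give the exact format.

```python
from typing import Dict


def node_count(metadata: dict) -> int:
    """Total number of nodes in the original cluster, summed over AZs."""
    return sum(len(nodes) for nodes in metadata["tokens"].values())


def nodes_per_az(metadata: dict) -> Dict[str, int]:
    """How many instances to launch in each AZ to mirror the source layout."""
    return {az: len(nodes) for az, nodes in metadata["tokens"].items()}


def restored_cluster_name(source_cluster: str, snapshot_id: str) -> str:
    """Stamp the new cluster's name with the snapshot ID (hypothetical format)."""
    return f"{source_cluster}-{snapshot_id}"
```

Because the snapshot ID is part of the name, two restores of the same source cluster from different snapshots get distinct names and can coexist.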

17 .Restore playbook 2: provision nodes
1. Get metadata from S3 (again)
2. Parse metadata
   – Map source to target
3. Find matching files in S3
   – Filter out some Cassandra system tables
4. Partially provision nodes
   – Install Cassandra
     • Use original C* version
   – Mount data partition
5. Download snapshot data to nodes
6. Configure Cassandra and finish provisioning nodes

Diagram labels: S3, Ansible, new Cassandra cluster (loaded).
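Step 3 can be illustrated with a small Python filter over S3 object keys. The talk does not say which system tables are skipped. The set below (`system.peers` and `system.local`, which hold node-specific state) is an assumption, as are the key layout `<prefix><keyspace>/<table dir>/<file>` and the function name.

```python
from typing import Iterable, List, Set, Tuple

# Assumption: tables holding node-local identity; the talk does not list them.
EXCLUDED_TABLES: Set[Tuple[str, str]] = {("system", "peers"), ("system", "local")}


def restorable_keys(keys: Iterable[str], prefix: str,
                    excluded: Set[Tuple[str, str]] = EXCLUDED_TABLES) -> List[str]:
    """Keep keys under `prefix` whose (keyspace, table) is not excluded."""
    kept = []
    for key in keys:
        if not key.startswith(prefix):
            continue  # belongs to another node or snapshot
        parts = key[len(prefix):].split("/")
        if len(parts) < 3:
            continue  # expected <keyspace>/<table dir>/<file>
        keyspace, table_dir = parts[0], parts[1]
        # From Cassandra 2.1 on, table directories are named <table>-<id>.
        table = table_dir.split("-", 1)[0]
        if (keyspace, table) not in excluded:
            kept.append(key)
    return kept
```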

18 .Restores: node mapping
● Source ⇒ Target
  ○ Include token ranges
● Source AZs ⇒ Target AZs

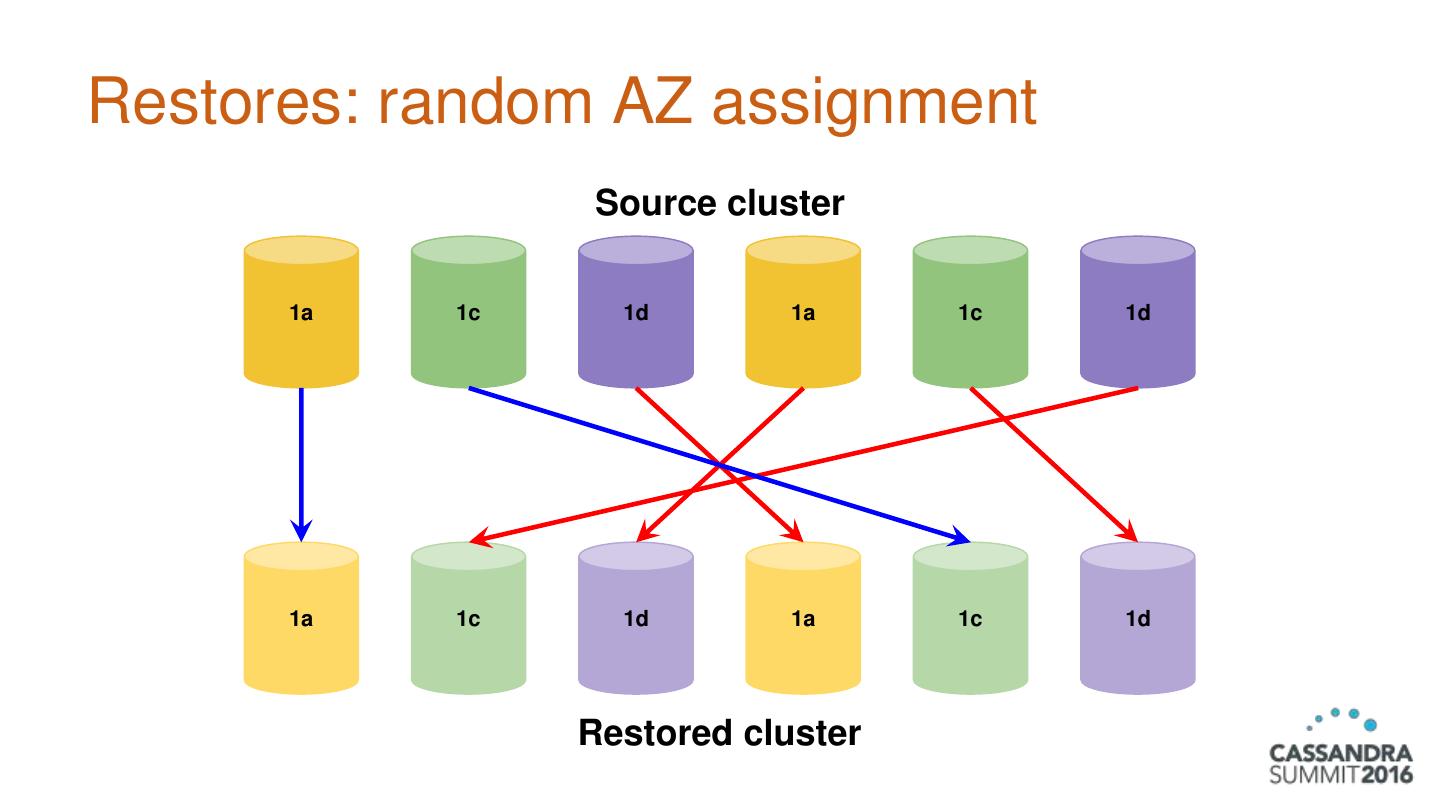

19 .Restores: random AZ assignment
[Diagram: source cluster and restored cluster rings, nodes labeled by AZ (1a, 1c, 1d)]

20 .Why is this a problem?
With NetworkTopologyStrategy and RF ≤ number of AZs, Cassandra expects replicas to sit in different AZs, so replica data that lands in the same AZ after a restore will be skipped on read.
● Effectively fewer replicas
● Potential quorum loss
● Inconsistent access of most recent data

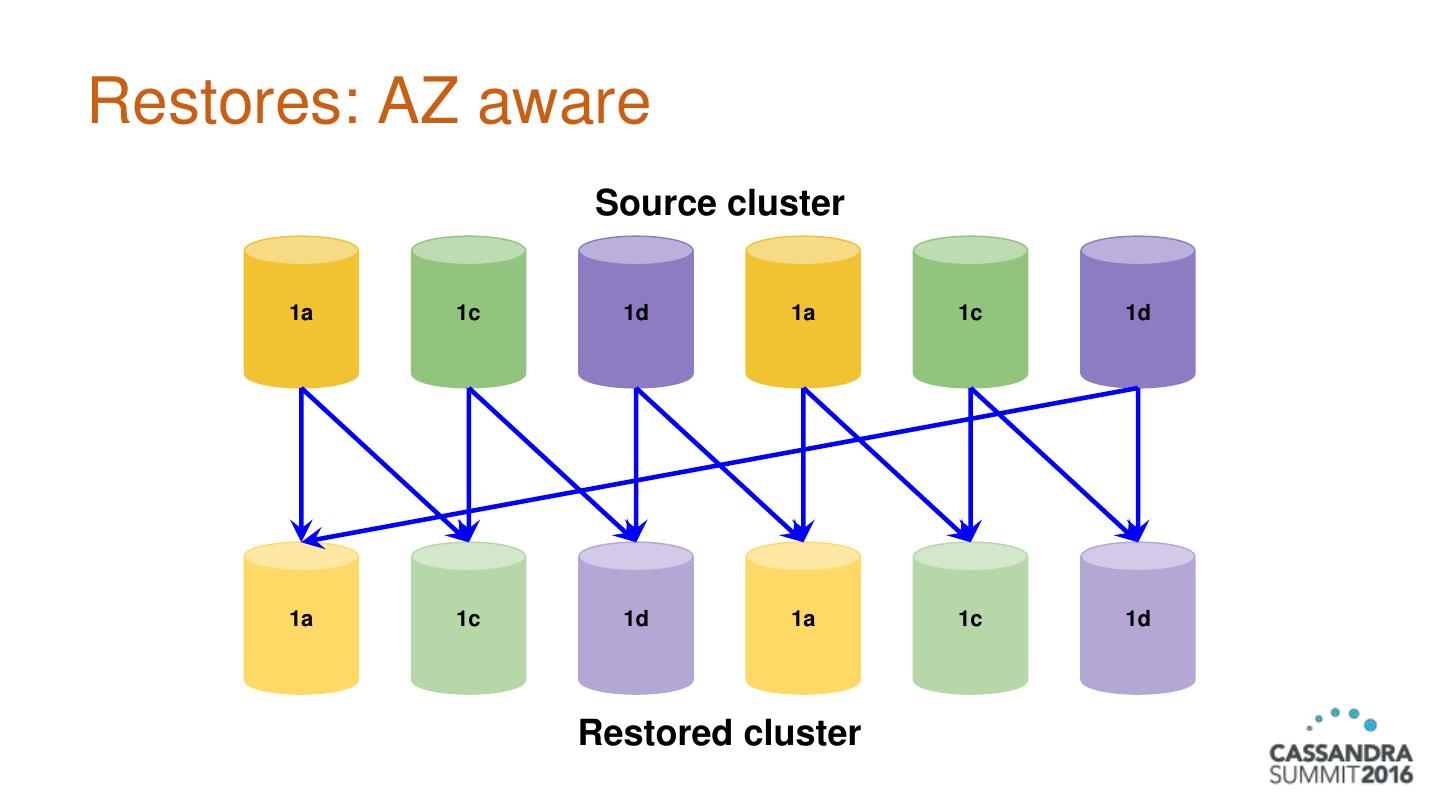

21 .Restores: AZ aware
[Diagram: source cluster and restored cluster rings, nodes labeled by AZ (1a, 1c, 1d)]
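A minimal Python sketch of AZ-aware mapping, using the metadata format shown earlier. It assumes target nodes are launched in AZs with the same names as the source AZs. The talk's "Source AZs ⇒ Target AZs" may allow a more general mapping. The function name and the target node format are hypothetical.

```python
from collections import defaultdict
from typing import Dict, List


def map_nodes_az_aware(metadata: dict, targets: List[dict]) -> Dict[str, dict]:
    """Pair every source node with a target node in the same AZ.

    `targets` is a list of {"hostname": ..., "az": ...} dicts.
    Returns target hostname -> source hostname, AZ, and token ranges.
    """
    free = defaultdict(list)
    for target in targets:
        free[target["az"]].append(target["hostname"])

    mapping = {}
    for az, sources in metadata["tokens"].items():
        if len(free[az]) < len(sources):
            raise ValueError(f"not enough target nodes in AZ {az}")
        for source in sources:
            # The target inherits the source's token ranges and rack (AZ).
            mapping[free[az].pop(0)] = {
                "source": source["hostname"],
                "az": az,
                "tokens": source["tokens"],
            }

    unused = [host for hosts in free.values() for host in hosts]
    if unused:
        raise ValueError(f"target nodes without a source: {unused}")
    return mapping
```

Failing loudly on a count mismatch matches the requirement that the restored cluster has the same number of nodes and rack distribution as the source.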

22 .Implementation details
● Snapshot ID
  ○ Datetime stamp (start of backup)
  ○ Restore defaults to latest
● Restores use auto_bootstrap: false
  ○ Nodes already have their data!
● Anti-corruption measures
  ○ Metadata manifest created after backup has succeeded
  ○ If any node fails, entire restore fails
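The "restore defaults to latest" rule and the manifest-as-commit-marker idea combine naturally. The Python sketch below assumes snapshot IDs are ISO-style datetime stamps, which sort chronologically as plain strings, and that a snapshot counts as complete only if its manifest exists. The function name and inputs are hypothetical.

```python
from typing import Iterable, Set


def latest_complete_snapshot(snapshot_ids: Iterable[str], manifests: Set[str]) -> str:
    """Return the newest snapshot ID that has a metadata manifest.

    Snapshots without a manifest are treated as failed or in-progress
    backups and are never chosen.
    """
    complete = [sid for sid in snapshot_ids if sid in manifests]
    if not complete:
        raise LookupError("no completed backup found")
    # ISO-style datetime stamps compare correctly as strings.
    return max(complete)
```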

23 .Extras
● Automated runs using cron jobs, Ansible Tower, or CD frameworks
● Restricted-access backups for dev teams using an internal service

24 .Conclusions
● Restore-focused backups are imperative for consistent restores
● Ansible is easy to work with and provides centralized control with a distributed workload
● Reliable backup restores are powerful and versatile

25 .Thank you! Questions?