Five years of operating a large scale globally replicated Pulsar installation— Francis&Ludwig Pu

1 .Five Years of Operating a Large Scale Globally Replicated Pulsar Installation
June 18, 2020
Ludwig Pummer (ludwig@verizonmedia.com)
Joe Francis (joef@verizonmedia.com)

2 .Who are we
● Ludwig Pummer, Principal Production Engineer, Verizon Media
● Joe Francis, Director, Core Platforms, Verizon Media

3 .What do we do
● Operate a hosted pub-sub service within VMG (Verizon Media Group)
○ open-sourced as Pulsar
● Global presence
○ 6 DC (Asia, Europe, US)
○ full mesh replication
● Business critical use cases
○ Serving use cases
○ Lower latency bus for other low latency services

4 .Use cases
● Application integration
○ Server-to-server control, status, notification messages
● Persistent queue
○ Buffering, feed ingestion, task distribution
● Message bus for large scale data stores
○ Durable log
○ Replication within and across geo-locations

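5 .Trajectory

| Metric | 2015 | 2016 | 2020 |
|---|---|---|---|
| Tenants | 1 tenant | 20 tenants | 100+ tenants |
| Clusters | 2 clusters, 2 DC | 12 clusters, 6 DC | 18 clusters, 6 DC |
| Writes per second (wps) | 60K wps @ 2KB | 500K wps avg | 1.3M wps avg / 3M peak |
| Reads per second (rps) | 60K rps | 1.1M rps avg | 2M rps avg / 6M peak |
| Topics | <100 topics | 1.4M topics | 2.8M topics |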

6 .Scaling up a Cluster

| Need | Action |
|---|---|
| More deliveries (increase fanout) | Add Brokers |
| More publishes | Add Bookies, Brokers |
| More storage | Add Bookies |
| Massively more topics | Add Clusters (SuperCluster) |

[Diagram label: SuperCluster (peer cluster)]

7 .Storage & I/O auto-balancing
[Charts: wps by namespace; wps by bookkeeper]

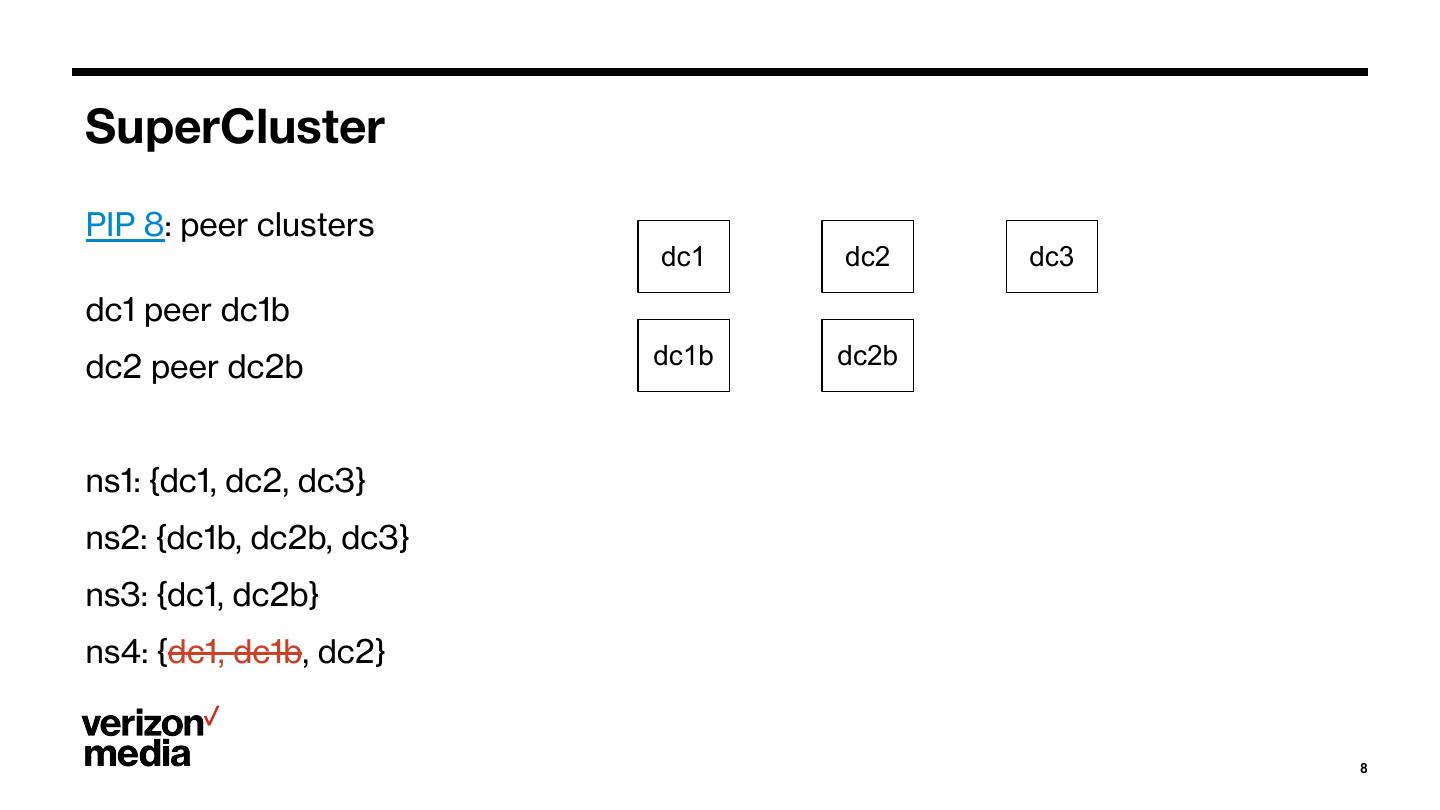

8 .SuperCluster
PIP 8: peer clusters
● Clusters: dc1, dc2, dc3, dc1b, dc2b
● Peer relationships
○ dc1 peer dc1b
○ dc2 peer dc2b
● Namespace replication sets
○ ns1: {dc1, dc2, dc3}
○ ns2: {dc1b, dc2b, dc3}
○ ns3: {dc1, dc2b}
○ ns4: {dc1, dc1b, dc2}

9 .Provisioning Model
New Tenant:
● Average message size
● Peak publishes per second
● Steady-state deliveries per second (fan out)
● Per cluster/DC
● x509 principal
Calculate:
● Broker messages/sec
● Broker bandwidth
● Bookie MB/sec
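
The slide names the inputs and the derived quantities but not the formulas. The following is a minimal sketch of how such a calculation might look, not the presenters' actual model. The `TenantRequest` type, the `estimate_load` function, and the formulas themselves (broker load as publishes plus deliveries, bookie load as publishes multiplied by a write quorum) are assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass
class TenantRequest:
    """Hypothetical per-cluster sizing inputs collected from a new tenant."""
    avg_msg_bytes: int           # average message size
    peak_publishes_per_sec: int  # peak publish rate
    deliveries_per_sec: int      # steady-state deliveries (publishes x fan-out)


def estimate_load(req: TenantRequest, write_quorum: int = 2) -> dict:
    """First-order load estimate; the formulas are illustrative assumptions."""
    # Brokers handle every inbound publish and every outbound delivery.
    broker_msgs = req.peak_publishes_per_sec + req.deliveries_per_sec
    broker_mb = broker_msgs * req.avg_msg_bytes / 1e6
    # Each published message is assumed to be written to write_quorum bookies.
    bookie_mb = req.peak_publishes_per_sec * req.avg_msg_bytes * write_quorum / 1e6
    return {
        "broker_msgs_per_sec": broker_msgs,
        "broker_mb_per_sec": broker_mb,
        "bookie_mb_per_sec": bookie_mb,
    }


if __name__ == "__main__":
    print(estimate_load(TenantRequest(2000, 60_000, 60_000)))
```

A real model would probably also account for replication traffic between data centers and for headroom above peak, neither of which is covered here.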

10 .Hardware Evolution: Brokers

| | Pre-2015 | 2015 | 2020 |
|---|---|---|---|
| CPU | Dual Xeon E5620 (Gulftown) 8-core | Dual Xeon E5-2620 (Ivy Bridge) 12-core | Dual Xeon E5-2620 (Ivy Bridge) 12-core |
| RAM | 24GB | 48GB | 96GB |
| NIC | 1G | 10G | 10G or 25G |

11 .Hardware Evolution: BookKeepers

| | Pre-2015 | 2015 | 2020 |
|---|---|---|---|
| CPU | Dual Xeon E5-2630 (Sandy Bridge) 12-core | Dual Xeon E5-2620 (Ivy Bridge) 12-core | Dual Xeon Gold 6240 36-core |
| RAM | 32GB | 64GB | 192GB |
| NIC | 1G | 10G | 25G |
| Storage | 12 x 300GB 15K RPM SAS (2 x HW RAID-10 of 6 drives) | 10 x 4TB 7.2k RPM SATA, 2 x 120GB SSD (1 x HW RAID-10 of 10 drives, 1 x HW RAID-1 of 2 SSDs) | 4x 4TB NVMe drives, 2x 128GB Optane Persistent Memory |
| Variants | | HW RAID-1 of 2 add'l 4TB; SW RAID-1 of 2 larger SSD | 4x 8TB NVMe |

12 .Hardware Evolution: ZooKeepers

| | Pre-2015 | 2015 | 2020 |
|---|---|---|---|
| CPU | Dual Xeon E5620 (Gulftown) 8-core | Dual Xeon E5-2620 (Ivy Bridge) 12-core | Dual Xeon E5-2620 (Ivy Bridge) 12-core |
| RAM | 24GB | 64GB | 64GB |
| NIC | 1G | 10G | 10G |
| Storage | 240 GB SSD | 2x 240 GB SSD | 2x 960 GB SSD |

13 .Footprint
Brokers:
● 550 brokers
● Mix of 2015+ config
● Heterogeneous even within cluster
Bookies:
● 450 bookies
● Mostly 2015 config
● Heterogeneous across clusters. Homogeneous within cluster (or isolation group)

14 .JVM Garbage Collector: G1GC, Pulsar 1.x
[Charts: heap usage; GC pause]

15 .JVM Garbage Collector: G1GC, Pulsar 2.x
[Charts: heap usage; GC pause]

16 .JVM Garbage Collector: ZGC
[Charts: heap usage; GC pause]

17 .Statistics and Monitoring

18 .Statistics and Monitoring: Too much data

19 .Statistics and Monitoring: Tenant metrics collector
[Diagram labels: Brokers; /admin/destinations; topic monitor]

20 .Statistics and Monitoring: Tenant metrics

21 .Deployment
● No downtime. Manage risk.
● Staged. Sequenced.
● Low parallelism
● Managed by Screwdriver jobs
○ https://docs.screwdriver.cd/
● Screwdriver launches Ansible-like tool

22 .Deployment Sequence
Hosts:
1. ZooKeeper (not prod)
2. BookKeeper
3. Broker
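
The staged, sequenced, low-parallelism rollout described on the deployment slides can be sketched as a plan generator that walks the tiers in the order shown and touches only a few hosts at a time. This is an illustrative sketch only: `TIER_ORDER`, `rollout_batches`, and the host names are hypothetical, and the actual Screwdriver jobs and deployment tool are not shown in the talk. The "(not prod)" note on ZooKeeper is handled here simply by leaving that tier out of the input when it should be skipped.

```python
from typing import Dict, Iterator, List, Tuple

# Tier order taken from the slide: ZooKeeper, then BookKeeper, then Broker.
TIER_ORDER = ["zookeeper", "bookkeeper", "broker"]


def rollout_batches(
    hosts_by_tier: Dict[str, List[str]], batch_size: int = 1
) -> Iterator[Tuple[str, List[str]]]:
    """Yield (tier, hosts) batches in tier order, batch_size hosts at a time."""
    if batch_size < 1:
        raise ValueError("batch_size must be at least 1")
    for tier in TIER_ORDER:
        hosts = hosts_by_tier.get(tier, [])  # a missing tier is skipped
        for start in range(0, len(hosts), batch_size):
            yield tier, hosts[start:start + batch_size]


if __name__ == "__main__":
    plan = rollout_batches(
        {"bookkeeper": ["bk1", "bk2"], "broker": ["b1", "b2", "b3"]},
        batch_size=2,
    )
    for tier, batch in plan:
        print(tier, batch)
```

A real pipeline would presumably run health checks between batches before moving on; that step is omitted here.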

23 .Broker Isolation Policies

    {
      "namespaces": ["tenant-one/.*"],
      "primary": ["broker6[7-9].example.com"],
      "secondary": ["none"],
      "auto_failover_policy": {
        "policy_type": "min_available",
        "parameters": {
          "min_limit": "0",
          "usage_threshold": "100"
        }
      }
    }
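
The `namespaces` and `primary` fields in the policy above are regular expressions. The sketch below shows how such patterns select namespaces and brokers. It uses Python's `re.fullmatch` as an approximation and is not Pulsar's own matching code; the helper names and the sample broker list are hypothetical.

```python
import json
import re

# The policy from the slide, parsed from JSON.
POLICY = json.loads("""
{
  "namespaces": ["tenant-one/.*"],
  "primary": ["broker6[7-9].example.com"],
  "secondary": ["none"],
  "auto_failover_policy": {
    "policy_type": "min_available",
    "parameters": {"min_limit": "0", "usage_threshold": "100"}
  }
}
""")


def namespace_is_isolated(policy: dict, namespace: str) -> bool:
    """True if the namespace matches any pattern in the policy."""
    return any(re.fullmatch(p, namespace) for p in policy["namespaces"])


def primary_brokers(policy: dict, brokers: list) -> list:
    """Return the brokers that match any primary pattern, in input order."""
    return [b for b in brokers
            if any(re.fullmatch(p, b) for p in policy["primary"])]


if __name__ == "__main__":
    fleet = [f"broker{n}.example.com" for n in range(65, 72)]
    print(namespace_is_isolated(POLICY, "tenant-one/ns1"))
    print(primary_brokers(POLICY, fleet))
```

With this policy, namespaces under `tenant-one` are pinned to brokers 67 through 69, and the `"none"` secondary pattern matches no real host, so no secondary brokers are available to those namespaces.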

24 .Broker Isolation Policies
Uses
● High Profile/Reserved capacity
● Misbehaving tenants
● Debugging

25 .BookKeeper Storage Utilization
Factors and configuration impacting cluster storage utilization
● Number of topics x write throughput
● MinLedgerRolloverTime, MaxLedgerRolloverTime, CursorRolloverTime
● Over-replication
● Increased write-quorum
● Increased topic TTL
● Increased retention period
● Crossing BookKeeper compaction threshold
● Compaction thresholds and intervals
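
Several of these factors multiply together. As a rough illustration, the sketch below estimates steady-state stored bytes from write rate, message size, how long data is kept, and write quorum. The function name and the formula are assumptions for illustration; the estimate ignores ledger rollover delay, compaction lag, and over-replication, all of which the slide lists as additional factors.

```python
def estimate_stored_bytes(
    write_msgs_per_sec: int,
    avg_msg_bytes: int,
    kept_seconds: int,
    write_quorum: int,
) -> int:
    """First-order estimate: every message is stored write_quorum times
    for as long as TTL or retention keeps it around."""
    return write_msgs_per_sec * avg_msg_bytes * kept_seconds * write_quorum


if __name__ == "__main__":
    # 1,000 msgs/sec of 2 KB messages kept for one hour with write quorum 2.
    total = estimate_stored_bytes(1_000, 2_000, 3_600, 2)
    print(f"{total / 1e9:.1f} GB")
```

Doubling either the retention period or the write quorum doubles the estimate, which is why both appear on the list above.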

26 .BookKeeper Rack Awareness
● Rack as a failure domain
● Historical Rack Aware implementation
● 2 Racks vs N Racks

27 .Host Failure Response
BookKeeper
● down: handled automatically, no problem
● slow (hardware issue): enable ForceReadOnly, wait for repair
Broker
● down: handled automatically, no problem
● GC too busy: restart
● topic issue: unload topic, unload bundle, restart broker

28 .Operational Tools
● pulsar-admin
○ primary troubleshooting & investigative tool
● orphan ledger cleanup tool
○ delete ledgers not referenced by any topic
● ad-hoc Python scripts using kazoo and ML (managed ledger) protobuf
○ abandoned namespace/topic cleanup
○ storage utilization analysis
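
The core of the orphan ledger cleanup described above is a set difference: ledgers that exist in BookKeeper minus ledgers referenced by any topic. The sketch below shows only that step on in-memory data. The function name and input shapes are hypothetical, and reading the real ledger lists from ZooKeeper and BookKeeper is left out.

```python
from typing import Dict, Iterable, Set


def find_orphan_ledgers(
    all_ledger_ids: Iterable[int],
    ledgers_by_topic: Dict[str, Iterable[int]],
) -> Set[int]:
    """Return ledger ids that no topic references."""
    referenced: Set[int] = set()
    for ledger_ids in ledgers_by_topic.values():
        referenced.update(ledger_ids)
    return set(all_ledger_ids) - referenced


if __name__ == "__main__":
    orphans = find_orphan_ledgers(
        [1, 2, 3, 4, 5],
        {"persistent://t/ns/a": [1, 2], "persistent://t/ns/b": [4]},
    )
    print(sorted(orphans))
```

A production tool would likely also need to treat ledgers referenced by cursors as in use, and to skip ledgers created while the scan is running, before deleting anything.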

29 .Thank you.