Apache HBase Improvement about GC/SLA in Alibaba

1 . hosted by Use CCSMap to Improve HBase YGC Time & Efforts on SLA Improvements from Alibaba Search in 2018 (Chance Li, Xiang Wang, Lijin Bin)

2 . Use CCSMap to Improve HBase YGC Time: Agenda

- 01 Why we need CCSMap
- 02 CCSMap structure
- 03 How to use CCSMap
- 04 Future work

3 . Why we need CCSMap: Overview

- 1 Heap is huge: a large amount of memory is reserved for HBase. BucketCache covers the read path, but what about the write path?
- 2 Overhead of JDK CSLM: it needs 3 objects to store one key-value (Index, Node and Cell), far more objects than the real data requires.
- 3 CSLM is not GC-friendly: with thousands of millions of objects, the long time spent scanning the card table/old space leads to slow YGC.
- 4 Memory fragmentation: SLAB is needed to keep CSLM from fragmenting memory.

4 . Why we need CCSMap: Anatomy of JDK CSLM

- Extra objects: Index objects number almost ¼ of the Nodes
- Memory overhead: 40B per Index, 40B per Node
- Example of 5M cells: 6.25M extra objects, 250M memory overhead

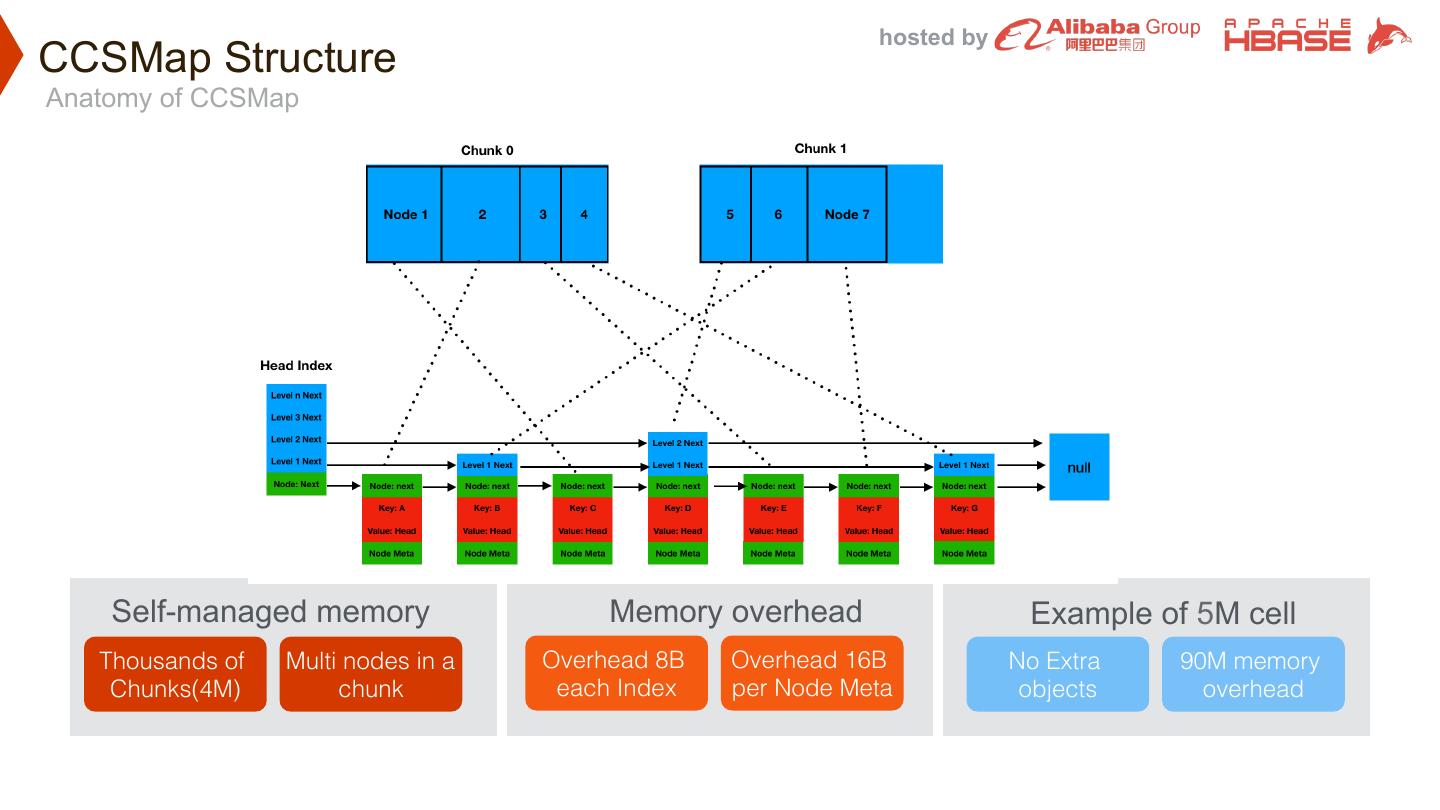

5 . CCSMap Structure: Anatomy of CCSMap

- Self-managed memory: thousands of Chunks (4M), multiple nodes in a chunk
- Memory overhead: 8B per Index, 16B per Node Meta
- Example of 5M cells: no extra objects, 90M memory overhead
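The 5M-cell figures on the two "Anatomy" slides can be reproduced with simple arithmetic. The sketch below is only a back-of-the-envelope check: the class and method names are hypothetical, and it assumes that the CCSMap index count follows the same "almost ¼ of Nodes" ratio quoted for the JDK skip list, which the slides do not state explicitly.

```java
// Back-of-the-envelope check of the slide figures. Names are hypothetical;
// the per-object overheads and the 1/4 index ratio are taken from the slides.
final class MemstoreOverhead {
    // JDK ConcurrentSkipListMap: one Node per cell plus roughly 1/4 as many Index objects.
    static long cslmExtraObjects(long cells) {
        return cells + cells / 4;
    }

    // About 40 bytes of overhead for every Index and every Node object.
    static long cslmOverheadBytes(long cells) {
        return cslmExtraObjects(cells) * 40L;
    }

    // CCSMap: 16 bytes of node meta per cell plus 8 bytes per index entry
    // (assumption: same 1/4 index ratio as above).
    static long ccsMapOverheadBytes(long cells) {
        return cells * 16L + (cells / 4) * 8L;
    }

    public static void main(String[] args) {
        long cells = 5_000_000L;
        System.out.println(cslmExtraObjects(cells));    // 6250000
        System.out.println(cslmOverheadBytes(cells));   // 250000000
        System.out.println(ccsMapOverheadBytes(cells)); // 90000000
    }
}
```

Under these assumptions the results match the slides: 6.25M extra objects and 250M of overhead for CSLM, against 90M for CCSMap.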

6 . CCSMap Structure: Overall look

- Maps: BaseCCSMap<K,V>, BaseTwinCCSMap<K>, CellCCSMap
- Data Structure: Meta, Data, align, Direct Pointer, Two Type of data
- ChunkPool: On-heap/off-heap, Space efficiency, Big chunk (4M), Special for big object, Global pooled chunk
- INodeComparator: CCSMapCellComparatorDefault, CCSMapCellComparatorDirectly
- ISerde: CellSerde
- Configuration: Reuse, individual

7 . CCSMap Structure: Data structure of Node

For HBase, the key and the value are both the Cell, so the node data is the byte[] of the Cell, and it can be deserialized to a Cell (ByteBufferKeyValue) directly.
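To make the idea of "multiple nodes in a self-managed chunk" more concrete, here is a minimal, single-threaded sketch of bump-pointer allocation inside one 4M buffer. It is not the CCSMap implementation: the class name, the node layout (two length ints followed by key and value bytes) and the 8-byte alignment are all assumptions chosen for illustration, and the real structure is concurrent and carries its own node meta.

```java
import java.nio.ByteBuffer;
import java.nio.charset.StandardCharsets;

// Hypothetical sketch: nodes are appended into one large buffer instead of
// being allocated as separate Java objects. Not thread-safe.
final class ChunkSketch {
    static final int CHUNK_SIZE = 4 * 1024 * 1024; // 4M chunk size from the slides
    private final ByteBuffer buf;
    private int next; // bump pointer: offset of the next free byte

    ChunkSketch(boolean offHeap) {
        buf = offHeap ? ByteBuffer.allocateDirect(CHUNK_SIZE) : ByteBuffer.allocate(CHUNK_SIZE);
    }

    // Appends one node laid out as [keyLen][valueLen][key][value] (assumed layout).
    // Returns the node offset, or -1 if the chunk is full.
    int putNode(byte[] key, byte[] value) {
        int size = 8 + key.length + value.length;
        int aligned = (size + 7) & ~7; // round up to 8 bytes (assumed alignment)
        if (next + aligned > CHUNK_SIZE) {
            return -1;
        }
        int offset = next;
        buf.putInt(offset, key.length);
        buf.putInt(offset + 4, value.length);
        for (int i = 0; i < key.length; i++) {
            buf.put(offset + 8 + i, key[i]);
        }
        for (int i = 0; i < value.length; i++) {
            buf.put(offset + 8 + key.length + i, value[i]);
        }
        next += aligned;
        return offset;
    }

    // Reads the key bytes back from a node offset returned by putNode.
    byte[] readKey(int offset) {
        byte[] out = new byte[buf.getInt(offset)];
        for (int i = 0; i < out.length; i++) {
            out[i] = buf.get(offset + 8 + i);
        }
        return out;
    }

    public static void main(String[] args) {
        ChunkSketch chunk = new ChunkSketch(true);
        int first = chunk.putNode("row1".getBytes(StandardCharsets.UTF_8), "v1".getBytes(StandardCharsets.UTF_8));
        int second = chunk.putNode("row2".getBytes(StandardCharsets.UTF_8), "v2".getBytes(StandardCharsets.UTF_8));
        System.out.println(first + " " + second); // 0 16
        System.out.println(new String(chunk.readKey(second), StandardCharsets.UTF_8)); // row2
    }
}
```

A node is then identified by an offset into the chunk rather than by an object reference, which is how this kind of layout avoids creating one heap object per entry.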

8 . CCSMap Structure: Classes for HBase internal

1. CCSMapMemstore
2. ImmutableSegmentOnCCSMap
3. MutableSegmentOnCCSMap
4. MemstoreLABProxyForCCSMap

9 . How to use CCSMap

- To enable CCSMap
  - CCSMap will be enabled by default after turning off CompactingMemstore
  - On-heap or off-heap
- Other configurations
  - Chunk pool capacity, chunk size, count of pooled chunks to be initialized
  - CCSMap individual configuration, or the original configuration used by DefaultMemstore

10 . Before using CCSMap

11 . After using CCSMap

12 . Future work

- 01 Combine with CompactingMemstore
  - To support in-memory flush/compaction
- 02 Further improve the ability of Memstore
  - To improve performance of put with dense columns
  - To improve performance of get with dense columns, especially for hot keys
  - To support Persistent Memory

13 . Efforts on SLA improvements: Agenda

- 01 Client Connection Flood
- 02 HDFS Failure Impact
- 03 Disaster Recovery
- 04 Stall Server
- 05 Dense Columns
- 06 Client Meta Access Flood

14 . Client Connection Flood

- Separate the client and server ZK quorums, so that a surge of client connections cannot exhaust ZooKeeper and cause RegionServer ZK session loss (HBASE-20159)
  - Configurations to enable this feature
    - hbase.client.zookeeper.quorum
    - hbase.client.zookeeper.property.clientPort
    - hbase.client.zookeeper.observer.mode
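As a rough illustration of the three settings named on this slide, the sketch below collects them in a plain `java.util.Properties` object so it runs without HBase on the classpath. The helper class, the host names and the port are placeholders, not recommended values; in a real deployment these keys would normally go into the HBase configuration (for example hbase-site.xml).

```java
import java.util.Properties;

// Hypothetical helper: gathers the client-side ZooKeeper settings from the slide.
final class ClientZkConfig {
    static Properties clientZkSettings(String quorum, int clientPort, boolean observerMode) {
        Properties p = new Properties();
        // Quorum that clients connect to, separate from the quorum the servers use.
        p.setProperty("hbase.client.zookeeper.quorum", quorum);
        p.setProperty("hbase.client.zookeeper.property.clientPort", Integer.toString(clientPort));
        p.setProperty("hbase.client.zookeeper.observer.mode", Boolean.toString(observerMode));
        return p;
    }

    public static void main(String[] args) {
        // Placeholder hosts and port, chosen only for the example.
        Properties p = clientZkSettings("client-zk1.example.com,client-zk2.example.com", 2181, true);
        p.forEach((k, v) -> System.out.println(k + " = " + v));
    }
}
```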

15 . HDFS Failure Impact

- Allow RegionServer to stay alive during HDFS failure (HBASE-20156)
  - Do not abort on HDFS failure
    - Check the FS and retry if a flush fails
    - Postpone WAL rolling when the FileSystem is not available
  - RegionServer service recovers after HDFS comes back
  - Upstreaming in progress
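The "check FS and retry if flush fails" behaviour might look roughly like the loop below. This is one interpretation of the slide, not the HBASE-20156 patch: the class name, the two callbacks and the attempt and backoff parameters are hypothetical, and the sketch assumes that a failed flush is retried only while the file system itself is reported as unavailable.

```java
import java.util.function.BooleanSupplier;

// Hypothetical sketch of "do not abort, check the FS and retry the flush".
final class FlushRetrySketch {
    // flush returns true on success; fsAvailable reports whether the file system is reachable.
    // Returns the attempt number that succeeded, or -1 if the caller should fall back
    // to its normal failure handling.
    static int flushWithRetry(BooleanSupplier flush, BooleanSupplier fsAvailable,
                              int maxAttempts, long backoffMillis) {
        for (int attempt = 1; attempt <= maxAttempts; attempt++) {
            if (flush.getAsBoolean()) {
                return attempt;
            }
            if (fsAvailable.getAsBoolean()) {
                // The FS is healthy, so the failure is not an outage; do not keep retrying.
                return -1;
            }
            try {
                Thread.sleep(backoffMillis); // wait for the FS to come back
            } catch (InterruptedException e) {
                Thread.currentThread().interrupt();
                return -1;
            }
        }
        return -1;
    }

    public static void main(String[] args) {
        int[] calls = {0};
        // Simulated outage: the first two flush attempts fail, the third succeeds.
        int attempt = flushWithRetry(() -> ++calls[0] >= 3, () -> false, 5, 10L);
        System.out.println(attempt); // 3
    }
}
```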

16 . Disaster Recovery

- Reduce ZK requests to prevent ZK from being suspended during disaster recovery (HBASE-19290)
  - Before: request flood to ZK during disaster recovery
    - Get all SplitTasks from splitLogZNode
    - For each SplitTask
      - getChildren of rsZNode to get the number of available RSs
      - Try to grab the SplitTask's lock; break if no splitter is available
    - Tries to grab a task even if no splitter is available
  - After
    - getChildren of rsZNode to get the number of available RSs
    - For each SplitTask: try to grab the SplitTask's lock; break if no splitter is available
    - Won't try to grab a task if no splitter is available
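The effect of moving the rsZNode lookup out of the per-task loop can be seen in a small simulation. Everything here is a stand-in: `FakeZk` only counts calls instead of talking to ZooKeeper, and the `availableSplitters` formula is a simplified assumption rather than the exact HBase calculation.

```java
import java.util.Collections;
import java.util.List;

// Hypothetical simulation of the before/after scan over SplitTasks.
final class SplitTaskScanSketch {
    // Stand-in for a ZooKeeper client; counts getChildren(rsZNode) requests.
    static final class FakeZk {
        private final int regionServers;
        int getChildrenCalls;
        FakeZk(int regionServers) { this.regionServers = regionServers; }
        int countRegionServers() { getChildrenCalls++; return regionServers; }
    }

    // Simplified assumption: each RS takes its share of tasks, capped by maxConcurrent.
    static int availableSplitters(int numTasks, int regionServers, int maxConcurrent, int inProgress) {
        int rs = Math.max(1, regionServers);
        int expectedPerRs = (numTasks + rs - 1) / rs;
        return Math.min(expectedPerRs, maxConcurrent) - inProgress;
    }

    // Before: one ZK request per SplitTask, and the loop never stops early.
    static int scanBefore(FakeZk zk, List<String> tasks, int maxConcurrent) {
        int inProgress = 0;
        for (String task : tasks) {
            int rs = zk.countRegionServers();
            if (availableSplitters(tasks.size(), rs, maxConcurrent, inProgress) > 0) {
                inProgress++; // grabbed the task
            }
        }
        return inProgress;
    }

    // After: one ZK request in total, and the loop breaks when no splitter is left.
    static int scanAfter(FakeZk zk, List<String> tasks, int maxConcurrent) {
        int rs = zk.countRegionServers();
        int inProgress = 0;
        for (String task : tasks) {
            if (availableSplitters(tasks.size(), rs, maxConcurrent, inProgress) <= 0) {
                break;
            }
            inProgress++; // grabbed the task
        }
        return inProgress;
    }

    public static void main(String[] args) {
        List<String> tasks = Collections.nCopies(100, "split-task");
        FakeZk before = new FakeZk(10);
        FakeZk after = new FakeZk(10);
        scanBefore(before, tasks, 2);
        scanAfter(after, tasks, 2);
        System.out.println(before.getChildrenCalls); // 100
        System.out.println(after.getChildrenCalls);  // 1
    }
}
```

Both variants grab the same number of tasks in this simulation; only the number of ZooKeeper requests differs.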

17 . Stall Server

- Enhance RegionServer self health check to avoid stalled servers (HBASE-20158)
  - Before
    - Heartbeat still works when resources are exhausted, so the RS is regarded as alive but is inaccessible
    - Requests hanging on disk failure cannot be captured by existing metrics
  - After (w/ DirectHealthChecker)
    - Pre-launched chore thread, not affected if physical resources are exhausted
    - Periodically picks some regions on the RS and sends requests to itself
    - Shortcut requests, no need to access zookeeper/master/meta
  - Upstreaming in progress
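A self health check of this kind could be sketched as a periodic task that probes the server and reacts after repeated failures. The code below is not the DirectHealthChecker from the talk: the class name, the `probe` callback (standing in for "send a request to one of my own regions") and the consecutive-failure threshold are assumptions made for the example.

```java
import java.util.concurrent.ScheduledExecutorService;
import java.util.concurrent.ScheduledFuture;
import java.util.concurrent.TimeUnit;
import java.util.function.BooleanSupplier;

// Hypothetical sketch of a pre-launched chore that probes the local server.
final class DirectHealthCheckSketch {
    private final BooleanSupplier probe;        // true if the self-request succeeded
    private final int maxConsecutiveFailures;   // assumed threshold, not from the slides
    private int consecutiveFailures;

    DirectHealthCheckSketch(BooleanSupplier probe, int maxConsecutiveFailures) {
        this.probe = probe;
        this.maxConsecutiveFailures = maxConsecutiveFailures;
    }

    // Runs one probe; returns false once the failure threshold has been reached.
    synchronized boolean checkOnce() {
        if (probe.getAsBoolean()) {
            consecutiveFailures = 0;
        } else {
            consecutiveFailures++;
        }
        return consecutiveFailures < maxConsecutiveFailures;
    }

    // Schedules the check on an executor created at startup, so that no new
    // thread has to be created when resources are already exhausted.
    ScheduledFuture<?> start(ScheduledExecutorService chore, long periodMillis, Runnable onUnhealthy) {
        return chore.scheduleAtFixedRate(() -> {
            if (!checkOnce()) {
                onUnhealthy.run();
            }
        }, periodMillis, periodMillis, TimeUnit.MILLISECONDS);
    }

    public static void main(String[] args) {
        DirectHealthCheckSketch checker = new DirectHealthCheckSketch(() -> false, 3);
        System.out.println(checker.checkOnce()); // true
        System.out.println(checker.checkOnce()); // true
        System.out.println(checker.checkOnce()); // false
    }
}
```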

18 . Dense Columns

- Limit the concurrency of puts with dense columns to prevent write handlers from being exhausted (HBASE-19389)
  - MemStore insertion is at KV granularity, so dense columns cause a CPU bottleneck
  - We must limit the concurrency of such puts
  - Configurations
    - hbase.region.store.parallel.put.limit.min.column.count
    - hbase.region.store.parallel.put.limit
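The two settings on this slide suggest a limiter with a column-count threshold and a cap on parallel puts. The sketch below shows one way such a limiter could behave, using a `Semaphore`; it is not the HBASE-19389 code, and the class name, the method names and the example values 100 and 2 are assumptions.

```java
import java.util.concurrent.Semaphore;

// Hypothetical sketch: only puts with many columns compete for a limited number of permits.
final class DensePutLimiter {
    private final int minColumnCount; // cf. hbase.region.store.parallel.put.limit.min.column.count
    private final Semaphore permits;  // cf. hbase.region.store.parallel.put.limit

    DensePutLimiter(int minColumnCount, int parallelPutLimit) {
        this.minColumnCount = minColumnCount;
        this.permits = new Semaphore(parallelPutLimit);
    }

    // Returns true if the put may proceed. Puts below the column threshold are never limited.
    boolean tryStart(int columnCount) {
        if (columnCount <= minColumnCount) {
            return true;
        }
        return permits.tryAcquire();
    }

    // Must be called once after every put for which tryStart returned true.
    void finish(int columnCount) {
        if (columnCount > minColumnCount) {
            permits.release();
        }
    }

    public static void main(String[] args) {
        DensePutLimiter limiter = new DensePutLimiter(100, 2);
        System.out.println(limiter.tryStart(500)); // true
        System.out.println(limiter.tryStart(500)); // true
        System.out.println(limiter.tryStart(500)); // false
        System.out.println(limiter.tryStart(10));  // true
    }
}
```

Rejecting the extra dense put immediately, instead of blocking, keeps the write handler free for other requests, which is the goal stated on the slide.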

19 . Client Meta Access Flood

- Separate client and server requests to meta (to be upstreamed)

20 . Thanks

HBase Dingding Group, personal WeChat